Advancing Breeding Programs: A Comprehensive Guide to Genomic Prediction Models


Abstract

This article provides a comprehensive overview of the latest advancements in genomic prediction (GP) models and their transformative impact on modern breeding programs. It explores the foundational principles of GP, from traditional genomic estimated breeding values (GEBVs) to more sophisticated cross-performance tools (GPCP) that account for dominance effects. The scope extends to methodological innovations, including the integration of multi-omics data and advanced statistical learning techniques, which significantly enhance prediction accuracy for complex traits. The article also addresses critical challenges in model optimization, selection, and validation, offering practical insights for researchers and scientists in drug development and agriculture. Finally, it presents a comparative analysis of model performance across different breeding contexts and species, empowering professionals to select the most effective strategies for accelerating genetic gain and achieving precision in breeding outcomes.

The Genomic Prediction Landscape: From GEBV to Cross-Performance and Foundational Concepts

Core Principles of Genomic Selection and its Impact on Breeding Cycles

Genomic Selection (GS) is a modern breeding strategy that utilizes genome-wide marker information to predict the genetic merit of selection candidates, thereby accelerating genetic gains in both plant and animal breeding programs. Unlike traditional marker-assisted selection (MAS), which is effective only for traits controlled by a few major genes, GS is designed for complex quantitative traits influenced by many genes with small effects [1]. The core principle involves estimating the effect of thousands of molecular markers spread across the entire genome to calculate a Genomic Estimated Breeding Value (GEBV) for each individual. This value represents the sum of all marker effects and provides an early, accurate prediction of an individual's breeding potential, even in the absence of its own phenotypic record [1] [2]. By enabling selection based on GEBVs, GS significantly shortens the generational interval, especially for traits that are difficult or time-consuming to measure, such as those expressed late in life or dependent on specific environmental conditions [1] [3]. The implementation of GS has been shown to considerably increase the rates of genetic gain and is transforming breeding programs worldwide [4].
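The idea that a GEBV is the sum of marker effects can be illustrated with a few lines of Python. This is a minimal sketch: the marker effects, candidate names, and genotypes are hypothetical values chosen for illustration, and genotypes are assumed to be coded as allele dosages (0, 1, or 2).

```python
# Hypothetical marker effects (one per SNP), as if estimated from a training population.
marker_effects = [0.5, -0.2, 0.1, 0.3]

# Hypothetical candidates, genotypes coded as allele dosages (0, 1, or 2).
candidates = {
    "cand_A": [2, 0, 1, 1],
    "cand_B": [0, 2, 2, 0],
    "cand_C": [1, 1, 0, 2],
}


def gebv(dosages, effects):
    """Sum of dosage x marker effect over all markers."""
    return sum(d * b for d, b in zip(dosages, effects))


# GEBV per candidate, then rank candidates from best to worst.
gebvs = {name: gebv(d, marker_effects) for name, d in candidates.items()}
ranking = sorted(gebvs, key=gebvs.get, reverse=True)
print(ranking)
```

No phenotype of the candidates enters the calculation, which is why selection can happen before the trait is expressed.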

Core Principles and Key Factors

The efficiency of genomic selection is governed by several interconnected factors that influence the accuracy of genomic prediction.

- Training Population: The foundation of an accurate GS model is a well-designed training population. Key considerations include its size, the genetic diversity within it, and its relationship to the breeding population (the candidates for selection). A larger training population generally improves prediction accuracy, though benefits diminish beyond an optimal size, necessitating a balance with resource allocation [3]. The genetic relationship between the training and breeding populations is critical; a closer relationship typically leads to higher prediction accuracy [5].

- Markers and Genetic Architecture: The density and distribution of genetic markers (e.g., Single Nucleotide Polymorphisms or SNPs), along with the level of Linkage Disequilibrium (LD) between markers and quantitative trait loci (QTL), are vital. Higher marker density is required for populations with low LD. Furthermore, the genetic architecture of the target trait—including its heritability and the number and effect sizes of the underlying QTL—profoundly affects how well it can be predicted [3].

- Statistical Models: A variety of statistical models are employed to estimate marker effects and compute GEBVs. These range from classical mixed models like Genomic Best Linear Unbiased Prediction (GBLUP) to more complex machine learning and deep learning algorithms capable of modeling non-additive genetic effects and complex interactions [6] [7]. The choice of model depends on the trait's genetic architecture and the available computational resources.

Table 1: Key Factors Influencing Genomic Prediction Accuracy

| Factor | Description | Impact on Prediction Accuracy |

|---|---|---|

| Training Population Size | Number of phenotyped and genotyped individuals used to train the model. | Generally increases with size, but with diminishing returns [3]. |

| Marker Density | Number of genetic markers used per genome. | Higher density improves accuracy, especially in populations with low LD [3]. |

| Trait Heritability | Proportion of phenotypic variance due to genetic factors. | Higher heritability traits are predicted more accurately [8] [3]. |

| Genetic Relationship | Relatedness between the training and breeding populations. | Closer relationships lead to substantially higher accuracy [5]. |

| Genetic Architecture | Number of genes controlling a trait and their effect sizes. | Traits controlled by many small-effect genes are well-suited to GS [1]. |
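The diminishing returns from larger training populations can be sketched with a commonly used deterministic approximation of prediction accuracy, r = sqrt(N h² / (N h² + Me)), where N is the training population size, h² the heritability, and Me the number of independent chromosome segments. This formula is not taken from the cited sources, and the value of Me below is a hypothetical placeholder; treat the output as a rough illustration rather than a forecast for any real population.

```python
import math


def expected_accuracy(n_train, h2, m_e):
    """Approximate accuracy r = sqrt(N*h2 / (N*h2 + Me))."""
    return math.sqrt(n_train * h2 / (n_train * h2 + m_e))


M_E = 1000  # hypothetical number of independent chromosome segments

# Each doubling of the training population adds less accuracy than the previous one.
for n in (500, 1000, 2000, 4000, 8000):
    print(n, round(expected_accuracy(n, h2=0.3, m_e=M_E), 3))
```

The same function also shows the heritability effect from the table: at a fixed N, a higher h² gives a higher expected accuracy.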

Experimental Protocols and Workflows

A Standard Genomic Selection Protocol

The following protocol outlines the key steps for implementing GS in a breeding program, adaptable for species like wheat, maize, or livestock.

Step 1: Training Population Design and Phenotyping

- Objective: Assemble a representative set of individuals that capture the genetic diversity of the broader breeding population.

- Procedure: Select a few hundred to a few thousand individuals from existing breeding lines or a reference population [9]. Ensure this group has a strong genetic relationship to the future selection candidates. For each individual, collect high-quality phenotypic data for the target trait(s) in replicated trials across multiple environments to obtain robust estimates of performance [5].

Step 2: Genotyping and Data Quality Control

- Objective: Obtain high-density genotype data for the training population.

- Procedure: Extract DNA from tissue samples (e.g., blood, leaf). Genotype using a high-density SNP array or, for a more cost-effective approach, low-coverage whole genome sequencing (lcWGS) followed by imputation to recover missing genotypes [9].

- Quality Control: Filter raw genotype data using software like PLINK [9]. Apply thresholds for minor allele frequency (e.g., MAF > 0.01), individual and marker call rates, and Hardy-Weinberg equilibrium to ensure data integrity [9].
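The marker filters described above are normally run in PLINK, but the logic is simple enough to sketch in plain Python. The example below assumes dosage-coded genotypes with `None` for missing calls; the function names, the toy data, and the 0.90 call-rate default are illustrative assumptions, and the Hardy-Weinberg test is left out for brevity.

```python
def marker_call_rate(column):
    """Fraction of non-missing genotype calls for one marker."""
    return sum(g is not None for g in column) / len(column)


def minor_allele_frequency(column):
    """MAF from 0/1/2 dosages, ignoring missing calls."""
    called = [g for g in column if g is not None]
    if not called:
        return 0.0
    p = sum(called) / (2 * len(called))
    return min(p, 1 - p)


def filter_markers(genotypes, min_maf=0.01, min_call_rate=0.90):
    """Return indices of markers that pass both thresholds.

    genotypes: list of individuals, each a list of dosages (None = missing).
    """
    keep = []
    for j in range(len(genotypes[0])):
        column = [individual[j] for individual in genotypes]
        if (marker_call_rate(column) >= min_call_rate
                and minor_allele_frequency(column) > min_maf):
            keep.append(j)
    return keep


# Toy data: marker 1 is monomorphic, marker 2 is mostly missing.
genotypes = [
    [0, 2, None, 1],
    [1, 2, None, 0],
    [2, 2, 1, 1],
    [1, 2, None, 2],
]
kept = filter_markers(genotypes, min_maf=0.01, min_call_rate=0.75)
print(kept)
```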

Step 3: Model Training and Validation

- Objective: Develop a prediction model that links genotypes to phenotypes.

- Procedure: Use statistical software or machine learning platforms to train the model. The phenotypic records are the response variable, and the genotype markers are the predictors [1] [7].

- Validation: Assess model accuracy using cross-validation techniques. For a realistic assessment of predicting new families, use Leave-One-Family-Out (LOFO) cross-validation, where the model is trained on all families except one, which is used for validation [5].
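The Leave-One-Family-Out scheme can be sketched as a small generator that yields one train/validation split per family. The function name and the family labels are hypothetical; any model can then be fitted on the training indices and scored on the held-out family.

```python
from collections import defaultdict


def leave_one_family_out(family_ids):
    """Yield (held_out_family, train_idx, test_idx) once per family."""
    by_family = defaultdict(list)
    for idx, fam in enumerate(family_ids):
        by_family[fam].append(idx)
    for fam, test_idx in by_family.items():
        # Train on every individual that does not belong to the held-out family.
        train_idx = [i for i, f in enumerate(family_ids) if f != fam]
        yield fam, train_idx, test_idx


# Hypothetical family label for each individual in the training population.
families = ["F1", "F1", "F2", "F2", "F2", "F3"]
splits = list(leave_one_family_out(families))
for fam, train_idx, test_idx in splits:
    print(fam, train_idx, test_idx)
```

Because no full-sib or half-sib of a validation individual remains in the training set, the resulting accuracy reflects prediction of a genuinely new family rather than within-family prediction.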

Step 4: Genomic Prediction and Selection

- Objective: Predict the performance of unphenotyped selection candidates.

- Procedure: Genotype the breeding population (selection candidates) using the same platform as the training population. Apply the trained model to their genotype data to calculate GEBVs for all candidates.

- Selection: Select top-performing individuals based on their GEBVs for advancement in the breeding program or as parents for the next generation.

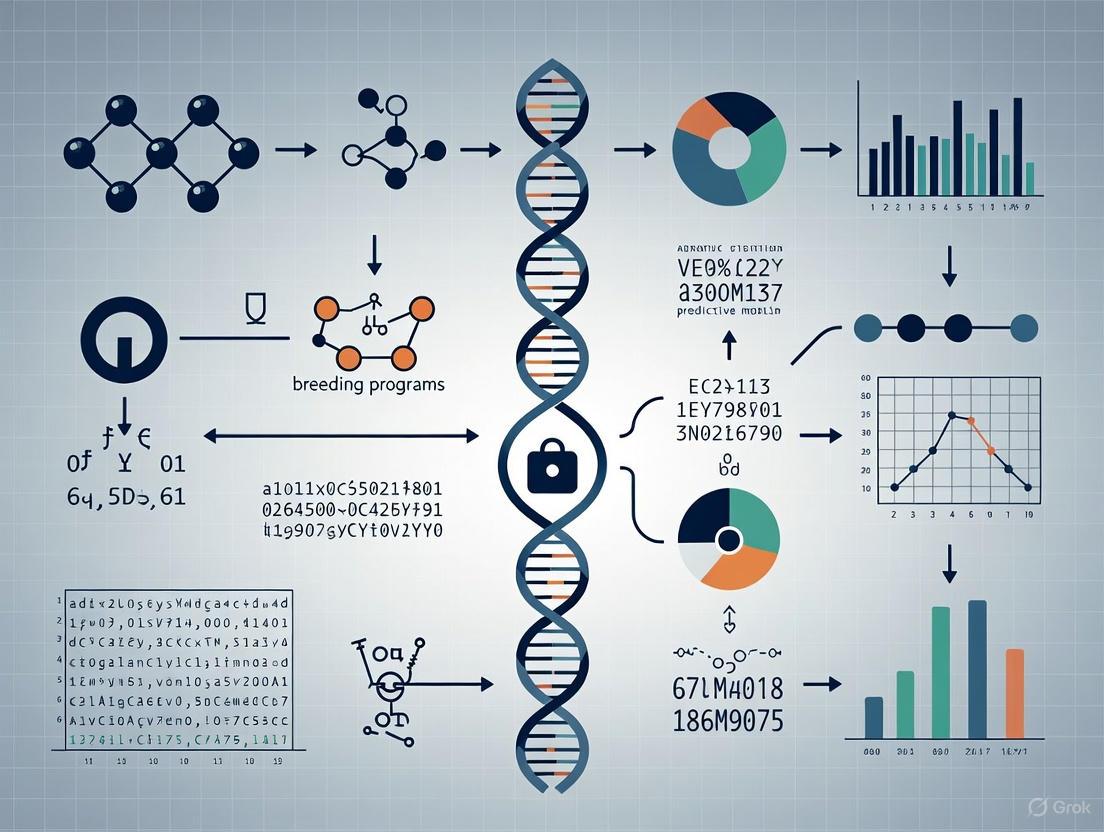

Diagram 1: Genomic Selection Workflow. This diagram outlines the standard steps for implementing genomic selection in a breeding program, from population design to selection.

Protocol for a Cost-Effective GS Approach Using Low-Coverage Sequencing

For species without commercial SNP arrays, lcWGS with imputation provides a cost-effective alternative [9].

Step 1: Library Preparation and Low-Coverage Sequencing

- Procedure: Shear genomic DNA and prepare sequencing libraries. Sequence libraries on a platform like Illumina NovaSeq to achieve an average genome coverage of 1x to 4x [9].

Step 2: Genotype Imputation

- Objective: Infer missing genotypes from low-coverage data.

- Procedure: Use imputation algorithms such as STITCH or Beagle to predict ungenotyped variants. STITCH is particularly useful when a reference haplotype panel is unavailable, as it constructs haplotypes directly from sequencing read data [9].

- Evaluation: Assess imputation accuracy by comparing imputed genotypes with a truth set (e.g., high-coverage sequences from a subset of individuals). Accuracy is influenced by sequencing depth, sample size, and minor allele frequency [9].

Step 3: Genomic Prediction with Imputed Data

- Procedure: Use the imputed genotype dosages to construct a genomic relationship matrix (G matrix) for models like GBLUP. Multi-trait GBLUP models can be employed to improve accuracy for correlated traits [9].

Table 2: Comparison of Common Genomic Prediction Models

| Model Category | Example Models | Underlying Principle | Best Suited For |

|---|---|---|---|

| Parametric / Mixed Models | GBLUP, RR-BLUP | Assumes all markers have a normally distributed effect; uses a genomic relationship matrix [1]. | Traits with additive genetic architecture; computationally efficient [7]. |

| Bayesian Methods | BayesA, BayesB, BayesC | Allows for marker-specific variances, assuming some markers have large effects and others small [8] [4]. | Traits with a mix of small and large-effect QTL; more computationally intensive [4]. |

| Machine Learning (ML) | Regularized Regression (LASSO), Ensemble Methods (Random Forests), Deep Learning | Flexible algorithms that can capture non-linear and interaction effects without pre-specified assumptions [6] [7]. | Complex traits with non-additive effects; performance is data- and trait-dependent [7]. |

The Scientist's Toolkit

Successful implementation of GS relies on a suite of reagents, technologies, and software.

Table 3: Essential Research Reagents and Tools for Genomic Selection

| Item | Function / Description | Application in GS Protocol |

|---|---|---|

| DNA Extraction Kit (e.g., QIAamp DNA Investigator Kit) | Isolates high-quality, pure genomic DNA from tissue samples (blood, leaf). | Essential first step for all downstream genotyping, whether using SNP arrays or sequencing [9]. |

| SNP Genotyping Array | A targeted genotyping platform that assays a predefined set of thousands to millions of SNPs. | Provides high-quality, reproducible genotype data for training and breeding populations. Common in established breeding programs [5]. |

| Illumina Sequencing Library Prep Kit | Prepares DNA fragments for sequencing on Illumina platforms (e.g., NovaSeq). | Required for whole-genome sequencing approaches, including low-coverage WGS [9]. |

| Imputation Software (e.g., STITCH, Beagle) | Infers missing genotypes in a dataset based on a reference panel or read data. | Critical for cost-effective GS using low-coverage sequencing data to create a unified, high-density genotype dataset [9]. |

| Statistical Software (e.g., R or Python with specialized packages) | Provides environment for data QC, model training (GBLUP, Bayesian, ML), and prediction. | Used in the model training and validation step to analyze the relationship between genotype and phenotype [7]. |

Genomic selection fundamentally reshapes and accelerates the breeding cycle. Traditional breeding relies heavily on multi-year, multi-location field trials to accurately measure phenotypic performance, which lengthens the generation interval. In contrast, GS allows breeders to select juvenile animals or seedlings based solely on their GEBVs, drastically reducing the time per cycle [1] [2]. This enables more cycles of selection per unit time, leading to a direct increase in the rate of genetic gain per year [4]. Furthermore, GS increases selection intensity by allowing breeders to evaluate a much larger number of candidates at an early stage with minimal phenotyping costs [1]. The integration of GS is therefore not merely an incremental improvement but a paradigm shift that turbocharges breeding programs. It enhances the utilization of genetic resources and is poised to play a critical role in developing climate-resilient crops and livestock to meet future food security challenges [1] [2].

Genomic Estimated Breeding Values (GEBVs) are a fundamental tool in modern breeding programs, enabling the prediction of an individual's genetic merit based on genome-wide marker data. The traditional additive model, which forms the basis of GEBV calculation, operates on the principle that the genetic value of an individual can be approximated by summing the additive effects of thousands of genetic markers across the genome. This approach assumes that all single nucleotide polymorphisms (SNPs) contribute equally to the genetic variance of the trait, providing a robust framework for genomic selection that has significantly accelerated genetic gains in both plant and animal breeding.

The genomic best linear unbiased prediction (GBLUP) method has emerged as one of the most widely implemented approaches for calculating GEBVs, particularly in dairy cattle, pig, and poultry breeding programs [10] [11]. By leveraging dense marker panels and mixed model methodology, GBLUP efficiently captures the additive genetic relationships between individuals, allowing for more accurate selection decisions earlier in an animal's life. The implementation of GBLUP has reduced generation intervals in dairy cattle from 7 years to less than 2.5 years, dramatically reducing breeding costs while accelerating genetic progress [10].
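The effect of a shorter generation interval on annual gain can be made concrete with the breeder's equation, which is standard quantitative genetics rather than a result from the cited studies. As a rough sketch, assume that selection intensity (i), selection accuracy (r), and the additive genetic standard deviation (\sigma_A) stay unchanged while the generation interval (L) falls from 7 to 2.5 years:

```latex
\Delta G_{\text{year}} = \frac{i \, r \, \sigma_A}{L},
\qquad
\frac{\Delta G_{\text{year}}(L = 2.5)}{\Delta G_{\text{year}}(L = 7)}
  = \frac{7}{2.5} = 2.8
```

Under those assumptions annual gain would rise about 2.8-fold. In practice the accuracy of GEBVs for young animals usually differs from progeny-test accuracy, so the realized ratio will differ.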

Fundamental Principles of the Additive Model

Theoretical Foundation

The traditional additive model for GEBV calculation is rooted in quantitative genetics theory, specifically the infinitesimal model which posits that traits are controlled by an infinite number of genes, each with infinitesimally small effects. In practice, this is implemented using dense genetic markers that cover the entire genome, allowing breeders to capture the collective effect of quantitative trait loci (QTL) without necessarily identifying individual loci.

The GBLUP method implements this additive genetic principle through the statistical model:

y = 1μ + Zg + e [10]

Where:

- y is the vector of phenotypic observations (or deregressed proofs)

- 1 is a vector of ones

- μ is the overall mean

- Z is an incidence matrix linking observations to genomic values

- g is the vector of genomic breeding values, assumed to follow a normal distribution (g \sim N(0,G\sigma_g^2))

- e is the vector of residual errors, assumed to follow (e \sim N(0,I\sigma_e^2))

- G is the genomic relationship matrix

- (\sigma_g^2) and (\sigma_e^2) are the additive genetic and residual variances, respectively

The genomic relationship matrix G is calculated from marker data as:

[ G_{ij} = \frac{1}{m}\sum_{g=1}^{m}\frac{(M_{ig} - 2p_g)(M_{jg} - 2p_g)}{2p_g(1-p_g)} ]

Where (M_{ig}) and (M_{jg}) are the genotypes of individuals i and j at marker g, (p_g) is the allele frequency of marker g, and m is the total number of markers [10].
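A direct NumPy translation of this formula may help when checking a G matrix produced by other software. The sketch assumes 0/1/2 dosages with no missing values and no monomorphic markers, and it estimates allele frequencies from the genotyped sample itself, which is an assumption; the function name and the toy genotype matrix are illustrative.

```python
import numpy as np


def genomic_relationship_matrix(M):
    """G_ij = (1/m) * sum_g (M_ig - 2p_g)(M_jg - 2p_g) / (2 p_g (1 - p_g)).

    M: (n_individuals, n_markers) array of 0/1/2 dosages without missing
    values; monomorphic markers must be removed beforehand.
    """
    M = np.asarray(M, dtype=float)
    p = M.mean(axis=0) / 2.0              # allele frequency per marker (from the sample)
    scale = np.sqrt(2.0 * p * (1.0 - p))  # expected genotype standard deviation per marker
    W = (M - 2.0 * p) / scale             # centered and scaled genotypes
    return W @ W.T / M.shape[1]           # average over the m markers


# Toy genotype matrix: 4 individuals x 4 markers.
M = np.array([
    [0, 1, 2, 1],
    [1, 1, 0, 2],
    [2, 0, 1, 1],
    [1, 2, 1, 0],
])
G = genomic_relationship_matrix(M)
print(np.round(G, 2))
```

Dividing each marker by its own 2p(1-p) inside the sum, as the formula does, gives rare alleles more weight than the alternative of dividing the whole sum by a single pooled term.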

Key Assumptions and Limitations

The traditional additive model operates under several key assumptions that define its applicability and limitations:

- Additivity Assumption: The model assumes purely additive genetic effects, excluding dominance and epistatic interactions [12]

- Equal Variance Contribution: All SNPs are presumed to contribute equally to genetic variance, which may not reflect biological reality where some markers have larger effects [10]

- Normal Distribution: Breeding values are assumed to follow a multivariate normal distribution

- Linkage Disequilibrium: Markers are in linkage disequilibrium with QTL, allowing them to capture the genetic variance

These assumptions make the model computationally efficient and statistically robust, but can limit accuracy for traits with significant non-additive genetic components or those influenced by major genes [12] [10].

Experimental Protocols and Implementation

Standard GBLUP Implementation Workflow

The key steps in implementing GBLUP for GEBV calculation are set out in the protocols below.

Detailed Methodological Protocols

Genotypic Data Processing and Quality Control

Sample Collection and Genotyping

- Collect biological samples (blood, tissue, or semen) from the reference population

- Extract genomic DNA using standardized protocols

- Perform genotyping using appropriate SNP panels (e.g., 50K, 80K, or 150K SNP arrays)

- In poultry breeding programs, whole-genome resequencing may be employed, generating millions of SNPs [11]

Quality Control Procedures

- Remove individuals with call rates < 0.90 using PLINK software [10] [11]

- Remove SNPs with minor allele frequency (MAF) < 0.05 [10] [11]

- Exclude markers that deviate from Hardy-Weinberg equilibrium (HWE test p-value < 1e-6) [10]

- Implement genotype imputation using Beagle v5.0 or similar software to handle missing data [10] [11]

- Validate imputation accuracy using metrics like genotype correlation (COR > 0.9) and concordance rate (CR > 0.9) [10]
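The two imputation metrics named above can be computed for a masked subset of genotypes as follows. This is a minimal sketch with hypothetical function names and toy data; it assumes hard-called 0/1/2 genotypes and that the true genotypes of the masked positions are known.

```python
import math


def concordance_rate(true_calls, imputed_calls):
    """Share of genotypes where the imputed call equals the true call."""
    matches = sum(t == i for t, i in zip(true_calls, imputed_calls))
    return matches / len(true_calls)


def genotype_correlation(true_calls, imputed_calls):
    """Pearson correlation between true and imputed genotypes."""
    n = len(true_calls)
    mean_t = sum(true_calls) / n
    mean_i = sum(imputed_calls) / n
    cov = sum((t - mean_t) * (i - mean_i)
              for t, i in zip(true_calls, imputed_calls))
    var_t = sum((t - mean_t) ** 2 for t in true_calls)
    var_i = sum((i - mean_i) ** 2 for i in imputed_calls)
    return cov / math.sqrt(var_t * var_i)


# Toy example: ten masked genotypes, one of them imputed incorrectly.
truth = [0, 1, 2, 1, 0, 2, 1, 1, 0, 2]
imputed = [0, 1, 2, 1, 0, 2, 1, 2, 0, 2]
cr = concordance_rate(truth, imputed)
cor = genotype_correlation(truth, imputed)
print(cr, round(cor, 3))
```

Concordance can look high for rare variants simply because most genotypes are homozygous for the major allele, which is one reason to report the correlation alongside it.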

Phenotypic Data Preparation

Data Collection and Adjustment

- Collect phenotypic records for target traits in the reference population

- For abdominal fat in chickens, record combined weight of abdominal fat deposits in grams following slaughter [11]

- Adjust raw phenotypes for fixed effects and covariates using the model: y = μ + Line + Sex + Weight + e [11]

- Where Line represents strain effects, Sex is the fixed effect of gender, and Weight is a covariate for live weight

Heritability Estimation

- Estimate variance components using specialized software (ASReml, DMUv6) or mixed model packages [13] [11]

- Calculate heritability as (h^2 = \sigma_g^2 / (\sigma_g^2 + \sigma_e^2))

- Use estimated heritability to inform model parameters and expectations of prediction accuracy

Genomic Relationship Matrix Construction and GEBV Calculation

GRM Implementation

- Calculate the genomic relationship matrix G using quality-controlled SNP data

- Standardize markers using allele frequencies to ensure relationships are comparable to pedigree-based relationships

- Validate G matrix by comparing with pedigree-based relationship matrix where available

Variance Component Estimation and GEBV Prediction

- Estimate variance components using restricted maximum likelihood (REML) approaches

- Solve the mixed model equations to obtain GEBVs for all genotyped individuals

- Validate model fit and check for convergence issues

- Calculate GEBV accuracies using cross-validation or comparison with progeny tests
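As a minimal sketch of the prediction step, the snippet below computes GEBVs from a given G matrix when heritability is treated as known. It does not run REML: the assumed h² stands in for estimated variance components, and the function name `gblup`, the toy G matrix, and the phenotypes are illustrative assumptions. Individuals without a phenotype still receive a GEBV through their genomic relationships with phenotyped individuals.

```python
import numpy as np


def gblup(y, G, phenotyped_idx, h2):
    """BLUP of genomic values for every individual in G.

    y: phenotypes of the individuals listed in phenotyped_idx.
    h2: assumed heritability (stands in for REML variance estimates).
    """
    y = np.asarray(y, dtype=float)
    n_all, n_obs = G.shape[0], len(y)
    Z = np.zeros((n_obs, n_all))
    Z[np.arange(n_obs), phenotyped_idx] = 1.0  # links each record to an individual
    lam = (1.0 - h2) / h2                      # sigma_e^2 / sigma_g^2
    V = Z @ G @ Z.T + lam * np.eye(n_obs)      # phenotypic covariance, in units of sigma_g^2
    ones = np.ones(n_obs)
    # Generalized least squares estimate of the overall mean.
    mu = (ones @ np.linalg.solve(V, y)) / (ones @ np.linalg.solve(V, ones))
    # BLUP: g_hat = G Z' V^{-1} (y - 1 mu)
    g_hat = G @ Z.T @ np.linalg.solve(V, y - mu * ones)
    return mu, g_hat


# Toy relationship matrix: individuals 0-1 are related, as are 2-3.
G = np.array([
    [1.0, 0.5, 0.0, 0.0],
    [0.5, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.5],
    [0.0, 0.0, 0.5, 1.0],
])
y = [12.0, 11.0, 8.0]  # individual 3 has no phenotype
mu, g_hat = gblup(y, G, phenotyped_idx=[0, 1, 2], h2=0.5)
print(round(mu, 3), np.round(g_hat, 3))
```

For large populations, production software solves the equivalent mixed model equations with sparse or iterative methods instead of forming and solving V directly.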

Performance Evaluation and Comparison

Quantitative Performance Metrics

Table 1: Comparative Performance of GBLUP Against Alternative Methods Across Species

| Species/Trait | GBLUP Accuracy | Comparison Method | Comparison Method Accuracy | GBLUP Relative Performance |

|---|---|---|---|---|

| Holstein Cattle [10] | | | | |

| Fat Percentage (FP) | Baseline | WGBLUP_BayesBπ | +4.9% | Inferior |

| Protein Percentage (PP) | Baseline | DPAnet | +1.1% | Inferior |

| Feet & Legs (FL) | Baseline | DPAnet | +1.1% | Inferior |

| Simulated Population [13] | 0.774 | BayesCπ | 0.938 | Inferior |

| Sheep [14] | | | | |

| Growth Traits (h²=0.35) | Varies by strategy | BLUP (Pedigree) | GBLUP up to 62% better | Superior |

| Chicken Abdominal Fat [11] | Baseline | DAWSELF (ML Ensemble) | Significantly higher | Inferior |

Table 2: Factors Influencing GBLUP Prediction Accuracy

| Factor | Impact on Accuracy | Evidence | Practical Implications |

|---|---|---|---|

| Reference Population Size | Positive correlation | Cattle: 16,122 individuals [10] | Larger reference populations improve accuracy |

| Marker Density | Moderate impact | Chicken: 6-9 million SNPs [11] | Higher density improves accuracy but with diminishing returns |

| Trait Heritability | Strong positive correlation | Sheep: h²=0.35 vs h²=0.10 [14] | Higher heritability traits yield better predictions |

| Genetic Architecture | Variable impact | Purely additive vs dominance traits [12] | Superior for additive traits, inferior for non-additive |

| Genotyping Strategy | Significant impact | Sheep: Random vs selective genotyping [14] | Random genotyping outperforms selective approaches |

Computational Efficiency Considerations

GBLUP maintains a crucial advantage in computational efficiency compared to more complex methods:

- Processing Time: GBLUP requires, on average, less than one-sixth the computational time of Bayesian methods or machine learning approaches [10]

- Scalability: Efficiently handles large datasets, with implementations successfully applied to populations exceeding 16,000 individuals [10]

- Software Availability: Widely implemented in multiple software packages, making it accessible for breeding programs of various scales

Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for GBLUP Implementation

| Category | Specific Tool/Reagent | Function/Application | Implementation Example |

|---|---|---|---|

| Genotyping Platforms | BovineSNP50 BeadChip (54,609 SNPs) | Standardized genotyping in cattle [10] | Holstein cattle genomic selection |

| Genotyping Platforms | GeneSeek GGP-bovine 80K SNP BeadChip | Higher density genotyping [10] | Enhanced prediction accuracy |

| Genotyping Platforms | GGP BovineSNP 150K (139,376 SNPs) | High-density genotyping [10] | Maximum marker coverage |

| Quality Control Tools | PLINK | SNP filtering (MAF, HWE, call rates) [10] [11] | Pre-processing of genotype data |

| Quality Control Tools | VCFtools | Variant call format processing [11] | Handling sequencing data |

| Imputation Software | Beagle v5.0 | Genotype imputation [10] [11] | Handling missing genotypes and unifying different SNP panels |

| Statistical Analysis | R software with specialized packages | Statistical implementation of GBLUP [14] | Mixed model analysis |

| Statistical Analysis | ASReml (v4.2) | Variance component estimation [11] | Heritability estimation |

| Statistical Analysis | DMUv6 | Traditional BLUP and variance estimation [13] | Pedigree-based comparison |

| Simulation Tools | AlphaSimR | Breeding program simulation [12] [14] | Method validation and optimization |

Limitations and Considerations

Contextual Limitations of the Additive Model

The traditional additive GBLUP model demonstrates specific limitations that researchers must consider when selecting genomic prediction approaches:

Genetic Architecture Constraints

- Performs suboptimally for traits with significant dominance effects, where genomic predicted cross-performance (GPCP) models are better at identifying optimal parental combinations [12]

- Assumption of equal SNP contributions limits accuracy for traits influenced by major genes [10]

- Unable to effectively capture non-additive genetic variation, which can be substantial for certain traits and populations

Implementation Challenges

- Accuracy substantially influenced by reference population design and composition [14]

- Sensitive to pedigree errors and relationship misidentification, though genomic information can mitigate some of these effects [14]

- Performance varies significantly across species and breeding systems, with different optimal implementation strategies

Strategic Applications

Despite these limitations, GBLUP remains the foundational approach for genomic selection in many contexts:

- Ideal Applications: Traits with predominantly additive genetic architecture, programs with large reference populations, initial implementation of genomic selection

- Complementary Approaches: Bayesian methods for traits with major genes, machine learning for complex non-linear relationships, GPCP for hybrid breeding schemes [12] [10] [11]

- Future Directions: Ensemble methods combining GBLUP with other approaches show promise for enhancing prediction accuracy across diverse genetic architectures [15]

The traditional additive model for GEBV calculation represents a robust, computationally efficient approach that continues to form the backbone of genomic selection in many breeding programs. While newer methods may offer advantages for specific applications, GBLUP's simplicity, interpretability, and proven effectiveness ensure its ongoing relevance in agricultural genomics research and application.

Genomic Predicted Cross-Performance (GPCP) represents a significant advancement in genomic prediction for plant and animal breeding. While traditional genomic selection has predominantly focused on estimating additive breeding values (GEBVs), GPCP utilizes a mixed linear model that incorporates both additive and directional dominance effects to predict the performance of specific parental combinations [12]. This approach provides a more comprehensive framework for breeding programs aiming to maximize genetic gain, particularly for traits influenced by non-additive genetic effects and in species where clonal propagation is prevalent.

The fundamental advantage of GPCP lies in its ability to effectively identify optimal parental combinations and enhance crossing strategies, especially for traits with significant dominance effects [12]. For clonally propagated crops where inbreeding depression and heterosis are prevalent—and reciprocal recurrent selection is impractical—GPCP offers a robust solution that maintains a higher proportion of dominance variance compared to individual-based selection on GEBV alone [12]. This protocol details the implementation, application, and analysis of GPCP within breeding programs.

Computational Protocol for GPCP Analysis

Software Environment and Installation

The GPCP tool is implemented within the BreedBase environment and is also available as an R package, gpcp, which can be installed directly from GitHub [16].

The gpcp package depends on several R packages: sommer for mixed model analysis, dplyr for data manipulation, and AGHmatrix for constructing genomic relationship matrices [16].

Data Preparation and Input Requirements

Successful GPCP analysis requires proper formatting of both genotypic and phenotypic data:

Phenotypic Data Format (CSV file):

- The phenotype file should be a data frame containing at minimum columns for genotype IDs and traits of interest.

- Fixed effects (e.g., location, replication) should be included as separate columns.

- Missing data should be appropriately coded (e.g., as NA).

Genotypic Data Format:

- Acceptable formats include VCF (Variant Call Format) or HapMap.

- For polyploid species, allele dosages must be accurately represented (from 0 up to the ploidy level under polysomic inheritance).

- The data should undergo standard quality control: filtering for minor allele frequency, call rate, and Hardy-Weinberg equilibrium.

Running the GPCP Analysis

The core function runGPCP() executes the genomic prediction of cross performance. See the package documentation for the full list of parameters [16].

Output Interpretation

The runGPCP() function returns a data frame containing:

- Parent1: The first parent genotype ID

- Parent2: The second parent genotype ID

- CrossPredictedMerit: The predicted merit of the cross based on the weighted index of traits [16]

The output is automatically sorted by descending CrossPredictedMerit, enabling breeders to immediately identify the most promising parental combinations.
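How a weighted trait index turns into a ranked cross table can be illustrated with a short sketch. This is not the gpcp implementation: only the column names Parent1, Parent2, and CrossPredictedMerit come from the description above, while the trait names, weights, and per-trait predictions are hypothetical.

```python
# Hypothetical index weights and per-trait predicted cross means.
weights = {"yield": 0.7, "dry_matter": 0.3}
predicted = [
    {"Parent1": "P1", "Parent2": "P2", "yield": 1.2, "dry_matter": 0.4},
    {"Parent1": "P1", "Parent2": "P3", "yield": 0.8, "dry_matter": 1.5},
    {"Parent1": "P2", "Parent2": "P3", "yield": 0.2, "dry_matter": 0.1},
]


def cross_merit(row, weights):
    """Weighted sum of the predicted trait values for one cross."""
    return sum(w * row[trait] for trait, w in weights.items())


# Build the output table and sort it by descending merit.
table = sorted(
    (
        {
            "Parent1": r["Parent1"],
            "Parent2": r["Parent2"],
            "CrossPredictedMerit": cross_merit(r, weights),
        }
        for r in predicted
    ),
    key=lambda r: r["CrossPredictedMerit"],
    reverse=True,
)
for row in table:
    print(row)
```

In this toy case the cross with the highest yield prediction is not ranked first, because the index also rewards the second trait.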

Research Reagent Solutions

Table 1: Essential research reagents and computational tools for GPCP implementation.

| Item Name | Function/Application | Specifications |

|---|---|---|

| SNP Genotyping Array | Genome-wide marker data generation | 58K SC Affymetrix Axiom SNP array for sugarcane [17]; EuChip60K for Eucalyptus [18] |

| gpcp R Package | Core GPCP analysis | Implements additive and dominance effects model; supports diploid and polyploid species [16] |

| BreedBase Platform | Integrated breeding data management | Web-based database for storing phenotypic and genotypic data; supports GPCP implementation [12] |

| sommer R Package | Mixed model analysis | Fits mixed models with additive and dominance relationship matrices; used by gpcp [12] [16] |

| AGHmatrix R Package | Genomic relationship matrices | Computes additive and dominance genomic relationship matrices for diploid and polyploid species [16] |

| AlphaSimR Package | Breeding program simulation | Simulates breeding programs for testing GPCP strategies; generates synthetic datasets [12] |

Experimental Applications and Performance Data

Performance in Clonal Crops

GPCP has demonstrated significant advantages in clonally propagated crops where non-additive effects play a substantial role:

Table 2: GPCP performance in sugarcane breeding for key agronomic traits.

| Trait | Traditional GEBV | GPCP Approach | Improvement |

|---|---|---|---|

| Tonnes Cane per Hectare (TCH) | Baseline | +57% | 57% [17] |

| Commercial Cane Sugar (CCS) | Baseline | +12% | 12% [17] |

| Fibre Content | Baseline | +16% | 16% [17] |

In sugarcane, non-additive effects account for almost two-thirds of the total genetic variance for TCH, with average heterozygosity having a major impact on this trait [19]. The extended-GBLUP model (which includes non-additive effects) improved prediction accuracies by at least 17% for TCH compared to models with only additive effects [19].

Simulation Studies

A comprehensive simulation study conducted using the AlphaSimR package evaluated GPCP across different genetic architectures [12]:

- Population sizes: 250, 500, 750, and 1000 individuals

- Dominance architectures: Mean dominance degree (meanDD) values of 0, 0.5, 1, 2, and 4

- Heritability settings: Ranging from 0.1 to 0.6

The simulation modeled a multi-stage clonal pipeline with progressively higher heritability at each stage (clonal evaluation: h² = 0.15; preliminary yield trial: h² = 0.25; advanced yield trial: h² = 0.45; uniform yield trial: h² = 0.65) [12]. GPCP proved superior to classical GEBVs for traits with significant dominance effects, effectively identifying optimal parental combinations across these diverse scenarios.

Training Set Optimization

Research in tetraploid potato has revealed important considerations for training set composition in GPCP:

- A training set of 280-480 clones with 10,000 markers was sufficient for robust predictions [20].

- Prediction within a specific market segment led to higher accuracy compared to adding clones from other market segments [20].

- Including clones with low trait values (lowest 10%) in a training set predominantly composed of high-performing clones can improve prediction accuracy by better capturing the population genetic variance [20].

Workflow and Strategic Implementation

Diagram: GPCP Analysis Workflow

Diagram: GPCP Decision Framework for Breeding Programs

Best Practices and Technical Notes

Model Specifications

The GPCP model implemented follows the mathematical formulation presented by [12]:

[ y = X\beta + Z_a a + Z_d d + W\delta + \varepsilon ]

Where:

- (y) is the vector of phenotype means

- (X) is an incidence matrix for fixed effects

- (\beta) represents the vector of fixed effects

- (Z_a) is the matrix of allele dosages for additive effects

- (a) is the vector of additive effects

- (Z_d) is the matrix for dominance effects

- (d) is the vector of dominance effects

- (W) represents the vector with inbreeding coefficients

- (\delta) is a parameter indicating the effect of genomic inbreeding on performance

- (\varepsilon) is the vector of residual effects

The random effects (a), (d), and (\varepsilon) are assumed to be normally distributed with mean zero and variances (\sigma_a^2), (\sigma_d^2), and (\sigma_\varepsilon^2), respectively [12].
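Once additive and dominance marker effects have been estimated, the expected value of a cross follows from Mendelian segregation. The sketch below shows this for a diploid biparental cross. It is an illustration of the principle and not the gpcp code; the effect values and parent genotypes are hypothetical, and fixed effects and the inbreeding term are left out.

```python
def expected_cross_value(dosage_p1, dosage_p2, add_effects, dom_effects):
    """Expected progeny genetic value for a diploid biparental cross.

    dosage_p1, dosage_p2: parental allele dosages (0, 1, 2) per marker.
    add_effects: additive effect per allele copy; dom_effects: effect of
    being heterozygous at the marker.
    """
    total = 0.0
    for x1, x2, a, d in zip(dosage_p1, dosage_p2, add_effects, dom_effects):
        p1, p2 = x1 / 2, x2 / 2                  # chance each parent transmits the counted allele
        exp_dosage = p1 + p2                     # expected allele dosage in the progeny
        exp_het = p1 * (1 - p2) + p2 * (1 - p1)  # chance the progeny is heterozygous
        total += a * exp_dosage + d * exp_het
    return total


# Hypothetical marker effects and two candidate parents.
add_effects = [1.0, 0.5, -0.2]
dom_effects = [0.4, 0.0, 0.6]
parent_1 = [2, 0, 1]
parent_2 = [0, 2, 1]
value = expected_cross_value(parent_1, parent_2, add_effects, dom_effects)
print(value)
```

Two parents with identical mean GEBVs can therefore produce crosses of different expected value, because the dominance term depends on how their genotypes complement each other.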

Ploidy Considerations

The GPCP implementation supports both diploid and polyploid species:

- For diploids: allele dosages are 0, 1, 2; heterozygosity is coded as 0 (homozygous) or 1 (heterozygous)

- For polyploids (tetraploids, hexaploids): allele dosages range 0-4 or 0-6; heterozygosity represents the proportion of heterozygous allele combinations [12]

For highly polyploid species like sugarcane, a pseudo-diploid parameterization can provide appropriate approximation when exact dosage information is uncertain [19].
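The coding rules above can be illustrated with a short Python sketch. The pairwise definition of polyploid heterozygosity used here (heterozygous allele pairs divided by all allele pairs at a locus) is an assumption chosen to match the description; it is not taken from the GPCP package, and the function names are hypothetical.

```python
from math import comb


def heterozygosity(dosage: int, ploidy: int = 2) -> float:
    """Proportion of heterozygous allele pairs at one locus.

    Assumed definition: of the C(ploidy, 2) possible allele pairs,
    dosage * (ploidy - dosage) pair one counted allele with one
    alternative allele.
    """
    if not 0 <= dosage <= ploidy:
        raise ValueError("dosage must lie between 0 and ploidy")
    return dosage * (ploidy - dosage) / comb(ploidy, 2)


def heterozygosity_matrix(dosages, ploidy=2):
    """Apply the per-locus coding to an individuals x markers dosage matrix."""
    return [[heterozygosity(d, ploidy) for d in row] for row in dosages]


# Diploid coding reduces to 0 (homozygous) or 1 (heterozygous).
diploid = [[0, 1, 2], [1, 1, 0]]
# Tetraploid coding yields intermediate proportions.
tetraploid = [[0, 1, 2, 3, 4]]

print(heterozygosity_matrix(diploid, ploidy=2))
print(heterozygosity_matrix(tetraploid, ploidy=4))
```

Under this definition the diploid case reproduces the 0/1 coding exactly, which is why a single function can serve both ploidy levels.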

When to Implement GPCP

GPCP provides the greatest advantage over traditional GEBV-based selection when:

- Traits exhibit significant dominance effects and inbreeding depression [12] [21]

- Breeding programs focus on clonally propagated crops where both additive and non-additive effects can be exploited [17] [21]

- Species biology or cost prevents the use of reciprocal recurrent selection [12]

- The breeding goal is to maximize short to medium-term genetic gain while maintaining genetic diversity [21]

Genomic Predicted Cross-Performance represents a sophisticated approach to parental selection that integrates both additive and dominance genetic effects. The implementation of GPCP within the BreedBase environment and as an R package makes this powerful tool accessible to breeding programs across different species and ploidy levels. Through its ability to predict the performance of specific parental combinations rather than individual breeding values, GPCP enables more informed crossing decisions, potentially accelerating genetic gain for traits with significant non-additive genetic components. The protocols and applications detailed in this document provide a foundation for implementing GPCP in both research and commercial breeding contexts.

Genomic prediction has revolutionized plant and animal breeding by enabling the selection of superior genotypes based on molecular marker information. Two predominant models in this field are Genomic Estimated Breeding Values (GEBV) and Genomic Predicted Cross-Performance (GPCP). While GEBV focuses on additive genetic effects, GPCP incorporates both additive and non-additive effects to predict the performance of specific parental combinations. This article provides a structured comparison of these approaches and offers practical protocols for their implementation, framed within the context of optimizing breeding programs for genetic gain.

Core Concept Comparison: GEBV vs. GPCP

The choice between GEBV and GPCP fundamentally hinges on the breeding program's objectives, the reproductive biology of the species, and the genetic architecture of target traits. The table below summarizes the primary characteristics of each model.

Table 1: Fundamental Characteristics of GEBV and GPCP Models

| Feature | Genomic Estimated Breeding Value (GEBV) | Genomic Predicted Cross-Performance (GPCP) |

|---|---|---|

| Genetic Effects Captured | Additive effects only [12] [22] | Additive and directional dominance effects [12] [17] |

| Primary Output | Breeding value of an individual genotype [12] | Predicted mean genetic value of a specific cross's progeny [12] [22] |

| Primary Breeding Goal | Long-term increase of additive genetic value in a population [22] | Maximizing the total genetic value (including heterosis) of immediate progeny, particularly in clonal or hybrid programs [22] [17] |

| Optimal Use Cases | Programs with negligible dominance effects; longer time horizons focusing on additive gain [12] [22] | Traits with significant dominance, inbreeding depression, or heterosis; clonally propagated crops; hybrid breeding [12] [19] [22] |

Decision Framework: Selecting the Appropriate Model

The decision to implement GEBV or GPCP is multi-faceted. The following diagram and subsequent table outline the key factors to consider.

Diagram 1: GEBV vs. GPCP Decision Workflow

Table 2: Detailed Decision Factors for Model Selection

| Decision Factor | Favor GEBV | Favor GPCP |

|---|---|---|

| Trait Genetic Architecture | Purely additive traits or traits with negligible dominance effects [12] [22]. | Traits with significant dominance variance, inbreeding depression, and heterosis [12] [19] [22]. |

| Species Biology & Propagation | Inbred line development; species where controlled crossing is difficult or impossible [12]. | Clonally propagated crops (e.g., sugarcane, potato, strawberry) and hybrid crops [12] [22] [17]. |

| Program Time Horizon | Longer-term programs focused on sustained additive genetic gain [12] [22]. | Programs aiming to maximize the performance of the immediate progeny generation [22]. |

| Quantitative Evidence | Simulation studies show GEBV is sufficient when mean dominance deviation is 0 [12]. | For traits with dominance, GPCP produces faster genetic gain and better maintains heterozygosity [12] [22]. In sugarcane, models including non-additive effects improved prediction accuracy for tonnes of cane per hectare (TCH) by 17% [19]. |

Experimental Protocols

Protocol 1: Implementing a GPCP Analysis

This protocol details the steps for implementing GPCP analysis using the R package or the BreedBase environment, as presented in [12].

1. Input Data Preparation:

- Genotypic Data: A matrix of allele dosages (e.g., 0, 1, 2 for diploids) for all training and candidate individuals.

- Phenotypic Data: A vector of phenotype means (e.g., BLUPs) for the training population.

- Model Inputs: Linear selection index weights for traits, and specification of fixed or random factors.

2. Model Fitting:

Fit the GPCP mixed linear model using a package like sommer in R [12]:

[ \textbf{y} = \textbf{X}\beta + \textbf{Z}a + \textbf{W}d + \textbf{S}h + \epsilon ]

Where:

- y is the vector of phenotype means.

- X is an incidence matrix for fixed effects ((\beta)).

- Z is a matrix of allele dosages for additive effects ((a)).

- W is a matrix capturing heterozygosity for dominance effects ((d)).

- S is a vector of inbreeding coefficients, and (h) is the effect of genomic inbreeding.

- (\epsilon) is a vector of residual effects.

3. Cross-Performance Prediction: For each potential parental cross, predict the mean genetic value of the F1 progeny using the estimated additive and dominance effects from the model. The prediction is based on the differences in allele frequencies between the two parents, which allows for the maximization of heterosis [12].

4. Parent and Cross Selection: Select the top-performing parental combinations based on their predicted GPCP scores to generate the next breeding cycle.
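Steps 3 and 4 can be sketched in Python for the diploid case. This is an illustration under stated assumptions rather than the GPCP package's implementation: the marker effects are made-up numbers, the inbreeding term is omitted, and `predict_cross_mean` is a hypothetical helper. Each parent transmits the counted allele with probability equal to half its dosage, so the expected progeny dosage and the probability of a heterozygous progeny follow directly from the two parental allele frequencies.

```python
from itertools import combinations


def predict_cross_mean(parent1, parent2, add_eff, dom_eff, intercept=0.0):
    """Expected genetic value of diploid F1 progeny from one cross.

    parent1, parent2: allele dosages (0, 1, 2) per marker.
    add_eff: additive effect per copy of the counted allele.
    dom_eff: effect of being heterozygous at the marker.
    """
    total = intercept
    for x1, x2, a, d in zip(parent1, parent2, add_eff, dom_eff):
        p1, p2 = x1 / 2, x2 / 2                  # gamete allele frequencies
        exp_dosage = p1 + p2                     # expected progeny dosage
        exp_het = p1 * (1 - p2) + p2 * (1 - p1)  # P(progeny is heterozygous)
        total += a * exp_dosage + d * exp_het
    return total


# Hypothetical candidates and marker effects, for illustration only.
parents = {"A": [2, 0, 1], "B": [0, 2, 1], "C": [2, 2, 0]}
add_eff = [0.5, -0.2, 0.3]
dom_eff = [0.4, 0.6, 0.1]

scores = {
    (i, j): predict_cross_mean(parents[i], parents[j], add_eff, dom_eff)
    for i, j in combinations(sorted(parents), 2)
}
ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
for cross, value in ranked:
    print(cross, round(value, 3))
```

The heterozygosity term is largest when the parents carry opposite alleles, which is how the ranking rewards complementary parents when dominance effects are positive.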

Protocol 2: A Simulation-Based Comparison Study

This protocol outlines a method to empirically compare GEBV and GPCP within a breeding program context, based on simulation studies [12] [22].

1. Population Simulation:

- Use simulation software such as AlphaSimR [12] to generate a founder population with realistic linkage disequilibrium and allele frequencies.

- Define multiple traits with varying degrees of dominance deviation (e.g., mean DD of 0, 0.5, 1, 2, 4) and different heritabilities.

2. Breeding Program Simulation:

- Model a multi-stage clonal pipeline (e.g., Clonal Evaluation → Preliminary Yield Trial → Advanced Yield Trial) with progressive selection [12].

- At the end of each cycle, use the candidate population as parents for the next generation.

- Apply both GEBV and GPCP selection methods independently in parallel simulations.

- For GEBV, select top individuals based on additive marker effects.

- For GPCP, select top parental crosses based on predicted cross merit.

3. Metric Tracking and Comparison:

- Run the simulation for multiple cycles (e.g., 40 cycles) [12].

- Track key metrics per cycle for both methods:

- Genetic Gain: The mean genetic value of the population.

- Usefulness Criterion (UC): Combines mean genotypic value with selection intensity and genetic standard deviation.

- Population Heterozygosity (H): To monitor genetic diversity.

- Calculate the difference in these metrics ((\Delta UC = UC_{GPCP} - UC_{GEBV})) to determine the superior method under different genetic architectures.
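The per-cycle metrics can be computed with a few lines of Python. The sketch assumes the common form UC = mean + i × genetic standard deviation (selection accuracy taken as 1) and a diploid 0/1/2 coding for heterozygosity; the genetic values are invented, and in a real study they would come from the simulation output.

```python
from statistics import mean, pstdev


def usefulness_criterion(genetic_values, selection_intensity):
    """UC = mean + i * sd of genetic values (assumed form, accuracy = 1)."""
    return mean(genetic_values) + selection_intensity * pstdev(genetic_values)


def mean_heterozygosity(dosages):
    """Share of heterozygous calls (dosage 1) in a diploid population."""
    calls = [d for row in dosages for d in row]
    return sum(d == 1 for d in calls) / len(calls)


# Invented genetic values for one cycle under each selection method.
gv_gpcp = [10.0, 12.0, 14.0]
gv_gebv = [9.0, 11.0, 13.0]
intensity = 1.755  # approximate standardized intensity for selecting the top 10%

delta_uc = (usefulness_criterion(gv_gpcp, intensity)
            - usefulness_criterion(gv_gebv, intensity))
print("Delta UC:", round(delta_uc, 3))
print("H:", mean_heterozygosity([[0, 1, 2], [1, 1, 0]]))
```

A positive difference in a given cycle favors GPCP for that genetic architecture; tracking it across cycles shows whether the advantage persists.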

The Scientist's Toolkit

Table 3: Essential Research Reagents and Software for Genomic Prediction

| Tool Name | Type/Category | Primary Function | Application in Protocol |

|---|---|---|---|

| BreedBase [12] | Integrated Platform | A database and tool platform for managing breeding data. | Used for seamless prediction, saving, and management of crosses in GPCP. |

| GPCP R Package [12] | Statistical Software | An R package that implements the Genomic Predicted Cross-Performance model. | Direct implementation of the GPCP model as described in Protocol 1. |

| AlphaSimR [12] | Simulation Software | An R package for stochastic simulations of breeding programs and genomic data. | Generating founder populations and simulating breeding programs as in Protocol 2. |

| sommer [12] | Statistical Software | An R package for fitting mixed linear models using BLUP. | Used for fitting the GPCP model with additive and dominance relationship matrices. |

| Extended-GBLUP Model [19] [17] | Statistical Model | A genomic model that accounts for additive, dominance, and heterozygosity effects. | The core statistical model for predicting clonal performance in GPCP. |

| GBLUP Model [19] [23] | Statistical Model | A standard genomic model that accounts for additive genetic effects using a genomic relationship matrix. | The standard model for estimating GEBVs for comparison against GPCP. |

The Critical Role of Training Populations and Foundational Data Infrastructure

Genomic Prediction (GP) has revolutionized plant and animal breeding by enabling the selection of individuals based on their predicted genetic merit, significantly accelerating genetic gains for complex traits [24]. At the heart of any successful GP pipeline lies a robust foundational layer: a high-quality training population and a scalable data infrastructure. The training population, comprising individuals with both genotypic and phenotypic data, serves as the reference set used to build statistical models that predict the performance of new, un-phenotyped individuals [24] [25]. The accuracy of these models, and therefore the efficiency of the entire breeding program, is critically dependent on the size, genetic diversity, and phenotypic reliability of this foundational dataset. This application note details the protocols for constructing and managing these essential resources, framing them within the broader context of a modern, data-driven breeding strategy.

Core Principles and Quantitative Benchmarks

The relationship between training population design and prediction accuracy is well-established. Key factors include population size, genetic relatedness, and trait architecture. The following table synthesizes empirical findings on how these factors influence predictive performance across different species.

Table 1: Impact of Training Population Design on Genomic Prediction Accuracy

| Species | Trait | Key Finding on Training Population | Reported Impact on Accuracy | Source |

|---|---|---|---|---|

| Barley | Grain Yield & Quality | Using RNA-Seq data with parental WGS data for prediction | Achieved prediction abilities of 0.73 - 0.78; outperformed 50K SNP array in inter-population predictions. | [25] |

| Norway Spruce | Growth & Wood Quality | Preselection of ~100 top GWAS SNPs was optimal for one trait; for others, 2000-4000 SNPs were best. | Predictive ability was maximized with marker preselection for some traits. | [26] |

| Multi-Species Benchmark | Various | Benchmarking across 10 species (barley, maize, rice, pig, etc.) showed accuracy is highly species- and trait-dependent. | Mean prediction accuracy (r) was 0.62, with a range from -0.08 to 0.96. | [27] |

| Wheat | Grain Yield | Machine learning (VBS-ML) applied to large populations (2,665 - 10,375 lines) improved accuracy. | VBS-ML consistently improved accuracy over legacy linear models on large datasets. | [28] |

Protocol: Designing and Implementing a Training Population

Protocol 1: Construction of a Representative Training Population

Objective: To establish a training population that captures the genetic diversity of the target breeding program and enables accurate genomic predictions.

Materials and Reagents:

- Genetic Material: A core collection of lines or individuals representative of the current and future genetic pools of the breeding program.

- Genotyping Platform: High-density SNP array, Genotyping-by-Sequencing (GBS) kit, or whole-genome sequencing services.

- Phenotyping Resources: Controlled environment growth facilities, field trial plots, and high-throughput phenotyping equipment (e.g., for spectral imaging).

- Data Management System: A secure database (e.g., based on MySQL or PostgreSQL) for storing and version-controlling genotypic and phenotypic data.

Methodology:

- Population Sizing and Composition: The training population should be as large as is feasible. Accuracy typically increases with population size, with diminishing returns as the population grows. For initial implementation, a minimum of 300-500 individuals is recommended, with larger populations (>1000) being ideal for complex, polygenic traits [24] [27].

- Maximizing Genetic Diversity: Select individuals to maximize the captured genetic diversity and relatedness to the selection candidate pool. This can be achieved by ensuring the population includes founders and key ancestors from the breeding program.

- Phenotyping for Heritability: Phenotypic data must be collected with high precision. Employ replicated field trials across multiple locations and years to obtain Best Linear Unbiased Estimators (BLUEs) or Best Linear Unbiased Predictors (BLUPs), which remove non-genetic effects and provide a better estimate of the genetic value [25] [28].

- Genotypic Data Quality Control:

- Model Training and Validation: Use a cross-validation strategy (e.g., 5-fold cross-validation) within the training population to evaluate the expected accuracy of the genomic prediction model before deploying it on selection candidates [24] [7].

Workflow Diagram: The following diagram illustrates the integrated workflow for building and utilizing a genomic prediction model.

Protocol 2: Leveraging Machine Learning on Large-Scale Data

Objective: To implement a machine learning-based GP model that can handle large-scale genotypic data and capture non-additive genetic effects.

Rationale: While linear mixed models (e.g., GBLUP) are standard, machine learning (ML) methods offer advantages in modeling complex patterns and interactions, especially as data size increases [28] [7] [6].

Materials and Reagents:

- Computational Resources: High-performance computing (HPC) cluster or a workstation with substantial RAM and multi-core GPUs.

- Software: Python with libraries like TensorFlow/Keras or PyTorch for deep learning, and scikit-learn for other ML methods. R with packages like rrBLUP or BGLR for benchmark comparisons.

Methodology:

- Data Preparation: Standardize both genotypic (markers coded as 0,1,2) and phenotypic data. Split the data into training (e.g., 80%) and validation (20%) sets, ensuring families are not split across sets to avoid biased accuracy estimates.

- Model Selection and Sparsity:

- Consider ML models that introduce sparsity to handle high-dimensionality. For example, the VBS-ML (Variational Bayesian Sparsity in Machine Learning) architecture uses a Bayesian sparsity layer for feature selection of important markers, reducing over-parameterization in the initial network layers [28].

- Compare the ML model's performance against legacy linear models (e.g., GBLUP, BayesA) as a benchmark.

- Model Training and Tuning: Train the ML model, tuning hyperparameters (e.g., learning rate, number of layers and nodes, sparsity parameters) using the validation set. This process can be computationally intensive but is crucial for optimal performance [7].

- Accuracy Assessment: Evaluate the final model on a held-out test set that was not used for training or hyperparameter tuning. The primary metric is typically the Pearson correlation coefficient (r) between the predicted and observed values [27] [7].
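The family-aware split described in the data preparation step can be sketched as follows. This is a minimal Python illustration, assuming each individual carries a family label; `split_by_family` is a hypothetical helper, and the same idea extends to carving out a separate test set.

```python
import random


def split_by_family(family_ids, valid_fraction=0.2, seed=1):
    """Return (train_idx, valid_idx) with every family kept on one side."""
    families = sorted(set(family_ids))
    rng = random.Random(seed)
    rng.shuffle(families)

    target = valid_fraction * len(family_ids)
    valid_families, n_valid = set(), 0
    for fam in families:
        if n_valid >= target:
            break
        valid_families.add(fam)          # whole family goes to validation
        n_valid += family_ids.count(fam)

    train = [i for i, f in enumerate(family_ids) if f not in valid_families]
    valid = [i for i, f in enumerate(family_ids) if f in valid_families]
    return train, valid


# Five hypothetical full-sib families of four individuals each.
family_ids = ["F1"] * 4 + ["F2"] * 4 + ["F3"] * 4 + ["F4"] * 4 + ["F5"] * 4
train_idx, valid_idx = split_by_family(family_ids)
print(len(train_idx), "training individuals;", len(valid_idx), "validation individuals")
```

Because families differ in size, the realized validation share can deviate from the requested fraction; the split guarantees only that no family appears on both sides.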

Table 2: Key Reagents and Tools for Genomic Prediction Infrastructure

| Category | Item | Specific Example / Function | Application in GP Workflow |

|---|---|---|---|

| Genotyping | SNP Array | Custom 20K Affymetrix array (wheat) [28] | High-quality, standardized genome-wide marker data. |

| Genotyping | Genotyping-by-Sequencing (GBS) | Low-cost, high-throughput marker discovery [27]. | Ideal for species without a commercial array. |

| Transcriptomics | RNA-Seq | VAHTS Universal V6 RNA-seq Library Prep Kit [25]. | Provides gene expression data as a predictor; can also be a source for SNP calling. |

| Phenotyping | Spatial Linear Mixed Models | Software for field trial analysis (e.g., ASReml, sommer) [28]. | Derives adjusted yield predictions by accounting for spatial field variation. |

| Data Management | Curated Benchmark Datasets | EasyGeSe database [27]. | Provides standardized datasets for method benchmarking and validation. |

| Software & Algorithms | Machine Learning Platforms | TensorFlow, PyTorch for implementing VBS-ML and other DL architectures [28] [6]. | Building and training complex, non-linear prediction models. |

| Software & Algorithms | Traditional GP Software | BGLR, rrBLUP in R [24] [7]. | Implementing standard Bayesian and GBLUP models for baseline comparison. |

A meticulously designed training population and a robust, scalable data infrastructure are not merely supportive elements but are the very foundation upon which successful genomic prediction is built. As breeding programs continue to generate ever-larger multi-omics datasets, the principles outlined here—emphasizing data quality, appropriate population structure, and the integration of advanced statistical machine learning methods—will be critical for unlocking greater genetic gains and ensuring future food security.

Model Architectures in Action: From Bayesian Alphabets to Multi-Omics Integration

Genomic selection (GS) has revolutionized breeding programs by using genome-wide molecular markers to predict the genetic value of individuals, thereby accelerating genetic gain and reducing breeding cycles [29]. At the heart of GS are statistical models capable of handling high-dimensional genomic data, among which the Bayesian Alphabet and rrBLUP represent two fundamental approaches. The Bayesian Alphabet encompasses a family of methods (including BayesA, BayesB, and BayesC) that employ Bayesian statistical frameworks with different prior distributions for marker effects [30]. These models are particularly valued for their flexibility in accommodating various genetic architectures. In parallel, rrBLUP (ridge regression BLUP), which is equivalent to Genomic Best Linear Unbiased Prediction (GBLUP), operates under the assumption that all markers contribute equally to genetic variation [31] [27]. This article provides a detailed practical guide to implementing these core genomic prediction models, framed within the context of modern breeding programs. We present structured comparisons, experimental protocols, and essential tools to enable researchers to effectively apply these methods in both plant and animal breeding contexts.

Model Foundations and Comparative Analysis

Theoretical Underpinnings

The Bayesian Alphabet models share a common Bayesian framework but differ primarily in their assumptions about the distribution of marker effects, which is reflected in their prior specifications. BayesA assumes that all single nucleotide polymorphisms (SNPs) have a non-zero effect and that these effects follow a t-distribution, making it suitable for traits influenced by many genes of small effect [30] [29]. BayesB introduces a more sophisticated architecture by assuming that only a proportion of SNPs (π) have non-zero effects, with the remaining markers having zero effect, making it particularly effective for traits governed by a few genes with large effects [30] [29]. BayesC is similar to BayesB but estimates the proportion π of markers with non-zero effects from the data itself, rather than setting it as a fixed parameter [30] [29]. This model represents a balance between the assumptions of BayesA and BayesB.

In contrast, rrBLUP/GBLUP takes a different approach by using a linear mixed model that replaces the pedigree-based relationship matrix with a genomic relationship matrix (G) constructed from marker data [31] [27]. This model assumes that all marker effects are drawn from the same normal distribution, so every marker contributes equally to the genetic variance a priori. This simplifies computation but may be suboptimal for traits controlled by a few major genes.
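The differences between these priors are easiest to see by sampling from them. The Python sketch below draws marker effects from simplified versions of each prior; the hyperparameter values (degrees of freedom, scale, π) are arbitrary assumptions for illustration, and π denotes the proportion of markers with non-zero effects, following the convention used in this article.

```python
import random


def sample_bayes_a(rng, df=4.0, scale=0.1):
    """Scaled Student-t draw: every marker receives a non-zero effect."""
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square with df degrees of freedom
    return scale * z / (chi2 / df) ** 0.5


def sample_bayes_b(rng, pi=0.05, df=4.0, scale=0.1):
    """Zero with probability 1 - pi, otherwise a scaled t draw."""
    return sample_bayes_a(rng, df, scale) if rng.random() < pi else 0.0


def sample_bayes_c(rng, pi=0.05, sd=0.1):
    """Zero with probability 1 - pi, otherwise a normal draw."""
    return rng.gauss(0.0, sd) if rng.random() < pi else 0.0


def sample_rrblup(rng, sd=0.1):
    """Common normal prior: all markers share one variance."""
    return rng.gauss(0.0, sd)


rng = random.Random(42)
n_markers = 10_000
effects_a = [sample_bayes_a(rng) for _ in range(n_markers)]
effects_b = [sample_bayes_b(rng) for _ in range(n_markers)]
effects_c = [sample_bayes_c(rng) for _ in range(n_markers)]
effects_rr = [sample_rrblup(rng) for _ in range(n_markers)]

share_nonzero_b = sum(e != 0.0 for e in effects_b) / n_markers
share_nonzero_c = sum(e != 0.0 for e in effects_c) / n_markers
print("BayesB non-zero share:", share_nonzero_b)
print("BayesC non-zero share:", share_nonzero_c)
print("Largest |effect|, BayesA vs rrBLUP:",
      max(abs(e) for e in effects_a), max(abs(e) for e in effects_rr))
```

The heavier tails of the t-distribution allow occasional large effects under BayesA, while the point mass at zero in BayesB and BayesC leaves most markers with no effect at all.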

Performance Comparison Across Species and Traits

The performance of these models varies significantly depending on the genetic architecture of traits and the species under investigation. The table below summarizes key comparative findings from recent studies:

Table 1: Comparative Performance of Genomic Prediction Models Across Species

| Species | Trait Characteristics | Model Performance Findings | Citation |

|---|---|---|---|

| Alpine Merino Sheep | Wool traits with varying heritability | GBLUP superior for low-heritability traits; Bayesian Alphabet advantages increased with higher heritability | [30] |

| Large Yellow Croaker | Body weight (continuous trait) | GBLUP demonstrated greater efficacy for continuous traits compared to machine learning and Bayesian approaches | [31] |

| Multiple Species Benchmark | Diverse traits across 10 species | Non-parametric methods such as XGBoost showed modestly higher accuracy (about +0.025 in r) and computational advantages relative to Bayesian methods | [27] |

Prediction accuracy in these studies was typically measured using Pearson's correlation coefficient (r) between predicted and observed values, or as the proportion of correctly predicted phenotypes in cross-validation studies [30] [27]. For instance, in the Alpine Merino sheep study, the genomic prediction accuracy for six wool traits ranged between 0.28 and 0.60 across different models and marker densities [30].

Model Selection Framework

Choosing the appropriate model requires careful consideration of multiple biological and practical factors. The following diagram illustrates the decision-making workflow for selecting among these genomic prediction models:

Experimental Protocols and Implementation

Standardized Benchmarking Protocol

To ensure reproducible comparison of genomic prediction models, we recommend the following standardized protocol based on the EasyGeSe framework, which has been validated across multiple species [27]:

Data Preparation and Quality Control: Begin with genotypic data in a standard format (e.g., VCF, PLINK). Apply quality control filters including:

- Minor Allele Frequency (MAF) > 0.05

- Individual missing rate < 0.1

- Marker missing rate < 0.1

- Remove individuals with excessive heterozygosity

Impute missing genotypes using algorithms like Beagle or SVD-based imputation [27].
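The frequency and missingness filters can be sketched in plain Python. The example assumes dosages coded 0/1/2 with `None` for missing calls and applies the individual filter before the marker filter, an ordering the protocol does not prescribe. The heterozygosity filter and imputation are left out, and `qc_filter` is a hypothetical helper rather than a PLINK or EasyGeSe function.

```python
def missing_rate(calls):
    """Fraction of missing (None) genotype calls."""
    return sum(c is None for c in calls) / len(calls)


def qc_filter(genotypes, maf_min=0.05, max_missing=0.1):
    """Return indices of individuals and markers that pass QC.

    genotypes: list of individuals, each a list of dosages (0/1/2 or None).
    """
    keep_ind = [i for i, row in enumerate(genotypes)
                if missing_rate(row) < max_missing]

    keep_mrk = []
    for j in range(len(genotypes[0])):
        col = [genotypes[i][j] for i in keep_ind]
        called = [c for c in col if c is not None]
        if not called or missing_rate(col) >= max_missing:
            continue
        p = sum(called) / (2 * len(called))   # frequency of the counted allele
        if min(p, 1 - p) > maf_min:           # minor allele frequency filter
            keep_mrk.append(j)
    return keep_ind, keep_mrk


# Toy data: 11 complete individuals plus one with a missing call;
# the middle marker is monomorphic and should be dropped.
genotypes = [[j % 3, 0, (j + 1) % 3] for j in range(11)]
genotypes.append([1, None, 2])

keep_ind, keep_mrk = qc_filter(genotypes)
print("individuals kept:", keep_ind)
print("markers kept:", keep_mrk)
```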

Population Structure Assessment: Perform Principal Component Analysis (PCA) or similar methods to identify potential population stratification that may confound predictions.

Heritability Estimation: Estimate genomic heritability using the GBLUP model to establish a baseline heritability for each trait.

Cross-Validation Scheme: Implement a five-fold cross-validation approach where the population is randomly partitioned into five subsets. For each iteration:

- Use four subsets as the training population

- Use one subset as the validation population

- Repeat until all subsets have served as the validation set

- Ensure family structure is maintained across training and validation sets when applicable

Model Training and Prediction: Train each model (rrBLUP/GBLUP, BayesA, BayesB, BayesC) using the training population and generate Genomic Estimated Breeding Values (GEBVs) for the validation population.

Accuracy Assessment: Calculate prediction accuracy as the Pearson correlation coefficient between GEBVs and observed phenotypes in the validation population. For binary traits, use proportion of correctly classified individuals.
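The cross-validation and accuracy steps can be sketched as follows. The `fit` and `predict` callables stand in for any of the models above; the placeholder used here (a simple regression of phenotype on total allele dosage) and the simulated data are assumptions made only so that the example runs without external packages.

```python
import random
from statistics import mean


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def k_fold_indices(n, k=5, seed=1):
    """Randomly partition indices 0..n-1 into k folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]


def cross_validate(X, y, fit, predict, k=5, seed=1):
    """Return the prediction accuracy (Pearson r) for each fold."""
    accuracies = []
    for fold in k_fold_indices(len(y), k, seed):
        held_out = set(fold)
        train = [i for i in range(len(y)) if i not in held_out]
        model = fit([X[i] for i in train], [y[i] for i in train])
        preds = [predict(model, X[i]) for i in fold]
        accuracies.append(pearson(preds, [y[i] for i in fold]))
    return accuracies


# Placeholder model: regress phenotype on total dosage (not a real GP model).
def fit_dosage_sum(X, y):
    s = [sum(row) for row in X]
    ms, my = mean(s), mean(y)
    slope = (sum((a - ms) * (b - my) for a, b in zip(s, y))
             / sum((a - ms) ** 2 for a in s))
    return my - slope * ms, slope


def predict_dosage_sum(model, row):
    intercept, slope = model
    return intercept + slope * sum(row)


# Simulated data: 100 individuals, 20 markers, equal additive effects plus noise.
rng = random.Random(7)
X = [[rng.choice([0, 1, 2]) for _ in range(20)] for _ in range(100)]
y = [0.5 * sum(row) + rng.gauss(0.0, 1.0) for row in X]

acc = cross_validate(X, y, fit_dosage_sum, predict_dosage_sum, k=5)
print("fold accuracies:", [round(a, 2) for a in acc])
print("mean accuracy:", round(mean(acc), 2))
```

Reporting the per-fold values alongside the mean gives a rough sense of how stable the accuracy estimate is for a given population size.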

Table 2: Key Parameters for Bayesian Alphabet Implementation

| Model | Key Parameters | Prior Distributions | Computational Requirements | Recommended Use Cases |

|---|---|---|---|---|

| rrBLUP/GBLUP | Genetic variance, Residual variance | Normal distribution for all effects | Low; fast computation | Initial screening, traits with polygenic architecture |

| BayesA | Degrees of freedom, scale parameter | t-distribution for marker effects | Moderate | Traits with many small-effect QTLs |

| BayesB | π (proportion of non-zero markers), priors for variances | Mixture distribution (point-mass at zero and t-distribution) | High | Traits with major genes and sparse architecture |

| BayesC | π (estimated from data), priors for variances | Mixture distribution (point-mass at zero and normal distribution) | High | When proportion of causal variants is unknown |

Implementation in Breeding Programs

For integrating these models into operational breeding programs, we recommend the following workflow:

Preliminary Analysis: Start with GBLUP as a baseline model due to its computational efficiency and robustness.

Model Refinement: Based on initial results and prior knowledge of trait architecture, select appropriate Bayesian models for further refinement.

Marker Density Optimization: Evaluate prediction accuracy with different marker densities. Studies in Alpine Merino sheep showed that increasing marker density generally improves accuracy, but the degree of improvement depends on the model and trait heritability [30].

Regular Model Updating: Recalibrate models regularly as new phenotypic and genotypic data become available to maintain prediction accuracy over breeding cycles.

The Scientist's Toolkit

Essential Research Reagents and Computational Tools

Table 3: Essential Resources for Genomic Prediction Research

| Category | Resource | Description | Application in Bayesian Alphabet Research |

|---|---|---|---|

| Genotyping Platforms | Liquid SNP arrays (e.g., NingXin-III) | High-throughput genotyping systems | Generate marker data for genomic prediction [31] |

| Genotyping Platforms | Genotyping-by-sequencing (GBS) | Reduced-representation sequencing | Cost-effective marker discovery for large populations [27] |

| Data Resources | EasyGeSe | Curated multi-species dataset collection | Benchmarking and comparing model performance [27] |

| Data Resources | BreedBase | Integrated breeding platform | Manage phenotypic and genotypic data [12] |

| Software Packages | BGLR | R package | Implement Bayesian Alphabet models [29] |

| Software Packages | rrBLUP | R package | GBLUP implementation [29] |

| Software Packages | AlphaSimR | R package | Breeding program simulation [12] |

| Quality Control Tools | PLINK | Whole-genome association analysis | Data quality control and preprocessing [27] |

| Quality Control Tools | BEAGLE | Software package | Genotype imputation [27] |

Workflow Visualization for Genomic Prediction

The following diagram illustrates the complete experimental workflow for implementing genomic prediction models in a breeding program context:

The Bayesian Alphabet and GBLUP models represent foundational approaches in genomic prediction, each with distinct strengths and optimal application domains. As breeding programs increasingly generate multi-omics data and larger training populations, integration of these classical models with emerging machine learning approaches presents a promising frontier [27] [29]. Recent benchmarking studies indicate that while non-parametric methods like XGBoost can offer modest accuracy improvements (+0.025 in correlation coefficient) and computational advantages for certain scenarios, Bayesian methods remain competitive, particularly for traits with known genetic architectures [27].

Future developments will likely focus on optimizing model selection for specific trait-species combinations, improving computational efficiency for large-scale applications, and integrating additional biological information to enhance prediction accuracy for complex traits. The continued development of standardized benchmarking resources like EasyGeSe will be crucial for fair comparison of new methods against these established models [27]. As one review notes, "additional artificial intelligence techniques will be required for big data management, feature processing, and model innovation to generate a comprehensive model to optimize the prediction accuracy of genomic selection" [29].

In practice, successful implementation of these models requires careful consideration of trait architecture, computational resources, and breeding program objectives. By following the protocols and guidelines presented herein, researchers can effectively leverage these powerful tools to accelerate genetic gain in breeding programs.

Genomic Best Linear Unbiased Prediction (G-BLUP) has established itself as a cornerstone method in genomic selection, revolutionizing animal and plant breeding programs over the past decade [32] [33]. As a relationship-based model, G-BLUP utilizes genomic relationship matrices derived from DNA marker information to predict the genetic merit of individuals with greater accuracy than traditional pedigree-based approaches [32] [34]. The method's robustness, computational efficiency, and interpretability have made it a preferred choice for predicting complex traits controlled by many small-effect loci [35] [33].

This article explores the fundamental principles of G-BLUP, detailing its statistical framework and practical implementation. We further examine significant extensions to the standard model that enhance its predictive capability for specific genetic scenarios, including models accounting for genomic imprinting, dominance effects, multiple-trait analyses, and the integration of known causal variants. Each extension is presented with its theoretical basis, application context, and experimental protocols to provide researchers with comprehensive guidance for implementing these advanced genomic prediction models in breeding programs.

The Basic G-BLUP Framework

Statistical Foundation

The G-BLUP method is built upon the mixed linear model framework. The basic model equation is expressed as:

y = 1μ + Zg + e [36]

Where:

- y is the vector of corrected phenotypic observations

- μ is the overall population mean

- 1 is a vector of ones

- Z is a design matrix allocating records to breeding values

- g is the vector of genomic breeding values, assumed to follow a normal distribution g ~ N(0, Gσ²g)

- e is the vector of residual errors, assumed e ~ N(0, Iσ²e)

The genomic relationship matrix (G) is central to the model, defining the covariance between individuals based on observed similarity at the genomic level rather than expected similarity based on pedigree [32] [36]. This matrix is constructed from dense single nucleotide polymorphism (SNP) markers distributed across the genome, capturing the actual proportion of the genome shared between individuals.

Computational Implementation Protocol

Protocol 1: Basic G-BLUP Implementation Using R

Data Preparation

- Format genotype data as a matrix of SNP markers (coded as 0, 1, 2)

- Format phenotype data as a vector of corrected phenotypic values

- Ensure individual identifiers match between genotype and phenotype datasets

Construction of Genomic Relationship Matrix (G)

- Calculate the genomic relationship matrix using the method of VanRaden (2008):

- Center the genotype matrix by subtracting twice the allele frequency (pᵢ) of the counted allele from each marker column

- Compute G = MM' / (2∑pᵢ(1-pᵢ)), where M is the centered genotype matrix and pᵢ is the frequency at marker i of the allele counted in the 0/1/2 coding
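The two steps above translate directly into code. The following Python sketch uses plain lists so that it runs without external packages; a production pipeline would normally use a matrix library, and the toy genotypes are invented.

```python
def vanraden_g(genotypes):
    """Genomic relationship matrix, VanRaden (2008) method 1.

    genotypes: individuals x markers matrix of dosages coded 0, 1, 2.
    """
    n, m = len(genotypes), len(genotypes[0])
    # Frequency of the counted allele at each marker.
    p = [sum(row[j] for row in genotypes) / (2 * n) for j in range(m)]
    # Center each marker column by twice its allele frequency.
    M = [[row[j] - 2 * p[j] for j in range(m)] for row in genotypes]
    denom = 2 * sum(pj * (1 - pj) for pj in p)
    # G = M M' / (2 * sum p_i (1 - p_i))
    return [[sum(a * b for a, b in zip(M[i], M[k])) / denom for k in range(n)]
            for i in range(n)]


genotypes = [
    [0, 1, 2],
    [1, 1, 0],
    [2, 0, 1],
    [1, 2, 1],
]
G = vanraden_g(genotypes)
for row in G:
    print([round(v, 3) for v in row])
```

Because allele frequencies are estimated from the same individuals, each row of G sums to zero, which is a quick sanity check on the centering step.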

Model Fitting

- Use the mixed.solve() function from the rrBLUP package in R to fit the model and obtain genomic breeding values.
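To show what the model fit computes, the Python sketch below solves the basic G-BLUP equations for a known variance ratio λ = σ²e / σ²g, with one record per individual (Z = I). It is not the rrBLUP API: mixed.solve() additionally estimates the variance components by restricted maximum likelihood, whereas λ is simply supplied here, and the small G matrix and phenotypes are invented.

```python
def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


def gblup(y, G, lam):
    """Return (mu_hat, g_hat) for y = 1*mu + g + e with known lam."""
    n = len(y)
    # V is proportional to the phenotypic covariance: G + lam * I.
    V = [[G[i][j] + (lam if i == j else 0.0) for j in range(n)] for i in range(n)]
    v_inv_y = solve(V, y)
    v_inv_1 = solve(V, [1.0] * n)
    mu = sum(v_inv_y) / sum(v_inv_1)            # generalized least squares mean
    alpha = solve(V, [yi - mu for yi in y])
    g_hat = [sum(G[i][j] * alpha[j] for j in range(n)) for i in range(n)]
    return mu, g_hat


# Invented example: individuals 1 and 2 are related, individual 3 is not.
G = [[1.0, 0.5, 0.0],
     [0.5, 1.0, 0.0],
     [0.0, 0.0, 1.0]]
y = [1.2, 0.8, -0.5]
mu_hat, g_hat = gblup(y, G, lam=1.0)
print("mean:", round(mu_hat, 3))
print("genomic breeding values:", [round(g, 3) for g in g_hat])
```

A larger λ (lower heritability) shrinks the predicted breeding values more strongly toward zero, while related individuals borrow information from each other through the off-diagonal elements of G.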

Model Validation

- Implement cross-validation by partitioning data into training and validation sets

- Calculate prediction accuracy as Pearson's correlation between predicted and observed values in the validation set

- Compute normalized root mean square error (NRMSE) to assess the magnitude of prediction error [33]
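The validation metrics above can be sketched as follows. This is an illustrative outline only: the helper names are hypothetical, the observed and predicted values are made-up numbers, and NRMSE is normalized here by the mean of the observed values, which is one of several conventions in use (range and standard deviation are others); the source does not state which one it applies.

```python
import math
import random


def k_fold_indices(n, k, seed=0):
    """Randomly partition indices 0..n-1 into k disjoint validation folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def nrmse(observed, predicted):
    """RMSE divided by the mean of the observed values (assumed normalization)."""
    n = len(observed)
    rmse = math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / n)
    return rmse / (sum(observed) / n)


# Each fold serves once as the validation set; the rest form the training set
folds = k_fold_indices(n=10, k=5)

# Hypothetical observed and predicted values for one validation fold
observed = [10.0, 12.0, 9.0, 11.0]
predicted = [10.5, 11.5, 9.5, 10.5]
accuracy = pearson(observed, predicted)
error = nrmse(observed, predicted)
print(round(accuracy, 4), round(error, 4))
```

In a full analysis the model is refitted on the training individuals of each fold and the two metrics are averaged across folds.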

Table 1: Key Research Reagents for G-BLUP Implementation

| Reagent/Software | Function | Specification |
|---|---|---|
| SNP Genotyping Array | Genotype data generation | Platform-specific (e.g., Illumina, Affymetrix) |
| R Statistical Environment | Data analysis and modeling | Version 3.5 or higher |
| rrBLUP R Package | Mixed model solving | Version 4.6 or higher |
| Phenotypic Database | Trait measurements | Standardized experimental designs |

Key Extensions of the G-BLUP Framework

G-BLUP with Imprinting Effects (GBLUP-I)

Genomic imprinting represents an epigenetic phenomenon where gene expression depends on the parental origin of the allele. Many livestock traits exhibit genomic imprinting, which can substantially contribute to the total genetic variation of quantitative traits [37].

Statistical Model Extension

The GBLUP-I method extends the basic model by partitioning genetic effects into parent-of-origin components. Two primary approaches have been developed:

- GBLUP-I1: Models imprinting effects based on genotypic values

- GBLUP-I2: Models imprinting effects using gametic values [37]

The model incorporating imprinting can be represented as:

y = 1μ + Zg + Wi + e

Where:

- i is the vector of imprinting effects

- W is an incidence matrix relating observations to imprinting effects

Simulation studies demonstrate that when imprinting variances account for 1.4% to 6.0% of phenotypic variances, the accuracies of estimated total genetic values with GBLUP-I1 exceed those with standard G-BLUP by 1.4% to 7.8% [37].

Protocol 2: Implementing GBLUP with Imprinting Effects

Parental Allele Tracing

- Determine parental origin of alleles through pedigree information or molecular methods

- Phase genotypes to assign paternal and maternal alleles

Separate Relationship Matrices

- Construct separate paternal (Gp) and maternal (Gm) genomic relationship matrices from the phased paternal and maternal alleles, respectively

Extended Model Fitting

- Fit the model with multiple random effects using specialized software such as BLUPF90 or ASReml

- Include both standard genomic effect and parent-of-origin effect

Variance Component Estimation

- Use restricted maximum likelihood (REML) to estimate variance components

- Test significance of imprinting variance using likelihood ratio tests
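The likelihood ratio test in the last step can be sketched as below. The log-likelihood values are hypothetical placeholders for the REML output of the full model (with the imprinting effect) and the reduced model (without it). The sketch assumes a single variance component is tested on the boundary of the parameter space, for which a 50:50 mixture of χ² distributions with 0 and 1 degrees of freedom is a commonly used reference distribution; a different reference distribution applies if more than one parameter is dropped.

```python
import math


def lrt_variance_component(loglik_full, loglik_reduced):
    """Likelihood ratio test for one variance component tested at zero.

    Returns the test statistic and a p-value based on a 50:50 mixture of
    chi-square(0) and chi-square(1) distributions (assumed convention).
    """
    stat = max(0.0, 2.0 * (loglik_full - loglik_reduced))
    # Survival function of chi-square with 1 df: P(X > stat) = erfc(sqrt(stat / 2))
    p_chi1 = math.erfc(math.sqrt(stat / 2.0))
    # Halve the chi-square(1) tail probability for the boundary mixture
    return stat, 0.5 * p_chi1


# Hypothetical REML log-likelihoods from the two fitted models
stat, p_value = lrt_variance_component(loglik_full=-1000.0, loglik_reduced=-1001.92)
print(round(stat, 2), round(p_value, 4))
```

Both models must share the same fixed effects for their REML log-likelihoods to be comparable.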

G-BLUP with Dominance Effects

For traits influenced by non-additive genetic effects, incorporating dominance relationships can improve prediction accuracy. The inclusion of dominance effects is particularly valuable for mating program optimization [34].

Statistical Model Extension

The model with dominance effects extends the basic G-BLUP framework:

y = 1μ + Za + Zd + e

Where:

- a is the vector of additive genetic effects (a ~ N(0, Gσ²a))

- d is the vector of dominance effects (d ~ N(0, Dσ²d))

- D is the dominance relationship matrix calculated from SNP data
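The source does not state how D is computed. As an illustration, the sketch below uses one widely used parameterization in which genotypes 2, 1, and 0 at a marker with counted-allele frequency p (and q = 1 - p) are coded as -2q², 2pq, and -2p², and the cross-product is scaled by ∑(2pᵢqᵢ)². The function name and toy data are hypothetical, and other codings of dominance exist.

```python
def dominance_matrix(genotypes):
    """Dominance relationship matrix from a 0/1/2 genotype matrix.

    Assumed coding per marker: 2 -> -2q^2, 1 -> 2pq, 0 -> -2p^2,
    where p is the frequency of the counted allele and q = 1 - p.
    """
    n = len(genotypes)
    m = len(genotypes[0])
    p = [sum(row[j] for row in genotypes) / (2.0 * n) for j in range(m)]

    def code(g, pj):
        qj = 1.0 - pj
        if g == 2:
            return -2.0 * qj * qj
        if g == 1:
            return 2.0 * pj * qj
        return -2.0 * pj * pj

    H = [[code(row[j], p[j]) for j in range(m)] for row in genotypes]
    # Scaling factor: sum over markers of (2 p q)^2
    denom = sum((2.0 * pj * (1.0 - pj)) ** 2 for pj in p)
    if denom == 0.0:
        raise ValueError("all markers are monomorphic")
    return [[sum(a * b for a, b in zip(hi, hk)) / denom for hk in H] for hi in H]


# Hypothetical toy data: 4 individuals x 4 markers
toy_genotypes = [
    [0, 1, 2, 1],
    [1, 1, 0, 2],
    [2, 0, 1, 1],
    [1, 2, 1, 0],
]
D = dominance_matrix(toy_genotypes)
for row in D:
    print([round(v, 3) for v in row])
```

The resulting D enters the model as the covariance structure of d, alongside G for a, so the two variance components σ²a and σ²d are estimated jointly.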

Studies in Holsteins and Jerseys have shown that dominance variance can account for 3.7-4.1% of the total genetic variance for milk yield, and that modeling it can provide economic benefits in mating programs [34].

Multiple-Trait G-BLUP with Reaction Norm Models

Genotype-by-environment interactions (G×E) present significant challenges in breeding programs. Multiple-trait G-BLUP approaches address this issue through character-state and reaction norm models [38].

Statistical Framework

The multiple-trait reaction norm model expresses breeding values as functions of environmental covariates:

gij = x'ijγi

Where:

- gij is the breeding value of individual i in environment j

- xij is the vector of environmental covariates

- γi is the vector of random regression breeding values

The equivalence between reaction norm and character-state models enables the derivation of genetic parameters for specific environments when estimates of reaction norm parameters are available [38].

Protocol 3: Implementing Multiple-Trait Reaction Norm Models

Environmental Characterization

- Quantify environmental conditions using continuous variables (temperature, humidity, management practices)

- Standardize environmental covariates to a common scale

Matrix Construction

- Construct the X matrix of environmental covariates for all trait-environment combinations

- Define the covariance structure for random regression coefficients

Parameter Estimation

- Estimate variance components for random regression coefficients using REML

- Convert reaction norm parameters to character-state parameters for specific environments using the equivalence: var(g) = X'var(γ)X [38]
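The conversion var(g) = X'var(γ)X can be illustrated with a linear reaction norm (intercept and slope). All numbers below are hypothetical: K stands for an estimated covariance matrix of the random regression coefficients, and the environments are described by a single standardized covariate.

```python
import math


def quad_form(x, K, z):
    """Compute x' K z for vectors x, z and a square matrix K."""
    return sum(x[a] * K[a][b] * z[b] for a in range(len(x)) for b in range(len(z)))


# Hypothetical covariance matrix of (intercept, slope) breeding values
K = [
    [1.0, 0.2],
    [0.2, 0.5],
]

# Hypothetical standardized environmental covariate for three environments
environments = {"cool": -1.0, "average": 0.0, "warm": 1.0}
covariates = {name: [1.0, value] for name, value in environments.items()}

# Genetic variance within each environment: x_j' K x_j
variances = {name: quad_form(x, K, x) for name, x in covariates.items()}

# Genetic covariance and correlation between two environments: x_j' K x_k
cov_cool_warm = quad_form(covariates["cool"], K, covariates["warm"])
r_cool_warm = cov_cool_warm / math.sqrt(variances["cool"] * variances["warm"])

print({name: round(v, 3) for name, v in variances.items()})
print(round(r_cool_warm, 3))
```

A genetic correlation well below 1 between two environments, as in this toy case, indicates re-ranking of genotypes and therefore a G×E interaction that matters for selection.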

Genetic Evaluation

- Predict breeding values for target environments

- Optimize selection based on multiple traits across environments

Incorporating Known Causal Variants

The accuracy of genomic prediction can be improved by incorporating information from known quantitative trait loci (QTL) or major genes, particularly through weighted approaches or two-step methods [39] [40].

Statistical Approaches

- Weighted G-BLUP (wGBLUP): Assigns different weights to markers based on their estimated effects

- Two-Step G-BLUP: Includes pre-selected markers as a separate genetic effect in the model

Research demonstrates that when known QTL explaining up to 80% of the genetic variance are included, prediction accuracy increases significantly [40]. In spring wheat, incorporating major plant adaptation genes (FT/Ppd/Rht/Vrn) as fixed effects within an RKHS framework improved genomic predictive abilities by 13.6% for grain yield, 19.8% for total spikelet number per spike, and 22.5% for heading date [39].

Protocol 4: Integrating Known Causal Variants in G-BLUP

QTL Identification

- Conduct genome-wide association studies (GWAS) or meta-analyses to identify significant markers

- Utilize prior biological knowledge of major genes

Model Specification

- For two-step approach: y = 1μ + Xb + Za + e, where b is the vector of fixed effects for known QTL and X is the incidence matrix for known QTL

- For weighted G-BLUP: apply differential weighting to markers in the relationship matrix
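Differential marker weighting can be sketched by inserting a diagonal weight matrix into the relationship matrix, G_w = M W M' / [2∑pᵢ(1-pᵢ)]. The source does not specify how weights are derived or scaled, so the choices below are assumptions: weights are rescaled to a mean of 1 so that uniform weights reproduce the unweighted G, and the function name, toy genotypes, and weight values are hypothetical.

```python
def weighted_g(genotypes, weights):
    """Weighted genomic relationship matrix from a 0/1/2 genotype matrix.

    weights: one non-negative weight per marker, rescaled here to mean 1.
    """
    n = len(genotypes)
    m = len(genotypes[0])
    mean_w = sum(weights) / m
    if mean_w <= 0.0:
        raise ValueError("weights must have a positive mean")
    w = [wj / mean_w for wj in weights]
    # Frequency of the counted allele and centered genotypes
    p = [sum(row[j] for row in genotypes) / (2.0 * n) for j in range(m)]
    centered = [[row[j] - 2.0 * p[j] for j in range(m)] for row in genotypes]
    scale = 2.0 * sum(pj * (1.0 - pj) for pj in p)
    if scale == 0.0:
        raise ValueError("all markers are monomorphic")
    # G_w = M W M' / scale, with W = diag(w)
    return [[sum(w[j] * ri[j] * rk[j] for j in range(m)) / scale for rk in centered]
            for ri in centered]


# Hypothetical toy data: 4 individuals x 4 markers
toy_genotypes = [
    [0, 1, 2, 1],
    [1, 1, 0, 2],
    [2, 0, 1, 1],
    [1, 2, 1, 0],
]
G_uniform = weighted_g(toy_genotypes, [1.0, 1.0, 1.0, 1.0])
# Extreme illustration: all weight on the first marker (e.g., a known QTL)
G_qtl = weighted_g(toy_genotypes, [4.0, 0.0, 0.0, 0.0])
print(G_uniform[0], G_qtl[0])
```

In practice the weights are usually derived from estimated marker effects or GWAS results, and deriving them from the same data used for validation inflates apparent accuracy.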

Implementation

- Use specialized software such as BayZ or GCTA for weighted analyses

- Validate model performance through cross-validation

Table 2: Comparison of G-BLUP Extensions for Different Breeding Scenarios

| Extension | Genetic Architecture | Reported Effect | Primary Application |
|---|---|---|---|
| Basic G-BLUP | Additive, polygenic | Baseline | General breeding value estimation |
| GBLUP-I | Parent-of-origin effects | 1.4-7.8% higher accuracy | Livestock traits with imprinting |
| Dominance G-BLUP | Non-additive effects | Dominance is 3.7-4.1% of genetic variance (milk yield) | Mating program optimization |
| Multiple-Trait G-BLUP | G×E interactions | Environment-dependent | Multi-environment breeding |
| G-BLUP with known QTL (weighted or fixed-effect) | Major genes + polygenic | Up to 22.5% higher predictive ability (heading date, major genes as fixed effects) | Traits with known major genes |

Advanced Applications and Emerging Methodologies

Comparison with Machine Learning Approaches

Recent studies have compared the performance of G-BLUP with various machine learning methods, including deep learning (DL), random forests (RF), and support vector regression (SVR) [35] [40]. While DL models can capture complex, non-linear genetic patterns and may provide superior predictive performance for certain traits, G-BLUP remains highly competitive, particularly for traits with predominantly additive genetic architectures and in larger datasets [35].

A comprehensive analysis across 14 plant breeding datasets revealed that neither method consistently outperformed the other across all traits and scenarios. The success of DL models significantly depended on careful parameter optimization, whereas G-BLUP provided more stable performance with less computational demand [35]. Similarly, in simulated livestock populations, G-BLUP consistently outperformed SVR, and both models showed slight improvements when QTL information was incorporated [40].

Dynamic Genomic Prediction

Emerging methodologies extend G-BLUP to model trait development over time. The dynamicGP approach combines genomic prediction with dynamic mode decomposition to predict the developmental dynamics of multiple traits across the growth period of plants [41]. This innovation enables the prediction of trait expression at different time points, providing a more comprehensive understanding of plant development and potentially enhancing selection efficiency for complex agronomic traits.

The G-BLUP framework and its extensions represent powerful tools for modern breeding programs, offering flexibility to address various genetic architectures and breeding objectives. While basic G-BLUP remains effective for many applications, specialized extensions provide enhanced accuracy for specific scenarios, including traits influenced by imprinting, dominance, genotype-by-environment interactions, or known major genes.

As genomic selection continues to evolve, the integration of G-BLUP with emerging technologies such as dynamic modeling and machine learning offers promising avenues for further improving prediction accuracy and breeding efficiency. The protocols provided in this article serve as practical guides for researchers implementing these advanced genomic prediction models in their breeding programs.

Figure 1: Comprehensive workflow for implementing G-BLUP and its extensions in breeding programs.

Genomic selection (GS) has revolutionized plant and animal breeding by enabling the selection of superior genotypes using genomic estimated breeding values [42]. However, a key limitation of traditional GS is its reliance on genomic markers alone, which often fails to fully capture the complex molecular networks governing polygenic traits [42] [43]. The integration of multi-omics data, particularly transcriptomics and metabolomics, has emerged as a powerful strategy to enhance prediction accuracy by providing a more comprehensive view of the biological pathways linking genotype to phenotype [42] [44].

Transcriptomics reveals dynamic gene expression patterns and regulatory networks, while metabolomics captures downstream biochemical profiles that closely reflect phenotypic outcomes [44]. Together, these complementary data layers bridge critical gaps in our understanding of trait architecture, offering breeders unprecedented insights for accelerating genetic gain [45] [46]. This Application Note provides detailed protocols and frameworks for effectively integrating transcriptomic and metabolomic data into genomic prediction models, with a focus on practical implementation in breeding programs.

Quantitative Evidence for Prediction Enhancements

Substantial empirical evidence demonstrates the predictive advantages of multi-omics integration over genomic-only approaches. The following table summarizes key performance metrics from recent studies across various species:

Table 1: Predictive Performance Gains from Multi-Omics Integration

| Species | Trait Category | Genomic-Only Accuracy | Multi-Omics Accuracy | Improvement | Citation |
|---|---|---|---|---|---|
| Maize & Rice | Hybrid Performance | GP Baseline | MM_GP (Metabolic Marker-assisted) | 4.6-13.6% | [47] |
| Japanese Quail | Efficiency Traits | GBLUP | GTCBLUPi (Genomic-Transcriptomic) | Significant increase (variances explained) | [48] |
| Arabidopsis | Flowering Time | G-based Models | Integrated G+T+gbM Models | Best Performance | [46] |
| Maize | Complex Agronomic | Genomic-Only | Model-based Multi-omics Fusion | Consistent Improvement | [42] |
| Pigs | Average Daily Gain | GBLUP: 0.60 | MGBLUP: 0.61-0.74 | Small Increases | [49] |

Beyond prediction accuracy, integrated models provide significant biological insights. For flowering time prediction in Arabidopsis, different omics layers identified distinct sets of important genes, with nine additional genes validated as regulators through experimental follow-up [46]. This demonstrates how multi-omics approaches can reveal novel biological mechanisms beyond what single-omics analyses can uncover.

Experimental Protocols for Multi-Omics Integration

Metabolic Marker-Assisted Genomic Prediction (MM_GP)

The MM_GP approach enhances hybrid breeding by incorporating preselected metabolic markers identified through metabolome-wide association studies (MWAS) [47].

Table 2: Key Reagents for Metabolomic Profiling

| Reagent/Platform | Function | Application Context |
|---|---|---|
| LC-MS/MS Systems | Separation and detection of metabolites | Untargeted metabolomics |
| NMR Spectrometer | Quantitative metabolite profiling | Blood plasma/serum analysis |
| GC-MS Platforms | Volatile metabolite analysis | Plant secondary metabolites |
| Fluidigm BioMark HD | High-throughput candidate validation | Targeted metabolite screening |