Integrating Heterogeneous Biological Databases: Strategies for Next-Generation Gene Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on integrating heterogeneous biological databases to accelerate gene discovery. It explores the foundational principles of data integration, from the latest NAR database collection to core computational concepts. The piece details cutting-edge methodological frameworks, including multi-omics approaches and machine learning, supported by real-world case studies in disease research. It further addresses critical challenges in data harmonization and optimization, and concludes with robust validation techniques and comparative analyses of integration tools. This resource synthesizes current knowledge to empower scientists in navigating the complex landscape of biological data for targeted discovery and therapeutic development.

The Landscape of Biological Data: Foundations for Effective Integration

Core Concepts: FAQs on Database Fundamentals

FAQ 1: What are controlled vocabularies and why are they critical for database integration?

Controlled vocabularies are specific, predefined lists of terms used to annotate data, which are essential for reducing ambiguity and duplication in biological databases [1]. Unlike free text entry, they enable both computers and humans to categorize information consistently, thereby reducing redundancy and errors [1]. This standardization is a foundational prerequisite for integrating heterogeneous databases, as it ensures that data from different sources, such as a biobank's clinical records and a public repository's genomic data, can be interoperable and jointly analyzed [2].

FAQ 2: What are the common data types encountered in integrated gene discovery research?

Gene discovery research relies on the integration of diverse data types. The table below summarizes the key categories often stored in modern biobanks and databases.

Table 1: Key Data Types in Integrated Gene Discovery Research

| Data Category | Specific Types | Role in Gene Discovery |
|---|---|---|
| Clinical Data | Demographic information, disease status, treatment history, pathology findings [2] | Provides the phenotypic context essential for correlating genotype with phenotype. |
| Omics Data | Genomic (DNA sequences, variations), Transcriptomic (gene expression), Proteomic (protein expression), Metabolomic (metabolite profiles) [2] | Identifies candidate genes and elucidates their functional impact across biological layers. |
| Image Data | Histopathological images, Medical scans (MRI, CT), Microscopy images [2] | Offers qualitative and quantitative insights into tissue and cellular morphology associated with genetic conditions. |
| Biospecimen Data | Blood, tissue biopsies, saliva, urine [2] | Serves as the primary source for molecular profiling and analysis. |

Troubleshooting Common Data Challenges

Challenge 1: Resolving "sequence import errors" in public repositories like GenBank.

A common issue when submitting sequences to repositories is the failure to import FASTA files.

- Problem: The submission fails with an error related to the FASTA definition line.

- Solution:

- Check the SeqID: Ensure the sequence identifier (SeqID) after the ">" is unique, contains no spaces, and is limited to 25 characters or less. Use only permitted characters: letters, digits, hyphens, underscores, periods, colons, asterisks, and number signs [3].

- Verify the Definition Line: The entire FASTA definition line must be a single line of text with no hard returns. Check that your editing software has not inserted any line breaks [3].

- Format Modifiers Correctly: Source organism information (e.g., [organism=Mus musculus]) must follow the [modifier=text] format, with no spaces around the "=" sign [3].

- Validate Sequence Characters: The nucleotide sequence itself should use only IUPAC symbols. For ambiguous bases, use "N" and avoid "-" or "?" characters, which will be stripped upon processing [3].
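The checks above can be run locally before submission. The sketch below is a minimal pre-flight validator that assumes the rules are exactly as listed here (a SeqID of at most 25 permitted characters, [modifier=text] pairs with no spaces around "=", IUPAC nucleotide codes only). The function name and regular expressions are illustrative approximations, not NCBI's own validator, so a clean result does not guarantee the submission will be accepted.

```python
import re

# Permitted SeqID characters as listed above: letters, digits, hyphens,
# underscores, periods, colons, asterisks and number signs; 1-25 characters.
SEQID_RE = re.compile(r"[A-Za-z0-9_.:*#-]{1,25}")
# A well-formed source modifier: [key=value], no whitespace around "=".
MODIFIER_RE = re.compile(r"\[[^\[\]=\s]+=[^\[\]\s][^\[\]]*\]")
# IUPAC nucleotide codes (checked case-insensitively).
IUPAC_NT = set("ACGTURYSWKMBDHVN")


def check_fasta_record(defline, sequence):
    """Return a list of problems in one FASTA record; an empty list means none found."""
    if not defline.startswith(">"):
        return ["definition line must start with '>'"]
    problems = []
    if "\n" in defline or "\r" in defline:
        problems.append("definition line contains a hard return")
    seqid, _, rest = defline[1:].partition(" ")
    if not SEQID_RE.fullmatch(seqid):
        problems.append(f"invalid SeqID {seqid!r}")
    # Every bracketed chunk after the SeqID must be a well-formed modifier.
    for chunk in re.findall(r"\[[^\]]*\]", rest):
        if not MODIFIER_RE.fullmatch(chunk):
            problems.append(f"malformed modifier {chunk!r}")
    bad = sorted(set(sequence.upper()) - IUPAC_NT)
    if bad:
        problems.append("non-IUPAC characters in sequence: " + "".join(bad))
    return problems


print(check_fasta_record(">Seq1 [organism=Mus musculus]", "ACGTN"))  # []
print(check_fasta_record(">Seq1 [organism = Mus musculus]", "ACG-T"))
```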

Challenge 2: Managing semantic heterogeneity during multi-database analysis.

When combining data from different resources, the same concept (e.g., "length") may be described with different terms ("length", "len", "fork length"), a problem known as semantic heterogeneity [4].

- Problem: Inability to effectively group and analyze measurements from disparate datasets.

- Solution: Use the identifier fields recommended by standards such as Darwin Core. Instead of relying only on free-text fields like measurementType, populate the corresponding identifier fields (measurementTypeID, measurementValueID, measurementUnitID) with Uniform Resource Identifiers (URIs) from controlled vocabularies [4].

- For example, always use a URI from the NERC Vocabulary Server's P01 collection for measurementTypeID to unambiguously define the measurement type [4]. This allows machines to correctly aggregate all length measurements, regardless of the original free-text description.
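To see why the identifier fields matter, the sketch below groups measurement records by measurementTypeID rather than by the free-text measurementType label. The URI is a deliberately fake placeholder, not a real P01 term, and the records are invented for illustration; look up the actual term on the NERC Vocabulary Server before applying this pattern.

```python
from collections import defaultdict

# Placeholder standing in for a real NERC P01 term URI (hypothetical).
LENGTH_URI = "http://vocab.nerc.ac.uk/collection/P01/current/EXAMPLE_LENGTH/"

# Toy records from different datasets: same concept, different free-text labels.
records = [
    {"measurementType": "length", "measurementTypeID": LENGTH_URI, "measurementValue": 12.0},
    {"measurementType": "len", "measurementTypeID": LENGTH_URI, "measurementValue": 15.5},
    {"measurementType": "fork length", "measurementTypeID": LENGTH_URI, "measurementValue": 9.0},
    {"measurementType": "weight", "measurementTypeID": None, "measurementValue": 3.2},
]


def group_by_type_id(rows):
    """Group values by measurementTypeID; rows without an ID are set aside for curation."""
    grouped = defaultdict(list)
    unresolved = []
    for row in rows:
        type_id = row.get("measurementTypeID")
        if type_id:
            grouped[type_id].append(row["measurementValue"])
        else:
            unresolved.append(row["measurementType"])
    return dict(grouped), unresolved


grouped, unresolved = group_by_type_id(records)
print(grouped[LENGTH_URI])  # [12.0, 15.5, 9.0]
print(unresolved)           # ['weight']
```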

Experimental Protocols for Data Integration

Protocol: An Integrative Functional Genomics Workflow for Cross-Species Gene Discovery

This methodology leverages the GeneWeaver analysis system to identify novel genes underlying aging and disease by integrating heterogeneous genomic datasets [5].

- Objective: To find genes and pathways associated with a biological process (e.g., aging) by integrating data from multiple studies and species.

Materials & Reagents:

- GeneWeaver.org Account: A web-based system for storing and analyzing functional genomics gene sets [5].

- Gene Sets: Collections of genes from user-submitted experiments, published literature, and other databases (e.g., KEGG, OMIM, MSigDB) [5].

- Orthology Mapping Resources: Tools to map gene identifiers across species (e.g., mouse to human orthologs).

Procedure:

- Data Curation and Upload: Identify relevant genome-wide studies from literature searches (e.g., PubMed). Curate gene lists from these publications and upload them as distinct gene sets into your GeneWeaver workspace [5].

- Data Combination: Use the "Combine" tool to find the union of genes across multiple related gene sets. For example, create a master set of all genes associated with cellular senescence from various studies. This tool provides a count of how many sets each gene appears in, highlighting frequently implicated candidates [5].

- Similarity and Overlap Analysis: Use the "Jaccard Similarity" tool to perform pairwise comparisons between different gene sets. This calculates the similarity coefficient (size of intersection divided by size of union) and visually displays the overlap, for instance, between genes from a senescence study and those from a cognitive decline study [5].

- Statistical Validation: Employ the built-in resampling strategy to compute empirical p-values for the observed overlaps, assessing their statistical significance beyond chance [5].

- Cross-Species Validation: Identify a candidate gene (e.g., Cd63 from integrated data) and test its role in a model organism (e.g., perform RNAi knockdown of its ortholog, tsp-7, in C. elegans) to validate its effect on a phenotype like lifespan [5].
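The Combine, Jaccard Similarity, and resampling steps can be approximated outside GeneWeaver for a quick sanity check. The sketch below is a generic implementation, not GeneWeaver's code: its permutation scheme (drawing random sets of the same sizes from an assumed background gene universe) is one common choice and may differ from GeneWeaver's built-in strategy, and the gene identifiers are toy placeholders.

```python
import random
from collections import Counter


def combine(gene_sets):
    """Union of the sets, with how many sets each gene appears in."""
    return Counter(g for s in gene_sets for g in set(s))


def jaccard(a, b):
    """Size of intersection divided by size of union (0.0 if both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def empirical_overlap_p(a, b, universe, n_iter=1000, seed=0):
    """Share of random same-sized set pairs, drawn from `universe`, whose
    overlap is at least as large as the observed one (add-one corrected)."""
    rng = random.Random(seed)
    a, b, universe = set(a), set(b), sorted(universe)
    observed = len(a & b)
    hits = sum(
        len(set(rng.sample(universe, len(a))) & set(rng.sample(universe, len(b)))) >= observed
        for _ in range(n_iter)
    )
    return (hits + 1) / (n_iter + 1)


set_a = {"G1", "G2", "G3", "G4"}             # toy gene set from study A
set_b = {"G3", "G4", "G5", "G6"}             # toy gene set from study B
universe = {f"G{i}" for i in range(1, 201)}  # assumed 200-gene background
print(combine([set_a, set_b]).most_common(2))
print(round(jaccard(set_a, set_b), 3))       # 0.333
print(empirical_overlap_p(set_a, set_b, universe))
```

The choice of background universe strongly affects the p-value, so it should match the genes that could actually have appeared in each study.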

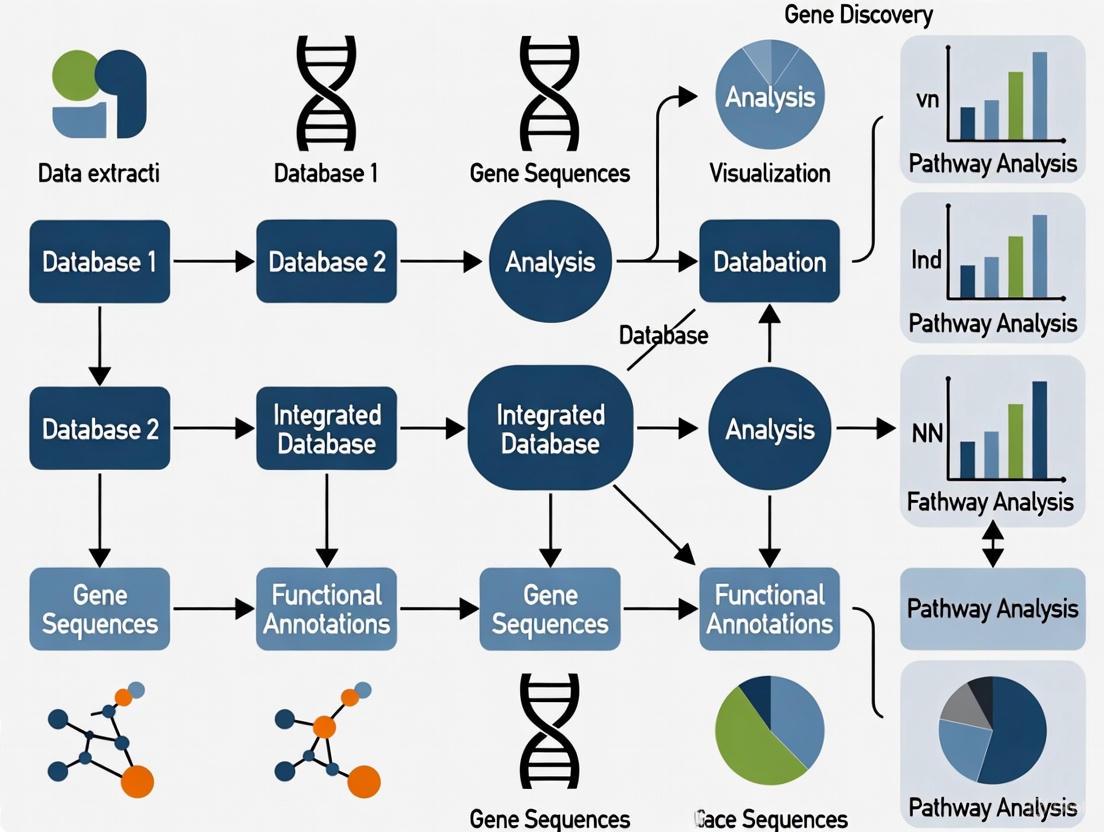

The following workflow diagram illustrates the key steps of this integrative genomics protocol:

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Database Integration and Gene Discovery

| Tool / Resource | Function | Application in Research |
|---|---|---|
| BLAST (NCBI) [6] | Finds regions of local similarity between biological sequences (nucleotide/protein). | Inferring functional and evolutionary relationships; identifying members of gene families. |
| NERC Vocabulary Server [4] | Provides URIs for controlled vocabulary terms for measurements, units, and values. | Annotating data for interoperability, ensuring unambiguous data integration across platforms. |
| GeneWeaver [5] | An analysis system for the integration of heterogeneous functional genomics data. | Storing, searching, and analyzing user-submitted and public gene sets to find convergent evidence. |
| PROKKA / RATT [7] | Tools for rapid genome annotation and annotation transfer between assemblies. | Annotating novel bacterial genomes or ancestral sequences using existing reference data. |
| BODC P01 Collection [4] | A controlled vocabulary collection for unambiguously describing types of measurements. | Standardizing measurementTypeID in databases to enable accurate grouping and analysis. |

Frequently Asked Questions (FAQs)

General Concepts

Q1: What is the core difference between a data warehouse and a federated database system?

The primary difference lies in how and where data is stored and accessed. A data warehouse uses an "eager" integration approach, where data is physically copied from various source systems, transformed, and stored in a central repository. This creates a single, consistent source for querying, but it requires significant storage, and its contents can lag behind the sources until the next refresh [8] [9].

In contrast, a federated database system uses a "lazy" approach. It creates a virtual database that provides a unified view of data, but the data itself remains in its original, distributed source systems. When you query the federation, it retrieves and combines data from these sources on the fly, offering access to more current data without the need for massive storage duplication [8] [9] [10].
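The eager/lazy distinction can be made concrete with in-memory SQLite databases standing in for remote sources. This is a minimal sketch: the table layout, source names, and values are hypothetical, and a real federation would reach networked systems through connectors rather than local connections. It shows that both approaches answer the same query, but only the federated query sees changes made after the warehouse was built.

```python
import sqlite3


def make_source(rows):
    """An in-memory SQLite database standing in for one remote source system."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE genes (symbol TEXT, value REAL)")
    conn.executemany("INSERT INTO genes VALUES (?, ?)", rows)
    return conn


# Two hypothetical sources with made-up values.
sources = {
    "expression_db": make_source([("TP53", 2.1), ("BRCA1", 0.7)]),
    "proteomics_db": make_source([("TP53", 5.4)]),
}


def build_warehouse(sources):
    """Eager: physically copy every source into one central table up front."""
    wh = sqlite3.connect(":memory:")
    wh.execute("CREATE TABLE genes (source TEXT, symbol TEXT, value REAL)")
    for name, conn in sources.items():
        rows = conn.execute("SELECT symbol, value FROM genes").fetchall()
        wh.executemany("INSERT INTO genes VALUES (?, ?, ?)",
                       [(name, s, v) for s, v in rows])
    wh.commit()
    return wh


def federated_query(sources, symbol):
    """Lazy: leave data in place and ask each source only when queried."""
    hits = []
    for name, conn in sources.items():
        rows = conn.execute("SELECT symbol, value FROM genes WHERE symbol = ?", (symbol,))
        hits.extend((name, s, v) for s, v in rows)
    return sorted(hits)


warehouse = build_warehouse(sources)
eager = warehouse.execute(
    "SELECT source, symbol, value FROM genes WHERE symbol = ? ORDER BY source",
    ("TP53",)).fetchall()
print(eager == federated_query(sources, "TP53"))  # True: same answer today

# A source changes after the warehouse snapshot was taken.
sources["proteomics_db"].execute("INSERT INTO genes VALUES ('BRCA1', 1.9)")
print(len(federated_query(sources, "BRCA1")))  # 2: the federation sees the new row
print(warehouse.execute(
    "SELECT COUNT(*) FROM genes WHERE symbol = 'BRCA1'").fetchone()[0])  # 1: stale copy
```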

Q2: What is a "schema" in the context of biological databases?

A schema is the structured, "queryable" blueprint of a database. It defines how data is organized, including the tables, fields, relationships, and data types. In biological research, a unified or global schema is often created to map and translate data from heterogeneous sources into a consistent format, making it possible to integrate and query them together [8] [11].

Q3: Why are ontologies and standards critical for data integration?

Ontologies and standards are the foundation of successful data integration. They provide an agreed-upon set of terms and definitions for describing biological data [8]. By using standards:

- Data becomes searchable and comparable: A "kinase" defined by one research group means the same thing to another.

- Interoperability is enabled: Different databases can link and share information unambiguously.

- Annotation is consistent: Both manual and automatic annotation processes can reliably attach meaningful metadata to raw biological entities [8].

Key resources include the OBO (Open Biological and Biomedical Ontologies) Foundry and the NCBO BioPortal [8].

Troubleshooting Federated Systems

Q4: What should I do if my federated query is running very slowly?

Performance and latency are common challenges in federated systems, as queries must access multiple, potentially remote, databases [10]. To troubleshoot:

- Check the query plan: Ensure the federation's query optimizer is pushing down operations like filters and aggregations to the source systems to minimize the amount of data transferred [10].

- Review network latency: Slow network connections to one or more source databases can bottleneck the entire query.

- Consider source system load: The query might be overloading a source database not designed for heavy analytical workloads. Schedule intensive queries for off-peak hours [12] [10].

- Verify timeouts: In Oracle Essbase federated partitions, set the query governor execution time (QRYGOVEXECTIME) to a value larger than the expected query execution time [12].
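Pushing filters down to the source, the first item above, is easiest to see by counting the rows that leave the source. In this sketch an in-memory SQLite table stands in for a remote source; the table and column names are hypothetical, and "rows transferred" is only a proxy for network cost.

```python
import sqlite3

# A hypothetical remote source with 10,000 variant rows, 100 of them on chr17.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE variants (chrom TEXT, pos INTEGER, gene TEXT)")
source.executemany(
    "INSERT INTO variants VALUES (?, ?, ?)",
    [("chr17" if i % 100 == 0 else "chr1", i, f"GENE{i % 50}") for i in range(10_000)],
)


def without_pushdown(conn, chrom):
    """Transfer every row, then filter locally in the federation layer."""
    rows = conn.execute("SELECT chrom, pos, gene FROM variants").fetchall()
    return [r for r in rows if r[0] == chrom], len(rows)


def with_pushdown(conn, chrom):
    """Send the filter to the source so only matching rows are transferred."""
    rows = conn.execute(
        "SELECT chrom, pos, gene FROM variants WHERE chrom = ?", (chrom,)
    ).fetchall()
    return rows, len(rows)


local, moved_naive = without_pushdown(source, "chr17")
pushed, moved_pushed = with_pushdown(source, "chr17")
print(moved_naive, moved_pushed)  # 10000 100
```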

Q5: I encountered error 1040235: "Remote warning from federated partition." What does this mean and how can I resolve it?

This Oracle Essbase error often indicates that the metadata in your local outline is out of sync with the fact table in the remote data source [12]. To resolve it:

- Remove the federated partition and its associated connection [12].

- Manually clean up any Essbase-generated tables and objects that failed to be removed automatically from the remote database schema [12].

- Ensure outline consistency: Make the necessary changes to both the Essbase outline and the remote fact table to ensure they are aligned. Common causes include adding, renaming, or removing dimensions or stored members [12].

- Re-create the connection to the remote database [12].

- Re-create the federated partition [12].

Q6: How do I add a new biological data source to my federated system?

A key advantage of a federated architecture is its flexibility [10]. The general process involves:

- Establish a connection: The FDBMS must connect to the new source (e.g., a new genomic database) using a suitable connector or adapter [10].

- Define schema mapping: Map the source's native schema (table names, field names, data types) to the global, unified schema of the federation. This tells the system how to translate the new source's data into the common format [10].

- Update the metadata catalog: Add the mapping and connection information to the federation's central metadata catalog so the query engine knows how to access and interpret the new data [10].
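The three steps can be sketched as a small metadata catalog in which each registered source supplies a connector (here just a function returning rows) and a field mapping into the global schema. All class names, field names, and the three-field global schema are hypothetical; a production federated system would also map data types, units, and query capabilities.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable

GLOBAL_FIELDS = ("gene_id", "organism", "expression")  # hypothetical global schema


@dataclass
class SourceEntry:
    """Catalog entry: a connector plus the mapping from source to global fields."""
    fetch: Callable[[], Iterable[dict]]             # stand-in for a real connector/adapter
    field_map: dict                                 # source field name -> global field name
    converters: dict = field(default_factory=dict)  # global field -> type conversion


class MetadataCatalog:
    def __init__(self):
        self.sources = {}

    def register(self, name, entry):
        """Accept a connector, check that its mapping covers the global schema, record it."""
        missing = set(GLOBAL_FIELDS) - set(entry.field_map.values())
        if missing:
            raise ValueError(f"{name}: no mapping for global fields {sorted(missing)}")
        self.sources[name] = entry

    def query(self, **filters):
        """Translate every source's rows into the global schema, then filter."""
        for name, entry in self.sources.items():
            for raw in entry.fetch():
                row = {g: raw[s] for s, g in entry.field_map.items()}
                for g, convert in entry.converters.items():
                    row[g] = convert(row[g])
                if all(row.get(k) == v for k, v in filters.items()):
                    yield {"source": name, **row}


catalog = MetadataCatalog()
catalog.register("new_genomic_db", SourceEntry(
    fetch=lambda: [{"GeneID": "GENE_0001", "taxon": "Homo sapiens", "tpm": "3.5"}],
    field_map={"GeneID": "gene_id", "taxon": "organism", "tpm": "expression"},
    converters={"expression": float},
))
print(list(catalog.query(organism="Homo sapiens")))
```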

Comparative Analysis of Data Integration Approaches

The table below summarizes the core characteristics of the two primary data integration models to help you select the right strategy for your research project.

Table 3: Comparison of Data Warehousing and Federation

| Feature | Data Warehousing (Eager Approach) | Data Federation (Lazy Approach) |
|---|---|---|
| Data Location | Centralized physical repository [8] [9] | Distributed across original sources [8] [9] |
| Data Freshness | Can be outdated until the next ETL cycle [9] | Real-time or near-real-time access [9] [10] |
| Storage Cost | High (stores redundant copies of data) [9] [10] | Lower (avoids data duplication) [9] [10] |
| Implementation & Maintenance | Complex ETL processes and storage management [8] | Complex query optimization and schema mapping [8] [10] |
| Impact on Source Systems | Low during querying (data is local) [9] | High during querying (load is on source systems) [10] |
| Ideal Use Case | Large-scale, reproducible analysis of historical data [8] | Integrated queries across live, up-to-date sources [9] [10] |

Technical Diagrams

Diagram: Data Integration Models in Biology

Diagram: Federated System Architecture

Research Reagent Solutions: Data Integration Tools

Table 4: Essential Tools and Resources for Biological Data Integration

| Tool / Resource | Type | Primary Function | Key Initiative/Example |
|---|---|---|---|
| Ontologies | Vocabulary Standard | Provides unambiguous, agreed-upon terms for describing biological entities and processes [8]. | OBO Foundry, NCBO BioPortal [8] |
| Global Schema | Data Blueprint | Defines a unified structure for mapping and querying disparate data sources [8] [11]. | Object-Protocol Model (OPM) [11] |
| Federated Query Engine | Middleware | Parses, optimizes, and executes queries across distributed sources, returning unified results [10]. | BIO2RDF [8] |
| Connectors/Wrappers | Integration Component | Translates queries and results between the federation layer and a specific source system's format [10]. | Distributed Annotation System (DAS) [8] |

Troubleshooting Guides

This section addresses common challenges researchers face when implementing data integration strategies for gene discovery.

1. Problem: My data integration query is slow, and I'm unsure whether to use an Eager or Lazy approach.

- Question: How do I choose between Eager and Lazy loading to improve performance?

- Diagnosis: Slow queries often stem from fetching too much data at once (a problem with Eager loading) or making too many individual database queries (a problem with Lazy loading). The optimal choice depends on your data size and how you will use it.

- Solution:

- Use Eager Loading when you know you will need all the related data for a set of primary objects. For example, if you are analyzing a specific gene pathway and need all associated protein interactions, gene expressions, and clinical annotations upfront, Eager Loading fetches this in a single query, preventing repetitive, smaller queries later [13] [14].

- Use Lazy Loading when you are unsure which related data you will need or are only conditionally accessing it. For instance, when browsing a genome browser, you might only load detailed transcriptome data for a gene if a user clicks on it. This defers the cost of loading that data until it is absolutely necessary [13] [15].

- Experimental Protocol: To diagnose, run your application with database query logging enabled. A single large query suggests Eager Loading, while a rapid succession of many small queries (the "N+1 queries" problem) indicates Lazy Loading is causing overhead [14]. Switch the loading strategy and re-run your benchmarks.
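The diagnostic above can be reproduced with Python's built-in sqlite3 module, whose Connection.set_trace_callback hook records every SQL statement the connection executes. The genes/interactions schema and its contents are hypothetical; the point is the query count, not the data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE genes (id INTEGER PRIMARY KEY, symbol TEXT);
    CREATE TABLE interactions (gene_id INTEGER, partner TEXT);
""")
conn.executemany("INSERT INTO genes VALUES (?, ?)", [(i, f"GENE{i}") for i in range(1, 6)])
conn.executemany("INSERT INTO interactions VALUES (?, ?)",
                 [(i, f"PROT{i}_{j}") for i in range(1, 6) for j in range(2)])

issued = []                             # log of SQL statements actually executed
conn.set_trace_callback(issued.append)


def lazy_load(conn):
    """Fetch genes, then query interactions separately for each gene (N+1)."""
    result = {}
    for gene_id, symbol in conn.execute("SELECT id, symbol FROM genes").fetchall():
        rows = conn.execute("SELECT partner FROM interactions WHERE gene_id = ?", (gene_id,))
        result[symbol] = sorted(p for (p,) in rows)
    return result


def eager_load(conn):
    """Fetch genes and their interactions together with a single JOIN."""
    result = {}
    rows = conn.execute("SELECT g.symbol, i.partner FROM genes g "
                        "LEFT JOIN interactions i ON i.gene_id = g.id")
    for symbol, partner in rows:
        result.setdefault(symbol, [])
        if partner is not None:
            result[symbol].append(partner)
    return {k: sorted(v) for k, v in result.items()}


issued.clear()
lazy = lazy_load(conn)
n_lazy = len(issued)
issued.clear()
eager = eager_load(conn)
n_eager = len(issued)
print(f"lazy: {n_lazy} queries, eager: {n_eager} queries, same data: {lazy == eager}")
```

With five genes, the lazy version issues one query for the gene list plus one per gene, while the eager version issues a single JOIN; the returned data are identical.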

2. Problem: My integrated view of biological data is inconsistent or has missing links.

- Question: How can I ensure data from different sources (e.g., GenBank, UniProt) are compatible?

- Diagnosis: Heterogeneous databases use different formats, schemas, and identifiers, making integration difficult. This is a fundamental challenge in biological data integration [8].

- Solution:

- Leverage Data Standards and Ontologies: Use controlled vocabularies and ontologies from resources like the OBO (Open Biological and Biomedical Ontologies) Foundry or NCBO BioPortal [8]. These provide universally agreed-upon terms for biological entities.

- Utilize Unique Identifiers: Always use standardized, unique alphanumeric strings (e.g., from UniProt or GenBank) to refer to biological entities rather than names, which can be ambiguous [8].

- Experimental Protocol: Implement a translation layer in your integration workflow that maps external database identifiers to a unified internal ID system. Tools like the Distributed Annotation System (DAS) use a client-server system with a translation layer to integrate annotation data from multiple distant servers into a single view [8].
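A translation layer of this kind can be sketched with a union-find structure: each external identifier is a (namespace, accession) pair, cross-references merge pairs into one group, and each group's canonical member serves as the internal ID. The class is hypothetical and the cross-references are supplied by hand (the human TP53 identifiers are used only as a familiar example); a real pipeline would load them from a mapping service and would probably mint stable opaque internal IDs instead.

```python
class IdTranslator:
    """Union-find over (namespace, accession) pairs from external databases."""

    def __init__(self):
        self._parent = {}

    def _find(self, key):
        self._parent.setdefault(key, key)
        while self._parent[key] != key:
            self._parent[key] = self._parent[self._parent[key]]  # path halving
            key = self._parent[key]
        return key

    def link(self, a, b):
        """Record a cross-reference: a and b denote the same biological entity."""
        ra, rb = self._find(a), self._find(b)
        if ra != rb:
            # Keep the smaller key as root so internal IDs are deterministic.
            self._parent[max(ra, rb)] = min(ra, rb)

    def internal_id(self, key):
        """Internal ID = canonical member of the key's group, as 'namespace:accession'."""
        namespace, accession = self._find(key)
        return f"{namespace}:{accession}"


ids = IdTranslator()
ids.link(("UniProt", "P04637"), ("RefSeq", "NM_000546"))
ids.link(("RefSeq", "NM_000546"), ("Ensembl", "ENSG00000141510"))
print(ids.internal_id(("UniProt", "P04637")))  # Ensembl:ENSG00000141510
```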

3. Problem: My data warehouse has become stale and no longer matches the source databases.

- Question: How can I manage the challenges of a data warehouse that becomes stale?

- Diagnosis: This is a known challenge of the "eager" data warehousing approach, where a central repository can become inconsistent with the original sources [8].

- Solution:

- Consider a Federated or Lazy Approach: Instead of maintaining a full local copy, use systems that query data from distributed sources on-demand. The Distributed Annotation System (DAS) is a prime example in bioinformatics [8].

- Implement Scheduled Updates: If a warehouse is necessary, establish an automated pipeline to regularly pull updates from source databases.

- Experimental Protocol: For a gene annotation project, you could use a DAS client to pull the latest sequence annotations from various authoritative servers (like UniProt and Ensembl) each time you run your analysis, ensuring you always have the most current data [8].
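If a warehouse is kept, the scheduled update can be incremental rather than a full reload. The sketch below assumes each source record carries a last-modified timestamp (not every source exposes one) and uses hypothetical accessions. It refreshes records that are newer at the source and reports records that have disappeared upstream, leaving the deletion policy to the caller; a scheduler such as cron would run it periodically.

```python
from datetime import datetime, timezone


def refresh_warehouse(warehouse, source):
    """Incrementally sync a warehouse snapshot with a source.

    Both arguments map accession -> record dict containing an "updated" datetime.
    Returns (changed, removed): accessions inserted or refreshed, and accessions
    present in the warehouse but no longer in the source.
    """
    changed = []
    for acc, rec in source.items():
        local = warehouse.get(acc)
        if local is None or rec["updated"] > local["updated"]:
            warehouse[acc] = dict(rec)  # store a copy, not a reference to the source
            changed.append(acc)
    removed = [acc for acc in warehouse if acc not in source]
    return changed, removed


jan = datetime(2024, 1, 1, tzinfo=timezone.utc)
jun = datetime(2024, 6, 1, tzinfo=timezone.utc)
warehouse = {"ACC1": {"updated": jan, "annotation": "old"},
             "ACC9": {"updated": jan, "annotation": "withdrawn upstream"}}
source = {"ACC1": {"updated": jun, "annotation": "revised"},
          "ACC2": {"updated": jan, "annotation": "new entry"}}
changed, removed = refresh_warehouse(warehouse, source)
print(changed, removed)  # ['ACC1', 'ACC2'] ['ACC9']
```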

Frequently Asked Questions (FAQs)

Q1: What are the core technical differences between Eager and Lazy data integration? In computational science, the frameworks are classified into two major categories [8]:

- Eager Approach (Warehousing): Data is copied into a central repository or data warehouse. This provides a unified, global schema for querying [8] [13].

- Lazy Approach (Federated/Linked Data): Data remains in its original, distributed sources. A global schema or mapping service is used to query and combine this data on-demand when a user requests it [8] [13].

Q2: Can you provide real-world biological examples of these models? Yes, the bioinformatics field employs both models [8]:

- Eager/Warehousing: UniProt and GenBank are centralized resources. Pathway Commons collects pathway data from multiple sources into a shared repository for querying [8].

- Lazy/Federated: The Distributed Annotation System (DAS) allows a client to integrate and display annotation data from multiple distant servers in a single view without centralizing the data [8].

- Lazy/Linked Data: BIO2RDF creates a network of interlinked biological data by using hyperlinks to connect related data from multiple providers [8].

Q3: When should I definitely use one approach over the other? The choice is often a trade-off, but some general rules apply:

- Use Eager Loading/Warehousing for data-dense dashboards or reporting systems where all related data is needed immediately for analysis. It ensures no delays when accessing related data, as everything is available upfront [13].

- Use Lazy Loading/Federated for applications with conditional data fetching, like content-heavy websites or interactive tools where users may not access all available information. It optimizes initial load time and memory usage [13].

Q4: What are the key computational trade-offs? The table below summarizes the performance characteristics of Eager vs. Lazy loading in application development, which directly applies to designing data integration systems [13].

| Feature | Lazy Loading | Eager Loading |
|---|---|---|
| Initial Load Time | Faster | Slower |
| Memory Usage | Lower (only for accessed data) | Higher (loads all data upfront) |
| Number of Queries | May result in multiple queries | Typically fewer queries (sometimes just one) |
| Code Complexity | More complex to handle deferred loading | Simpler, as all data is available immediately |
| Bandwidth Usage | Uses less bandwidth initially | Can use more bandwidth if fetching unnecessary data |

Data Integration Model Comparison

The following table summarizes the key aspects of Eager and Lazy integration models as they apply to biological research, synthesizing the conceptual and practical differences [8].

| Aspect | Eager Integration (Warehousing) | Lazy Integration (Federated/Linked Data) |
|---|---|---|
| Core Principle | Data copied to a central repository. | Data remains distributed; unified on-demand. |
| Data Freshness | Challenging to keep updated; can become stale. | Queries live sources; generally more current. |
| Initial Query Speed | Can be slower due to large initial data transfer. | Faster initial response. |
| Subsequent Performance | Excellent, as all data is local. | Can suffer latency from multiple source queries. |
| Scalability | Limited by central server capacity. | Highly scalable; leverages distributed sources. |
| Example in Biology | UniProt, GenBank, Pathway Commons [8]. | Distributed Annotation System (DAS), BIO2RDF [8]. |

Experimental Protocols for Data Integration

Protocol 1: Implementing a Federated Query Using DAS for Gene Annotation

- Objective: To integrate gene sequence annotations from multiple autonomous databases without creating a local copy.

- Methodology:

- Identify Data Sources: Determine the URLs of DAS servers providing the annotations you need (e.g., for genomic sequences, protein features).

- Specify Genomic Region: Your DAS client sends a query specifying the genome and genomic coordinates of interest.

- Query Distribution: The DAS client sends simultaneous requests to all configured annotation servers.

- Data Retrieval and Integration: Each server returns its annotations in a standardized XML format. The client then integrates and overlays all annotations into a unified view.

- Significance: This protocol exemplifies the "lazy" approach, allowing researchers to always access the most up-to-date annotations from expert curators directly [8].
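The client side of this protocol (fan out to several servers, then overlay the results) can be sketched as follows. The XML strings are simplified DASGFF-like responses held in memory so the example runs offline; the server names, coordinates, and the fetch_features helper are hypothetical, and a real DAS client would issue an HTTP features request to each server instead.

```python
import xml.etree.ElementTree as ET
from concurrent.futures import ThreadPoolExecutor

# Simplified DASGFF-like responses held in memory so the sketch runs offline.
FAKE_RESPONSES = {
    "server_a": """<DASGFF><GFF><SEGMENT id="1" start="1" stop="1000">
  <FEATURE id="a1"><TYPE id="exon">exon</TYPE><START>150</START><END>220</END></FEATURE>
</SEGMENT></GFF></DASGFF>""",
    "server_b": """<DASGFF><GFF><SEGMENT id="1" start="1" stop="1000">
  <FEATURE id="b1"><TYPE id="domain">domain</TYPE><START>300</START><END>420</END></FEATURE>
  <FEATURE id="b2"><TYPE id="repeat">repeat</TYPE><START>900</START><END>950</END></FEATURE>
</SEGMENT></GFF></DASGFF>""",
}


def fetch_features(server, segment, start, stop):
    """Hypothetical helper: parse one server's response, keep features overlapping the region."""
    root = ET.fromstring(FAKE_RESPONSES[server])  # a real client would make an HTTP request here
    found = []
    for seg in root.iter("SEGMENT"):
        if seg.get("id") != segment:
            continue
        for feat in seg.iter("FEATURE"):
            f_start, f_end = int(feat.findtext("START")), int(feat.findtext("END"))
            if f_end >= start and f_start <= stop:
                found.append({"server": server, "id": feat.get("id"),
                              "type": feat.find("TYPE").get("id"),
                              "start": f_start, "end": f_end})
    return found


def integrated_view(servers, segment, start, stop):
    """Query all servers concurrently, then overlay their features by position."""
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda s: fetch_features(s, segment, start, stop), servers))
    merged = [f for batch in batches for f in batch]
    return sorted(merged, key=lambda f: (f["start"], f["end"]))


for f in integrated_view(list(FAKE_RESPONSES), "1", 100, 500):
    print(f["server"], f["type"], f["start"], f["end"])
```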

Protocol 2: Building a Local Data Warehouse for Multi-Omics Analysis

- Objective: To create a centralized, high-performance resource for integrated analysis of transcriptomic and proteomic data.

- Methodology:

- Schema Design: Create a global database schema (e.g., using a star or snowflake schema) to hold gene expression, protein abundance, and clinical data.

- ETL (Extract, Transform, Load):

- Extract: Download data from public repositories like The Cancer Genome Atlas (TCGA) and Gene Expression Omnibus (GEO).

- Transform: Map external identifiers to a common gene namespace and annotate genes with a common ontology (e.g., Gene Ontology). Convert data into a unified format.

- Load: Populate the transformed data into the central warehouse.

- Query Interface: Provide tools (e.g., SQL interfaces, custom APIs) for researchers to run complex queries across the integrated data.

- Significance: This "eager" approach optimizes query speed for complex, cross-dataset analyses, which is crucial for discovering gene-disease associations [8].
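The ETL steps can be sketched end to end with SQLite and a small star schema (one fact table, two dimension tables). The records and the identifier mapping are hypothetical stand-ins for parsed TCGA/GEO downloads; the point is that identifiers are unified during Transform, so a single join answers a cross-assay question afterwards.

```python
import sqlite3

# Extract: hypothetical records standing in for parsed TCGA/GEO downloads.
# Note the mixed gene namespaces (an Ensembl ID and a symbol).
extracted = [
    {"sample": "S1", "gene": "ENSG00000141510", "assay": "rna", "value": 12.3},
    {"sample": "S1", "gene": "TP53", "assay": "protein", "value": 4.1},
    {"sample": "S2", "gene": "ENSG00000141510", "assay": "rna", "value": 8.7},
]
TO_SYMBOL = {"ENSG00000141510": "TP53", "TP53": "TP53"}  # hypothetical mapping table

wh = sqlite3.connect(":memory:")
wh.executescript("""
    CREATE TABLE dim_gene   (gene_key INTEGER PRIMARY KEY, symbol TEXT UNIQUE);
    CREATE TABLE dim_sample (sample_key INTEGER PRIMARY KEY, sample_id TEXT UNIQUE);
    CREATE TABLE fact_measurement (
        gene_key   INTEGER REFERENCES dim_gene(gene_key),
        sample_key INTEGER REFERENCES dim_sample(sample_key),
        assay TEXT, value REAL);
""")


def dim_key(table, key_col, natural_col, value):
    """Insert a dimension member if new and return its surrogate key.
    Table/column names come from this script only, never from user input."""
    wh.execute(f"INSERT OR IGNORE INTO {table} ({natural_col}) VALUES (?)", (value,))
    return wh.execute(f"SELECT {key_col} FROM {table} WHERE {natural_col} = ?",
                      (value,)).fetchone()[0]


for rec in extracted:
    symbol = TO_SYMBOL[rec["gene"]]                        # Transform: unify identifiers
    g = dim_key("dim_gene", "gene_key", "symbol", symbol)  # Load: dimensions, then facts
    s = dim_key("dim_sample", "sample_key", "sample_id", rec["sample"])
    wh.execute("INSERT INTO fact_measurement VALUES (?, ?, ?, ?)",
               (g, s, rec["assay"], rec["value"]))
wh.commit()

rows = wh.execute("""
    SELECT s.sample_id, f.assay, f.value
    FROM fact_measurement f
    JOIN dim_gene g   ON g.gene_key = f.gene_key
    JOIN dim_sample s ON s.sample_key = f.sample_key
    WHERE g.symbol = 'TP53'
    ORDER BY s.sample_id, f.assay""").fetchall()
print(rows)  # [('S1', 'protein', 4.1), ('S1', 'rna', 12.3), ('S2', 'rna', 8.7)]
```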

Workflow and Logical Diagrams

The following diagram illustrates the core architectural difference between the Eager (Warehousing) and Lazy (Federated) data integration models in a biological context.

Diagram 1: Architectural comparison of Eager and Lazy data integration.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key "reagents" – in this case, data resources and software tools – essential for conducting biological data integration research.

| Item | Function in Research |
|---|---|
| UniProt Knowledgebase | A central, authoritative resource for protein sequence and functional data, often used as a core component in both eager and lazy integration systems [8]. |
| Gene Ontology (GO) | A structured, controlled vocabulary (ontology) that describes gene functions. It is critical for annotating and enabling interoperability between different biological datasets [8]. |
| Distributed Annotation System (DAS) | A client-server system that allows for the integration and display of biological sequence annotations from multiple, distributed sources without centralization [8]. |
| OBO Foundry Ontologies | A suite of orthogonal, interoperable reference ontologies for the life sciences, used to standardize data representation and enable meaningful integration [8]. |
| BIO2RDF | A project that uses Semantic Web technologies to create a network of linked data for the life sciences, exemplifying the linked data approach to lazy integration [8]. |
| Python/R Bio-packages | Libraries like Biopython and Bioconductor provide pre-built functions and data structures for accessing biological databases and parsing standard file formats, simplifying the creation of custom integration scripts. |

The Critical Role of Standards, Ontologies, and Unique Identifiers

Troubleshooting Guides and FAQs

FAQ: Core Concepts

Q1: What is biological data integration and why is it critical for gene discovery? Biological data integration is the computational process of combining data from different sources to provide users with a unified view, allowing them to fetch, combine, manipulate, and re-analyze data to create new datasets [8]. For gene discovery, this is crucial because it enables researchers to leverage findings from disparate studies (e.g., genomics, proteomics) to identify novel genetic associations that might be missed when analyzing single datasets in isolation [16]. Without integration, the reproducibility and expansion of biological studies are severely hampered [8].

Q2: What is the difference between a data standard and an ontology? A data standard is an agreement on the representation, format, and definition for common data [8]. An ontology is a structured way of describing data using a set of unambiguous, universally agreed terms to describe biological entities, their properties, and their relationships [8]. In practice, you use a standard to format your data file (e.g., in a specific XML schema), and you use an ontology to precisely describe the meaning of the concepts within that file (e.g., using a term from the Cell Ontology to define a cell type).

Q3: My analysis pipeline failed because gene identifiers from my single-cell RNA-seq experiment don't match those in the public protein interaction database I want to use. What should I do? This is a common issue arising from identifier heterogeneity. Follow these steps:

- Identify the Source: Determine the namespace of your identifiers (e.g., are they Ensembl Gene IDs, Entrez Gene IDs, or symbols?) and the namespace required by the target database.

- Use a Mapping Service: Utilize a dedicated service for identifier conversion. Databases like UniProt [8] and bioinformatics portals like ExPASy [8] often provide built-in mapping tools or links to external databases that can help translate between different identifier types.

- Leverage Ontologies: Where possible, map the sample-level annotations that accompany your identifiers, such as cell types and tissues, to a central ontology like the Cell Ontology (CL) or Uberon [17]; these ontologies describe cells and anatomy rather than genes. A study showed that fine-tuned LLMs can assist in this annotation process for cell lines and cell types with high recall [17], though manual curation is still recommended to ensure validity.
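The first step, identifying the namespace, can often be triaged with regular expressions before calling a mapping service. The patterns below are heuristics: the UniProt pattern follows the accession format UniProt documents, while the others are simplified. The mapping table passed to translate is a toy stand-in for the output of a service such as UniProt's ID mapping.

```python
import re

# Heuristic patterns for common identifier namespaces (checked in this order).
PATTERNS = [
    ("Ensembl gene (human)", re.compile(r"ENSG\d{11}(\.\d+)?")),
    ("Ensembl gene (mouse)", re.compile(r"ENSMUSG\d{11}(\.\d+)?")),
    ("UniProt accession", re.compile(
        r"[OPQ][0-9][A-Z0-9]{3}[0-9]|[A-NR-Z][0-9]([A-Z][A-Z0-9]{2}[0-9]){1,2}")),
    ("RefSeq RNA", re.compile(r"[NX][MR]_\d+(\.\d+)?")),
    ("Entrez Gene ID", re.compile(r"\d+")),
]


def guess_namespace(identifier):
    """Return the first namespace whose pattern matches the whole identifier."""
    for name, pattern in PATTERNS:
        if pattern.fullmatch(identifier):
            return name
    return "unknown (possibly a gene symbol)"


def translate(ids, mapping):
    """Apply a mapping table exported from a mapping service; report misses."""
    mapped = {i: mapping[i] for i in ids if i in mapping}
    unmapped = [i for i in ids if i not in mapping]
    return mapped, unmapped


my_ids = ["ENSG00000141510", "ENSG00000012048", "TP53"]
print({i: guess_namespace(i) for i in my_ids})
mapped, unmapped = translate(my_ids, {"ENSG00000141510": "7157"})  # toy mapping table
print(unmapped)  # ['ENSG00000012048', 'TP53']
```

Reporting unmapped identifiers explicitly, rather than silently dropping them, makes it clear how much of the experiment survives the join with the target database.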

Q4: How can I account for population structure as a confounder when searching for genetically heterogeneous regions associated with a disease? Standard single-marker tests can produce false positives due to confounders like population structure. To address this, use methods like FastCMH, which is specifically designed to perform a genome-wide search for associated genomic regions while correcting for categorical confounders [16]. FastCMH combines the Cochran-Mantel-Haenszel (CMH) test with an efficient multiple testing correction framework, dramatically reducing genomic inflation and false positives compared to methods that cannot adjust for covariates [16].
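The building block of this approach is the CMH test itself. The sketch below computes the standard CMH statistic (without continuity correction) over per-population 2x2 tables; it does not reproduce FastCMH's enumeration of genomic regions or its multiple-testing correction. The toy counts show how a marker that looks associated in the pooled table shows no association once the two populations are analyzed as separate strata.

```python
import math


def cmh_test(strata):
    """Cochran-Mantel-Haenszel test over K stratified 2x2 tables.

    Each stratum is ((a, b), (c, d)) with rows = marker present / absent and
    columns = cases / controls. Returns (statistic, p_value), no continuity correction.
    """
    num = 0.0  # sum over strata of a - E[a]
    var = 0.0  # sum over strata of Var(a) under the null hypothesis
    for (a, b), (c, d) in strata:
        n = a + b + c + d
        if n < 2:
            continue  # too small to contribute
        row1, row2 = a + b, c + d
        col1, col2 = a + c, b + d
        num += a - row1 * col1 / n
        var += row1 * row2 * col1 * col2 / (n * n * (n - 1))
    if var == 0:
        return 0.0, 1.0
    stat = num * num / var
    return stat, math.erfc(math.sqrt(stat / 2))  # chi-square (1 df) upper tail


# Toy counts: population 1 is mostly cases and often carries the marker;
# population 2 is mostly controls and rarely carries it.
by_population = [((24, 6), (12, 3)), ((3, 12), (9, 36))]
pooled = [((27, 18), (21, 39))]
print(cmh_test(pooled))         # apparent association when populations are merged
print(cmh_test(by_population))  # (0.0, 1.0): none within either population
```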

Troubleshooting Common Experimental Issues

Problem: Inconsistent sample annotation is blocking my data submission to a public repository.

- Solution: Implement a manual and automated annotation workflow.

- Manual Curation: For a small number of samples, use established ontologies from resources like the OBO Foundry [8] or NCBO BioPortal [8] to find the correct terms for your sample metadata (e.g., cell type, tissue).

- Automated Assistance: For larger datasets, consider using automated annotation tools. Recent research indicates that fine-tuned Large Language Models (LLMs) can be effective for assigning ontological identifiers to biological sample labels, particularly for cell lines and cell types, achieving precision of 47–64% and recall of 88–97% [17]. This can significantly accelerate the process, though expert review is still required.

- Validation: Use an ontology lookup service (OLS) to validate the identifiers and terms before submission.
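Before querying an ontology lookup service, a local syntax check catches many formatting errors cheaply. The sketch below only verifies that each field holds a CURIE with the expected prefix (CL for cell types, UBERON for tissues, both with seven-digit local IDs). The field names are hypothetical, and confirming that a term exists and is not obsolete still requires a lookup against OLS.

```python
import re

# Expected ontology for each ontology-backed metadata field (hypothetical field names).
ONTOLOGY_FOR_FIELD = {"cell_type": "CL", "tissue": "UBERON"}
# CL and UBERON terms are written as PREFIX:NNNNNNN (seven digits).
CURIE_RE = re.compile(r"(?P<prefix>[A-Z]+):(?P<local>\d{7})")


def check_sample(sample):
    """Return formatting problems in one sample's ontology-backed fields."""
    problems = []
    for field_name, expected in ONTOLOGY_FOR_FIELD.items():
        value = sample.get(field_name)
        if value is None:
            problems.append(f"{field_name}: missing")
            continue
        m = CURIE_RE.fullmatch(value)
        if m is None:
            problems.append(f"{field_name}: {value!r} is not a CURIE like {expected}:0000000")
        elif m["prefix"] != expected:
            problems.append(f"{field_name}: expected a {expected} term, got {m['prefix']}")
    return problems


samples = {
    "s1": {"cell_type": "CL:0000236", "tissue": "UBERON:0002107"},  # B cell, liver
    "s2": {"cell_type": "B cell", "tissue": "CL:0000236"},
}
for sid, meta in samples.items():
    print(sid, check_sample(meta) or "OK")
```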

Problem: I need to compare single-cell trajectories (e.g., in vitro vs. in vivo development) but standard methods assume all cell states have a direct match.

- Solution: Employ an alignment method that can handle both matches and mismatches.

- Standard Dynamic Time Warping (DTW) assumes every time point in a reference matches at least one in the query, which is often biologically inaccurate [18].

- Use the Genes2Genes (G2G) framework, a dynamic programming algorithm that jointly handles matches, warps, and mismatches (indels) [18]. This allows you to identify not only similar cell states but also states that are divergent or unobserved in one of the systems, providing a more nuanced biological interpretation.
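To illustrate why allowing mismatches matters, the sketch below aligns two interpolated mean-expression trajectories with a Needleman-Wunsch-style dynamic program that permits matches (M) and gaps (I, D). It is a deliberate simplification, not G2G: it uses a squared-difference cost and a constant gap penalty instead of G2G's MML-based score, ignores variance, and omits the compression and expansion warps. The labeling convention (D for a reference-only point, I for a query-only point) is this sketch's own.

```python
def align(ref, query, gap=1.0):
    """Global alignment of two trajectories; returns (total_cost, alignment_string).

    M matches ref[i] with query[j] at cost (ref[i] - query[j])**2; D (reference
    point with no counterpart) and I (query point with no counterpart) cost `gap`.
    """
    n, m = len(ref), len(query)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    move = [[None] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(n + 1):
        for j in range(m + 1):
            if i > 0 and j > 0:
                c = cost[i - 1][j - 1] + (ref[i - 1] - query[j - 1]) ** 2
                if c < cost[i][j]:
                    cost[i][j], move[i][j] = c, "M"
            if i > 0 and cost[i - 1][j] + gap < cost[i][j]:
                cost[i][j], move[i][j] = cost[i - 1][j] + gap, "D"
            if j > 0 and cost[i][j - 1] + gap < cost[i][j]:
                cost[i][j], move[i][j] = cost[i][j - 1] + gap, "I"
    # Trace back from the bottom-right corner to recover the move string.
    i, j, path = n, m, []
    while i > 0 or j > 0:
        step = move[i][j]
        path.append(step)
        if step == "M":
            i, j = i - 1, j - 1
        elif step == "D":
            i -= 1
        else:
            j -= 1
    return cost[n][m], "".join(reversed(path))


ref = [0.0, 1.0, 2.0]         # toy interpolated means, reference system
query = [0.0, 1.0, 9.0, 2.0]  # query has an extra, divergent state
print(align(ref, query))      # (1.0, 'MMIM')
```

A pure DTW alignment would be forced to pair the divergent 9.0 state with some reference point; the gap move instead reports it as unmatched.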

Problem: My genome-wide association study (GWAS) for a complex trait has insufficient power because individual genetic variants have weak effects.

- Solution: Use a region-based association method that aggregates signals.

- Genetic heterogeneity means different variants in the same genomic region can influence the same phenotype. Methods like FastCMH test all possible contiguous genomic regions by creating a meta-marker for each region, which can aggregate these weak signals into a statistically powerful association [16]. This approach can discover associations missed by single-marker tests or burden tests that are limited to predefined regions like genes [16].
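The meta-marker idea can be sketched for binary genotypes: for each contiguous interval of markers, an individual's meta-marker is 1 if they carry a minor allele at any marker in the interval. This assumes dominant (0/1) encoding and naively enumerates all intervals; FastCMH additionally tests each meta-marker with the CMH test and uses its multiple-testing framework to handle the very large number of intervals. The genotypes are toy data.

```python
def region_meta_markers(genotypes, max_len=None):
    """Yield ((start, end), meta_marker) for every contiguous marker interval.

    genotypes: one list of 0/1 values per individual, all the same length.
    An individual's meta-marker is 1 if they carry a minor allele anywhere in
    [start, end] (logical OR, assuming dominant 0/1 encoding).
    """
    n_markers = len(genotypes[0])
    max_len = max_len or n_markers
    for start in range(n_markers):
        current = [0] * len(genotypes)
        for end in range(start, min(n_markers, start + max_len)):
            # Extend the interval by one marker: OR it into the running meta-marker.
            current = [c | g[end] for c, g in zip(current, genotypes)]
            yield (start, end), current


# Toy data: 4 cases then 4 controls, 3 adjacent markers. Cases carry
# different rare variants, so no single marker separates the groups well.
genotypes = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 0, 0],
             [0, 0, 0], [0, 0, 0], [0, 0, 0], [0, 1, 0]]
is_case = [1, 1, 1, 1, 0, 0, 0, 0]


def carriers(marker):
    """(carrier cases, carrier controls) for one (meta-)marker."""
    cases = sum(m for m, y in zip(marker, is_case) if y)
    return cases, sum(marker) - cases


for region, marker in region_meta_markers(genotypes):
    print(region, carriers(marker))
```

The interval (0, 2) is carried by all four cases but only one control, a contrast that none of the single markers shows on its own.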

Experimental Protocols

Protocol 1: Aligning Single-Cell Trajectories Using Genes2Genes (G2G)

Application: Comparing dynamic processes (e.g., differentiation, disease progression) between a reference and query system at single-gene resolution [18].

Detailed Methodology:

- Input Preprocessing:

- Obtain log1p-normalized scRNA-seq count matrices for both reference and query systems, along with their pseudotime estimates.

- Normalize the pseudotime axis for each system to the [0,1] range using min-max normalization.

- For each gene, perform interpolation: estimate its expression as a Gaussian distribution at a predefined number of equispaced time points. The expression value for an interpolation point is calculated using all cells, kernel-weighted by their pseudotime distance to that point [18].
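The interpolation step can be sketched as follows. This is an illustration under stated assumptions, not the G2G implementation: the Gaussian kernel, its `bandwidth` of 0.1 on the normalized axis, and `n_points=10` are hypothetical choices. Each interpolation point is summarized by a kernel-weighted mean and variance computed over all cells, which is the (mean, variance) pair that the alignment step compares.

```python
import math


def min_max(values):
    """Rescale pseudotime values to the [0, 1] range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def interpolate_gene(pseudotime, expression, n_points=10, bandwidth=0.1):
    """Summarise one gene as a Gaussian (mean, variance) at equispaced pseudotime points.

    Every cell contributes to every interpolation point, weighted by a Gaussian
    kernel on its distance (in normalised pseudotime) to that point.
    Returns a list of (time_point, mean, variance) tuples.
    """
    t = min_max(pseudotime)
    grid = [i / (n_points - 1) for i in range(n_points)]
    summaries = []
    for g in grid:
        weights = [math.exp(-((ti - g) ** 2) / (2 * bandwidth ** 2)) for ti in t]
        total = sum(weights)
        mean = sum(w * x for w, x in zip(weights, expression)) / total
        var = sum(w * (x - mean) ** 2 for w, x in zip(weights, expression)) / total
        summaries.append((g, mean, var))
    return summaries
```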

- Dynamic Programming (DP) Alignment:

- Run the G2G DP algorithm for each gene to find the optimal alignment between the reference and query interpolated trajectories.

- The algorithm uses a Bayesian information-theoretic scoring scheme based on Minimum Message Length (MML) to compute the cost of matching, warping, or inserting a gap between time points. This cost function accounts for differences in both the mean and variance of gene expression distributions [18].

- The output for each gene is an alignment described as a five-state string (M, V, W, I, D), defining matches, compression warps, expansion warps, and insertions/deletions in sequential order [18].
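To show why gap states matter, here is a deliberately simplified dynamic-programming alignment in the Needleman-Wunsch style over (mean, variance) summaries such as those produced by the interpolation step. It is not G2G's algorithm: `match_cost` is a hypothetical squared difference of means scaled by pooled variance rather than the MML cost, the gap penalty is a fixed constant, and only three states are emitted (M, plus D and I for unmatched reference and query points) with no V/W warps. Unlike DTW, a time point is left unmatched whenever forcing a match would cost more than a gap.

```python
def match_cost(a, b):
    """Hypothetical dissimilarity between two (mean, variance) summaries (not G2G's MML cost)."""
    (m1, v1), (m2, v2) = a, b
    return (m1 - m2) ** 2 / (v1 + v2 + 1e-6)


def align(ref, query, gap=2.0):
    """Global alignment of two summary sequences, returned as a string of states.

    M: ref and query points matched; D: ref point left unmatched;
    I: query point left unmatched. Warps (V/W) are not modelled here.
    """
    n, m = len(ref), len(query)
    # cost[i][j] = best cost of aligning ref[:i] with query[:j]
    cost = [[0.0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        cost[i][0] = cost[i - 1][0] + gap
    for j in range(1, m + 1):
        cost[0][j] = cost[0][j - 1] + gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost[i][j] = min(
                cost[i - 1][j - 1] + match_cost(ref[i - 1], query[j - 1]),
                cost[i - 1][j] + gap,
                cost[i][j - 1] + gap,
            )
    # Trace back from the bottom-right cell to recover the state string.
    states, i, j = [], n, m
    while i > 0 or j > 0:
        if i > 0 and j > 0 and cost[i][j] == cost[i - 1][j - 1] + match_cost(ref[i - 1], query[j - 1]):
            states.append("M")
            i, j = i - 1, j - 1
        elif i > 0 and cost[i][j] == cost[i - 1][j] + gap:
            states.append("D")
            i -= 1
        else:
            states.append("I")
            j -= 1
    return "".join(reversed(states))
```

For instance, aligning the four-point reference `[(0, 0.1), (1, 0.1), (10, 0.1), (2, 0.1)]` against the three-point query `[(0, 0.1), (1, 0.1), (2, 0.1)]` yields "MMDM": the divergent reference state is reported as unmatched rather than forced onto a neighbour.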

- Downstream Analysis:

- Clustering: Calculate the pairwise Levenshtein distance between all gene-level alignment strings. Perform agglomerative hierarchical clustering on this distance matrix to identify genes with similar alignment patterns.

- Aggregation: Generate a representative alignment for each cluster. Aggregate all gene-level alignments to create a single, cell-level alignment that provides an average mapping between the reference and query trajectories.

- Pathway Analysis: Perform gene set over-representation analysis on clusters of interest (e.g., genes showing a divergent pattern) to identify associated biological pathways [18].
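The clustering step starts from pairwise edit distances between alignment strings. A plain-Python Levenshtein distance is enough to illustrate it; the gene names in the usage block are hypothetical.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions or substitutions turning a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free when characters agree)
            ))
        prev = curr
    return prev[-1]


def distance_matrix(alignments: dict[str, str]) -> tuple[list[str], list[list[int]]]:
    """Pairwise Levenshtein distances between per-gene alignment strings."""
    genes = list(alignments)
    matrix = [[levenshtein(alignments[g], alignments[h]) for h in genes] for g in genes]
    return genes, matrix


if __name__ == "__main__":
    # Hypothetical gene-level alignment strings.
    genes, d = distance_matrix({"GENE_A": "MMMMM", "GENE_B": "MMMDM", "GENE_C": "IIMDD"})
    print(genes, d)
```

To obtain clusters, the square matrix can be converted to condensed form with `scipy.spatial.distance.squareform` and passed to `scipy.cluster.hierarchy.linkage`; the linkage method (for example `"average"`) and the cut height used with `fcluster` are analysis choices that the protocol does not fix.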

Protocol 2: Accounting for Categorical Confounders in Genome-Wide Association Analysis with FastCMH

Application: Discovering genomic regions associated with a binary phenotype while correcting for categorical confounders (e.g., gender, population batch) under a model of genetic heterogeneity [16].

Detailed Methodology:

- Data Preparation:

- Encode genotypic data for $n$ individuals as a sequence of $l$ binary markers (e.g., using a dominant/recessive model for SNPs).

- Define a binary phenotype vector $y$ (e.g., case/control).

- Record a categorical covariate $c$ with $k$ states for each individual.

- Meta-marker Construction:

- For every possible genomic region $[t_s, t_e]$ (where $t_s$ is the start marker and $t_e$ is the end marker), create a meta-marker for each individual.

- The meta-marker $g_i([t_s, t_e]) = \max(g_i[t_s], g_i[t_s+1], \ldots, g_i[t_e])$ is 1 if the region contains any minor/risk allele for that individual, and 0 otherwise [16]. This aggregates weak signals from individual markers within the region.
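The meta-marker definition, together with the dominant/recessive encoding from the data-preparation step, can be sketched in Python. This is a brute-force illustration assuming genotypes are given as 0/1/2 minor-allele counts per individual (`genotypes[i][t]` is individual i at marker t). It enumerates every contiguous region, which costs O(l²·n) and is the search that FastCMH is designed to prune, so it is only suitable for small examples.

```python
def encode(minor_allele_counts, model="dominant"):
    """Binarise 0/1/2 minor-allele counts: dominant = carrier (>= 1), recessive = homozygous (== 2)."""
    if model == "dominant":
        return [1 if c >= 1 else 0 for c in minor_allele_counts]
    if model == "recessive":
        return [1 if c == 2 else 0 for c in minor_allele_counts]
    raise ValueError(f"unknown model: {model}")


def meta_marker(genotype, ts, te):
    """g_i([ts, te]) = max(g_i[ts], ..., g_i[te]) for one individual (0-based, inclusive)."""
    return max(genotype[ts:te + 1])


def all_region_meta_markers(genotypes):
    """Yield ((ts, te), meta-marker vector over individuals) for every contiguous region.

    Each region extends the previous one by a single marker, so each new
    meta-marker vector is an O(n) update of the running maximum.
    """
    n_markers = len(genotypes[0])
    for ts in range(n_markers):
        running = [0] * len(genotypes)
        for te in range(ts, n_markers):
            running = [max(r, g[te]) for r, g in zip(running, genotypes)]
            yield (ts, te), running
```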

- Association Testing with Cochran-Mantel-Haenszel (CMH):

- For each genomic region $[t_s, t_e]$, test the conditional association of its meta-marker with the phenotype $y$, given the covariate $c$.

- The CMH test is used for this purpose. It constructs a 2×2 contingency table for each category of the confounder $c$, summarizing the counts of cases/controls with the meta-marker present/absent within that stratum [16].

- The CMH test statistic is computed across all strata to produce a single p-value for the region, adjusting for the confounder.
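The per-region CMH test can be written directly from the 2×2 strata. This sketch uses the classical CMH statistic without continuity correction and the chi-square (1 df) approximation for the p-value; whether FastCMH applies a continuity correction is not stated here, so treat that choice as an assumption. Strata with fewer than two individuals are skipped because they contribute no variance.

```python
import math


def cmh_test(strata):
    """Cochran-Mantel-Haenszel test over 2x2 tables, one per confounder category.

    Each stratum is (a, b, c, d):
        a = cases with meta-marker 1,  b = controls with meta-marker 1,
        c = cases with meta-marker 0,  d = controls with meta-marker 0.
    Returns (statistic, p-value), chi-square(1) approximation, no continuity correction.
    """
    num, var = 0.0, 0.0
    for a, b, c, d in strata:
        n = a + b + c + d
        if n < 2:
            continue  # carries no information about the association
        cases, controls = a + c, b + d
        exposed, unexposed = a + b, c + d
        num += a - cases * exposed / n  # observed minus expected count in cell a
        var += cases * controls * exposed * unexposed / (n ** 2 * (n - 1))
    if var == 0:
        return 0.0, 1.0
    stat = num ** 2 / var
    # Survival function of chi-square with 1 df: P(X > s) = erfc(sqrt(s / 2)).
    return stat, math.erfc(math.sqrt(stat / 2))
```

For a single stratum the statistic equals (n - 1)/n times Pearson's chi-square, which is a convenient sanity check when testing an implementation.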

- Multiple Testing Correction with Tarone's Trick:

- FastCMH uses Tarone's trick to account for the enormous number of tests performed (all possible genomic regions). This method identifies and excludes "untestable" hypotheses—regions that can never achieve statistical significance—from the multiple testing correction procedure, thereby maintaining computational efficiency and statistical power [16].

- The final output is a list of significant genomic regions, with FWER-controlled p-values, that are associated with the phenotype after correcting for the specified confounder.
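Tarone's trick itself is short once the minimum attainable p-value of every hypothesis is known. The sketch below takes those values as input (the example numbers are hypothetical); computing the minimum attainable CMH p-value from each stratum's margins, and FastCMH's pruning of the region search, are not shown.

```python
def tarone_threshold(min_pvalues, alpha=0.05):
    """Tarone's procedure.

    min_pvalues[i] is the smallest p-value hypothesis i could ever attain.
    Find the smallest k such that at most k hypotheses have a minimum attainable
    p-value <= alpha / k. Returns (corrected threshold alpha / k, testable indices).
    """
    if not min_pvalues:
        return alpha, []
    for k in range(1, len(min_pvalues) + 1):
        threshold = alpha / k
        testable = [i for i, p in enumerate(min_pvalues) if p <= threshold]
        if len(testable) <= k:
            return threshold, testable
    # Not reached: at k = len(min_pvalues) the condition always holds.
    raise AssertionError("unreachable")
```

With the example input `[1e-6, 1e-5, 0.5, 0.9, 0.8]` and alpha = 0.05, only two hypotheses are testable and the threshold is 0.025, compared with 0.01 under a plain Bonferroni correction over all five. A region is then reported if it is testable and its observed p-value is at most that threshold.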

Data Presentation

Table 1: Performance of Automated Ontology Annotation Tools

This table summarizes the precision and recall of a fine-tuned GPT model compared to the text2term tool for annotating biological sample labels to specific ontologies, highlighting its utility for cell lines and types [17].

| Ontology | Ontology Domain | Fine-tuned GPT Precision (%) | Fine-tuned GPT Recall (%) | Comparison with text2term |
|---|---|---|---|---|
| Cell Line Ontology (CLO) | Cell Lines | 47–64 | 88–97 | Fine-tuned GPT outperformed text2term |
| Cell Ontology (CL) | Cell Types | 47–64 | 88–97 | Fine-tuned GPT outperformed text2term |
| Uberon (UBERON) | Anatomy | 47–64 | 88–97 | Fine-tuned GPT outperformed text2term |
| BRENDA Tissue Ontology (BTO) | Tissues | 14–59 (variable) | Not specified | Variable performance |

Table 2: Comparison of Single-Cell Trajectory Alignment Methods

This table compares key features of trajectory alignment methods, illustrating the advanced capabilities of the Genes2Genes framework [18].

| Feature | Dynamic Time Warping (DTW) / CellAlign | TrAGEDy | Genes2Genes (G2G) |
|---|---|---|---|
| Handles Mismatches (Indels) | No | Via post-processing of DTW output | Yes, jointly with matches |
| Alignment Assumption | Every time point must match | Definite match required | Allows for no match |
| Distance Metric | Euclidean distance of means | Not specified | Bayesian MML (mean & variance) |
| Identifies Warps | Yes | Yes | Yes |
| Output | Mapping of all time points | Processed DTW alignment | Five-state string per gene |

Workflow and Relationship Visualizations

Diagram 1: Biological Data Integration Workflow

Diagram 2: FastCMH Method for Genetic Heterogeneity

Diagram 3: Genes2Genes Trajectory Alignment Logic

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Type | Primary Function | Key Application |
|---|---|---|---|
| OBO Foundry [8] | Ontology Repository | Provides a set of principled, orthogonal reference ontologies for the biological sciences. | Finding standardized terms for annotating biological data. |
| NCBO BioPortal [8] | Ontology Repository | A comprehensive repository of biomedical ontologies and terminologies. | Browsing and searching a wide array of ontologies for data annotation. |
| UniProt [8] | Centralized Database | A comprehensive resource for protein sequence and functional information. | Accessing expertly curated protein data with rich annotation. |
| Genes2Genes (G2G) [18] | Software Framework | A dynamic programming tool for aligning single-cell pseudotime trajectories. | Comparing dynamic processes (e.g., differentiation) between two systems. |
| FastCMH [16] | R Package | A method for genome-wide search of genetically heterogeneous regions associated with a phenotype, correcting for confounders. | Discovering genomic regions with weak but aggregated signals in GWAS. |
| Color Contrast Analyser | Accessibility Tool | Checks the contrast between foreground and background colors. | Ensuring visualizations and diagrams meet accessibility standards for readability [19] [20]. |
| text2term [17] | Annotation Tool | A state-of-the-art tool for mapping text to ontological terms. | Automating the annotation of dataset labels for integration. |

From Data to Discovery: Methodological Frameworks and Real-World Applications

A Step-by-Step Tutorial for Genomic Data Integration

Genomic data integration is a cornerstone of modern gene discovery research. It involves the computational process of combining data from different sources—such as genome, transcriptome, and methylome datasets—to provide a unified view, thereby enabling the discovery of biological insights that cannot be gleaned from individual datasets alone [8] [21]. For researchers and drug development professionals, mastering this process is crucial for cross-validating noisy data, gaining broad interdisciplinary views, and identifying robust biomarkers or therapeutic targets [21] [22]. This guide provides a step-by-step tutorial and troubleshooting resource to navigate the conceptual, analytical, and practical challenges of genomic data integration.

Conceptual Framework: Understanding Data Integration

Before embarking on technical steps, it is vital to understand the key models and concepts that underpin data integration strategies in computational biology.

Key Integration Models

In computational science, theoretical frameworks for data integration are primarily classified into two categories [8]:

- Eager Approach (Data Warehousing): Data is copied from various sources into a central repository or data warehouse. This model must overcome challenges related to keeping the data updated and consistent.

- Lazy Approach (Federated Databases): Data remains in its original, distributed sources and is integrated on-demand using a global schema to map the information. The challenge here lies in optimizing the query process across sources.

The choice between these models depends on the data volume, ownership, and existing infrastructure [8].

Fundamental Terminology

Familiarity with the following terms is essential for understanding the integration process [8] [21]:

- Data Integration: The process of combining data that reside in different sources to provide users with a unified view.

- Ontology: A structured way of describing data using a set of unambiguous, universally agreed-upon terms.

- Unique Identifier: An alphanumeric string that serves as a unique representation for a biological entity (e.g., a gene or protein), crucial for accurately linking data across databases.

- Metadata: Data that provides information about other data, such as the experimental conditions or analysis parameters.

- Controlled Vocabulary: A collection of standardized terms for describing a specific domain of interest.

Step-by-Step Tutorial for Genomic Data Integration

The following workflow outlines the best practices for integrating genomic data, from initial design to final execution. Adhering to this structured process is key to achieving reliable and interpretable results.

Step 1: Design the Data Matrix

The first step is to construct a structured data matrix where the biological units (e.g., genes) are arranged in rows, and the different genomic variables (e.g., expression levels, methylation values) are arranged in columns [22]. This format is particularly powerful for investigating gene-level relationships across multiple data types.

- Example Matrix: In a plant case study, the matrix consisted of 42,950 genes (rows) and 70 variables (columns). These variables represented transcriptome and methylome data (in CG, CHG, CHH contexts for both promoter and gene-body) across ten different populations [22].
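Assembling such a matrix from per-layer tables is mostly bookkeeping. The sketch below assumes each omics layer arrives as a dictionary `{gene: {variable: value}}`; the layer and variable names are hypothetical. It keeps only genes measured in every layer (an inner join, one of several reasonable choices) and prefixes column names with the layer so that, for example, expression and promoter CG methylation for the same population stay distinct.

```python
def build_matrix(layers):
    """Combine per-layer {gene: {variable: value}} dicts into a gene-by-variable matrix.

    Rows: genes present in every layer (sorted). Columns: "<layer>:<variable>".
    A variable missing for a gene within a layer becomes None (handled in Step 4).
    """
    genes = sorted(set.intersection(*(set(layer) for layer in layers.values())))
    columns = []
    for layer_name, layer in layers.items():
        variables = sorted({v for per_gene in layer.values() for v in per_gene})
        columns.extend((layer_name, v) for v in variables)
    rows = [[layers[name][g].get(v) for name, v in columns] for g in genes]
    return genes, [f"{name}:{v}" for name, v in columns], rows
```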

Step 2: Formulate the Biological Question

The analytical approach is determined by the specific biological question you aim to answer. These generally fall into three categories [22]:

- Description: Understanding major interplay between variables (e.g., "How does DNA methylation impact gene expression genome-wide?").

- Selection: Identifying key biological units or biomarkers (e.g., "Which groups of genes show contrasting methylation and expression patterns?").

- Prediction: Building models to infer outcomes (e.g., "Can gene expression levels be predicted from methylation data in new individuals?").

Step 3: Select an Integration Tool

Selecting the right software tool is critical and depends on your biological question, data types, and preferred statistical methods. The following table summarizes some of the most cited tools available in the R programming environment.

Table: Select Genomic Data Integration Tools

| Tool Name | Primary Method | Supported Questions | Key Feature |
|---|---|---|---|
| mixOmics [22] | Dimension Reduction (PCA, PLS) | Description, Selection, Prediction | Suitable for integrating two or more datasets; offers extensive graphical functions. |
| MOFA [22] | Factor Analysis | Description, Selection | Uncovers the main sources of variation across multiple data types. |
| iCluster [22] | Clustering | Selection | Identifies subgroups across heterogeneous datasets. |

Step 4: Preprocess the Data

Data preprocessing ensures the quality and consistency of your data before integration, which is vital for the validity of the results. This stage involves [22]:

- Handling Missing Values: Decide on a strategy such as deletion (removing rows/columns with too many missing values) or imputation (replacing missing values with estimated ones like the median).

- Addressing Outliers: Identify and decide how to handle unusual values that could skew the analysis.

- Normalization: Adjust data to remove technical biases and make different datasets comparable.

- Batch Effect Correction: Account for technical variations introduced by different experimental batches, days, or platforms.
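The missing-value strategies above can be combined as a deletion filter followed by imputation. In this sketch missing entries are `None`, the 20% per-row threshold is an illustrative assumption, and each remaining gap is filled with its column median (the median of that variable across the retained genes).

```python
import statistics


def filter_and_impute(matrix, max_missing_fraction=0.2):
    """Drop rows with too many missing values (None), then median-impute the rest per column."""
    kept = [row for row in matrix
            if sum(v is None for v in row) / len(row) <= max_missing_fraction]
    n_cols = len(matrix[0]) if matrix else 0
    medians = []
    for j in range(n_cols):
        observed = [row[j] for row in kept if row[j] is not None]
        medians.append(statistics.median(observed) if observed else None)
    return [[medians[j] if v is None else v for j, v in enumerate(row)] for row in kept]
```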

Step 5: Conduct Preliminary Analysis

Before integration, perform descriptive statistics and analyze each dataset individually. This step helps you understand the structure and quality of each omics layer, prevents misinterpretation during integration, and can reveal data-specific patterns or biases [22].

Step 6: Execute Genomic Data Integration

Finally, run the chosen integration method (e.g., mixOmics in R) on your preprocessed and understood datasets. The output will allow you to explore the relationships between variables, select features of interest, or build predictive models as dictated by your initial biological question [22].

Troubleshooting Common Issues

Even with a careful workflow, challenges can arise. The table below outlines common problems, their symptoms, and potential solutions.

Table: Troubleshooting Common Data Integration Issues

| Problem Area | Common Symptoms | Possible Causes | Corrective Actions |
|---|---|---|---|
| Data Quality & Input [23] [24] | Low library yield; smear in electropherogram; enzyme inhibition. | Degraded DNA/RNA; sample contaminants (phenol, salts); inaccurate quantification. | Re-purify input sample; use fluorometric quantification (Qubit) over UV; check purity ratios (260/230 > 1.8). |
| Fragmentation & Ligation [23] | Unexpected fragment size; sharp ~70-90 bp peak (adapter dimers). | Over-/under-shearing; poor ligase performance; suboptimal adapter-to-insert ratio. | Optimize fragmentation parameters; titrate adapter ratios; ensure fresh enzymes and buffers. |
| Amplification & PCR [23] | Overamplification artifacts; high duplicate rate; bias. | Too many PCR cycles; carryover of enzyme inhibitors; mispriming. | Reduce the number of PCR cycles; re-purify sample to remove inhibitors; optimize annealing conditions. |
| Bioinformatics Pipeline [25] [24] | Low mapping rates; pipeline failures; incompatible formats. | Incorrect reference genome; poor quality reads; tool version conflicts; adapter contamination. | Use correct/indexed reference genome (e.g., GRCh38); perform QC with FastQC; trim adapters; use workflow managers (Nextflow, Snakemake). |
| Data Heterogeneity [21] | Inability to combine datasets; erroneous mappings. | Different file formats, structures, or identifier systems across databases. | Use translation layers or tools like Gintegrator [26] to map identifiers (e.g., between NCBI and UniProt) in real-time. |

Frequently Asked Questions (FAQs)

Q1: Why should I submit my genomic data to a public repository like GEO? Journals and funders often require data deposition in public repositories to ensure reproducibility and validation of scientific findings. Submission also provides long-term archiving, increases the visibility of your research, and integrates your data with other resources, amplifying its utility [27].

Q2: What are the key data and documentation required for submission? Repositories like the Gene Expression Omnibus (GEO) require complete, unfiltered data sets. This includes raw data (e.g., FASTQ files), processed data, and comprehensive metadata describing the samples, protocols, and overall study. Heavily filtered or partial datasets are not accepted [27].

Q3: How does the GDC handle different data types and ensure consistency? The NCI Genomic Data Commons (GDC) employs a process called harmonization. It realigns incoming genomic data (e.g., BAM files) to a consistent reference genome (GRCh38) and applies uniform pipelines for generating high-level data like mutation calls and RNA-seq quantifications. This creates a standardized resource that facilitates direct comparison across different cancer studies [28].

Q4: What are the consent requirements for sharing human genomic data? For studies involving human data, NHGRI expects explicit consent for future research use and broad data sharing. Data submitted to controlled-access repositories like dbGaP require authorization for access, ensuring patient privacy is protected in accordance with ethical and legal standards [28] [29].

Table: Key Research Reagent Solutions and Databases

| Resource Name | Type | Primary Function in Integration |
|---|---|---|
| NCBI GEO [27] | Data Repository | Archives and redistributes functional genomic datasets; crucial for data submission and access. |
| GDC [28] | Data Repository & Knowledge Base | Provides harmonized cancer genomic data, standardizing data from projects like TCGA for integrated analysis. |
| UniProt [8] | Protein Database | Provides a central repository of protein sequence and functional data. |
| OBO Foundry [8] | Ontology Resource | Provides a suite of open, standardized biological ontologies to enable consistent data annotation. |
| Gintegrator [26] | Identifier Translation Tool | Translates gene and protein identifiers across major databases (e.g., NCBI, UniProt, KEGG) in real-time. |
| mixOmics [22] | R Software Package | Provides statistical and graphical functions for the integration of multiple omics datasets. |

Frequently Asked Questions (FAQs)

Q1: What is the core value of a multi-omics approach compared to single-omics studies? A multi-omics approach provides a holistic and complementary view of the different layers of biological information. While a single-omics dataset (e.g., genomics) shows one piece of the puzzle, integrating multiple 'omes' (e.g., genomics, transcriptomics, proteomics, metabolomics) allows researchers to uncover the complex, causative relationships between them. This leads to a more comprehensive picture of cellular biology, enabling the discovery of more robust biomarkers and drug targets that would not be identifiable from a single data type alone [30] [31].

Q2: What are the primary types of multi-omics data integration? Multi-omics data integration strategies are broadly categorized based on how the samples are collected [32]:

- Matched (Vertical) Integration: Multiple types of omics data (e.g., DNA, RNA, protein) are measured from the same set of samples. This keeps the biological context consistent and is powerful for identifying direct associations between different molecular layers [32].

- Unmatched (Horizontal) Integration: Data is combined from different studies, cohorts, or samples that measure the same type of omic. This is often used to increase statistical power by combining datasets from multiple sources [21] [32].

Q3: My multi-omics datasets have different scales, formats, and lots of missing values. What are the first steps to handle this? This is a common challenge due to the inherent heterogeneity of omics technologies. A standard first step is preprocessing and normalization tailored to each data type [32]. This involves:

- Format Conversion: Converting data from different sources into a common structure and dimension for integration [21].

- Noise and Batch Effect Correction: Accounting for technical variations introduced by different platforms, labs, or experimental conditions [21] [32].

- Missing Value Imputation: Using computational methods to infer plausible values for missing data points, which is crucial for downstream statistical analysis [33].

Q4: What are the main computational methods for integrating matched multi-omics data? There are several classes of methods, each with a different approach. The choice depends on your biological question (e.g., unsupervised clustering vs. supervised classification). The table below summarizes some commonly used methods [32]:

| Method Name | Integration Type | Key Principle | Best For |
|---|---|---|---|
| MOFA (Multi-Omics Factor Analysis) | Unsupervised | Identifies latent factors that are common sources of variation across all omics datasets. | Discovering hidden structures and subgroups in data without prior labels. |
| DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) | Supervised | Identifies components that discriminate pre-defined sample groups (e.g., healthy vs. disease). | Identifying multi-omics biomarker panels for disease classification. |
| SNF (Similarity Network Fusion) | Unsupervised | Fuses sample-similarity networks from each omics layer into a single combined network. | Clustering patient samples into integrative molecular subtypes. |
| MCIA (Multiple Co-Inertia Analysis) | Unsupervised | A multivariate method that finds a shared dimensional space to reveal correlated patterns across datasets. | Jointly visualizing and interpreting relationships across multiple omics datasets. |

Q5: How can I interpret the results from a multi-omics integration analysis to gain biological insights? After running an integration model, focus on:

- Factor/Loading Interpretation (for MOFA): Examine which features (genes, proteins, etc.) have the highest weights ("loadings") in each latent factor. Features with high loadings for the same factor are co-varying across omics layers and are likely biologically linked [32].

- Pathway and Network Analysis: Input the list of important features identified by the integration model into pathway enrichment or protein-protein interaction network tools. This helps place the multi-omics findings in the context of established biological processes [32].

- Validation: Use independent experimental techniques (e.g., PCR, western blot) or cross-reference with publicly available databases to confirm key findings [30].

Troubleshooting Guides

Problem: My integrated model is overfitting and fails to generalize to new data. Potential Causes and Solutions:

- Cause 1: High Dimensionality, Low Sample Size (HDLSS). The number of variables (genes, proteins) vastly exceeds the number of samples, a common issue in omics [33].

- Solution: Employ feature selection before integration to reduce dimensionality. Use methods embedded in algorithms like DIABLO, which include penalization (e.g., Lasso) to select only the most informative features [32].

- Cause 2: Data Leakage. Information from the test dataset was inadvertently used during the model training process [31].

- Solution: Strictly partition your data into training, validation, and test sets before any preprocessing. Ensure that normalization parameters are learned only from the training set and then applied to the validation/test sets.

- Cause 3: Under-specification. The training process can produce many models that fit your training data well but make different predictions on new data [31].

- Solution: Use ensemble methods or perform multiple training runs with different initializations to ensure the stability and robustness of your model.
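The data-leakage fix under Cause 2 comes down to ordering: split first, then learn preprocessing parameters on the training portion only. A minimal sketch with z-score standardization follows; the split fraction and seed are arbitrary assumptions.

```python
import random
import statistics


def split_indices(n, test_fraction=0.2, seed=0):
    """Shuffle sample indices once and split them before any preprocessing."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = max(1, round(n * test_fraction))
    return idx[n_test:], idx[:n_test]


def fit_scaler(train_rows):
    """Learn per-feature mean and standard deviation from training rows only."""
    columns = list(zip(*train_rows))
    means = [statistics.fmean(c) for c in columns]
    sds = [statistics.pstdev(c) or 1.0 for c in columns]  # constant feature: avoid division by zero
    return means, sds


def apply_scaler(rows, means, sds):
    """Standardise any rows (train, validation or test) with the training parameters."""
    return [[(x - m) / s for x, m, s in zip(row, means, sds)] for row in rows]
```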

Problem: I am getting inconsistent signals between different omics layers (e.g., high RNA but low protein for a gene). Potential Causes and Solutions:

- Cause 1: Biological Regulation. This discrepancy is often biologically real and informative, due to post-transcriptional regulation, differences in protein turnover rates, or technical limitations in detecting certain proteins [30] [34].

- Solution: Do not assume perfect correlation. Use integration methods that can handle these non-linear relationships. Investigate the specific genes/proteins involved in the context of known biology; this inconsistency may reveal important regulatory mechanisms.

- Cause 2: Technical Noise. Different omics technologies have varying sensitivities and specificities [21] [32].

- Solution: Apply technology-specific quality control thresholds. For proteomics, be aware that mass spectrometry may not detect low-abundance proteins, which could explain a missing signal despite high RNA expression [34].

Problem: My data has significant batch effects from different experimental runs. Potential Causes and Solutions:

- Cause: Systematic technical variations introduced when samples are processed in different batches, on different days, or by different personnel [21] [32].

- Solution:

- Study Design: Whenever possible, randomize samples across batches to avoid confounding batch with biological groups.

- Batch Correction Algorithms: Use tools like ComBat (implemented in the sva R package) or limma's removeBatchEffect() to statistically remove batch effects after normalization but before data integration. Always validate that correction preserves biological signal.
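As a point of comparison for the batch-correction step, the simplest possible adjustment removes only per-batch shifts in the mean. The sketch below is that location-only correction for a single feature; it is much cruder than ComBat, which also adjusts scale and shrinks batch estimates with empirical Bayes, and it is shown only to make the idea concrete.

```python
import statistics
from collections import defaultdict


def center_batches(values, batches):
    """Location-only batch adjustment for one feature.

    Subtracts each batch's mean and adds back the grand mean, so every batch
    ends up with the same mean while within-batch differences are kept.
    """
    grand = statistics.fmean(values)
    by_batch = defaultdict(list)
    for v, b in zip(values, batches):
        by_batch[b].append(v)
    batch_means = {b: statistics.fmean(vs) for b, vs in by_batch.items()}
    return [v - batch_means[b] + grand for v, b in zip(values, batches)]
```

If batch is perfectly confounded with biological group, this centering removes the biological difference along with the batch effect, which is why randomizing samples across batches matters.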

Experimental Protocols for Key Multi-Omics Workflows

Protocol 1: A Basic Matched Multi-Omics Workflow from a Single Tissue Sample This protocol outlines how to process a single tissue sample to extract multiple analytes for integrated analysis.

1. Sample Lysis and Fractionation:

- Objective: To simultaneously isolate DNA, RNA, protein, and metabolites from a single sample.

- Steps: a. Homogenize ~50 mg of frozen tissue in a commercially available TRIzol-like or multi-omics lysis reagent. b. Perform phase separation by adding chloroform and centrifuging; the mixture separates into an upper aqueous phase (RNA), an interphase (DNA), and a lower organic phase (proteins and metabolites). c. Carefully collect each phase separately for downstream processing.

2. Omics-Specific Processing:

- Genomics/DNA: a. Precipitate DNA from the interphase using ethanol. b. Wash, purify, and resuspend the DNA pellet. c. Proceed to DNA sequencing library preparation (e.g., for Whole Genome Sequencing).

- Transcriptomics/RNA: a. Precipitate RNA from the aqueous phase using isopropanol. b. Wash the RNA pellet (e.g., with 75% ethanol) and dissolve in RNase-free water. c. Assess RNA quality (e.g., RIN > 8) using a Bioanalyzer. d. Proceed to RNA sequencing library preparation (e.g., mRNA enrichment, reverse transcription to cDNA).

- Proteomics/Proteins: a. Precipitate proteins from the organic phase with isopropanol. b. Wash the protein pellet and dissolve in a suitable buffer. c. Digest proteins into peptides using trypsin. d. Desalt the peptides and analyze by Liquid Chromatography-Mass Spectrometry (LC-MS/MS).

- Metabolomics/Metabolites: a. Dry down the metabolite-containing organic phase under nitrogen or vacuum. b. Reconstitute in a solvent compatible with your analysis platform (e.g., LC-MS or GC-MS).

Logical Workflow Diagram:

Protocol 2: Transcriptomic and Proteomic Integration for Biomarker Discovery This protocol details a paired analysis of gene and protein expression from the same biological condition.

1. Sample Preparation:

- Split a cell pellet or tissue homogenate into two aliquots.

- Aliquot 1 (for Transcriptomics): Preserve in RNAlater or immediately extract total RNA. Proceed with RNA-seq library prep and sequencing.

- Aliquot 2 (for Proteomics): Lyse cells in a protein-compatible buffer (e.g., RIPA with protease inhibitors). Quantify total protein. Proceed with protein digestion and LC-MS/MS.

2. Data Preprocessing and Normalization:

- RNA-seq Data: a. Generate raw count tables from sequencing reads using an alignment tool (e.g., STAR) or pseudoalignment (e.g., Kallisto). b. Normalize raw counts using a method like TMM (for cross-sample comparison) and transform to log2-CPM (Counts Per Million).

- Proteomics Data: a. Identify and quantify proteins from MS/MS spectra using search engines (e.g., MaxQuant). b. Normalize protein abundance values to correct for technical variation (e.g., using median or quantile normalization).
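The normalization in step 2 can be approximated in a few lines. The RNA part below computes log2(CPM + 1) from raw library sizes only, so it is not TMM (which would first rescale library sizes with per-sample normalization factors, as edgeR's calcNormFactors does); the pseudocount of 1 is an assumption. The proteomics part is median normalization on log-scale abundances: each sample is shifted so that all samples share the median of the per-sample medians.

```python
import math
import statistics


def log2_cpm(counts, pseudocount=1.0):
    """counts: one list of gene counts per sample (non-zero library size). Returns log2(CPM + pseudocount).

    Uses raw library sizes; TMM would first rescale these by per-sample factors.
    """
    out = []
    for sample in counts:
        lib_size = sum(sample)
        out.append([math.log2(c / lib_size * 1e6 + pseudocount) for c in sample])
    return out


def median_normalize(log_intensities):
    """Shift each sample (list of log-scale protein abundances) so all samples share one median."""
    medians = [statistics.median(s) for s in log_intensities]
    target = statistics.median(medians)
    return [[x - m + target for x in s] for s, m in zip(log_intensities, medians)]
```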

3. Data Integration and Analysis:

- Objective: Identify genes that show concordant or discordant regulation at the RNA and protein level.

- Steps: a. Match gene symbols (for RNA) to corresponding protein names. b. Calculate the fold-change (e.g., diseased vs. control) for both RNA and protein. c. Create a scatter plot of RNA log2-fold-change vs. Protein log2-fold-change. d. Statistically test for correlation (e.g., Pearson) and identify outliers (genes with large protein fold-change but minimal RNA change, suggesting post-transcriptional regulation). e. Input the list of concordant, highly changing biomarkers into a supervised integration tool like DIABLO to build a robust multi-omics classifier.
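Steps b to d can be sketched as follows, assuming gene symbols have already been matched across the two layers (step a) and that values are on a log2 scale, so a fold change is a difference of group means. The discordance cut-offs (|protein log2FC| of at least 1 with |RNA log2FC| of at most 0.5) are hypothetical and should be tuned to the data; a p-value for the correlation is not computed here.

```python
import math
import statistics


def log2_fold_change(case_log2, control_log2):
    """Difference of group means on an already log2-transformed scale."""
    return statistics.fmean(case_log2) - statistics.fmean(control_log2)


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def concordance(rna_lfc, protein_lfc, protein_min=1.0, rna_max=0.5):
    """Correlate RNA and protein log2FC over shared genes and flag discordant ones.

    Discordant: |protein log2FC| >= protein_min while |RNA log2FC| <= rna_max,
    i.e. candidates for post-transcriptional regulation.
    """
    shared = sorted(set(rna_lfc) & set(protein_lfc))
    r = pearson([rna_lfc[g] for g in shared], [protein_lfc[g] for g in shared])
    discordant = [g for g in shared
                  if abs(protein_lfc[g]) >= protein_min and abs(rna_lfc[g]) <= rna_max]
    return r, discordant
```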

Data Integration Strategy Diagram:

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential reagents and tools for generating multi-omics data, with a focus on nucleic acid-based methods which form the core of genomics, epigenomics, and transcriptomics [30].

| Reagent / Tool | Function / Application | Relevant Omics Layer(s) |
|---|---|---|
| DNA Polymerases | Enzymes that synthesize new DNA strands; critical for PCR, library amplification, and sequencing. | Genomics, Epigenomics, Transcriptomics |
| Reverse Transcriptases | Enzymes that transcribe RNA into complementary DNA (cDNA); essential for RNA-seq. | Transcriptomics |
| PCR Kits & Master Mixes | Optimized buffered solutions containing polymerase, dNTPs, and co-factors for efficient and specific DNA amplification. | Genomics, Epigenomics, Transcriptomics |
| Oligonucleotide Primers | Short, single-stranded DNA sequences designed to bind to a specific target region and initiate DNA synthesis by polymerase. | All nucleic acid-based layers |
| dNTPs (deoxynucleotide triphosphates) | The building blocks (A, T, C, G) for DNA synthesis. | Genomics, Epigenomics, Transcriptomics |
| Methylation-Sensitive Enzymes | Restriction enzymes or other modifying enzymes used to detect and study DNA methylation patterns. | Epigenomics |
| Restriction Enzymes | Proteins that cut DNA at specific recognition sequences; used in various library prep and epigenomic assays. | Genomics, Epigenomics |
| Mass Spectrometry Kits | Reagents for protein/peptide standard curves, digestion, labeling (e.g., TMT), and cleanup for LC-MS/MS. | Proteomics, Metabolomics |

Technical Support Center: FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What are the most common data-related issues causing poor ML model performance in biological data fusion? Poor ML model performance often stems from data quality issues rather than algorithmic problems. The most common culprits include:

- Incomplete or insufficient data: Missing values in genomic datasets or datasets too small for model training lead to underfitting and poor generalization [35].

- Data corruption: Mismanagement or improper formatting of heterogeneous biological data from different sources [35].

- Imbalanced data: Unequal distribution of data classes, such as rare disease variants versus common variants, skews predictions [35].

- Inadequate feature selection: Including irrelevant genomic features that don't contribute to predictive output [35].

Q2: How can we effectively integrate heterogeneous biological databases with different structures and formats? Successful integration requires both technical and strategic approaches:

- Adaptive data modeling: Use hybrid database architectures combining relational databases for structured genomic data and document-oriented databases (e.g., MongoDB) for unstructured experimental data [36].

- Graph databases: Implement graph databases (e.g., Neo4j) for highly interconnected biological data like protein-protein interaction networks [36].

- Automated data pipelines: Deploy tools like Apache NiFi with error-handling frameworks to process raw biological data files (e.g., FASTQ) and normalize inputs [36].

- Interactive data environments: Create specialized integration layers that enable cross-repository analyses while preserving specialized governance of underlying databases [36].

Q3: What preprocessing steps are essential for genomic data before ML analysis?

Essential preprocessing steps include [35]:

- Handling missing data: Remove entries with excessive missing values, or impute the mean or median when only a few values are missing.

- Addressing outliers: Use box plots to identify and remove values that stand out distinctly from the rest of the dataset.

- Feature normalization/standardization: Scale features to the same magnitude to prevent models from giving undue weight to high-magnitude features.

- Balancing imbalanced datasets: Apply resampling techniques or data augmentation to address skewed class distributions.
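The four preprocessing steps above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the cited protocol: it assumes a samples × features matrix with `NaN` marking missing values, uses median imputation, the common 1.5 × IQR box-plot whisker rule for outliers, z-score standardization, and simple random oversampling. The function name `preprocess` and its default thresholds are hypothetical.

```python
import numpy as np

def preprocess(X, y, max_missing_frac=0.5, iqr_k=1.5, seed=0):
    """Illustrative version of the Q3 steps for a samples x features matrix (NaN = missing)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)

    # 1. Missing data: drop samples with too many gaps, median-impute the rest.
    keep = np.isnan(X).mean(axis=1) <= max_missing_frac
    X, y = X[keep], y[keep]
    X = np.where(np.isnan(X), np.nanmedian(X, axis=0), X)

    # 2. Outliers: drop samples with any value outside the box-plot whiskers
    #    (first quartile - iqr_k * IQR, third quartile + iqr_k * IQR).
    q1, q3 = np.percentile(X, [25, 75], axis=0)
    iqr = q3 - q1
    inlier = ((X >= q1 - iqr_k * iqr) & (X <= q3 + iqr_k * iqr)).all(axis=1)
    X, y = X[inlier], y[inlier]

    # 3. Standardization: zero mean and unit variance per feature.
    sd = X.std(axis=0)
    sd[sd == 0] = 1.0  # leave constant features unscaled instead of dividing by zero
    X = (X - X.mean(axis=0)) / sd

    # 4. Class balance: randomly oversample minority classes up to the majority size.
    rng = np.random.default_rng(seed)
    classes, counts = np.unique(y, return_counts=True)
    rows = []
    for c, n in zip(classes, counts):
        members = np.flatnonzero(y == c)
        extra = rng.choice(members, size=counts.max() - n, replace=True)
        rows.append(np.concatenate([members, extra]))
    rows = np.concatenate(rows)
    return X[rows], y[rows]
```

In a real pipeline, the medians, quartiles, and scaling parameters should be estimated on the training split only and then applied to the test split, and oversampling should touch only training data; otherwise information leaks into the evaluation.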

Q4: How can we validate that our gene prioritization framework is performing optimally?

Validation should include multiple approaches:

- Cross-validation: Divide the data into k subsets; train on k-1 subsets and test on the remaining one, repeating k times so that each subset serves as the test set exactly once [35].

- Benchmarking against established tools: Compare performance against tools like GEO2R and STRING [37] [38].

- Performance metrics: Evaluate using precision, recall, F1 score, and the area under the ROC curve (AUC-ROC) [37] [38].

- Bias-variance tradeoff assessment: Ensure models balance underfitting and overfitting through regularization and complexity management [35].
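For a ranked gene list, the metrics above can be computed against a benchmark set of known disease genes. The sketch below assumes the framework outputs genes ordered best-first and that "positives" are members of a hypothetical benchmark set (for example, genes from clinical trials). Precision, recall, and F1 are taken over the top k genes, and AUC-ROC uses the rank-based (Mann-Whitney) formulation, ignoring ties. The function `evaluate_ranking` is illustrative only.

```python
import numpy as np

def evaluate_ranking(ranked_genes, benchmark, k):
    """Precision, recall and F1 of the top-k genes, plus rank-based AUC-ROC."""
    benchmark = set(benchmark)
    top_k = set(ranked_genes[:k])
    tp = len(top_k & benchmark)
    precision = tp / k
    recall = tp / len(benchmark)  # benchmark genes missing from the list count as misses
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0

    # AUC-ROC = probability that a random benchmark gene is ranked above a random
    # non-benchmark gene (Mann-Whitney formulation, no ties in a strict ranking).
    is_pos = np.array([g in benchmark for g in ranked_genes])
    n_pos, n_neg = is_pos.sum(), (~is_pos).sum()
    # For each position, the number of non-benchmark genes at or below it in the list.
    neg_below = np.cumsum((~is_pos)[::-1])[::-1]
    auc = neg_below[is_pos].sum() / (n_pos * n_neg)
    return {"precision": precision, "recall": recall, "f1": f1, "auc": auc}
```

Reporting both a top-k metric and AUC-ROC is useful because the first reflects what a team would actually follow up experimentally, while the second summarizes the whole ranking.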

Q5: What are the key advantages of using AI-driven literature analysis in target prioritization?

AI-driven literature analysis provides:

- Automated evidence synthesis: GPT-4 and similar models can automate the synthesis of preclinical and clinical evidence, identifying targets with mechanistic and translational relevance [37] [38].

- Reduced manual curation: Significantly decreases the time required for literature review while maintaining comprehensive coverage [37].

- Identification of structural domains: LLMs can predict essential information about gene targets, including structural domains, toxicity, functional significance, and clinical relevance [37].

Troubleshooting Common Experimental Issues

Issue 1: Model Overfitting on Genomic Data

- Symptoms: Excellent performance on training data but poor performance on validation/test data [35].

- Diagnosis: Low bias but high variance, often occurring with complex models on limited genomic datasets [35].

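- Solutions:

- Apply regularization to penalize model complexity.

- Reduce model complexity or the number of input features, for example through feature selection or PCA.

- Use k-fold cross-validation to measure the gap between training and validation performance.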

Issue 2: Handling Rare Genetic Variants in Prediction Models

- Symptoms: Poor prediction accuracy for rare variants despite good overall model performance [39].

- Diagnosis: Tree-based models may treat individuals with 1 or 2 risk alleles identically due to sparse data, incidentally learning a dominant model [39].

- Solutions:

- Apply specialized sampling techniques to oversample rare variant cases.

- Use ensemble methods combining multiple algorithms.

- Consider semi-supervised learning approaches for partially labeled data [39].

- Implement feature engineering to create aggregated rare variant scores.
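The last solution, an aggregated rare-variant score, can be sketched as a per-gene burden score. This is an illustration under assumptions: it sums alternate-allele counts per gene and, optionally, weights each variant by the Beta(1, 25) density of its MAF (the default weighting popularized by SKAT), so rarer variants contribute more. Keeping allele counts of 0, 1, and 2 also preserves the difference between one and two risk alleles that sparse tree splits can miss. The function name `burden_scores` and its inputs are hypothetical.

```python
import numpy as np

def burden_scores(genotypes, maf, gene_of_variant, weighted=True):
    """Collapse rare variants into one score per (sample, gene).

    genotypes: samples x variants matrix of alternate-allele counts (0, 1, 2).
    maf: minor allele frequency of each variant (same order as the columns).
    gene_of_variant: gene label of each variant (same order as the columns).
    """
    G = np.asarray(genotypes, dtype=float)
    maf = np.asarray(maf, dtype=float)
    # Beta(1, 25) density = 25 * (1 - maf)**24: rarer variants receive larger weights.
    w = 25 * (1 - maf) ** 24 if weighted else np.ones_like(maf)
    labels = np.asarray(gene_of_variant)
    genes = sorted(set(gene_of_variant))
    # Weighted sum of allele counts over the variants assigned to each gene.
    scores = np.column_stack([G[:, labels == g] @ w[labels == g] for g in genes])
    return genes, scores
```

The resulting samples × genes matrix can replace the sparse per-variant columns as model input.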

Issue 3: Integration of Multi-omics Data with Different Scales and Distributions

- Symptoms: Model performance degrades when combining genomic, transcriptomic, and proteomic data.

- Diagnosis: Different omics data types have varying magnitudes, units, and distributions [35].

- Solutions:

- Apply modality-specific normalization before integration.

- Use hybrid AI frameworks like graph neural networks that can handle heterogeneous data structures [40].

- Implement multi-stage integration approaches rather than simple concatenation.

- Employ dimensionality reduction techniques like PCA on individual omics layers before fusion [35].
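A simple baseline for modality-specific normalization followed by per-layer PCA is sketched below. It assumes each omics layer is a samples × features array with rows in the same sample order. Each layer is z-scored on its own, reduced to a few principal components via SVD, and its scores are divided by the layer's leading singular value (the block weighting used in multiple factor analysis) before concatenation, so a layer with many features does not dominate. This illustrates the normalization and dimensionality-reduction steps only; it is not a graph neural network or a full multi-stage method, and `fuse_omics` is a hypothetical name.

```python
import numpy as np

def fuse_omics(blocks, n_components=2):
    """Normalize each omics layer separately, reduce it with PCA, then concatenate.

    blocks: dict mapping layer name -> samples x features array (same sample order).
    """
    reduced = []
    for name, X in blocks.items():
        X = np.asarray(X, dtype=float)
        # Modality-specific z-scoring so no layer dominates through its units.
        sd = X.std(axis=0)
        sd[sd == 0] = 1.0
        Z = (X - X.mean(axis=0)) / sd
        # PCA via SVD of the centred, scaled matrix.
        U, S, Vt = np.linalg.svd(Z, full_matrices=False)
        k = min(n_components, S.size)
        # Component scores, scaled by the leading singular value of this layer.
        reduced.append(U[:, :k] * S[:k] / S[0])
    return np.hstack(reduced)
```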

Issue 4: Interpretability of AI Models in Biological Discovery

- Symptoms: Difficulty understanding model decisions and biological relevance of prioritized genes.

- Diagnosis: Black-box models like deep neural networks provide limited biological insights [40].

- Solutions:

- Incorporate explainable AI techniques like SHAP or LIME.

- Use biologically constrained models that embed prior knowledge.

- Implement attention mechanisms in neural networks to highlight relevant features.

- Validate findings through network-based prioritization and functional annotations [37].
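SHAP and LIME require their own libraries. As a lighter, model-agnostic stand-in (a different technique from SHAP or LIME), scikit-learn's permutation importance measures how much held-out accuracy drops when a single feature is shuffled. The sketch assumes a numeric feature matrix and binary labels; the random forest, the 70/30 split, and the function `rank_features` are illustrative choices.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

def rank_features(X, y, feature_names, seed=0):
    """Rank features by permutation importance on a held-out split."""
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.3, random_state=seed, stratify=y
    )
    model = RandomForestClassifier(n_estimators=200, random_state=seed).fit(X_tr, y_tr)
    # Shuffle one feature at a time on held-out data and record the drop in accuracy.
    result = permutation_importance(model, X_te, y_te, n_repeats=10, random_state=seed)
    order = np.argsort(result.importances_mean)[::-1]
    return [(feature_names[i], result.importances_mean[i]) for i in order]
```

Features whose permutation barely changes the score contribute little to predictions; highly ranked genes can then be checked against network-based prioritization and functional annotations.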

Experimental Protocols & Methodologies

GETgene-AI Framework for Disease Gene Prioritization

The GETgene-AI framework provides a systematic approach for prioritizing actionable drug targets in cancer research, demonstrated through a pancreatic cancer case study [37] [38].

Detailed Methodology

1. Initial Gene List Generation

- G List (Genetic Variants): Compile 2,493 genes with high mutational frequency, functional significance, and genotype-phenotype associations from databases like TCGA and COSMIC [37] [38].

- E List (Differential Expression): Identify 2,000 genes exhibiting significant differential expression in pancreatic ductal adenocarcinoma compared to normal tissues [37] [38].

- T List (Known Targets): Curate 131 genes annotated as drug targets in clinical trials, patents, or approved therapies [37] [38].

2. Network-Based Prioritization and Expansion

- Process each list through the Biological Entity Expansion and Ranking Engine (BEERE) [37] [38].

- Iteratively prioritize by taking the top 500 genes from each list, re-expanding and ranking [37] [38].

- Leverage protein-protein interaction networks, functional annotations, and experimental evidence [37].

3. Multi-list Integration and Annotation

- Merge the refined G, E, and T lists.

- Annotate with biologically significant features.

- Benchmark against genes implicated in pancreatic cancer clinical trials to set weights for RP score ranking [37].
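The merge in step 3 can be illustrated with a weighted rank aggregation. This is not the published RP score: the sketch assumes each refined list is ordered best-first, converts positions to normalized scores in (0, 1], and combines them with per-list weights. In the framework the weights are set by benchmarking against pancreatic cancer clinical-trial genes; here they are hypothetical inputs, as is the function `merge_ranked_lists`.

```python
def merge_ranked_lists(lists, weights):
    """Combine best-first gene lists into one weighted score (higher is better).

    lists: dict of list name -> ranked genes, e.g. {"G": [...], "E": [...], "T": [...]}
    weights: dict of list name -> weight (hypothetical; tuned on benchmark genes).
    """
    positions = {name: {g: i for i, g in enumerate(ranked)} for name, ranked in lists.items()}
    genes = set().union(*lists.values())
    scores = {}
    for gene in genes:
        total = 0.0
        for name, ranked in lists.items():
            pos = positions[name].get(gene)
            if pos is not None:
                # The top of a list scores 1.0; the last entry scores 1/len(list).
                total += weights[name] * (len(ranked) - pos) / len(ranked)
        scores[gene] = total
    # Sort by descending score, breaking ties alphabetically for reproducibility.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
```

Genes that appear near the top of several lists accumulate the highest scores, which mirrors the intent of integrating genetic, expression, and target evidence.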

4. AI-Driven Literature Validation

- Integrate GPT-4o for automated literature analysis [37] [38].

- Validate prioritized targets through synthesis of preclinical and clinical evidence [37].

- Further annotate the target list based on literature evidence [37].

Performance Evaluation

The framework was benchmarked against established tools with the following results:

Table 1: Performance Comparison of GETgene-AI Against Established Tools

| Metric | GETgene-AI | GEO2R | STRING |
|---|---|---|---|
| Precision | Superior | Lower | Moderate |
| Recall | Superior | Lower | Moderate |
| Efficiency | Higher | Lower | Moderate |
| False Positive Mitigation | Effective | Limited | Moderate |

The framework successfully prioritized high-priority targets such as PIK3CA and PRKCA, validated through experimental evidence and clinical relevance [37].

Machine Learning for Polygenic Risk Scoring in Brain Disorders

Methodology for Enhanced PRS with ML

1. Data Preparation and Quality Control

- Apply standard GWAS quality control procedures [39].

- Conduct population stratification correction using principal component analysis [39].

- Implement linkage disequilibrium pruning [39].
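LD pruning can be sketched as a greedy pass over SNPs in genomic order: a SNP is kept only if its squared correlation (r²) with every already-kept SNP inside a window is below a threshold. This is a simplified stand-in for tools such as PLINK's `--indep-pairwise`, which uses a sliding window with a step size and its own rules for which SNP of a correlated pair to drop. The window size, threshold, and function name `ld_prune` are assumptions, and monomorphic SNPs are assumed to have been removed during QC.

```python
import numpy as np

def ld_prune(genotypes, window=50, r2_threshold=0.2):
    """Greedy LD pruning over SNPs in genomic order.

    genotypes: samples x SNPs matrix of allele counts, columns in genomic order.
    Returns the column indices of the retained SNPs.
    """
    G = np.asarray(genotypes, dtype=float)
    kept = []
    for j in range(G.shape[1]):
        independent = True
        # Compare with already-kept SNPs, nearest first, until leaving the window.
        for i in reversed(kept):
            if j - i >= window:
                break  # kept is sorted, so all remaining SNPs are farther away
            r = np.corrcoef(G[:, i], G[:, j])[0, 1]
            if r * r >= r2_threshold:
                independent = False
                break
        if independent:
            kept.append(j)
    return kept
```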

2. Feature Selection and Engineering

- Univariate and Bivariate Selection: Identify features strongly related to the output variable using statistical tests [35].

- Principal Component Analysis (PCA): Reduce dimensionality by projecting the features onto a smaller set of components that capture most of the variance [35].

- Feature Importance Analysis: Leverage algorithms like Random Forest and ExtraTreesClassifier to select high-importance features [35].
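The three feature-selection strategies map onto standard scikit-learn estimators. The sketch assumes a numeric samples × features matrix `X` and class labels `y`; `SelectKBest` with an ANOVA F-test stands in for the univariate statistical test, and the values of `k` and `n_components` are arbitrary. Note that PCA is unsupervised and produces new components rather than selecting original features. The function `select_features` is hypothetical.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import SelectKBest, f_classif

def select_features(X, y, k=10, n_components=5, seed=0):
    """Return univariate picks, PCA explained-variance ratios, and tree-based picks."""
    # Univariate selection: ANOVA F-test between each feature and the class label.
    univariate = SelectKBest(score_func=f_classif, k=k).fit(X, y)
    top_univariate = np.flatnonzero(univariate.get_support())

    # PCA: unsupervised projection onto the directions of highest variance.
    pca = PCA(n_components=n_components, random_state=seed).fit(X)

    # Tree-based importance: impurity decrease accumulated across an ExtraTrees ensemble.
    trees = ExtraTreesClassifier(n_estimators=200, random_state=seed).fit(X, y)
    top_trees = np.argsort(trees.feature_importances_)[::-1][:k]
    return top_univariate, pca.explained_variance_ratio_, top_trees
```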

3. Model Selection and Training

- Algorithm Selection: Choose according to the prediction task: regression for numerical outputs, classification for categorical outputs, and clustering for discovering structure in unlabeled data [35].

- Ensemble Methods: Implement boosting, bagging, stacking, or cascading for complex datasets [35].

- Neural Networks: Apply for complex and larger datasets [35].

4. Hyperparameter Tuning and Validation

- Tune hyperparameters (e.g., k in k-nearest neighbors) to optimize performance [35].

- Implement k-fold cross-validation to assess model generalizability [35].

- Balance bias-variance tradeoff by selecting optimal model complexity [35].
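Tuning k in k-nearest neighbors with k-fold cross-validation might look like the following, assuming scikit-learn and a binary outcome such as case/control status. Small k gives low-bias, high-variance models and large k the reverse, so the grid search is one concrete way to choose the bias-variance balance. The candidate values, five folds, AUC scoring, and the function `tune_knn` are illustrative choices.

```python
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

def tune_knn(X, y, k_values=(1, 3, 5, 7, 9, 15), n_splits=5, seed=0):
    """Pick k for k-NN by stratified k-fold cross-validated AUC-ROC."""
    # Scaling lives inside the pipeline so it is re-fit on each training fold (no leakage).
    pipe = make_pipeline(StandardScaler(), KNeighborsClassifier())
    cv = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    search = GridSearchCV(
        pipe,
        {"kneighborsclassifier__n_neighbors": list(k_values)},
        cv=cv,
        scoring="roc_auc",
    )
    search.fit(X, y)
    return search.best_params_["kneighborsclassifier__n_neighbors"], search.best_score_
```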

Table 2: Performance of ML-Enhanced PRS for Brain Disorders

| Disorder | Traditional PRS (AUC) | ML-Enhanced PRS (AUC) | Notes |
|---|---|---|---|
| Schizophrenia | 0.73 | 0.54-0.95 (varied) | Highly heritable disorder [39] |
| Alzheimer's Disease | 0.70-0.75 (clinical); 0.84 (pathological) | Improved range reported | APOE status significantly impacts risk [39] |
| Bipolar Disorder | 0.65 | Improved range reported | Lower heritability than schizophrenia [39] |

Workflow Visualization

Figure: GETgene-AI Framework Workflow

Figure: ML Model Development & Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for ML in Biological Data Fusion

| Tool/Resource | Function | Application in Research |
|---|---|---|
| BEERE (Biological Entity Expansion and Ranking Engine) | Network-based prioritization tool | Expands and ranks gene lists using protein-protein interaction networks and functional annotations [37] [38] |
| GPT-4o | Large language model | Automates literature analysis, synthesizes preclinical and clinical evidence for target validation [37] [38] |
| Graph Databases (Neo4j) | Relationship modeling | Stores and queries highly interconnected biological data like protein-protein interaction networks [36] |
| Document-oriented Databases (MongoDB) | Flexible data storage | Captures variable biological data with nested structures, suitable for single-cell sequencing experiments [36] |
| Apache NiFi | Data pipeline automation | Processes raw biological data files with error-handling frameworks [36] |
| TCGA (The Cancer Genome Atlas) | Genomic data repository | Provides comprehensive genomic data for cancer research and target discovery [37] [38] |
| COSMIC (Catalogue of Somatic Mutations in Cancer) | Mutation database | Curates comprehensive information on somatic mutations in human cancer [37] [38] |
| AlphaFold | Protein structure prediction | Accurately predicts protein 3D structures using advanced neural networks [40] |
| DeepBind | DNA/RNA binding site prediction | Identifies protein binding sites and regulatory elements in genomes [40] |

FAQs: Gene Discovery Methods and Challenges

Q: What are the primary computational methods for discovering new disease-gene associations in rare diseases?

A: Gene burden testing is a primary analytical framework. This method tests for the enrichment of rare, protein-coding variants in cases versus controls. The process involves:

- Variant Filtering: Focusing on rare, predicted pathogenic variants (e.g., loss-of-function, de novo variants).

- Statistical Modeling: Using methods like Firth’s logistic regression to account for rare events and unbalanced studies.

- Multiple Testing Correction: Applying false discovery rate (FDR) adjustments to identify significant associations.

Tools like the open-source geneBurdenRD R package have been developed specifically for this purpose in Mendelian diseases [41].
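A minimal gene burden test is sketched below. It deliberately swaps in a simpler test than the one described above: a one-sided Fisher's exact test on per-gene carrier counts (cases vs. controls) instead of Firth's logistic regression, so it cannot adjust for covariates such as ancestry principal components. The p-values are then adjusted with the Benjamini-Hochberg FDR procedure. The input format, gene names, and function names are hypothetical, and this is not the geneBurdenRD implementation.

```python
from scipy.stats import fisher_exact

def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonic q-values.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

def burden_test(carrier_counts, n_cases, n_controls):
    """carrier_counts: gene -> (case carriers, control carriers) of qualifying rare variants."""
    genes = sorted(carrier_counts)
    pvals = []
    for g in genes:
        a, b = carrier_counts[g]
        # 2x2 table: rows = cases/controls, columns = carriers/non-carriers.
        table = [[a, n_cases - a], [b, n_controls - b]]
        _, p = fisher_exact(table, alternative="greater")  # enrichment in cases
        pvals.append(p)
    qvals = benjamini_hochberg(pvals)
    return {g: (p, q) for g, p, q in zip(genes, pvals, qvals)}
```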

Q: How can a case-only study design be valid for identifying disease genes?

A: While case-control designs are ideal, large-scale collaborations often generate data only for affected individuals. A case-only design can be effective with careful execution [42]:

- Variant Filtering: Use publicly available control databases (e.g., gnomAD, 1000 Genomes) to filter out common variants, typically those with a minor allele frequency (MAF) above 1%.

- Variant Prioritization: Prioritize rare variants with predicted high functionality, such as loss-of-function or splice-site alterations.

- Gene Prioritization: Combine genetic data with other evidence, such as gene constraint or pathway information.

This approach is particularly powerful for identifying rare, penetrant variants when large control sets sequenced with the same technology are unavailable [42].
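The filtering and prioritization steps of a case-only design can be sketched in plain Python. The record fields (`gene`, `sample`, `consequence`, `gnomad_af`), the set of Sequence Ontology consequence terms treated as loss-of-function, and the 1% threshold are assumptions for illustration; variants absent from the reference database are treated as novel (frequency 0). Because the population allele frequency of a rare variant is effectively its MAF, the threshold is applied directly to it.

```python
# Sequence Ontology consequence terms treated here as loss-of-function (an assumption).
LOF_CONSEQUENCES = {
    "stop_gained",
    "frameshift_variant",
    "splice_acceptor_variant",
    "splice_donor_variant",
}

def prioritize_genes(variants, max_af=0.01):
    """Rank genes by the number of affected individuals carrying a rare LoF variant.

    variants: iterable of dicts with hypothetical keys 'gene', 'sample',
    'consequence' and 'gnomad_af' (None when the variant is absent from gnomAD).
    """
    carriers = {}
    for v in variants:
        af = v["gnomad_af"] if v["gnomad_af"] is not None else 0.0
        if af > max_af:
            continue  # common in the reference population: filter out
        if v["consequence"] not in LOF_CONSEQUENCES:
            continue  # keep only predicted high-impact variants
        carriers.setdefault(v["gene"], set()).add(v["sample"])
    # Count each individual once per gene, even with several qualifying variants.
    return sorted(((g, len(s)) for g, s in carriers.items()), key=lambda x: (-x[1], x[0]))
```

The per-gene carrier counts are only a starting point; as noted above, they would then be combined with constraint or pathway evidence.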

Q: What role do graph databases play in integrating data for gene discovery?

A: Graph databases like Neo4j are increasingly valuable for managing the complex, interconnected nature of biological data. They offer significant advantages over traditional relational databases (e.g., MySQL) for gene discovery research [43]:

- Efficient Querying: They natively represent relationships, allowing for fast traversal of complex connections (e.g., between genes, proteins, diseases, and drugs) without computationally expensive "join" operations.

- Hypothesis Generation: By easily querying diverse relationships, researchers can uncover novel associations, such as potential drug repurposing opportunities.

- Performance: In performance tests, a graph database containing 114,550 nodes and over 82 million relationships significantly outperformed MySQL in query execution speed, especially for complex queries [43].
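The kind of multi-hop query a graph database answers natively can be illustrated with an in-memory adjacency list. The sketch below is not Neo4j or Cypher; it assumes hypothetical relationship types (`ASSOCIATED_GENE`, `INTERACTS_WITH`, `TARGETED_BY`) and walks disease → gene → interacting gene → drug to surface drugs that reach a disease only through an interaction partner, a simple drug-repurposing hypothesis.

```python
from collections import defaultdict

def build_graph(edges):
    """Store (source, relationship, target) triples as an adjacency list."""
    graph = defaultdict(list)
    for src, rel, dst in edges:
        graph[src].append((rel, dst))
    return graph

def follow(graph, nodes, relationship):
    """One traversal hop: all targets reachable from `nodes` via `relationship`."""
    return {dst for n in nodes for rel, dst in graph.get(n, []) if rel == relationship}

def repurposing_candidates(graph, disease):
    """Drugs linked to `disease` only through an interaction partner of a disease gene."""
    genes = follow(graph, {disease}, "ASSOCIATED_GENE")
    partners = follow(graph, genes, "INTERACTS_WITH")
    direct = follow(graph, genes, "TARGETED_BY")
    return sorted(follow(graph, partners, "TARGETED_BY") - direct)
```

Each hop is a lookup in the current node's neighbor list rather than a table join, which is the property that lets graph databases traverse long relationship chains efficiently.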

Q: What is the clinical impact of solving undiagnosed rare disease cases?

A: Obtaining a definitive genetic diagnosis can be transformative for patients and families, ending a long "diagnostic odyssey." The impact includes [44]: