BayesA Prior Specification and Parameter Tuning: A Practical Guide for Genomic Prediction and Biomarker Discovery


Abstract

This article provides a comprehensive guide to BayesA prior specification and hyperparameter tuning, tailored for researchers, scientists, and drug development professionals working with genomic prediction, biomarker identification, and complex trait analysis. We cover foundational concepts of the scaled inverse chi-squared prior and variance parameter, methodological steps for implementation in genomic selection pipelines, troubleshooting strategies for convergence and prior-data conflict, and validation techniques comparing BayesA to alternative Bayesian and frequentist models. The goal is to equip practitioners with the knowledge to robustly apply BayesA, enhancing the reliability of genetic parameter estimates and predictive models in biomedical research.

Understanding BayesA Priors: Core Concepts and Rationale for Genomic Analysis

The BayesA model represents a critical methodology in genomic selection and complex trait analysis, bridging the gap between traditional linear mixed models and modern Bayesian shrinkage estimation. Within the broader thesis on prior specification and parameter tuning, BayesA serves as a foundational case study due to its use of a scaled-t prior, which induces a specific form of variable-specific shrinkage on marker effects. This approach addresses the "small n, large p" problem common in genomic prediction, where the number of markers (p) far exceeds the number of observations (n).

Core Model Specification & Quantitative Comparison

Table 1: Comparison of Key Bayesian Shrinkage Models for Genomic Prediction

| Model | Prior on Marker Effects (β) | Shrinkage Type | Key Hyperparameters | Thesis Relevance for Prior Tuning |

|---|---|---|---|---|

| BayesA | i.i.d. scaled-t (or Gaussian with marker-specific variances) | Marker-specific, heavy-tailed | Degrees of freedom (ν), scale (S²) | Central study: tuning ν and S² drastically changes sparsity and predictive performance. |

| BayesB | Mixture: point mass at zero + scaled-t | Marker-specific, allows exact zero | π (proportion of nonzero effects), ν, S² | Contrast with BayesA: π adds a layer of tuning complexity for variable selection. |

| BayesC | Mixture: point mass at zero + Gaussian | Marker-specific, less heavy-tailed | π, common variance (σ²β) | Tuning π and σ²β explores the impact of prior shape (Gaussian vs. t). |

| RR-BLUP | i.i.d. Gaussian (equivalent to BayesC with π=1) | Uniform shrinkage | Common variance (σ²β) | Serves as a uniform-shrinkage baseline for evaluating tuning impact in BayesA. |

| Bayesian Lasso | Double Exponential (Laplace) | Marker-specific, encourages sparsity | Regularization parameter (λ) | Contrasts shrinkage shape induced by different priors (exponential vs. t-tail). |

Table 2: Typical Hyperparameter Ranges & Impact in BayesA (Empirical Guidance)

| Hyperparameter | Common Explored Range | Impact of Increasing Value | Suggested Tuning Method within Thesis |

|---|---|---|---|

| Degrees of Freedom (ν) | 3 to 10 | The prior on effects approaches a Gaussian (lighter tails), so large effects are shrunk more strongly and shrinkage becomes more uniform across markers. | Grid search via cross-validation. Use informative priors if external biological knowledge exists. |

| Scale (S²) | Estimated from data (e.g., Var(y)*0.1/p) | Increases the prior variance of effects, resulting in less overall shrinkage. | Marginal Maximum Likelihood or Empirical Bayes methods for initializing MCMC. |

| Chain Iterations | 20,000 to 100,000+ | Reduces Monte Carlo error and aids convergence but increases computational cost. | Monitor Gelman-Rubin diagnostic (R̂ < 1.05) and effective sample size (>1000). |

| Burn-in | 2,000 to 10,000 | Discards non-stationary samples. | Visual inspection of trace plots and Heidelberger-Welch stationarity test. |

Experimental Protocol: Implementing & Tuning BayesA for Genomic Prediction

Protocol 1: Standard BayesA Analysis Workflow for a Genomic Selection Study

Objective: To estimate marker effects and derive genomic estimated breeding values (GEBVs) for a target trait using the BayesA model, with systematic evaluation of prior hyperparameters.

Materials: See "The Scientist's Toolkit" below.

Procedure:

1. Data Preparation:
   a. Genotypic Data: Code markers as 0, 1, 2 (homozygous reference, heterozygous, homozygous alternate). Apply quality control: remove markers with call rate < 0.95, minor allele frequency < 0.05, or severe deviation from Hardy-Weinberg equilibrium (p < 1e-6).
   b. Phenotypic Data: Correct for fixed effects (e.g., year, location, herd) using a linear model. Standardize phenotypes to have mean zero and unit variance.
   c. Data Partitioning: Split data into training (TRN, ~80%) and testing (TST, ~20%) sets, ensuring family structure is represented in both.

2. Model Specification:
   a. Define the model: y = 1μ + Xβ + e, where y is the vector of corrected phenotypes, μ is the overall mean, X is the genotype matrix, β is the vector of marker effects, and e is the residual error.
   b. Specify priors:
      - μ ~ N(0, 10^6)
      - β_j | σ²_j ~ N(0, σ²_j) for marker j
      - σ²_j | ν, S² ~ Inv-χ²(ν, S²) (integrating out σ²_j gives the scaled-t prior on β_j)
      - e ~ N(0, Iσ²_e)
      - σ²_e ~ Inv-χ²(df_e, S²_e)

3. Hyperparameter Tuning & MCMC Execution:
   a. Perform a preliminary grid search on a subset of TRN data using k-fold cross-validation (k=5). Test ν ∈ {3, 5, 7} and S² based on expected genetic variance.
   b. Choose the (ν, S²) combination yielding the highest predictive correlation in cross-validation.
   c. Run the MCMC chain for the full TRN set using tuned parameters.
      - Chain length: 50,000
      - Burn-in: 5,000
      - Thinning interval: 5
   d. Monitor Convergence: Save trace plots for μ and a subset of β_j. Calculate the R̂ statistic for key parameters across multiple chains.

4. Posterior Analysis & Prediction:
   a. Calculate the posterior mean of β_j from post-burn-in samples.
   b. Predict TST set: GEBV_tst = X_tst β̂, where β̂ is the posterior mean.
   c. Evaluate Performance: Calculate the correlation between GEBV_tst and observed y_tst (predictive accuracy) and the mean squared error.

5. Sensitivity Analysis (For Thesis):
   a. Repeat step 3 with different (ν, S²) settings from the grid.
   b. Compare posterior distributions of a subset of large, medium, and small effects β_j across different priors to visualize differential shrinkage.
   c. Compare overall predictive accuracy and bias across prior settings.
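The model and priors of Protocol 1 can be sketched as a single-site Gibbs sampler. The version below is a minimal illustration in pure Python, not the implementation used by BGLR or any other package: the function names, the default hyperparameters, the flat prior on μ (standing in for N(0, 10^6)), and the simulated data are all assumptions made for the example.

```python
import math
import random


def scaled_inv_chi2(rng, df, scale):
    """Draw from Scale-inv-chi2(df, scale) as df * scale / chi2_df."""
    chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square with df degrees of freedom
    return df * scale / chi2


def bayes_a_gibbs(X, y, nu=5.0, S2=0.01, df_e=5.0, S2_e=0.5,
                  n_iter=600, burn_in=200, seed=1):
    """Single-site Gibbs sampler for y = mu + X beta + e with BayesA priors.

    X is a list of n rows of length p, y a list of length n.
    Returns the posterior means of mu and beta.
    """
    rng = random.Random(seed)
    n, p = len(y), len(X[0])
    cols = [[X[i][j] for i in range(n)] for j in range(p)]
    xtx = [sum(v * v for v in col) for col in cols]
    mu = sum(y) / n
    beta = [0.0] * p
    var_b = [S2] * p
    var_e = S2_e
    resid = [yi - mu for yi in y]  # current residual e = y - mu - X beta
    mu_sum, beta_sum, kept = 0.0, [0.0] * p, 0

    for it in range(n_iter):
        # Intercept under a flat prior: N(mean of (resid + mu), var_e / n).
        new_mu = rng.gauss(mu + sum(resid) / n, math.sqrt(var_e / n))
        resid = [r - (new_mu - mu) for r in resid]
        mu = new_mu

        for j in range(p):
            col = cols[j]
            # Right-hand side uses the residual with marker j added back in.
            rhs = sum(c * r for c, r in zip(col, resid)) + xtx[j] * beta[j]
            lhs = xtx[j] + var_e / var_b[j]
            new_b = rng.gauss(rhs / lhs, math.sqrt(var_e / lhs))
            delta = new_b - beta[j]
            resid = [r - c * delta for r, c in zip(resid, col)]
            beta[j] = new_b
            # Conjugate update of the marker-specific variance.
            var_b[j] = scaled_inv_chi2(rng, nu + 1.0,
                                       (nu * S2 + new_b ** 2) / (nu + 1.0))

        sse = sum(r * r for r in resid)
        var_e = scaled_inv_chi2(rng, df_e + n, (df_e * S2_e + sse) / (df_e + n))

        if it >= burn_in:
            kept += 1
            mu_sum += mu
            beta_sum = [s + b for s, b in zip(beta_sum, beta)]

    return mu_sum / kept, [s / kept for s in beta_sum]


# Small simulated example (hypothetical data, not from any study).
_rng = random.Random(42)
n, p = 100, 6
true_beta = [1.0, 0.0, 0.0, -0.8, 0.0, 0.0]
raw = [[_rng.choice((0, 1, 1, 2)) for _ in range(p)] for _ in range(n)]
col_means = [sum(row[j] for row in raw) / n for j in range(p)]
X = [[row[j] - col_means[j] for j in range(p)] for row in raw]  # centred 0/1/2 codes
y = [2.0 + sum(x * b for x, b in zip(row, true_beta)) + _rng.gauss(0.0, 0.5)
     for row in X]
mu_hat, beta_hat = bayes_a_gibbs(X, y)
```

Genotypes are centred in the example only to improve mixing of the intercept. For real marker panels a vectorised implementation (for instance with NumPy) or an established package would be the practical choice, and chain length and burn-in should follow the protocol rather than the short defaults used here.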

Protocol 2: Sensitivity Analysis for Prior Specification (Thesis Core)

Objective: To quantitatively assess the impact of the degrees of freedom (ν) and scale (S²) parameters on model sparsity, shrinkage behavior, and prediction accuracy.

Procedure:

- Define a full factorial design over hyperparameter space: ν ∈ {3, 5, 7, 10} and S² ∈ {0.001, 0.01, 0.1, 1} * Vy/p, where Vy is phenotypic variance.

- For each (ν, S²) combination, run BayesA MCMC as in Protocol 1, Step 3.

- For each run, calculate: a. Effective Number of Non-zero Effects: Σj (1 - τj), where τj is the shrinkage factor for marker j. b. Shrinkage Profile: Plot posterior mean of βj against the ordinary least squares estimate (or SNP effect from a BLUP model). c. Predictive Performance: Correlation and bias in the TST set.

- Summarize results in a 4x4 heatmap table for visual comparison of accuracy across the prior space.
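The factorial design in this protocol is easy to generate programmatically. The sketch below builds the 16 (ν, S²) combinations and reshapes a flat list of per-run results into the 4x4 layout used for the heatmap; the helper names and the example values of Vy and p are illustrative assumptions.

```python
from itertools import product

NUS = (3, 5, 7, 10)
SCALE_MULTIPLIERS = (0.001, 0.01, 0.1, 1.0)


def build_prior_grid(v_y, p):
    """Return all (nu, S2) pairs with S2 = multiplier * v_y / p."""
    return [(nu, m * v_y / p) for nu, m in product(NUS, SCALE_MULTIPLIERS)]


def to_matrix(values):
    """Reshape results given in grid order into rows (nu) by columns (S2)."""
    k = len(SCALE_MULTIPLIERS)
    return [values[r * k:(r + 1) * k] for r in range(len(NUS))]


grid = build_prior_grid(v_y=1.0, p=10_000)
# Placeholder accuracies; each value would come from one BayesA run.
accuracy_matrix = to_matrix([float("nan")] * len(grid))
```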

Visualizations

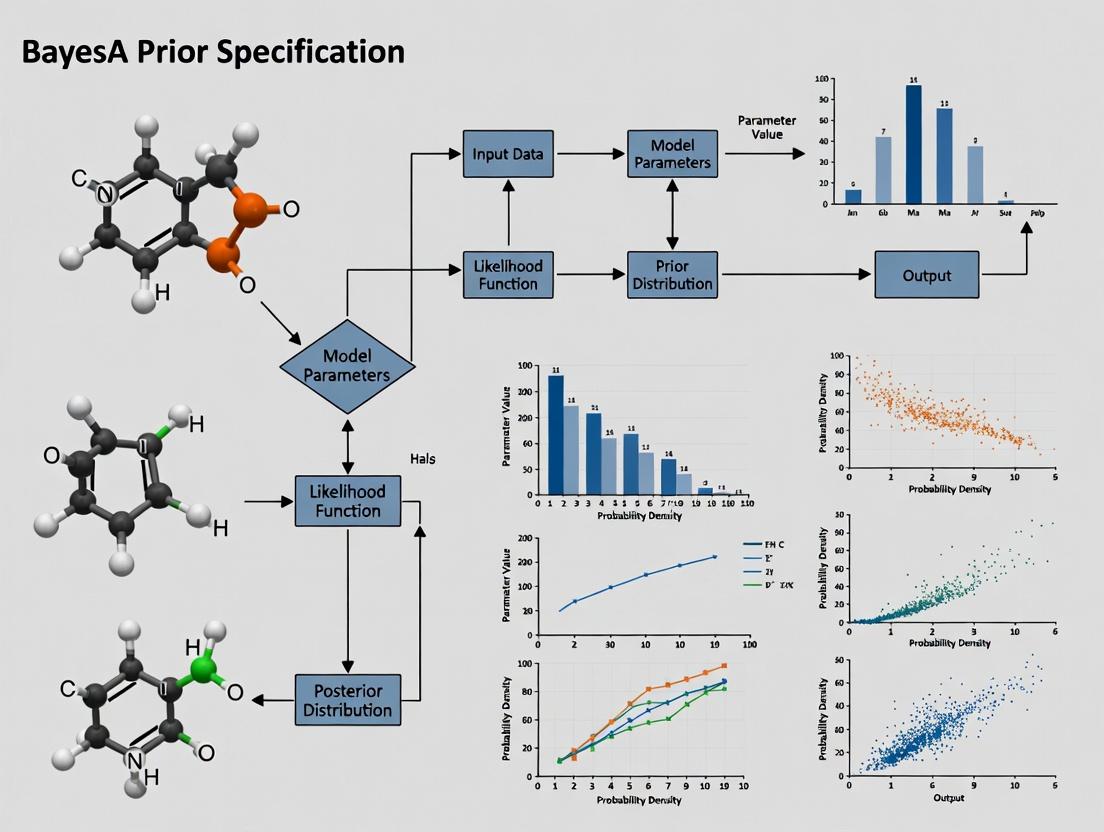

Title: BayesA Implementation & Tuning Workflow

Title: BayesA Graphical Model & Prior Hierarchy

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software for BayesA Analysis

| Item / Solution | Category | Function & Relevance |

|---|---|---|

| Genotyping Array Data | Input Data | High-density SNP genotypes (e.g., Illumina BovineHD 777K, PorcineGGP50K) form the marker matrix X. Quality is paramount. |

| Corrected Phenotypic Records | Input Data | Trait measurements adjusted for systematic environmental effects. The response vector y. |

| R Statistical Environment | Software Core | Primary platform for data manipulation, statistical analysis, and visualization. |

| BGLR R Package | Key Software | Implements BayesA (and other models) efficiently using Gibbs sampling. Essential for applied research. |

| MCMCglmm R Package | Key Software | Flexible MCMC package for fitting models with animal and residual covariance structures; can implement BayesA. |

| JAGS / Stan | Key Software | Probabilistic programming languages for custom Bayesian model specification (e.g., for novel prior testing in thesis). |

| High-Performance Computing (HPC) Cluster | Infrastructure | MCMC for tens of thousands of markers requires significant memory and multi-core processing for chain replication and sensitivity analysis. |

| Gelman-Rubin Diagnostic (R̂) | Diagnostic Tool | Convergence metric comparing within- and between-chain variance. Target R̂ < 1.05 for all key parameters. |

| Effective Sample Size (ESS) | Diagnostic Tool | Estimates the number of effectively independent MCMC samples. ESS > 1000 is a common target for reliable posterior summaries. |

| Inv-χ²(ν, S²) Distribution | Statistical Reagent | The core prior distribution for marker-specific variances in BayesA, inducing the scaled-t on effects. Tuning ν and S² is the thesis focus. |

Within the broader thesis research on BayesA prior specification and parameter tuning for genomic selection, the choice of prior for marker-specific variances is critical. The BayesA model, a cornerstone in genomic prediction, assigns each marker a separate variance with a scaled inverse chi-squared prior. This prior is conjugate to the normal distribution, facilitating Gibbs sampling, and is defined by two hyperparameters: degrees of freedom (ν) and scale (S²). This application note details the protocol for implementing and tuning this prior, providing researchers with a framework for robust parameter specification in drug development and complex trait analysis.

Mathematical Specification & Parameter Tuning

The scaled inverse chi-squared distribution, denoted as χ⁻²(ν, S²), serves as the prior for the marker-specific variance σ²ᵢ. Its probability density function is: p(σ²ᵢ | ν, S²) ∝ (σ²ᵢ)^{-(ν/2+1)} exp(-νS² / (2σ²ᵢ))

The hyperparameters ν and S² control the shape and scale of the prior, profoundly influencing the degree of shrinkage and the model's ability to handle varying genetic architectures.
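The link between this prior and the scaled-t distribution on marker effects can be made explicit by integrating the variance out. The short derivation below uses the density above together with a normal distribution for βᵢ given σ²ᵢ, and drops normalizing constants throughout.

```latex
\begin{aligned}
p(\beta_i \mid \nu, S^2)
  &= \int_0^\infty N(\beta_i \mid 0, \sigma_i^2)\,
     p(\sigma_i^2 \mid \nu, S^2)\, d\sigma_i^2 \\
  &\propto \int_0^\infty (\sigma_i^2)^{-\left(\frac{\nu+1}{2}+1\right)}
     \exp\!\left(-\frac{\nu S^2 + \beta_i^2}{2\sigma_i^2}\right) d\sigma_i^2 \\
  &\propto \left(\nu S^2 + \beta_i^2\right)^{-\frac{\nu+1}{2}}
   \;\propto\; \left(1 + \frac{\beta_i^2}{\nu S^2}\right)^{-\frac{\nu+1}{2}}
\end{aligned}
```

The last expression is the kernel of a Student-t distribution with ν degrees of freedom, location 0 and scale S, so Var(βᵢ) = νS²/(ν − 2) whenever ν > 2.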

Table 1: Hyperparameter Interpretation and Tuning Guidelines

| Hyperparameter | Symbol | Interpretation | Typical Range | Effect of Increasing Value |

|---|---|---|---|---|

| Degrees of Freedom | ν | Prior belief about the "informativeness" or weight of the prior. | 4.2 - 10 | Increased shrinkage of marker effects; variances are pulled more strongly towards the prior mode. |

| Scale Parameter | S² | Prior belief about the typical value of the marker variance. | Derived from expected heritability (h²) | Larger S² allows for larger marker effect variances a priori. |

Protocol 2.1: Deriving Default Hyperparameters from Expected Heritability

- Define Genomic Heritability: Specify the expected proportion of phenotypic variance (σ²ᵧ) explained by markers, h².

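- Calculate Average Marker Variance: Approximate the expected average marker variance as \( \bar{S}^2 = \frac{h^2 \sigma_y^2}{(1 - h^2)\, \nu M} \), where M is the total number of markers. For a known σ²ᵧ, use \( S^2 = \frac{h^2 \sigma_y^2}{M} \). Both expressions treat markers as standardized to unit variance; for genotypes coded 0, 1, 2, M is replaced by Σⱼ 2pⱼ(1 − pⱼ), where pⱼ is the allele frequency of marker j.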

- Set Degrees of Freedom: A common default is ν = 5. Values between 4.2 and 6 give a weakly informative but proper prior. The prior mean of σ²ᵢ is finite for ν > 2 and its variance for ν > 4, which is why values just above 4 are used as the lower bound.

- Validate: Use prior predictive checks to ensure the implied distribution of marker effects is biologically plausible.
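The steps above reduce to a few lines of arithmetic. The sketch below computes S² from the simple rule S² = h²σ²ᵧ/M and then reports the total marker variance that the prior implies in expectation, M·νS²/(ν − 2). It assumes markers standardized to unit variance, and the function names are hypothetical.

```python
def default_scale(h2, var_y, n_markers):
    """S^2 = h^2 * var_y / M, assuming markers standardized to unit variance."""
    if not 0.0 < h2 < 1.0:
        raise ValueError("h2 must lie strictly between 0 and 1")
    return h2 * var_y / n_markers


def implied_genetic_variance(nu, S2, n_markers):
    """Prior expectation of the summed marker variances: M * nu * S2 / (nu - 2)."""
    if nu <= 2:
        raise ValueError("the prior mean of the variance is finite only for nu > 2")
    return n_markers * nu * S2 / (nu - 2.0)


S2 = default_scale(h2=0.5, var_y=1.0, n_markers=50_000)
implied = implied_genetic_variance(nu=5.0, S2=S2, n_markers=50_000)
# Rescaling S2 by (nu - 2) / nu makes the prior mean match h2 * var_y exactly.
S2_mean_matched = S2 * (5.0 - 2.0) / 5.0
```

Because the prior mean of σ²ᵢ is νS²/(ν − 2) rather than S², the simple rule implies more genetic variance than h²σ²ᵧ at small ν. Whether to apply the (ν − 2)/ν correction is a modelling choice that the prior predictive check can inform.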

Implementation Protocol for Gibbs Sampling

Protocol 3.1: Gibbs Sampling Step for Marker Variance in BayesA

Objective: Sample the marker-specific variance σ²ᵢ given its effect estimate (βᵢ) and hyperparameters ν and S². Input: Current marker effect βᵢ, hyperparameters ν and S², design matrix X. Output: A new sample for σ²ᵢ.

- Compute Residual Sum of Squares for the Marker: For a model where βᵢ is sampled individually, this simplifies to \( RSS_i = \beta_i^2 \).

- Update Parameters for Inverse Chi-Squared:

  - Updated degrees of freedom: \( \nu^* = \nu + 1 \)

  - Updated scale: \( S^{*2} = (\nu S^2 + RSS_i) / \nu^* \)

- Sample New Variance: Draw the new variance from the scaled inverse chi-squared distribution: \( \sigma^2_i \sim \chi^{-2}(\nu^*, S^{*2}) \).

- Proceed: Use the sampled σ²ᵢ to update the conditional distribution for βᵢ in the next Gibbs step.
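The conjugate update in this protocol can be written as one small function. The sketch below draws σ²ᵢ as ν*·S*²/χ²(ν*), using the standard library's gamma generator for the chi-square variate; the function name and the example values are assumptions for illustration.

```python
import random


def sample_marker_variance(beta_i, nu, S2, rng):
    """One Gibbs draw of sigma2_i given beta_i under a Scale-inv-chi2(nu, S2) prior."""
    nu_star = nu + 1.0
    S2_star = (nu * S2 + beta_i ** 2) / nu_star
    chi2 = rng.gammavariate(nu_star / 2.0, 2.0)  # chi-square with nu_star df
    return nu_star * S2_star / chi2


rng = random.Random(7)
draw = sample_marker_variance(beta_i=0.3, nu=5.0, S2=0.01, rng=rng)
```

A quick sanity check is that the average of many draws approaches the analytic mean of the full conditional, ν*·S*²/(ν* − 2) = (νS² + βᵢ²)/(ν − 1).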

Table 2: Comparison of Prior Distributions for Marker Variances

| Prior | Distribution for σ²ᵢ | Key Hyperparameters | Conjugacy | Shrinkage Behavior | Use Case in Genomics |

|---|---|---|---|---|---|

| Scaled Inverse Chi-Squared (BayesA) | χ⁻²(ν, S²) | ν (df), S² (scale) | Yes (with Normal) | Adaptive, marker-specific. Heavy tails. | Standard for traits with major and minor QTLs. |

| Bayesian LASSO (BL) | Exponential(λ²) | λ (regularization) | No | More uniform shrinkage. Less heavy tails. | Dense architecture, many small effects. |

| BayesCπ | Mixture: π at 0, (1-π) at χ⁻² | π (prop. zero), ν, S² | Yes | Selective, some effects set to zero. | Variable selection, sparse architectures. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Toolkit for BayesA Implementation

| Item / Reagent | Function / Purpose | Example / Note |

|---|---|---|

| Gibbs Sampler Software | Core engine for Bayesian inference. | BGLR (R), JWAS (Julia), GIBBS3F90 (Fortran). |

| Genotype-Phenotype Data Matrix | Standardized input data. | SNPs coded as 0,1,2; phenotypes mean-centered. |

| Hyperparameter Tuning Script | Automates grid search for ν and S². | Custom R/Python script using cross-validation. |

| High-Performance Computing (HPC) Cluster | Enables analysis of large-scale genomic data. | Necessary for >10,000 individuals × >50,000 SNPs. |

| Prior Predictive Check Script | Visualizes the implied distribution of marker effects before observing data. | Plots \( p(\beta) = \int p(\beta \mid \sigma^2)\, p(\sigma^2)\, d\sigma^2 \). |

| Convergence Diagnostic Tool | Assesses MCMC chain convergence. | coda R package (Gelman-Rubin statistic, trace plots). |

Visualization of Workflows

Title: BayesA Gibbs Sampling Workflow with Inverse χ² Prior

Title: Conjugate Update for Marker Variance

Title: Effect of Hyperparameters ν and S² on Prior

Within the broader thesis research on BayesA prior specification and parameter tuning, the hyperparameters ν (degrees of freedom) and S² (scale) are critical for defining the scaled inverse-chi-squared prior. This prior is central to modeling genetic variance components in genomic prediction models used in pharmaceutical trait discovery. Proper interpretation and tuning of (ν, S²) directly influence the shrinkage of marker effects, the model's adaptability to sparse vs. polygenic architectures, and ultimately, the predictive accuracy for complex traits relevant to drug development.

Core Interpretive Framework

Mathematical Definition

In the BayesA model, the prior for the genetic variance σ² for each marker is a scaled inverse-chi-squared distribution: σ² ~ Scale-inv-χ²(ν, S²). The hyperparameters are interpreted as:

- ν (degrees of freedom): Controls the "peakiness" or informativeness of the prior. Low ν (<5) implies a heavy-tailed prior, allowing for large marker effects. High ν (>20) creates a more restrictive, normal-like prior.

- S² (scale): Represents a prior location or typical value for the genetic variance. It anchors the distribution.

Table 1: Impact of Varying ν and S² on Prior Distribution Characteristics

| Hyperparameter Setting | Prior Shape | Effective Shrinkage | Suitability for Genetic Architecture | Risk of Mis-specification |

|---|---|---|---|---|

| Low ν (e.g., 2-4), Low S² | Very heavy-tailed, high density near zero. | Weak; allows large effects. | Sparse architectures (few QTLs). | Overfitting of noise; unstable estimates. |

| Low ν (e.g., 2-4), High S² | Heavy-tailed, shifted from zero. | Very weak; strongly favors large effects. | Very sparse architectures with large-effect loci. | Severe overfitting. |

| High ν (e.g., 10-20), Low S² | Concentrated near zero, light-tailed. | Strong; severe shrinkage of all effects. | Highly polygenic with tiny effects. | Overshrinkage of true signals. |

| High ν (e.g., 10-20), High S² | Concentrated away from zero. | Moderate, but all effects shrunk to similar magnitude. | Homogeneous polygenic architecture. | Misses true large-effect loci. |
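The qualitative contrasts in this table can be checked by simulating from the prior. The sketch below draws marker effects hierarchically (σ² from the scaled inverse chi-squared prior, then β from N(0, σ²)) and estimates how much prior mass lies beyond a threshold. The function names, the threshold of four prior scale units, and the number of draws are illustrative assumptions.

```python
import math
import random


def draw_marker_effect(rng, nu, S2):
    """Draw beta from the BayesA prior: sigma2 ~ Scale-inv-chi2(nu, S2), beta ~ N(0, sigma2)."""
    sigma2 = nu * S2 / rng.gammavariate(nu / 2.0, 2.0)
    return rng.gauss(0.0, math.sqrt(sigma2))


def tail_mass(nu, S2, threshold, n_draws=50_000, seed=0):
    """Monte Carlo estimate of P(|beta| > threshold) under the prior."""
    rng = random.Random(seed)
    hits = sum(abs(draw_marker_effect(rng, nu, S2)) > threshold
               for _ in range(n_draws))
    return hits / n_draws


# Compare a heavy-tailed and a near-Gaussian setting at the same scale.
heavy = tail_mass(nu=3.0, S2=1.0, threshold=4.0)
light = tail_mass(nu=20.0, S2=1.0, threshold=4.0)
```

With the scale held fixed, the low-ν setting places far more prior mass on large effects, which is the behaviour the first two rows of the table describe.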

Experimental Protocols for Hyperparameter Tuning

Protocol 3.1: Empirical Bayes Estimation from Historical Data

Objective: Derive data-informed (ν, S²) values from a pilot genomic dataset. Materials: Genotype matrix (X), Phenotype vector (y) for a relevant historical cohort. Procedure:

- Perform a preliminary genomic BLUP or RR-BLUP analysis to obtain initial marker effect estimates (â).

- Calculate the sample variance of these effects: Vâ = Var(â).

- Method of Moments Estimation:

- Set the prior expectation E(σ²) = S² * ν / (ν - 2) equal to Vâ.

- Set the prior variance Var(σ²) = [2ν² (S²)²] / [(ν-2)²(ν-4)] equal to the sample variance of the squared effects. This requires ν > 4; dividing by the squared prior mean gives Var(σ²) / E(σ²)² = 2 / (ν - 4), so ν can be solved for directly.

- Solve for ν and S². Often, ν is fixed at a low value (4-6), and S² is solved as: S² = Vâ * (ν - 2) / ν.

- Validate by running BayesA across a grid of values around the estimated point and assessing cross-validation predictability.
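The moment-matching step can be sketched as follows. Given the two prior moments stated in the protocol, Var(σ²) = 2·E(σ²)²/(ν − 4), so ν follows directly from the ratio of the target moments and S² from the mean. The function names are hypothetical, and the inputs are whatever estimates of the mean and variance of marker variances the pilot analysis provides.

```python
def scale_for_fixed_nu(v_a, nu):
    """S^2 = V_a * (nu - 2) / nu, for a pre-chosen nu > 2."""
    if nu <= 2:
        raise ValueError("nu must exceed 2 for the prior mean to exist")
    return v_a * (nu - 2.0) / nu


def moments_to_hyperparameters(mean_var, var_var):
    """Solve E = nu*S2/(nu-2) and Var = 2*E^2/(nu-4) for (nu, S2)."""
    if mean_var <= 0 or var_var <= 0:
        raise ValueError("both target moments must be positive")
    nu = 4.0 + 2.0 * mean_var ** 2 / var_var
    S2 = mean_var * (nu - 2.0) / nu
    return nu, S2


# Round trip: nu = 6 and S2 = 0.02 imply mean 0.03 and variance 0.0009.
nu_hat, S2_hat = moments_to_hyperparameters(mean_var=0.03, var_var=0.0009)
S2_fixed = scale_for_fixed_nu(v_a=0.03, nu=5.0)
```

Moment estimates from shrunken BLUP effects tend to be noisy, which is one reason fixing ν at a low value and solving only for S² is the more common route.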

Protocol 3.2: Cross-Validation Grid Search for Predictive Optimization

Objective: Find the (ν, S²) combination that maximizes predictive correlation in a target population. Materials: Training population (Genotypes Xtrain, Phenotypes ytrain); Validation set (Xval, yval). Procedure:

- Define a grid: ν ∈ {3, 4, 5, 6, 8, 10} and S² ∈ {0.1Vâ, 0.5Vâ, Vâ, 2Vâ, 5Vâ}, where Vâ is an initial variance estimate.

- For each (ν, S²) pair: a. Implement the BayesA Gibbs sampler (or an efficient approximation) on (Xtrain, ytrain) using the specified prior. b. Sample from the posterior distribution of marker effects. c. Calculate genomic estimated breeding values (GEBVs) for the validation set: GEBVval = Xval * mean(posterior effects). d. Compute the predictive accuracy as the correlation between GEBVval and yval.

- Select the (ν, S²) pair yielding the highest predictive accuracy.

- Perform sensitivity analysis by examining the stability of predictions in adjacent grid points.
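The grid search in this protocol amounts to a loop over (ν, S²) pairs with a fixed training and validation split. The sketch below keeps the model abstract: `fit_predict` is a hypothetical callable standing in for whichever BayesA implementation is used, and it is expected to return predictions for the validation genotypes.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (z - mean_b) for x, z in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((z - mean_b) ** 2 for z in b)
    return cov / math.sqrt(var_a * var_b)


def grid_search(fit_predict, grid, X_train, y_train, X_val, y_val):
    """Score every (nu, S2) pair by validation correlation; return the best pair and all scores.

    fit_predict(X_train, y_train, X_val, nu, S2) must return predictions for X_val.
    """
    results = {}
    for nu, S2 in grid:
        predictions = fit_predict(X_train, y_train, X_val, nu, S2)
        results[(nu, S2)] = pearson(predictions, y_val)
    best = max(results, key=results.get)
    return best, results
```

Keeping every score in `results`, rather than only the winner, is what makes the final sensitivity step possible: adjacent grid points can be compared directly.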

Visualization of Relationships

Title: Causal Impact of ν and S² on Prediction

Title: Hyperparameter Tuning Experimental Workflow

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for BayesA Hyperparameter Research

| Item/Category | Function/Explanation in Hyperparameter Research |

|---|---|

| Genomic Data Suite | High-density SNP array or whole-genome sequencing data. Quality control (MAF, HWE, call rate) is essential for stable variance estimation. |

| Phenotypic Database | Precisely measured, pre-adjusted trait data for historical and validation cohorts. Critical for estimating meaningful S² scale. |

| Bayesian MCMC Software | e.g., BLR, BGGE, STAN, or custom R/Python Gibbs samplers. Must allow explicit specification of the scaled inverse-chi-squared prior parameters. |

| High-Performance Computing (HPC) Cluster | Grid search and MCMC sampling are computationally intensive, especially for large (n>10k) drug discovery cohorts. |

| Cross-Validation Pipeline Scripts | Automated scripts for k-fold splitting, model training, prediction, and accuracy calculation across hyperparameter grids. |

| Visualization & Diagnostics Library | Tools (e.g., ggplot2, bayesplot) to trace prior/posterior distributions, monitor MCMC convergence, and plot accuracy surfaces over (ν, S²) grids. |

Within the broader thesis on "Bayesian Prior Specification and Parameter Tuning for Genomic Prediction in Complex Traits," this document serves as foundational application notes. The research thesis investigates the robustness and tuning sensitivity of BayesA, a canonical Bayesian alphabet method, compared to its derivatives (e.g., BayesB, BayesC, LASSO) in pharmacogenomic and quantitative trait locus (QTL) mapping. This protocol details its implementation, core assumptions, and decision framework for application.

Key Assumptions of BayesA

BayesA, introduced by Meuwissen et al. (2001), operates under specific, rigid assumptions:

- Effect Size Distribution: Each marker's effect is assumed to follow a univariate, marker-specific scaled-t prior distribution, which is equivalent to a scale mixture of normals with scaled inverse chi-squared (inverse-gamma) variances.

- Non-Infinitesimal Genetics: It assumes a non-infinitesimal genetic architecture, where many markers have negligible (though not exactly zero) effects and a few loci have larger effects.

- Independence: Marker effects are a priori independent, conditional on their variances.

- Heavy-Tailed Priors: The t-distribution prior is heavy-tailed, allowing for large effect sizes without excessively shrinking them, unlike Gaussian priors.

Comparative Analysis: When to Use BayesA

BayesA is selected based on the suspected trait architecture and analytical goal. The table below contrasts it with other common Bayesian alphabets.

Table 1: Comparative Overview of Key Bayesian Alphabet Methods

| Method | Prior on Marker Effects (β) | Key Assumption | Best Suited For | Shrinkage Behavior |

|---|---|---|---|---|

| BayesA | Scaled-t (normal-inverse-gamma) | All markers have non-zero, but variable, variance. A few large effects. | Traits with major QTLs (e.g., drug response biomarkers, disease resistance). | Variable; strong shrinkage for small effects, less for large. |

| BayesB | Mixture: Spike-Slab + Scaled-t | A proportion (π) of markers have zero effect; others follow scaled-t. | Traits with sparse architecture (many zero-effect markers). | More aggressive; forces many effects to exactly zero. |

| BayesC | Mixture: Spike-Slab + Normal | A proportion (π) of markers have zero effect; others follow normal. | Balanced infinitesimal & major QTL scenarios. | Similar to BayesB but with Gaussian shrinkage for non-zero. |

| Bayesian LASSO | Double Exponential (Laplace) | All effects are non-zero, drawn from a distribution promoting sparsity. | Polygenic traits with many small effects. | Continuous, deterministic shrinkage towards zero. |

| RR-BLUP | Normal (Gaussian) | All markers have equal variance (infinitesimal model). | Highly polygenic traits (e.g., yield, height). | Uniform shrinkage proportional to effect size. |

Decision Protocol: When to Choose BayesA

- Use BayesA when: Preliminary evidence (e.g., GWAS) suggests a handful of strong candidate loci amidst background noise. It is well suited to QTL mapping and discovery where you wish to estimate the distribution of effect sizes without forcing exact sparsity.

- Choose an alternative when:

- BayesB/BayesC: You have strong prior belief that the genetic architecture is truly sparse (many markers are irrelevant).

- Bayesian LASSO/RR-BLUP: The trait is highly polygenic with no expected large-effect QTLs; the goal is genomic prediction accuracy, not locus discovery.

Experimental Protocol: Implementing BayesA for Pharmacogenomic Trait Analysis

A. Protocol Title: Genome-Enabled Prediction of Continuous Drug Response Phenotype Using BayesA.

B. Research Reagent Solutions & Essential Materials

| Item | Function/Description | Example Vendor/Catalog |

|---|---|---|

| Genotyping Array Data | High-density SNP genotypes for training and validation populations. | Illumina Infinium, Affymetrix Axiom |

| Phenotypic Data | Precise, quantitative measure of drug response (e.g., IC50, % inhibition). | In-house or public biorepository |

| High-Performance Computing (HPC) Cluster | Essential for running Markov Chain Monte Carlo (MCMC) sampling. | Local institutional cluster or cloud (AWS, GCP) |

| Bayesian Analysis Software | Implements the Gibbs sampler for BayesA. | BGLR R package, JWAS (Julia) |

| Quality Control (QC) Pipeline | Scripts for filtering SNPs and samples. | PLINK, vcftools |

| Convergence Diagnostic Tool | Assesses MCMC chain stability. | coda R package |

C. Detailed Methodology

- Data Preparation & QC:

- Filter samples for call rate >95%.

- Filter SNPs for call rate >98%, minor allele frequency (MAF) >0.01, and Hardy-Weinberg equilibrium p-value >1e-6.

- Impute missing genotypes using a standard algorithm (e.g., Beagle).

- Split data into training (80%) and validation (20%) sets, ensuring family structure is accounted for.

- Model Specification (BayesA):

  - Model: y = 1μ + Xβ + e

    - y: vector of phenotypic values.

    - μ: overall mean.

    - X: matrix of genotype covariates (coded 0, 1, 2).

    - β: vector of marker effects, with prior β_j | σ_j² ~ N(0, σ_j²).

    - σ_j²: marker-specific variance, with prior σ_j² ~ Inv-χ²(ν, S²).

    - e: residuals, e ~ N(0, Iσ_e²).

  - Hyperparameter Tuning (Thesis Focus):

    - ν (degrees of freedom) controls the heaviness of the t-distribution tails and S² (scale parameter) sets their scale. The thesis employs a grid search: ν ∈ {3, 5, 7, 10}, with S² estimated from the data.

    - A sensitivity analysis is run to evaluate prediction accuracy across hyperparameter combinations.

- MCMC Execution & Diagnostics:

- Run a Gibbs sampler for 50,000 iterations, discarding the first 10,000 as burn-in, thinning every 10 samples.

- Monitor convergence via trace plots and Gelman-Rubin diagnostic (target <1.05).

- Calculate Posterior Mean of marker effects and their variances.

- Validation & Output:

  - Apply estimated effects (β) to the genotype matrix of the validation set to generate predicted phenotypes.

  - Calculate the prediction accuracy as the Pearson correlation between observed (y_val) and predicted (ŷ_val) values.

  - Output a list of top candidate markers ranked by the magnitude of the posterior mean effect (or of the marker-specific variance). BayesA has no inclusion indicator, so it does not yield posterior inclusion probabilities.
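The validation and output steps can be sketched with three small helpers: one applies posterior mean effects to validation genotypes, one computes the mean squared error, and one ranks markers by absolute effect. The function names and the marker identifiers in the usage lines are hypothetical.

```python
def predict(X, mu, beta):
    """Predicted phenotypes: mu + X beta, for X given as a list of rows."""
    return [mu + sum(x * b for x, b in zip(row, beta)) for row in X]


def mean_squared_error(observed, predicted):
    """Average squared difference between observed and predicted values."""
    return sum((o - q) ** 2 for o, q in zip(observed, predicted)) / len(observed)


def top_markers(marker_ids, beta, k=10):
    """Return the k markers with the largest absolute posterior mean effect."""
    ranked = sorted(zip(marker_ids, beta), key=lambda pair: abs(pair[1]), reverse=True)
    return ranked[:k]


# Toy usage with made-up marker names and effects.
y_hat = predict([[1, 0], [0, 2]], mu=0.5, beta=[1.0, -0.25])
candidates = top_markers(["rs1", "rs2", "rs3"], [0.1, -0.9, 0.4], k=2)
```

Ranking by absolute posterior mean is only one possible criterion. Because single-marker effects are spread across SNPs in linkage disequilibrium, summing effects or variances over genomic windows is a common alternative.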

Visualizations

Diagram 1: BayesA Prior Specification and Gibbs Sampling Workflow

Diagram 2: Decision Logic for Selecting Bayesian Alphabet Methods

Within the broader thesis on advancing BayesA prior specification and parameter tuning, this document provides Application Notes and Protocols for explicitly connecting statistical hyperparameters to the underlying genetic architecture. The BayesA model, a cornerstone in Genomic Prediction, uses a scaled-t prior for marker effects, governed by hyperparameters (degrees of freedom ν and scale s²). This work operationalizes the biological interpretation of these parameters, enabling researchers to inform priors with domain knowledge about genetic architectures (e.g., number of QTLs, effect size distributions) for improved prediction and inference in plant, animal, and human disease genomics.

Core Quantitative Relationships: Hyperparameters to Genetic Architecture

Table 1: Mapping BayesA Hyperparameters to Biological Genetic Architecture

| Hyperparameter | Symbol | Statistical Role | Biological Interpretation | Typical Empirical Range (Genomics) |

|---|---|---|---|---|

| Degrees of Freedom | ν | Controls tail thickness of the t-distribution. Low ν = heavy tails (more large effects). | Inverse relationship to the proportion of loci with large effects. Low ν (<5) suggests an architecture with a small number of large-effect QTLs. High ν (>10) suggests many small-effect QTLs (approaches normal distribution). | 3 – 10 |

| Scale Parameter | s² | Scales the distribution of marker effects. | Related to the expected genetic variance per marker. Proportional to the total additive genetic variance (σ_g²) divided by the expected number of causal variants (π * M): s² ≈ σ_g² / (π * M), where M is the total number of markers and π is the proportion with non-zero effects. | 0.001 – 0.05 * σ_g² |

| Proportion of Non-zero Effects | π | Often implicitly defined by prior. | The fraction of markers in linkage disequilibrium (LD) with causal QTLs. Directly relates to the polygenicity of the trait. | 0.001 – 0.01 (for complex traits) |

Table 2: Protocol for Setting Hyperparameters Based on Preliminary Data

| Preliminary Analysis | Extracted Parameter | Informs Hyperparameter | Calculation / Heuristic |

|---|---|---|---|

| Genome-Wide Association Study (GWAS) | Number of genome-wide significant hits (N_sig). | ν (Degrees of Freedom) | Heuristic: ν ≈ M / (10 * N_sig), clamped between 3 and 10. As written, more hits give a lower ν (heavier tails), and few hits relative to M leave ν at the upper clamp of 10. |

| REML / Variance Component Estimation | Additive Genetic Variance (σ_g²). | s² (Scale Parameter) | s² = (σ_g² / M) * c, where c is an inflation factor (e.g., 2-5) to account for polygenicity. |

| Linkage Disequilibrium Score Regression | Heritability (h²), Polygenicity (π). | π, s² | π from slope; s² ≈ (h² * σ_p²) / (π * M), where σ_p² is phenotypic variance. |

Experimental Protocols

Protocol 1: Empirical Estimation of Hyperparameters from Pilot GWAS Data

Objective: To derive informed BayesA hyperparameters (ν, s²) from summary statistics of a preliminary GWAS.

Materials: GWAS summary statistics file, genetic relationship matrix (GRM), phenotype file.

Software: PLINK, GCTA, R/Python with custom scripts.

Procedure:

- Perform GWAS: Conduct a standard GWAS on your pilot dataset (n ≥ 1000 recommended for stability).

- Estimate Variance Components: Using GCTA, perform REML analysis to estimate additive genetic variance (σ_g²) and phenotypic variance (σ_p²).

- Count Significant Loci: Apply a lenient significance threshold (e.g., p < 1e-5) to count independent lead SNPs (N_sig).

- Calculate Hyperparameters:

  - Degrees of Freedom (ν): ν_est = max(3, min(10, Total_SNPs / (10 * N_sig))).

  - Scale (s²): s²_est = (σ_g² / Total_SNPs) * 3. (Inflation factor of 3 assumes a moderately polygenic architecture).

- Sensitivity Analysis: Run BayesA models with a grid of values around ν_est and s²_est (e.g., ν ∈ [ν_est - 2, ν_est + 2], s² ∈ [0.5 s²_est, 2 s²_est]) to evaluate prediction accuracy robustness.
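The hyperparameter calculations in this protocol translate directly into code. The sketch below implements the two stated formulas and a small grid around the estimates. The function names are hypothetical, and two choices are assumptions that the protocol does not specify: returning the upper clamp when no significant loci are found, and dropping grid values of ν at or below 2, where the prior mean of the marker variance does not exist.

```python
def nu_from_gwas(total_snps, n_sig, lower=3.0, upper=10.0):
    """nu_est = max(lower, min(upper, total_snps / (10 * n_sig)))."""
    if n_sig <= 0:
        return upper  # assumption: no hits is treated like "very few hits"
    return max(lower, min(upper, total_snps / (10.0 * n_sig)))


def scale_from_reml(sigma_g2, total_snps, inflation=3.0):
    """s2_est = (sigma_g2 / total_snps) * inflation."""
    return sigma_g2 / total_snps * inflation


def sensitivity_grid(nu_est, s2_est):
    """Candidate (nu, s2) pairs around the estimates, as in the final step."""
    nus = [nu for nu in (nu_est - 2.0, nu_est, nu_est + 2.0) if nu > 2.0]
    scales = [0.5 * s2_est, s2_est, 2.0 * s2_est]
    return [(nu, s2) for nu in nus for s2 in scales]


nu_est = nu_from_gwas(total_snps=50_000, n_sig=1_000)
s2_est = scale_from_reml(sigma_g2=0.4, total_snps=50_000)
grid = sensitivity_grid(nu_est, s2_est)
```

For dense panels the ratio Total_SNPs / (10 * N_sig) exceeds 10 unless N_sig is at least 1% of the marker count, so in practice this heuristic often returns the upper clamp. The sensitivity grid is therefore the more informative part of the procedure.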

Protocol 2: Cross-Validation for Hyperparameter Tuning in a Target Population

Objective: To optimize BayesA hyperparameters for genomic prediction accuracy via internal cross-validation. Materials: Genotype matrix, phenotype vector for the target population. Software: BGLR (R), JWAS (Julia), or custom MCMC/Gibbs sampler. Procedure:

- Define Training/Testing Sets: Perform a 5-fold cross-validation (CV) scheme, ensuring family structure is accounted for within splits.

- Define Hyperparameter Grid: Create a 2D grid of candidate values.

  - ν: [3, 4, 5, 7, 10]

  - s²: [0.0001, 0.001, 0.005, 0.01, 0.05] * σ_p² (phenotypic variance)

- Run CV: For each (ν, s²) pair, run the BayesA model on each of the 5 training sets, predict the held-out test set, and record the prediction accuracy (correlation between predicted and observed).

- Identify Optima: Calculate the mean prediction accuracy across folds for each hyperparameter pair. Select the pair yielding the highest mean accuracy.

- Final Model: Train the BayesA model on the complete dataset using the optimized hyperparameters for final genetic parameter estimation or breeding value prediction.
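One way to account for family structure in the 5-fold scheme is to keep each family entirely within a single fold, so that close relatives never appear on both sides of a split. The sketch below does this greedily. It is one interpretation of the requirement rather than the only valid design, and the function names and family labels are hypothetical.

```python
import random
from collections import defaultdict


def family_kfold(family_ids, k=5, seed=0):
    """Assign whole families to k folds of roughly equal size.

    Returns a list of k lists of sample indices.
    """
    rng = random.Random(seed)
    members = defaultdict(list)
    for index, family in enumerate(family_ids):
        members[family].append(index)
    families = list(members)
    rng.shuffle(families)  # random order among equally sized families
    families.sort(key=lambda f: len(members[f]), reverse=True)
    folds = [[] for _ in range(k)]
    for family in families:
        smallest = min(range(k), key=lambda f: len(folds[f]))
        folds[smallest].extend(members[family])
    return folds


def train_test_indices(folds, held_out):
    """Split indices into training and test sets for one held-out fold."""
    train = [i for g, fold in enumerate(folds) if g != held_out for i in fold]
    return train, list(folds[held_out])


family_ids = ["A"] * 4 + ["B"] * 3 + ["C"] * 3 + ["D"] * 2 + ["E"] * 2 + ["F"]
folds = family_kfold(family_ids, k=5, seed=1)
train_idx, test_idx = train_test_indices(folds, held_out=0)
```

Keeping families intact gives a more conservative accuracy estimate than random splitting. If the goal is instead prediction within known families, stratifying so that every family appears in each fold would be the corresponding design.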

Visualizations

Diagram 1: From Genetic Architecture to BayesA Prior Specification

Diagram 2: Protocol for Hyperparameter Tuning & Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Implementation

| Item / Reagent | Function / Purpose | Example / Specification |

|---|---|---|

| Genotyping Array | Provides genome-wide marker data (SNPs) for genetic analysis. | Illumina BovineHD (777k SNPs), Illumina Infinium Global Screening Array (GSA). |

| Whole Genome Sequencing (WGS) Data | Gold standard for discovering all genetic variants; used for imputation to create high-density datasets. | >20x coverage per sample for reliable variant calling. |

| PLINK 2.0 | Open-source toolset for whole-genome association and population-based linkage analyses. Used for quality control (QC) and basic GWAS. | plink2 --vcf --pheno --glm |

| GCTA (GREML) | Software for Genome-wide Complex Trait Analysis. Essential for estimating genetic variance components (σ_g²) via REML. | gcta64 --reml --grm --pheno --out |

| BGLR R Package | Comprehensive Bayesian regression package implementing BayesA and other priors. User-friendly for CV and hyperparameter testing. | BGLR function with ETA = list(list(model="BayesA", df0=ν, S0=s²)). |

| JWAS (Julia) | High-performance software for Bayesian genomic analysis. Efficient for large datasets and complex models. | using JWAS; set_covariate(); add_genotypes(); runMCMC() |

| High-Performance Computing (HPC) Cluster | Essential for running computationally intensive MCMC chains for BayesA on thousands of individuals and SNPs. | SLURM workload manager with >= 64GB RAM and multiple cores per job. |

Implementing BayesA: A Step-by-Step Guide to Prior Specification and Model Fitting

Within a research thesis focused on BayesA prior specification and parameter tuning, rigorous data pre-processing forms the critical foundation. Genomic prediction (GP) accuracy is highly dependent on the quality and structure of input data before model application. This protocol details the data requirements, quality control (QC) steps, and transformation procedures necessary for effective GP, specifically tailored for subsequent analysis with Bayesian models like BayesA.

Data Requirements

Core Data Components

Successful GP requires two primary data matrices: a molecular marker matrix (X) and a phenotypic trait matrix (y). For research integrating prior biological knowledge into BayesA, additional annotation data is required.

Table 1: Minimum Data Requirements for Genomic Prediction

| Data Type | Format | Minimum Recommended Size | Purpose in BayesA Framework |

|---|---|---|---|

| Genotypic (X) | SNPs encoded as 0,1,2 (or -1,0,1) | n > 200, p > 5,000 SNPs | Core predictor variables. Prior variance is scaled by SNP-specific parameters. |

| Phenotypic (y) | Numerical vector/matrix | n > 200 observations | Response variable for model training and validation. |

| Genotype Map | Chr., Position (bp) | Corresponding to p SNPs | Enables QC by physical/genetic distance, potential for informed priors. |

| Trait Heritability (h²) | Scalar (0-1) | Prior estimate from literature/pilot | Informs initial prior distributions for variance components. |

Data Quality Control (QC) Metrics and Thresholds

Pre-processing removes noise and ensures robust model performance. The following thresholds are empirically derived for GP applications.

Table 2: Standard QC Filters for Genotypic Data

| QC Step | Removal Criterion (Common Threshold) | Rationale | Typical % Removed |

|---|---|---|---|

| Individual Call Rate | < 90% | Poor DNA quality or sequencing depth. | 2-5% |

| Marker Call Rate | < 95% | Poor assay performance. | 5-15% |

| Minor Allele Frequency (MAF) | < 0.01 - 0.05 | Rare alleles contribute little predictive power and increase parameter space. | 10-40% |

| Hardy-Weinberg Equilibrium (HWE) p-value | < 1e-6 (stringent) | May indicate genotyping errors or population structure. | 0-5% |

| Duplicate Sample Concordance | < 99% | Identifies sample mix-ups. | Varies |

Protocol 2.1: Standard Genotypic Data QC Workflow

- Input: Raw genotype calls in PLINK (.bed/.bim/.fam) or VCF format.

- Filter Individuals: Remove samples with individual call rate < 90%.

- Command (PLINK):

plink --bfile raw_data --mind 0.1 --make-bed --out step1

- Filter Markers: Remove SNPs with marker call rate < 95% or MAF < 0.03.

- Command:

plink --bfile step1 --geno 0.05 --maf 0.03 --make-bed --out step2

- Check HWE: Optionally, remove severe deviations (p < 1e-6) in a control population.

- Command:

plink --bfile step2 --hwe 1e-6 --make-bed --out cleaned_genotypes

- Output: A cleaned genotype file ready for imputation or direct analysis.
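
To make the marker filters concrete, the sketch below applies the same two criteria as `--geno 0.05` and `--maf 0.03` to a tiny in-memory genotype matrix. It is an illustration only: the function names and the use of `None` for a missing call are assumptions, and real data should go through PLINK as described above.

```python
def marker_call_rate(col):
    """Fraction of non-missing genotype calls for one SNP (None = missing)."""
    return sum(g is not None for g in col) / len(col)


def minor_allele_freq(col):
    """Minor allele frequency from 0/1/2 allele counts, ignoring missing calls."""
    calls = [g for g in col if g is not None]
    if not calls:
        return 0.0
    p = sum(calls) / (2 * len(calls))
    return min(p, 1 - p)


def filter_markers(genotypes, min_call_rate=0.95, min_maf=0.03):
    """Return the column indices of SNPs that pass both filters.

    genotypes: list of rows (individuals), each a list of 0/1/2/None.
    """
    keep = []
    for j in range(len(genotypes[0])):
        col = [row[j] for row in genotypes]
        if marker_call_rate(col) >= min_call_rate and minor_allele_freq(col) >= min_maf:
            keep.append(j)
    return keep


# Toy data: SNP 2 is monomorphic, SNP 3 has a 50% call rate.
toy = [
    [0, 1, 0, None],
    [1, 2, 0, None],
    [2, 1, 0, 1],
    [1, 0, 0, 2],
]
kept = filter_markers(toy)
```

Here only the first two SNPs survive: the third fails the MAF filter and the fourth fails the call-rate filter.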

Phenotypic Data Pre-processing

Protocol 2.2: Phenotypic Data Adjustment

- Outlier Detection: Visually inspect trait distributions (histogram, boxplot). Consider removing or winsorizing biological outliers (> 3.5 SD from mean).

- Fixed Effects Correction: For complex trials (e.g., multi-environment, replicated design), fit a linear model: y = µ + Trial + Block + ε. Extract Best Linear Unbiased Estimates (BLUEs) or residuals for use as the response variable (y) in GP.

- Standardization: For multi-trait analysis or to improve MCMC sampling efficiency, scale phenotypes to have mean = 0 and variance = 1.
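
The outlier and standardization steps of Protocol 2.2 might look like the sketch below. The helper names are hypothetical, the 3.5 SD cut-off is the one quoted above, and the fixed-effects correction is deliberately left out because it depends on the trial design.

```python
import statistics


def winsorize(values, n_sd=3.5):
    """Clip values lying more than n_sd sample standard deviations from the mean."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    lower, upper = mean - n_sd * sd, mean + n_sd * sd
    return [min(max(v, lower), upper) for v in values]


def standardize(values):
    """Scale values to mean 0 and sample variance 1."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [(v - mean) / sd for v in values]


# Toy phenotype: 19 identical records and one extreme value.
raw = [0.0] * 19 + [100.0]
clipped = winsorize(raw)
z = standardize(clipped)
```

Winsorizing before standardizing keeps a single extreme record from inflating the standard deviation used for scaling.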

Pre-processing for Bayesian Models (BayesA)

BayesA assumes each SNP effect has its own variance, drawn from a scaled inverse-chi-squared prior distribution. Data pre-processing significantly influences the estimation of these parameters.

Data Transformation and Partitioning

Protocol 3.1: Preparing Data for BayesA Parameter Tuning

- Imputation: Apply a model (e.g., Beagle, kNN) to fill missing genotypes post-QC. Report final completeness (target > 99%).

- Centering/Scaling Genotypes: Center each SNP to mean zero. Scaling is optional but can be applied (e.g., to unit variance) to aid interpretation.

- Training/Testing Partition: Implement a structured cross-validation scheme (e.g., 5-fold, 20% holdout). For tuning hyperparameters (degrees of freedom ν and scale S² of the inverse-chi-squared prior), further split the training set into subtraining and validation sets.

- Example Partition: 60% Training (for model fitting), 20% Validation (for tuning ν and S²), 20% Testing (for final evaluation).
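
A minimal sketch of the 60/20/20 partition is shown below. `split_indices` is a hypothetical helper, and the split is purely random; with related individuals, whole families would need to be assigned to a single partition instead.

```python
import random


def split_indices(n, fractions=(0.6, 0.2, 0.2), seed=42):
    """Randomly split range(n) into training, validation, and testing indices."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    train = idx[:n_train]
    val = idx[n_train:n_train + n_val]
    test = idx[n_train + n_val:]  # whatever remains goes to testing
    return train, val, test


train, val, test = split_indices(500)
```

Fixing the seed keeps the partition reproducible, so every (ν, S²) candidate is evaluated on the same validation individuals.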

Integration of Biological Prior Information (Thesis Context)

A core thesis aim may involve refining BayesA priors using biological knowledge.

Protocol 3.2: Incorporating Annotation for Informed Priors

- Source Annotation: Obtain SNP annotations (e.g., gene proximity, functional impact from SnpEff, chromatin state).

- Categorize SNPs: Classify markers into k groups (e.g., "genic" vs. "intergenic").

- Structured Prior Specification: Assign separate prior distributions (e.g., different S² values) to each SNP group within the BayesA framework, reflecting the hypothesis that causal variants are enriched in functional categories.
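
One simple way to express group-specific scales is a per-SNP vector built from the annotation labels, as sketched below. The multipliers are purely hypothetical placeholders, not recommended values; in practice they would come from prior enrichment evidence or be tuned by cross-validation.

```python
def group_scales(annotations, base_s2, multipliers):
    """Return one prior scale per SNP: base_s2 times the multiplier of its group."""
    return [base_s2 * multipliers[label] for label in annotations]


annotations = ["genic", "intergenic", "genic"]       # one label per SNP
multipliers = {"genic": 2.0, "intergenic": 0.5}      # HYPOTHETICAL values
scales = group_scales(annotations, base_s2=1e-4, multipliers=multipliers)
```

The resulting vector can then be passed to a sampler that accepts a marker-specific S², such as a custom Gibbs implementation.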

Visualization of Workflows

Workflow for Genomic Prediction Data Pre-processing

BayesA Model: SNP-Specific Variances from a Common Prior

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Genomic Prediction

| Item / Solution | Supplier Examples | Function in Pre-processing & Analysis |

|---|---|---|

| DNA Extraction Kits (Plant/Animal) | Qiagen DNeasy, Thermo Fisher KingFisher | High-quality, high-molecular-weight DNA for accurate genotyping. |

| SNP Genotyping Arrays | Illumina (Infinium), Thermo Fisher (Axiom) | High-throughput, cost-effective genome-wide marker discovery. |

| Whole Genome Sequencing Kits | Illumina (NovaSeq), PacBio (HiFi) | Provides ultimate marker density and discovery of all variant types. |

| PLINK v2.0 | Open Source | Industry-standard software for genotype data management, QC, and basic association analysis. |

| Beagle 5.4 | University of Washington | Leading software for accurate genotype phasing and imputation of missing data. |

| rrBLUP or BGLR R Packages | CRAN, GitHub | R packages for executing genomic prediction models, including Bayesian (BayesA) approaches in BGLR. |

| High-Performance Computing (HPC) Cluster | Local University, Cloud (AWS, GCP) | Necessary for computationally intensive tasks like imputation and MCMC sampling in Bayesian models. |

| Laboratory Information Management System (LIMS) | LabWare, Samples | Tracks sample metadata from tissue to genotype, ensuring data integrity and reproducibility. |

This document provides application notes and protocols on prior specification for the scale parameter (S²) and degrees of freedom (ν) in the inverse chi-squared distribution, a critical component of BayesA models used in genomic prediction and quantitative genetics. The discussion is situated within a broader doctoral thesis investigating robust prior specification and parameter tuning methodologies for complex Bayesian models in pharmaceutical trait discovery. The choice between default weakly informative priors and informed, data-driven priors has significant implications for model convergence, parameter estimability, and the biological plausibility of results in drug development pipelines.

Quantitative Comparison of Prior Strategies

Table 1: Characteristics of Default vs. Informed Prior Strategies for ν and S²

| Aspect | Default/Weakly Informative Prior | Informed/Empirical Prior |

|---|---|---|

| Philosophical Basis | Reference analysis; objectivity; let data dominate. | Incorporate existing knowledge from prior experiments or meta-analyses. |

| Typical ν Value | Low (e.g., ν = 4 to 5). Represents low confidence in initial S² guess. | Higher (e.g., ν > 10). Represents stronger belief in prior estimate of S². |

| Typical S² Source | Arbitrary small positive value or based on crude phenotypic variance estimate. | Derived from historical data, pilot studies, or published heritability estimates for the trait. |

| Computational Stability | Can sometimes lead to slow convergence or mixing if data is weak. | Often improves convergence and identifiability, especially in high-dimensional problems. |

| Risk of Bias | Minimal prior-induced bias. | Risk of bias if prior information is incorrect or not applicable to current population. |

| Best Use Case | Novel traits with no reliable previous estimates; exploratory analysis. | Established traits in well-studied populations (e.g., clinical biomarkers in target patient group). |

Table 2: Example Parameterization from Recent Literature (2023-2024)

| Study Focus | Recommended ν | Recommended S² derivation | Justification Cited |

|---|---|---|---|

| Whole Genome Prediction for Clinical Biomarkers | ν = 4 (Default) | S² = 0.5 * Phenotypic Variance / (Number of Markers) | Provides a proper but diffuse prior; close to the BGLR package default (df0 = 5). |

| Multi-Trait Drug Response Modeling | ν = 12 (Informed) | S² derived from REML estimate of marker variance in a historical cohort. | Stabilizes estimates for correlated traits with limited sample size. |

| Bayesian Variable Selection in Pharmacogenomics | ν ~ Uniform(2, 10) | S² ~ Gamma(shape=1.5, rate=0.5) on variance. | Hierarchical prior allows data to inform the scale, a flexible default. |

| Integrative Omics for Target Discovery | ν = 5 (Semi-Informed) | S² from permutation-based expected marker variance. | Balances computational robustness with minimal prior influence. |

Protocol 3.1: Empirical Bayes Estimation of S² from Pilot Data

Objective: To derive an informed prior scale parameter (S²) for a BayesA model from a preliminary dataset. Materials: Pilot genotype (SNP matrix) and phenotype data for the trait of interest. Workflow:

- Data Processing: Quality control on pilot data (filtering for call rate, minor allele frequency, Hardy-Weinberg equilibrium).

- Variance Component Estimation: Fit a simple genomic linear model (e.g., via Ridge Regression BLUP or a single-component GBLUP) using efficient REML algorithms (e.g., GCTA, the sommer R package).

- Calculation: Extract the estimated additive genetic variance (σ²_g) from the REML output. Compute the initial scale as S²_empirical = σ²_g / (p × π), where p is the number of markers and π is the expected proportion of markers with non-zero effect (set to 1 for BayesA, in which every marker has an effect). This per-marker split assumes genotypes standardized to unit variance.

- Sensitivity Analysis: Refit the final BayesA model using a range of S² values (e.g., 0.5, 1, and 2 × S²_empirical) to assess robustness of key findings.
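
The calculation step reduces to a few lines of arithmetic, sketched here with illustrative inputs (σ²_g = 0.4 and p = 5,000 are assumptions, not values from any dataset). The second helper uses the fact that a scaled inverse-chi-squared prior with ν > 2 has mean νS²/(ν − 2), which gives an alternative way to pick S² so that the prior mean matches a target per-marker variance.

```python
def empirical_scale(sigma2_g, n_markers, pi=1.0):
    """S2_empirical = sigma2_g / (p * pi), as in the calculation step above."""
    return sigma2_g / (n_markers * pi)


def scale_matching_prior_mean(target_var, nu):
    """S2 such that the prior mean nu * S2 / (nu - 2) equals target_var (needs nu > 2)."""
    if nu <= 2:
        raise ValueError("the prior mean exists only for nu > 2")
    return target_var * (nu - 2) / nu


sigma2_g, p = 0.4, 5000            # assumed pilot REML estimate and marker count
s2_emp = empirical_scale(sigma2_g, p)
sensitivity_grid = [f * s2_emp for f in (0.5, 1.0, 2.0)]
s2_mean_matched = scale_matching_prior_mean(sigma2_g / p, nu=5)
```

Whether S² is set directly to the per-marker variance or adjusted so the prior mean matches it is a modelling choice; the sensitivity grid helps show how much that choice matters.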

Protocol 3.2: Sensitivity and Robustness Analysis for ν and S²

Objective: To evaluate the impact of prior choice on posterior inferences and model performance. Materials: Full experimental dataset, high-performance computing cluster. Workflow:

- Design Prior Grid: Define a grid of (ν, S²) values. Example: ν ∈ {4, 6, 8, 10} and S² ∈ {0.1, 0.5, 1.0, 2.0} * S²_default.

- Model Fitting: Run the BayesA model to convergence for each prior combination in the grid. Monitor via Markov Chain Monte Carlo (MCMC) diagnostics (Gelman-Rubin statistic, effective sample size).

- Performance Metrics: For each run, calculate:

- Predictive Accuracy: Correlation between predicted and observed values in a held-out validation set.

- Parameter Estimates: Mean and 95% credible intervals for key genetic variance parameters.

- Model Complexity: Effective number of parameters (p_D) derived from the Deviance Information Criterion (DIC).

- Visualization & Decision: Create contour plots of predictive accuracy over the prior grid. Choose the prior region that yields stable, high accuracy without inducing unrealistic parameter estimates.
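
For the convergence check in the model-fitting step, the Gelman-Rubin statistic can be computed directly from several chains of one scalar parameter. The sketch below uses the basic (non-split) form of the statistic on simulated chains; `gelman_rubin` is a hypothetical helper, and packages such as coda provide more complete versions.

```python
import random
import statistics


def gelman_rubin(chains):
    """Potential scale reduction factor for equal-length chains of one parameter."""
    n = len(chains[0])
    chain_means = [statistics.mean(c) for c in chains]
    within = statistics.mean([statistics.variance(c) for c in chains])
    between = n * statistics.variance(chain_means)
    var_hat = (n - 1) / n * within + between / n
    return (var_hat / within) ** 0.5


rng = random.Random(0)
# Four chains sampling the same distribution: R-hat should be close to 1.
mixed = [[rng.gauss(0.0, 1.0) for _ in range(500)] for _ in range(4)]
# Two chains stuck around different values: R-hat should be well above 1.
stuck = [[rng.gauss(m, 1.0) for _ in range(500)] for m in (0.0, 3.0)]

rhat_mixed = gelman_rubin(mixed)
rhat_stuck = gelman_rubin(stuck)
```

A prior combination whose key variance parameters give values well above 1 would be rerun with longer chains before its predictive accuracy is compared with the others.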

Visualization of Workflows and Logical Relationships

Title: Decision Workflow for Choosing ν and S² Priors

Title: Sensitivity Analysis Protocol Steps

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools

| Item / Solution | Function / Purpose | Example Product / Software |

|---|---|---|

| High-Throughput Genotyping Array | Provides the dense marker data (SNPs) required for genomic prediction models. | Illumina Global Screening Array, Affymetrix Axiom Precision Medicine Research Array. |

| REML Estimation Software | Estimates genetic variance components from pilot data to inform S² prior. | GCTA, ASReml, sommer R package, MTG2. |

| Bayesian Analysis Software | Implements BayesA and related models with flexible prior specification. | BGLR R package, JAGS, Stan, BLR. |

| High-Performance Computing (HPC) Cluster | Enables running multiple MCMC chains and sensitivity analyses in parallel. | SLURM workload manager on Linux-based clusters. |

| MCMC Diagnostic Toolkit | Assesses convergence and mixing of Bayesian models to validate results. | coda R package (Gelman-Rubin, traceplots), bayesplot. |

| Data Visualization Suite | Creates diagnostic and results plots (contour, trace, forest plots). | ggplot2, plotly in R, Matplotlib in Python. |

Within the broader thesis on BayesA prior specification and parameter tuning, this document provides practical Application Notes and Protocols for implementing Genomic Prediction and Genome-Wide Association Study (GWAS) analyses. The focus is on comparing the performance, usability, and parameterization of three primary software approaches: the Bayesian Generalized Linear Regression (BGLR) package, the Genome-wide Complex Trait Analysis (GCTA) tool, and custom Markov Chain Monte Carlo (MCMC) scripts. These tools are critical for researchers and drug development professionals aiming to dissect complex traits and identify candidate genes.

Table 1: Core Software Specifications for Genomic Analysis

| Feature | BGLR (R Package) | GCTA (Standalone) | Custom MCMC Scripts (e.g., R/Python) |

|---|---|---|---|

| Primary Methodology | Bayesian Regression with multiple priors (BayesA, B, C, etc.) | REML, BLUP, GREML, fastBAT for GWAS | User-defined Bayesian models (e.g., precise BayesA) |

| Key Strength | User-friendly, flexible prior specification, integrated with R | Extremely fast for large-scale GREML, efficient GWAS | Complete control over prior forms, tuning, and algorithm flow |

| Computational Speed | Moderate (C back-end) | Very High (C++ optimized) | Low to Moderate (depends on optimization) |

| Ease of Parameter Tuning | High (direct arguments for df, scale, etc.) | Moderate (via command-line flags) | Very High (direct access to all parameters) |

| Best For | Comparing Bayesian priors, standard genomic prediction | Estimating variance components, large-N GWAS | Research on novel priors, algorithm development, teaching |

| Default BayesA Parameters | list(df0=5, S0=NULL, R2=0.5) | Not applicable (GCTA fits REML/BLUP models and has no BayesA prior to tune) | User-defined (shape, scale, starting values) |

Table 2: Typical Runtime Comparison (Simulated Dataset: n=1000, p=5000 SNPs)

| Task | BGLR (10k iterations) | GCTA (GREML) | Custom MCMC (R, 10k iter) |

|---|---|---|---|

| Genomic Prediction (Fit + Predict) | ~45 seconds | ~20 seconds | ~180 seconds |

| Variance Component Estimation | ~35 seconds (within MCMC) | ~5 seconds | ~170 seconds (within MCMC) |

| GWAS (Marker Effects) | Not primary function | ~15 seconds (MLMA) | ~165 seconds (sampled effects) |

Experimental Protocols

Protocol 1: Implementing BayesA with BGLR for Genomic Prediction

Objective: To perform genomic prediction and estimate marker effects using the BayesA prior in BGLR.

Materials: Phenotype vector (y), Genotype matrix (X, centered/scaled), R software, BGLR package.

- Data Preparation: Load data. Center and scale the genotype matrix. Split data into training (yTr, XTr) and testing (yTe, XTe) sets.

- Prior Specification: Define the BayesA prior list: ETA <- list(list(X=XTr, model='BayesA', df0=5, R2=0.5)). Here S0 is left unset so that BGLR derives the prior scale from R2 (the prior proportion of phenotypic variance attributed to the markers, here 0.5), the phenotypic variance, and the marker mean squares. An explicit S0 must be on the per-marker scale; a value on the order of var(yTr) would imply far too much variance per marker.

- Model Fitting: Run the MCMC: fit <- BGLR(y=yTr, ETA=ETA, nIter=12000, burnIn=2000, thin=10, verbose=FALSE)

- Prediction & Evaluation: Predict into the test set: yPred <- fit$mu + XTe %*% fit$ETA[[1]]$b. Calculate prediction accuracy as the correlation between yPred and observed yTe.

- Posterior Analysis: Inspect fit$ETA[[1]]$varB (trace plots) and fit$ETA[[1]]$b for estimated marker effects.
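
The accuracy metric in the evaluation step is a plain Pearson correlation. The language-neutral sketch below (written in Python rather than R, with a hypothetical helper name and made-up numbers) also shows why omitting the intercept from the predictions does not change the reported accuracy: correlation is invariant to adding a constant.

```python
def pearson_r(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


observed = [1.2, 0.4, -0.3, 2.1, 0.9]           # illustrative values only
predicted = [0.8, 0.5, -0.1, 1.5, 0.4]
shifted = [v + 10.0 for v in predicted]          # same predictions plus a constant

r = pearson_r(predicted, observed)
r_shifted = pearson_r(shifted, observed)
```

The intercept does matter for error-based metrics such as mean squared error, so it is worth keeping in the prediction if those are reported as well.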

Protocol 2: Estimating Genomic Heritability with GCTA-GREML

Objective: To estimate the proportion of phenotypic variance explained by all SNPs using GCTA.

Materials: PLINK-format genotype data (data.bed, data.bim, data.fam), phenotype file, GCTA software.

- GRM Calculation: Compute the Genetic Relatedness Matrix (GRM): gcta64 --bfile data --autosome --maf 0.01 --make-grm --out data_grm

- GREML Analysis: Perform the REML analysis: gcta64 --grm data_grm --pheno phenotype.txt --reml --out data_reml (the file phenotype.txt contains family ID, individual ID, and the phenotype).

- Output Interpretation: The key result is in data_reml.hsq: V(G)/Vp is the estimated SNP-based heritability (h²).

Protocol 3: Custom MCMC for a Tailored BayesA Model

Objective: To implement a basic BayesA sampler, allowing for direct tuning of the hyperparameters ν (degrees of freedom) and S² (scale) of the scaled inverse-chi-squared prior on marker variances.

Materials: R/Python environment, genotype/phenotype data.

- Initialize Parameters: Set µ = mean(y), b = vector of zeros, σ²_e = 0.5 × var(y), and σ²_bj = var(y)/p for j = 1..p. Define ν = 5 and S² = (var(y)/p) × (ν − 2)/ν, so that the prior mean of each marker variance, νS²/(ν − 2), equals var(y)/p.

- Gibbs Sampling Loop (for each iteration 1:nIter):

a. Sample the intercept µ from its conditional normal distribution.

b. Sample each marker effect b_j from N(mean, var), where mean = x_j'(y − µ − X[-j]b[-j]) / (x_j'x_j + σ²_e/σ²_bj) and var = σ²_e / (x_j'x_j + σ²_e/σ²_bj).

c. Sample each marker variance σ²_bj from Inv-χ²(ν + 1, (b_j² + νS²) / (ν + 1)).

d. Sample the residual variance σ²_e from Inv-χ²(n + ν_e, (SSR + ν_e S²_e) / (n + ν_e)), where SSR is the residual sum of squares and ν_e and S²_e are the prior degrees of freedom and scale for σ²_e.

- Post-Processing: Discard burn-in iterations, apply thinning. The posterior mean of b is the estimated marker effect. Monitor trace plots of σ²_bj for convergence.
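
The sampler steps above can be sketched in plain Python as follows. This is a teaching-scale illustration under stated assumptions, not a production implementation: it uses only the standard library, applies no thinning, assumes centered genotypes, takes ν_e = 4 and S²_e = 0.5 × var(y) as the residual prior (both assumptions), and runs on simulated toy data in which only the first marker has an effect.

```python
import random


def rinvchi2(rng, df, scale):
    """Draw from a scaled inverse chi-squared: df * scale / chi2(df)."""
    return df * scale / rng.gammavariate(df / 2.0, 2.0)


def bayesa_gibbs(X, y, nu=5.0, nu_e=4.0, n_iter=600, burn_in=200, seed=1):
    """Posterior-mean marker effects for centered X (list of rows) and y."""
    rng = random.Random(seed)
    n, p = len(y), len(X[0])
    cols = [[row[j] for row in X] for j in range(p)]
    xtx = [sum(v * v for v in c) for c in cols]
    mean_y = sum(y) / n
    var_y = sum((v - mean_y) ** 2 for v in y) / (n - 1)
    S2 = (var_y / p) * (nu - 2.0) / nu   # prior mean of each marker variance = var_y / p
    S2_e = 0.5 * var_y                   # ASSUMED prior scale for the residual variance
    mu, b = mean_y, [0.0] * p
    s2_e, s2_b = 0.5 * var_y, [var_y / p] * p
    resid = [v - mu for v in y]          # y - mu - Xb, with b = 0 at the start
    b_sum = [0.0] * p
    for it in range(n_iter):
        # (a) intercept: normal with mean of (y - Xb) and variance s2_e / n
        new_mu = rng.gauss(mu + sum(resid) / n, (s2_e / n) ** 0.5)
        resid = [r + mu - new_mu for r in resid]
        mu = new_mu
        for j in range(p):
            xj = cols[j]
            # (b) marker effect given all other effects
            rhs = sum(x * r for x, r in zip(xj, resid)) + xtx[j] * b[j]
            lhs = xtx[j] + s2_e / s2_b[j]
            new_b = rng.gauss(rhs / lhs, (s2_e / lhs) ** 0.5)
            resid = [r + x * (b[j] - new_b) for x, r in zip(xj, resid)]
            b[j] = new_b
            # (c) marker-specific variance
            s2_b[j] = rinvchi2(rng, nu + 1.0, (new_b ** 2 + nu * S2) / (nu + 1.0))
        # (d) residual variance
        ssr = sum(r * r for r in resid)
        s2_e = rinvchi2(rng, n + nu_e, (ssr + nu_e * S2_e) / (n + nu_e))
        if it >= burn_in:
            b_sum = [s + v for s, v in zip(b_sum, b)]
    return [s / (n_iter - burn_in) for s in b_sum]


# Toy data: 60 individuals, 10 markers, only marker 0 has a true effect of 1.0.
rng = random.Random(7)
n, p = 60, 10
G = [[rng.choice((0, 1, 2)) for _ in range(p)] for _ in range(n)]
col_means = [sum(row[j] for row in G) / n for j in range(p)]
X = [[row[j] - col_means[j] for j in range(p)] for row in G]
y = [1.0 * X[i][0] + rng.gauss(0.0, 0.5) for i in range(n)]
b_hat = bayesa_gibbs(X, y)
```

Updating the residual vector in place after each marker draw avoids recomputing y − µ − Xb from scratch, which is the main reason single-site Gibbs samplers of this kind remain feasible for large p.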

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Research Reagent Solutions for Genomic Analysis

| Item | Function & Application | Example/Note |

|---|---|---|

| Genotyping Array | Provides high-density SNP data for GRM construction and GWAS. | Illumina Global Screening Array, Affymetrix Axiom |

| PLINK Software | Core toolset for managing, filtering, and converting genotype data. | Used as a prerequisite for formatting inputs for GCTA/BGLR. |

| R Statistical Environment | Platform for running BGLR, custom scripts, and statistical analysis. | Essential libraries: BGLR, data.table, ggplot2. |

| High-Performance Computing (HPC) Cluster | Enables analysis of large-scale genomic datasets (N > 10k). | Required for GCTA on biobank data or long custom MCMC chains. |

| Simulated Genomic Datasets | For method development, power analysis, and tuning parameter research. | Generated using QTLRel or AlphaSimR packages. |

| Convergence Diagnostic Tool | Assesses MCMC chain convergence for Bayesian methods. | Gelman-Rubin diagnostic (coda package), trace plots. |

Visualizations

BGLR Analysis and Tuning Workflow

BayesA Prior Hierarchical Model Diagram

Decision Logic for Software Selection

This Application Note details a case study on tuning priors for quantitative trait locus (QTL) mapping of pharmacogenomic (PGx) traits within clinical cohorts. It is situated within a broader thesis research program focused on refining BayesA prior specification and parameter tuning for complex trait genomics. Accurately mapping genetic variants associated with inter-individual drug response variability (e.g., efficacy, toxicity) is critical for personalized medicine. Standard QTL mapping often employs linear mixed models, but Bayesian sparse methods like BayesA offer advantages by incorporating prior distributions on effect sizes, allowing for more robust detection of polygenic signals. The specification of the scale (s²) and degrees of freedom (ν) parameters for the scaled inverse-chi-square prior on genetic variances is non-trivial and requires empirical tuning based on cohort-specific genetic architecture and trait heritability.

Core Methodology and Prior Tuning Framework

BayesA Model Specification

The foundational BayesA model for drug response QTL mapping is y = Xβ + Zu + e, where:

- y is an n×1 vector of standardized drug response phenotypes (e.g., change in tumor volume, toxicity grade, pharmacokinetic measure).

- X is an n×p matrix of fixed effects (covariates: age, sex, principal components).

- β is a p×1 vector of fixed effect coefficients.

- Z is an n×m standardized genotype matrix (e.g., imputed dosage).

- u is an m×1 vector of random marker effects, with prior uᵢ ~ N(0, σ²ᵢ).

- σ²ᵢ is the marker-specific genetic variance, with prior σ²ᵢ ~ Inv-χ²(ν, s²).

- e is the residual error, e ~ N(0, Iσ²ₑ).

The key tuning parameters are:

- s²: Scale parameter of the inverse-chi-square prior.

- ν: Degrees of freedom parameter.

Tuning Protocol via Predictive Cross-Validation

A systematic protocol for tuning (ν, s²) using predictive performance on held-out clinical data.

Protocol: K-fold Cross-Validation for Prior Tuning

Cohort & Data Preparation:

- Input: Clinical cohort with genomic data (WGS or array+imputation) and precisely measured drug response phenotypes.

- QC: Apply standard genotype and phenotype quality control. Adjust phenotype for relevant clinical covariates using linear regression; use residuals for QTL mapping.

- Split: Randomly partition the cohort into K folds (typically K=5 or 10), preserving family structures if present.

Parameter Grid Definition:

- Define a grid of candidate values for (ν, s²).

- Suggested initial grid: ν ∈ {3, 4, 5, 6}, s² ∈ {0.01, 0.05, 0.10, 0.15, 0.20} × (σ²ₚ / m), where σ²ₚ is the phenotypic variance and m is the number of markers.

Cross-Validation Loop: For each parameter pair (ν, s²) in the grid, and for each fold k = 1 to K:

a. Training Set: All data except fold k.

b. Test Set: Data in fold k.

c. Model Training: Run the BayesA Gibbs sampler on the training set using the current (ν, s²). Run for a sufficient number of iterations (e.g., 50,000) with burn-in (e.g., 10,000). Thin chains appropriately.

d. Effect Size Estimation: Calculate the posterior mean of marker effects (û) from the training chain.

e. Prediction: Generate polygenic scores for individuals in the test set: PGS_test = Z_test û.

f. Evaluation: Calculate the correlation (r) between the predicted PGS_test and the observed (covariate-adjusted) phenotype in the test set. Record r² as the predictive accuracy.

Optimal Parameter Selection:

- For each (ν, s²) pair, average the r² across all K folds.

- Select the parameter pair that maximizes the average predictive r².

- Final Model: Run the BayesA model on the full clinical cohort using the tuned optimal (ν, s²) parameters to identify significant drug response QTLs.

Case Study Data & Results

Data simulated from a real-world oncology PGx cohort (N~500) investigating germline genetic contributors to platinum-based chemotherapy induced peripheral neuropathy (CIPN).

Table 1: Candidate Prior Parameter Grid and Cross-Validation Performance

| Degrees of Freedom (ν) | Scale (s²) | Avg. Predictive r² (5-fold CV) | Std. Dev. of r² |

|---|---|---|---|

| 3 | 0.001 | 0.031 | 0.012 |

| 3 | 0.005 | 0.048 | 0.015 |

| 4 | 0.005 | 0.052 | 0.011 |

| 4 | 0.010 | 0.045 | 0.014 |

| 5 | 0.005 | 0.049 | 0.013 |

| 5 | 0.010 | 0.042 | 0.016 |

| 6 | 0.005 | 0.046 | 0.012 |

| 6 | 0.010 | 0.040 | 0.015 |
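
The selection rule can be applied to the values in Table 1 directly. The sketch below copies the table rows into a list, picks the pair with the highest average r², and additionally lists the pairs whose average lies within one fold-to-fold standard deviation of the best; that second check is an added illustration, not part of the protocol above.

```python
# Rows of Table 1: (nu, s2, average r2 over 5 folds, SD of r2 across folds)
cv_results = [
    (3, 0.001, 0.031, 0.012),
    (3, 0.005, 0.048, 0.015),
    (4, 0.005, 0.052, 0.011),
    (4, 0.010, 0.045, 0.014),
    (5, 0.005, 0.049, 0.013),
    (5, 0.010, 0.042, 0.016),
    (6, 0.005, 0.046, 0.012),
    (6, 0.010, 0.040, 0.015),
]

best = max(cv_results, key=lambda row: row[2])
best_nu, best_s2, best_r2, best_sd = best

# Pairs whose average r2 is within one SD (of the best pair) of the best average.
near_best = [(nu, s2) for nu, s2, r2, _ in cv_results if r2 >= best_r2 - best_sd]
```

The maximum is at ν = 4, s² = 0.005, matching the prior used in Table 2, but several neighbouring pairs fall within one standard deviation of it, so the optimum is fairly flat in this grid.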

Table 2: Top Drug Response QTLs Identified with Tuned (ν=4, s²=0.005) Prior

| SNP ID (Chr:Pos) | Gene/Locus | Effect Allele | Posterior Mean Effect (û) | 95% Credible Interval | Bayes Factor (log10) |

|---|---|---|---|---|---|

| rs123456 (10:95) | FNLPD1 | A | 0.32 | [0.18, 0.47] | 4.2 |

| rs789012 (6:25) | HLA-DRA | G | -0.41 | [-0.60, -0.23] | 5.1 |

| rs345678 (15:78) | SCN10A | T | 0.28 | [0.14, 0.43] | 3.8 |

Visualizations

(Diagram Title: Prior Tuning via Cross-Validation Workflow)

(Diagram Title: BayesA Graphical Model for Drug Response)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Drug Response QTL Prior Tuning

| Item/Category | Specific Example/Product | Function in Protocol |

|---|---|---|

| Genotyping Array | Illumina Global Screening Array v3.0, PharmacoScan | High-throughput genome-wide SNP genotyping with curated PGx content. |

| Genotype Imputation Service | Michigan Imputation Server, TOPMed Imputation Server | Increases genomic coverage by inferring untyped variants using large reference panels. |

| Bayesian QTL Mapping Software | BGLR R package, GEMMA, JWAS | Implements BayesA and related models with customizable priors for Gibbs sampling. |

| High-Performance Computing (HPC) | SLURM workload manager, Linux cluster | Enables computationally intensive cross-validation and MCMC chains across parameter grids. |

| Clinical Phenotyping Platform | REDCap, EHR-linked biobank | Standardized collection and management of structured drug response outcomes and covariates. |

| Data QC Pipeline | PLINK 2.0, R/qc2 | Performs essential quality control on genotype and phenotype data prior to analysis. |

| Visualization & Reporting | ggplot2, locuszoom.js, Manhattanly | Creates publication-ready Manhattan plots, effect size plots, and results visualization. |

Within the context of a broader thesis on BayesA prior specification and parameter tuning, this Application Note details the implementation of Bayesian shrinkage methods for discriminating between relevant and irrelevant genetic effects in biomarker discovery. The BayesA model, employing a scaled-t prior, provides a robust framework for handling high-dimensional genomic data by applying differential shrinkage, effectively shrinking noise variables toward zero while preserving signals from true biomarkers.

Theoretical & Practical Framework

BayesA posits that each genetic marker effect (β_j) follows a conditional prior distribution: β_j | σ_j² ~ N(0, σ_j²) and σ_j² | ν, S² ~ Inv-χ²(ν, S²). This hierarchical specification induces marker-specific shrinkage, where the degree of shrinkage is controlled by the hyperparameters ν (degrees of freedom) and S² (scale parameter). Tuning these parameters is critical for optimizing the balance between false positives and false negatives.

Table 1: Impact of Prior Hyperparameters on Shrinkage Behavior

| Hyperparameter | Typical Range | Effect of Increasing Parameter | Primary Influence on Biomarker List |

|---|---|---|---|

| Degrees of Freedom (ν) | 3 - 10 | Lightens the tails of the marginal t prior and concentrates marker variances near S², so shrinkage becomes more uniform (ridge-like) and large effects are shrunk more strongly. | Reduces false positives; may over-shrink true biomarkers, including large-effect ones. |

| Scale (S^2) | 0.01 × σy^2 to 0.1 × σy^2 | Increases the spread of the prior variance; allows larger effects to escape shrinkage. | Increases sensitivity for larger effects; may admit more false positives. |

| Markov Chain Monte Carlo (MCMC) Iterations | 10,000 - 100,000 | Improves convergence and posterior estimation accuracy. | Stabilizes biomarker ranking; reduces Monte Carlo error. |
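
The marker-specific shrinkage can be made concrete with the conditional posterior mean of a single effect, which equals the least-squares estimate multiplied by x'x / (x'x + σ_e²/σ_j²). The sketch below evaluates that factor for a few marker variances; the values of x'x and σ_e² are illustrative assumptions.

```python
def shrinkage_factor(xtx, sigma2_e, sigma2_j):
    """Fraction of the least-squares estimate kept in the conditional posterior mean."""
    return xtx / (xtx + sigma2_e / sigma2_j)


xtx, sigma2_e = 500.0, 1.0  # assumed: about 500 individuals, standardized genotypes
factors = {s2j: shrinkage_factor(xtx, sigma2_e, s2j) for s2j in (1e-4, 1e-3, 1e-2)}

for s2j, f in factors.items():
    print(f"sigma2_j = {s2j:g}: keeps {f:.1%} of the least-squares estimate")
```

Markers whose sampled variance σ_j² is small are pulled almost entirely to zero, while markers with a large σ_j² keep most of their estimated effect, which is the differential shrinkage that ν and S² control through the prior on σ_j².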

Experimental Protocol: Implementing BayesA for Genomic Biomarker Screening

Protocol 3.1: Data Preprocessing & Quality Control

Objective: Prepare genotype and phenotype data for Bayesian analysis. Steps:

- Genotype Data: From GWAS or WGS, retain SNPs with call rate > 95%, minor allele frequency (MAF) > 1%, and Hardy-Weinberg equilibrium p > 1 × 10⁻⁶. Convert to a numeric matrix (n × m), where n = samples and m = markers, using allele dosage (0, 1, 2).

- Phenotype Data: For continuous traits, apply appropriate normalization (e.g., rank-based inverse normal transformation). For case-control, use binary coding (0/1).

- Covariates: Include age, sex, principal components (PCs) for ancestry as fixed effects in the model. Center and scale all covariates.

Protocol 3.2: BayesA Model Fitting via Gibbs Sampling

Objective: Sample from the full conditional posterior distributions to estimate genetic effects. Software: Implement in R/Python using a custom Gibbs sampler, or use BGLR or Stan (rrBLUP fits ridge regression rather than BayesA and is useful as a baseline). Steps:

- Initialize: Set β_j = 0 for all markers j. Set the residual variance σ_e² = 0.8 × var(y). Set ν = 5 and S² = 0.05 × var(y) / m. Choose total iterations (T = 50,000) and burn-in (B = 10,000).

- Gibbs Sampler Loop (for iteration t = 1 to T):

a. Sample marker effects: For each marker j = 1..m,

i. Draw a new marker-specific variance σ_j²(t) from Inv-χ²(ν + 1, (β_j(t−1)² + νS²) / (ν + 1)).

ii. Draw a new effect β_j(t) from N(μ_j, V_j), where V_j = σ_e²(t−1) / (X_j'X_j + σ_e²(t−1)/σ_j²(t)) and μ_j = V_j × X_j'(y − μ − X_{−j}β_{−j} − Zc) / σ_e²(t−1). Here μ is the intercept, Zc is the fitted covariate contribution, and β_{−j} holds the most recent draws of all other marker effects.

b. Sample the intercept and fixed effects from their multivariate normal conditional.

c. Sample the residual variance σ_e²(t) from Inv-χ²(df_e, SS_e / df_e), where SS_e is the sum of squared residuals and df_e the corresponding degrees of freedom.

- Post-Processing: Discard burn-in samples. Calculate posterior mean and 95% credible interval for each β_j.

Protocol 3.3: Biomarker Selection & Validation

Objective: Identify significant biomarkers based on posterior inference. Steps:

- Significance Threshold: Declare a marker as a biomarker if its 95% credible interval excludes zero OR if its absolute posterior mean exceeds a practical significance threshold (e.g., 0.1 × phenotypic SD).

- Stability Check: Re-run analysis with different ν (3, 5, 10) and S^2 values. Select biomarkers consistently identified across hyperparameter settings.

- Independent Validation: Test selected biomarker set in a held-out validation cohort using a simple polygenic score or logistic/linear regression.
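
The significance rule in the first step can be sketched as below, using equal-tailed 95% intervals taken from the empirical quantiles of the posterior samples. The helper names are hypothetical and the "posterior samples" are simulated stand-ins for real post-burn-in MCMC draws.

```python
import random
import statistics


def credible_interval_95(samples):
    """Equal-tailed 95% interval from empirical quantiles of the posterior samples."""
    cuts = statistics.quantiles(samples, n=40)  # cut points every 2.5%
    return cuts[0], cuts[-1]


def is_biomarker(samples, practical_threshold):
    """Apply the rule: interval excludes zero OR |posterior mean| > threshold."""
    lower, upper = credible_interval_95(samples)
    excludes_zero = lower > 0 or upper < 0
    return excludes_zero or abs(statistics.mean(samples)) > practical_threshold


rng = random.Random(3)
signal = [rng.gauss(0.30, 0.05) for _ in range(2000)]  # simulated draws, clear effect
noise = [rng.gauss(0.00, 0.05) for _ in range(2000)]   # simulated draws, null effect

threshold = 0.1  # 0.1 x phenotypic SD, assuming a standardized phenotype
flag_signal = is_biomarker(signal, threshold)
flag_noise = is_biomarker(noise, threshold)
```

Repeating this call on the posterior samples obtained under each (ν, S²) setting gives the stability check in the second step: only markers flagged under every setting are carried forward to validation.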

Visualizations

Diagram 1: BayesA Shrinkage & Validation Workflow

Diagram 2: BayesA Prior-Posterior Hierarchy

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for BayesA Implementation

| Item | Function/Description | Example Product/Software |

|---|---|---|

| High-Quality Genotype Data | Raw input for analysis; must pass stringent QC for accurate effect estimation. | Illumina Global Screening Array, Whole Genome Sequencing data. |

| Statistical Software with MCMC | Platform for implementing custom Gibbs sampler or accessing BayesA routines. | R (BGLR, rrBLUP), Python (PyStan, pymc3), GENESIS. |

| High-Performance Computing (HPC) Resource | Enables feasible runtime for large MCMC chains on high-dimensional data. | Local compute cluster (SLURM), Cloud (AWS EC2, Google Cloud). |

| Hyperparameter Tuning Grid | Pre-defined sets of (ν, S²) values to test model sensitivity. | Custom script to iterate ν=[3,5,10], S²=[0.01, 0.05, 0.1]*var(y)/m. |

| Credible Interval Calculator | Derives Bayesian uncertainty intervals from posterior samples. | R coda package, Python arviz library. |

| Independent Validation Cohort | Dataset for confirming biomarker stability and biological relevance. | Held-out samples from biobank, external public dataset (e.g., UK Biobank). |

Troubleshooting BayesA: Solving Convergence Issues and Optimizing Hyperparameters

This document serves as an application note within a broader research thesis investigating prior specification and parameter tuning for the BayesA model in genomic prediction. The BayesA framework, widely used in quantitative genetics and pharmacogenomics for drug target discovery, applies a scaled-t prior to marker effects, inducing selective shrinkage. Improper tuning of its hyperparameters—degrees of freedom (ν) and scale (S²)—leads to the canonical issues of poor Markov Chain Monte Carlo (MCMC) convergence, slow mixing, and pathological over- or under-shrinkage of effects. This note provides diagnostic protocols and mitigation strategies for researchers and drug development scientists.

Table 1: Symptoms, Diagnostics, and Probable Causes in BayesA Implementation

| Symptom | Diagnostic Metric(s) | Threshold/Indicator | Probable Cause (Hyperparameter Link) |

|---|---|---|---|

| Poor Convergence | Gelman-Rubin statistic (R̂) | R̂ > 1.05 for key parameters | Prior too vague (ν too small, S² too large), leading to poorly identified posterior. |

| Slow Mixing | Effective Sample Size (ESS) per second; Auto-correlation time | ESS < 100; High lag-50 autocorrelation | Prior too restrictive (ν too large, S² too small), creating a highly correlated parameter space. |

| Over-shrinkage | Mean squared marker effect; Proportion of effects near zero | Drastically lower than GBLUP estimates; >95% within ±0.01*σₐ | Scale S² is too small, over-penalizing all effects. |

| Under-shrinkage | Inflated variance of large effects; Predictive performance | Variance >> prior expectation; Severe overfitting on training data | ν too small (<4), heavy tails allow unrealistic large effects; S² too large. |

| Bi-modal Chain Behavior | Trace plot inspection; Heidelberger-Welch test | Sudden shifts in mean; Stationarity test failure | Prior-likelihood conflict, often from mismatched ν and S². |

Experimental Protocols for Diagnosis & Tuning

Protocol 3.1: Baseline Diagnostic MCMC Run

Objective: Establish convergence and mixing baselines for a given (ν, S²) set.

- Model Specification: Implement standard BayesA: y = 1μ + Xg + ε, with gⱼ ~ t(0, S², ν) and ε ~ N(0, σₑ²).

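- Initialization: Set hyperparameters to common defaults: ν=5 and S² = σₐ²(ν-2)/(νp), where p is the number of markers. With this choice each marker-specific variance, σⱼ² ~ Inv-χ²(ν, S²), has prior mean νS²/(ν-2) = σₐ²/p, so the p markers jointly account for σₐ² (assuming standardized genotypes).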

- MCMC Settings: Run 4 independent chains from dispersed starting points for 50,000 iterations each, discarding the first 25,000 as burn-in and thinning the remainder at an interval of 10 (2,500 retained draws per chain).

- Data Collection: Record all parameters. Compute R̂, ESS, and auto-correlation for μ, σₑ², σₐ², and a subset of gⱼ.

- Visualization: Generate trace, density, and autocorrelation plots.
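For readers who want to see what the diagnostics in this protocol compute, the following sketch implements the classic (non-split) Gelman-Rubin statistic and a crude autocorrelation-based ESS. It is illustrative only: packages such as coda or ArviZ use more refined estimators, and the function names here are hypothetical.

```python
import numpy as np

def gelman_rubin(chains):
    """Classic potential scale reduction factor.
    chains: array of shape (n_chains, n_draws) for one parameter."""
    chains = np.asarray(chains, dtype=float)
    n = chains.shape[1]
    W = chains.var(axis=1, ddof=1).mean()       # mean within-chain variance
    B = n * chains.mean(axis=1).var(ddof=1)     # between-chain variance
    var_plus = (n - 1) / n * W + B / n          # pooled variance estimate
    return float(np.sqrt(var_plus / W))

def autocorr(x, lag):
    """Sample autocorrelation of a single chain at a positive lag."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    return float(x[:-lag] @ x[lag:] / (x @ x))

def ess(x, max_lag=200):
    """Crude ESS: n / (1 + 2 * sum of autocorrelations), truncating the
    sum at the first non-positive autocorrelation."""
    n = len(x)
    total = 0.0
    for k in range(1, min(max_lag, n - 1)):
        rho = autocorr(x, k)
        if rho <= 0:
            break
        total += rho
    return n / (1 + 2 * total)
```

With the settings above, each parameter yields a (4, 2500) array of retained draws; `gelman_rubin` is applied to the whole array and `ess` to each chain.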

Protocol 3.2: Hyperparameter Grid Search for Optimal Shrinkage

Objective: Systematically evaluate (ν, S²) pairs to mitigate over/under-shrinkage.

- Grid Design: Define ν ∈ {3, 4, 5, 7, 10} and S² multipliers ∈ {0.1, 0.5, 1, 2, 5} × baseline scale.

- Validation Framework: Use a dedicated validation set or perform 5-fold cross-validation on the training data.

- Metric: The primary metric is predictive correlation or mean squared error (MSE) on validation data. The secondary metric is sampling efficiency, monitored as ESS per iteration.

- Execution: For each (ν, S²) pair, execute a shortened MCMC (e.g., 20,000 iterations) following Protocol 3.1 steps, compute validation metrics.

- Analysis: Identify the region of (ν, S²) space maximizing predictive performance while maintaining ESS > 200 for key parameters.
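A skeleton for the grid search might look like the sketch below. Two things are assumptions of the sketch rather than part of the protocol: the baseline scale is taken as S² = σₐ²(ν-2)/(νp), the value at which each marker variance has prior mean σₐ²/p, and the model fit is delegated to a user-supplied callable `fit_predict(X_train, y_train, X_test, nu, S2)`, a hypothetical interface that would wrap the BayesA sampler.

```python
import itertools
import numpy as np

def baseline_scale(sigma2_a, p, nu):
    """Scale giving each marker variance a prior mean of sigma2_a / p (assumed baseline)."""
    return sigma2_a * (nu - 2.0) / (nu * p)

def make_grid(sigma2_a, p, nus=(3, 4, 5, 7, 10), multipliers=(0.1, 0.5, 1, 2, 5)):
    """All (nu, S2) pairs: each multiplier applied to the nu-specific baseline scale."""
    return [(nu, mult * baseline_scale(sigma2_a, p, nu))
            for nu, mult in itertools.product(nus, multipliers)]

def cv_grid_search(X, y, grid, fit_predict, k=5, seed=0):
    """k-fold CV over the grid; returns the best (nu, S2, mean correlation) and all results."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    results = []
    for nu, S2 in grid:
        cors = []
        for test_idx in folds:
            train = np.ones(len(y), dtype=bool)
            train[test_idx] = False
            y_hat = fit_predict(X[train], y[train], X[test_idx], nu, S2)
            cors.append(np.corrcoef(y[test_idx], y_hat)[0, 1])
        results.append((nu, S2, float(np.mean(cors))))
    return max(results, key=lambda r: r[2]), results
```

Reusing the same fold assignment for every (ν, S²) pair keeps the comparison paired, so differences in mean correlation reflect the hyperparameters rather than the split.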

Protocol 3.3: Empirical Bayes Tuning of Scale Parameter

Objective: Dynamically estimate S² from data to improve prior compatibility.

- Method: Implement a Metropolis-within-Gibbs step or method of moments to update S².

- Prior for S²: Place a diffuse hyper-prior on S², e.g., S² ~ Inv-χ²(νₛ, ω), with νₛ=3, ω set to a plausible initial value.

- MCMC Adaptation: Within the Gibbs sampler, after sampling all gⱼ, propose a new S²' based on the current conditional likelihood and prior.

- Validation: Compare chain behavior and predictive performance against fixed-S² runs.
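One way to realize the Metropolis-within-Gibbs step is a random walk on log S², sketched below. The target combines the scaled Inv-χ²(ν, S²) density of the current marker variances σⱼ² with the Inv-χ²(νₛ, ω) hyper-prior. The default values of `omega` and `step`, and the function names, are illustrative assumptions.

```python
import math
import numpy as np

def log_scaled_inv_chi2(x, df, scale):
    """Log density of the scaled Inv-chi2(df, scale) distribution at x > 0."""
    x = np.asarray(x, dtype=float)
    return (0.5 * df * math.log(0.5 * df * scale) - math.lgamma(0.5 * df)
            - (1.0 + 0.5 * df) * np.log(x) - 0.5 * df * scale / x)

def update_S2(S2, sigma2_j, nu, nu_s=3.0, omega=0.01, step=0.3, rng=None):
    """One Metropolis step for S2 given the current marker variances sigma2_j."""
    rng = np.random.default_rng() if rng is None else rng

    def log_target(s2):
        # likelihood of the marker variances under S2, plus the hyper-prior on S2
        return (log_scaled_inv_chi2(sigma2_j, nu, s2).sum()
                + log_scaled_inv_chi2(s2, nu_s, omega))

    proposal = S2 * math.exp(step * rng.normal())   # random walk on log(S2)
    # log(proposal / S2) corrects for the asymmetric (log-normal) proposal
    log_accept = log_target(proposal) - log_target(S2) + math.log(proposal / S2)
    if rng.uniform() < math.exp(min(0.0, log_accept)):
        return proposal
    return S2
```

The step would be called once per Gibbs iteration after all σⱼ² have been refreshed; `step` can be tuned during burn-in toward a moderate acceptance rate.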

Visualizations

Diagram 1: BayesA Hyperparameter Tuning Workflow

Diagram 2: Impact of Hyperparameters on Prior Shape & Shrinkage

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools & Libraries for BayesA Diagnostics

| Item (Software/Package) | Function | Application in Protocol |

|---|---|---|

| Stan / cmdstanr | Probabilistic programming language with advanced HMC sampler. | Implements BayesA model; provides built-in diagnostics (R̂, ESS, treedepth). |

| R/coda or R/boa | R packages for MCMC output analysis. | Calculates Gelman-Rubin, Geweke, Heidelberger-Welch statistics; produces trace/autocorr plots. |

| Python/ArviZ | Python library for exploratory analysis of Bayesian models. | Visualizes posterior distributions, ESS, and R̂ across multiple chains. |

| Custom ESS/iteration script | User-written code to compute sampling efficiency. | Benchmarks mixing speed across hyperparameter grid (Protocol 3.2). |

| Cross-validation wrapper | Script to automate training/validation splits and prediction. | Executes predictive performance evaluation for grid search. |

| High-performance computing (HPC) cluster | Parallel computing environment. | Runs multiple MCMC chains and hyperparameter sets concurrently. |

1. Introduction and Thesis Context

Within the broader thesis on BayesA prior specification and parameter tuning research, a core challenge is the principled quantification of prior influence. In Bayesian hierarchical models like BayesA, which is used extensively in genomics for association mapping and in pharmacometrics for dose-response modeling, the choice of prior distributions for variance parameters (e.g., scale parameters of inverse-gamma or half-t distributions) can markedly influence posterior estimates of marker effects or pharmacokinetic parameters. This document provides application notes and protocols for systematic sensitivity analysis (SA) to test this influence, ensuring robust scientific conclusions in drug development.

2. Core Protocol: Iterative Prior Perturbation Framework

This protocol outlines a systematic workflow for sensitivity analysis in a BayesA-type model.

Protocol 2.1: Defining the Prior Perturbation Set

- Objective: To establish a range of plausible alternative priors for key hyperparameters.

- Methodology:

- Let the base prior for a key variance parameter τ be a weakly informative distribution (e.g., Half-t(3, 0, s)) as used in a standard BayesA implementation.

- Define a perturbation grid. For a scale parameter s, define alternative priors by scaling the base scale: s_alt = {0.1, 0.5, 2, 10} * s_base.

- For a shape parameter in an inverse-gamma prior (IG(α, β)), perturb both α and β to alter prior moments. See Table 1.

- Include a non-informative/reference prior (e.g., Jeffreys' prior p(τ) ∝ 1/τ) as a boundary case to assess shrinkage strength.

- Deliverable: A defined set of K prior models: {P_base, P_alt1, ..., P_alt(K-1)}.
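As an illustration of the scale perturbation, the sketch below builds the half-t part of such a set and evaluates the half-t log density so the priors can be compared on a common grid. The dictionary layout and the `P_scale_x…` identifiers are hypothetical; the density is the standard half-t(ν, 0, s) on x ≥ 0.

```python
import math
import numpy as np

def build_perturbation_set(s_base, multipliers=(0.1, 0.5, 2, 10), nu=3.0):
    """Base half-t prior plus one alternative per scale multiplier."""
    priors = [{"id": "P_base", "family": "half-t", "nu": nu, "scale": s_base}]
    for mult in multipliers:
        priors.append({"id": f"P_scale_x{mult}", "family": "half-t",
                       "nu": nu, "scale": mult * s_base})
    return priors

def half_t_logpdf(x, nu, scale):
    """Log density of the half-t(nu, 0, scale) distribution for x >= 0."""
    x = np.asarray(x, dtype=float)
    log_norm = (math.log(2.0) + math.lgamma((nu + 1) / 2) - math.lgamma(nu / 2)
                - 0.5 * math.log(nu * math.pi) - math.log(scale))
    return log_norm - (nu + 1) / 2 * np.log1p((x / scale) ** 2 / nu)
```

Plotting `np.exp(half_t_logpdf(x, p["nu"], p["scale"]))` for each entry shows how much prior mass each alternative places near zero relative to the base prior.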

Protocol 2.2: Computational Execution and Monitoring

- Objective: To obtain posterior estimates under each prior in the set.

- Methodology:

- For each prior model P_k, run the full MCMC sampling chain (e.g., using Stan, JAGS, or custom Gibbs sampler for BayesA) with identical data, likelihood, and other model components.

- Ensure convergence diagnostics (Gelman-Rubin R̂ < 1.05, effective sample size > 400) are met for each run independently.

- For key parameters of interest (θ) (e.g., top 10 SNP effects, or a pharmacodynamic EC50), extract the full posterior sample or summary statistics (mean, median, 95% credible interval) from each run.

- Deliverable: A matched set of posterior distributions for each θ under each prior P_k.

Protocol 2.3: Quantitative Sensitivity Metrics Calculation

- Objective: To compute standardized metrics quantifying prior influence.

- Methodology: For each parameter of interest θ, calculate:

- Posterior Mean Shift (PMS): |E[θ|D, P_base] - E[θ|D, P_alt]| / sd(θ|D, P_base). Standardizes the change in posterior means by the posterior standard deviation under the base prior.

- Credible Interval Overlap (CIO): The proportion of the 95% CI obtained under the base prior that overlaps with the 95% CI obtained under the alternative prior.

- Decision Reversal Flag: A binary indicator for whether the posterior probability of a clinically/scientifically relevant event (e.g., P(θ > 0) or P(θ > clinical threshold)) crosses a decisive threshold (e.g., 0.95) under P_base but not under P_alt.
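The three metrics can be computed directly from posterior draws, as in the sketch below. It assumes `base` and `alt` are one-dimensional arrays of draws for the same θ under P_base and P_alt, and that credible intervals are equal-tailed; the function names are hypothetical.

```python
import numpy as np

def posterior_mean_shift(base, alt):
    """|E[theta | P_base] - E[theta | P_alt]| / sd(theta | P_base)."""
    return abs(np.mean(base) - np.mean(alt)) / np.std(base, ddof=1)

def credible_interval_overlap(base, alt, level=0.95):
    """Fraction of the base equal-tailed CI that is covered by the alternative CI."""
    tail = 100 * (1 - level) / 2
    b_lo, b_hi = np.percentile(base, [tail, 100 - tail])
    a_lo, a_hi = np.percentile(alt, [tail, 100 - tail])
    overlap = max(0.0, min(b_hi, a_hi) - max(b_lo, a_lo))
    return overlap / (b_hi - b_lo)

def decision_reversal(base, alt, threshold=0.0, decisive=0.95):
    """True if P(theta > threshold) reaches `decisive` under P_base but not under P_alt."""
    p_base = np.mean(np.asarray(base) > threshold)
    p_alt = np.mean(np.asarray(alt) > threshold)
    return bool(p_base >= decisive and p_alt < decisive)
```

Applying these functions to every (θ, P_alt) pair produces the PMS, CIO, and decision-reversal columns of a sensitivity table.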

3. Data Presentation

Table 1: Example Prior Perturbation Grid for a BayesA Scale Parameter

| Prior ID | Distribution Family | Hyperparameter Values | Prior Mean (Variance) | Rationale |

|---|---|---|---|---|

| P_base | Half-t | ν=3, scale=1.0 | ≈1.10 (≈1.78) | Default weakly informative; heavy-tailed. |

| P_alt1 | Half-t | ν=3, scale=0.5 | ≈0.55 (≈0.45) | More concentrated near zero. |

| P_alt2 | Half-t | ν=3, scale=2.0 | ≈2.21 (≈7.14) | More diffuse. |

| P_alt3 | Inverse-Gamma | α=0.5, β=0.5 | ∞ (Undefined) | Common vague prior. |

| P_alt4 | Inverse-Gamma | α=5, β=5 | 1.25 (≈0.52) | Informative, favoring smaller variance. |

| P_ref | Improper | p(τ) ∝ 1/τ | - | Reference/Jeffreys' prior. |

Table 2: Sensitivity Analysis Results for Hypothetical Top Three SNP Effects

| SNP | Parameter (θ) | Base Posterior Mean (95% CI) | Prior ID | Alt. Post. Mean (95% CI) | PMS | CIO | Decision Reversal? |

|---|---|---|---|---|---|---|---|

| rs123 | Effect Size (β) | 1.50 (1.10, 1.95) | P_base | - | - | - | - |

| | | | P_alt4 | 1.25 (0.85, 1.70) | 0.45 | 0.72 | No (P>0.95 holds) |

| | | | P_ref | 1.55 (1.20, 2.05) | 0.09 | 0.88 | No |

| rs456 | Effect Size (β) | 0.80 (0.30, 1.30) | P_base | - | - | - | - |

| | | | P_alt4 | 0.55 (0.15, 0.98) | 0.45 | 0.65 | Yes (P>0.95 fails) |

| | | | P_ref | 0.85 (0.40, 1.45) | 0.09 | 0.92 | No |

| rs789 | Effect Size (β) | -0.20 (-0.60, 0.15) | P_base | - | - | - | - |

| | | | P_alt4 | -0.15 (-0.45, 0.10) | 0.11 | 0.95 | No (null retained) |

| | | | P_ref | -0.22 (-0.65, 0.18) | 0.04 | 0.98 | No |

4. Visualization

Diagram 1: Prior Sensitivity Analysis Workflow

Diagram 2: Prior Influence on Posterior Shrinkage

5. The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Sensitivity Analysis |

|---|---|

| Probabilistic Programming Framework (Stan/PyMC3) | Enables declarative model specification and robust Hamiltonian Monte Carlo (HMC) sampling for each prior model. |

| High-Performance Computing (HPC) Cluster or Cloud VMs | Facilitates parallel execution of multiple MCMC runs with different priors, drastically reducing wall-clock time. |

| Diagnostic Visualization Library (ArviZ, bayesplot) | Provides standardized functions for plotting posterior distributions, trace plots, and comparison intervals across prior runs. |

| Version-Controlled Model Script Repository (Git) | Essential for ensuring the exact model and data version is used across all prior perturbation runs, guaranteeing reproducibility. |

| Sensitivity Metric Calculation Script (Custom R/Python) | Automated scripts to compute PMS, CIO, and decision flags across the full parameter set from multiple MCMC outputs. |

Empirical Bayes and Cross-Validation Methods for Hyperparameter Tuning

Within the broader research on BayesA prior specification and parameter tuning, this document details protocols for two key hyperparameter tuning methodologies: Empirical Bayes (EB) and Cross-Validation (CV). The BayesA model, widely used in genomic prediction and pharmacogenomics, employs a scaled-t prior for marker effects. Its performance hinges on hyperparameters like degrees of freedom and scale, which are often unknown. This note provides application protocols for estimating these hyperparameters, thereby enhancing the robustness and predictive accuracy of models critical for drug target identification and personalized therapeutic development.

Core Methodological Protocols

Empirical Bayes (Type-II Maximum Likelihood) Protocol

Aim: To estimate hyperparameters by maximizing the marginal likelihood of the data.

Workflow:

- Define Hierarchical Model: Specify the complete BayesA model.

- Likelihood: \( y \mid \beta, \sigma_e^2 \sim N(X\beta, I\sigma_e^2) \)

- Prior for marker effects: \( \beta_j \mid \sigma_{\beta_j}^2 \sim N(0, \sigma_{\beta_j}^2) \)

- Hyperprior for effect variances: \( \sigma_{\beta_j}^2 \sim \chi^{-2}(\nu, S^2) \)

- Hyperparameters: \( \theta = (\nu, S^2, \sigma_e^2) \)