BayesDπ vs. BayesCπ for QTL Mapping: Which Model Wins When QTL Numbers Are Unknown?

Abstract

Accurate genetic mapping of quantitative trait loci (QTL) is critical for complex disease research and precision medicine, but the unknown number of causal variants poses a significant challenge. This article provides a comprehensive analysis of two powerful Bayesian variable selection models—BayesCπ and BayesDπ—designed specifically for this scenario. We cover their foundational principles, methodological implementations in genomic prediction and GWAS, practical strategies for troubleshooting and optimizing Markov Chain Monte Carlo (MCMC) performance, and a rigorous comparative validation of their accuracy and computational efficiency. Targeted at researchers and drug development professionals, this guide synthesizes current best practices to empower more robust discovery of genetic associations in biomedical datasets.

Beyond the Known: How BayesDπ and BayesCπ Tackle the Unknown QTL Problem

Quantitative Trait Loci (QTL) mapping and Genomic Prediction (GP) are foundational to modern genomic selection and association studies. A central but usually unobserved quantity is the true number of QTL (pγ) underlying a complex trait. This unknown parameter strongly influences the performance and interpretation of models such as BayesCπ and BayesDπ, which explicitly model the mixing proportion π that determines how many markers have non-zero effects. A misspecified or poorly estimated pγ leads to biased effect estimates, reduced prediction accuracy, and inflated Type I/II error rates in Genome-Wide Association Studies (GWAS).

Table 1: Impact of Misspecified QTL Number on Genomic Prediction Accuracy (Simulation Studies)

| True QTL Number (pγ) | Assumed/Estimated π in Model | Prediction Accuracy (r) | Bias in GEBV | Reference Key |
|---|---|---|---|---|
| 50 (Low) | π = 0.99 (Too High) | 0.68 | High Overfitting | Habier et al., 2011 |
| 50 (Low) | π = 0.01 (Too Low) | 0.71 | High Shrinkage | |
| 50 (Low) | π Estimated (BayesCπ) | 0.79 | Minimal | |
| 500 (High) | π = 0.99 (Too Low) | 0.65 | High Shrinkage | Cheng et al., 2015 |
| 500 (High) | π = 0.01 (Too High) | 0.62 | Severe Overfitting | |
| 500 (High) | π Estimated (BayesDπ) | 0.75 | Minimal | |

Table 2: Consequences for GWAS Power and Error Rates

| Scenario | QTL Detection Power (%) | False Discovery Rate (FDR) | Notes |
|---|---|---|---|
| Model assumes too few QTL (proportion of non-zero markers too low) | Low (e.g., 40%) | Low (e.g., 5%) | Severe shrinkage; true QTL missed. |
| Model assumes too many QTL (proportion of non-zero markers too high) | High (e.g., 85%) | Very High (e.g., 30%) | Noise markers included; spurious associations. |
| Model estimates π (BayesCπ/Dπ) | Balanced (e.g., 75%) | Controlled (e.g., 10%) | Adaptive balance between power and FDR. |

Core Protocols for Investigating Unknown QTL Number with BayesCπ/BayesDπ

Protocol 3.1: Simulation of Genomic Data with Known Ground Truth QTL

Purpose: To create a controlled environment for testing BayesCπ/Dπ performance under varying true QTL numbers.

Materials: See The Scientist's Toolkit (Table 3).

Procedure:

- Generate Genotype Matrix (X): Simulate n individuals and m biallelic markers (e.g., 1000 individuals, 50K SNPs) using a coalescent simulator (e.g., ms), so that realistic linkage disequilibrium (LD) patterns are reproduced.

- Define True QTL Set: Randomly select pγ markers (e.g., 50, 500) from the m total as true QTL.

- Assign QTL Effects: Draw the true additive effects (β) of the pγ QTL from N(0, σ²_g/pγ) and set the effects of all other markers to zero. Across all m markers this is the mixture β ~ (1-π)N(0, σ²_g/pγ) + πδ0, where δ0 is a point mass at zero and the true π = 1 - pγ/m is the proportion of zero-effect markers. With standardized genotypes, the QTL then jointly explain approximately σ²_g.

- Calculate Genetic Values: Compute the true genetic values g = Xγβγ, where Xγ is the genotype matrix for the QTL only and βγ holds their effects.

- Simulate Phenotypes: y = g + e, where e ~ N(0, σ²e). Choose σ²e = σ²g(1 - h²)/h² so that the heritability h² = σ²g/(σ²g+σ²e) equals the desired level (e.g., h² = 0.3 gives σ²e ≈ 2.33 σ²g).

- Output: Genotype file, phenotype file, and a key file listing true QTL positions and effects.
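The Python sketch below roughly illustrates Protocol 3.1 by simulating a small dataset with a known set of QTL. It rests on simplifying assumptions that are not part of the protocol:

- Markers are drawn independently from Binomial(2, p_j), using hypothetical allele frequencies in [0.05, 0.5]. The data therefore contain no LD, unlike data from ms.
- Genotypes are standardized.
- σ²e is set from the realized genetic variance, so that the realized h² matches the target.

The function name simulate_dataset and its defaults are illustrative only.

```python
import math
import random


def simulate_dataset(n=200, m=1000, n_qtl=20, h2=0.3, sigma2_g=1.0, seed=1):
    """Simulate standardized genotypes, QTL effects and phenotypes.

    Simplifying assumption: markers are independent Binomial(2, p_j) draws,
    so there is no linkage disequilibrium (a coalescent simulator such as ms
    would be needed for realistic LD).
    """
    rng = random.Random(seed)
    freqs = [rng.uniform(0.05, 0.5) for _ in range(m)]  # hypothetical allele frequencies
    # Allele counts 0/1/2 for each individual (rows) and marker (columns).
    geno = [[(rng.random() < p) + (rng.random() < p) for p in freqs] for _ in range(n)]

    # Standardize each marker column to mean 0 and variance 1.
    Z = [[0.0] * m for _ in range(n)]
    for j in range(m):
        col = [geno[i][j] for i in range(n)]
        mean = sum(col) / n
        sd = math.sqrt(sum((c - mean) ** 2 for c in col) / n) or 1.0  # guard monomorphic
        for i in range(n):
            Z[i][j] = (col[i] - mean) / sd

    qtl = sorted(rng.sample(range(m), n_qtl))       # true QTL positions
    beta = [0.0] * m                                # zero for non-QTL markers
    for j in qtl:
        beta[j] = rng.gauss(0.0, math.sqrt(sigma2_g / n_qtl))

    g = [sum(Z[i][j] * beta[j] for j in qtl) for i in range(n)]  # true genetic values
    mean_g = sum(g) / n
    var_g = sum((gi - mean_g) ** 2 for gi in g) / n
    sigma2_e = var_g * (1.0 - h2) / h2              # var_g / (var_g + sigma2_e) == h2
    y = [gi + rng.gauss(0.0, math.sqrt(sigma2_e)) for gi in g]
    true_pi = 1.0 - n_qtl / m                       # proportion of zero-effect markers
    return Z, y, qtl, beta, true_pi
```

The returned qtl list and beta vector play the role of the key file. They are what PIPs and estimated effects would be compared against.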

Protocol 3.2: Implementing BayesCπ/BayesDπ for Genomic Prediction

Purpose: To perform genomic prediction while estimating the proportion of non-zero markers.

Materials: High-performance computing cluster, Bayesian analysis software (e.g., JWAS, BGLR, GCTB).

Procedure:

- Model Specification:

- BayesCπ: y = 1μ + Xb + e. Prior for marker effect: bi | π, σ²b ~ (1-π)N(0, σ²b) + πδ0. Prior for π ~ Uniform(0,1) or Beta(α,β).

- BayesDπ: Extension in which each non-zero marker has its own variance σ²b_i, drawn from a scaled inverse-χ² prior whose scale parameter is itself estimated. This allows for a distribution of effect sizes.

- Parameter Initialization: Set initial values for μ, b, σ²e, σ²b, and π.

- Gibbs Sampling: Run a Markov Chain Monte Carlo (MCMC) chain for ≥ 50,000 iterations, discarding the first 10,000 as burn-in.

- Sample μ from its full conditional distribution.

- For each marker i, sample the indicator variable δi (1 if bi is non-zero) from a Bernoulli distribution computed with bi integrated out. Then, if δi = 1, sample bi from its normal full conditional (often using a residual update); otherwise set bi = 0.

- Sample π from Beta(m - #non-zero + α, #non-zero + β). With the prior above, π is the proportion of zero-effect markers.

- Sample variances (σ²e, σ²b) from their scaled inverse-χ² full conditionals.

- Posterior Inference: Calculate the posterior mean of b_i as the average of sampled effects post-burn-in. The posterior mean of π estimates the proportion of zero-effect markers, so 1 - π estimates the proportion of non-zero markers.

- Prediction: Predict genomic values for validation set as ŷval = 1μ + Xval * b_postmean.
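The Gibbs steps of Protocol 3.2 can be sketched as a minimal, single-chain BayesCπ sampler in pure Python. This is an illustrative sketch, not the implementation used by JWAS or any other package. It assumes the following:

- Genotypes are centred, ideally standardized.
- μ has a flat prior.
- π ~ Beta(a, b), with π defined as the zero-effect proportion, as in the prior above.
- Both variances have scaled inverse-χ² priors with hypothetical defaults (ν = 4, S²b = 0.05, S²e = 0.5).

Each δi is drawn with bi integrated out, and bi is then drawn given δi. This is the usual way to sample the pair jointly.

```python
import math
import random


def _scaled_inv_chi2(rng, df, scale):
    """One draw from Scale-Inv-chi^2(df, scale), i.e. df * scale / chi^2_df."""
    return df * scale / rng.gammavariate(df / 2.0, 2.0)


def bayes_c_pi(X, y, n_iter=3000, burn_in=1000, a=1.0, b=1.0,
               nu=4.0, s2_b=0.05, s2_e=0.5, seed=0):
    """Minimal single-chain BayesCpi Gibbs sampler (illustrative sketch).

    Prior: b_j ~ (1 - pi) N(0, var_b) + pi * delta_0, so pi is the proportion
    of ZERO-effect markers; pi ~ Beta(a, b); var_b ~ Scale-Inv-chi^2(nu, s2_b);
    var_e ~ Scale-Inv-chi^2(nu, s2_e); flat prior on mu.
    X: n rows of centred genotypes; y: n phenotypes.
    Returns (posterior mean effects, PIPs, post-burn-in pi draws).
    """
    rng = random.Random(seed)
    n, m = len(y), len(X[0])
    cols = [[X[i][j] for i in range(n)] for j in range(m)]
    xtx = [sum(v * v for v in c) for c in cols]

    mu, beta, delta = sum(y) / n, [0.0] * m, [0] * m
    pi, var_b, var_e = 0.5, s2_b, s2_e
    resid = [yi - mu for yi in y]                 # y - 1*mu - X*beta
    beta_sum, delta_sum, pi_draws = [0.0] * m, [0] * m, []

    for it in range(n_iter):
        # mu | ELSE ~ N(mean(y - X beta), var_e / n)
        mu_new = rng.gauss(mu + sum(resid) / n, math.sqrt(var_e / n))
        resid = [r - (mu_new - mu) for r in resid]
        mu = mu_new

        log_prior_odds = math.log((1.0 - pi) / pi)   # inclusion vs exclusion
        for j in range(m):
            c, old = cols[j], beta[j]
            rhs = sum(ci * ri for ci, ri in zip(c, resid)) + xtx[j] * old  # x_j' r_j
            lhs = xtx[j] + var_e / var_b
            # log Bayes factor for delta_j = 1 vs 0 with b_j integrated out
            log_bf = 0.5 * (math.log(var_e / (var_b * lhs)) + rhs * rhs / (var_e * lhs))
            z = log_prior_odds + log_bf
            p_in = 0.0 if z < -700 else 1.0 / (1.0 + math.exp(-z))
            delta[j] = 1 if rng.random() < p_in else 0
            new = rng.gauss(rhs / lhs, math.sqrt(var_e / lhs)) if delta[j] else 0.0
            if new != old:                           # keep residuals in sync
                resid = [r - ci * (new - old) for r, ci in zip(resid, c)]
            beta[j] = new

        k = sum(delta)
        pi = rng.betavariate(a + m - k, b + k)       # pi = Pr(zero effect)
        pi = min(max(pi, 1e-12), 1.0 - 1e-12)        # avoid log(0) next sweep
        ss_b = sum(bj * bj for bj in beta)
        var_b = _scaled_inv_chi2(rng, nu + k, (nu * s2_b + ss_b) / (nu + k))
        sse = sum(r * r for r in resid)
        var_e = _scaled_inv_chi2(rng, nu + n, (nu * s2_e + sse) / (nu + n))

        if it >= burn_in:
            pi_draws.append(pi)
            for j in range(m):
                beta_sum[j] += beta[j]
                delta_sum[j] += delta[j]

    kept = n_iter - burn_in
    return [s / kept for s in beta_sum], [d / kept for d in delta_sum], pi_draws
```

This pure-Python loop is only meant to make the update order and the conditional distributions concrete. Data with thousands of individuals and tens of thousands of SNPs would need a vectorized or compiled implementation.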

Protocol 3.3: GWAS via Posterior Inclusion Probabilities (PIPs) from BayesCπ/Dπ

Purpose: To identify significant marker-trait associations while accounting for unknown QTL number. Procedure:

- Run BayesCπ/Dπ: Follow Protocol 3.2 on the full discovery/training dataset.

- Calculate PIP: For each marker i, compute the Posterior Inclusion Probability (PIP) as the proportion of MCMC samples where its effect was non-zero (δ_i=1).

- Set Significance Threshold: Use a permutation procedure (see Protocol 3.4) or a heuristic threshold (e.g., PIP > 0.8 or top 0.1% of markers).

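- Identify QTL: Declare markers exceeding the threshold as significant associations. The list length gives a rough estimate of the number of QTL. In regions of strong LD, one QTL's signal may be split across several correlated markers, each with only a moderate PIP.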
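Once the inclusion indicators have been stored, the PIP and thresholding steps of Protocol 3.3 reduce to a few lines. The sketch below assumes delta_samples is a list of post-burn-in iterations, each holding one 0/1 indicator per marker. The function names are hypothetical.

```python
def posterior_inclusion_probabilities(delta_samples):
    """PIP_j = fraction of stored post-burn-in iterations with delta_j = 1."""
    n_kept = len(delta_samples)
    m = len(delta_samples[0])
    return [sum(s[j] for s in delta_samples) / n_kept for j in range(m)]


def declare_qtl(pips, threshold=0.8):
    """Indices of markers whose PIP exceeds the (heuristic) threshold."""
    return [j for j, p in enumerate(pips) if p > threshold]


def top_fraction(pips, fraction=0.001):
    """Indices of the top `fraction` of markers ranked by PIP (at least one)."""
    k = max(1, int(round(fraction * len(pips))))
    return sorted(range(len(pips)), key=lambda j: pips[j], reverse=True)[:k]
```

For example, five stored iterations [[1,0,1],[1,0,0],[1,1,1],[1,0,1],[1,0,1]] give PIPs [1.0, 0.2, 0.8]. Only marker 0 passes the strict PIP > 0.8 rule.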

Protocol 3.4: Permutation Test for GWAS Threshold Calibration

Purpose: To establish a genome-wide significance threshold for PIPs that controls the Family-Wise Error Rate (FWER). Procedure:

- Run Original Analysis: Perform BayesCπ/Dπ on the real data, obtain PIP_obs for all markers.

- Permutation Loop (100-1000 times): a. Randomly shuffle phenotype vector (y) relative to genotype matrix (X), breaking any true association. b. Run a shortened MCMC chain (e.g., 10,000 iterations) on the permuted dataset. c. Record the maximum PIP value obtained in this permuted run (PIPmaxperm).

- Construct Null Distribution: Create a vector of all recorded PIPmaxperm values.

- Determine Threshold: The 95th percentile of the null distribution serves as the genome-wide FWER=0.05 significance threshold for PIP_obs.
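Protocol 3.4 can be sketched as follows. run_sampler is a hypothetical user-supplied function that stands in for a shortened BayesCπ/Dπ run and returns one PIP per marker. The threshold is the nearest-rank empirical (1 - α) quantile of the maximum PIPs collected from the permuted datasets.

```python
import math
import random


def permutation_pip_threshold(X, y, run_sampler, n_perm=100, alpha=0.05, seed=0):
    """Genome-wide PIP threshold controlling the FWER at level alpha.

    run_sampler(X, y_perm) is a hypothetical user-supplied callable that runs a
    shortened BayesCpi/Dpi chain and returns one PIP per marker.
    """
    rng = random.Random(seed)
    max_pips = []
    for _ in range(n_perm):
        y_perm = list(y)
        rng.shuffle(y_perm)                  # break genotype-phenotype association
        max_pips.append(max(run_sampler(X, y_perm)))
    max_pips.sort()
    # Nearest-rank empirical (1 - alpha) quantile of the null max-PIP distribution.
    rank = math.ceil((1.0 - alpha) * len(max_pips))
    return max_pips[rank - 1]
```

Using the genome-wide maximum in each permutation, rather than every marker's PIP, is what makes the threshold control the family-wise error rate.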

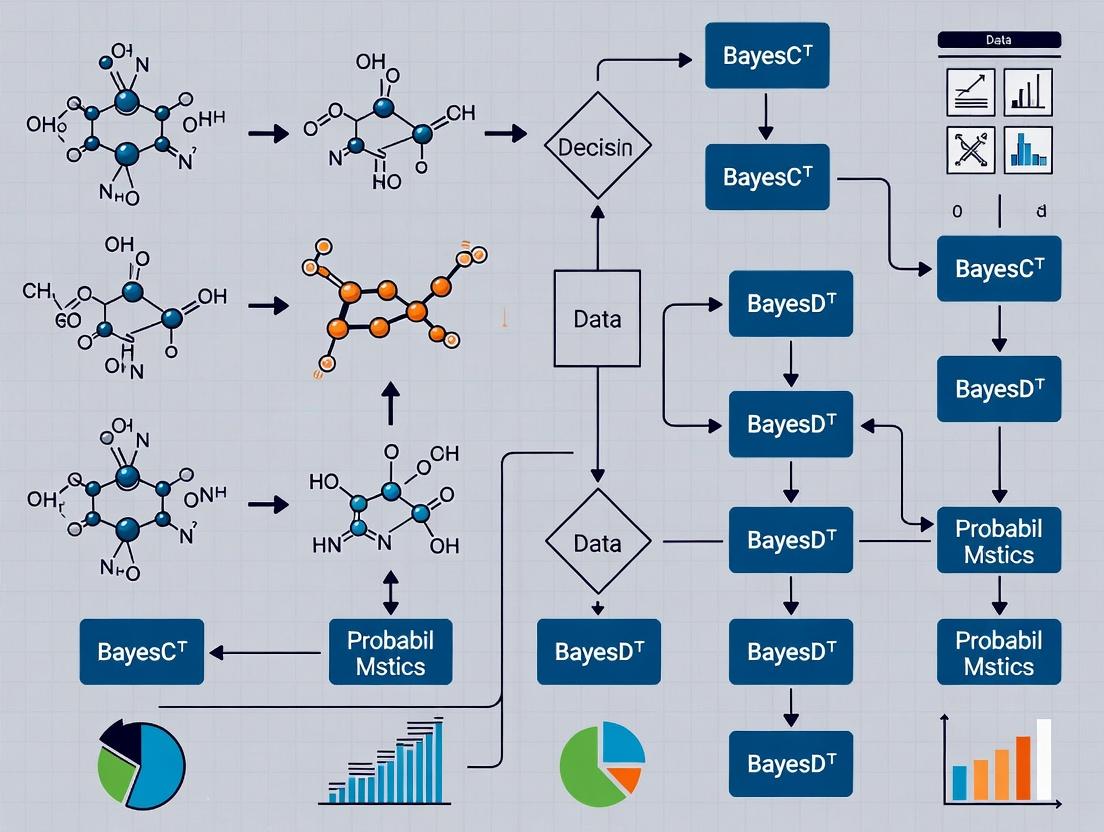

Visualizations

BayesCπ/Dπ Analysis & GWAS Workflow

BayesCπ Prior Structure Graph

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for QTL Number Research

| Item | Function & Relevance to BayesCπ/Dπ | Example/Note |
|---|---|---|
| Genomic Simulators | Generates synthetic genotype/phenotype data with known QTL architecture for method validation. | ms (coalescent), AlphaSimR (flexible, R-based), QTLSim. |
| High-Performance Computing (HPC) Cluster | Enables running long MCMC chains for BayesCπ/Dπ (tens of thousands of iterations) on large datasets (thousands of individuals, hundreds of thousands of SNPs). | Linux cluster with SLURM scheduler. Essential for practical use. |
| Bayesian Analysis Software | Implements Gibbs samplers for BayesCπ and related models; BayesDπ may require custom or modified code. | JWAS (Julia), BGLR (R), GCTB (C++), GS3 (Fortran). |
| Phenotyping Platforms | Accurate, high-throughput phenotyping is critical for defining the trait y in real-world analysis. | High-resolution imagers, spectrometers, automated clinical measurement devices. |
| Genotyping/Sequencing Arrays | Provides the high-density marker matrix X. Density affects ability to resolve QTL. | SNP chips (e.g., Illumina Infinium), whole-genome sequencing. |
| Statistical & Plotting Libraries | For post-MCMC analysis, calculating accuracy, PIPs, and creating publication-quality figures. | R with coda, ggplot2, tidyverse; Python with arviz, matplotlib. |
| Reference Genomes & Annotations | For interpreting GWAS results: mapping significant markers to genes and pathways. | Ensembl, NCBI, species-specific databases (e.g., Animal QTLdb). |

This document details the application and protocols for Bayesian variable selection methods in quantitative trait loci (QTL) mapping. The methodological progression from BayesA and BayesB to BayesCπ and BayesDπ represents a core evolution in handling the critical assumption of an unknown QTL number within genomic prediction and genome-wide association studies (GWAS). Both BayesCπ and BayesDπ use a mixture prior with a point mass at zero and a mixing proportion (π). Unlike in BayesB, where the user fixes π, it is treated as unknown and estimated from the data. The two models differ in how the non-zero effects are modeled: BayesCπ uses a common variance, BayesDπ marker-specific variances. This allows probabilistic variable selection. In this section π is parameterized as the inclusion probability, i.e., the prior probability that a marker has a non-zero effect; Habier et al., 2011 use the complementary convention, with π the probability of a zero effect. These models are widely used in animal breeding, plant genetics, and pharmaceutical biomarker discovery for polygenic diseases.

Core Methodological Comparison & Quantitative Data

Table 1: Evolution of Key Bayesian Regression Methods for Genomic Selection

| Method | Prior on Marker Effects (β) | Variance Assumption | Key Hyperparameter (π) | Handles Unknown QTL Number? | Primary Application |
|---|---|---|---|---|---|
| BayesA | t-distribution (or scaled normal) | Marker-specific (σ²βᵢ) | No | No | Initial models for large-effect QTL. |
| BayesB | Mixture: Spike (0) + Slab (t-dist) | Marker-specific if in slab | Fixed (user-defined) | Partial (fixed proportion) | Sparse architectures; some variable selection. |
| BayesCπ | Mixture: Spike (0) + Slab (normal) | Common variance (σ²β) for slab | Estimated (from data) | Yes (main innovation) | Standard for variable selection with unknown QTL number. |
| BayesDπ | Mixture: Spike (0) + Slab (normal with marker-specific variance; marginally t) | Marker-specific variances sharing a common scale parameter that is itself estimated | Estimated (from data) | Yes (more flexible) | Accounts for potential heterogeneity in genetic architecture. |

Table 2: Typical Hyperparameter Specifications and MCMC Output for BayesCπ

| Parameter | Prior Distribution | Typical Specification | Interpretation in Output |
|---|---|---|---|
| π (Inclusion Probability) | Beta(α, β) | Beta(1,1) (Uniform) | Posterior mean estimates proportion of non-zero markers. |
| σ²β (Effect Variance) | Scaled-Inverse-χ² | Scaled-Inverse-χ²(ν, S²) | Scale of genetic effects for selected markers. |
| σ²e (Residual Variance) | Scaled-Inverse-χ² | Scaled-Inverse-χ²(ν, S²) | Model residual/unexplained variance. |
| δᵢ (Inclusion Indicator) | Bernoulli(π) | Sampled each MCMC iteration | Indicator = 1: marker included; 0: excluded. |

Experimental Protocols

Protocol 1: Implementation of BayesCπ for QTL Mapping

Objective: To identify markers associated with a continuous trait (e.g., disease severity, yield, drug response) using variable selection.

Materials: Genotypic matrix (X, n x m), Phenotypic vector (y, n x 1), High-performance computing cluster.

Procedure:

- Data Standardization: Center phenotypes (y) to mean zero. Center the genotype matrix (X) so that each marker column has mean zero.

- Prior Specification:

- Set prior for π: π ~ Beta(α=1, β=1).

- Set prior for the marker-effect variance: σ²β ~ Scaled-Inverse-χ²(ν=-2, S²=0), equivalent to a flat prior on σ²β.

- Set prior for residual variance: σ²e ~ Scaled-Inverse-χ²(ν=-2, S²=0).

- Initialize all marker inclusion indicators (δᵢ) to 1.

- Gibbs Sampling (MCMC) Setup:

- Set chain length (e.g., 50,000 iterations), burn-in period (e.g., 10,000), and thinning interval (e.g., store every 10th sample).

- Core Gibbs Sampling Loop (per iteration): a. Update markers: for each marker j, first sample δj from a Bernoulli distribution. Its probability is based on the Bayes Factor comparing inclusion vs. exclusion, computed with βj integrated out. Then, if δj=1, sample βj from its conditional posterior (normal distribution); if δj=0, set βj=0. b. Sample π: π | δ ~ Beta(1 + ∑δᵢ, 1 + m - ∑δᵢ). c. Sample σ²β: from Scaled-Inverse-χ² distribution conditional on current β and δ. d. Sample σ²e: from Scaled-Inverse-χ² distribution conditional on y, X, and β.

- Post-Analysis:

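- Posterior Inclusion Probability (PIP): For each marker j, calculate PIP_j = (Σ_t δj^(t)) / T, the fraction of the T stored iterations in which δj = 1. Markers with PIP > 0.8-0.9 are considered significant QTL.

- Genetic Variance Explained: At each stored iteration, compute the variance of the genetic values Xβ from the sampled β, then summarize the posterior of this quantity. With standardized genotypes, ∑δᵢ × σ²β is an approximation.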
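The Bayes factor in the core Gibbs loop has a closed form. With βj ~ N(0, σ²β) in the slab, the corrected phenotypes rj are marginally normal under both hypotheses, which gives log BF = ½[log(σ²e/(σ²β·C)) + (xj'rj)²/(σ²e·C)], where C = xj'xj + σ²e/σ²β.

The sketch below assumes centred genotypes and uses this protocol's convention, in which π is the prior inclusion probability. The function name is hypothetical.

```python
import math


def inclusion_probability(x_j, r_j, var_b, var_e, pi):
    """P(delta_j = 1 | ELSE) for one marker, with beta_j integrated out.

    x_j: centred genotype column of marker j.
    r_j: phenotypes corrected for every effect except marker j.
    pi:  prior INCLUSION probability (convention of this protocol).
    """
    xtx = sum(v * v for v in x_j)
    xtr = sum(v * r for v, r in zip(x_j, r_j))
    c = xtx + var_e / var_b
    # log BF = log N(r_j; 0, var_e*I + var_b*x x') - log N(r_j; 0, var_e*I)
    log_bf = 0.5 * (math.log(var_e / (var_b * c)) + xtr * xtr / (var_e * c))
    log_odds = math.log(pi / (1.0 - pi)) + log_bf
    # Numerically stable logistic transform of the posterior log-odds.
    if log_odds >= 0.0:
        return 1.0 / (1.0 + math.exp(-log_odds))
    e = math.exp(log_odds)
    return e / (1.0 + e)
```

With no signal (rj = 0), the Bayes factor falls below 1 because including the marker spreads prior mass over effects the data do not support. This Occam-type penalty lets the model exclude markers without a hard threshold.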

Protocol 2: Cross-Validation for Predictive Ability Assessment

Objective: To evaluate the genomic prediction accuracy of the BayesCπ model.

- Data Partitioning: Randomly split data into k folds (e.g., 5-fold).

- Iterative Training/Testing: For each fold, use the other k-1 folds as the training set to run Protocol 1 (BayesCπ). Use the estimated marker effects (posterior mean) to predict phenotypes in the held-out test fold.

- Accuracy Calculation: Correlate predicted genomic breeding values (GEBVs) with observed phenotypes in the test set. Repeat across all folds and average the correlation coefficient.

- Comparison: Compare accuracy to BayesA, BayesB, and GBLUP benchmarks.
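The partitioning and accuracy steps of Protocol 2 can be sketched as follows. fit is a hypothetical callable that trains the model (e.g., BayesCπ) on the training folds and returns posterior-mean marker effects. Because the Pearson correlation is unaffected by adding a constant, the intercept is omitted from the GEBVs.

```python
import random


def pearson(a, b):
    """Pearson correlation between two equal-length lists."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    sab = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    saa = sum((x - ma) ** 2 for x in a)
    sbb = sum((y - mb) ** 2 for y in b)
    return sab / (saa * sbb) ** 0.5


def kfold_accuracy(X, y, fit, k=5, seed=0):
    """Mean over k folds of corr(GEBV, observed y) in the held-out fold.

    fit(X_train, y_train) is a hypothetical callable returning posterior-mean
    marker effects (e.g., from a BayesCpi run). The intercept is omitted
    because a correlation is unaffected by adding a constant.
    """
    idx = list(range(len(y)))
    random.Random(seed).shuffle(idx)
    folds = [idx[f::k] for f in range(k)]
    accs = []
    for test in folds:
        held_out = set(test)
        train = [i for i in idx if i not in held_out]
        beta = fit([X[i] for i in train], [y[i] for i in train])
        gebv = [sum(x * b for x, b in zip(X[i], beta)) for i in test]
        accs.append(pearson(gebv, [y[i] for i in test]))
    return sum(accs) / k
```

The same function can be reused for the BayesA, BayesB, and GBLUP benchmarks by passing a different fit callable, so that all methods see identical folds.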

Visualizations

Title: Evolution of Bayesian Variable Selection Methods

Title: BayesCπ Gibbs Sampling Workflow for QTL Mapping

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item/Resource | Function/Description | Application Note |
|---|---|---|
| Genotyping Array | High-density SNP chip or whole-genome sequencing data. | Provides the genotype matrix (X). Quality control (MAF, HWE, call rate) is critical. |
| Phenotypic Data | Precisely measured continuous trait of interest. | Must be adjusted for fixed effects (e.g., age, batch, population structure) prior to analysis. |
| BGLR R Package | Implements Bayesian regression models, including Bayesian ridge regression (BRR) and BayesC, which corresponds to BayesCπ when the inclusion probability is estimated. | BGLR offers user-friendly implementation with adjustable priors. Suitable for executing Protocol 1. |
| High-Performance Computing (HPC) Cluster | Enables parallel MCMC chains and analysis of large datasets. | Gibbs sampling is computationally intensive (10⁵ markers × 10⁴ iterations). |
| Python (PyMC3) / Stan | Probabilistic programming frameworks for custom model building. | Useful for implementing novel variations like BayesDπ or incorporating complex priors. Stan cannot sample discrete inclusion indicators, so they must be marginalized out. |
| Posterior Inclusion Probability (PIP) | Primary output statistic for variable selection. | PIP > 0.95 indicates strong evidence for a QTL. Unlike single-marker p-values from frequentist GWAS, PIPs are computed with all markers fitted jointly. |

Within the broader thesis investigating Bayesian mixture models for genomic selection with an unknown number of quantitative trait loci (QTL), BayesCπ and BayesDπ represent pivotal methodologies. This document focuses on BayesCπ, a model designed to address variable (e.g., SNP) inclusion uncertainty. It does so by treating the mixing proportion π, here the proportion of markers with zero effect, as an unknown with its own prior distribution. In contrast to fixed-π models, this lets the data inform the proportion of markers with non-zero effects (1 - π), which is critical when the true number of QTL is unknown.

Core Model Specification

BayesCπ assumes that each marker effect, βj, is drawn from a mixture distribution. A proportion, π, of markers have zero effect, and a proportion, (1-π), have effects drawn from a univariate normal distribution.

Model Equation:

y = 1μ + Xβ + e

Where:

- y is an n×1 vector of phenotypic observations.

- μ is the overall mean.

- X is an n×p design matrix of SNP genotypes (centered).

- β is a p×1 vector of random SNP effects.

- e is an n×1 vector of residual errors, e ~ N(0, Iσ²_e).

Prior Distributions for BayesCπ:

- βj | π, σ²β ~ (1-π)N(0, σ²β) + πδ0, where δ0 is a point mass at zero.

- π ~ Uniform(0, 1) or Beta(α, β).

- σ²β ~ Scale-Inv-χ²(νβ, S²β).

- σ²e ~ Scale-Inv-χ²(νe, S²e).

Table 1: Comparative Overview of Bayesian Mixture Models for Genomic Selection

| Model Feature | BayesCπ | BayesDπ | BayesB (Fixed π) |
|---|---|---|---|
| Mixture Distribution | Two-component: Spike at 0 + Normal Slab | Two-component: Spike at 0 + t-distribution Slab | Two-component: Spike at 0 + Normal Slab with marker-specific variances (marginally t) |
| Variance Prior | Common variance for all non-zero effects | Marker-specific variances scaled by a global parameter | Marker-specific variances |
| Inclusion Probability (π) | Unknown, estimated from data (prior: Beta/Uniform) | Unknown, estimated from data (prior: Beta/Uniform) | Fixed by the user |
| Key Assumption | All non-zero effects share the same variance | Non-zero effects have heavier tails (t-dist) | Each SNP has its own variance |
| Computational Demand | Moderate | Higher than BayesCπ | High |
| Primary Use Case | Unknown QTL number, many SNPs with small effects | Unknown QTL number, potential for large-effect outliers | Assumption of many non-effect SNPs |

Table 2: Typical Hyperparameter Settings for BayesCπ in Genomic Prediction Studies

| Parameter | Common Prior/Setting | Justification/Role |
|---|---|---|
| π | π ~ Beta(α=1, β=1) (Uniform) | Uninformative prior, letting data drive inclusion rate. |
| σ²β | Scale-Inv-χ²(νβ=4, S²β=0.01) | Weakly informative prior on the variance of non-zero SNP effects. |
| σ²e | Scale-Inv-χ²(νe=4, S²e=1.0) | Weakly informative prior on residual variance. Often derived from phenotype. |
| Chain Length | 30,000 - 100,000 iterations | Allows time for convergence and adequate sampling of the posterior distribution; convergence should still be checked. |
| Burn-in | 5,000 - 20,000 iterations | Discards initial samples before the Markov Chain reaches stationarity. |
| Thinning | Every 10th - 50th sample | Reduces autocorrelation between stored samples. |

Experimental Protocol for Implementing BayesCπ

Protocol 1: Genomic Prediction Pipeline Using BayesCπ

Objective: To perform genomic prediction for a complex trait using high-density SNP data and the BayesCπ model.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Data Preparation & Quality Control (QC):

a. Genotypic Data: Load genotype_matrix (n individuals × p SNPs). Apply QC filters: call rate >95%, minor allele frequency (MAF) >1%, remove duplicates. Code genotypes as 0, 1, 2 copies of the alternate allele (homozygote, heterozygote, alternate homozygote), then center each SNP column.

b. Phenotypic Data: Load phenotype_vector (n × 1). Adjust for fixed effects (e.g., year, herd) if necessary. Standardize to mean = 0, variance = 1.

c. Data Partitioning: Split data into training_set (e.g., 80%) and validation_set (20%). Keep all members of a family in the same set, so that families are not split across sets.

- Model Setup & Gibbs Sampling:

a. Initialize Parameters: Set μ = mean(y_train), β = zeros(p), π = 0.5, σ²e = var(y_train) × 0.8, σ²β = var(y_train) × 0.2 / p. Create an indicator vector δ, where δj = 1 if SNP j is in the model. As in the prior above, π is the proportion of SNPs with zero effect.

b. Define the full conditional distributions for the Gibbs sampler:
* Sample μ from N( Σ(yi - Xiβ)/n , σ²e/n ).
* For each SNP j, form the corrected phenotypes rj = y - 1μ - Xβ + Xjβj (current residuals with SNP j's own effect added back) and set Cj = Xj'Xj + σ²e/σ²β.
* Sample δj with βj integrated out: δj ~ Bernoulli( (1-π)·BFj / [ (1-π)·BFj + π ] ), where the Bayes factor for inclusion satisfies log BFj = ½[ log(σ²e/(σ²β·Cj)) + (Xj'rj)²/(σ²e·Cj) ].
* If δj = 1: βj ~ N( Xj'rj / Cj , σ²e / Cj ). If δj = 0: βj = 0. Then update the residuals r = y - 1μ - Xβ.
* Sample π from Beta( p - Σδj + a, Σδj + b ), where a, b are the Beta hyperparameters.
* Sample σ²β from Scale-Inv-χ²( νβ + Σδj , (S²β·νβ + Σ(βj² for δj=1))/(νβ + Σδj) ).
* Sample σ²e from Scale-Inv-χ²( νe + n , (S²e·νe + Σ(y - 1μ - Xβ)²)/(νe + n) ).

c. Run MCMC: Iterate through step b for the predetermined number of cycles (e.g., 50,000). Discard the first 10,000 as burn-in. Store every 25th sample post-burn-in.

- Prediction & Validation:

a. Calculate Posterior Means: Compute the mean of the stored samples for μ, β, and π.

b. Predict Breeding Values: For individuals in the validation set, compute GEBV_validation = X_validation × β_mean, with X_validation centered using the training-set SNP means.

c. Assess Accuracy: Calculate the Pearson correlation (r) between GEBV_validation and the observed (adjusted) y_validation. Report r as prediction accuracy.
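The partitioning step asks that families not be split across the training and validation sets. A minimal sketch is shown below. It assumes each individual carries a family label (family_ids, a hypothetical input) and shuffles whole families rather than individuals. As a result, the realized share of individuals in the training set is only approximately the requested fraction.

```python
import random


def split_by_family(family_ids, train_fraction=0.8, seed=0):
    """Assign whole families to the training or validation set.

    family_ids[i] is the family label of individual i (labels must be
    mutually sortable, e.g. all strings). Returns (train_idx, valid_idx).
    """
    families = sorted(set(family_ids))
    random.Random(seed).shuffle(families)
    n_train_fam = int(round(train_fraction * len(families)))
    train_fams = set(families[:n_train_fam])
    train = [i for i, f in enumerate(family_ids) if f in train_fams]
    valid = [i for i, f in enumerate(family_ids) if f not in train_fams]
    return train, valid
```

Splitting by family avoids inflated accuracy estimates that arise when close relatives of validation animals are present in the training data.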

Visualizations

Title: Gibbs Sampling Workflow for BayesCπ Implementation

Title: BayesCπ Model Structure and Priors

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools for BayesCπ Analysis

| Item/Tool Name | Category | Function & Explanation |
|---|---|---|
| High-Density SNP Array Data | Biological Data | Genotype calls (e.g., 0,1,2) for thousands to millions of SNPs across the genome. Primary input for X. |
| Phenotypic Records | Biological Data | Measured trait values for the population, after correction for systematic environmental effects. Input for y. |
| R Statistical Environment | Software | Primary platform for statistical analysis. Essential for data QC, visualization, and interfacing with specialized libraries. |
| BGLR R Package | Software | Comprehensive Bayesian regression library. Its BayesC model, with the inclusion proportion estimated, corresponds to BayesCπ; BayesDπ is not among its built-in models. |
| JWAS (Julia) | Software | High-performance software for genomic analysis using Julia. Efficiently handles large datasets and complex models like BayesCπ. |
| Custom Gibbs Sampler (C++, Python) | Software | For full control and customization, researchers often implement the Gibbs sampling algorithm (as in Protocol 1) themselves, in a compiled language such as C++ for speed or in Python for prototyping. |
| High-Performance Computing (HPC) Cluster | Infrastructure | Necessary for running MCMC chains (tens of thousands of iterations) on large genomic datasets (n>10,000, p>50,000). |
| Gelman-Rubin Diagnostic | Analytical Tool | Used to assess MCMC convergence by running multiple chains and comparing within/between chain variances. |
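The sketch below computes the original (non-split) Gelman-Rubin potential scale reduction factor for one scalar parameter. Values near 1 are consistent with convergence, and a cut-off of about 1.1 is a common rule of thumb. R's coda package (gelman.diag) provides a tested implementation; this version only illustrates the within/between-chain comparison.

```python
def gelman_rubin(chains):
    """Potential scale reduction factor (R-hat) for one scalar parameter.

    chains: list of >= 2 equal-length lists of post-burn-in draws, e.g. of pi
    or of the residual variance from independently initialized runs.
    """
    m, n = len(chains), len(chains[0])
    means = [sum(c) / n for c in chains]
    grand = sum(means) / m
    b = n / (m - 1) * sum((mu - grand) ** 2 for mu in means)      # between-chain
    w = sum(sum((x - mu) ** 2 for x in c) / (n - 1)
            for c, mu in zip(chains, means)) / m                  # within-chain
    var_plus = (n - 1) / n * w + b / n                            # pooled variance
    return (var_plus / w) ** 0.5
```

Chains stuck in different regions, for example one chain that includes a large-effect SNP and another that does not, produce a large between-chain term and hence an R-hat well above 1.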

This application note is situated within a broader thesis investigating advanced Bayesian models for genomic selection in livestock and plant breeding, specifically focusing on the unknown number of quantitative trait loci (QTL). The thesis contrasts two seminal models: BayesCπ and BayesDπ. Both model marker effects with a mixture of a point mass at zero and a slab distribution. BayesCπ assumes a common variance for all non-zero marker effects. BayesDπ instead lets each non-zero effect have its own variance, so that the slab is heavy-tailed, typically a scaled-t; a normal slab with a common variance recovers BayesCπ. Markers deemed to have an effect can therefore have effect sizes drawn from a continuous, heavy-tailed distribution, realistically accommodating both small and large effects. This document provides detailed protocols and analytical frameworks for implementing and interpreting the BayesDπ model.

The BayesDπ model is specified hierarchically. The key innovation is the prior on the marker effects (β_j).

Model Equation:

y = μ + Xβ + e

where y is the vector of phenotypic observations, μ is the overall mean, X is the genotype matrix, β is the vector of marker effects, and e is the residual error.

Prior for Effect β_j:

β_j | π, σ_β^2 ~ (1 - π) * δ_0 + π * N(0, σ_β^2)

or, more commonly with a heavy-tailed slab:

β_j | π, σ_β^2, ν ~ (1 - π) * δ_0 + π * t(0, σ_β^2, ν)

where δ_0 is the point mass at zero (the "spike"), π is the prior probability that a marker has a non-zero effect, and the t-distribution (or normal) is the "slab." The degrees of freedom ν can be fixed or estimated.
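The t-slab can be sampled through its representation as a scale mixture of normals. If σ_j^2 ~ Scale-inv-χ²(ν, σ_β^2) and β_j | σ_j^2 ~ N(0, σ_j^2), then marginally β_j ~ t(0, σ_β^2, ν). This data-augmentation view is one common way to implement the t-slab with plain Gibbs updates. The sketch below uses hypothetical function names and takes π as the probability of a non-zero effect, as in the prior above. It draws effects from the spike-and-slab prior and shows the conjugate update of σ_j^2 for an included marker.

```python
import math
import random


def draw_effect_from_prior(rng, pi, scale2, nu):
    """Draw beta_j from (1 - pi) * delta_0 + pi * t(0, scale2, nu).

    The t-slab is generated as a scale mixture of normals:
    sigma2_j ~ Scale-Inv-chi^2(nu, scale2), then beta_j ~ N(0, sigma2_j).
    Returns (beta_j, sigma2_j); sigma2_j is None for the spike.
    """
    if rng.random() >= pi:                       # spike: zero effect
        return 0.0, None
    sigma2_j = nu * scale2 / rng.gammavariate(nu / 2.0, 2.0)
    return rng.gauss(0.0, math.sqrt(sigma2_j)), sigma2_j


def update_marker_variance(rng, beta_j, scale2, nu):
    """Full conditional of sigma2_j for an included marker:
    Scale-Inv-chi^2(nu + 1, (nu * scale2 + beta_j**2) / (nu + 1))."""
    return (nu * scale2 + beta_j ** 2) / rng.gammavariate((nu + 1) / 2.0, 2.0)
```

Smaller ν gives heavier tails. As ν grows, the marker-specific variances concentrate around σ_β^2 and the slab approaches the normal slab of BayesCπ.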

Key Hyperparameters and Their Priors:

- π: assigned a Beta prior (e.g., Beta(p0, π0)) and sampled along with the other parameters, so that it is estimated from the data.

- σ_β^2: the scale parameter of the slab; assigned a scaled inverse-χ² prior.

- ν: for a t-slab, either fixed (e.g., ν=4) or given a Gamma prior and estimated.

Table 1: Comparison of Key Parameters in BayesCπ and BayesDπ Models

| Parameter | BayesCπ | BayesDπ | Interpretation |
|---|---|---|---|
| Effect Size Prior | β_j ~ (1-π)δ_0 + πN(0, σ_β^2) | β_j ~ (1-π)δ_0 + πt(0, σ_β^2, ν) | BayesDπ uses a heavy-tailed slab (t) vs. normal. |
| Variance Prior | Common σ_β^2 for all non-zero effects. | Common scale σ_β^2, but effect-specific variance via t-distribution. | Allows for larger range of effect sizes. |
| Inclusion Prob. | π | π | Probability a marker is in the slab. |
| Key Hyperparameter | π, σ_β^2 | π, σ_β^2, ν (df) | ν controls tail thickness of slab. |

Experimental Protocol for BayesDπ Analysis

Protocol 1: Implementing BayesDπ via Markov Chain Monte Carlo (MCMC)

Objective: To sample from the posterior distribution of marker effects, model parameters, and genetic values using the BayesDπ model.

Materials & Software:

- Genotype data (e.g., SNP array, sequence data) in centered {0,1,2} or {-1,0,1} format.

- Phenotype data (continuous traits), pre-corrected for fixed effects if necessary.

- High-performance computing cluster.

- Programming environment (R, Python, Julia).

- Custom or specialized software (e.g., modified GCTB, JWAS, or custom scripts).

Procedure:

- Data Preparation:

- Standardize the genotype matrix X such that each column (marker) has mean 0 and variance 1.

- Center the phenotype vector y to have mean 0.

- Parameter Initialization:

- Set initial values: μ = mean(y), β = 0, π = 0.5, σ_β^2 = Variance(y)/sum(2·p_j·q_j), σ_e^2 = Variance(y) × 0.1, ν = 4. The denominator sum(2·p_j·q_j) applies to centred 0/1/2 genotypes. With the standardized genotypes above, every marker has unit variance and the denominator becomes the number of markers m.

- Define hyperparameters, e.g., σ_β^2 ~ Scale-inv-χ²(ν_β, S_β²).

- MCMC Sampling Loop (for 50,000 - 100,000 iterations, discarding the first 20% as burn-in):

- Sample μ from its full conditional normal distribution.

- Sample each β_j, using either a Metropolis-Hastings step or data augmentation. In data augmentation, the t-slab is written as β_j | σ_j^2 ~ N(0, σ_j^2) with σ_j^2 ~ Scale-inv-χ²(ν, σ_β^2).

- Draw the inclusion indicator γ_j ~ Bernoulli(p_j), where p_j = π·L1_j / (π·L1_j + (1-π)·L0_j). Here L1_j is the likelihood of the phenotypes corrected for all other effects with β_j integrated over the slab (given σ_j^2), and L0_j is their likelihood with β_j = 0.

- If γ_j = 1, sample β_j from its conditional posterior: normal given σ_j^2 under data augmentation, or given σ_β^2 for a normal slab.

- If γ_j = 0, set β_j = 0.

- Under data augmentation, sample each σ_j^2 from Scale-inv-χ²(ν + 1, (ν·σ_β^2 + β_j^2)/(ν + 1)) for included markers, and from its prior otherwise.

- Sample π from its full conditional Beta(p0 + sum(γ_j), π0 + m - sum(γ_j)), where m is the total number of markers.

- Sample σ_β^2 from its full conditional. This is scaled inverse-χ² for a normal slab with common variance; when σ_β^2 is the scale of marker-specific variances, a Gamma prior gives a Gamma full conditional.

- Sample σ_e^2 from its conditional scaled inverse-χ² distribution based on the residuals.

- (Optional) Sample ν: if not fixed, use a Metropolis-Hastings step to update the degrees of freedom.

- Post-Processing:

- Calculate the posterior mean of β_j by averaging samples post-burn-in.

- Calculate the marker inclusion probability as mean(γ_j) over post-burn-in iterations.

- Estimate genomic breeding values as ĝ = Xβ_postmean.
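The starting value σ_β^2 = Variance(y)/sum(2·p·q) from the initialization step can be computed directly from 0/1/2 allele counts. The sketch below is an illustration only; its function name is hypothetical. It estimates each p_j as mean(x_j)/2 and assumes monomorphic markers have already been removed by QC, since otherwise the denominator could be zero.

```python
def initial_sigma2_beta(genotypes, var_y):
    """Starting value Var(y) / sum_j 2 p_j q_j for 0/1/2-coded genotypes.

    genotypes: n rows x m markers of allele counts. p_j is the frequency of
    the counted allele, estimated as mean(x_j) / 2. If genotypes have been
    standardized to unit variance, each marker contributes 1 instead and the
    denominator is simply m.
    """
    n, m = len(genotypes), len(genotypes[0])
    total_2pq = 0.0
    for j in range(m):
        p = sum(row[j] for row in genotypes) / (2.0 * n)
        total_2pq += 2.0 * p * (1.0 - p)
    return var_y / total_2pq
```

Because Var(Σ_j x_j β_j) ≈ σ_β^2 · Σ_j 2p_jq_j for centred, unlinked markers, this choice starts the chain with the genetic values explaining roughly all of Var(y). It is only a starting point, and the sampler moves away from it.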

Table 2: Research Reagent & Computational Toolkit

| Item Name | Category | Function / Description |
|---|---|---|
| Standardized Genotype Matrix | Data | Centered/scaled SNP matrix (n × m: individuals × markers). Essential for model stability and prior specification. |
| Phenotype Vector | Data | Pre-processed, de-regressed phenotypic observations, often corrected for major fixed effects. |
| GCTB (GPU) | Software | Genomic Complex Trait Bayesian software; can be extended/modified to implement BayesDπ. |
| JWAS | Software | Julia-based Whole-genome Analysis Suite; flexible for custom model specification like BayesDπ. |
| R/coda package | Software | For MCMC chain diagnostics (convergence, effective sample size). |
| Scaled Inverse-χ² Prior | Statistical | Conjugate prior for variance components (ν, S²). Governs the distribution of σ_β^2. |
| Beta Prior | Statistical | Conjugate prior for the mixing proportion π (Beta(p0, π0)). |

Visualization of Model Structure and Workflow

Diagram 1: BayesDπ Model Hierarchical Structure

Diagram 2: MCMC Sampling Workflow for BayesDπ

Within genomic selection and quantitative trait locus (QTL) mapping, accurately modeling the genetic architecture of complex traits is paramount. The parameter π (pi) serves as a critical hyperparameter in Bayesian mixture models, such as BayesCπ and BayesDπ. It explicitly models the prior probability that a given marker has a non-zero effect on the trait. This is foundational for research where the number of true QTLs is unknown, as it allows the model to learn the sparsity of genetic effects from the data itself, moving beyond fixed, subjective thresholds.

Quantitative Data on π in Genomic Prediction

The performance and behavior of BayesCπ/BayesDπ are highly sensitive to the specification and estimation of π. The following table summarizes key quantitative findings from recent studies.

Table 1: Impact of π Specification and Estimation on Model Performance

| Study Context | Fixed π Value Tested | Estimated π (Posterior Mean) | Key Performance Metric | Result Summary |
|---|---|---|---|---|
| Dairy Cattle (Milk Yield) | 0.01, 0.001, 0.0001 | 0.0005 (from data) | Predictive Accuracy (r_gy) | Estimated π yielded accuracy ~0.01 higher than poorly chosen fixed values. |
| Porcine (Growth Traits) | 0.05, 0.10, 0.30 | 0.12 - 0.18 | Model Sensitivity (True Positives) | Fixed π=0.10 underestimated QTL number; estimated π better matched simulation truth. |
| Arabidopsis (Flowering Time) | 0.01, 0.001 | 0.003 | Bayes Factor for QTL Detection | Estimated π produced more stable Bayes factors, reducing false positives from polygenic noise. |
| Simulation (1000 QTLs / 50k SNPs) | Various | Converged near 0.02 | Computational Time | Models with fixed, mis-specified π converged faster but with biased effect sizes. Estimation added ~15% runtime. |
| Human GWAS (Height) | N/A (SSBLUP) | ~0.005 | Proportion of Variance Explained | π estimate indicated a highly polygenic architecture, consistent with literature. |

Experimental Protocols for Implementing and Validating π

Protocol 3.1: Gibbs Sampling Implementation for Estimating π in BayesCπ

Objective: To sample the hyperparameter π from its conditional posterior distribution within a Markov Chain Monte Carlo (MCMC) scheme.

Reagents & Materials: Genotypic matrix (n x m), Phenotypic vector (n x 1), High-performance computing cluster.

Procedure:

- Initialization: Set initial values for all parameters: marker effects (α = 0), residual variance (σ²e), genetic variance (σ²α), and π (e.g., 0.5 or a guess based on prior knowledge).

- Prior Specification: Assign a Beta(αβ, ββ) prior to π. A common non-informative choice is Beta(1,1) (Uniform); a sparsity-favoring alternative is Beta(1,4), which has a prior mean of 0.2.

- MCMC Iteration Loop (for t = 1 to T iterations):

a. Sample the indicator variable (δj) for each marker j from its Bernoulli full conditional, where y* is the phenotype corrected for all other effects:
* P(δj = 1 | ELSE) ∝ π × p(y* | δj = 1), the likelihood of y* with αj integrated over its N(0, σ²α) prior;
* P(δj = 0 | ELSE) ∝ (1-π) × N(y* | 0, ...).

b. Sample the effect size (αj) from its normal full conditional if δj = 1; otherwise set αj = 0.

c. Sample the variances (σ²α, σ²e) from their scaled inverse chi-squared (or inverse-Gamma) full conditionals.

d. Sample π: draw a new value from its Beta conditional posterior:
* π | ELSE ~ Beta(αβ + ∑δj, ββ + m - ∑δj), where ∑δj is the current number of markers with non-zero effects and m is the total number of markers.

- Post-Processing: Discard the first B iterations as burn-in. Calculate the posterior mean of π as the average of sampled values from iterations B+1 to T. The posterior distribution of π can be visualized as a histogram.
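The π update and the post-processing of its draws can be sketched as follows. The sketch assumes π is the prior probability of a non-zero effect, matching P(δj = 1 | ELSE) ∝ π in the loop above. The function names are hypothetical, and the 95% interval uses simple order statistics, so it is approximate.

```python
import random


def sample_pi(rng, delta, a=1.0, b=1.0):
    """Draw pi | ELSE ~ Beta(a + sum(delta), b + m - sum(delta)).

    pi is the prior probability of a NON-zero effect; delta holds the
    current 0/1 inclusion indicators for all m markers.
    """
    k = sum(delta)
    return rng.betavariate(a + k, b + len(delta) - k)


def summarize_pi(pi_draws, burn_in):
    """Posterior mean and approximate central 95% interval after burn-in."""
    kept = sorted(pi_draws[burn_in:])
    mean = sum(kept) / len(kept)
    lo = kept[int(0.025 * (len(kept) - 1))]
    hi = kept[int(0.975 * (len(kept) - 1))]
    return mean, lo, hi
```

Multiplying the posterior mean of π by m gives a rough estimate of the number of markers with non-zero effects. This is one way to read the "unknown QTL number" off the fitted model.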

Protocol 3.2: Cross-Validation for Comparing Fixed vs. Estimated π

Objective: To empirically determine if estimating π improves genomic prediction accuracy compared to using a fixed value.

Reagents & Materials: Phenotyped and genotyped dataset, Software for BayesCπ (e.g., GCTB, JWAS, R packages).

Procedure:

- Data Partitioning: Randomly divide the dataset into K-folds (e.g., K=5). Designate one fold as the validation set and combine the remaining K-1 folds as the training set. Repeat for all folds.

- Model Training (Fixed π): For each fold, run the model with π fixed (i.e., BayesC) at a series of plausible values (e.g., 0.001, 0.01, 0.05, 0.10) on the training set. Record the predicted breeding values (GEBVs) for the individuals in the validation set.

- Model Training (Estimated π): For each fold, run the BayesCπ model with π assigned a prior and estimated (as in Protocol 3.1) on the same training set. Record the GEBVs for the validation set.

- Accuracy Calculation: For each method and fold, compute the predictive accuracy as the correlation between the GEBVs and the observed (corrected) phenotypes in the validation set.

- Statistical Comparison: Perform a paired t-test across folds to compare the accuracy of the best-performing fixed π model against the estimated π model. Report mean accuracy and standard error.
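The statistical comparison can be sketched with the Python standard library. The accuracy values in the usage lines are hypothetical placeholders, not results. Because cross-validation folds share training records, the per-fold accuracies are not independent and the paired t-test is only approximate; with K = 5 it also has just 4 degrees of freedom.

```python
import math
import statistics


def mean_and_se(values):
    """Mean and standard error of per-fold accuracies."""
    return statistics.mean(values), statistics.stdev(values) / math.sqrt(len(values))


def paired_t(acc_a, acc_b):
    """Paired t statistic and degrees of freedom for two methods scored on the same folds."""
    if len(acc_a) != len(acc_b) or len(acc_a) < 2:
        raise ValueError("need two equal-length lists with at least two folds")
    diffs = [a - b for a, b in zip(acc_a, acc_b)]
    mean_d, se_d = mean_and_se(diffs)
    return mean_d / se_d, len(diffs) - 1


# Hypothetical per-fold correlations (K = 5), for illustration only.
estimated_pi = [0.62, 0.60, 0.65, 0.61, 0.63]
best_fixed_pi = [0.60, 0.59, 0.62, 0.60, 0.61]
t_stat, df = paired_t(estimated_pi, best_fixed_pi)
# Compare |t| with the two-sided 5% critical value of t with 4 df (2.776).
print(f"t = {t_stat:.2f} on {df} df")
```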

Visualizations

BayesCπ Gibbs Sampling Workflow for π

Cross-Validation Design for π Evaluation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for BayesCπ/Dπ Research

| Tool / Reagent | Category | Primary Function / Description | Example or Specification |
|---|---|---|---|
| Genotyping Array | Wet-lab / Data Generation | Provides high-density SNP markers for constructing the genomic relationship matrix. | Illumina BovineHD (777k SNPs), Affymetrix Axiom AgriBio Arrays |
| HPC Cluster | Computational Infrastructure | Enables running MCMC chains (often >10,000 iterations) for multiple models in parallel. | Linux-based cluster with SLURM job scheduler and sufficient RAM per node. |
| GCTB (Genome-wide Complex Trait Bayesian) | Software | Specialized tool for large-scale Bayesian regression, including BayesCπ and BayesDπ. | Command-line tool for efficient Gibbs sampling on genomic data. |
| R with BGLR/qgg Packages | Software / Statistical Analysis | Flexible R environments for implementing Bayesian models, post-processing MCMC output, and calculating accuracy metrics. | BGLR package for general models; qgg for genomic analyses. |
| Beta(α, β) Prior Parameters | Statistical Reagent | Defines the prior belief about the distribution of π before seeing data. Influences sparsity. | Beta(1,1): Uniform prior; Beta(1,4): Expect ~20% non-zero effects. |
| Reference Genome Assembly | Bioinformatics Resource | Essential for aligning sequence data, imputing genotypes, and interpreting QTL locations. | ARS-UCD1.2 (Cattle), GRCm39 (Mouse), GRCh38 (Human). |
| Phenotyping Kits/Platforms | Wet-lab / Data Generation | Standardized measurement of the complex trait of interest (e.g., ELISA, mass spectrometry, automated imaging). | High-throughput serum analyzers, precision livestock weighing scales. |

Within the broader thesis on BayesCπ and BayesDπ for unknown QTL (Quantitative Trait Loci) number research, positioning these models against other "Bayesian Alphabet" models is critical. The core challenge in genomic selection is modeling the genetic architecture of complex traits, particularly when the number of causal variants (QTL) is unknown. The Bayesian Alphabet provides a suite of methods that differ primarily in their prior assumptions about marker effects. This analysis provides application notes and protocols for implementing and comparing these models, with a focus on the practical utility of Cπ and Dπ for resolving unknown QTL number.

Comparative Data: Key Bayesian Alphabet Models

Table 1: Core Specification of Major Bayesian Alphabet Models

| Model | Prior for Marker Effects (βⱼ) | Variance Assumption | Key Parameter for QTL Number | Best For |
|---|---|---|---|---|
| BayesA | t-distribution (Scaled-t) | Marker-specific (σ²ⱼ) | π = 0 (all markers have non-zero effects) | Traits with many small-effect QTL |
| BayesB | Mixture: Spike-Slab | Marker-specific (σ²ⱼ) | π (Proportion of markers with zero effect), fixed | Traits with few large-effect QTL |
| BayesC | Mixture: Common Variance | Common (σ²β) for non-zero effects | π (Proportion of markers with zero effect), fixed | Intermediate architectures; computationally efficient |
| BayesCπ | Mixture: Common Variance | Common (σ²β); π is estimated | Estimated π (Data learns sparsity) | Unknown QTL number (Primary focus) |
| BayesDπ | Mixture: Spike-Slab | Marker-specific (σ²ⱼ); π and the scale of the variance prior are estimated | Estimated π & variance scale | Unknown QTL number & effect-size distribution |
| BayesR | Mixture of Normals (Multi-spike) | Multiple common variance classes | Proportion in each effect class | Capturing effect size distribution |

Table 2: Quantitative Performance Comparison (Hypothetical Simulation Scenario)

| Model | Avg. Prediction Accuracy (r) | Computation Time (Relative Units) | Mean π Estimated (True π = 0.95, i.e., 5% of markers are QTL) | QTL Detection Power (Sensitivity) |
|---|---|---|---|---|
| BayesA | 0.65 | 100 | 0.00 (Fixed) | High (Prone to false positives) |
| BayesB (π=0.95) | 0.70 | 95 | 0.95 (Fixed) | Moderate |
| BayesC (π=0.95) | 0.71 | 50 | 0.95 (Fixed) | Moderate |
| BayesCπ | 0.73 | 55 | 0.94 (Estimated) | High |
| BayesDπ | 0.74 | 60 | 0.93 (Estimated) | High |
| BayesR | 0.72 | 120 | N/A | High |

Application Notes for Cπ and Dπ

When to Choose BayesCπ

- Primary Use Case: Your research hypothesis centers on estimating the sparsity parameter π (the proportion of markers with zero effect, so that 1 - π is the proportion with non-zero effects) from the data, while assuming a single common variance for all non-zero effects.

- Advantage: More computationally efficient than BayesDπ while still learning sparsity. Provides a direct, interpretable estimate of genetic architecture complexity.

- Thesis Context Application: Use when the total genetic variance is reasonably stable across populations or environments, but the number of underlying QTL is not.

When to Choose BayesDπ

- Primary Use Case: You need to estimate sparsity (π) while allowing each non-zero marker its own effect variance, with the scale of those variances estimated from the data. This is often more biologically realistic when a few loci have large effects and many have small ones.

- Advantage: Joint estimation can lead to more accurate genomic predictions and parameter estimates, especially when the distribution of effect sizes is uncertain.

- Thesis Context Application: The preferred choice for novel traits or populations where prior knowledge of genetic variance is minimal, aligning with the "unknown QTL number" core problem.

Detailed Experimental Protocols

Protocol 1: Implementing BayesCπ/BayesDπ for Genomic Prediction

Objective: Perform genomic prediction and estimate π using BayesCπ/Dπ. Workflow:

- Genotype & Phenotype Preparation: Quality control (QC) on SNP data (MAF, call rate, Hardy-Weinberg equilibrium). Correct phenotypes for fixed effects (e.g., herd, year, batch).

- Model Specification:
  - Base Model: y = 1μ + Xβ + e
  - Prior for βⱼ: βⱼ ~ (1-π) × N(0, σ²β) + π × δ₀ in BayesCπ, where δ₀ is a point mass at zero and π is the proportion of markers with zero effect (as in Table 1). BayesDπ replaces σ²β with a marker-specific σ²ⱼ.
  - Key Difference: In Cπ, all non-zero effects share one variance σ²β, which is assigned a scaled inverse-χ² prior. In Dπ, each non-zero effect has its own variance σ²ⱼ; these share a scaled inverse-χ² prior whose scale parameter is also estimated from the data.
  - Prior for π: A Uniform(0,1) (i.e., Beta(1,1)) or Beta(p,q) prior is typical.

- Gibbs Sampling:
  - Set chain length (e.g., 50,000), burn-in (e.g., 10,000), and thinning interval (e.g., 10).
  - Sampling Steps: Sample μ; sample each inclusion indicator δⱼ and then βⱼ conditional on δⱼ; sample σ²β (Cπ) or each σ²ⱼ and their common scale (Dπ); sample π; sample the residual variance σ²e.

- Posterior Analysis: Discard burn-in and assess chain convergence (e.g., Gelman-Rubin diagnostic across multiple chains). Calculate the posterior means of π and of the genomic breeding values (Xβ̂). Estimate prediction accuracy via cross-validation.
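To see how the two priors in the Model Specification step differ, the following plain-Python sketch draws marker effects from each. It assumes π is the proportion of zero-effect markers (the Table 1 convention), and the hyperparameter defaults (σ²β = 0.01, ν = 4, S² = 0.01) and function names are arbitrary illustrations. In the BayesDπ branch each non-zero effect first receives its own variance from a scaled inverse-χ² distribution, so the non-zero effects follow a scaled t distribution with heavier tails than the BayesCπ normal.

```python
import math
import random


def scaled_inv_chi2(nu, s2, rng):
    """One draw from Scale-inv-chi2(nu, s2), generated as nu * s2 / chi2_nu."""
    chi2 = rng.gammavariate(nu / 2.0, 2.0)  # chi-square with nu degrees of freedom
    return nu * s2 / chi2


def draw_prior_effects(m, pi_zero, model, sigma2_b=0.01, nu=4.0, s2=0.01, rng=None):
    """Draw m marker effects from the BayesCpi ("Cpi") or BayesDpi ("Dpi") mixture prior.

    pi_zero: probability that an effect is exactly zero (illustrative Table 1 convention).
    Cpi: every non-zero effect uses the common variance sigma2_b.
    Dpi: every non-zero effect gets its own variance from Scale-inv-chi2(nu, s2).
    """
    if rng is None:
        rng = random.Random()
    effects = []
    for _ in range(m):
        if rng.random() < pi_zero:
            effects.append(0.0)  # spike at zero
        elif model == "Cpi":
            effects.append(rng.gauss(0.0, math.sqrt(sigma2_b)))
        elif model == "Dpi":
            var_j = scaled_inv_chi2(nu, s2, rng)  # locus-specific variance
            effects.append(rng.gauss(0.0, math.sqrt(var_j)))
        else:
            raise ValueError("model must be 'Cpi' or 'Dpi'")
    return effects


effects_c = draw_prior_effects(10_000, 0.95, "Cpi", rng=random.Random(1))
effects_d = draw_prior_effects(10_000, 0.95, "Dpi", rng=random.Random(1))
print(max(abs(b) for b in effects_c), max(abs(b) for b in effects_d))
```

Comparing the largest absolute draws from each prior is a quick way to see the heavier BayesDπ tails.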

Protocol 2: Cross-Validation for Model Comparison

Objective: Compare prediction accuracy of BayesCπ, BayesDπ, and other alphabet models.

- Data Splitting: Perform k-fold (e.g., 5-fold) cross-validation. Randomly partition the phenotypic data into k subsets.

- Iterative Training/Prediction: For each fold i:
  - Set fold i as the validation set; the remaining k-1 folds form the training set.
  - Run Protocol 1 for each Bayesian model using only the training set.
  - Predict phenotypes for the validation individuals from their genotypes and the marker effects estimated in training: ŷ_val = 1μ̂_train + X_val β̂_train.

- Accuracy Calculation: Within each fold, correlate the predicted values (ŷ_val) with the observed (corrected) phenotypes (y_val). Report the mean and standard error of these correlations across folds.

- Architecture Inference: Compare the posterior mean of π from each model across folds.
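The fold bookkeeping and accuracy calculation can be sketched in plain Python. The model-fitting step is deliberately left out: β̂_train and μ̂_train are assumed to come from whichever sampler is run in Protocol 1, and all function names here are illustrative.

```python
import math
import random
import statistics


def kfold_indices(n, k, seed=0):
    """Randomly partition individuals 0..n-1 into k folds of near-equal size."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]


def predict(X_val, beta_hat, mu_hat=0.0):
    """y_hat_val = mu_hat + X_val * beta_hat, one value per validation individual."""
    return [mu_hat + sum(x * b for x, b in zip(row, beta_hat)) for row in X_val]


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    ma, mb = statistics.mean(a), statistics.mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


def summarize(fold_accuracies):
    """Mean and standard error of the per-fold correlations."""
    k = len(fold_accuracies)
    return statistics.mean(fold_accuracies), statistics.stdev(fold_accuracies) / math.sqrt(k)
```

For each fold, the training indices are all indices not in that fold; after fitting on them, `pearson(predict(X_val, beta_hat, mu_hat), y_val)` gives that fold's accuracy, and `summarize` collapses the K values into the reported mean and standard error.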

Protocol 3: Simulating Data for Unknown QTL Number Studies

Objective: Generate synthetic data to test model performance under known genetic architectures.

- Generate Genotypes: Simulate N individuals with M biallelic SNP markers. Use a coalescent or random mating simulation to create realistic LD patterns.

- Define True Genetic Architecture: Randomly select a proportion of SNPs (e.g., 5%) as QTL; under the Table 1 convention this corresponds to π_true = 0.95 (the proportion of zero-effect markers). Draw the QTL effects from a distribution (e.g., N(0,1)) and set all other SNP effects to zero.

- Calculate Genetic Value: G = X * β_true.

- Simulate Phenotype: y = G + e, where e ~ N(0, σ²e). Set σ²e so that heritability h² = var(G) / (var(G)+σ²e) is at a desired level (e.g., 0.3).

- Analysis: Apply models to the simulated (X, y), treating π as unknown. Evaluate their ability to recover π_true and predict G.
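A simplified version of this protocol is sketched below in plain Python. Unlike the coalescent or random-mating simulation recommended above, it draws each locus independently under Hardy-Weinberg equilibrium, so the markers have no LD; the allele-frequency range U(0.05, 0.5) and the function name are illustrative assumptions. Here `qtl_fraction` is the proportion of SNPs that are QTL, i.e., 1 - π_true when π is defined as in Table 1.

```python
import random
import statistics


def simulate(n, m, qtl_fraction, h2, seed=0):
    """Simulate 0/1/2 genotypes, sparse QTL effects, and phenotypes with heritability h2."""
    rng = random.Random(seed)
    # Independent biallelic loci in HWE (no LD); allele frequencies from U(0.05, 0.5).
    freqs = [rng.uniform(0.05, 0.5) for _ in range(m)]
    X = [[(rng.random() < p) + (rng.random() < p) for p in freqs] for _ in range(n)]
    # Choose the QTL and draw their effects from N(0, 1); all other effects are zero.
    n_qtl = max(1, round(qtl_fraction * m))
    qtl = set(rng.sample(range(m), n_qtl))
    beta = [rng.gauss(0.0, 1.0) if j in qtl else 0.0 for j in range(m)]
    # Genetic values G = X * beta_true.
    G = [sum(x * b for x, b in zip(row, beta)) for row in X]
    # Residual variance chosen so that var(G) / (var(G) + sigma2_e) = h2.
    var_g = statistics.pvariance(G)
    sigma2_e = var_g * (1.0 - h2) / h2
    y = [g + rng.gauss(0.0, sigma2_e ** 0.5) for g in G]
    return X, y, beta, G, sigma2_e


X, y, beta_true, G, sigma2_e = simulate(n=500, m=1000, qtl_fraction=0.05, h2=0.3)
print(sum(1 for b in beta_true if b != 0.0), "QTL simulated")
```

The realized heritability of y will differ slightly from h2 because the residuals are sampled.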

Visualizations

Diagram 1: Bayesian Alphabet Model Selection Logic

Diagram 2: Gibbs Sampling Workflow for BayesCπ/Dπ

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Computational Tools & Packages

| Item | Function/Description | Example/Note |
|---|---|---|
| Genotyping Platform Data | High-density SNP array or whole-genome sequencing data provides the input matrix (X). | Illumina BovineHD BeadChip (777k SNPs), GBS (Genotyping-by-Sequencing). |
| Phenotypic Data Management Software | Curates and corrects trait measurements for fixed effects prior to analysis. | R, Python Pandas, specialized farm management software (e.g., DairyComp 305). |
| Bayesian GS Software | Implements Gibbs samplers for Bayesian Alphabet models. | GCTB (Recommended for Cπ/Dπ), BGLR, BLR, JWAS. |
| High-Performance Computing (HPC) Cluster | Essential for running long MCMC chains for thousands of individuals and markers. | Linux-based clusters (Slurm/PBS job schedulers). Cloud computing (AWS, Google Cloud). |
| Convergence Diagnostics Tool | Assesses MCMC chain mixing and convergence to the target posterior distribution. | R packages: coda, boa. Check trace plots, Gelman-Rubin statistic (R-hat < 1.05). |
| Visualization Library | Creates plots for results: trace plots, density plots, Manhattan plots of marker effects. | R ggplot2, bayesplot, CMplot. Python matplotlib, seaborn. |

A Practical Guide to Implementing BayesDπ and BayesCπ in Genomic Studies

Within the broader thesis on advancing genomic selection for complex traits with unknown QTL (Quantitative Trait Loci) numbers, BayesCπ and BayesDπ represent pivotal methodological frameworks. These approaches address the critical limitation of assuming a known number of causal variants by treating the mixing proportion π as an unknown parameter with its own prior distribution. In this section, π is the probability that a SNP (Single Nucleotide Polymorphism) has zero effect, so 1 - π is the proportion of SNPs with non-zero effects. This application note details the protocol for constructing the statistical models and specifying priors, moving from theory to practical implementation.

Core Statistical Model Specification

Both BayesCπ and BayesDπ are built upon a univariate linear mixed model for a continuous phenotype. The foundational equation is:

y = 1μ + Xg + e

Where:

- y is an n×1 vector of phenotypic observations (n = number of individuals).

- μ is the overall population mean.

- 1 is an n×1 vector of ones.

- X is an n×m design matrix of SNP genotypes (m = number of markers), typically centered and scaled.

- g is an m×1 vector of random SNP effects.

- e is an n×1 vector of residual errors, assumed e ~ N(0, Iσₑ²).

The key distinction between BayesCπ and BayesDπ lies in the prior assumption for the SNP effects (g).

Table 1: Model Comparison and Priors for SNP Effects

| Component | BayesCπ | BayesDπ |
|---|---|---|
| Effect Prior | Mixture distribution (i.i.d. given π and σᵍ²): gⱼ = 0 with prob. π; gⱼ ~ N(0, σᵍ²) with prob. (1-π) | Mixture distribution (independent given π and σⱼ²): gⱼ = 0 with prob. π; gⱼ ~ N(0, σⱼ²) with prob. (1-π) |
| Variance Prior | Common variance for all non-zero effects: σᵍ², assigned a scaled inverse-χ² prior. | Marker-specific variance σⱼ²; each σⱼ² is assigned the same scaled inverse-χ² prior, whose scale parameter is itself estimated. |
| Key Feature | All non-zero effects share the same variance. Simpler; every non-zero effect is shrunk to the same degree. | Non-zero effects have heterogeneous variances. More flexible, allowing effect sizes to vary across loci. |

Step-by-Step Prior Specification Protocol

This protocol outlines the hierarchical prior structure for implementing the models via Markov Chain Monte Carlo (MCMC) sampling (e.g., Gibbs sampling).

Protocol 3.1: Defining the Hierarchical Prior Structure

- Specify the prior for the mixture probability (π):
  - π is treated as an unknown parameter with a Beta prior: π ~ Beta(α, β).
  - Default/Common Choice: A uniform Beta(1,1) prior, implying all values between 0 and 1 are equally likely a priori.
  - Informative Choice: Use Beta(α, β) with α, β > 1 to encode a prior belief about the sparsity of QTL. Because π is the probability of a zero effect, sparsity requires density on large π: for example, Beta(10,2) (prior mean ≈ 0.83) favors fewer QTL, whereas Beta(2,10) (prior mean ≈ 0.17) favors many.

- Define the prior for SNP effects (gⱼ) conditional on π:
  - Introduce a binary indicator variable δⱼ for each SNP j, where:
    - P(δⱼ = 1) = 1-π, meaning the SNP has a non-zero effect.
    - P(δⱼ = 0) = π, meaning the SNP effect is zero.
  - The conditional prior for gⱼ is:
    - gⱼ = 0 if δⱼ = 0
    - gⱼ | δⱼ = 1, σ² ~ N(0, σ²), where σ² is either σᵍ² (BayesCπ) or σⱼ² (BayesDπ).

- Assign priors to the variance components:
  - For BayesCπ (Common Effect Variance):
    - Assign a scaled inverse-χ² prior to σᵍ²: σᵍ² ~ Scale-Inv-χ²(νᵍ, Sᵍ²).
    - Hyperparameters: νᵍ (degrees of freedom, often set to 4-6) and Sᵍ² (scale, can be derived from the expected genetic variance).
  - For BayesDπ (Marker-Specific Variances):
    - Assign identical scaled inverse-χ² priors to each σⱼ²: σⱼ² ~ Scale-Inv-χ²(ν, S²).
    - This hierarchical prior lets the marker variances borrow information from a common distribution; in BayesDπ the scale S² is also treated as unknown and given its own prior.
  - Residual Variance Prior: σₑ² ~ Scale-Inv-χ²(νₑ, Sₑ²). νₑ is often set to a small value (e.g., 4-5); Sₑ² can be informed by phenotypic variance estimates.

- Prior for the overall mean (μ): A flat or weakly informative normal prior, e.g., μ ~ N(0, 10⁶), is typically used.
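Because π here is the probability that a SNP has zero effect, a Beta(α, β) prior implies an expected number of non-zero SNPs of m(1 - E[π]) = mβ/(α + β). A small plain-Python sketch (the function name is illustrative) makes the consequences of a hyperparameter choice explicit before any MCMC is run:

```python
def beta_prior_summary(alpha, beta, m):
    """Prior mean and variance of pi ~ Beta(alpha, beta), where pi = P(SNP effect is zero),
    and the implied expected number of non-zero-effect SNPs among m markers."""
    mean_pi = alpha / (alpha + beta)
    var_pi = alpha * beta / ((alpha + beta) ** 2 * (alpha + beta + 1))
    expected_qtl = m * (1 - mean_pi)
    return mean_pi, var_pi, expected_qtl


for a, b in [(1, 1), (2, 10), (10, 2)]:
    mean_pi, var_pi, n_qtl = beta_prior_summary(a, b, m=50_000)
    print(f"Beta({a},{b}): E[pi] = {mean_pi:.3f}, SD = {var_pi ** 0.5:.3f}, E[#QTL] = {n_qtl:,.0f}")
```

For 50,000 SNPs, Beta(10,2) implies roughly 8,333 expected QTL a priori and Beta(2,10) roughly 41,667. With tens of thousands of markers, the Beta update adds m indicator counts, so the data usually dominate these prior means.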

Table 2: Summary of Key Prior Distributions and Hyperparameters

| Parameter | Prior Distribution | Typical Hyperparameter Choices | Interpretation/Rationale |
|---|---|---|---|
| π (Mixing Prob.) | Beta(α, β) | α=1, β=1 (Uniform) | Uninformative; all sparsity levels equally likely. |
| δⱼ (Indicator) | Bernoulli(1-π) | Derived from π | Latent variable controlling inclusion/exclusion. |
| σᵍ² (BayesCπ) | Scale-Inv-χ²(νᵍ, Sᵍ²) | νᵍ=5, Sᵍ²=(Varₐ*(νᵍ-2))/νᵍ/(1-π)/m | Prior scaled by prior guess of additive variance (Varₐ), spread over the expected (1-π)m non-zero markers (standardized genotypes). |
| σⱼ² (BayesDπ) | Scale-Inv-χ²(ν, S²) | ν=5, S²=(Varₐ*(ν-2))/ν/(1-π)/m | Same scaling principle as BayesCπ. |
| σₑ² (Residual) | Scale-Inv-χ²(νₑ, Sₑ²) | νₑ=5, Sₑ²=Varₑ*(νₑ-2)/νₑ | Scaled by prior guess of residual variance (Varₑ). |
| μ (Mean) | N(μ₀, σμ²) | μ₀=0, σμ²=10⁶ | Essentially flat, non-informative. |
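The scale formulas in the table come from matching prior means: a Scale-Inv-χ²(ν, S²) variable has mean νS²/(ν - 2) for ν > 2. Choosing S² = Varₐ(ν - 2)/ν/((1 - π)m) therefore gives each of the roughly (1 - π)m non-zero effects a prior expected variance of Varₐ/((1 - π)m), so that with standardized genotypes their contributions sum to the guessed additive variance. A plain-Python sketch, with illustrative function names and input values:

```python
def effect_variance_scale(var_a, nu, pi_zero, m):
    """S^2 such that E[sigma^2] = var_a / ((1 - pi_zero) * m) under Scale-Inv-chi2(nu, S^2).

    Assumes standardized genotypes and pi_zero = P(SNP effect is zero).
    """
    if nu <= 2:
        raise ValueError("nu must exceed 2 for the prior mean to exist")
    return var_a * (nu - 2) / nu / ((1 - pi_zero) * m)


def residual_scale(var_e, nu_e):
    """S_e^2 such that E[sigma_e^2] = var_e under Scale-Inv-chi2(nu_e, S_e^2)."""
    if nu_e <= 2:
        raise ValueError("nu_e must exceed 2 for the prior mean to exist")
    return var_e * (nu_e - 2) / nu_e


# Illustrative guesses: Var_a = 0.3, Var_e = 0.7, nu = 5, pi = 0.99, m = 50,000 markers.
s2_b = effect_variance_scale(0.3, 5, 0.99, 50_000)
s2_e = residual_scale(0.7, 5)
print(s2_b, s2_e)
```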

Experimental Protocol for Prior Sensitivity Analysis

Protocol 4.1: Assessing the Impact of Prior Choice on Genomic Prediction Accuracy

- Data Preparation: Partition a well-phenotyped and genotyped dataset (e.g., from dairy cattle, crops) into training (~80%) and validation (~20%) sets.

- Prior Grid Definition: Define a grid of hyperparameters for the Beta prior on π (e.g., (α, β) = (1,1), (1,5), (5,1), (2,10), (10,2)) and for the scale parameters of variance priors (e.g., S² values corresponding to 0.5, 1, 1.5 times the estimated genetic variance).

- Model Fitting: Run the BayesCπ/BayesDπ MCMC chain for each prior combination in the grid. Standard settings: 50,000 iterations, 10,000 burn-in, thin every 5 samples.

- Prediction & Evaluation: Predict breeding values for the validation set. Calculate the prediction accuracy as the correlation between predicted and observed phenotypes.

- Analysis: Plot prior hyperparameters against prediction accuracy and estimated π. Determine the range of hyperparameters for which results are robust.

Visualizations

Title: Gibbs Sampling Workflow for BayesCπ/Dπ

Title: Hierarchical Prior Structure of BayesCπ/Dπ Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources

| Tool/Resource | Category | Function in BayesCπ/Dπ Analysis |
|---|---|---|
| Genotyping Array or WGS Data | Input Data | Provides the marker matrix (X). Quality control (MAF, call rate, HWE) is critical. |
| Phenotype Database | Input Data | Provides the response vector (y). Requires adjustment for fixed effects (herd, year, batch). |
| BLAS/LAPACK Libraries | Computational | Optimizes linear algebra operations (matrix multiplications, solving systems) crucial for MCMC speed. |
| C/C++/Fortran Compiler | Software | Required for compiling high-performance genetics software from source, including R packages with compiled code such as BGLR. |
| R/Python Statistical Environment | Software | Used for data pre-processing, post-analysis of MCMC outputs, visualization, and prior sensitivity checks. |
| Specialized Software (e.g., BGLR, GCTB) | Analysis Tool | Implements the Gibbs sampler for the described model. BGLR R package is a common flexible choice. |
| High-Performance Computing (HPC) Cluster | Infrastructure | Enables running long MCMC chains (tens of thousands of iterations) for large datasets (m > 50K). |
| MCMC Diagnostic Tools (CODA, boa) | Analysis Tool | Assesses chain convergence (Gelman-Rubin statistic, trace plots, autocorrelation) for parameters like π. |

Data Requirements and Preprocessing for Optimal Performance

This application note details the data requirements and preprocessing protocols essential for the optimal performance of Bayesian regression models, specifically BayesCπ and BayesDπ, within the context of quantitative trait locus (QTL) discovery for complex traits. The accurate estimation of marker effects and the number of QTLs (π) is critically dependent on the quality and structure of the input genotypic and phenotypic data. Proper preprocessing mitigates biases and enhances the computational efficiency and statistical power of these high-dimensional analyses.

Core Data Requirements

BayesCπ and BayesDπ analyses require two primary data matrices: a high-dimensional genomic matrix (X) and a phenotypic vector (y). Table 1 summarizes the specifications.

Table 1: Core Data Requirements for BayesCπ/BayesDπ Analysis

| Data Component | Specification | Format | Critical Quality Metric |
|---|---|---|---|
| Genotypic Data (X) | Biallelic markers (e.g., SNPs). Count ≥ 10K. | n x m matrix (n=samples, m=markers). Encoded as 0, 1, 2 copies of the counted allele (hom. reference, het., hom. alternate). | Call Rate > 95%, Minor Allele Frequency (MAF) > 0.01, Hardy-Weinberg Equilibrium p > 1e-6. |
| Phenotypic Data (y) | Continuous trait measurements. | n x 1 vector. Must align with genotypic data order. | Normality (Shapiro-Wilk p > 0.05 after fixed effects correction), outliers > 3.5 SD flagged. |
| Fixed Effects (W) | Non-genetic factors (e.g., herd, year, batch). | n x c design matrix. | Typically class variables (continuous covariates are also possible); avoid high collinearity (VIF < 5). |
| Genetic Relationship Matrix (G) | Optional, but recommended for polygenic background control. | n x n symmetric, positive semi-definite matrix (a small constant is often added to the diagonal to make it invertible). | Derived from genotype matrix X using method of VanRaden (2008). |

Sample Size Considerations

Robust estimation of π and marker effects in BayesCπ/Dπ requires substantial sample sizes. As a rough guideline for genome-wide association studies (GWAS) with dense marker panels, a minimum of n > 1,000 unrelated individuals is often recommended; with fewer records the data carry little information about π, which tends to produce prior-sensitive estimates and slow mixing of the Markov Chain Monte Carlo (MCMC) chains. For highly polygenic traits, n > 5,000 may be necessary to distinguish true QTL from background noise effectively.

Preprocessing Protocols

Genotypic Data Preprocessing Workflow

The following protocol must be executed sequentially prior to model fitting.

Protocol 1: Genotype Quality Control (QC)

- Input: Raw genotype calls (e.g., from array or imputed sequence data).

- Sample-Level Filtering:

- Remove samples with overall call rate < 97%.

- Remove samples exhibiting extreme heterozygosity (±3 SD from mean).

- Verify genetic sex if applicable.

- Marker-Level Filtering:

- Remove markers with call rate < 95%.

- Remove markers with Minor Allele Frequency (MAF) < 0.01.

- Remove markers failing Hardy-Weinberg Equilibrium test (p < 1e-6).

- Data Imputation: Use dedicated software (e.g., Beagle, Minimac4) to impute missing genotypes post-QC. Document imputation accuracy (R² > 0.8 is acceptable).

- Output: A clean, complete n x m genotype matrix (X).

Diagram 1: Genotype QC and Imputation Workflow
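The marker-level filters of Protocol 1 can be sketched in plain Python for a single marker coded 0/1/2, with None for missing calls. For brevity this sketch tests HWE with the 1-d.f. chi-square approximation, whereas tools such as PLINK typically use an exact test. The default thresholds follow the protocol, and the function name is illustrative.

```python
import math


def marker_qc(genotypes, min_call_rate=0.95, min_maf=0.01, min_hwe_p=1e-6):
    """Return (passes, stats) for one marker coded 0/1/2 with None for missing calls."""
    called = [g for g in genotypes if g is not None]
    call_rate = len(called) / len(genotypes)
    n = len(called)
    if n == 0:
        return False, {"call_rate": 0.0}
    p = sum(called) / (2 * n)  # frequency of the counted allele
    maf = min(p, 1 - p)
    if maf == 0:
        hwe_p = 1.0  # monomorphic: HWE test undefined; the MAF filter removes the marker
    else:
        obs = [called.count(0), called.count(1), called.count(2)]
        exp = [n * (1 - p) ** 2, 2 * n * p * (1 - p), n * p ** 2]
        chi2 = sum((o - e) ** 2 / e for o, e in zip(obs, exp))
        hwe_p = math.erfc(math.sqrt(chi2 / 2))  # upper tail of chi-square with 1 d.f.
    stats = {"call_rate": call_rate, "maf": maf, "hwe_p": hwe_p}
    passes = call_rate >= min_call_rate and maf >= min_maf and hwe_p >= min_hwe_p
    return passes, stats
```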

Phenotypic Data Preprocessing Workflow

Phenotypic data must be adjusted for fixed environmental effects before Bayesian analysis to prevent confounding.

Protocol 2: Phenotype Adjustment

- Input: Raw phenotype measurements (y_raw), fixed effects design matrix (W).

- Outlier Detection: Flag records where |y_raw - mean(y_raw)| > 3.5 standard deviations. Review flagged records for measurement error.

- Fixed Effects Model: Fit a linear model y_raw = Wβ + ε, where β are the fixed-effect coefficients and ε are the residuals.

- Extract Adjusted Phenotype: The vector of residuals from the fixed effects model serves as the adjusted phenotype: y_adj = y_raw - Wβ̂.

- Normality Check: Test y_adj for normality using Shapiro-Wilk test. Apply rank-based inverse-normal transformation if violation is severe (p < 0.01).

- Output: Adjusted phenotype vector (y) ready for genomic analysis.

Diagram 2: Phenotype Adjustment and Transformation
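A plain-Python sketch of the outlier, adjustment, and transformation steps of Protocol 2 for the simplest case of a single class fixed effect (e.g., herd), where the least-squares solution reduces to subtracting group means. With several or continuous fixed effects, a full least-squares fit (e.g., with statsmodels) would replace `adjust_one_factor`. The Blom offset c = 3/8 in the rank-based inverse-normal transform is a common convention assumed here, and the function names are illustrative.

```python
import statistics
from collections import defaultdict


def flag_outliers(y, k=3.5):
    """True where |y - mean(y)| exceeds k sample standard deviations."""
    mu, sd = statistics.mean(y), statistics.stdev(y)
    return [abs(v - mu) > k * sd for v in y]


def adjust_one_factor(y, groups):
    """Residuals from y = W*beta + e with a single class effect (OLS solution = group means)."""
    sums, counts = defaultdict(float), defaultdict(int)
    for v, g in zip(y, groups):
        sums[g] += v
        counts[g] += 1
    return [v - sums[g] / counts[g] for v, g in zip(y, groups)]


def rank_inverse_normal(values, c=3.0 / 8):
    """Blom-type rank-based inverse-normal transform; ties receive their average rank."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # 1-based average rank of the tied block
        for t in range(i, j + 1):
            ranks[order[t]] = avg
        i = j + 1
    nd = statistics.NormalDist()
    return [nd.inv_cdf((r - c) / (n - 2 * c + 1)) for r in ranks]
```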

Model Input Preparation

Standardization of Genotypes

For BayesCπ and BayesDπ, centering and scaling of the genotype matrix (X) is crucial. The standard protocol is to center each marker column to mean zero. Scaling (dividing by the standard deviation) is optional but common. This puts all marker effects on a comparable scale and improves MCMC mixing.

Protocol 3: Genotype Standardization

- Input: Clean genotype matrix X (n x m).

- Calculate: For each marker j (column of X):

- Mean: μj = sum(Xij) / n

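- Standard Deviation: σj = sqrt(2 * pj * (1 - pj)), where pj = μj / 2 is the frequency of the counted allele (the allele whose copies are coded 0, 1, 2). This is the expected SD under Hardy-Weinberg equilibrium; the empirical column SD is a common alternative.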

- Center & Scale: Compute standardized genotype Zij = (Xij - μj) / σj.

- Output: Standardized n x m matrix (Z). This is the final input for the Bayesian model.
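Protocol 3 in plain Python, operating on a list of genotype rows; a NumPy version would be vectorized, but the arithmetic is the same. Monomorphic markers should already have been removed by the MAF filter. As a defensive choice, this sketch sets them to zero rather than dividing by zero, and the function name is illustrative.

```python
import math


def standardize(X):
    """Center each 0/1/2 column by its mean and scale by sqrt(2 p (1 - p))."""
    n, m = len(X), len(X[0])
    Z = [[0.0] * m for _ in range(n)]
    for j in range(m):
        mu = sum(row[j] for row in X) / n
        p = mu / 2  # frequency of the counted allele
        sd = math.sqrt(2 * p * (1 - p))  # HWE-expected standard deviation
        for i in range(n):
            Z[i][j] = (X[i][j] - mu) / sd if sd > 0 else 0.0
    return Z
```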

Construction of the Genetic Relationship Matrix (GRM)

Including a GRM as a covariate helps control for population stratification and polygenic background.

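Protocol 4: GRM Calculation (VanRaden 2008)

- Input: Clean, standardized genotype matrix Z (n x m).

- Compute: GRM = (Z * Z') / m, where Z' is the transpose of Z. With every column fully standardized, this corresponds to VanRaden's second method; VanRaden's first method instead centers the genotypes without scaling (matrix M) and uses GRM = (M * M') / (2 ∑ pj(1 - pj)).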

- Output: n x n symmetric Genetic Relationship Matrix (G). This can be used as a random effect or to adjust phenotypes in a two-step approach.
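Both VanRaden forms can be sketched in plain Python on lists of rows (function names illustrative). `grm_standardized` computes GRM = ZZ'/m from a standardized Z such as the Protocol 3 output. `grm_vanraden1` starts from raw 0/1/2 genotypes, centers them by 2pj, and divides by 2∑pj(1 - pj). As an assumption, it uses sample allele frequencies, whereas VanRaden's formulation ideally uses base-population frequencies.

```python
def grm_standardized(Z):
    """GRM = Z Z' / m for a standardized n x m genotype matrix (list of rows)."""
    n, m = len(Z), len(Z[0])
    G = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for k in range(i, n):
            G[i][k] = G[k][i] = sum(a * b for a, b in zip(Z[i], Z[k])) / m
    return G


def grm_vanraden1(X):
    """VanRaden method 1: M = X - 2p (centered 0/1/2 genotypes), GRM = M M' / (2 * sum p(1 - p))."""
    n, m = len(X), len(X[0])
    p = [sum(row[j] for row in X) / (2 * n) for j in range(m)]
    M = [[row[j] - 2 * p[j] for j in range(m)] for row in X]
    denom = 2 * sum(pj * (1 - pj) for pj in p)
    G = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for k in range(i, n):
            G[i][k] = G[k][i] = sum(a * b for a, b in zip(M[i], M[k])) / denom
    return G
```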

The Scientist's Toolkit: Key Reagents & Materials

Table 2: Essential Research Reagent Solutions for BayesCπ/Dπ Studies

| Item / Solution | Function / Role in Protocol |
|---|---|
| High-Density SNP Array (e.g., Illumina Infinium, Affymetrix Axiom) | Platform for generating high-throughput biallelic genotype data (Matrix X). |
| Genotype Imputation Software (e.g., Beagle5, Minimac4, Eagle2) | Infers missing genotypes and increases marker density using reference haplotype panels. |
| Genotype QC Pipeline (e.g., PLINK2.0, GCTA, bcftools) | Performs sample/marker filtering, basic statistics (MAF, HWE), and data format conversion. |
| Statistical Software for Phenotype Prep (R, Python pandas/statsmodels) | Conducts outlier detection, fixed effect adjustment, and normality transformation. |
| High-Performance Computing (HPC) Cluster | Executes computationally intensive MCMC chains for BayesCπ/BayesDπ models (often >100,000 iterations). |
| Bayesian Analysis Software (e.g., JWAS, GMRF, BLR, custom scripts in R/JAGS) | Implements the Gibbs sampling algorithms for BayesCπ and BayesDπ model fitting. |
| Genomic Reference Panel (e.g., 1000 Genomes, species-specific panel) | Haplotype database essential for accurate genotype imputation in Protocol 1. |
| MCMC Diagnostic Tools (CODA in R, ArviZ in Python) | Assesses chain convergence (Gelman-Rubin statistic, effective sample size, trace plots). |

Within the broader thesis investigating Bayesian models for genomic selection, this document details the practical implementation of Markov Chain Monte Carlo (MCMC) sampling for the BayesCπ and BayesDπ models. These models are pivotal for research into the number of unknown Quantitative Trait Loci (QTL) in complex traits, with significant applications in agricultural genetics and human pharmacogenomics for drug target discovery.

Model Formulations and Key Differences

BayesCπ Model

Assumes each SNP effect is either zero (with probability 1 - π) or follows a normal distribution with a common variance (with probability π).

Model: \( y = 1\mu + Zu + X\beta + e \), where:

- \( y \) is the vector of phenotypic observations.

- \( \mu \) is the overall mean.

- \( u \) is a vector of random effects (optional).

- \( Z \) is the design matrix for \( u \).

- \( \beta \) is the vector of SNP effects, with \( \beta_j \mid \pi, \sigma_\beta^2 \sim (1-\pi)\delta_0 + \pi N(0, \sigma_\beta^2) \), where \( \delta_0 \) is a point mass at zero.

- \( X \) is the genotype matrix (coded as 0, 1, 2 before standardization).

- \( e \) is the residual, \( e \sim N(0, I\sigma_e^2) \).

BayesDπ Model

Extends BayesCπ by giving each SNP effect a locus-specific variance drawn from a scaled inverse-χ² distribution, allowing effect sizes to vary across loci.

Model: \( y = 1\mu + Zu + X\beta + e \). Key difference: \( \beta_j \mid \pi, \sigma_{\beta_j}^2 \sim (1-\pi)\delta_0 + \pi N(0, \sigma_{\beta_j}^2) \), with \( \sigma_{\beta_j}^2 \sim \nu_\beta s_\beta^2 \chi^{-2}_{\nu_\beta} \).

Table 1: Core Model Comparison

| Feature | BayesCπ | BayesDπ |
|---|---|---|
| Variance Assumption | Common variance (\( \sigma_\beta^2 \)) for all non-zero SNP effects. | Locus-specific variance (\( \sigma_{\beta_j}^2 \)) for each non-zero SNP effect. |
| Effect Size Distribution | Homogeneous (Normal with a single shared variance). | Heterogeneous (Normal with variable variance; t-distributed marginal). |
| Key Hyperparameter | \( \pi \): Probability a SNP has a non-zero effect. | \( \pi \): Probability a SNP has a non-zero effect; \( \nu_\beta, s_\beta^2 \): degrees of freedom and scale of the variance distribution. |
| Computational Complexity | Lower. | Higher (requires sampling individual SNP variances). |

Gibbs Sampler Steps: Detailed Protocol

The following is a step-by-step MCMC sampling protocol for both models. Let n be the number of individuals, m the number of SNPs, and k the number of levels of the optional random effect u.

Pre-processing and Initialization Protocol

- Genotype Standardization: Center and scale the genotype matrix ( X ) such that each column has mean 0 and variance 1. This stabilizes sampling.

- Parameter Initialization: Set initial values for all parameters (e.g., \( \mu^{(0)}, \beta^{(0)}, \sigma_e^{2(0)}, \pi^{(0)} \)). For \( \sigma_{\beta_j}^{2(0)} \) in BayesDπ, draw from the prior.

- Residual Calculation: Compute initial residuals: \( r^{(0)} = y - 1\mu^{(0)} - X\beta^{(0)} \), also subtracting \( Zu^{(0)} \) if random effects are fitted.

Core Gibbs Sampling Loop (Per Iteration t)

For each iteration t from 1 to the total number of MCMC iterations (e.g., 50,000):

Step 1: Sample the overall mean (µ).

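- Full Conditional: \( \mu \mid \text{else} \sim N(\hat{\mu}, \sigma_e^2 / n) \) (assuming a flat prior on \( \mu \)).

- Computation:
  - \( \hat{\mu} = \frac{1}{n} \sum_{i=1}^n (y_i - x_i'\beta - z_i'u) \), dropping the \( z_i'u \) term if no random effects are fitted; equivalently, \( \hat{\mu} \) is the mean of \( r + 1\mu_{old} \).
  - Update residuals: \( r = r_{old} + 1(\mu_{old} - \mu_{new}) \)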

Step 2: Sample SNP effects (β_j) for j = 1 to m. This is a key divergent step between models.

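- Compute the shrinkage parameter: \( \lambda_j = \sigma_e^2 / \sigma_\beta^2 \) (BayesCπ) or \( \lambda_j = \sigma_e^2 / \sigma_{\beta_j}^2 \) (BayesDπ).

- Form the right-hand side with SNP j's current effect added back to the residuals: \( \text{rhs}_j = x_j'(r + x_j\beta_{j,old}) = x_j'r + x_j'x_j\beta_{j,old} \).

- Compute the conditional posterior mean and variance of a non-zero effect: \( \hat{\beta}_j = \frac{\text{rhs}_j}{x_j'x_j + \lambda_j} \) and \( v_j = \frac{\sigma_e^2}{x_j'x_j + \lambda_j} \).

- For BayesCπ:
  - Compute the inclusion probability \( p_j \) from the least-squares estimate \( b_j = \text{rhs}_j / x_j'x_j \). With \( \beta_j \) integrated out, \( b_j \sim N(0, \sigma_\beta^2 + \sigma_e^2/x_j'x_j) \) if the SNP is in the model and \( b_j \sim N(0, \sigma_e^2/x_j'x_j) \) if it is not, so \( p_j = \frac{\pi \, \phi(b_j \mid 0, \sigma_\beta^2 + \sigma_e^2/x_j'x_j)}{\pi \, \phi(b_j \mid 0, \sigma_\beta^2 + \sigma_e^2/x_j'x_j) + (1-\pi) \, \phi(b_j \mid 0, \sigma_e^2/x_j'x_j)} \).
  - Draw an indicator variable \( \delta_j \sim \text{Bernoulli}(p_j) \).
  - If \( \delta_j = 1 \): sample \( \beta_j^{(t)} \sim N(\hat{\beta}_j, v_j) \).
  - If \( \delta_j = 0 \): set \( \beta_j^{(t)} = 0 \).

- For BayesDπ:
  - The process is identical to BayesCπ, except that \( \lambda_j \) and the inclusion probability use the locus-specific \( \sigma_{\beta_j}^2 \) in place of \( \sigma_\beta^2 \).

- Update Residuals: After sampling each \( \beta_j \), immediately update \( r = r + x_j(\beta_{j,old} - \beta_{j,new}) \).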
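A plain-Python sketch of Step 2 for a single SNP. It assumes π is the probability of a non-zero effect (this section's convention) and that the residual vector `r` currently includes SNP j's old effect. The function name and list-based data layout are illustrative; production samplers implement this loop in compiled code. The inclusion probability is computed on the log scale to avoid underflow when the least-squares estimate is far from zero. Passing the common σ²β gives the BayesCπ update, and passing the locus-specific σ²βj gives the BayesDπ update.

```python
import math
import random


def log_normal_pdf(x, var):
    """Log density of N(0, var) evaluated at x."""
    return -0.5 * (math.log(2.0 * math.pi * var) + x * x / var)


def update_snp(j, xj, r, beta, var_b, sigma2_e, pi, rng):
    """Gibbs update of delta_j and beta_j; modifies r and beta in place and returns delta_j.

    var_b: common sigma2_beta (BayesCpi) or locus-specific sigma2_beta_j (BayesDpi).
    pi: probability that a SNP has a non-zero effect.
    """
    xtx = sum(v * v for v in xj)
    rhs = sum(v * e for v, e in zip(xj, r)) + xtx * beta[j]  # x_j'(r + x_j * beta_j,old)
    b_ols = rhs / xtx
    # Marginal log densities of the least-squares estimate, with beta_j integrated out.
    log_f1 = log_normal_pdf(b_ols, var_b + sigma2_e / xtx)
    log_f0 = log_normal_pdf(b_ols, sigma2_e / xtx)
    if pi <= 0.0:
        p_in = 0.0
    elif pi >= 1.0:
        p_in = 1.0
    else:
        log_odds = math.log(pi) - math.log(1.0 - pi) + log_f1 - log_f0
        if log_odds >= 0:
            p_in = 1.0 / (1.0 + math.exp(-log_odds))
        else:
            odds = math.exp(log_odds)
            p_in = odds / (1.0 + odds)
    delta = 1 if rng.random() < p_in else 0
    if delta:
        lam = sigma2_e / var_b
        new = rng.gauss(rhs / (xtx + lam), math.sqrt(sigma2_e / (xtx + lam)))
    else:
        new = 0.0
    diff = beta[j] - new
    for i, v in enumerate(xj):
        r[i] += v * diff  # r = r + x_j (beta_j,old - beta_j,new)
    beta[j] = new
    return delta
```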

Step 3: Sample the common SNP effect variance (σ²_β) for BayesCπ.

- Full Conditional: \( \sigma_\beta^2 \mid \text{else} \sim \text{Scale-inv-}\chi^2\left(\nu_\beta + m_1, \frac{\nu_\beta s_\beta^2 + \beta_{(1)}'\beta_{(1)}}{\nu_\beta + m_1}\right) \)

- Where \( m_1 = \sum_{j=1}^m \delta_j \) (number of non-zero effects), and \( \beta_{(1)} \) is the vector of non-zero effects.

Step 4: Sample the locus-specific SNP effect variances (σ²_βj) for BayesDπ.

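- Full Conditional (for j where \( \delta_j = 1 \)): \( \sigma_{\beta_j}^2 \mid \text{else} \sim \text{Scale-inv-}\chi^2\left(\nu_\beta + 1, \frac{\nu_\beta s_\beta^2 + \beta_j^2}{\nu_\beta + 1}\right) \)

- For loci where \( \delta_j = 0 \), \( \sigma_{\beta_j}^2 \) is sampled from the prior: \( \sigma_{\beta_j}^2 \sim \nu_\beta s_\beta^2 \chi^{-2}_{\nu_\beta} \).

- If the scale \( s_\beta^2 \) is estimated from the data, as in BayesDπ formulations that learn the scale, and is given a Gamma prior with shape \( a \) and rate \( b \), sample it from its Gamma full conditional (shape, rate): \( s_\beta^2 \mid \text{else} \sim \text{Gamma}\left(a + \frac{m\nu_\beta}{2}, \; b + \frac{\nu_\beta}{2}\sum_{j=1}^m \sigma_{\beta_j}^{-2}\right) \).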

Step 5: Sample the residual variance (σ²_e).

- Full Conditional: \( \sigma_e^2 \mid \text{else} \sim \text{Scale-inv-}\chi^2\left(\nu_e + n, \frac{\nu_e s_e^2 + r'r}{\nu_e + n}\right) \)

- Where \( r \) is the current vector of residuals.
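Steps 3 to 5 all reduce to draws from a scaled inverse-χ² distribution, which can be generated as df × scale / χ²_df. The plain-Python sketch below (function names illustrative) implements those full conditionals. `sample_scale` additionally assumes that s²β is estimated under a Gamma prior with shape a and rate b, as in BayesDπ formulations that learn the scale from the data. Python's `random.gammavariate` takes a shape and a scale, so the rate is inverted.

```python
import random


def scaled_inv_chi2(df, scale, rng):
    """Draw from Scale-inv-chi2(df, scale) as df * scale / chi2_df."""
    return df * scale / rng.gammavariate(df / 2.0, 2.0)


def sample_common_var(beta, delta, nu_b, s2_b, rng):
    """Step 3 (BayesCpi): sigma2_beta | else."""
    m1 = sum(delta)
    ss = sum(b * b for b, d in zip(beta, delta) if d)
    return scaled_inv_chi2(nu_b + m1, (nu_b * s2_b + ss) / (nu_b + m1), rng)


def sample_locus_vars(beta, delta, nu_b, s2_b, rng):
    """Step 4 (BayesDpi): one variance per locus; loci with delta_j = 0 draw from the prior."""
    return [scaled_inv_chi2(nu_b + 1, (nu_b * s2_b + b * b) / (nu_b + 1), rng) if d
            else scaled_inv_chi2(nu_b, s2_b, rng)
            for b, d in zip(beta, delta)]


def sample_residual_var(r, nu_e, s2_e, rng):
    """Step 5: sigma2_e | else."""
    n = len(r)
    return scaled_inv_chi2(nu_e + n, (nu_e * s2_e + sum(e * e for e in r)) / (nu_e + n), rng)


def sample_scale(locus_vars, nu_b, shape=1.0, rate=1.0, rng=random):
    """Assumed BayesDpi scale update under a Gamma(shape, rate) prior on s2_beta."""
    m = len(locus_vars)
    post_rate = rate + 0.5 * nu_b * sum(1.0 / v for v in locus_vars)
    return rng.gammavariate(shape + m * nu_b / 2.0, 1.0 / post_rate)
```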

Step 6: Sample the mixing proportion (π).

- Full Conditional: \( \pi \mid \text{else} \sim \text{Beta}(p_0 + m_1, q_0 + m - m_1) \)

- Where \( p_0 \) and \( q_0 \) are prior hyperparameters (e.g., \( p_0 = 1, q_0 = 1 \) for a uniform prior).

Step 7 (Optional): Sample random effects (u) and polygenic variance.

- If included, and assuming \( u \sim N(0, I\sigma_u^2) \), sample \( u \mid \text{else} \sim N\left(\hat{u}, (Z'Z + \frac{\sigma_e^2}{\sigma_u^2}I)^{-1}\sigma_e^2\right) \), where \( \hat{u} = (Z'Z + \frac{\sigma_e^2}{\sigma_u^2}I)^{-1} Z'(r + Zu_{old}) \).

- Sample polygenic variance: \( \sigma_u^2 \mid \text{else} \sim \text{Scale-inv-}\chi^2\left(\nu_u + k, \frac{\nu_u s_u^2 + u'u}{\nu_u + k}\right) \).

- Update residuals: \( r = r + Z(u_{old} - u_{new}) \).

Post-processing Protocol

- Burn-in Discarding: Discard the first 5,000-10,000 iterations as burn-in.

- Thinning: Retain every 10th-50th sample to reduce autocorrelation.

- Convergence Diagnostics: Assess chain convergence using trace plots and the Gelman-Rubin diagnostic (if multiple chains are run).

- Posterior Inference: Calculate posterior means, medians, and credible intervals from the retained samples for all parameters of interest (e.g., SNP effects, π).
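The post-processing steps can be sketched in plain Python (function names illustrative). `gelman_rubin` implements the basic, unsplit potential scale reduction factor for equal-length chains. Current practice often prefers split-chain, rank-normalized variants (for example, those implemented in ArviZ), so treat this as a minimal version.

```python
import statistics


def burn_and_thin(chain, burn_in, every):
    """Drop the first burn_in draws, then keep every `every`-th remaining draw."""
    return chain[burn_in::every]


def credible_interval(samples, level=0.95):
    """Equal-tailed interval from empirical quantiles (nearest-rank approximation)."""
    s = sorted(samples)
    n = len(s)
    lo = s[round((1 - level) / 2 * (n - 1))]
    hi = s[round((1 + level) / 2 * (n - 1))]
    return lo, hi


def gelman_rubin(chains):
    """Basic (unsplit) potential scale reduction factor R-hat for equal-length chains."""
    n = len(chains[0])
    means = [statistics.mean(c) for c in chains]
    within = statistics.mean([statistics.variance(c) for c in chains])
    between = n * statistics.variance(means)
    var_plus = (n - 1) / n * within + between / n
    return (var_plus / within) ** 0.5
```

Values of R-hat close to 1 (e.g., below the 1.05 threshold quoted earlier) indicate that the chains agree. Large values mean the chains have not mixed and need to run longer.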

Visual Workflow

Title: Gibbs Sampling Workflow for BayesCπ and BayesDπ

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Packages

| Item / Software Package | Primary Function | Application in BayesCπ/Dπ Analysis |
|---|---|---|
| R Statistical Environment | Open-source platform for statistical computing and graphics. | Primary environment for data manipulation, running samplers, and conducting posterior analysis. |
| Rcpp / RcppArmadillo | R packages for seamless C++ integration. | Used to write high-performance Gibbs sampling loops in C++ for speed, called from R. |
| BGLR R Package | Comprehensive Bayesian regression library. | Contains efficient, pre-coded implementations of BayesCπ and related models for applied research. |
| Julia Language | High-performance, just-in-time compiled technical computing language. | Emerging alternative for writing custom, fast MCMC samplers from scratch. |
| PLINK / GCTA | Standard tools for genome-wide association studies and genetic data management. | Used for quality control (QC), formatting genotype data, and calculating genomic relationship matrices for random effects. |
| High-Performance Computing (HPC) Cluster | Parallel computing resources (e.g., Slurm scheduler). | Essential for running long MCMC chains (100k+ iterations) for large datasets (n > 10k, m > 100k). |
| Parallel MCMC | Technique to run multiple chains or parallelize within a chain. | Used to assess convergence (multiple chains) and accelerate sampling of independent SNP blocks. |
| Standardized Genotype File (e.g., .bed, .raw) | Binary or text format for storing large SNP matrices. | The raw input data. QC includes filtering for minor allele frequency (MAF) and call rate. |

This document provides application notes and protocols for implementing genomic prediction and genome-wide association study (GWAS) models, specifically the Bayesian BayesCπ and BayesDπ methods. These methods are central to a broader thesis investigating genetic architecture with an unknown number of quantitative trait loci (QTL). BayesCπ assigns all non-zero SNP effects a single common variance, which is itself unknown and sampled, while BayesDπ gives each non-zero SNP its own locus-specific variance drawn from a scaled inverse-χ² prior, offering more flexibility for traits with complex genetic architectures. Three implementation routes are covered: the GenSel software, the BGLR R package, and custom do-it-yourself (DIY) scripts.

The table below summarizes the core features, supported models, and use-case recommendations for the three primary implementation avenues.

Table 1: Comparison of Software Tools for Implementing BayesCπ and BayesDπ

| Feature | GenSel | BGLR (R Package) | DIY Scripts (e.g., in R/Python) |
|---|---|---|---|
| Primary Use | Large-scale genomic selection in animal breeding. | General Bayesian regression models in R. | Full control for methodological research. |
| BayesCπ Support | Yes (as "Bayes Cpi"). | Yes (model="BayesC"; probIn and counts set the Beta prior on π, which is then estimated). | Implemented per algorithm specification. |
| BayesDπ Support | Indirectly via BayesC with estimated variance. | Closest equivalent is model="BayesB" (marker-specific variances, with π and the prior scale estimated). | Full customization possible. |
| Ease of Use | Command-line driven, requires specific file formats. | High, within R environment. | Low, requires advanced programming. |
| Computational Speed | Highly optimized (C/C++). | Moderate (R/C++ core). | Variable, depends on optimization. |
| Flexibility | Low to moderate. | High, many prior options. | Maximum. |
| Best For | Applied, large-scale genomic prediction. | Prototyping, simulation studies, applied research. | Algorithm development, testing novel priors. |
| Key Citation | Fernando & Garrick (2013) | Pérez & de los Campos (2014) | Custom implementation based on Habier et al. (2011) |

Detailed Experimental Protocols

Protocol 3.1: Implementing BayesCπ/BayesDπ using GenSel

Objective: Perform genomic prediction for a complex trait using the BayesCπ model.

Materials:

- Genotype file (`genotype.txt`): coded as 0, 1, 2 for AA, AB, BB.

- Phenotype file (`phenotype.txt`): individual IDs and trait values.

- Parameter file (`gensel.par`): controls model and chain parameters.

Procedure:

- Data Preparation: Convert genotypes to GenSel format (space-delimited, one row per SNP). Center and scale phenotypes.

- Parameter File Configuration:

  - Set `analysisType BayesCpi`.

  - Define `pi 0.01` (or another appropriate starting value for π).

  - Set `varGenic 0.95` (proportion of genetic variance explained by SNPs).

  - Specify chain parameters: `chainLength 41000`, `burnIn 1000`.

- Execution: Run `gensel gensel.par` in a terminal or command prompt.

- Output Analysis: Analyze the `MCMCSamples` files for SNP effect estimates, π values, and breeding values, and monitor convergence.

Protocol 3.2: Implementing BayesCπ/BayesDπ using BGLR

Objective: Fit the BayesCπ model within an R environment for genomic prediction.

Materials:

- R installation with the BGLR package.

- Genotype matrix `X` (n × m; n = samples, m = SNPs), centered.

- Phenotype vector `y`.

Procedure:

Protocol 3.3: Core Algorithm for DIY Implementation

Objective: Outline the Gibbs sampling steps for a DIY BayesCπ script. Key steps per iteration (t):

- Sample the overall mean (μ): from a normal distribution conditional on the current residuals.

- Sample SNP effects (βj) jointly with their indicator variables (δj = 1 if βj ≠ 0):

  - For each SNP j, compute the full conditional probability that δj = 1. It combines the prior inclusion probability π with the likelihood ratio of the residual data under βj ≠ 0 versus βj = 0.

  - With the complementary probability, Pr(δj = 0 | else), set βj^(t) = 0.

  - Otherwise (δj = 1), sample βj^(t) from a normal distribution whose mean and variance are conditional on the current residuals, σ_e², and σ_β².

- Sample the residual variance (σ_e²): from a scaled inverse-χ² distribution.

- Sample the common variance of non-zero effects (σ_β²) [BayesCπ]: from a scaled inverse-χ² distribution conditional on all non-zero βj.

- Sample π: from a Beta distribution whose parameters are updated by the number of included SNPs, Σj δj.
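The per-iteration steps above can be sketched as a single Python function. This is a minimal, unoptimized illustration under stated assumptions: a flat prior on μ, a Beta(a, b) prior on π (π is the proportion of non-zero effects), scaled inverse-χ² priors on σ_β² and σ_e², and genotype columns that are already centered. Each δj is drawn from its full conditional with βj integrated out, using the statistic x_j'r, and βj is drawn only if δj = 1. The dictionary keys and hyperparameter names are hypothetical.

```python
import math
import random


def scaled_inv_chi2(df, scale, rng):
    """Draw from Scale-inv-chi^2(df, scale) as df * scale / chi^2_df."""
    return df * scale / rng.gammavariate(df / 2.0, 2.0)


def bayescpi_iteration(X, st, hyp, rng):
    """One Gibbs iteration of BayesCpi (sketch).

    X   : list of SNP columns, each a list of n centered genotypes
    st  : state dict with mu, beta, delta, pi, var_b, var_e and residuals e = y - mu - X beta
    hyp : hypothetical hyperparameters nu_b, s2_b, nu_e, s2_e, a_pi, b_pi
    """
    e = st["e"]
    n = len(e)
    # Overall mean (flat prior): add current mu back, sample, then remove it again.
    e = [r + st["mu"] for r in e]
    st["mu"] = rng.gauss(sum(e) / n, math.sqrt(st["var_e"] / n))
    e = [r - st["mu"] for r in e]
    # SNP effects, each sampled jointly with its indicator delta_j.
    log_prior_odds = math.log(st["pi"] / (1.0 - st["pi"]))
    for j, xj in enumerate(X):
        xtx = sum(v * v for v in xj)
        if xtx == 0.0:
            continue  # monomorphic SNP carries no information
        b_old = st["beta"][j]
        rhs = sum(v * r for v, r in zip(xj, e)) + xtx * b_old  # x_j'(residual without SNP j)
        v0 = xtx * st["var_e"]                  # Var(rhs) if delta_j = 0
        v1 = v0 + xtx * xtx * st["var_b"]       # Var(rhs) if delta_j = 1
        z = 0.5 * (math.log(v0 / v1) + rhs * rhs * (1.0 / v0 - 1.0 / v1)) + log_prior_odds
        p_in = 1.0 / (1.0 + math.exp(-z)) if z >= 0 else math.exp(z) / (1.0 + math.exp(z))
        if rng.random() < p_in:
            c = xtx + st["var_e"] / st["var_b"]
            b_new = rng.gauss(rhs / c, math.sqrt(st["var_e"] / c))
            st["delta"][j] = 1
        else:
            b_new = 0.0
            st["delta"][j] = 0
        if b_new != b_old:
            e = [r + v * (b_old - b_new) for v, r in zip(xj, e)]  # keep residuals current
        st["beta"][j] = b_new
    # pi | delta ~ Beta(a + k, b + m - k).
    k, m = sum(st["delta"]), len(X)
    st["pi"] = rng.betavariate(hyp["a_pi"] + k, hyp["b_pi"] + m - k)
    # Common variance of the non-zero effects (zero effects add nothing to the sum).
    ssb = sum(b * b for b in st["beta"])
    df_b = hyp["nu_b"] + k
    st["var_b"] = scaled_inv_chi2(df_b, (hyp["nu_b"] * hyp["s2_b"] + ssb) / df_b, rng)
    # Residual variance.
    sse = sum(r * r for r in e)
    df_e = hyp["nu_e"] + n
    st["var_e"] = scaled_inv_chi2(df_e, (hyp["nu_e"] * hyp["s2_e"] + sse) / df_e, rng)
    st["e"] = e
    return st
```

Looping over SNPs in pure Python is far too slow for real marker panels, so compiled code is the usual remedy. The residual vector is updated after every SNP, which ensures that each conditional uses the latest effect values.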

Visualization of Workflows

Figure: Bayesian Genomic Analysis Workflow

Figure: BayesCπ/BayesDπ Graphical Model

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Computational Reagents

| Item | Function/Description | Example/Note |
|---|---|---|
| Genotype Dataset | Raw input of genetic variants (SNPs). Quality is critical. | PLINK (.bed/.bim/.fam) or VCF format. Requires stringent QC. |
| Phenotype Dataset | Measured trait values for each individual. | Correct for fixed effects (year, herd, batch) before analysis. |
| GenSel Software Suite | Optimized executable for running Bayesian models on large datasets. | Command-line tool. Requires specific parameter file structure. |
| BGLR R Package | Flexible R environment for Bayesian Generalized Linear Regression. | Enables rapid prototyping and integration with other R stats tools. |
| High-Performance Computing (HPC) Cluster | Essential for MCMC analysis of large datasets (n > 10,000). | Manages long runtimes (hours to days) and memory-intensive tasks. |
| Convergence Diagnostic Tool | Assesses MCMC chain stability and sampling adequacy. | Use the coda R package to compute the Gelman-Rubin statistic and draw trace plots. |
| Genetic Relationship Matrix (GRM) | Optional input to account for population structure/polygenic background. | Can be calculated from genotypes and fitted as an extra random effect. |

Application Notes

Genomic Prediction (GP) for complex traits is a cornerstone of modern quantitative genetics, enabling the estimation of breeding values or disease risk from dense genome-wide marker panels. Traits with a polygenic architecture are influenced by many quantitative trait loci (QTLs), each with a small effect. Within the thesis context of evaluating BayesCπ and BayesDπ for scenarios with an unknown number of true QTLs, these methods offer a distinct advantage over traditional GBLUP or single-SNP models: they estimate π, the proportion of markers with non-zero effects, from the data. BayesCπ assumes that a proportion (1 − π) of markers have zero effect and that the remaining markers share a common effect variance, while BayesDπ additionally gives each non-zero marker its own variance, allowing a more flexible fit to the unknown distribution of QTL effects.

Current State: Recent advances leverage large-scale biobank data (e.g., UK Biobank) and improved computational algorithms. The integration of GP in drug development is growing, particularly in pharmacogenomics for predicting patient-specific drug response phenotypes.

Key Findings from Recent Literature:

- BayesDπ often demonstrates superior predictive accuracy for traits where a small subset of variants have moderately larger effects amidst a sea of very small effects.

- Computational efficiency remains a challenge; however, algorithmic improvements like Gibbs sampling with data augmentation are widely adopted.

- The predictive performance is highly dependent on the genetic architecture of the target trait, heritability, and the size and relatedness of the reference population.

Protocols for Genomic Prediction using BayesCπ/BayesDπ

Protocol 1: Data Preparation & Quality Control

Objective: To prepare genotype and phenotype data for genomic prediction analysis.

Materials:

- Genotype data (e.g., SNP array or imputed sequence-level data in PLINK format).

- Phenotype data (cleaned, with appropriate corrections for fixed effects like age, sex, population structure).

- High-performance computing (HPC) environment.

Methodology:

- Genotype QC: Filter markers based on call rate (>95%), minor allele frequency (>0.01), and Hardy-Weinberg equilibrium (p > 1e-6). Filter individuals for call rate (>97%).

- Phenotype QC: Remove outliers (e.g., values beyond ±3.5 standard deviations from the mean). Apply appropriate transformations (e.g., logarithmic) to achieve normality if required.

- Data Integration: Merge genotype and phenotype files, ensuring correct individual matching.

- Population Structure: Calculate principal components (PCs) using genotype data to account for population stratification. Include top PCs as covariates in the model if needed.

- Training/Validation Partition: Randomly split the data into a training set (typically 80-90%) for model development and a validation set (10-20%) for testing prediction accuracy.
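The SNP QC, outlier removal, and partitioning steps above might look like this in a minimal Python sketch. It assumes genotypes are stored as per-SNP lists coded 0/1/2, with None for missing calls, and it applies the thresholds listed above. One substitution is made: a 1-df chi-square HWE test replaces the exact test that tools such as PLINK use by default. Individual-level call-rate filtering, data merging, and PCA are omitted, and the function names are hypothetical.

```python
import math
import random
import statistics


def snp_qc(snps, min_call=0.95, min_maf=0.01, min_hwe_p=1e-6):
    """Return indices of SNPs passing call-rate, MAF and HWE filters.

    Each SNP is a list of genotypes coded 0/1/2, with None for a missing call.
    """
    keep = []
    for j, g in enumerate(snps):
        called = [x for x in g if x is not None]
        if not called or len(called) / len(g) <= min_call:
            continue
        n = len(called)
        p = sum(called) / (2 * n)              # frequency of the counted allele
        if min(p, 1 - p) <= min_maf:
            continue
        obs = [called.count(0), called.count(1), called.count(2)]
        exp = [n * (1 - p) ** 2, 2 * n * p * (1 - p), n * p ** 2]
        chi2 = sum((o - e) ** 2 / e for o, e in zip(obs, exp))
        p_hwe = math.erfc(math.sqrt(chi2 / 2))  # upper tail of chi-square with 1 df
        if p_hwe <= min_hwe_p:
            continue
        keep.append(j)
    return keep


def remove_outliers(ids, y, k=3.5):
    """Drop phenotypes lying more than k standard deviations from the mean."""
    m, s = statistics.fmean(y), statistics.stdev(y)
    return [(i, v) for i, v in zip(ids, y) if abs(v - m) <= k * s]


def train_valid_split(ids, frac_train=0.8, seed=1):
    """Random training/validation partition of individual IDs."""
    ids = list(ids)
    random.Random(seed).shuffle(ids)
    cut = round(frac_train * len(ids))
    return ids[:cut], ids[cut:]
```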

Protocol 2: Model Implementation via Gibbs Sampling

Objective: To implement BayesCπ and BayesDπ models for genomic value prediction.

Materials:

- QC-ed genotype matrix (`X`) and phenotype vector (`y`).

- Software: custom R/Python scripts interfacing with compiled sampling routines, or specialized packages such as BGLR or JM.

Methodology (BayesCπ):

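- Model Specification: Define the statistical model

  $$\mathbf{y} = \mathbf{1}\mu + \mathbf{X}\mathbf{g} + \mathbf{e},$$

  where $\mathbf{y}$ is the vector of phenotypes, $\mu$ is the overall mean, $\mathbf{X}$ is the genotype matrix (coded as 0, 1, 2), $\mathbf{g}$ is the vector of SNP effects, and $\mathbf{e}$ is the residual vector.

- Prior Specifications:

  - $g_i \mid \pi, \sigma_g^2 \sim (1-\pi)\,\delta_0 + \pi\,N(0, \sigma_g^2)$, i.i.d. across SNPs, where $\delta_0$ is a point mass at zero.

  - $\pi \sim \text{Beta}(p_0, \pi_0)$ (often $p_0 = 1$, $\pi_0 = 0.5$, or estimated).

  - $\sigma_g^2 \sim \text{Scale-inv-}\chi^2(\nu_g, S_g^2)$.

  - $\sigma_e^2 \sim \text{Scale-inv-}\chi^2(\nu_e, S_e^2)$, with hyperparameters set separately from those of $\sigma_g^2$.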

- Gibbs Sampling:

  - Initialize all parameters.

  - Sample each $g_i$ jointly with its indicator variable (whether $g_i$ is zero or not) from its full conditional posterior distribution, a mixture of a point mass at zero and a normal distribution.

  - Sample $\pi$ from its Beta posterior.

  - Sample the variance components $\sigma_g^2$ and $\sigma_e^2$.

  - Repeat for a large number of cycles (e.g., 50,000), discarding the first 20% as burn-in.

- Posterior Inference: Calculate the posterior mean of the SNP effects ($\hat{\mathbf{g}}$) and the genomic estimated breeding values (GEBVs $= \mathbf{X}\hat{\mathbf{g}}$).
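Simulating directly from the BayesCπ generative model is a common way to sanity-check a sampler before applying it to real data. The sketch below is a hypothetical illustration under stated assumptions: allele frequencies are drawn from Uniform(0.05, 0.5), genotypes follow Hardy-Weinberg proportions, and each g_i is exactly zero with probability 1 − π or drawn from N(0, σ_g²) otherwise. The product Xg gives the true genetic values; applying the same product to posterior means ĝ gives GEBVs.

```python
import random


def simulate_bayesc_data(n, m, pi, var_g, var_e, mu=0.0, seed=0):
    """Simulate y = 1*mu + X g + e with a spike-and-slab prior on g (hypothetical helper)."""
    rng = random.Random(seed)
    # Assumed allele frequencies; each genotype is the sum of two Bernoulli(p) alleles (0/1/2).
    freqs = [rng.uniform(0.05, 0.5) for _ in range(m)]
    X = [[(rng.random() < p) + (rng.random() < p) for p in freqs] for _ in range(n)]
    # g_i = 0 with probability 1 - pi, otherwise g_i ~ N(0, var_g).
    g = [rng.gauss(0.0, var_g ** 0.5) if rng.random() < pi else 0.0 for _ in range(m)]
    bv = [sum(x * gi for x, gi in zip(row, g)) for row in X]   # true genetic values X g
    y = [mu + b + rng.gauss(0.0, var_e ** 0.5) for b in bv]
    return X, g, bv, y
```

Because the true π and g are known in such a simulation, the posterior mean of π and the correlation between GEBVs and bv can be checked directly.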

Methodology (BayesDπ): The protocol is identical to BayesCπ, except in the prior for g_i. In BayesDπ, each non-zero SNP effect has its own variance drawn from a common scaled inverse-χ² prior, allowing for a more heavy-tailed distribution of effects.
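For BayesDπ, the common-variance step of the BayesCπ sampler is replaced by locus-specific variance draws, and the SNP-effect step then uses σ_j² in place of a shared σ_g². The sketch below assumes σ_j² ~ Scale-inv-χ²(ν, S²) a priori. As an additional assumption, it places a Gamma(shape0, rate0) prior on the scale S², so that S² can be updated with a conjugate Gamma draw. SNPs with δj = 0 receive a draw from the prior. The names and hyperparameters are hypothetical.

```python
import random


def scaled_inv_chi2(df, scale, rng):
    """Draw from Scale-inv-chi^2(df, scale) as df * scale / chi^2_df."""
    return df * scale / rng.gammavariate(df / 2.0, 2.0)


def update_locus_variances(beta, delta, nu, s2, rng):
    """Locus-specific variances: posterior draw if delta_j = 1, prior draw otherwise."""
    out = []
    for b, d in zip(beta, delta):
        if d:
            # One non-zero effect adds one degree of freedom and b^2 to the sum of squares.
            out.append(scaled_inv_chi2(nu + 1, (nu * s2 + b * b) / (nu + 1), rng))
        else:
            out.append(scaled_inv_chi2(nu, s2, rng))
    return out


def update_scale(variances, nu, shape0, rate0, rng):
    """S^2 | sigma^2 ~ Gamma(shape0 + m*nu/2, rate0 + (nu/2) * sum(1/sigma_j^2)).

    Assumes a Gamma(shape0, rate0) prior on S^2 (shape/rate parameterization).
    """
    shape = shape0 + len(variances) * nu / 2.0
    rate = rate0 + 0.5 * nu * sum(1.0 / v for v in variances)
    return rng.gammavariate(shape, 1.0 / rate)  # gammavariate expects a scale, i.e. 1/rate
```

Because each σ_j² is informed by a single effect, the posterior for a locus variance stays close to its prior. That is why estimating the shared scale S² matters for how heavy-tailed the fitted effect distribution becomes.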

Protocol 3: Model Validation & Accuracy Assessment

Objective: To evaluate the predictive performance of the fitted models.

Methodology:

- Prediction: Apply the SNP effect estimates from the training set to the genotype data of the validation set to predict phenotypes (ŷ_val).

- Accuracy Calculation: Correlate the predicted values (ŷ_val) with the observed phenotypes (y_val). This correlation is the prediction accuracy.

- Bias Assessment: Regress the observed values (y_val) on the predicted values (ŷ_val). The slope of this regression indicates prediction bias (ideal slope = 1).
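The three validation steps reduce to a matrix-vector product, a Pearson correlation, and a least-squares slope. The minimal Python sketch below assumes the intercept μ̂ and SNP effects ĝ come from the training run and that the validation genotypes use the same coding and centering as the training genotypes. The function names are hypothetical.

```python
import math


def predict(X_val, g_hat, mu_hat):
    """y_hat = mu_hat + X_val g_hat for each validation individual."""
    return [mu_hat + sum(x * g for x, g in zip(row, g_hat)) for row in X_val]


def accuracy_and_bias(y_obs, y_hat):
    """Pearson r between y_obs and y_hat, and the slope of y_obs regressed on y_hat."""
    n = len(y_obs)
    my, mh = sum(y_obs) / n, sum(y_hat) / n
    sxy = sum((a - my) * (b - mh) for a, b in zip(y_obs, y_hat))
    sxx = sum((b - mh) ** 2 for b in y_hat)
    syy = sum((a - my) ** 2 for a in y_obs)
    r = sxy / math.sqrt(sxx * syy)
    slope = sxy / sxx  # regression of observed on predicted; 1 means unbiased scaling
    return r, slope
```

A slope above 1 means the predictions are too compressed (over-shrunk). A slope below 1 means they are too dispersed.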

Data Tables

Table 1: Comparative Performance of BayesCπ and BayesDπ on Simulated Traits

| Trait Architecture (QTL Number) | Heritability (h²) | Method | Prediction Accuracy (r) | Computation Time (hrs) |
|---|---|---|---|---|
| Highly Polygenic (10,000) | 0.5 | BayesCπ | 0.68 ± 0.02 | 12.5 |
| | | BayesDπ | 0.69 ± 0.02 | 14.1 |
| Moderately Polygenic (100) | 0.3 | BayesCπ | 0.52 ± 0.03 | 10.8 |
| | | BayesDπ | 0.58 ± 0.03 | 12.5 |
| Mixed (10 large + 990 small) | 0.4 | BayesCπ | 0.61 ± 0.02 | 11.7 |
| | | BayesDπ | 0.65 ± 0.02 | 13.3 |

Note: Simulation based on n=5,000 individuals, p=50,000 markers. Accuracy reported as mean ± sd over 20 replicates.

Table 2: Key Research Reagent Solutions

| Item | Function in GP Research |
|---|---|
| Genotyping Array (e.g., Illumina Infinium) | Provides high-throughput, cost-effective genome-wide SNP data for constructing the X matrix. |
| Imputation Server (e.g., Michigan Imputation Server) | Increases marker density from array data to whole-genome sequence variants, improving resolution. |
| BGLR R Package | Provides efficient implementations of various Bayesian regression models, including BayesCπ. |
| PLINK Software | Industry-standard for genotype data management, quality control, and basic association analysis. |
| High-Performance Computing Cluster | Essential for running computationally intensive MCMC chains for thousands of individuals and markers. |