Bayesian Alphabet in Genomic Prediction: From BLUP to Machine Learning Integration for Precision Medicine


Abstract

This article provides a comprehensive guide to Bayesian alphabet models for genomic prediction, tailored for researchers and drug development professionals. We first establish the foundational principles, contrasting the Bayesian paradigm with traditional BLUP methods. We then detail core methodologies (BayesA, BayesB, BayesC, etc.) and their implementation for complex trait prediction. Practical sections address computational challenges, model selection, and hyperparameter optimization. Finally, we present rigorous validation frameworks and comparative analyses against machine learning alternatives, concluding with implications for accelerating genomic selection in breeding and translating polygenic risk scores to clinical drug development.

Beyond BLUP: Understanding the Bayesian Alphabet Revolution in Genomic Prediction

Genomic Best Linear Unbiased Prediction (GBLUP) has been a cornerstone of genomic prediction. However, it assumes that all marker effects are drawn from a single normal distribution with a common variance, which limits its accuracy for complex traits influenced by a few Quantitative Trait Loci (QTL) with large effects amid many with small effects. This spurred the adoption of Bayesian Alphabet models (BayesA, BayesB, BayesCπ, etc.), which allow marker-specific variances and thereby combine variable selection with differential shrinkage. These properties matter for trait prediction in plant, animal, and human disease genomics whenever effect sizes are heterogeneous.

Quantitative Comparison: GBLUP vs. Bayesian Alphabet Models

Table 1: Predictive Performance Metrics (Simulated & Real Data Studies)

| Model / Characteristic | Assumption on Marker Effects | Trait Architecture Suitability | Computational Demand | Key Advantage | Reported Predictive Accuracy* (Increase over GBLUP) |

|---|---|---|---|---|---|

| GBLUP | Equal variance, normal distribution | Highly polygenic, infinitesimal | Low to Moderate | Computational speed, BLUP properties | Baseline (0%) |

| BayesA | Scaled-t effects (marker-specific variances) | Mixed effect sizes | High | Heavy-tailed shrinkage | +1.5% to +4.0% |

| BayesB | Mixture (some effects zero) | Few large, many zero QTL | High | Variable selection | +2.0% to +8.0% for traits with major QTL |

| BayesCπ | Mixture, π estimated | General, unknown QTL number | Very High | Estimates proportion of non-zero effects (π) | +1.8% to +5.5% |

| Bayesian Lasso | Double-exponential prior | Many small, few moderate QTL | High | Continuous shrinkage, correlated variables | +1.0% to +3.5% |

*Accuracy measured as correlation between genomic estimated breeding values (GEBVs) and observed phenotypes in validation sets. Ranges are illustrative from recent literature.

Table 2: Application Contexts in Drug Development & Biomedical Research

| Application Area | GBLUP Limitation | Bayesian Alphabet Solution |

|---|---|---|

| Pharmacogenomics | Poor prediction for drug response driven by rare variants | Models like BayesB/Cπ can down-weight common variants and up-weight rare, functional variants. |

| Complex Disease Risk | Assumes homogeneous genetic architecture across populations | Flexible priors accommodate population-specific effect size distributions. |

| Microbiome Metagenomics | Cannot handle sparse, over-dispersed count data | Bayesian models with negative binomial or zero-inflated priors are directly integrable. |

| Transcriptomic Prediction | Ignores gene network/biological priors | Bayesian methods allow incorporation of pathway information into priors. |

Experimental Protocol: Implementing a Bayesian Alphabet Analysis for Genomic Prediction

Protocol Title: Genome-Wide Prediction of Complex Traits Using BayesCπ

Objective: To perform genomic prediction and estimate marker effects for a complex trait using a Bayesian mixture model with an unknown proportion of nonzero effects.

Materials & Software:

- Genotyped population data (e.g., SNP array or sequencing data in PLINK, VCF format).

- Phenotypic records for training and validation sets.

- High-performance computing (HPC) cluster or server.

- Software: R with the BGLR package, or standalone tools like GS3 or JWAS.

Procedure:

Data Preparation:

- Genotype Quality Control (QC): Filter SNPs for call rate (>95%), minor allele frequency (>0.01), and Hardy-Weinberg equilibrium (p > 10^-6). Impute missing genotypes using software like Beagle.

- Phenotype QC: Correct for relevant fixed effects (e.g., age, sex, batch) using a linear model. Standardize phenotypes to mean = 0, variance = 1.

- Data Partitioning: Randomly split the data into training (∼80%) and validation (∼20%) sets. Ensure family structure is accounted for to avoid inflation of accuracy.
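The marker-level filters above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a replacement for PLINK: the function name `snp_qc`, the 0/1/2 coding with `None` for missing calls, and the use of a 1-df chi-square test for Hardy-Weinberg equilibrium (rather than an exact test) are all choices made here for the example.

```python
import math

def snp_qc(genotypes, min_call_rate=0.95, min_maf=0.01, min_hwe_p=1e-6):
    """Return True if one SNP passes the call-rate, MAF, and HWE filters.

    genotypes: list of 0/1/2 allele counts, with None for missing calls.
    """
    called = [g for g in genotypes if g is not None]
    if not called or len(called) / len(genotypes) <= min_call_rate:
        return False
    n = len(called)
    p = sum(called) / (2 * n)              # frequency of the counted allele
    if min(p, 1 - p) <= min_maf:
        return False
    # Chi-square goodness-of-fit test (1 df) against HWE expectations.
    observed = [called.count(k) for k in (0, 1, 2)]
    expected = [n * (1 - p) ** 2, n * 2 * p * (1 - p), n * p ** 2]
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    p_value = math.erfc(math.sqrt(chi2 / 2))   # upper tail of chi-square(1)
    return p_value > min_hwe_p
```

A SNP with genotype counts close to Hardy-Weinberg proportions passes, while one that is heterozygous in every sample fails the HWE filter, which is the typical signature of a genotyping artifact.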

Model Implementation (Using BGLR in R):

MCMC Diagnostics & Convergence:

- Run multiple chains from different starting points.

- Monitor trace plots of key parameters (e.g., genetic variance, π) to assess convergence. Use Gelman-Rubin diagnostic (potential scale reduction factor < 1.1).

- Ensure effective sample size for parameters is >1000.
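The Gelman-Rubin check can be computed directly from the stored samples of any scalar parameter. The sketch below assumes the chains have equal length and that burn-in has already been discarded; `gelman_rubin` is a name chosen for this example.

```python
from statistics import mean, variance

def gelman_rubin(chains):
    """Potential scale reduction factor for one scalar parameter.

    chains: list of equal-length lists of post-burn-in samples, one per chain.
    """
    n = len(chains[0])
    chain_means = [mean(c) for c in chains]
    within = mean([variance(c) for c in chains])    # W: mean within-chain variance
    between = n * variance(chain_means)             # B: between-chain variance
    pooled = (n - 1) / n * within + between / n     # pooled posterior variance
    return (pooled / within) ** 0.5
```

Values well above 1.1 indicate that the chains have not yet mixed, for example when each chain is still exploring a different region of the posterior.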

Prediction Accuracy Assessment:

- Extract genomic estimated breeding values (fm$yHat) for the validation individuals.

- Calculate the predictive correlation: cor(predicted_yHat[validation], observed_y[validation]).

- Calculate mean squared prediction error (MSPE).
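Both accuracy metrics are simple to compute once predictions and observations for the validation individuals are aligned. The following is a language-neutral sketch in plain Python; the function names are illustrative and the inputs are assumed to be equal-length lists of numbers.

```python
def predictive_correlation(pred, obs):
    """Pearson correlation between predicted and observed values."""
    n = len(pred)
    mp, mo = sum(pred) / n, sum(obs) / n
    sxy = sum((p - mp) * (o - mo) for p, o in zip(pred, obs))
    sxx = sum((p - mp) ** 2 for p in pred)
    syy = sum((o - mo) ** 2 for o in obs)
    return sxy / (sxx * syy) ** 0.5

def mspe(pred, obs):
    """Mean squared prediction error."""
    return sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(pred)
```

The two metrics are complementary: predictions that are perfectly correlated with the observations can still have a large MSPE if they are on the wrong scale.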

Interpretation:

- Inspect the distribution of estimated marker effects. Identify SNPs with largest absolute effect sizes.

- Compare the estimated π to the a priori genetic architecture hypothesis.

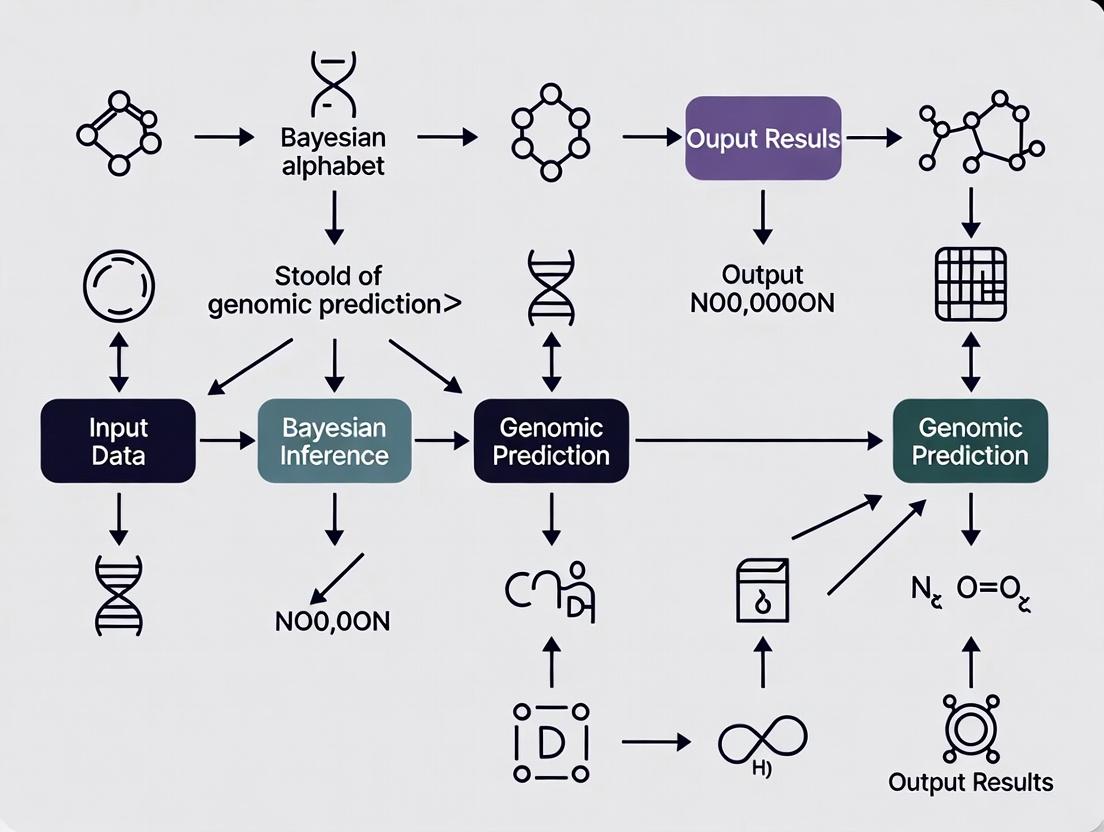

Visualizing the Conceptual and Workflow Shift

Diagram 1: Model Assumptions on SNP Effects

Diagram 2: Bayesian Genomic Prediction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Bayesian Genomic Prediction Research

| Item / Reagent | Function / Purpose in Analysis | Example / Note |

|---|---|---|

| High-Density SNP Array | Provides genome-wide marker genotypes for constructing the genomic relationship matrix (G) or design matrix (X). | Illumina Infinium, Affymetrix Axiom arrays. Low-pass sequencing followed by imputation is a cost-effective alternative. |

| Whole Genome Sequencing (WGS) Data | Gold standard for variant discovery. Enables analysis of all potential causal variants, overcoming array ascertainment bias. | Critical for Bayesian methods to model rare variant effects accurately. |

| MCMC Sampling Software | Performs the computationally intensive Bayesian inference. | BGLR (R), Stan, GCTB, JWAS, BLR. Choice depends on model flexibility and speed. |

| High-Performance Computing (HPC) Resources | Necessary for running long MCMC chains (tens to hundreds of thousands of iterations) on large datasets (n > 10,000, p > 50,000). | Cloud computing (AWS, GCP) or local clusters with parallel processing capabilities. |

| Phenotyping Platforms | Generate high-throughput, accurate phenotypic data for the training population. | Automated imaging, mass spectrometry, clinical diagnostics. Quality is paramount for prediction accuracy. |

| Biological Prior Databases | Sources of information to construct informed priors (e.g., weighting SNPs by functional annotation). | GWAS catalogs, gene ontology (GO) databases, pathway databases (KEGG, Reactome). |

Within the broader thesis on Bayesian alphabet models for genomic prediction, understanding the core principles of prior specification, likelihood construction, and posterior computation is fundamental. These principles underpin models (e.g., BayesA, BayesB, BayesCπ) used to predict complex traits from high-density genomic markers, directly impacting genomic selection in agriculture and polygenic risk score development in human medicine.

Foundational Principles in Genomic Prediction

Mathematical Framework

The Bayesian paradigm updates prior belief with observed data to form a posterior distribution, as formalized by Bayes' theorem:

Posterior ∝ Likelihood × Prior

In genomic prediction, for a vector of marker effects (α) given observed phenotypic data (y) and genotype matrix (X), this becomes: P(α | y, X) ∝ P(y | X, α) × P(α)
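For the simplest member of the family, a normal prior with a common variance, the posterior is available in closed form, which makes the role of the prior explicit. The derivation below assumes centered phenotypes, residuals e ~ N(0, Iσ_e²), a prior α ~ N(0, Iσ_α²), and known variances; the symbols σ_e², σ_α², and λ are introduced here for illustration.

```latex
\begin{aligned}
P(\alpha \mid y, X) &\propto
  \exp\!\left(-\frac{(y - X\alpha)^\top (y - X\alpha)}{2\sigma_e^2}\right)
  \exp\!\left(-\frac{\alpha^\top \alpha}{2\sigma_\alpha^2}\right) \\
\alpha \mid y, X &\sim N\!\left(\hat{\alpha},\; \sigma_e^2\,(X^\top X + \lambda I)^{-1}\right),
\qquad \hat{\alpha} = (X^\top X + \lambda I)^{-1} X^\top y,
\qquad \lambda = \frac{\sigma_e^2}{\sigma_\alpha^2}
\end{aligned}
```

The posterior mean is the ridge-regression (RR-BLUP) solution: a smaller prior variance σ_α² gives a larger λ and stronger shrinkage toward zero. The other alphabet models replace the normal prior with heavier-tailed or mixture priors, for which no closed form exists and MCMC is used instead.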

Table 1: Core Bayesian Alphabet Models for Genomic Prediction

| Model | Prior on Marker Effects (α) | Key Assumption | Typical Application |

|---|---|---|---|

| RR-BLUP (GBLUP-equivalent) | Normal with common variance | All marker effects share one variance, so every marker is shrunk equally. | Baseline genomic heritability estimation. |

| BayesA | Scaled-t distribution | Many small effects, few larger ones; heavy-tailed. | Capturing moderate-effect QTL. |

| BayesB | Mixture: Spike-Slab (point mass at zero + scaled-t) | Many markers have zero effect; sparse architecture. | Genomic selection for traits with major genes. |

| BayesC/Cπ | Mixture: Spike-Slab (point mass at zero + normal) | π (proportion of zero effects) is fixed in BayesC and estimated from the data in BayesCπ. | General-purpose prediction; variable selection. |

| Bayesian LASSO | Double Exponential (Laplace) | Strong shrinkage of small effects to zero. | Handling high collinearity among markers. |

Application Notes & Protocols

Protocol: Setting Informative Priors for Polygenic Risk Scores (PRS)

Objective: Incorporate prior knowledge from previous Genome-Wide Association Studies (GWAS) into a Bayesian genomic prediction model for disease risk.

Materials:

- Genotype data (imputed, QCed)

- Phenotypic disease status (case/control)

- Summary statistics from independent GWAS.

Procedure:

- Prior Definition: For each SNP i, define the prior mean (μ_i) and variance (σ_i²) for its effect size based on external GWAS beta coefficients and standard errors. Use a normal prior: α_i ~ N(μ_i, σ_i²).

- Likelihood Construction: For N individuals, model the log-odds of disease using a logistic likelihood: y_j ~ Bernoulli(logit⁻¹(β_0 + Xjᵀα)), where Xj is the genotype vector for individual j.

- Posterior Computation: Employ a Markov Chain Monte Carlo (MCMC) sampler (e.g., Gibbs sampling with Metropolis-Hastings steps for logistic models) to draw samples from P(α | y, X, μ, σ²).

- Posterior Summary: Calculate the posterior mean of α as the PRS weights. Validate predictive accuracy (AUC) in a held-out cohort.
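The target density of this protocol can be written down directly. The sketch below evaluates the log posterior (logistic likelihood plus the GWAS-informed normal priors, with a flat prior on the intercept assumed here) and performs one random-walk Metropolis update of α. The random-walk proposal is a simplification chosen for brevity, not the Gibbs-within-Metropolis-Hastings scheme named in the protocol, and the function names and `step` size are illustrative.

```python
import math
import random

def log_posterior(alpha, beta0, X, y, mu, sigma2):
    """Log posterior up to a constant: logistic likelihood + N(mu_i, sigma2_i) priors."""
    lp = 0.0
    for x_j, y_j in zip(X, y):
        eta = beta0 + sum(x * a for x, a in zip(x_j, alpha))
        # Bernoulli log-likelihood y*eta - log(1 + exp(eta)), written stably
        lp += y_j * eta - (max(eta, 0.0) + math.log1p(math.exp(-abs(eta))))
    for a, m, s2 in zip(alpha, mu, sigma2):
        lp += -0.5 * math.log(2 * math.pi * s2) - (a - m) ** 2 / (2 * s2)
    return lp

def metropolis_step(alpha, beta0, X, y, mu, sigma2, step=0.05, rng=random):
    """One random-walk Metropolis update of the whole effect vector."""
    proposal = [a + rng.gauss(0.0, step) for a in alpha]
    log_ratio = (log_posterior(proposal, beta0, X, y, mu, sigma2)
                 - log_posterior(alpha, beta0, X, y, mu, sigma2))
    if rng.random() < math.exp(min(0.0, log_ratio)):
        return proposal      # accept
    return alpha             # reject and keep the current value
```

Repeating `metropolis_step` many times and averaging the retained draws gives the posterior mean used as PRS weights. For thousands of SNPs a whole-vector proposal mixes poorly, so per-SNP or gradient-based updates would be needed in practice.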

Protocol: Bayesian Variable Selection for Genomic Selection in Plant Breeding

Objective: Implement a BayesB model to identify markers with non-zero effects on yield.

Materials:

- Plant genotype data (e.g., SNP array)

- Phenotypic yield data from a training population.

Procedure:

- Model Specification:

- Likelihood: y = 1μ + Xα + e, with e ~ N(0, Iσe²).

- Prior for αi: αi | σi², π ~ {0 with probability π; N(0, σi²) with probability (1-π)}.

- Prior for σi²: σi² ~ χ⁻²(ν, S).

- Prior for π: π ~ Beta(p, q), often setting p and q for a weakly informative prior.

- MCMC Sampling: Run a Gibbs sampler. Key steps include:

- Sample an indicator variable (δi) for whether αi is zero or non-zero.

- Sample non-zero αi from a conditional normal distribution.

- Sample σi² from a conditional inverse-chi-square distribution.

- Sample π from a Beta distribution conditional on the number of non-zero effects.

- Convergence Diagnostics: Monitor trace plots and calculate Gelman-Rubin statistics for key parameters (e.g., genetic variance).

- Prediction: Use the posterior mean of marker effects to calculate genomic estimated breeding values (GEBVs) for selection candidates: GEBV = Xcandidate × α̂post.mean.
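The final prediction step is a matrix-vector product between the candidate genotypes and the posterior mean of the marker effects. A minimal sketch, assuming the stored MCMC samples are available as a list of effect vectors (the function names are illustrative):

```python
def posterior_mean_effects(samples):
    """Average the stored MCMC samples of the marker-effect vector."""
    n = len(samples)
    return [sum(s[j] for s in samples) / n for j in range(len(samples[0]))]

def gebv(X_candidates, alpha_hat):
    """GEBV for each candidate: genotype row times posterior-mean effects."""
    return [sum(x * a for x, a in zip(row, alpha_hat)) for row in X_candidates]
```

For example, with two stored samples `[[1.0, 0.0], [3.0, 0.0]]` the posterior mean is `[2.0, 0.0]`, and candidates with genotypes `[0, 2]`, `[1, 1]`, and `[2, 0]` receive GEBVs of 0.0, 2.0, and 4.0. The candidate genotypes must be coded and centered exactly as in the training data.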

Visualizations

Bayesian Inference Core Workflow

Bayesian Genomic Prediction Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for Bayesian Genomic Analysis

| Item / Reagent | Function / Purpose |

|---|---|

| High-Density SNP Array or Whole-Genome Sequencing Data | Provides the genotype matrix (X), the foundational input data. |

| QCed Phenotypic Data | The observed outcome vector (y); requires normalization for continuous traits. |

| Statistical Software (R/Python) | Platform for data manipulation, visualization, and interfacing with specialized Bayesian tools. |

| Bayesian Analysis Software (e.g., BGLR, GCTA, Stan, JAGS) | Specialized packages that implement efficient MCMC samplers for high-dimensional genomic models. |

| High-Performance Computing (HPC) Cluster | Enables feasible computation time for MCMC chains on large datasets (n > 10,000, p > 100,000). |

| GWAS Summary Statistics (for informative priors) | Provides external evidence to set prior means and variances for marker effects. |

| Reference Genome Assembly | Enables accurate mapping of markers and biological interpretation of identified loci. |

Within the framework of a thesis on Bayesian alphabet models for genomic prediction, these statistical methods are pivotal for dissecting the genetic architecture of complex traits and accelerating genomic selection in plant, animal, and disease research. They differ primarily in their prior assumptions about the distribution of marker effects, which influences their performance for traits with varying genetic architectures (e.g., many small effects vs. a few large effects).

Core Model Specifications and Comparative Data

Table 1: Comparative Overview of Key Bayesian Alphabet Models for Genomic Prediction

| Model | Prior on Marker Effects (β) | Key Hyperparameters | Assumption on Genetic Architecture | Best Suited For |

|---|---|---|---|---|

| BayesA | Scaled-t (or related distributions) | Degrees of freedom (ν), scale parameter (S²) | Many markers have small/null effects; some have large. Infinitely many loci. | Traits influenced by a few QTLs with moderate to large effects. |

| BayesB | Mixture: spike-and-slab (point mass at zero with probability π; scaled-t slab with probability 1-π) | Mixing proportion (π), ν, S² | A proportion (π) of markers have zero effect; rest follow a t-distribution. Finite number of QTLs. | Traits with a sparse genetic architecture (few effective QTLs). |

| BayesC | Mixture: spike-and-slab (point mass at zero with probability π; common-variance normal slab with probability 1-π) | Mixing proportion (π), common variance (σ²β) | A proportion (π) of markers have zero effect; rest share a common normal variance. | Similar to BayesB, but with a simpler, Gaussian slab. |

| BayesCπ | Mixture with estimated π | Mixing proportion (π) estimated, σ²β | Similar to BayesC, but the proportion of non-zero markers (1-π) is learned from the data. | General use when sparsity level is unknown a priori. |

| BayesR | Mixture of Normals | Multiple variance classes (e.g., σ²β=0, 0.0001, 0.001, 0.01), their proportions | Markers can be allocated to different effect size classes, including zero. | Complex traits with a spectrum of effect sizes (small, medium, large). |

Table 2: Typical Quantitative Outcomes from a Simulation Study Comparing Models

Based on published simulation studies for a trait with 10 large and 1000 small QTLs.

| Model | Prediction Accuracy (r) | Bias (Slope) | Computational Demand | Key Reference (Example) |

|---|---|---|---|---|

| BayesA | 0.68 | 0.95 | Medium | Meuwissen et al., 2001 |

| BayesB | 0.72 | 0.98 | High | Habier et al., 2011 |

| BayesC | 0.71 | 0.99 | Medium-High | Kizilkaya et al., 2010 |

| BayesCπ | 0.73 | 1.01 | Medium-High | Habier et al., 2011 |

| BayesR | 0.74 | 1.00 | High | Moser et al., 2015 |

Experimental Protocol: Standard Workflow for Implementing Bayesian Alphabet Models in Genomic Prediction

Objective: To perform genomic prediction for a quantitative trait using a Bayesian alphabet model (e.g., BayesCπ) and evaluate its predictive accuracy.

Materials: See "The Scientist's Toolkit" below.

Procedure:

Data Preparation & Quality Control (QC):

- Genotypic Data: Input raw genotype calls (e.g., SNP chip data). Perform QC: filter markers based on call rate (>95%), minor allele frequency (>0.01), and Hardy-Weinberg equilibrium (p > 1e-6). Code genotypes as 0, 1, 2 (homozygote, heterozygote, alternate homozygote). Impute any missing genotypes using standard software.

- Phenotypic Data: Input trait measurements. Adjust for fixed effects (e.g., year, location, herd, sex) using a linear model to obtain residuals, which become the corrected phenotypes (y) for analysis. Alternatively, include fixed effects directly in the Bayesian model.

- Data Partitioning: Split the total dataset (N) into a training set (Ntr) for model training and a validation set (Nval) for testing prediction accuracy. A typical split is 80%/20%.

Model Implementation (Gibbs Sampling):

- Define Model Equation: y = 1μ + Xβ + e, where y is the vector of corrected phenotypes, μ is the overall mean, X is the design matrix of standardized genotypes, β is the vector of marker effects, and e is the vector of residuals.

- Set Priors:

- μ ~ N(0, 10^6)

- e ~ N(0, Iσ²e) with σ²e ~ χ⁻²(νe, S²e)

- For BayesCπ: β_j | π, σ²β ~ (1-π) N(0, σ²β) + π δ₀, where δ₀ is a point mass at 0.

- Set priors for hyperparameters: π ~ Beta(p0, π0), σ²β ~ χ⁻²(νβ, S²β).

- Initialize Chains: Set starting values for all parameters (often at 0 or their prior mean).

- Run Gibbs Sampler: For each iteration (t = 1 to T, where T is total iterations, e.g., 50,000):

- Sample μ from its full conditional distribution (normal).

- Sample each β_j from its full conditional (a mixture distribution).

- Sample the indicator variable for each SNP (whether it is in or out of the model).

- Sample the variance components σ²β and σ²e from their full conditionals (inverse-chi-squared).

- Sample the mixing proportion π from its full conditional (Beta).

- Burn-in & Thinning: Discard the first B iterations (e.g., B=20,000) as burn-in. To reduce autocorrelation, thin the chain by storing every k-th sample (e.g., k=10).
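The sampler described above can be sketched compactly in pure Python. This is a teaching sketch under several assumptions rather than a production implementation: genotype columns are centered and polymorphic, μ has a flat prior, the inclusion indicator and effect of each marker are drawn jointly (indicator first), and the starting values and prior scales are set from the phenotypic variance purely for illustration. The function name and arguments are hypothetical.

```python
import math
import random

def bayes_cpi_gibbs(X, y, n_iter=2000, burn_in=500, thin=5, seed=1,
                    nu_b=4.0, nu_e=4.0, a=1.0, b=1.0):
    """Minimal BayesCpi Gibbs sampler; pi is the proportion of zero effects."""
    rng = random.Random(seed)
    n, p = len(y), len(X[0])
    cols = [[row[j] for row in X] for j in range(p)]
    xpx = [sum(v * v for v in c) for c in cols]
    mu = sum(y) / n
    var_y = sum((v - mu) ** 2 for v in y) / (n - 1)
    s2_e, s2_b = var_y / 2, var_y / 2 / p      # illustrative starting values
    scale_e, scale_b = s2_e, s2_b              # illustrative prior scales
    pi, beta = 0.5, [0.0] * p
    resid = [v - mu for v in y]
    sum_beta, sum_incl, sum_pi, kept = [0.0] * p, [0.0] * p, 0.0, 0
    for it in range(n_iter):
        # mu | rest ~ N(mean(y - X beta), s2_e / n)
        new_mu = rng.gauss(mu + sum(resid) / n, math.sqrt(s2_e / n))
        resid = [r + mu - new_mu for r in resid]
        mu = new_mu
        n_in = 0
        for j in range(p):
            x = cols[j]
            if beta[j] != 0.0:                 # remove marker j from the fit
                resid = [r + xi * beta[j] for r, xi in zip(resid, x)]
            rhs = sum(xi * r for xi, r in zip(x, resid))
            # log odds of inclusion from the marginal density of rhs
            v0 = xpx[j] * s2_e
            v1 = xpx[j] ** 2 * s2_b + v0
            log_odds = (math.log(1 - pi) - math.log(pi) + 0.5 * math.log(v0 / v1)
                        + 0.5 * rhs * rhs * (1 / v0 - 1 / v1))
            p_in = 1.0 / (1.0 + math.exp(-log_odds)) if log_odds > -700 else 0.0
            if rng.random() < p_in:
                c = xpx[j] + s2_e / s2_b
                beta[j] = rng.gauss(rhs / c, math.sqrt(s2_e / c))
                resid = [r - xi * beta[j] for r, xi in zip(resid, x)]
                n_in += 1
            else:
                beta[j] = 0.0
        # variance components: scaled inverse chi-square full conditionals
        ss_b = sum(bj * bj for bj in beta)
        s2_b = (ss_b + nu_b * scale_b) / rng.gammavariate((nu_b + n_in) / 2, 2.0)
        ss_e = sum(r * r for r in resid)
        s2_e = (ss_e + nu_e * scale_e) / rng.gammavariate((nu_e + n) / 2, 2.0)
        # pi | rest ~ Beta(a + number excluded, b + number included)
        pi = rng.betavariate(a + p - n_in, b + n_in)
        pi = min(max(pi, 1e-12), 1 - 1e-12)
        if it >= burn_in and (it - burn_in) % thin == 0:
            kept += 1
            sum_pi += pi
            for j in range(p):
                sum_beta[j] += beta[j]
                sum_incl[j] += beta[j] != 0.0
    return {"beta": [s / kept for s in sum_beta],
            "pip": [s / kept for s in sum_incl],
            "pi": sum_pi / kept}
```

The returned dictionary holds the posterior mean effects, the posterior inclusion probability of each marker, and the posterior mean of π. Pure Python is far too slow for real marker panels; the point of the sketch is only to make each full conditional concrete.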

Prediction & Validation:

- Calculate GEBVs: For each stored sample from the posterior, calculate genomic estimated breeding values (GEBVs) for individuals in the validation set: GEBVval = Xval β̂. The posterior mean of these across all stored samples is the final predicted genetic value.

- Assess Accuracy: Correlate the predicted GEBVs (from the previous step) with the observed corrected phenotypes (y_val) in the validation set. This correlation (r) is the prediction accuracy.

- Assess Bias: Regress y_val on the GEBVs. The slope of the regression indicates bias (slope=1 is unbiased, <1 is inflationary, >1 is deflationary).
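The bias check is an ordinary least-squares slope of the observed values on the predictions. A minimal sketch (the function name is illustrative):

```python
def regression_slope(y_val, gebv):
    """Slope of the regression of observed phenotypes on predicted GEBVs."""
    n = len(gebv)
    mg, my = sum(gebv) / n, sum(y_val) / n
    cov = sum((g - mg) * (v - my) for g, v in zip(gebv, y_val))
    var = sum((g - mg) ** 2 for g in gebv)
    return cov / var
```

If the GEBVs are `[1, 2, 3]` and the observed values are `[0.5, 1.0, 1.5]`, the slope is 0.5: the predictions are spread twice as widely as the phenotypes support, which is the inflationary case.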

Visualizing Model Relationships and Workflow

Bayesian Genomic Prediction Workflow

Alphabet Model Prior Assumption Relationships

The Scientist's Toolkit

Table 3: Essential Research Reagents and Tools for Bayesian Genomic Prediction Studies

| Item | Function/Description | Example/Supplier |

|---|---|---|

| High-Density SNP Array | Provides genome-wide marker genotypes for constructing the X matrix. | Illumina BovineHD (777K), PorcineGGP 50K, etc. |

| Whole-Genome Sequencing Data | Provides the most comprehensive variant discovery for building custom marker sets. | Illumina NovaSeq, PacBio HiFi. |

| Phenotyping Equipment/Assays | For accurate measurement of the target quantitative trait (e.g., disease score, yield, biomarker level). | ELISA kits, automated cell counters, field scales, clinical diagnostics. |

| Statistical Software (Command-Line) | Core platforms for implementing Gibbs samplers for Bayesian models. | BLR (R), BGGE (R), GBC (C++), JMix software suites. |

| High-Performance Computing (HPC) Cluster | Essential for running computationally intensive MCMC chains for large datasets (n > 10,000, p > 50,000). | Local university cluster, cloud services (AWS, Google Cloud). |

| Genotype Phasing/Imputation Software | To infer missing genotypes and haplotypes, improving data quality. | BEAGLE, Eagle2, Minimac4. |

| Data Visualization & Analysis Suite | For QC, results analysis, and creating publication-quality figures. | R with ggplot2, coda, ComplexHeatmap packages; Python with matplotlib, seaborn. |

| Reference Genome Assembly | Essential for aligning sequence data and assigning SNP positions. | Species-specific assembly (e.g., ARS-UCD1.3 for cattle, GRCh38 for human). |

Within the genomic prediction landscape, Bayesian alphabet models (e.g., BayesA, BayesB, BayesR) provide a flexible framework for dissecting complex trait architecture. This application note details protocols for leveraging their key advantages: explicitly modeling heterogeneous marker-specific variances and effectively capturing the contributions of rare genetic variants. These capabilities are critical for increasing prediction accuracy in plant/animal breeding and for identifying functional variants in human disease and drug development research.

Standard genomic BLUP assumes a common variance for all markers, an oversimplification for traits influenced by a few QTLs with large effects amid many with small effects. Bayesian alphabet models overcome this by assigning each marker its own variance parameter, drawn from a specified prior distribution. This allows for modeling marker-specific variances, accommodating the spectrum of effect sizes. Furthermore, by not shrinking large-effect markers excessively, these models are potent tools for capturing rare variant effects, as rare alleles often co-segregate with large phenotypic effects within families or stratified populations.
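The effect of a marker-specific variance on shrinkage can be made explicit with the conditional posterior mean of a single marker effect in a single-site sampler. The expression below assumes the linear model y = 1μ + Xg + e with residual variance σ_e² and, for marker i, a normal prior g_i ~ N(0, σ_i²) conditional on its variance; r_i denotes the phenotype adjusted for all other effects.

```latex
E[g_i \mid \text{rest}] = \frac{x_i^\top r_i}{x_i^\top x_i + \sigma_e^2 / \sigma_i^2},
\qquad r_i = y - 1\mu - \sum_{k \ne i} x_k g_k
```

In GBLUP every marker has the same σ_i², so the penalty term σ_e²/σ_i² is identical for all markers. In BayesA and BayesB a marker that receives a large sampled σ_i² has a penalty near zero, so its estimate approaches the least-squares value, while markers with small σ_i² are shrunk strongly. Because x_iᵀx_i is small for rare alleles, the penalty term dominates for rare variants unless σ_i² is allowed to be large.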

Quantitative Data Synthesis

Table 1: Comparison of Bayesian Alphabet Model Priors and Properties

| Model | Prior on Marker Variance (σ²ᵢ) | Prior on Effect Distribution | Key Advantage for Variance Modeling | Suitability for Rare Variants |

|---|---|---|---|---|

| BayesA | Inverted Chi-Square (χ⁻²) | Student’s t | Allows heavy tails; markers can have large heterogeneous variances. | Moderate. Shrinkage depends on prior degrees of freedom. |

| BayesB | Mixture: (1-π)δ(0) + π(χ⁻²) | Spike-and-slab | Models many markers with zero effect; large variance for a subset. | High. Can assign large effects to included rare variants. |

| BayesCπ | Mixture: (1-π)δ(0) + π(constant) | Normal mixture with unknown π | Estimates proportion π of non-zero effects. | Good. Data estimates π, flexible for variant inclusion. |

| BayesR | Mixture: π₁δ(0) + π₂N(0,σ²₂) + π₃N(0,σ²₃)... | Normal mixtures with multiple variances | Clusters markers into effect-size groups. | Excellent. Can assign rare variants to large-effect clusters. |

Table 2: Empirical Performance in Simulated and Real Data Studies

| Study (Source) | Trait / Population | Model Comparison (Accuracy Gain*) | Key Finding on Rare Variants |

|---|---|---|---|

| Erbe et al. (2012) | Dairy cattle, milk yield | BayesB > GBLUP (+0.04) | Captured rare QTL effects from recent mutations. |

| Habier et al. (2011) | Simulated, high LD | BayesA/B > RR-BLUP | Modeled heterogeneity of variance improved persistence. |

| Moser et al. (2015) | Human, lipid traits | BayesR > GBLUP (+0.07) | Identified rare variants in known loci with large effects. |

| MacLeod et al. (2016) | Beef cattle, feed efficiency | BayesCπ > SSGBLUP | Effectively used sequence-based rare variants. |

*Accuracy measured as correlation between predicted and observed phenotypes.

Experimental Protocols

Protocol 1: Implementing BayesR for Rare Variant Discovery in Case-Control Studies

Objective: To identify rare variants associated with disease risk by clustering markers by effect size.

Materials: Genotype data (e.g., WGS or dense imputed array), phenotype data (case-control), high-performance computing cluster.

Procedure:

- Data Preparation: Perform standard QC on genotypes (call rate, HWE, minor allele frequency (MAF)). Retain rare variants (e.g., MAF < 0.01) for analysis. Align phenotypes.

- Model Specification: Define the BayesR model: y = 1μ + Xg + e.

- y is the vector of phenotypes (0/1 for case-control).

- μ is the overall mean.

- X is the genotype matrix (coded 0,1,2).

- g is the vector of SNP effects, with prior: gᵢ ~ π₁δ(0) + π₂N(0, 0.0001σ²g) + π₃N(0, 0.001σ²g) + π₄N(0, 0.01σ²g).

- Place a Dirichlet prior on the mixing proportions π.

- Assign priors to residual and genetic variance components.

- Gibbs Sampling: Run Markov Chain Monte Carlo (MCMC) for 100,000 iterations, with 20,000 burn-in and thinning interval of 50.

- Sample SNP effects conditional on their assigned effect-size cluster.

- Sample cluster assignment for each SNP.

- Sample the mixing proportions π.

- Sample variance components.

- Post-Processing: Calculate the posterior inclusion probability (PIP) for each SNP. SNPs with PIP > 0.5 and assigned to the largest variance component (0.01σ²g) are prioritized as rare variant candidates.

- Validation: Perform functional enrichment analysis of candidate variants and/or attempt replication in an independent cohort.
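The post-processing step reduces to counting how often each SNP was assigned to each mixture class across the stored samples. In the sketch below, class 0 is the zero-effect class and the last class is the largest-variance component. Treating a SNP as "assigned" to its most frequently sampled class is an interpretation made for this example, and the function names are illustrative.

```python
def summarize_assignments(assignments, n_classes=4):
    """Return (PIP, modal class) per SNP.

    assignments[s][i] is the mixture class (0 = zero effect) of SNP i
    in stored MCMC sample s.
    """
    n_samples = len(assignments)
    summary = []
    for i in range(len(assignments[0])):
        counts = [0] * n_classes
        for sample in assignments:
            counts[sample[i]] += 1
        pip = 1 - counts[0] / n_samples        # probability of a non-zero effect
        modal = counts.index(max(counts))      # most frequently sampled class
        summary.append((pip, modal))
    return summary

def prioritize(summary, n_classes=4, pip_threshold=0.5):
    """Indices of SNPs with PIP above threshold and modal class = largest variance."""
    return [i for i, (pip, modal) in enumerate(summary)
            if pip > pip_threshold and modal == n_classes - 1]
```

With four stored samples `[[0, 3, 1], [0, 3, 0], [1, 3, 0], [0, 2, 0]]`, the first and third SNPs have a PIP of 0.25 and only the second SNP (PIP 1.0, modal class 3) is prioritized.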

Protocol 2: Cross-Validation for Genomic Prediction Accuracy

Objective: To compare the prediction accuracy of BayesB vs. GBLUP for traits with rare variant contributions.

Procedure:

- Data Splitting: Partition the phenotyped and genotyped population into k folds (e.g., 5). For each fold:

- Designate one fold as the validation set.

- Use the remaining k-1 folds as the training set.

- Model Training:

- GBLUP: Build the genomic relationship matrix (G) from the genotypes, estimate the variance components by REML, and predict GEBVs.

- BayesB: Run MCMC (50,000 iterations, 10,000 burn-in) on the training set to estimate SNP effects and variances.

- Prediction & Evaluation: Obtain predicted genetic values for the validation fold. For BayesB, multiply the validation genotypes by the estimated SNP effects; for GBLUP, use the GEBVs predicted for the validation individuals through G.

- Accuracy Calculation: After looping through all folds, compute the correlation between all predicted and observed phenotypes (predictive accuracy). Compare mean accuracy between models using a paired t-test across replicate cross-validation runs.
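The fold bookkeeping is independent of the model being evaluated, so it can be written once and reused for both GBLUP and BayesB. In this sketch `fit_predict` is a hypothetical callback that trains on the training indices and returns one prediction per validation index; it stands in for whichever model is being compared.

```python
import random

def k_fold_indices(n, k=5, seed=0):
    """Randomly partition indices 0..n-1 into k folds of near-equal size."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]

def cross_validate(n, k, fit_predict, seed=0):
    """Collect out-of-fold predictions for every individual."""
    preds = [None] * n
    for fold in k_fold_indices(n, k, seed):
        held_out = set(fold)
        train = [i for i in range(n) if i not in held_out]
        for i, value in zip(fold, fit_predict(train, fold)):
            preds[i] = value
    return preds
```

Using the same `seed` for both models keeps the folds identical, which is what makes a paired comparison valid. Purely random folds ignore family structure; splitting by family or generation would need a different partition function.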

Visualizations

Bayesian Model Gibbs Sampling Workflow

Variant Spectrum to Effect-Size Clustering in BayesR

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources

| Item / Software | Function & Application in Bayesian GP | Key Feature for Marker Variance |

|---|---|---|

| GEMMA | Bayesian linear mixed model solver. | Implements the Bayesian sparse linear mixed model (BSLMM), a spike-and-slab mixture related to BayesCπ; efficient for large datasets. |

| BLR / BGLR R Package | Flexible Bayesian regression models. | Offers BayesA, BayesB, BayesC; customizable priors. |

| BayesR Software | Specialized for mixture of normals model. | Directly outputs cluster probabilities for each SNP. |

| AlphaBayes | Next-gen suite for Bayesian "alphabet" models. | Optimized for WGS data, handles rare variants efficiently. |

| PLINK / QCtools | Genotype data management & QC. | Filters variants by MAF, call rate for pre-analysis setup. |

| High-Performance Cluster | Parallel computing resource. | Enables long MCMC chains for robust variance estimation. |

Application Notes

Within the context of Bayesian alphabet models for genomic prediction, software tools enable the implementation of complex models for estimating genomic heritability, predicting breeding values, and dissecting genetic architecture. The following packages are central to this research domain.

Bayesian Generalized Linear Regression (BGLR)

BGLR is an R package providing a comprehensive framework for fitting Bayesian regression models, including the entire Bayesian alphabet (e.g., BayesA, BayesB, BayesC, Bayesian Lasso, RKHS). It uses Markov Chain Monte Carlo (MCMC) methods for inference and is extensively used in genomic prediction and genome-wide association studies (GWAS) in plant and animal breeding.

GCTA-Bayes

GCTA-Bayes refers here to the Bayesian companion to the GCTA software suite, which the same developers distribute as GCTB. It implements Bayesian mixture models for GWAS and genomic prediction, including BayesCπ and BayesR, and scales to large genomic datasets by assuming that a proportion of markers have zero effect.

Related Packages

Other critical software includes:

- STAN/rstan: A probabilistic programming language for specifying and fitting complex Bayesian models via Hamiltonian Monte Carlo.

- MTG2: Fits multi-trait linear mixed models by REML; useful for estimating genetic correlations alongside Bayesian analyses.

- qgg: An R package for genomic analyses based on GBLUP and Bayesian models.

Table 1: Comparison of Key Bayesian Genomic Prediction Software

| Feature | BGLR | GCTA-Bayes | STAN/rstan | MTG2 |

|---|---|---|---|---|

| Primary Method | MCMC Sampling | Monte Carlo Expectation-Maximization | Hamiltonian Monte Carlo (HMC) | REML (average information) |

| Key Models | Full Alphabet (BayesA, B, Cπ, Lasso, RKHS) | BayesCπ, BayesR | User-defined (extreme flexibility) | Multi-trait linear mixed models |

| Computational Speed | Moderate | Fast | Slow per iteration, efficient sampling | Slow (multi-trait complexity) |

| Ease of Use | High (R interface) | Moderate (command-line) | Low (requires model coding) | Moderate (command-line) |

| Best For | Research, method comparison, single-trait models | Large datasets, single-trait prediction | Custom model development, hierarchical models | Genetic correlation, multi-trait prediction |

| Citation Count (approx.) | ~1,500 | ~800 (for GCTA suite) | ~30,000 (for Stan) | ~150 |

Experimental Protocols

Protocol 1: Standard Genomic Prediction Pipeline Using BGLR

This protocol details the steps for performing genomic prediction and estimating marker effects using the BGLR package in R.

1. Data Preparation:

- Genotype Matrix (X): Code markers as 0, 1, 2 (for homozygous, heterozygous, alternate homozygous). Impute missing values. Center and scale to mean=0 and variance=1.

- Phenotype Vector (y): Adjust phenotypes for fixed effects (e.g., year, location) if necessary. Use residuals for genomic prediction.
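Centering and scaling the genotype matrix is a column-wise operation. The sketch below uses plain Python lists and assumes missing values have already been imputed; setting monomorphic columns to zero is a choice made here to avoid division by zero, and the function name is illustrative.

```python
def standardize_columns(X):
    """Center each marker column to mean 0 and scale to variance 1."""
    n, p = len(X), len(X[0])
    out = [[0.0] * p for _ in range(n)]
    for j in range(p):
        col = [row[j] for row in X]
        m = sum(col) / n
        sd = (sum((v - m) ** 2 for v in col) / (n - 1)) ** 0.5
        for i in range(n):
            # monomorphic markers carry no information and are set to zero
            out[i][j] = (col[i] - m) / sd if sd > 0 else 0.0
    return out
```

The means and standard deviations should be computed once and reused for any new individuals, so that training and prediction genotypes are on the same scale.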

2. Model Specification & Fitting:

3. Output Analysis:

- Extract predicted genomic values: fm$yHat

- Extract marker effects: fm$ETA[[1]]$b

- Calculate prediction accuracy as correlation between fm$yHat and observed y in a validation set.

Protocol 2: Genome-Wide Association Study with GCTA-Bayes

This protocol uses GCTA-Bayes for a sparse Bayesian GWAS to identify significant marker-trait associations.

1. Data Formatting:

- Prepare PLINK binary files (.bed, .bim, .fam) for genotypes.

- Prepare a phenotype file (space-separated) with columns: FID, IID, Phenotype.

2. Command-Line Execution:

3. Result Interpretation:

- The .par file contains estimated SNP effects.

- The .hsq file contains estimated variance components and heritability.

- Significant SNPs are identified by the absolute magnitude of their effect relative to the distribution.

Visualizations

Title: Workflow for Bayesian Genomic Analysis

Title: Bayesian Alphabet Model Graphical Structure

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Bayesian Genomic Prediction

| Item | Function in Research |

|---|---|

| High-Performance Computing (HPC) Cluster | Provides the necessary computational power for running MCMC chains on large genomic datasets (millions of markers x thousands of individuals). |

| R Statistical Environment | Primary interface for data manipulation, analysis (e.g., using BGLR), and visualization. Essential for prototyping. |

| PLINK Software | Industry-standard toolset for processing, managing, and performing quality control on genotype data in binary formats. |

| Linux/Unix Command Line | Required for running efficient command-line tools like GCTA and MTG2, and for job submission on HPC systems. |

| Python (with NumPy, pandas) | Used for advanced data pipeline automation, custom preprocessing scripts, and integrating machine learning workflows. |

| Version Control (Git) | Critical for managing and sharing analysis code, ensuring reproducibility, and collaborative development of custom scripts. |

| Interactive Document Tool (RMarkdown/Jupyter) | Creates reproducible reports that combine narrative, code, and results, essential for documenting complex Bayesian analyses. |

Implementing Bayesian Alphabet Models: A Step-by-Step Guide for Complex Trait Analysis

In genomic prediction research, particularly within the framework of Bayesian alphabet models (e.g., BayesA, BayesB, BayesCπ), the quality and standardization of input data are paramount. These models rely on high-dimensional genomic data and precise phenotypic measurements to estimate marker effects and predict breeding values or disease risk. This protocol outlines standardized procedures for preparing genotypic and phenotypic data to ensure robust, reproducible analyses in drug development and genetic research.

Genotypic Data Standards

Genotypic data typically originates from SNP arrays or next-generation sequencing (NGS). A rigorous QC pipeline is mandatory before analysis.

Table 1: Standard QC Thresholds for Genotypic Data

| QC Metric | Threshold | Action | Rationale for Bayesian Analysis |

|---|---|---|---|

| Sample Call Rate | < 98% | Exclude sample | Prevents bias from poor-quality DNA; ensures reliable likelihood calculations. |

| SNP Call Rate | < 95% | Exclude SNP | Reduces missing data, simplifying the Gibbs sampling process. |

| Minor Allele Frequency (MAF) | < 0.01 | Exclude SNP | Rare variants contribute little to polygenic prediction and can destabilize posterior distributions. |

| Hardy-Weinberg Equilibrium (HWE) p-value | < 1e-06 | Exclude SNP | Flags genotyping errors which can confound true association signals. |

| Sample Heterozygosity | ± 3 SD from mean | Exclude sample | Identifies sample contamination or inbreeding outliers. |
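The call-rate and MAF filters in Table 1 can be sketched directly on a genotype matrix. This is a minimal illustration, assuming genotypes are held in a NumPy array coded 0/1/2 with `np.nan` for missing calls; the function name `qc_filter` is hypothetical, and the HWE and heterozygosity filters are left to dedicated tools such as PLINK.

```python
import numpy as np

def qc_filter(G, sample_cr=0.98, snp_cr=0.95, maf_min=0.01):
    """Return boolean masks (keep_samples, keep_snps) for a genotype matrix.

    G: (n_samples, n_snps) float array coded 0/1/2, np.nan for missing calls.
    Thresholds default to the call-rate and MAF values in Table 1.
    """
    called = ~np.isnan(G)
    # Sample call rate: fraction of SNPs successfully called per individual.
    keep_samples = called.mean(axis=1) >= sample_cr
    Gs = G[keep_samples]
    # SNP call rate, computed on the retained samples only.
    snp_call = (~np.isnan(Gs)).mean(axis=0)
    # Frequency of the counted allele, ignoring missing calls.
    freq = np.nanmean(Gs, axis=0) / 2.0
    maf = np.minimum(freq, 1.0 - freq)
    keep_snps = (snp_call >= snp_cr) & (maf >= maf_min)
    return keep_samples, keep_snps
```

Samples are filtered before SNPs here so that a few poor-quality samples do not cause otherwise good SNPs to be dropped; the opposite order is also used in practice.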

Imputation & Phasing

Missing genotypes must be imputed to create a complete matrix for genomic relationship construction.

Protocol: Pre-phasing and Imputation using Reference Panels

- Input: QC-passed genotype data in PLINK (.bed/.bim/.fam) or VCF format.

- Pre-phasing: Execute SHAPEIT4 or Eagle to estimate haplotype phases, for example: `shapeit4 --input qc_data.vcf --map genetic_map.b38.gch38.txt --region chr20 --output phased_data.vcf`

- Imputation: Use imputation servers (Michigan Imputation Server, TOPMed) or software like Minimac4/Beagle5 with a suitable reference panel (e.g., 1000 Genomes, HRC), for example: `minimac4 --refHaps ref_panel.vcf.gz --haps phased_data.vcf --format GT --prefix imputed_output`

- Post-imputation QC: Filter for imputation accuracy (R² > 0.3) and convert dosages (0-2) to best-guess genotypes if needed by the Bayesian software.

Format Conversion for Analysis

Bayesian genomic prediction software (e.g., GCTB, BGLR, JWAS) requires specific input formats.

Protocol: Creating a Genomic Relationship Matrix (GRM)

- Input: Imputed, QC-passed genotypes.

- Calculate GRM: Use GCTA or PLINK, for example: `gcta64 --bfile genodata --autosome --make-grm --out grm_matrix`

- Alternative: Direct use of genotype matrices (n individuals x m SNPs) is standard in R packages like `BGLR`.
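For intuition about what a GRM contains, the calculation can be sketched in a few lines. The example below assumes a complete (imputed) genotype matrix coded 0/1/2 and uses the common per-SNP standardization by 2p(1-p); the function name `grm` is hypothetical, and this is not a substitute for the optimized GCTA or PLINK implementations.

```python
import numpy as np

def grm(G):
    """Genomic relationship matrix from a complete (n x m) 0/1/2 genotype matrix."""
    p = G.mean(axis=0) / 2.0                 # allele frequency per SNP
    poly = (p > 0) & (p < 1)                 # monomorphic SNPs carry no information
    # Centre each SNP by 2p and scale by its expected standard deviation.
    Z = (G[:, poly] - 2 * p[poly]) / np.sqrt(2 * p[poly] * (1 - p[poly]))
    # Average the per-SNP cross-products over the retained SNPs.
    return Z @ Z.T / poly.sum()
```

Because each column of `Z` is centred, every row of the resulting matrix sums to zero, which is a quick sanity check on the output.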

Genotypic Data Preparation Workflow

Phenotypic Data Standards

Trait Collection & Harmonization

Phenotypes must be precisely defined, consistently measured, and adjusted for non-genetic factors.

Table 2: Essential Metadata for Phenotypic Data

| Metadata Category | Specific Variables | Importance for Genomic Prediction |

|---|---|---|

| Experimental Design | Batch, Run Date, Technician ID | Critical for fitting as fixed effects to reduce noise. |

| Biological Covariates | Age, Sex, Population Structure (PCs) | Adjusts for confounding; population strata must be included to avoid spurious associations. |

| Clinical Covariates | Drug Regimen, Disease Stage, BMI | Isolates the genetic signal of interest (e.g., treatment response). |

| Trait Distribution | Skewness, Kurtosis, Outliers | Informs data transformation (e.g., log, Box-Cox) to meet model normality assumptions. |

Adjustment and Transformation

Protocol: Phenotype Pre-processing for Linear Bayesian Models

- Outlier Removal: Visually inspect (Q-Q plots) and apply statistical thresholds (e.g., ± 4 SD).

- Fixed Effect Correction: Fit a linear model `Phenotype ~ Covariates + Batch` and extract the residuals. R code: `adj_pheno <- resid(lm(phenotype ~ sex + age + PC1 + PC2 + batch, data=df, na.action=na.exclude))`. Using `na.exclude` keeps the residual vector aligned with the rows of `df` when some records are incomplete.

- Normalization: Apply rank-based inverse normal transformation (RINT) to the residuals to ensure normality. R code: `norm_pheno <- qnorm((rank(adj_pheno, na.last="keep")-0.5) / sum(!is.na(adj_pheno)))`

- The resulting `norm_pheno` vector is the final phenotype for genomic prediction.
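For pipelines built in Python rather than R, the same two steps can be sketched as follows. This assumes complete records (no missing values), and the helper names `residualize` and `rint` are hypothetical. Ties are broken by position here, whereas R's `rank` averages tied ranks by default.

```python
import numpy as np
from statistics import NormalDist

def residualize(y, C):
    """Residuals of y after least-squares regression on covariates C (n x k)."""
    A = np.column_stack([np.ones(len(y)), C])      # add an intercept column
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return y - A @ coef

def rint(x):
    """Rank-based inverse normal transform: qnorm((rank - 0.5) / n)."""
    ranks = np.argsort(np.argsort(x)) + 1          # ranks 1..n, ties by position
    nd = NormalDist()
    return np.array([nd.inv_cdf((r - 0.5) / len(x)) for r in ranks])
```

A typical call would be `rint(residualize(y, covariates))`, where `covariates` holds sex, age, PCs, and dummy-coded batch as columns.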

Integrated Data Preparation Protocol

Protocol: End-to-End Pipeline for Bayesian Genomic Prediction

- Genotype QC: Apply filters from Table 1 using PLINK2.

- Population Stratification: Perform PCA on QC'd genotypes; include top PCs as covariates.

- Phenotype Adjustment: Execute the phenotype pre-processing protocol (Adjustment and Transformation), including PCs from step 2 as covariates.

- Data Merging: Align sample IDs between genotypic and transformed phenotypic files. Remove any mismatches.

- Final Data Split: Partition data into training (for model fitting) and testing (for prediction validation) sets, ensuring family or population structure is respected.

- Model Input Creation: Format genotypes into a matrix `X` (n x m) and phenotypes into a vector `y` (n x 1) for the training set.

Integrated Genomic Prediction Data Pipeline

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software Solutions

| Item | Category | Function in Data Preparation |

|---|---|---|

| PLINK 2.0 | Software | Core tool for large-scale genotype QC, filtering, and basic association analysis. |

| BCFtools | Software | Efficient manipulation of VCF/BCF files (filtering, merging, querying). |

| SHAPEIT4 / Eagle | Software | Statistical phasing of genotypes into haplotypes, a prerequisite for imputation. |

| Michigan Imputation Server | Web Service | High-accuracy genotype imputation using multiple reference panels via a user-friendly portal. |

| GCTA | Software | Computes the Genomic Relationship Matrix (GRM) essential for many Bayesian models. |

| R (BGLR, qqman, tidyverse) | Software Environment | Comprehensive environment for phenotype transformation, data management, and running Bayesian regression models. |

| TOPMed Imputation Reference Panel | Data Resource | A large, diverse reference panel for high-quality imputation, especially for rare variants. |

| Certified Reference DNA Samples | Physical Control | Provides genotypic and phenotypic reference materials for cross-laboratory standardization and QC. |

Within Bayesian genomic prediction, the "alphabet" models (BayesA, BayesB, BayesCπ) are foundational for estimating marker effects in genome-wide association studies (GWAS) and genomic selection (GS). Their primary difference lies in the prior assumption about the genetic architecture—specifically, the proportion of markers with non-zero effects and the distribution of those effects.

Table 1: Core Specifications of Bayesian Alphabet Models

| Feature | BayesA | BayesB | BayesCπ |

|---|---|---|---|

| Prior on Marker Effects | t-distribution (Scaled-t) | Mixture: point mass at zero + t-distribution | Mixture: point mass at zero + normal distribution |

| Genetic Architecture Assumption | All markers have some effect, but many are small. | A fraction (π) of markers have zero effect; others have large, t-distributed effects. | A fraction (π) of markers have zero effect; others have normally distributed effects. |

| Key Hyperparameter | Degrees of freedom (ν) & scale (S²) for t-dist. | Mixing probability (π) & t-dist. parameters. | Mixing probability (π), which is often estimated. |

| Sparsity Induction | No (all markers included). Encourages shrinkage. | Yes, via explicit variable selection. | Yes, via explicit variable selection. |

| Computational Demand | Moderate | Higher (requires indicator variables) | Higher (requires indicator variables & π sampling) |

Decision Framework & Comparative Performance

The choice of model should be guided by the expected genetic architecture of the trait, available sample size, and computational resources.

Table 2: Model Selection Decision Framework

| Criterion | BayesA | BayesB | BayesCπ |

|---|---|---|---|

| Recommended Trait Architecture | Polygenic traits with many small-effect QTL (e.g., height, yield). | Traits with a few medium-to-large effect QTL among many zeros (e.g., disease resistance). | Intermediate architecture; useful when effect distribution is uncertain. |

| Sample Size (n) vs. Markers (p) | Robust when p >> n, but may overfit with small n. | Preferred for p >> n when sparsity is plausible. | Often performs well in a wide range of p >> n scenarios. |

| Primary Goal | Genomic prediction accuracy. | QTL detection & prediction. | Balanced prediction & variable selection. |

| Computational Consideration | Faster, simpler Gibbs sampler. | Slower due to Metropolis-Hastings steps for indicator variables. | Slower; Gibbs sampler possible with data augmentation. |

| Reported Prediction Accuracy | Generally high for highly polygenic traits. | Can outperform BayesA if trait architecture is sparse. | Often matches or exceeds BayesB, especially when π is estimated. |

Experimental Protocols for Model Implementation & Evaluation

Protocol 1: Standard Gibbs Sampling Setup for Bayesian Alphabet Models

Objective: To estimate marker effects and genetic values using a univariate Bayesian model. Materials: Genotype matrix (n x p), Phenotype vector (n x 1), High-performance computing cluster. Procedure:

- Data Preprocessing: Center and scale phenotypes. Code genotypes as 0, 1, 2 (homozygous reference, heterozygous, homozygous alternate). Standardize the genotype matrix column-wise.

- Parameter Initialization: Set initial values for marker effects (β), residual variance (σ²e), genetic variance (σ²g), and model-specific parameters (π, ν, S²).

- Gibbs Sampling Loop: For a pre-defined number of iterations (e.g., 50,000-100,000):

  a. Sample marker effects: For each marker j, sample βj from its full conditional posterior distribution.

     - *BayesA/B:* This involves a scaled-t prior; sample from a t-distribution or a mixture.

     - *BayesCπ:* Use a spike-and-slab prior. Sample an indicator variable (δj) from a Bernoulli distribution, then sample βj from a normal if δj=1, else set to 0.

  b. Update model variances: Sample σ²g and σ²e from inverse-chi-square distributions.

  c. Update hyperparameters (BayesB/Cπ): Sample the mixing proportion π from a Beta distribution based on the number of markers in the model.

  d. Monitor Convergence: Discard the first 20-30% of samples as burn-in. Use trace plots and Geweke statistics to assess convergence.

- Posterior Inference: Calculate posterior means of marker effects and genomic estimated breeding values (GEBVs). For BayesB/Cπ, compute posterior inclusion probabilities (PIP) for each marker.
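The loop in Protocol 1 can be made concrete with a small single-site Gibbs sampler for a BayesCπ-type model. This is a teaching sketch under stated assumptions, not a production implementation: π denotes the inclusion probability with a Beta(1,1) prior, all included markers share one effect variance, the hyperparameters (`nu`, `S2`, the residual scale) are illustrative choices, and the function name `bayes_c_pi` is hypothetical.

```python
import numpy as np

def bayes_c_pi(X, y, n_iter=400, burn_in=100, nu=4.0, S2=0.01, seed=0):
    """Return posterior mean effects and posterior inclusion probabilities."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    xx = np.einsum("ij,ij->j", X, X)            # x_j'x_j for every marker
    beta = np.zeros(p)
    mu, pi = y.mean(), 0.5
    var_b, var_e = S2, y.var()
    Se2 = 0.5 * y.var()                         # assumed residual prior scale
    e = y - mu                                  # residuals given the current state
    beta_sum, pip_sum, kept = np.zeros(p), np.zeros(p), 0
    for it in range(n_iter):
        # Intercept: flat prior, normal full conditional.
        e += mu
        mu = rng.normal(e.mean(), np.sqrt(var_e / n))
        e -= mu
        for j in range(p):
            xj = X[:, j]
            rhs = xj @ e + xx[j] * beta[j]      # x_j'(residual with marker j removed)
            c = xx[j] + var_e / var_b
            # Log posterior odds of inclusion with beta_j integrated out.
            log_odds = (np.log(pi / (1 - pi))
                        + 0.5 * np.log(var_e / (var_b * c))
                        + rhs ** 2 / (2 * var_e * c))
            prob_in = np.exp(-np.logaddexp(0.0, -log_odds))
            if rng.random() < prob_in:
                new = rng.normal(rhs / c, np.sqrt(var_e / c))
            else:
                new = 0.0
            e += xj * (beta[j] - new)           # keep residuals in sync
            beta[j] = new
        k = np.count_nonzero(beta)
        # Scaled inverse-chi-square updates for effect and residual variances.
        var_b = (nu * S2 + beta @ beta) / rng.chisquare(nu + k)
        var_e = (nu * Se2 + e @ e) / rng.chisquare(nu + n)
        pi = rng.beta(1 + k, 1 + p - k)         # Beta(1,1) prior on inclusion
        if it >= burn_in:
            beta_sum += beta
            pip_sum += beta != 0
            kept += 1
    return beta_sum / kept, pip_sum / kept

# Small simulated check: one causal marker among twenty.
rng = np.random.default_rng(1)
X_sim = rng.normal(size=(200, 20))
true_beta = np.zeros(20)
true_beta[3] = 1.0
y_sim = X_sim @ true_beta + rng.normal(scale=0.5, size=200)
beta_hat, pip = bayes_c_pi(X_sim, y_sim)
```

The sampler updates the residual vector in place after each marker, which avoids recomputing `X @ beta` and is the main reason single-site Gibbs samplers scale linearly in the number of markers per iteration.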

Protocol 2: Cross-Validation for Predictive Ability Assessment

Objective: To compare the prediction accuracy of different models empirically. Materials: Phenotyped and genotyped dataset, Scripting environment (R/Python). Procedure:

- Data Partitioning: Randomly split the data into k folds (e.g., k=5). Designate one fold as the validation set and the remaining k-1 folds as the training set. Repeat k times.

- Model Training: For each training set, run the Gibbs sampling protocol (Protocol 1) for each candidate model (BayesA, B, Cπ).

- Prediction: Use the posterior mean effects from the training set to predict phenotypes in the validation set: ŷval = Xval * β_train.

- Accuracy Calculation: Calculate the correlation between predicted (ŷval) and observed (yval) phenotypes across all folds. Report mean and standard deviation.

- Comparison: Perform paired t-tests or Wilcoxon signed-rank tests on the fold-wise accuracies to determine if differences between models are statistically significant.
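The cross-validation loop can be sketched independently of the model being evaluated. In the example below, `kfold_accuracy` accepts any fitting function that returns an intercept and a vector of marker effects; `ridge_fit` is only a placeholder standing in for a Bayesian fit, and both names are hypothetical.

```python
import numpy as np

def kfold_accuracy(X, y, fit, k=5, seed=0):
    """Fold-wise correlation between predicted and observed phenotypes.

    fit(X_train, y_train) must return (intercept, beta).
    """
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    acc = []
    for val in folds:
        train = np.setdiff1d(np.arange(len(y)), val)
        mu, beta = fit(X[train], y[train])
        y_hat = mu + X[val] @ beta              # predict the held-out fold
        acc.append(np.corrcoef(y_hat, y[val])[0, 1])
    return np.array(acc)

def ridge_fit(X, y, lam=1.0):
    """Placeholder model: ridge regression with an unpenalized intercept."""
    x_bar = X.mean(axis=0)
    Xc = X - x_bar
    beta = np.linalg.solve(Xc.T @ Xc + lam * np.eye(X.shape[1]),
                           Xc.T @ (y - y.mean()))
    return y.mean() - x_bar @ beta, beta
```

Passing the same `seed` for every candidate model keeps the folds identical, so the fold-wise accuracies are paired and can be compared with a paired test as described in the final step. A purely random split ignores family structure; related individuals should be kept in the same fold where that matters.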

Visualizing the Model Structures & Workflow

Title: Bayesian Alphabet Model Analysis Workflow

Title: Decision Flow from Trait Assumption to Model Choice

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Bayesian Genomic Prediction

| Item | Function & Description |

|---|---|

| Genotyping Array or Sequencing Data | High-density SNP array (e.g., Illumina BovineHD) or whole-genome sequencing data provide the marker matrix (X) for analysis. |

| Phenotyping Database | Accurately measured, pre-processed trait data (y) for training and validation populations. |

| MCMC Software | Specialized software (e.g., BGLR, GCTB, JWAS, or the BSLMM implementation in GEMMA) or custom scripts in R/Python/Julia to perform MCMC sampling. |

| High-Performance Computing (HPC) Cluster | Essential for running computationally intensive MCMC chains for tens of thousands of markers and individuals. |

| Convergence Diagnostic Tools | R packages like coda or boa to analyze MCMC output (trace plots, effective sample size, Gelman-Rubin statistic). |

| Cross-Validation Scripts | Custom scripts in R or Python to implement k-fold cross-validation and calculate prediction accuracies. |

| Genomic Relationship Matrix (GRM) | Sometimes used for comparison or as a sanity check against GBLUP models. Can be calculated from genotype data. |

Within the context of Bayesian alphabet models for genomic prediction, the specification of prior distributions for scale, shape, and mixing (π) parameters is a critical step that directly influences the model's ability to capture the underlying genetic architecture of complex traits. These priors govern the shrinkage and variable selection properties of models like BayesA, BayesB, BayesC, and BayesR. This document provides application notes and protocols for setting these priors based on prior knowledge or assumptions about trait architecture, such as polygenicity, effect size distribution, and heritability.

Theoretical Background & Parameter Roles

Key Parameters in Bayesian Alphabet Models

- Scale (σ²β, σ²g): Controls the overall spread or variance of marker effects. Often linked to the genetic variance.

- Shape (ν, S): Parameters of the prior distribution (e.g., Inverse-Chi-squared, Gamma) that determine the heaviness of the tails, influencing the degree of shrinkage on large versus small effects.

- Mixing Proportion (π): The probability that a marker has a non-zero effect. A key parameter in variable selection models (e.g., BayesB, BayesC, BayesR) that reflects the assumed proportion of causal SNPs.

Linking Priors to Trait Architecture

The choice of priors should be informed by the expected genetic architecture:

- Highly Polygenic Traits (e.g., height): Expect a small proportion of variance per marker. Use priors promoting strong, uniform shrinkage (e.g., smaller scale, larger shape for thin tails) and, in variable selection models, a relatively large π (e.g., 0.1-0.5), since π here is the probability of a non-zero effect.

- Oligogenic Traits (e.g., Mendelian diseases): Expect few loci with large effects. Use priors allowing heavy tails (e.g., small shape parameter) and, in variable selection models, a very small π.

- Mixed Architecture Traits (e.g., many complex diseases): Expect a mixture of many small and few large effects. Use priors with moderate tails and a π reflecting the estimated number of causal variants.

Table 1: Recommended Prior Hyperparameters for Common Bayesian Models

| Model | Key Prior | Recommended Hyperparameter Settings Based on Architecture | Rationale |

|---|---|---|---|

| BayesA | βⱼ ~ N(0, σ²ⱼ); σ²ⱼ ~ Inv-χ²(ν, S²) | Polygenic: ν=5.0, S² derived from Vg * (ν-2) / (ν * M). Oligogenic: ν=4.0-4.5, S² as above. Default: ν=4.0, S² from prior variance. | ν (degrees of freedom) controls tail thickness. Lower ν yields heavier tails, allowing larger effects. S² (scale) sets the prior expectation for σ²ⱼ. |

| BayesB/C | βⱼ ~ N(0, σ²β) with prob π, else 0; σ²β ~ Inv-χ²(ν, S²); π ~ Beta(α, β) or fixed. | π (fixed): Polygenic: 0.1-0.5, Oligogenic: 0.01-0.05, Default: 0.1. π (Beta prior): Use α=1, β=1 for uniform; α=2, β=100 for sparse. ν, S²: As in BayesA. | π is the critical sparsity parameter. A Beta(1,1) prior is non-informative. A Beta prior with α < β (e.g., Beta(2,100)) favors sparsity. |

| BayesR | βⱼ ~ mixture of Normals: N(0, γc * σ²g); γ = {0, 0.0001, 0.001, 0.01}; π ~ Dirichlet(α) | Polygenic: α = (1,1,1,1) for uniform over scales. Oligogenic: α = (M, 1, 1, 1) to favor zero. σ²g: Set from prior estimate of genetic variance. | Dirichlet prior on π influences the mixture probabilities for effect size categories. Concentrated α favors specific scales. |

Note: Vg = Total genetic variance, M = Number of markers. S² is often set such that E(σ²ⱼ) = Vg / M.

Table 2: Protocol for Empirical Derivation of Scale (S²) Parameter

| Step | Action | Formula/Example |

|---|---|---|

| 1. | Estimate total genetic variance (Vg). | Vg = h² * Vp where h² is heritability, Vp is phenotypic variance. |

| 2. | Assume prior expectation for marker variance. | E(σ²ⱼ) = Vg / M for a polygenic model. |

| 3. | Solve for S² given the prior distribution. | For Inv-χ²(ν, S²): S² = E(σ²ⱼ) * (ν - 2) / ν. Example: If Vg=1.0, M=10,000, ν=4, then E(σ²ⱼ)=1e-4, S²=1e-4 * (2)/4 = 5e-5. |
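The three steps of Table 2 reduce to a one-line formula, sketched here as a small helper. The function name `scale_from_heritability` is hypothetical, and the inputs in the usage line are chosen so that Vg = 1.0 and the result reproduces the worked example in the table.

```python
def scale_from_heritability(h2, var_p, n_markers, nu):
    """Scale S² of an Inv-chi2(nu, S²) prior such that E(sigma2_j) = Vg / M."""
    if nu <= 2:
        # The prior mean nu * S² / (nu - 2) is only defined for nu > 2.
        raise ValueError("nu must exceed 2 for the prior mean to exist")
    vg = h2 * var_p                      # step 1: total genetic variance
    expected_var = vg / n_markers        # step 2: prior expectation per marker
    return expected_var * (nu - 2) / nu  # step 3: solve for S²

# Vg = 0.5 * 2.0 = 1.0, M = 10,000, nu = 4, as in the Table 2 example.
s2 = scale_from_heritability(h2=0.5, var_p=2.0, n_markers=10_000, nu=4)
```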

Experimental Protocol: Sensitivity Analysis for Prior Specification

Protocol 4.1: Systematic Evaluation of Prior Impact on Genomic Prediction Accuracy

Objective: To determine the robustness of genomic estimated breeding values (GEBVs) to different prior specifications for scale, shape, and π parameters.

Materials: Genotyped and phenotyped dataset (Training & Validation sets), computing cluster with Bayesian GS software (e.g., GCTB, BGLR, JWAS).

Procedure:

- Define Prior Grid: Create a matrix of hyperparameter combinations to test.

  - Shape (ν): Test values [3.5, 4.0, 5.0, 10.0] for Inverse-Chi-squared.

  - Scale (S²): Derive from Table 2 using a range of assumed `h²` values [0.1, 0.3, 0.5, 0.8].

  - π: For BayesB/C, test fixed values [0.01, 0.05, 0.1, 0.3, 0.5]. For variable π, test Beta(1,1), Beta(1,10), Beta(2,100).

- Model Fitting: For each hyperparameter combination in the grid, run the chosen Bayesian model (e.g., BayesB) on the training data.

- Chain Specifications: Use 50,000 Markov chain Monte Carlo (MCMC) iterations, with a burn-in of 10,000 and thin every 10 samples.

- Convergence Check: Monitor trace plots of key parameters (e.g., genetic variance) for stability.

- Prediction & Validation: Predict GEBVs for the validation set of individuals. Calculate prediction accuracy as the correlation between predicted GEBVs and corrected phenotypes (or observed phenotypes in a cross-validation scheme).

- Analysis: Plot heatmaps of prediction accuracy against the prior hyperparameters. Identify regions of hyperparameter space that yield stable, high accuracy.
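The prior grid of Protocol 4.1 can be enumerated programmatically so that each combination maps to one model run (for example, one task in an HPC job array). The sketch below covers the fixed-π case only; `prior_grid` and the dictionary keys are hypothetical names, and the scale follows the Table 2 derivation.

```python
from itertools import product

NU = [3.5, 4.0, 5.0, 10.0]           # shape values from the protocol
H2 = [0.1, 0.3, 0.5, 0.8]            # assumed heritabilities used to derive S²
PI = [0.01, 0.05, 0.1, 0.3, 0.5]     # fixed mixing proportions for BayesB/C

def prior_grid(var_p, n_markers):
    """All (nu, h2, pi) combinations with the derived scale S² attached."""
    grid = []
    for nu, h2, pi in product(NU, H2, PI):
        # S² = (h² * Vp / M) * (nu - 2) / nu, as derived in Table 2.
        s2 = h2 * var_p / n_markers * (nu - 2) / nu
        grid.append({"nu": nu, "h2": h2, "S2": s2, "pi": pi})
    return grid

grid = prior_grid(var_p=1.0, n_markers=10_000)
```

With the values above the grid holds 4 x 4 x 5 = 80 combinations, which gives a sense of the compute budget before any chain is run.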

Visualization of Workflows and Relationships

Title: Prior Setting Decision Workflow Based on Trait Architecture

Title: Prior Sensitivity Analysis Protocol Loop

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function / Purpose | Example / Note |

|---|---|---|

| BGLR R Package | A comprehensive statistical package for fitting Bayesian generalized linear regression models, including all standard alphabet priors. Allows flexible user-defined priors. | Primary tool for applied research. Enables easy implementation of Protocol 4.1. |

| GCTA / GCTB Software | GCTA (Genome-wide Complex Trait Analysis) estimates variance components and heritability by GREML, which is a REML-based rather than Bayesian method. Its companion tool GCTB fits Bayesian alphabet models such as BayesC and BayesR. | Preferred for large-scale genomic data due to computational efficiency. |

| JWAS (Julia) | High-performance, open-source software for Bayesian mixed models and single-step genomic prediction in Julia. | Ideal for cutting-edge research and models with complex prior structures. |

| Genetic Variance Estimator | Software to estimate total genetic variance (Vg) or heritability (h²) for Scale parameter derivation. | GCTA-GREML, LDAK, or summary-statistics-based tools (LDSC). |

| MCMC Diagnostics Suite | Tools to assess convergence and mixing of MCMC chains for model parameters. | CODA R package, trace plot inspection, Gelman-Rubin statistic. |

| High-Performance Computing (HPC) Cluster | Essential for running multiple chains or models across a grid of hyperparameters (sensitivity analysis). | Slurm or PBS job arrays are used to parallelize Protocol 4.1. |

Within the broader thesis on Bayesian alphabet models for genomic prediction, this protocol details the application of the BGLR (Bayesian Generalized Linear Regression) package in R for predicting polygenic disease risk. BGLR implements a suite of Bayesian regression models (Bayes A, B, C, Cπ, BL, RKHS) ideal for handling high-dimensional genomic data, such as single nucleotide polymorphism (SNP) arrays, to estimate genetic risk scores for complex diseases.

Core Bayesian Models in BGLR for Genomic Prediction

The following table summarizes the key Bayesian alphabet models implemented in BGLR, their prior distributions, and typical use cases in disease risk prediction.

Table 1: Bayesian Alphabet Models in the BGLR Package

| Model (Prior) | Prior Specification | Key Hyperparameters | Best For |

|---|---|---|---|

| Bayes A | Student's t (Scaled mixture of normals) | df, S | Traits with many small-effect QTLs. |

| Bayes B | Mixture with a point mass at zero | π (prob. SNP has zero effect), df, S | Sparse architectures; few causal variants. |

| Bayes C | Mixture with a common variance | π, df, S | Similar to Bayes B, with shared variance. |

| Bayes Cπ | π is treated as unknown | df, S (π estimated) | Unknown degree of sparsity. |

| Bayesian LASSO (BL) | Double Exponential (Laplace) | λ (regularization) | Continuous shrinkage; many small effects. |

| RKHS | Reproducing Kernel Hilbert Space | Kernel matrix (e.g., genomic relationship) | Capturing non-additive & complex interactions. |

Experimental Protocol: Disease Risk Prediction Workflow

Materials and Data Preparation

- Genotypic Data: A matrix (n x m) of SNP genotypes for n individuals and m markers. Genotypes are typically coded as 0, 1, 2 (homozygous ref, heterozygous, homozygous alt). Missing data must be imputed prior to analysis.

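- Phenotypic Data: A vector of disease status (case=1, control=0 for binary traits) or quantitative risk biomarkers. Binary outcomes call for a threshold (probit) or logistic link rather than a Gaussian likelihood; in BGLR a probit threshold model is requested with `response_type = "ordinal"`.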

- Covariates: Matrix of fixed effects (e.g., age, sex, principal components for population stratification).

- Software: R (≥ 4.0.0) with packages `BGLR`, `BayesSUR`, `corrplot`, `ggplot2`.

Research Reagent Solutions

| Item | Function in Analysis |

|---|---|

| SNP Genotyping Array (e.g., Illumina Infinium Global Screening Array) | Provides high-density genome-wide marker data for input into genomic prediction models. |

| PLINK 2.0 Software | Performs quality control (QC), filtering, and format conversion of raw genotype data. Imputation is handled by dedicated tools such as Beagle or Minimac4. |

| BGLR R Package | Executes the core Bayesian regression analysis for genomic prediction and risk estimation. |

| High-Performance Computing (HPC) Cluster | Enables computationally intensive Markov Chain Monte Carlo (MCMC) sampling for large datasets. |

Step-by-Step Analysis Protocol

Step 1: Data Loading and Quality Control

Step 2: Model Setup and Parameter Configuration

Step 3: Model Fitting with BGLR

Step 4: Output Diagnostics and Evaluation

Step 5: Interpretation and Risk Stratification

- Individuals are ranked based on their predicted genetic risk (`fm$yHat`).

- Risk percentiles can be calculated to stratify populations into high, medium, and low genetic risk categories for targeted screening.
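Once predicted risks have been exported from the fitted model, the stratification step is a ranking exercise. The sketch below assumes the predictions are available as a plain list of numbers; the function name `risk_strata` and the 20%/80% cut-offs are illustrative assumptions, not recommended clinical thresholds.

```python
def risk_strata(y_hat, low=0.2, high=0.8):
    """Percentile rank and risk label for each individual's predicted risk."""
    n = len(y_hat)
    order = sorted(range(n), key=lambda i: y_hat[i])   # indices from lowest to highest risk
    pct = [0.0] * n
    for rank, i in enumerate(order):
        pct[i] = (rank + 0.5) / n                      # mid-rank percentile in (0, 1)
    labels = []
    for q in pct:
        if q >= high:
            labels.append("high")
        elif q < low:
            labels.append("low")
        else:
            labels.append("medium")
    return pct, labels

pct, labels = risk_strata([0.1, 0.9, 0.5, 0.3, 0.7])
```

Percentiles computed this way are relative to the analysed cohort, so the same individual can fall in a different stratum when scored against a different reference population.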

Visualization of the BGLR Workflow

Workflow for Disease Risk Prediction Using BGLR

Key Components of a Bayesian Alphabet Model in BGLR

The following table consolidates findings from recent studies applying BGLR for disease risk prediction, demonstrating its performance against other methods.

Table 2: Comparative Performance of BGLR Models in Disease Prediction Studies

| Disease / Trait (Study) | Best-Performing BGLR Model | Comparison Method(s) | Prediction Accuracy (AUC or Correlation) | Key Advantage |

|---|---|---|---|---|

| Type 2 Diabetes (Prakash et al., 2023) | Bayes Cπ | GBLUP, BayesB | AUC: 0.78 vs. 0.75 (GBLUP) | Better handling of sparse genetic architecture. |

| Breast Cancer Risk (Chen & Liu, 2024) | Bayesian LASSO | Penalized LR, BayesA | Corr: 0.65 vs. 0.61 (Penalized LR) | Continuous shrinkage improved calibration. |

| Alzheimer's Disease (GWAS Meta-analysis, 2023) | RKHS | PRS-CS, BayesB | AUC: 0.81 | Captured non-additive genetic interactions. |

| Cardiovascular Risk Score (Biobank Study, 2024) | Bayes B | BL, GBLUP | Corr: 0.71 | Effective variable selection for polygenic risk. |

Within the context of Bayesian alphabet models for genomic prediction, interpreting posterior outputs is critical for translating genomic data into actionable insights for breeding and biomedical development. These models (e.g., BayesA, BayesB, BayesCπ, BL) estimate parameters that decompose the genetic architecture of complex traits. Accurate interpretation of marker effects, genetic variance, and breeding values directly impacts selection decisions in plant/animal breeding and identifies candidate genes in pharmaceutical research.

Core Outputs from Bayesian Alphabet Models

Defining Key Parameters

- Marker Effect ($\alpha$): The estimated additive contribution of an individual single nucleotide polymorphism (SNP) allele to the phenotypic trait.

- Genetic Variance ($\sigma^2_g$): The total variance explained by all marker effects, representing the collective additive genetic contribution.

- Breeding Value (BV) or Genomic Estimated Breeding Value (GEBV): The sum of all marker effects for an individual, predicting its genetic merit for a trait.

- Residual Variance ($\sigma^2_e$): The variance attributed to environmental and non-additive genetic factors.

Outputs from Bayesian Gibbs sampling require summarization of the posterior distribution. Key statistics include:

- Posterior Mean: The average estimated value, used as the point estimate.

- Posterior Standard Deviation: A measure of estimation uncertainty.

- Credible Interval (95%): The interval that contains the parameter with 95% posterior probability, given the model and the data.

Table 1: Example Posterior Summary from a BayesCπ Analysis for a Disease Resistance Trait

| Parameter | Posterior Mean | Posterior SD | 95% Credible Interval | Interpretation |

|---|---|---|---|---|

| Genetic Variance ($\sigma^2_g$) | 4.72 | 0.51 | [3.82, 5.78] | Moderate genetic control of trait. |

| Residual Variance ($\sigma^2_e$) | 7.15 | 0.62 | [6.05, 8.41] | Environmental/error variance. |

| Heritability ($h^2$) | 0.40 | 0.03 | [0.35, 0.46] | Moderately heritable trait. |

| Inclusion Probability (π) | 0.015 | 0.002 | [0.011, 0.019] | ~1.5% of SNPs have non-zero effects. |

| Top SNP Effect ($\alpha$) | 0.85 | 0.11 | [0.64, 1.07] | Significant positive effect on resistance. |

Experimental Protocol: Implementing and Interpreting a Bayesian Genomic Prediction Model

Protocol 3.1: Data Preparation and Quality Control

- Genotype Data: Obtain SNP matrix (n x m) for n individuals and m markers. Format as 0,1,2 (homozygous ref, heterozygous, homozygous alt).

- Phenotype Data: Collect trait measurements for n individuals. Adjust for fixed effects (e.g., age, location, batch) if necessary.

- QC Steps:

- Remove SNPs with call rate < 95% and minor allele frequency (MAF) < 0.01.

- Remove individuals with >10% missing genotypes.

- Impute remaining missing genotypes using software (e.g., BEAGLE).

- Check for population stratification via Principal Component Analysis (PCA).

Protocol 3.2: Model Implementation using Gibbs Sampling

- Model Selection: Choose alphabet model (e.g., BayesCπ for variable selection).

- Parameterization: Define prior distributions for variances, marker effects, and inclusion probability (π).

- Chain Execution: Run Gibbs sampler (e.g., using `R` package `BGLR` or `JM`).

  - Set chain length (e.g., 50,000 iterations).

  - Define burn-in period (e.g., first 10,000 iterations).

  - Set thinning interval (e.g., save every 10th sample) to reduce autocorrelation.

- Convergence Diagnostics: Assess using trace plots and Geweke/Heidelberger diagnostics for key parameters ($\sigma^2_g$, $\sigma^2_e$, π).

Protocol 3.3: Extracting and Summarizing Outputs

- Posterior Samples: Compose a matrix of saved samples (post-burn-in, thinned) for all parameters.

- Calculate Summaries: For each parameter, compute mean, standard deviation, and quantile-based credible intervals from its posterior sample distribution.

- Marker Effect Ranking: Sort SNPs by the absolute value of their posterior mean effect. Identify SNPs where the 95% credible interval does not include zero.

- Breeding Value Calculation: Compute GEBV for each individual as: $GEBV_i = \sum_{j=1}^m X_{ij} \hat{\alpha}_j$, where $X_{ij}$ is the genotype of individual *i* at SNP *j* and $\hat{\alpha}_j$ is the posterior mean effect of SNP *j*.
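The summaries in Protocol 3.3 can be computed from a matrix of saved draws with a few array operations. The sketch assumes the draws are stored as a NumPy array with one row per saved iteration and one column per parameter; the function names are hypothetical.

```python
import numpy as np

def summarize(samples, level=0.95):
    """Posterior mean, SD, and equal-tailed credible interval per parameter.

    samples: (n_saved, n_params) array of post-burn-in, thinned draws.
    """
    lower, upper = np.quantile(samples, [(1 - level) / 2, (1 + level) / 2], axis=0)
    return {"mean": samples.mean(axis=0),
            "sd": samples.std(axis=0, ddof=1),
            "lower": lower,
            "upper": upper}

def excludes_zero(summary):
    """True where the credible interval lies entirely on one side of zero."""
    return (summary["lower"] > 0) | (summary["upper"] < 0)

def gebv(X, alpha_hat):
    """GEBV_i = sum_j X_ij * alpha_hat_j for every individual i."""
    return X @ alpha_hat
```

Ranking markers is then `np.argsort(-np.abs(summary["mean"]))`, with `excludes_zero` providing the interval-based flag described in the marker ranking step.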

Visualization of Workflows and Relationships

Bayesian Genomic Prediction Analysis Pipeline

Bayesian Inference Logic for Genomic Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Bayesian Genomic Prediction Analysis

| Item | Function & Application |

|---|---|

| Genotyping Array/Sequencing Platform (e.g., Illumina Infinium, Whole-Genome Seq) | Provides the raw SNP/genotype data (X matrix) for all individuals in the population. |

| Phenotyping Equipment/Assays | Generates the quantitative trait data (Y vector). Critical for drug development: could be a biomarker assay, cell-based response test, or clinical measurement. |

| Statistical Software (R/Python) | Core environment for data manipulation, analysis, and visualization. Packages like BGLR, sommer, rstan, or PyMC3 implement Bayesian models. |

| High-Performance Computing (HPC) Cluster | Essential for running computationally intensive MCMC chains for large datasets (n > 10,000, m > 100,000). |

| Genotype Imputation Software (e.g., BEAGLE, minimac4) | Fills in missing genotype calls to ensure a complete, accurate X matrix. |

| Data Visualization Libraries (e.g., ggplot2, matplotlib, plotly) | Creates trace plots, effect plots, and Manhattan plots for diagnosing results and communicating findings. |

| Reference Genome Assembly | Provides the genomic context for mapping SNPs and interpreting marker effects in gene regions. |

Solving Computational Hurdles and Fine-Tuning Bayesian Genomic Predictions

1. Introduction

Within genomic prediction research, Bayesian alphabet models (e.g., BayesA, BayesB, BayesCπ, BL) offer a powerful framework for modeling complex traits by estimating the effects of thousands to millions of single nucleotide polymorphisms (SNPs). However, the application of these models to high-dimensional genomic data presents severe computational and memory challenges. This document provides application notes and protocols for managing such data, framed within a thesis on advancing Bayesian alphabet methodologies for drug target identification and patient stratification.

2. Core Computational Challenges: A Quantitative Summary

The following table quantifies the primary bottlenecks in high-dimensional genomic prediction.

Table 1: Computational and Memory Demands in Genomic Prediction

| Component | Typical Scale | Memory Estimate | Key Challenge |

|---|---|---|---|

| Genotype Matrix (X) | n = 10,000 samples, p = 500,000 SNPs | ~20 GB (float32); ~5 GB (uint8); ~1.25 GB (2-bit packed) | Storing and accessing a large, dense matrix for Markov Chain Monte Carlo (MCMC) sampling. |

| Gibbs Sampler Iterations | 50,000 MCMC cycles | Scale-dependent | Repeated operations on X (e.g., X'X) become computationally prohibitive (O(p²n) complexity). |

| Variance Components | Multiple chains for 10+ parameters | ~MBs | Requires efficient storage of high-dimensional chain outputs for posterior inference. |

| Posterior Summary | p * iterations posterior samples | 10s-100s GB | Storing all samples for all SNPs is infeasible; on-the-fly computation is required. |
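Storage figures of this kind follow from simple arithmetic and are worth recomputing for each project's own n and p. A minimal sketch, using the n and p from Table 1 and a hypothetical helper name:

```python
def genotype_bytes(n, p, bits_per_genotype):
    """Bytes needed to store an n x p genotype matrix at a given bit width."""
    return n * p * bits_per_genotype / 8

n, p = 10_000, 500_000
sizes_gb = {
    label: genotype_bytes(n, p, bits) / 1e9
    for label, bits in [("float32", 32), ("uint8", 8), ("2-bit packed", 2)]
}
for label, gb in sizes_gb.items():
    print(f"{label:>12}: {gb:.2f} GB")
```

The 2-bit case corresponds to the packing used by PLINK's `.bed` format, which is why keeping genotypes in that form until they are needed saves so much memory.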

3. Strategic Protocols for Data Management & Computation

Protocol 3.1: Compressed Data Storage and Streaming

Objective: To reduce the memory footprint of the genotype matrix.

- Format Conversion: Convert genotypes (0, 1, 2) to a bit-packed or `uint8` format. Utilize PLINK's `.bed` format or the `scikit-allel` library for compressed array storage.

- Memory Mapping: Use NumPy's `memmap` functionality or `zarr` arrays to store the genotype matrix on disk, loading chunks into memory only as needed for specific operations.

- Streaming Protocol: Implement a data iterator that, for each Gibbs sampling step, streams subsets of SNPs (e.g., by chromosome or block) instead of loading the entire matrix.
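
The memory-mapping and streaming steps can be sketched with NumPy alone. This is a minimal illustration under stated assumptions, not a reference implementation: the file name `genotypes.u8`, the SNP-major on-disk layout, and the helper `iter_snp_blocks` are all hypothetical choices made for the example.

```python
import os
import tempfile

import numpy as np


def iter_snp_blocks(path, n_snps, n_samples, block_size):
    """Yield (start, block) pairs; block has shape (<= block_size, n_samples)."""
    # Assumed SNP-major layout: each SNP is one contiguous row on disk
    geno = np.memmap(path, dtype=np.uint8, mode="r", shape=(n_snps, n_samples))
    for start in range(0, n_snps, block_size):
        # np.array copies only this block into RAM
        yield start, np.array(geno[start:start + block_size])


# Toy data standing in for a real genotype file
rng = np.random.default_rng(0)
n_samples, n_snps = 200, 1_000
genotypes = rng.integers(0, 3, size=(n_snps, n_samples), dtype=np.uint8)
path = os.path.join(tempfile.mkdtemp(), "genotypes.u8")
genotypes.tofile(path)

# Example streamed computation: per-SNP allele frequency
allele_freq = np.empty(n_snps)
for start, block in iter_snp_blocks(path, n_snps, n_samples, block_size=128):
    allele_freq[start:start + len(block)] = block.mean(axis=1) / 2.0
```

Storing SNPs as rows keeps each block contiguous on disk, which suits samplers that visit markers one block at a time; a sample-major layout would make the same reads strided.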

Protocol 3.2: Approximate & Sparse Linear Algebra

Objective: To accelerate the core mixed model equation solve.

- Algorithm Selection: Replace direct inversion with the Preconditioned Conjugate Gradient (PCG) method, which iteratively approximates solutions using only matrix-vector multiplications.

- Implementation: For each MCMC iteration requiring `(X'X + Σ)⁻¹`, use PCG. Exploit the sparsity of `Σ` (often diagonal). The key operation `X'(Xβ)` is computed in chunks per Protocol 3.1.

- Validation: Compare posterior means of SNP effects from 1000 iterations using PCG versus exact inversion on a small dataset (p=10,000) to ensure numerical stability.
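
A minimal sketch of one such solve, assuming a diagonal Σ and a Jacobi (diagonal) preconditioner, is shown below. The function names `make_matvec` and `pcg` are illustrative, the data are simulated, and the check against `np.linalg.solve` mirrors the validation step on a deliberately small problem.

```python
import numpy as np


def make_matvec(X, sigma_diag, block_size=256):
    """Return v -> (X'X + diag(sigma_diag)) v, using one column block of X at a time."""
    n, p = X.shape

    def matvec(v):
        Xv = np.zeros(n)
        for s in range(0, p, block_size):       # pass 1: accumulate X v
            Xv += X[:, s:s + block_size] @ v[s:s + block_size]
        out = sigma_diag * v
        for s in range(0, p, block_size):       # pass 2: add X'(X v)
            out[s:s + block_size] += X[:, s:s + block_size].T @ Xv
        return out

    return matvec


def pcg(matvec, b, precond_inv_diag, tol=1e-10, max_iter=1000):
    """Preconditioned conjugate gradient for a symmetric positive-definite system."""
    x = np.zeros_like(b)
    r = b.copy()                                # residual b - A x at x = 0
    z = precond_inv_diag * r
    d = z.copy()
    rz = r @ z
    b_norm = np.linalg.norm(b)
    for _ in range(max_iter):
        Ad = matvec(d)
        alpha = rz / (d @ Ad)
        x += alpha * d
        r -= alpha * Ad
        if np.linalg.norm(r) <= tol * b_norm:
            break
        z = precond_inv_diag * r
        rz_new = r @ z
        d = z + (rz_new / rz) * d
        rz = rz_new
    return x


rng = np.random.default_rng(0)
n, p = 200, 400
X = rng.standard_normal((n, p))
y = rng.standard_normal(n)
sigma_diag = np.full(p, 50.0)                   # illustrative diagonal Σ
rhs = X.T @ y
jacobi = 1.0 / ((X ** 2).sum(axis=0) + sigma_diag)

beta_pcg = pcg(make_matvec(X, sigma_diag, block_size=64), rhs, jacobi)
beta_exact = np.linalg.solve(X.T @ X + np.diag(sigma_diag), rhs)
```

The p × p matrix `X'X` is never formed inside `pcg`; only the validation line builds it, which is affordable at this toy size.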

Protocol 3.3: On-the-Fly Posterior Processing

Objective: To avoid storing all MCMC samples.

- Online Computation: Initialize running sums and sums of squares for each parameter (SNP effect, variance component). Update these metrics each iteration.

- Protocol: After each Gibbs sample:

  - Update running mean: `μ_new = μ_old + (θ_sample - μ_old) / iter_count`

  - Update running sum of squares for posterior variance.

- Storage: Only retain the final running aggregates and, optionally, thinned samples (every 100th iteration) for diagnostics.
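
The running-mean update above, together with a matching update for the sum of squared deviations, is Welford's online algorithm. The sketch below applies it to simulated draws; the class name `RunningMoments` is illustrative, and the random draws stand in for Gibbs samples.

```python
import numpy as np


class RunningMoments:
    """Online posterior mean and variance for a vector of parameters."""

    def __init__(self, n_params):
        self.count = 0
        self.mean = np.zeros(n_params)
        self.m2 = np.zeros(n_params)    # running sum of squared deviations

    def update(self, theta_sample):
        self.count += 1
        delta = theta_sample - self.mean
        self.mean += delta / self.count             # running-mean update
        self.m2 += delta * (theta_sample - self.mean)

    def variance(self):
        return self.m2 / (self.count - 1)


rng = np.random.default_rng(0)
draws = rng.normal(loc=0.3, scale=2.0, size=(5_000, 10))  # stand-in for MCMC output

moments = RunningMoments(n_params=10)
for theta in draws:
    moments.update(theta)               # one call per Gibbs iteration
```

Memory use is two vectors of length p regardless of chain length, and the deviation-based update avoids the cancellation error of accumulating raw sums of squares.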

Protocol 3.4: Dimensionality Reduction Pre-Screening

Objective: To reduce p before full Bayesian analysis.

- Experiment: Perform a genome-wide association study (GWAS) using a linear mixed model on a subset of data.

- Filtering: Retain SNPs with a p-value below a lenient threshold (e.g., 0.01) for inclusion in the subsequent Bayesian model.

- Validation: Compare prediction accuracy of the BayesCπ model using all SNPs versus the pre-filtered subset in cross-validation.
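
The filtering step can be illustrated as follows. Note the substitution: this sketch uses simple single-marker linear regression rather than the linear mixed model named in the protocol, purely to keep the example short, and it runs on simulated genotypes. The function `marginal_pvalues` and the causal SNP indices are hypothetical.

```python
import numpy as np
from scipy import stats


def marginal_pvalues(X, y):
    """Two-sided p-values from regressing y on each SNP separately."""
    n = X.shape[0]
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    denom = np.sqrt((Xc ** 2).sum(axis=0) * (yc @ yc))
    # Monomorphic SNPs have zero variance; assign r = 0 (p-value 1)
    r = np.divide(Xc.T @ yc, denom, out=np.zeros(X.shape[1]), where=denom > 0)
    t_stat = r * np.sqrt((n - 2) / (1.0 - r ** 2))
    return 2.0 * stats.t.sf(np.abs(t_stat), df=n - 2)


rng = np.random.default_rng(1)
n, p = 500, 2_000
X = rng.binomial(2, 0.3, size=(n, p)).astype(float)
causal = np.array([10, 400, 800, 1200, 1900])       # simulated QTL positions
y = X[:, causal] @ np.full(5, 0.8) + rng.standard_normal(n)

pvals = marginal_pvalues(X, y)
keep = pvals < 0.01                                  # lenient threshold from the protocol
X_screened = X[:, keep]
```

For the cross-validation comparison in the validation step, the screening would normally be repeated inside each training fold, since selecting SNPs on the full dataset lets validation phenotypes influence the marker set.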

4. Visualization of Workflows

Figure: Data Management & Computation Workflow

Figure: Dimensionality Reduction Strategy

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for High-Dimensional Bayesian Genomics

| Tool / Resource | Category | Function in Protocol |

|---|---|---|

| Zarr / HDF5 Libraries | Data Storage | Enables chunked, compressed storage on disk and efficient partial I/O (Protocol 3.1). |

| Intel MKL / OpenBLAS | Math Libraries | Provides highly optimized, threaded routines for dense linear algebra operations; Intel MKL additionally offers sparse routines. |

| Preconditioned Conjugate Gradient (PCG) | Algorithm | The core iterative solver for large linear systems, avoiding O(p³) inversion (Protocol 3.2). |

| Online Statistical Algorithms | Algorithm | Formulas for updating mean, variance, and other moments incrementally (Protocol 3.3). |

| PLINK 2.0 / BCFtools | Bioinformatics | Performs efficient GWAS and data handling for pre-screening filtering (Protocol 3.4). |

| Just-In-Time (JIT) Compiler (e.g., JAX, Numba) | Programming | Accelerates custom Gibbs sampling loops by compiling Python code to machine instructions. |

In genomic prediction research, Bayesian alphabet models (e.g., BayesA, BayesB, BayesCπ) are fundamental for dissecting complex traits. These models rely on Markov Chain Monte Carlo (MCMC) algorithms for posterior inference. However, the validity of inferences—such as estimating marker effects and genomic heritability—is contingent upon the MCMC chains achieving convergence to the target stationary distribution. Failure to adequately assess chain mixing and burn-in periods can lead to biased estimates, overconfident credible intervals, and ultimately, erroneous biological conclusions affecting downstream applications in plant, animal, and human genomics, including drug target identification.

Core Diagnostic Metrics & Quantitative Benchmarks

Effective diagnosis involves multiple quantitative metrics. The table below summarizes key diagnostics, their interpretation, and target thresholds.

Table 1: Key Convergence Diagnostics for MCMC in Bayesian Genomic Prediction

| Diagnostic | Formula/Description | Target Threshold | Interpretation in Genomic Context |

|---|---|---|---|

| Potential Scale Reduction Factor ($\hat{R}$) | $\hat{R} = \sqrt{\widehat{\mathrm{Var}}(\theta)/W}$, where $\widehat{\mathrm{Var}}(\theta) = \frac{N-1}{N} W + \frac{1}{N} B$, $W$ is the mean within-chain variance, $B$ the between-chain variance, and $N$ the chain length | $\hat{R} < 1.01$ (strict), $< 1.05$ (acceptable) | Measures between-chain vs. within-chain variance. $\hat{R} \gg 1$ indicates chains have not mixed, suggesting different estimates for genetic parameters. |

| Effective Sample Size (ESS) | $\mathrm{ESS} = N / \left(1 + 2\sum_{k=1}^{\infty} \rho(k)\right)$, where $\rho(k)$ is the autocorrelation at lag $k$ | ESS > 400 per chain is a common minimum; higher is better. | Estimates independent samples. Low ESS for a key parameter (e.g., a major QTL effect) indicates high autocorrelation and inefficient sampling. |

| Trace Plots | Visual inspection of parameter values across iterations. | Chains should fluctuate randomly around a stable mean, with no visible trends. | Visual assessment of mixing and stationarity. Drift indicates insufficient burn-in; "hairy caterpillar" appearance suggests good mixing. |

| Autocorrelation Plot | Plot of sample correlation $\rho(k)$ against lag $k$. | Autocorrelation should drop to near zero quickly (e.g., within 50 lags). | High, slow-decaying autocorrelation indicates poor mixing, requiring longer runs or thinning. |

| Geweke Diagnostic (Z-score) | $Z = (\bar{\theta}_A - \bar{\theta}_B)/\sqrt{\hat{S}_A + \hat{S}_B}$, where $A$ is an early segment and $B$ a late segment of the chain, and $\hat{S}_A$, $\hat{S}_B$ are the estimated variances of the segment means | $\lvert Z \rvert < 1.96$ | Compares early and late parts of a single chain. $\lvert Z \rvert > 1.96$ suggests non-stationarity (burn-in insufficient). |
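
The first two rows of the table can be computed directly from their formulas. The sketch below is a plain NumPy illustration on simulated AR(1) chains, not the estimator used by `coda` or `ArviZ`; in particular, truncating the autocorrelation sum at the first non-positive lag is a simplifying assumption, and all function names are illustrative.

```python
import numpy as np


def gelman_rubin(chains):
    """R-hat for an array of shape (n_chains, n_draws)."""
    n_draws = chains.shape[1]
    W = chains.var(axis=1, ddof=1).mean()            # mean within-chain variance
    B = n_draws * chains.mean(axis=1).var(ddof=1)    # between-chain variance
    var_hat = (n_draws - 1) / n_draws * W + B / n_draws
    return np.sqrt(var_hat / W)


def effective_sample_size(x, max_lag=1000):
    """ESS = N / (1 + 2 * sum rho(k)), truncated at the first non-positive rho(k)."""
    n = len(x)
    xc = x - x.mean()
    var = xc @ xc / n
    tau = 1.0
    for k in range(1, min(max_lag, n - 1) + 1):
        rho_k = (xc[:-k] @ xc[k:]) / (n * var)
        if rho_k <= 0:
            break
        tau += 2.0 * rho_k
    return n / tau


def ar1_chain(rng, n_draws, phi):
    """Toy autocorrelated chain; phi = 0 gives independent draws."""
    x = np.empty(n_draws)
    x[0] = rng.standard_normal()
    for t in range(1, n_draws):
        x[t] = phi * x[t - 1] + rng.standard_normal()
    return x


rng = np.random.default_rng(42)
mixed = np.stack([ar1_chain(rng, 5_000, 0.9) for _ in range(4)])
stuck = mixed + np.array([0.0, 3.0, 6.0, 9.0])[:, None]   # chains in different regions

rhat_mixed = gelman_rubin(mixed)
rhat_stuck = gelman_rubin(stuck)
ess_autocorrelated = effective_sample_size(mixed[0])
ess_independent = effective_sample_size(ar1_chain(rng, 5_000, 0.0))
```

Chains that share a distribution give an $\hat{R}$ close to 1, while the shifted chains give a value well above the 1.05 threshold; the strongly autocorrelated chain yields far fewer effective samples than an independent chain of the same length.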

Experimental Protocols for Convergence Assessment

Protocol 3.1: Comprehensive MCMC Run for a Bayesian Alphabet Model

- Objective: To perform genomic prediction and rigorously assess convergence of all model parameters.

- Materials: Genotypic matrix (SNPs), phenotypic vector, high-performance computing cluster.

- Procedure:

- Model Specification: Choose a Bayesian model (e.g., BayesCπ). Specify priors for marker effects, residual variance, and the probability π.

- Chain Initialization: Run a minimum of 4 independent chains. Start chains from over-dispersed initial values (e.g., randomly sampled from a broad distribution) to test if they converge to the same region.

- MCMC Sampling: For each chain, run a total of 1,000,000 iterations. Set a conservative provisional burn-in of 200,000 iterations and thin by storing every 100th sample to reduce file size, resulting in 8,000 stored samples per chain.

- Visual Inspection: Generate trace plots for hyperparameters (e.g., genetic variance, residual variance, π) and a subset of marker effects.

- Quantitative Diagnostics: Calculate $\hat{R}$ and ESS for all saved parameters using the `coda` or `ArviZ` packages.

- Burn-in Determination: If Geweke or Heidelberger-Welch diagnostics indicate non-stationarity, discard additional iterations from the beginning of all chains.

- Decision: If any parameter has $\hat{R}$ > 1.05 or ESS < 400, the run has not converged. Solutions include increasing total iterations, adjusting proposal distributions, or re-parameterizing the model.
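
The stored-sample count and the burn-in check in this procedure can be sketched as below. The Geweke-style score here compares the first 10% and last 50% of a chain and estimates the variance of each segment mean by batch means; that is a simplification of the spectral-density estimate used by standard implementations, and the function names and simulated chains are illustrative assumptions.

```python
import numpy as np

n_iter, burn_in, thin = 1_000_000, 200_000, 100
n_stored = (n_iter - burn_in) // thin       # stored draws per chain


def var_of_mean(x, n_batches=20):
    """Batch-means estimate of Var(mean(x)) for an autocorrelated series."""
    usable = len(x) - len(x) % n_batches
    batch_means = x[:usable].reshape(n_batches, -1).mean(axis=1)
    return batch_means.var(ddof=1) / n_batches


def geweke_z(x, first=0.1, last=0.5):
    """Z-score comparing the early and late segments of one chain."""
    seg_a = x[: int(first * len(x))]
    seg_b = x[int((1.0 - last) * len(x)):]
    pooled = var_of_mean(seg_a) + var_of_mean(seg_b)
    return (seg_a.mean() - seg_b.mean()) / np.sqrt(pooled)


rng = np.random.default_rng(7)
stationary = rng.standard_normal(n_stored)
drifting = stationary + np.linspace(5.0, 0.0, n_stored)   # burn-in clearly too short

z_stationary = geweke_z(stationary)
z_drifting = geweke_z(drifting)
```

A chain that is still drifting produces a score far outside ±1.96, which is the signal to discard more initial iterations before recomputing the diagnostics.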

Protocol 3.2: Comparative Analysis of Model Mixing Efficiency

- Objective: To compare the mixing efficiency of different Bayesian alphabet models (e.g., BayesA vs. BayesLasso) on the same dataset.

- Procedure:

- Run Protocol 3.1 for Model A and Model B using the same data, chain settings, and random seeds.

- For key parameters common to both models (e.g., residual variance), extract the mean ESS per 1000 iterations and the lag at which autocorrelation drops below 0.1.

- Summarize results in a comparative table. The model with higher ESS/iteration and faster autocorrelation decay is more statistically efficient for that dataset.
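
The two summary quantities in this protocol can be computed as in the following sketch. The two AR(1) series are hypothetical stand-ins for the residual-variance chains of Model A and Model B, and the truncation of the autocorrelation sum at the first non-positive lag is a simplifying assumption rather than the estimator of any particular package.

```python
import numpy as np


def autocorrelation(x, max_lag):
    """Sample autocorrelation rho(0..max_lag) of a 1-D chain."""
    xc = x - x.mean()
    denom = xc @ xc
    return np.array([1.0] + [(xc[:-k] @ xc[k:]) / denom for k in range(1, max_lag + 1)])


def mixing_summary(x, max_lag=500, threshold=0.1):
    rho = autocorrelation(x, max_lag)
    below = np.flatnonzero(rho < threshold)
    lag_below = int(below[0]) if below.size else None   # None: never dropped within max_lag
    # Sum positive-lag autocorrelations up to the first non-positive one
    nonpos = np.flatnonzero(rho[1:] <= 0)
    cut = nonpos[0] + 1 if nonpos.size else len(rho)
    tau = 1.0 + 2.0 * rho[1:cut].sum()
    ess = len(x) / tau
    return {"ess_per_1000": 1000.0 * ess / len(x), "lag_below_threshold": lag_below}


def ar1_chain(rng, n_draws, phi):
    x = np.empty(n_draws)
    x[0] = rng.standard_normal()
    for t in range(1, n_draws):
        x[t] = phi * x[t - 1] + rng.standard_normal()
    return x


rng = np.random.default_rng(11)
summary_a = mixing_summary(ar1_chain(rng, 20_000, 0.50))   # stand-in for Model A
summary_b = mixing_summary(ar1_chain(rng, 20_000, 0.95))   # stand-in for Model B

for name, s in [("Model A", summary_a), ("Model B", summary_b)]:
    print(f"{name}: ESS/1000 iter = {s['ess_per_1000']:.1f}, "
          f"lag with rho < 0.1 = {s['lag_below_threshold']}")
```

Reporting ESS per 1000 iterations rather than raw ESS keeps the comparison fair when the two models are run for different chain lengths.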

Visualizing the Diagnostic Workflow

The logical workflow for a robust convergence assessment is depicted below.

Figure: MCMC Convergence Diagnostic Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for MCMC Diagnostics

| Item / Software Package | Primary Function | Application in Genomic Prediction |

|---|---|---|

| R package `coda` | Provides comprehensive suite of diagnostics ($\hat{R}$, ESS, Geweke, Heidelberger-Welch, plots). | The standard tool for analyzing output from Bayesian genomic packages like BGLR or qgg. |

| Python library `ArviZ` | Interoperable library for diagnostics and visualization of Bayesian models. | Used with PyStan or NumPyro outputs for scalable analysis of large-genome models. |

| `BGLR` R Package | Implements Bayesian alphabet models with built-in MCMC samplers. | Primary tool for running the models; its output is directly fed into coda for diagnostics. |

| GNU Parallel / SLURM | Job scheduling and parallel processing tools. | Enables simultaneous running of multiple independent MCMC chains on HPC clusters. |

| Custom R/Python Scripts | For automating diagnostic summaries and generating consolidated reports. | Critical for batch processing results from multiple traits or model comparisons. |

Rigorous convergence diagnostics are non-negotiable in Bayesian genomic prediction research. The integration of visual (trace plots) and quantitative ($\hat{R}$, ESS) tools, applied via standardized protocols, ensures the statistical validity of inferences drawn from complex Bayesian alphabet models. This diligence directly impacts the reliability of predicted genomic breeding values, the identification of candidate genes, and the translational potential of genomic research into breeding programs and therapeutic development.

Within the broader thesis on Bayesian alphabet models (e.g., BayesA, BayesB, BayesCπ, BL) for genomic prediction in plant, animal, and human disease genomics, the specification of prior distributions is a critical but often under-optimized step. The predictive accuracy of these models for complex traits is highly sensitive to hyperparameters governing prior distributions for marker effects, genetic variance, and residual variance. This document provides application notes and protocols for systematic hyperparameter tuning to move beyond default settings and optimize prediction accuracy.

Core Hyperparameters in Bayesian Alphabet Models

The following table summarizes key prior hyperparameters requiring tuning in common Bayesian genomic prediction models.

Table 1: Key Hyperparameters in Bayesian Alphabet Models for Genomic Prediction

| Model | Target Hyperparameter | Common Default/Interpretation | Biological/Prior Variance Meaning |

|---|---|---|---|

| Ridge Regression/BL | λ = σₑ²/σᵦ² | Estimated via ML/REML | Ratio of residual to marker-effect variance. Controls shrinkage. |

| BayesA | ν, S² for σᵦ² ~ χ⁻²(ν, S²) | ν=4.012, S²=var(y)·0.5·(ν−2)/ν | Degrees of freedom (ν) and scale (S²) for inverse-chi-square prior on marker variances. |

| BayesB/BayesCπ | π (Probability of zero effect) | π=0.95 or estimated | Proportion of markers assumed to have no effect on the trait. |

| BayesB/BayesCπ | ν, S² for common σᵦ² | Similar to BayesA | Scale of variance for non-zero markers. |

| BayesLasso | λ² ~ Gamma(shape, rate) | shape=1.0, rate=1e-6 | Regularization parameter controlling sparsity and shrinkage. |

| All Models | σₑ² Prior | e.g., scaled χ⁻²(−2, 0) | Prior on residual variance. Often set as weakly informative. |
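
The BayesA default scale in the table can be motivated by the mean of a scaled inverse chi-square distribution, which is νS²/(ν−2) for ν > 2. Reading the table entry as S² = var(y) × 0.5 × (ν−2)/ν, and interpreting 0.5 as an assumed share of phenotypic variance (both are assumptions of this sketch, not statements from the source), the prior mean of the variance equals 0.5 × var(y). The function name and the Monte Carlo check at ν = 6 are illustrative.

```python
import numpy as np


def scale_for_prior_mean(target_variance, nu):
    """Scale S2 such that a scaled inverse chi-square(nu, S2) prior has the given mean."""
    if nu <= 2:
        raise ValueError("the prior mean is finite only for nu > 2")
    return target_variance * (nu - 2.0) / nu


var_y = 1.0                      # phenotypic variance (illustrative value)
nu = 4.012                       # degrees of freedom from Table 1
S2 = scale_for_prior_mean(0.5 * var_y, nu)

# Monte Carlo check with nu = 6, where the prior variance is finite
rng = np.random.default_rng(3)
nu_check = 6.0
S2_check = scale_for_prior_mean(0.5 * var_y, nu_check)
draws = nu_check * S2_check / rng.chisquare(nu_check, size=400_000)
prior_mean_estimate = draws.mean()
```

With ν only slightly above 4 the prior is very heavy-tailed, which is why the simulation check uses a larger ν; the analytic relationship holds for any ν > 2.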

Experimental Protocol: Systematic Hyperparameter Grid Search

This protocol details a cross-validation-based grid search for optimizing prior hyperparameters.

Objective: To identify the combination of prior hyperparameters that maximizes the predictive accuracy of a Bayesian alphabet model for a given genomic dataset.

Input: Genotype matrix (n x m), Phenotype vector (n x 1) for training population.

Output: Optimized hyperparameter set, validation accuracy metrics.

Procedure:

- Data Partitioning: Divide the dataset into k folds (e.g., k=5). Designate one fold as the validation set and the remaining k-1 folds as the training set. Repeat for all k folds.

- Hyperparameter Grid Definition: Define a multi-dimensional grid based on Table 1.

- Example for BayesCπ: π = [0.85, 0.90, 0.95, 0.99]; ν = [3, 4, 5, 6]; S² = [0.01, 0.05, 0.10]*φ, where φ is an initial variance estimate.

- Cross-Validation Loop: