Bioinformatics Tools for Candidate Gene Prioritization: A Comprehensive Guide for Researchers

Abstract

This article provides a comprehensive overview of computational strategies for prioritizing candidate disease genes, a critical step in translating high-throughput genomic data into biological insights and therapeutic targets. We explore the foundational principles of gene prioritization, including the 'guilt-by-association' concept and the use of diverse data sources like protein-protein interaction networks and ontologies. A detailed analysis of methodological approaches covers network-based, machine learning, and hybrid tools, with a specific focus on widely used platforms such as Exomiser. The guide also offers evidence-based troubleshooting and optimization strategies to enhance tool performance in real-world research and diagnostic settings. Finally, we discuss the importance of robust benchmarking and validation frameworks, highlighting recent advances and future directions for integrating multi-omics data to improve prioritization accuracy for both Mendelian and complex diseases.

The Gene Prioritization Problem: From Genomic Loci to Candidate Lists

Defining the Gene Prioritization Challenge in the Genomic Era

The advent of high-throughput genomic technologies has revolutionized the field of genetics, enabling the generation of vast amounts of data on genetic variations and their potential associations with diseases. However, this wealth of data presents a significant analytical challenge: distinguishing truly causative genes from hundreds or thousands of candidate genes identified in studies. Modern high-throughput experiments, such as genome-wide association studies (GWAS) and differential expression studies, generate numerous potential associations between genes and diseases, making experimental validation of all discovered associations time-consuming and expensive [1]. The gene prioritization problem emerged alongside the growth of genetic linkage analysis, which often yielded large loci containing many candidate genes, only a few of which were genuinely associated with the investigated phenotype [1]. This challenge has persisted with the transition to GWAS, which, while providing cheaper, faster, and more precise genetic mapping, still produces extensive lists of candidate genes requiring further evaluation [1].

The fundamental goal of gene prioritization is to rank candidate genes by their likelihood of being truly associated with a specific disease or phenotype, based on prior knowledge about these genes and the disease in question [1]. This ranking lets researchers focus their experimental validation efforts on the most promising candidates, thereby accelerating the discovery of disease mechanisms and potential therapeutic targets. Computational methods for gene prioritization have become essential for managing the deluge of genomic data and for translating genetic findings into biological insights and clinical applications.

Computational Approaches to Gene Prioritization

Gene prioritization tools generally consist of two core components: a collection of evidence sources (databases of associations between genes, diseases, and other biological entities) and a prioritization module that calculates scores reflecting each gene's likelihood of being responsible for the phenotype [1]. These tools can be broadly classified based on their underlying assumptions and data representation models.

Foundational Methodological Assumptions

Most gene prioritization strategies operate on one of two fundamental principles. The first assumes that genes may be directly associated with a disease if they are systematically altered in the disease compared to controls (e.g., carrying disease-specific variants) [1]. The second operates on the guilt-by-association principle, positing that the most probable candidate genes are those linked to genes or other biological entities previously shown to impact the phenotype of interest [1] [2]. Tools following the first strategy typically require users to provide keywords or ontology terms specifying the disease and then integrate various gene-disease associations, while those following the second strategy accept a set of seed genes (known disease genes) and prioritize candidates based on their similarity or proximity to these seeds [1].

Table 1: Categories of Gene Prioritization Approaches

| Category | Underlying Principle | Required Input | Examples |
|---|---|---|---|
| Disease-Centric | Integration of all evidence supporting gene-disease associations | Disease keywords or ontology terms | PolySearch2, Open Targets |
| Seed-Based | Guilt-by-association with known disease genes | Set of seed genes | Endeavour, ToppGene |
| Hybrid | Combination of both approaches | Disease terms or seed genes | PhenoRank, Phenolyzer |

Data Representation and Algorithmic Strategies

From a computational perspective, gene prioritization methods can be categorized by how they represent and process biological data. Score aggregation methods integrate multiple evidence sources by calculating independent scores for each data type and then combining them into a global ranking [1]. In contrast, network analysis methods represent biological entities as nodes in a network and analyze their connections, based on the observation that disease-associated proteins tend to cluster in protein-protein interaction networks [1]. More recently, machine learning approaches, including graph convolutional networks, have emerged that can learn complex patterns from integrated datasets and automatically extract relevant features for prioritization [3].

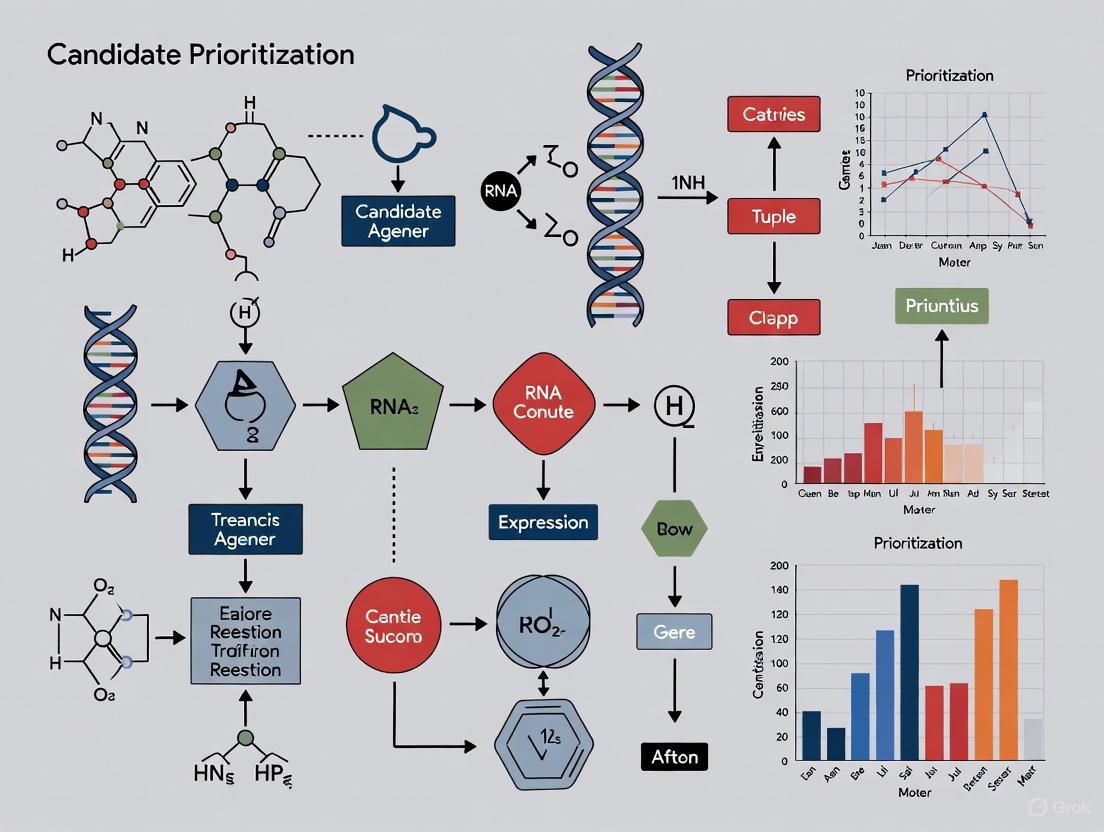

The following diagram illustrates the typical workflow of a gene prioritization pipeline, integrating multiple data sources and analytical approaches:

Benchmarking Methodologies for Performance Validation

Robust benchmarking is essential for evaluating the performance of gene prioritization tools and for guiding researchers in selecting appropriate methods for their specific applications. However, validating these tools presents significant challenges, including the risk of knowledge cross-contamination when benchmark data has been used in tool development, and the limitations of prospective validations, which often lack sufficient statistical power [4].

Cross-Validation Framework Using Gene Ontology

A robust benchmarking approach utilizes the intrinsic properties of the Gene Ontology (GO) database, where genes annotated with the same term are associated with similar biological processes, cellular components, or molecular functions [4]. This natural clustering makes GO terms ideal for cross-validation experiments. In this framework, genes annotated with a specific GO term are randomly divided into three equally sized parts. Two parts are used as training data (seed genes), and the prioritization tool's ability to recover the held-out genes is evaluated [4]. This approach can be applied to terms of different sizes to investigate whether the level of GO-term specificity impacts performance.

Table 2: Key Performance Metrics for Gene Prioritization Tools

| Metric | Calculation | Interpretation |
|---|---|---|
| Area Under Curve (AUC) | Probability of ranking a random positive higher than a random negative | Overall performance across all thresholds |
| Partial AUC (pAUC) | AUC calculated up to a specific false positive rate (e.g., 2%) | Performance focused on top-ranked candidates |
| Median Rank Ratio (MedRR) | Median rank of true positives divided by total number of candidates | Central tendency of true positive rankings |
| Normalized Discounted Cumulative Gain (NDCG) | Weighted sum of relevance scores with logarithmic rank discount | Ranking quality emphasizing top positions |
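The metrics in Table 2 can all be computed from the ranks of the held-out genes. The following is a minimal sketch, assuming 1-based ranks within a list of `n_candidates` genes and binary relevance (each held-out gene counts as one positive). The function names are illustrative, and the pAUC is normalized by `max_fpr` so that a perfect ranking scores 1, which is one of several conventions in use.

```python
import math
import statistics


def auc(ranks_pos, n_candidates):
    """Rank-based AUC: share of (positive, negative) pairs in which the positive ranks higher.

    ranks_pos: 1-based ranks of the held-out true genes among n_candidates genes.
    """
    pos = sorted(ranks_pos)
    n_pos, n_neg = len(pos), n_candidates - len(pos)
    pairs = 0
    for i, r in enumerate(pos):
        negatives_above = (r - 1) - i  # positions above r not occupied by positives
        pairs += n_neg - negatives_above
    return pairs / (n_pos * n_neg)


def partial_auc(ranks_pos, n_candidates, max_fpr=0.02):
    """ROC area up to max_fpr, divided by max_fpr (normalization is an assumed convention)."""
    pos = set(ranks_pos)
    n_pos, n_neg = len(pos), n_candidates - len(pos)
    budget = max_fpr * n_neg  # number of negatives (possibly fractional) inside the FPR window
    tp, area, seen_neg = 0, 0.0, 0.0
    for rank in range(1, n_candidates + 1):
        if rank in pos:
            tp += 1
            continue
        step = min(1.0, budget - seen_neg)
        if step <= 0:
            break
        area += (tp / n_pos) * step  # TPR times the width of this FPR step
        seen_neg += step
    return area / budget


def median_rank_ratio(ranks_pos, n_candidates):
    """Median rank of the true positives divided by the number of candidates."""
    return statistics.median(ranks_pos) / n_candidates


def ndcg(ranks_pos):
    """Binary-relevance NDCG: each held-out gene has relevance 1."""
    dcg = sum(1.0 / math.log2(r + 1) for r in ranks_pos)
    ideal = sum(1.0 / math.log2(i + 1) for i in range(1, len(ranks_pos) + 1))
    return dcg / ideal
```

For example, two held-out genes ranked 1st and 4th among four candidates give `auc([1, 4], 4) == 0.5`, while ranks 1 and 2 give an AUC and an NDCG of 1.0.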

The Benchmarker Framework for GWAS Data

For gene prioritization in the context of genome-wide association studies, the Benchmarker method provides an unbiased, data-driven approach that compares prioritization strategies using leave-one-chromosome-out cross-validation with stratified linkage disequilibrium score regression [5]. This method addresses limitations of traditional "gold standard" benchmarks, which may be biased and incomplete, by systematically evaluating how well similarity-based prioritization strategies perform across different genomic contexts without relying on potentially incomplete reference sets [5].
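The leave-one-chromosome-out structure can be sketched independently of the scoring step. The snippet below only generates the folds; the stratified LD score regression that Benchmarker uses to score each held-out chromosome is not reproduced here. Holding out whole chromosomes presumably keeps linkage disequilibrium from leaking signal between training and test genes, because LD does not span chromosomes. The function name and the `gene_to_chrom` input are hypothetical.

```python
from collections import defaultdict


def leave_one_chromosome_out(gene_to_chrom):
    """Yield (held_out_chromosome, training_genes, test_genes) for each chromosome.

    gene_to_chrom: hypothetical mapping {gene_id: chromosome_label}.
    """
    by_chrom = defaultdict(set)
    for gene, chrom in gene_to_chrom.items():
        by_chrom[chrom].add(gene)
    for held_out in sorted(by_chrom):
        # training genes come from every chromosome except the held-out one
        train = {g for chrom, genes in by_chrom.items() if chrom != held_out for g in genes}
        yield held_out, train, set(by_chrom[held_out])
```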

Application Notes: Experimental Protocol for Gene Prioritization

Protocol: Implementing the Endeavour Prioritization Pipeline

The following protocol describes the implementation of gene prioritization using Endeavour, a widely used tool that integrates 75 data sources across six species [2].

Step 1: Species and Data Source Selection

- Select the target species from the six available options: H. sapiens, M. musculus, R. norvegicus, D. melanogaster, C. elegans, and D. rerio.

- Choose relevant data sources from categories including gene and protein function, biomolecular pathways, interaction networks, chemical information, phenotypic information, expression data, and sequence-based features.

- To avoid redundant information, select complementary rather than highly correlated data sources.

Step 2: Training Set Preparation

- Compile a set of seed genes (typically 5-40 genes) known to be associated with the phenotype or disease of interest.

- Ensure seed genes have sufficient annotation in the selected data sources.

- For diseases with limited known associations, consider using orthologous genes from model organisms or genes associated with similar phenotypes.

Step 3: Candidate Gene Definition

- Define the set of candidate genes for prioritization, which can be derived from experimental results (e.g., genes from a deletion region observed in patients) or can encompass the entire genome if no prior filtering is desired.

- Ensure consistent gene identifiers across seed and candidate gene sets.

Step 4: Prioritization Execution

- Execute the prioritization workflow, which involves three computational steps:

  - Training a model of the biological process using the seed genes

  - Scoring candidate genes based on their fit to the model across all selected data sources

  - Integrating rankings from individual data sources into a global ranking using order statistics
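The order-statistics integration step can be illustrated with the recursive Q formula described in the Endeavour literature. In this sketch each data source contributes a rank ratio (rank divided by the number of candidates), and Q is the probability that independent uniform rank ratios would all be at least this good. Lower Q means a gene is consistently near the top across sources. This is a minimal sketch, not the tool's code: the published method further converts Q into a p-value using a fitted null distribution, which is omitted here, and the alternating sum becomes numerically fragile for many sources.

```python
import math


def order_statistic_q(rank_ratios):
    """Joint cumulative distribution (Q) of the order statistics of the rank ratios.

    rank_ratios: one value in (0, 1] per data source, e.g. rank / n_candidates.
    Recursion: V_0 = 1, V_k = sum_{i=1..k} (-1)^(i-1) * V_{k-i} / i! * r_{N-k+1}^i,
    Q = N! * V_N, with r sorted ascending (1-based indices).
    """
    r = sorted(rank_ratios)
    n = len(r)
    v = [1.0]  # V_0
    for k in range(1, n + 1):
        total = 0.0
        for i in range(1, k + 1):
            # r[n - k] is r_{N-k+1} in 1-based notation
            total += (-1) ** (i - 1) * v[k - i] / math.factorial(i) * r[n - k] ** i
        v.append(total)
    return math.factorial(n) * v[n]
```

With a single source, Q equals the rank ratio itself. With two sources and ratios 0.2 and 0.5, Q = 2(0.2)(0.5) - 0.2^2 = 0.16.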

Step 5: Result Interpretation

- Analyze the global ranking of candidate genes, focusing on those with the highest scores and lowest p-values.

- Examine individual data source rankings to understand which evidence types contributed most to the prioritization of specific candidates.

- Use the parallel coordinate visualization to identify patterns and correlations across different data sources.

Protocol: Cross-Validation Benchmarking of Prioritization Tools

This protocol describes the implementation of a rigorous benchmarking procedure for evaluating gene prioritization tools using Gene Ontology terms [4].

Step 1: Benchmark Dataset Preparation

- Download Gene Ontology annotations from Ensembl BioMart or the GO Consortium.

- Filter GO terms based on size ranges of interest (e.g., 10-30, 31-100, and 101-300 genes) to avoid terms that are too specific or too general.

- For each selected GO term, extract all associated genes to form a complete gene set.

Step 2: Cross-Validation Setup

- For each GO term, randomly partition the associated genes into three equally sized folds.

- Designate two folds as training genes (seed genes) and one fold as test genes.

- Repeat the process such that each fold serves as the test set once.
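The fold construction in Step 2 can be sketched as follows. This assumes a fixed random seed for reproducibility and round-robin assignment after shuffling, so fold sizes differ by at most one gene when the term size is not divisible by three. The function name is illustrative.

```python
import random


def three_fold_splits(genes, seed=0):
    """Yield (training_genes, test_genes) so that each of three folds is the test set once."""
    genes = sorted(genes)  # fixed order before shuffling makes the split reproducible
    rng = random.Random(seed)
    rng.shuffle(genes)
    folds = [genes[i::3] for i in range(3)]  # round-robin assignment to three folds
    for k in range(3):
        test = folds[k]
        train = [g for j, fold in enumerate(folds) if j != k for g in fold]
        yield train, test
```

Each training list is then passed to the prioritization tool as seed genes, and the ranks of the corresponding test genes are recorded.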

Step 3: Tool Execution and Result Collection

- For each cross-validation iteration, run the prioritization tool using the training genes as seeds.

- Record the rankings of the test genes in the resulting candidate list.

- Aggregate results across all GO terms and all cross-validation iterations.

Step 4: Performance Calculation

- For each cross-validation iteration, calculate performance metrics including AUC, partial AUC (focusing on top rankings), Median Rank Ratio, and Normalized Discounted Cumulative Gain.

- Compute the distribution of performance metrics across all evaluated GO terms.

- Use statistical tests (e.g., Mann-Whitney U test with Benjamini-Hochberg correction for multiple testing) to compare the performance of different tools.
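The multiple-testing step can be sketched with a plain implementation of the Benjamini-Hochberg adjustment. The raw p-values are assumed to come from separate tool comparisons, for example one `scipy.stats.mannwhitneyu` call per metric and GO-term size range; that grouping is an assumption, not part of the protocol above.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices by ascending p-value
    adjusted = [0.0] * m
    running_min = 1.0  # adjusted values are capped at 1 and kept monotone
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

For instance, raw p-values of 0.01, 0.04, and 0.03 are adjusted to approximately 0.03, 0.04, and 0.04.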

Successful implementation of gene prioritization pipelines requires access to comprehensive biological databases and computational tools. The following table details key resources essential for gene prioritization research.

Table 3: Essential Research Reagents and Resources for Gene Prioritization

| Resource Category | Specific Examples | Primary Function in Gene Prioritization |
|---|---|---|
| Gene Ontology Databases | Gene Ontology Annotations, GO Slim | Provide functional context for genes based on biological process, molecular function, and cellular component [4] |
| Protein Interaction Networks | FunCoup, BioGrid, IntAct | Offer physical and functional interaction data for network-based prioritization [1] [4] |
| Pathway Databases | Reactome, KEGG | Supply information on gene involvement in biological pathways [2] |
| Phenotype Databases | OMIM, Rat Disease Ontology | Curate gene-disease associations for training and validation [2] |
| Expression Databases | PaGenBase, GEO | Provide gene expression patterns across tissues and conditions [2] |
| Sequence Databases | InterPro, Ensembl | Offer sequence-based features and domain annotations [2] |
| Prioritization Tools | Endeavour, PhenoRank, Open Targets | Implement algorithms for ranking candidate genes [1] [2] |

Advanced Methodologies: Graph Convolutional Networks

Recent advances in gene prioritization include the application of graph convolutional networks (GCNs), which combine network topology with node features in a semi-supervised learning framework [3]. These methods construct feature vectors for genes using GO terms from molecular function, cellular component, and biological process ontologies, then train a graph convolution network on protein-protein interaction data to identify disease candidate genes [3]. The GCN approach simultaneously considers local graph structure and node features, learning hidden layer representations that encode both topological information and functional attributes [3].
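To make "combining network topology with node features" concrete, the sketch below implements the forward pass of a two-layer GCN using the widely used symmetric-normalization propagation rule of Kipf and Welling, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W). Whether the method in [3] uses exactly this variant is an assumption. Training (fitting `w1` and `w2` on labeled disease genes) is omitted, so the weights here are placeholders.

```python
import numpy as np


def normalized_adjacency(adj):
    """D^-1/2 (A + I) D^-1/2: add self-loops, then normalize symmetrically by degree."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def gcn_forward(adj, features, w1, w2):
    """Two GCN layers followed by a row-wise softmax over classes (e.g. disease vs. not)."""
    a_norm = normalized_adjacency(adj)
    hidden = np.maximum(a_norm @ features @ w1, 0.0)  # layer 1: aggregate neighbors, ReLU
    logits = a_norm @ hidden @ w2                     # layer 2: aggregate again
    logits -= logits.max(axis=1, keepdims=True)       # stabilize the softmax
    probs = np.exp(logits)
    return probs / probs.sum(axis=1, keepdims=True)
```

Each gene's output mixes its own features with those of its neighbors up to two hops away. This is how local graph structure and functional attributes end up in the same representation.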

The following diagram illustrates the architecture of a graph convolutional network for gene prioritization:

Experimental validation of GCN-based prioritization methods has shown better precision, AUC, and F1-scores than traditional network-based and machine learning approaches across multiple disease datasets [3]. This advantage is plausibly explained by the ability of GCNs to integrate multiple data types and to capture complex network patterns that are difficult for conventional methods to detect.

The pursuit of candidate genes for complex diseases is a fundamental challenge in bioinformatics. For years, the guilt-by-association (GBA) principle has been a dominant paradigm, operating on the core assumption that genes interacting with known disease genes are likely to be involved in the same disease. However, emerging research critically examines this static view, introducing a more dynamic "guilt-by-rewiring" principle that focuses on changes in gene network connectivity between healthy and diseased states. This application note details the core biological assumptions, provides quantitative comparisons, and offers standardized protocols for applying these concepts in candidate gene prioritization research, framing them within the context of a broader thesis on bioinformatics tools.

Core Principles and Quantitative Comparison

The Established Paradigm: Guilt-by-Association

The traditional GBA principle posits that genes are functionally related if they are "associated," meaning they are physically interacting, co-expressed, or share other relational properties. In disease studies, this translates to an assumption that unknown disease genes will be located close to known disease genes within a molecular network. This principle has been widely scaled up using machine learning algorithms that propagate association signals through networks [6] [7].
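A minimal form of guilt-by-association is neighbor voting. Each candidate is scored by the fraction of its network neighbors that are known disease (seed) genes. Normalizing by degree is one design choice among several (raw counts are another) and is an assumption here; it partly counteracts the bias toward highly connected genes discussed later in this section. The function and argument names are illustrative.

```python
def neighbor_voting(edges, seeds, candidates):
    """Score candidates by the fraction of their neighbors that are seed genes.

    edges: iterable of (gene_a, gene_b) undirected interactions.
    Returns [(gene, score), ...] sorted by descending score, ties broken by name.
    """
    neighbors = {}
    for a, b in edges:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)
    scores = {}
    for gene in candidates:
        nbrs = neighbors.get(gene, set())
        scores[gene] = len(nbrs & seeds) / len(nbrs) if nbrs else 0.0
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
```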

The Emerging Paradigm: Guilt-by-Rewiring

In contrast, the "guilt-by-rewiring" principle focuses on network dynamics. It assumes that disease genes are more likely to undergo significant changes in their network connections (rewiring) between control and patient conditions, while most of the network remains stable. This approach does not assume proximity to known disease genes but rather identifies genes whose contextual relationships are most disrupted in the disease state [6].

Table 1: Core Assumptions of GBA versus Guilt-by-Rewiring

| Feature | Guilt-by-Association (GBA) | Guilt-by-Rewiring |
|---|---|---|
| Network View | Static reference network | Dynamic, condition-specific networks |
| Core Assumption | Disease genes cluster in network neighborhoods | Disease genes change connectivity between states |
| Primary Data | Single network integrated from multiple sources | Paired expression data from case/control studies |
| Typical Output | Prioritized gene list based on network proximity | Prioritized gene list based on differential connectivity |

Experimental Validation and Performance

Evidence for Network Rewiring in Disease

Application of the rewiring principle to Crohn's disease demonstrated that rewiring is not randomly distributed but enriched in biologically relevant pathways. Immune system genes showed significantly higher rewiring density compared to the genome-wide background (Binomial test, P-value ≤ 0.001). When the rewiring network density was 0.01, immune-related subnetworks contained 2,465 rewiring edges versus 1,815 expected by chance [6].

Comparative Performance in Gene Prioritization

A direct comparison of both principles found that the rewiring approach generates more replicable results. In Crohn's disease and Parkinson's disease, integrating network rewiring features within a Markov random field framework improved replication rates and implicated biologically plausible disease pathways that were missed by static GBA methods [6].

Table 2: Empirical Performance Comparison in Crohn's Disease Analysis

| Method | Network Density | Trait-Associated Module Recovery | Replication Rate |
|---|---|---|---|
| Static GBA | 0.01 | Baseline | Lower |
| Guilt-by-Rewiring | 0.01 | Enhanced | Higher |
| Static GBA | 0.05 | Baseline | Lower |
| Guilt-by-Rewiring | 0.05 | Enhanced | Higher |

Limitations and Critical Assessment of GBA

Recent critical assessments reveal that GBA's performance may be driven more by the multifunctionality of highly connected genes than specific network topology. One study found that a network of millions of edges could be reduced to just 23 associations while maintaining similar GBA performance, indicating that functional information is concentrated in very few connections [7] [8]. For autism spectrum disorder, GBA methods performed no better than generic measures of gene constraint and were not competitive with genetic association studies for identifying novel risk genes [9].

Protocol: Implementing Guilt-by-Rewiring Analysis

Experimental Workflow

The following diagram illustrates the core workflow for a guilt-by-rewiring analysis, from data preparation to gene prioritization.

Step-by-Step Methodology

Step 1: Data Collection and Preprocessing

- Sample Selection: Obtain transcriptomic data (microarray or RNA-seq) from both disease and matched control tissues. The Crohn's disease analysis used 172 intestine biopsies from GEO accession GSE20881 [6].

- Quality Control: Perform standard normalization and batch effect correction. Filter genes with low expression. The referenced study resulted in 12,007 genes passing quality control [6].

- Network Nodes: Define the universal gene set as the intersection between the genes in your expression data and those in your GWAS data.

Step 2: Co-expression Network Construction

- Calculate Correlations: For both control and disease sample groups separately, compute pairwise Pearson Correlation Coefficients (PCC) for all gene pairs.

- Define Edges: Apply a threshold to create unweighted networks where edges represent significant co-expression relationships. The threshold can be determined by achieving a specific network density (e.g., 0.1, 0.05, or 0.01) [6].

- Alternative Approach: For weighted networks, retain all edges with their correlation strengths.
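The density-based thresholding in Step 2 can be sketched as follows: compute all pairwise PCCs, then keep the strongest pairs until the requested fraction of all possible gene pairs is reached. Ranking pairs by absolute correlation (so strong negative co-expression also forms an edge) is an assumption; the cited study may threshold signed values. The function would be applied separately to the control and disease samples.

```python
import numpy as np


def coexpression_network(expr, density):
    """Unweighted co-expression network with a target edge density.

    expr: genes x samples expression matrix for one condition (control or disease).
    density: fraction of all gene pairs to keep as edges, e.g. 0.01.
    """
    pcc = np.corrcoef(expr)                   # genes x genes Pearson correlations
    n = pcc.shape[0]
    iu = np.triu_indices(n, k=1)              # each unordered pair once, no diagonal
    strength = np.abs(pcc[iu])                # assumption: rank pairs by |PCC|
    n_edges = int(round(density * len(strength)))
    adj = np.zeros((n, n), dtype=bool)
    if n_edges == 0:
        return adj
    keep = np.argsort(strength)[::-1][:n_edges]  # indices of the strongest pairs
    adj[iu[0][keep], iu[1][keep]] = True
    return adj | adj.T                        # make the adjacency symmetric
```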

Step 3: Identify Rewired Connections

- Statistical Testing: Use Fisher's method (the Fisher r-to-z transformation for comparing two correlation coefficients) to test, for each gene pair, whether its PCC differs significantly between the control and disease networks.

- Rewiring Metric: Calculate the degree of rewiring as (1 - P-value) from the significance test. This creates a weighted rewiring network with values from 0 to 1, where higher values indicate more significant rewiring [6].

- Discretization (Optional): Apply thresholds to create binary rewiring networks at various densities for downstream analysis.
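Comparing two independent Pearson correlations is commonly done with Fisher's r-to-z transformation. Assuming that is the test meant in Step 3, the rewiring weight for one gene pair can be sketched as below. The sample sizes are the numbers of control and disease samples. Clipping correlations just inside ±1 is an added safeguard, because `atanh(±1)` is infinite.

```python
import math


def rewiring_score(r_control, n_control, r_disease, n_disease):
    """Return 1 - p for the difference between two independent Pearson correlations."""
    clip = lambda r: max(min(r, 0.999999), -0.999999)  # keep atanh finite
    z_c = math.atanh(clip(r_control))
    z_d = math.atanh(clip(r_disease))
    se = math.sqrt(1.0 / (n_control - 3) + 1.0 / (n_disease - 3))
    z = (z_c - z_d) / se
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided p-value under a standard normal
    return 1.0 - p
```

A pair whose correlation flips from 0.8 in 50 controls to -0.2 in 50 patients scores above 0.99, while an unchanged correlation scores 0.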

Step 4: Gene Prioritization using Markov Random Field (MRF)

- Input Features: Integrate GWAS association P-values with rewiring network information.

- MRF Framework: Model the probability of a gene being disease-associated as dependent on both its GWAS signal and the states of its neighbors in the rewiring network.

- Implementation: The MRF objective function can be formulated as:

$$P(Y \mid X) \propto \exp\left(\sum_i \alpha_i X_i + \sum_{i,j} \beta_{ij} \lvert X_i - X_j \rvert\right)$$

where Y represents association status, X represents genes, α controls the influence of GWAS evidence, and β controls the influence of network neighbors [6].

- Output: A prioritized list of candidate genes ranked by their posterior probabilities.
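One way to obtain the posterior probabilities from Step 4 is Gibbs sampling. The sketch reads X_i as the binary association state of gene i and α_i as a per-gene weight derived from its GWAS signal. It also assumes β_ij < 0, so that neighbors in the rewiring network that disagree lower the probability. This sign convention and the sampler itself are assumptions; the cited study's parameterization and inference procedure may differ. For binary states, flipping x_i changes the exponent by α_i + Σ_j β_ij (1 - 2x_j), which gives the conditional log-odds used below.

```python
import math
import random


def _sigmoid(t):
    # numerically stable logistic function
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def mrf_posteriors(alpha, edges, n_sweeps=2000, burn_in=200, seed=0):
    """Gibbs-sampling estimate of P(x_i = 1) for binary gene states x_i.

    Assumed model: P(x) ∝ exp(sum_i alpha_i * x_i + sum_(i,j) beta_ij * |x_i - x_j|)
    alpha: per-gene GWAS-derived weights (hypothetical input).
    edges: {(i, j): beta_ij}; beta_ij < 0 penalizes neighbors that disagree.
    """
    n = len(alpha)
    nbrs = [[] for _ in range(n)]
    for (i, j), beta in edges.items():
        nbrs[i].append((j, beta))
        nbrs[j].append((i, beta))
    rng = random.Random(seed)
    x = [1 if a > 0 else 0 for a in alpha]  # start from the GWAS-only guess
    counts = [0] * n
    for sweep in range(n_sweeps):
        for i in range(n):
            # log-odds of x_i = 1 vs 0; for binary x_j, |1 - x_j| - |0 - x_j| = 1 - 2 * x_j
            logit = alpha[i] + sum(beta * (1 - 2 * x[j]) for j, beta in nbrs[i])
            x[i] = 1 if rng.random() < _sigmoid(logit) else 0
        if sweep >= burn_in:
            for i in range(n):
                counts[i] += x[i]
    kept = n_sweeps - burn_in
    return [c / kept for c in counts]
```

A gene with no GWAS signal of its own (α = 0) but strongly tied to a strongly associated neighbor receives a high posterior, which is the behavior the rewiring network is meant to contribute.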

Validation and Interpretation

- Pathway Enrichment: Test prioritized genes for enrichment in biologically relevant pathways using tools like Enrichr or GSEA.

- Independent Validation: Assess replication in independent datasets when available.

- Comparison to Baseline: Compare results with traditional GBA approaches applied to the same data.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Guilt-by-Rewiring Analysis

| Resource Category | Specific Tools/Databases | Application in Protocol |
|---|---|---|
| Gene Expression Data | GEO (GSE20881), ArrayExpress | Source of condition-specific transcriptomic data [6] |
| Protein Interactions | STRING, InWeb, OmniPath | Supplementary network data [10] |
| Pathway Annotations | Reactome, KEGG, Gene Ontology | Functional validation of prioritized genes [6] [11] |
| Disease Gene Associations | GWAS Catalog, SFARIGene | Validation and benchmark datasets [10] [9] |
| Co-expression Tools | WGCNA, COEX | Alternative network construction methods |
| Module Identification | M-module algorithm, K1 algorithm | Identifying disease-relevant subnetworks [12] [10] |
| Benchmarking Frameworks | PhEval, DREAM Challenge | Standardized performance assessment [13] [10] |

Advanced Applications: Network Module Identification

The DREAM Challenge on disease module identification comprehensively assessed 75 module identification methods across diverse networks, providing robust benchmarks for network-based approaches. Key findings include:

Table 4: Top-Performing Module Identification Methods from DREAM Challenge

| Method Category | Representative Algorithm | Key Features | Performance Insight |
|---|---|---|---|
| Kernel Clustering | K1 (Top performer) | Diffusion-based distance metric with spectral clustering | Most robust performance across networks [10] |
| Modularity Optimization | M1 (Runner-up) | Resistance parameter controlling module granularity | High performance with size control [10] |
| Random-Walk Based | R1 (Third rank) | Markov clustering with adaptive granularity | Balanced module sizes [10] |
| Multi-Network | Various integrated methods | Leverages multiple network types simultaneously | No significant performance improvement over single-network [10] |

The challenge found that top methods recover complementary trait-associated modules rather than converging on identical solutions, suggesting that applying multiple approaches can provide more comprehensive biological insights [10].

Pathway and Module Dynamics Diagram

The following diagram illustrates how module connectivity changes between disease states, a key concept in the guilt-by-rewiring principle.

The guilt-by-association principle, while useful, has significant limitations due to its static nature and its bias toward multifunctional genes. The guilt-by-rewiring principle offers a dynamic alternative that leverages changes in network connectivity between disease states, and it has been reported to identify more replicable disease genes in complex disorders such as Crohn's disease and Parkinson's disease [6]. By implementing the standardized protocols outlined in this application note and using the recommended research reagents, researchers can more effectively prioritize candidate genes and uncover novel disease mechanisms. Future development of bioinformatics tools for gene prioritization should focus on dynamic network properties and on rigorous benchmarking against standardized frameworks such as PhEval and the DREAM challenges to ensure biological relevance and translational impact.

In the field of candidate gene prioritization research, the integration of multi-omics data has become fundamental for identifying disease-associated genes with greater accuracy and efficiency. Three essential data sources form the cornerstone of modern computational approaches: Protein-Protein Interaction (PPI) networks, which map the physical and functional connections between proteins; Gene Ontology (GO), which provides a structured vocabulary for gene function across biological processes, molecular functions, and cellular components; and Phenotype Ontologies, particularly the Human Phenotype Ontology (HPO), which systematically describes abnormal human phenotypes. When integrated within sophisticated computational frameworks, these resources enable researchers to move beyond simple positional cloning to systems-level analyses that dramatically improve the identification of causal genes for both Mendelian and complex diseases. This application note details the essential characteristics of these data sources, their integration methodologies, and practical protocols for their application in gene prioritization research, providing a comprehensive toolkit for researchers, scientists, and drug development professionals.

Table 1: Essential Data Sources for Candidate Gene Prioritization

| Data Source | Primary Content | Key Statistics | Use in Gene Prioritization |
|---|---|---|---|
| PPI Networks | Physical and functional interactions between proteins | STRING: 59.3 million proteins, >20 billion interactions across 12,535 organisms [14]. Human PPI: 21,557 proteins, 342,353 interactions [15]. | Network-based prioritization using connectivity patterns, neighborhood analysis, and diffusion algorithms. |
| Gene Ontology (GO) | Standardized terms for biological processes, molecular functions, cellular components | Comprehensive functional annotations across multiple species [16]. Three structured ontologies: Biological Process, Molecular Function, Cellular Component [16] [17]. | Functional enrichment analysis, semantic similarity calculations, and feature vector generation for machine learning. |
| Phenotype Ontologies (HPO) | Structured vocabulary of human phenotypic abnormalities | HPO contains over 15,000 terms describing phenotypic abnormalities [18]. Phen2Gene uses HPO2Gene Knowledgebase (H2GKB) with weighted gene lists for each term [18]. | Phenotype-driven prioritization by matching patient phenotypes to known gene-phenotype associations. |

Integration Frameworks and Tools

Table 2: Bioinformatics Tools and Integration Platforms

| Tool/Platform | Integration Methodology | Data Sources Utilized | Performance Characteristics |
|---|---|---|---|
| STRING | Functional association network integrating multiple evidence sources | Physical interactions, co-expression, text mining, database imports, gene fusion, and co-occurrence [14]. | Provides confidence scores for interactions; enables network clustering and functional enrichment analysis [14]. |
| Phen2Gene | Probabilistic model with HPO term weighting by skewness | HPO annotations, gene-disease databases (OMIM, ClinVar, Orphanet), gene-gene databases (HPRD, Biosystems) [18]. | Rapid prioritization (median 0.94 seconds); outperforms existing tools in speed while maintaining accuracy [18]. |
| Graph Convolutional Networks | Semi-supervised learning on biological networks | PPI networks, GO terms as feature vectors, known disease gene associations [3]. | Achieves superior performance in precision, AUC, and F1-score compared to eight state-of-the-art methods [3]. |

Integration Methodologies and Experimental Protocols

Network-Based Machine Learning Approaches

Network propagation techniques leverage the guilt-by-association principle, where genes interacting with known disease genes are considered strong candidates. Several machine learning approaches have been successfully applied:

Heat Kernel Diffusion Ranking implements a discrete approximation of the heat kernel rank approach for scoring candidate genes [19]. This method models the spread of differential expression signals through a PPI network, assuming that genes causally related to a disease tend to be surrounded by differentially expressed neighbors. The algorithm requires parameter tuning for the diffusion rate (α), typically set to 0.5, and utilizes differential expression data computed from knockout versus control experiments [19].
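The idea behind heat kernel ranking is to spread each gene's differential-expression value over the network with the operator exp(-αL), where L = D - A is the graph Laplacian. This sketch computes that operator exactly through an eigendecomposition, which is only practical for small networks. It is not the discrete approximation used in [19]. α = 0.5 follows the text; the function name is illustrative.

```python
import numpy as np


def heat_kernel_scores(adj, de, alpha=0.5):
    """Diffuse differential-expression values over a PPI network: exp(-alpha * L) @ de.

    adj: symmetric adjacency matrix; de: one differential-expression value per gene.
    """
    lap = np.diag(adj.sum(axis=1)) - adj       # graph Laplacian L = D - A
    eigvals, eigvecs = np.linalg.eigh(lap)     # L is symmetric, so eigh applies
    kernel = eigvecs @ np.diag(np.exp(-alpha * eigvals)) @ eigvecs.T
    return kernel @ de
```

On a three-gene path A-B-C with signal only on A, α = 0.5 yields approximately 0.674, 0.259, and 0.067. The total signal is conserved because the Laplacian's rows sum to zero.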

Kernel Ridge Regression Ranking employs Laplacian exponential diffusion kernels, Regularized Commute Time kernels, or Regularized Laplacian Diffusion kernels to define a similarity network between genes [19]. This approach smooths a candidate gene's differential expression levels through kernel ridge regression, with parameters λ (regularization parameter) and nn (maximum number of neighbors) requiring optimization across multiple values [19].
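As an illustration of kernel-based smoothing, the sketch below builds one of the kernels named above, the regularized Laplacian K = (I + γL)^-1. It then returns the kernel ridge regression fitted values K(K + λI)^-1 y for a vector y of differential-expression values. The γ and λ defaults are arbitrary, and the `nn` neighbor-limit parameter from [19] is not modeled.

```python
import numpy as np


def regularized_laplacian_kernel(adj, gamma=1.0):
    """Regularized Laplacian kernel K = (I + gamma * L)^-1 with L = D - A."""
    lap = np.diag(adj.sum(axis=1)) - adj
    return np.linalg.inv(np.eye(adj.shape[0]) + gamma * lap)


def kernel_ridge_smooth(kernel, y, lam=0.5):
    """Kernel ridge regression fitted values: K (K + lam * I)^-1 y."""
    coef = np.linalg.solve(kernel + lam * np.eye(kernel.shape[0]), y)
    return kernel @ coef
```

A useful sanity check: because L has zero row sums, a constant signal y = c is mapped to c / (1 + λ), so only the ridge shrinkage remains.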

Arnoldi Diffusion Ranking applies the Arnoldi algorithm, a Krylov subspace method, to network diffusion [19]. This numerical approach approximates the action of a matrix exponential on a vector, enabling efficient computation of network propagation, which is particularly useful for large-scale PPI networks with thousands of nodes and edges.
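The Krylov idea can be sketched as follows. Build an orthonormal basis of span{v, Mv, M²v, ...} with Arnoldi iterations, compute the exponential of the small projected matrix H, and lift the result back, so that expm(M) v ≈ ‖v‖ V exp(H) e₁. This sketch assumes a symmetric M (such as -αL), which makes H symmetric and lets `eigh` handle the small exponential. It is an illustration of the technique, not the implementation used in [19].

```python
import numpy as np


def arnoldi_expm_v(mat, v, m=20, tol=1e-12):
    """Approximate expm(mat) @ v in an m-dimensional Krylov subspace (symmetric mat assumed)."""
    n = v.shape[0]
    m = min(m, n)
    beta = np.linalg.norm(v)
    basis = np.zeros((n, m + 1))
    hess = np.zeros((m + 1, m))
    basis[:, 0] = v / beta
    k = m
    for j in range(m):
        w = mat @ basis[:, j]
        for i in range(j + 1):  # modified Gram-Schmidt against previous basis vectors
            hess[i, j] = basis[:, i] @ w
            w = w - hess[i, j] * basis[:, i]
        hess[j + 1, j] = np.linalg.norm(w)
        if hess[j + 1, j] < tol:  # invariant subspace found: approximation is exact
            k = j + 1
            break
        basis[:, j + 1] = w / hess[j + 1, j]
    h_small = hess[:k, :k]
    h_small = (h_small + h_small.T) / 2.0  # symmetric input gives symmetric tridiagonal H
    eigvals, eigvecs = np.linalg.eigh(h_small)
    exp_h_e1 = eigvecs @ (np.exp(eigvals) * eigvecs[0, :])  # expm(H) @ e1
    return beta * basis[:, :k] @ exp_h_e1
```

Heat diffusion corresponds to `arnoldi_expm_v(-alpha * laplacian, de)`. The cost is dominated by `m` matrix-vector products, which is why the method scales to large sparse networks.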

Direct Neighborhood Ranking provides a straightforward baseline method that combines a gene's differential expression with the average differential expression of its direct neighbors in a PPI network [19]. While less sophisticated than diffusion approaches, this method offers interpretable results and computational efficiency.
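A direct-neighborhood score can be sketched in a few lines. The text does not say how a gene's own value and its neighbors' average are combined. The equal-weight blend of absolute values below (the hypothetical `weight` parameter) is therefore an assumption.

```python
def direct_neighborhood_scores(de, edges, weight=0.5):
    """Blend each gene's |DE| with the mean |DE| of its direct PPI neighbors.

    de: {gene: differential-expression value}; edges: iterable of (gene_a, gene_b).
    weight: hypothetical mixing parameter between own signal and neighbor signal.
    """
    neighbors = {g: set() for g in de}
    for a, b in edges:
        if a in neighbors and b in neighbors:  # ignore genes without expression data
            neighbors[a].add(b)
            neighbors[b].add(a)
    scores = {}
    for gene, value in de.items():
        nbrs = neighbors[gene]
        nbr_mean = sum(abs(de[n]) for n in nbrs) / len(nbrs) if nbrs else 0.0
        scores[gene] = weight * abs(value) + (1 - weight) * nbr_mean
    return scores
```

With values A = 2.0, B = 0.0, C = -1.0 on the path A-B-C, gene B scores 0.75 despite having no differential expression of its own, because both of its neighbors are perturbed.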

Phenotype-Driven Prioritization Protocol

The Phen2Gene workflow demonstrates a robust protocol for phenotype-driven gene prioritization [18]:

HPO Term Acquisition: Clinicians manually curate HPO terms or utilize natural language processing tools like Doc2HPO to extract relevant phenotypic terms from clinical notes.

Term Weighting: Phen2Gene automatically weights input HPO terms by calculating the skewness of gene score distributions for each term, giving more weight to specific, informative terms over general ones [18].

Knowledgebase Query: The tool accesses the HPO2Gene Knowledgebase (H2GKB), which contains precomputed weighted gene lists for each HPO term, generated through Enhanced Phenolyzer (v0.4.0) [18].

Gene Ranking: The system combines the ranked gene lists for all input HPO terms, incorporating term weights, to generate a final prioritized candidate list.

Result Integration: The output provides gene-disease relationships that can be integrated with sequencing data to identify potential causative variants.
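The weighting and combination steps can be illustrated with a toy sketch. It is not Phen2Gene's code and does not reproduce H2GKB. It assumes each HPO term maps to a dictionary of gene scores, and that the weight of a term is the sample skewness of its score distribution, with negative skew clipped to zero (an assumption). The final gene score is taken as the weighted sum across terms. Term names, gene names and scores are hypothetical.

```python
import numpy as np


def skewness(values) -> float:
    """Population (biased) sample skewness of a score distribution."""
    x = np.asarray(values, dtype=float)
    centered = x - x.mean()
    var = np.mean(centered ** 2)
    if var == 0.0:
        return 0.0
    return float(np.mean(centered ** 3) / var ** 1.5)


def weighted_term_ranking(term_gene_scores: dict):
    """Weight each term by the skewness of its gene scores and sum the weighted scores per gene."""
    genes = sorted({g for scores in term_gene_scores.values() for g in scores})
    totals = dict.fromkeys(genes, 0.0)
    weights = {}
    for term, scores in term_gene_scores.items():
        vec = [scores.get(g, 0.0) for g in genes]  # genes absent from a term score 0
        weights[term] = max(skewness(vec), 0.0)  # assumption: negative skew ignored
        for gene, score in zip(genes, vec):
            totals[gene] += weights[term] * score
    ranking = sorted(genes, key=lambda g: totals[g], reverse=True)
    return ranking, weights, totals


# Toy knowledgebase: a specific term concentrates its score on one gene; a general term spreads it out.
toy_kb = {
    "term_specific": {"G1": 1.0, "G2": 0.1, "G3": 0.05, "G4": 0.05},
    "term_general": {"G1": 0.5, "G2": 0.6, "G3": 0.55, "G4": 0.5},
}
ranking, weights, totals = weighted_term_ranking(toy_kb)
```

The specific term receives the larger weight because its score distribution is more right-skewed. That mirrors the intent of giving informative terms more influence. In the real tool, skewness is computed over each term's full gene list rather than over a four-gene toy set.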

Graph Convolutional Network Protocol

A more recent semi-supervised learning approach applies Graph Convolutional Networks (GCNs) to gene prioritization [3]:

Feature Vector Construction: Create three separate feature vectors for each gene using terms from GO's molecular function, cellular component, and biological process ontologies [3].

Network Integration: Train a graph convolutional network on these feature vectors using PPI network data to learn representations that encode both local graph structure and node features [3].

Model Training: Implement semi-supervised learning on the biological network, treating known disease genes as labeled nodes and candidate genes as unlabeled nodes.

Classification and Ranking: The trained GCN classifies and ranks candidate genes based on their likelihood of disease association, outperforming traditional network and machine learning methods [3].
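The semi-supervised idea can be illustrated with a deliberately simplified model. This is not the GCN of [3]. It is a simplified graph convolution in the spirit of SGC: node features are propagated with the normalized adjacency D^-1/2 (A + I) D^-1/2 for a few hops without nonlinearities, and a logistic regression is then fitted on the labeled nodes only. The sketch assumes that some genes can be labeled as presumed non-disease negatives. The two toy features stand in for GO-derived vectors, and all names, hyperparameters and data are hypothetical.

```python
import numpy as np


def normalized_adjacency(adj: np.ndarray) -> np.ndarray:
    """Symmetric normalization D^-1/2 (A + I) D^-1/2 used by graph convolution layers."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def sgc_scores(adj, features, labels, labeled_mask, hops=2, lr=0.5, epochs=500, l2=1e-3):
    """Propagate features over the graph, then fit logistic regression on labeled genes only."""
    a_norm = normalized_adjacency(adj)
    x = features.astype(float)
    for _ in range(hops):
        x = a_norm @ x  # linear graph convolution (no nonlinearity)
    x = np.hstack([x, np.ones((x.shape[0], 1))])  # bias column
    w = np.zeros(x.shape[1])
    x_lab, y_lab = x[labeled_mask], labels[labeled_mask]
    for _ in range(epochs):  # plain gradient descent on L2-penalized log-loss (bias penalized too)
        prob = 1.0 / (1.0 + np.exp(-(x_lab @ w)))
        w -= lr * (x_lab.T @ (prob - y_lab) / len(y_lab) + l2 * w)
    return 1.0 / (1.0 + np.exp(-(x @ w)))  # disease-association score for every gene


# Toy network: two 4-gene modules (0-3 and 4-7) joined by the edge 3-4.
adj = np.zeros((8, 8))
for module in (range(0, 4), range(4, 8)):
    for a in module:
        for b in module:
            if a != b:
                adj[a, b] = 1.0
adj[3, 4] = adj[4, 3] = 1.0
features = np.array([[1, 0], [1, 0], [0, 0], [1, 0], [0, 1], [0, 1], [0, 0], [0, 1]], dtype=float)
labels = np.array([1, 1, 0, 0, 0, 0, 0, 0], dtype=float)  # genes 0, 1 = known disease genes
labeled = np.array([True, True, False, False, False, True, False, True])  # 5, 7 = assumed negatives
scores = sgc_scores(adj, features, labels, labeled)
```

Candidate genes 2 and 6 both have empty feature vectors. Gene 2 nevertheless scores higher because propagation lets it inherit the features of its disease-module neighbors.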

Visualization of Workflows and Signaling Pathways

Gene Prioritization Integration Workflow

Figure: Gene Prioritization Data Integration Workflow. This workflow illustrates how the three essential data sources, PPI networks (yellow), Gene Ontology (red), and Human Phenotype Ontology (green), are integrated through computational platforms (blue) to generate prioritized candidate gene lists.

Phenotype-Driven Prioritization Mechanism

Figure: Phenotype-Driven Gene Prioritization. This diagram outlines the Phen2Gene workflow. It begins with patient phenotypic data, moves through HPO term extraction, weighting and knowledgebase querying, and culminates in a ranked gene list for clinical validation.

Research Reagent Solutions: Essential Materials and Databases

Table 3: Essential Research Resources for Gene Prioritization Studies

| Resource Category | Specific Resources | Function in Research | Access Information |
|---|---|---|---|
| PPI Networks | STRING [14], BioGRID [19], I2D [19], FunCoup [4] | Provide physical and functional interaction data for network-based prioritization algorithms. | STRING: https://string-db.org/ [14]; BioGRID: https://thebiogrid.org/ |
| Ontology Resources | Gene Ontology [16], Human Phenotype Ontology [18] | Standardized vocabularies for gene function and phenotypic abnormalities enabling computational analysis. | GO: http://geneontology.org/ [16]; HPO: https://hpo.jax.org/ |
| Annotation Databases | OMIM, ClinVar, Orphanet, GeneReviews [18] | Curated gene-disease associations for seeding prioritization algorithms and validating predictions. | OMIM: https://www.omim.org/; ClinVar: https://www.ncbi.nlm.nih.gov/clinvar/ |
| Software Tools | Phen2Gene [18], Enhanced Phenolyzer [18], Graph Convolutional Networks [3] | Implement prioritization algorithms and provide user-friendly interfaces for researchers. | Phen2Gene: https://phen2gene.wglab.org/ [18] |
| Benchmark Resources | GO term benchmarks [4], patient validation sets [18] | Enable objective performance comparison between different prioritization methods and algorithms. | Custom construction from published datasets [4] [18] |

Validation and Benchmarking Protocols

Cross-Validation Using Gene Ontology

Robust benchmarking of gene prioritization methods requires careful experimental design to avoid knowledge cross-contamination. Genes annotated with the same GO term form functionally coherent groups, so the Gene Ontology can serve as an objective benchmark through cross-validation [4]:

Term Selection: Select GO terms annotated with 10-300 genes to avoid terms that are too general or too specific [4].

Data Partitioning: Implement three-fold cross-validation where genes annotated with a specific GO term are randomly divided into three equal parts [4].

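Query Formation: Use two parts as the query set for the prioritization tool being assessed and hold out the third part as the genes to be recovered. Rotate the parts so that each one is held out once.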

Performance Measurement: Evaluate the presence and ranking of the held-out genes in the tool's output list, calculating true positives, false positives, true negatives, and false negatives.

Statistical Analysis: Calculate performance measures including Area Under the Curve (AUC), partial AUC (focusing on FPR ≤ 0.02), Median Rank Ratio (MedRR), and Normalized Discounted Cumulative Gain (NDCG) [4].
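The term filtering, fold construction and rank extraction steps can be sketched as follows. This is a minimal illustration, not the benchmark code of [4]. It assumes annotations are given as a mapping from GO term to a set of gene identifiers. The prioritization tool is treated as a black box that returns a ranked list of genes, and in practice the query genes would be excluded from that list. All function names are hypothetical.

```python
import random


def eligible_terms(annotations: dict, min_size: int = 10, max_size: int = 300) -> dict:
    """Keep GO terms annotated with between min_size and max_size genes (inclusive)."""
    return {term: genes for term, genes in annotations.items() if min_size <= len(genes) <= max_size}


def three_fold_splits(term_genes, seed: int = 0):
    """Yield (query, held_out) pairs so that each third of a term's genes is held out once."""
    genes = sorted(term_genes)
    random.Random(seed).shuffle(genes)
    folds = [genes[i::3] for i in range(3)]  # three near-equal random parts
    for k in range(3):
        query = [g for i, fold in enumerate(folds) if i != k for g in fold]
        yield query, folds[k]


def held_out_ranks(ranked_genes, held_out) -> list:
    """1-based ranks of held-out genes in a tool's output; genes absent from the output are skipped."""
    position = {gene: i + 1 for i, gene in enumerate(ranked_genes)}
    return sorted(position[g] for g in held_out if g in position)
```

The resulting ranks, together with the candidate list length, are all that the AUC, pAUC, MedRR and NDCG measures need.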

Performance Metrics and Clinical Validation

To assess practical utility in clinical settings, prioritization tools should be evaluated using multiple performance metrics:

Area Under the Curve (AUC) represents the probability of ranking a randomly chosen positive instance higher than a randomly chosen negative one, with partial AUC (pAUC) focusing on the most highly ranked genes (FPR ≤ 0.02) [4].

Median Rank Ratio (MedRR) is the median rank of the true positives divided by the total number of ranked candidates, which normalizes for candidate list length. The median is used because true-positive ranks are expected to be skewed [4].

Normalized Discounted Cumulative Gain (NDCG) from information retrieval penalizes true positives late in the list, emphasizing the importance of retrieving relevant genes as early as possible for practical experimental validation [4].

Clinical Diagnostic Yield measures the tool's performance on real patient data, as demonstrated by Phen2Gene's validation on 197 patients from scientific articles and 85 de-identified patient HPO term datasets from the Children's Hospital of Philadelphia [18].
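The four ranking metrics can be computed from the 1-based ranks of the true positives and the length of the candidate list. This standard-library sketch assumes binary relevance and no tied scores. Rescaling pAUC by the FPR cutoff so that it lies in [0, 1] is one common convention and may differ from the normalization used in [4]. Function names are hypothetical.

```python
import math
import statistics


def auc(ranks, n_candidates):
    """ROC AUC from the 1-based ranks of the true positives (no ties assumed)."""
    ranks = sorted(ranks)
    n_pos, n_neg = len(ranks), n_candidates - len(ranks)
    # For each positive, count the negatives ranked below it.
    neg_below = sum((n_candidates - r) - (n_pos - 1 - i) for i, r in enumerate(ranks))
    return neg_below / (n_pos * n_neg)


def partial_auc(ranks, n_candidates, max_fpr=0.02):
    """Area under the ROC curve for FPR <= max_fpr, rescaled by max_fpr to lie in [0, 1]."""
    positives = set(ranks)
    n_pos, n_neg = len(positives), n_candidates - len(positives)
    tp = fp = 0
    area = 0.0
    for r in range(1, n_candidates + 1):
        if r in positives:
            tp += 1
            continue
        # Each negative moves the ROC curve right by 1/n_neg at the current TPR.
        step_end = min((fp + 1) / n_neg, max_fpr)
        area += (tp / n_pos) * (step_end - fp / n_neg)
        fp += 1
        if fp / n_neg >= max_fpr:
            break
    return area / max_fpr


def median_rank_ratio(ranks, n_candidates):
    """MedRR: median true-positive rank over list length (lower is better)."""
    return statistics.median(ranks) / n_candidates


def ndcg(ranks, n_candidates):
    """Binary-relevance NDCG: DCG of the observed ranks over DCG of a perfect ranking."""
    dcg = sum(1.0 / math.log2(r + 1) for r in ranks if r <= n_candidates)
    ideal = sum(1.0 / math.log2(i + 1) for i in range(1, len(ranks) + 1))
    return dcg / ideal
```

For example, `auc([1, 4], 4)` evaluates to 0.5. One positive is ranked above both negatives and the other below both.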

The integration of PPI networks, Gene Ontology, and phenotype ontologies represents a powerful paradigm for candidate gene prioritization that leverages complementary data types to overcome the limitations of individual approaches. Network-based methods incorporating machine learning and diffusion algorithms capitalize on the guilt-by-association principle, while phenotype-driven approaches directly connect clinical observations to genetic causes. The continuing development of more sophisticated integration frameworks, particularly graph neural networks and semi-supervised learning methods, promises further improvements in prioritization accuracy. As these resources continue to expand in coverage and quality, and as computational methods become more advanced, bioinformatics approaches to gene prioritization will play an increasingly central role in both basic research and clinical diagnostics, accelerating the pace of gene discovery and therapeutic development for rare and complex diseases.

The field of human genetics has undergone a profound transformation over the past three decades, moving from studying simple Mendelian disorders to unraveling the complex architecture of common diseases. This evolution began with family-based linkage analysis and progressed through the genome-wide association study (GWAS) era, finally arriving at today's multi-omics integration approaches. This methodological progression has fundamentally reshaped how researchers identify and prioritize candidate genes, enhancing our understanding of disease mechanisms and accelerating therapeutic development.

The limitations of initial approaches became apparent as researchers sought to understand common complex diseases. As noted in a recent analysis, "when scientists sought to understand the genetic contributions to more common, complex diseases like heart disease, schizophrenia, and diabetes, they realized that relying on linkage analysis would not work" [20]. This recognition spurred the development of GWAS, which has since become a cornerstone of modern genetic epidemiology.

The Era of Linkage Analysis: Foundations of Genetic Mapping

Principles and Methodologies

Linkage analysis represented the first systematic approach to mapping disease genes in humans. This method relied on family pedigrees and the co-inheritance of genetic markers with traits of interest across generations. The fundamental principle was that genes located close to each other on a chromosome tend to be inherited together, allowing researchers to approximate the location of disease genes relative to known marker positions.

The typical workflow for linkage studies included:

- Pedigree Construction: Assembling multi-generation family trees showing which members inherited a particular trait

- Marker Genotyping: Using restriction fragment-length polymorphisms (RFLPs) as genetic markers

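- LOD Score Calculation: Computing the logarithm of the odds that a marker and trait are linked rather than inherited independently; a LOD score of 3 or more (1,000:1 odds in favor of linkage) is conventionally taken as significant evidence of linkage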

- Positional Cloning: Systematically narrowing the chromosomal region to identify the causal gene

This approach proved highly successful for monogenic disorders with clear inheritance patterns. As one analysis notes, "Some of the first genes that scientists tied to specific traits using linkage analysis were ones involved in rare, Mendelian diseases like Huntington's disease, sickle cell anemia, and cystic fibrosis" [20].

Limitations and Transition to GWAS

Despite its successes with monogenic disorders, linkage analysis faced significant challenges when applied to complex traits:

- Limited Resolution: The resolution was insufficient to pinpoint specific genes in complex regions

- Reduced Power for Complex Traits: Most common diseases involve multiple genes with small effects

- Family Recruitment Challenges: Collecting sufficient multi-generation families with adequate statistical power was logistically challenging

The recognition of these limitations set the stage for the GWAS era, particularly after Risch and Merikangas published their seminal 1996 paper demonstrating that "an association study that analyzes one million genetic markers from a sample of unrelated individuals could be more powerful, statistically, than a linkage analysis" [20].

The GWAS Revolution: Scale, Discovery, and Challenges

Technological and Methodological Foundations

The emergence of GWAS required the convergence of several critical technological developments that transformed genetic epidemiology:

Table 1: Key Technological Enablers of the GWAS Era

| Development | Description | Impact |
|---|---|---|
| SNP Arrays | Commercial microarrays for genotyping hundreds of thousands of SNPs | Enabled cost-effective genome-wide genotyping; early arrays detected ~1,400 SNPs, modern ones approach 1 million [20] |
| International HapMap | Catalog of common haplotypes and tag SNPs | Provided shortcut for comprehensive genome coverage using ~500,000 tag SNPs instead of 10+ million SNPs [20] |
| Large Biobanks | Collections of DNA samples with linked phenotype data | Provided the large sample sizes needed for statistical power; examples include UK Biobank (~500,000 participants) and 23andMe [21] [20] |
| Statistical Imputation | Methods to infer ungenotyped variants using reference panels | Dramatically increased genomic coverage beyond directly genotyped SNPs [22] |

The GWAS approach fundamentally differed from linkage studies by examining statistical associations between genetic variants and traits in unrelated individuals across the population. The standard case-control design compared allele frequencies between affected and unaffected individuals, requiring stringent significance thresholds (typically P < 5 × 10⁻⁸) to account for multiple testing [23].
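A single-variant case-control comparison can be sketched as an allelic chi-square test on a 2×2 table of allele counts. The genome-wide threshold of 5 × 10⁻⁸ corresponds to a Bonferroni correction of 0.05 for roughly one million independent common-variant tests. This is a minimal standard-library sketch, not a replacement for dedicated tools such as PLINK, which implement this and related tests. It assumes allele counts (two per individual) and Hardy-Weinberg equilibrium. For one degree of freedom, the chi-square survival function equals erfc(√(χ²/2)).

```python
import math

GENOME_WIDE_ALPHA = 5e-8  # roughly 0.05 / 1,000,000 independent tests


def allelic_chi2_test(case_alt: int, case_ref: int, ctrl_alt: int, ctrl_ref: int):
    """Pearson chi-square (1 df) on a 2x2 table of allele counts; returns (chi2, p_value)."""
    table = [[case_alt, case_ref], [ctrl_alt, ctrl_ref]]
    total = case_alt + case_ref + ctrl_alt + ctrl_ref
    row_totals = [sum(row) for row in table]
    col_totals = [case_alt + ctrl_alt, case_ref + ctrl_ref]
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / total
            chi2 += (table[i][j] - expected) ** 2 / expected
    p_value = math.erfc(math.sqrt(chi2 / 2))  # P(chi-square with 1 df > chi2)
    return chi2, p_value


# Hypothetical counts: 30/100 case alleles vs 10/100 control alleles carry the alternate allele.
chi2, p = allelic_chi2_test(30, 70, 10, 90)
is_genome_wide_significant = p < GENOME_WIDE_ALPHA
```

Here χ² = 12.5 and P ≈ 4 × 10⁻⁴. That would be nominally significant for a single test but far from genome-wide significance, which illustrates why large samples are needed.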

Major Discoveries and Insights

GWAS has generated remarkable insights into the genetic architecture of complex traits since the first landmark study in 2005 on age-related macular degeneration [24]. By 2023, the NHGRI-EBI Catalog of Human Genome-wide Association Studies documented "over 45,000 GWASs across 5,000 human traits" [20].

Key conceptual advances emerging from GWAS include:

- Ubiquitous Polygenicity: Most complex traits are influenced by thousands of genetic variants with small effects [22]

- Extensive Pleiotropy: Many genetic variants influence multiple seemingly unrelated traits [22]

- Missing Heritability: While GWAS has identified numerous trait-associated variants, they typically explain only a fraction of the estimated heritability [23]

The scale of GWAS has expanded dramatically, with sample sizes growing from thousands to millions of participants. As noted in a 2023 review, "Over the past 5 years, the average sample size per publication has more than tripled, substantially increasing the number of significant associations" [21].

Persistent Challenges and Limitations

Despite these successes, GWAS faces several persistent challenges that limit its translational potential:

- Limited Functional Insights: GWAS identifies statistical associations but does not directly reveal biological mechanisms [24]

- European Ancestry Bias: Approximately 80% of GWAS participants have European ancestry, limiting generalizability [24] [25]

- Linkage Disequilibrium Complications: Correlation between nearby variants makes pinpointing causal genes difficult [24]

- Clinical Translation Gap: Despite numerous discoveries, concrete medical applications remain limited [24]

As critically noted in a recent analysis, "The March 2025 bankruptcy of 23andMe serves as a stark reminder of the limited translational value of GWAS to the general public" [24].

Multi-Omics Integration: The Next Frontier

Conceptual Framework and Methodologies

Multi-omics integration represents the current frontier in genetic research, combining data from multiple molecular levels to bridge the gap between genetic associations and biological mechanisms. This approach recognizes that "each type of omics data—genomics, transcriptomics, epigenomics, proteomics, metabolomics, lipidomics, glycomics, and microbiomics—provides unique insights into different aspects of biological systems" [26].

Table 2: Major Multi-Omics Integration Approaches

| Approach | Description | Example Tools |
|---|---|---|
| Statistical and Enrichment Methods | Combine multiple omics layers to compute pathway enrichment scores | IMPaLA, Pathway Multiomics, MultiGSEA, PaintOmics, ActivePathways [26] |
| Machine Learning Methods | Use supervised or unsupervised learning to predict pathway activities | DIABLO, OmicsAnalyst (using LASSO regression), clustering, PCA [26] |
| Network-Based Methods | Construct interaction networks to identify key regulatory nodes | Oncobox, TAPPA, TBScore, Pathway-Express, SPIA, iPANDA [26] |

The power of multi-omics integration is exemplified in studies of complex traits like metabolic syndrome and sarcopenia, where researchers have leveraged "integrative genetics and transcriptome to identify potential biomarkers and immune interactions" [27]. Similarly, in stuttering research, integration of "genomic, transcriptomic and phenomic evidence" has helped "unravel the biological architecture of complex speech disorders" [28].

Practical Applications and Workflows

A representative multi-omics workflow for candidate gene prioritization includes:

- Genetic Association Mapping: Conduct GWAS to identify trait-associated genomic regions

- Transcriptomic Integration: Combine with expression quantitative trait locus (eQTL) data from resources like GTEx to identify genes whose expression is associated with trait-associated variants [27]

- Functional Annotation: Overlap associated regions with epigenetic marks, chromatin interactions, and protein-binding data

- Pathway Analysis: Identify biological pathways enriched for associations using tools like SPIA (Signaling Pathway Impact Analysis) [26]

- Network Construction: Build gene regulatory networks to identify key drivers and modules

- Experimental Validation: Prioritize candidates for functional follow-up studies

This integrated approach is particularly powerful for drug target discovery, as it helps bridge the gap between statistical associations and causal mechanisms. As noted in recent research, "Multi-omics data integration has been extensively used to study normal and pathological conditions by assessing molecular pathway activation" [26].

Integrated Protocols for Candidate Gene Prioritization

Protocol 1: Multi-Omics Data Integration for Pathway Analysis

Purpose: To integrate multiple omics data types for pathway activation assessment and candidate gene prioritization.

Materials:

- Genotype data from GWAS

- RNA-seq or microarray expression data

- Epigenomic data (DNA methylation, histone modifications)

- Pathway databases (OncoboxPD, KEGG, Reactome)

- Computational tools: SPIA, DEI (Drug Efficiency Index) software [26]

Methodology:

Data Preprocessing

- Perform quality control on each omics dataset separately

- Normalize data using appropriate methods for each data type

- Annotate genomic features using reference databases

Differential Analysis

- Identify differentially expressed genes between case and control samples

- Detect differentially methylated regions

- Find significant genetic associations from GWAS

Pathway Activation Calculation

- Apply topology-based pathway analysis using SPIA algorithm

- Calculate perturbation factors for each gene in pathways:

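Acc = B·(I - B)^{-1}·ΔE

where Acc is the vector of net accumulated perturbations, B is the pathway adjacency matrix (in SPIA, each entry is the signed interaction weight, +1 for activation or -1 for inhibition, divided by the number of downstream genes of the upstream gene), I is the identity matrix, and ΔE is the differential expression vector [26]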

- Compute pathway enrichment statistics
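The perturbation step can be sketched with NumPy by solving PF = ΔE + B·PF for the perturbation factors and taking Acc = PF - ΔE, which equals B·(I - B)^{-1}·ΔE. This is an illustrative sketch, not the SPIA package. It omits SPIA's over-representation test and permutation-based significance, and it assumes (I - B) is invertible, which holds for acyclic pathways. The pathway, gene names and function name are hypothetical.

```python
import numpy as np


def spia_accumulation(interactions, genes, delta_e):
    """interactions: (upstream, downstream, sign) triples, sign +1 = activation, -1 = inhibition."""
    idx = {g: i for i, g in enumerate(genes)}
    n = len(genes)
    n_downstream = np.zeros(n)
    for up, _, _ in interactions:
        n_downstream[idx[up]] += 1
    b = np.zeros((n, n))
    for up, down, sign in interactions:
        b[idx[down], idx[up]] += sign / n_downstream[idx[up]]  # normalized signed weight
    pf = np.linalg.solve(np.eye(n) - b, delta_e)  # PF = ΔE + B·PF
    acc = pf - delta_e  # equals B (I - B)^{-1} ΔE
    return pf, acc


# Hypothetical pathway A -> B -| C with only A differentially expressed.
genes = ["A", "B", "C"]
interactions = [("A", "B", 1), ("B", "C", -1)]
pf, acc = spia_accumulation(interactions, genes, np.array([2.0, 0.0, 0.0]))
```

The activation of A propagates positively to B and, through the inhibitory edge, negatively to C, even though neither B nor C changed expression itself.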

Multi-Omics Integration

- Integrate non-coding RNA data by considering their regulatory effects on mRNA

- Incorporate DNA methylation data as negative regulators of gene expression

- Calculate integrated pathway scores across omics layers

Candidate Gene Prioritization

- Rank genes based on consistent signals across multiple omics layers

- Prioritize genes located in network bottlenecks or hub positions

- Validate candidates using independent cohorts or functional experiments

Protocol 2: Cross-Phenotype Association Analysis

Purpose: To identify pleiotropic genes and variants influencing multiple related traits.

Materials:

- GWAS summary statistics for multiple traits

- Genomic annotation resources

- Computational tools: CPASSOC, COLOC, LD Score Regression [27]

Methodology:

Genetic Correlation Analysis

- Estimate genetic correlations between traits using LD score regression

- Assess shared heritability using the MiXeR tool [27]

Pleiotropic Variant Identification

- Apply cross-phenotype association analysis (CPASSOC)

- Test each variant for association with multiple traits

- Identify variants showing significant effects across phenotypes

Colocalization Analysis

- Determine if trait associations share causal variants

- Calculate posterior probabilities for different colocalization scenarios

Transcriptomic Integration

- Perform transcriptome-wide association study (TWAS) to identify pleiotropic genes

- Integrate single-cell RNA-seq data to map gene distribution across cell types [27]

Functional Validation

- Construct clinical predictive models using pleiotropic genes

- Validate findings in animal models where appropriate [27]

Table 3: Key Research Reagents and Computational Tools for Multi-Omics Research

| Category | Resource/Tool | Function | Application Context |
|---|---|---|---|
| Genotyping Arrays | Affymetrix, Illumina SNP chips | Genome-wide genotyping of common variants | Initial GWAS discovery phase [20] |
| Reference Panels | 1000 Genomes, gnomAD, HapMap | Reference for imputation and functional annotation | Improving genomic coverage and annotation [20] [22] |
| Expression Atlases | GTEx, Human Cell Atlas | Tissue and cell-type specific expression patterns | Linking variants to gene regulation [27] |
| Pathway Databases | KEGG, Reactome, OncoboxPD | Curated biological pathways and interactions | Pathway enrichment analysis [26] |
| Analysis Tools | PLINK, LDSC, SPIA, CPASSOC | Statistical analysis of genetic and multi-omics data | Association testing, genetic correlation, pathway analysis [27] [26] |
| Biobanks | UK Biobank, All of Us, Biobank Japan | Large-scale collections of genotyped samples with phenotype data | GWAS discovery and validation [21] |

Visualization Frameworks

Figures: Historical Evolution of Genetic Analysis Methods; Multi-Omics Integration Workflow

The evolution from linkage analysis to GWAS and multi-omics integration represents a fundamental transformation in how we approach the genetic architecture of complex traits. While each era has built on its predecessor, the current multi-omics framework provides the most comprehensive approach yet for candidate gene prioritization.

Future directions will likely focus on several key areas:

- Artificial Intelligence Integration: Leveraging machine learning and deep learning to identify complex patterns across omics layers [24]

- Improved Diversity: Addressing the European ancestry bias through dedicated studies in underrepresented populations [25]

- Temporal Dynamics: Incorporating longitudinal data to understand how molecular profiles change over time

- Single-Cell Resolution: Applying multi-omics approaches at single-cell resolution to understand cellular heterogeneity

- Clinical Translation: Developing frameworks to more effectively move from statistical associations to therapeutic applications

As the field continues to evolve, the integration of diverse data types and the development of sophisticated analytical frameworks will further enhance our ability to prioritize candidate genes and unravel the complex biology of human disease.

A Methodologist's Toolkit: Network, Machine Learning, and Hybrid Approaches

The identification of genes associated with diseases is a fundamental challenge in biomedical research. High-throughput technologies often generate large lists of candidate genes, but experimental validation of all candidates remains costly and time-consuming. Gene prioritization addresses this bottleneck by computationally ranking candidate genes based on their likelihood of being associated with a specific disease or phenotype, enabling researchers to focus experimental efforts on the most promising targets. Network-based prioritization strategies have emerged as powerful tools that leverage the "guilt-by-association" principle, which posits that genes causing similar diseases tend to interact with each other or reside in the same network neighborhoods [3].

Network-based methods can be broadly categorized into three main classes: neighborhood-based methods, which consider direct interactions between genes; diffusion-based methods, which propagate information across the network; and random walk methods, which explore network paths to identify relevant genes. These approaches transform sparse genomic data into biologically meaningful patterns by leveraging the complex web of molecular interactions, providing a systems-level perspective on gene-disease associations [29]. The scaffolding for these analyses typically consists of protein-protein interaction (PPI) networks or functional association networks, which serve as maps of functional relationships between genes and proteins [30] [19].

This article provides application notes and detailed protocols for implementing these network-based strategies, focusing on their practical application in candidate gene prioritization for researchers, scientists, and drug development professionals.

Key Concepts and Biological Rationale

Theoretical Foundations

The fundamental premise underlying network-based gene prioritization is that genes associated with similar diseases tend to cluster in specific regions of molecular networks. This concept, often called the "local hypothesis," suggests that the topological proximity between genes in a network reflects their functional relatedness and shared involvement in disease mechanisms [29]. Neighborhood-based methods operate on the direct connections between genes, assuming that disease genes often interact directly with other disease genes. The underlying principle is that if a candidate gene interacts directly with several known disease genes, it has a higher probability of being associated with the same disease [3].

Diffusion-based methods extend this concept beyond immediate neighbors by considering the global structure of the network. These methods simulate how information or influence spreads through the network, allowing the identification of genes that may not be direct neighbors but still reside in network proximity to known disease genes. The mathematical machinery of network diffusion amplifies associations between genes that lie in network proximity, transforming sparse input data into dense patterns that highlight biologically relevant network regions [29].

Random walk methods, particularly Random Walk with Restart (RWR), provide a sophisticated approach to quantifying network proximity by simulating a walker that randomly traverses the network, with a probability of returning to seed nodes (known disease genes). This approach captures the complex relational patterns between genes by considering all possible paths in the network, not just the shortest ones [30] [19]. The stationary distribution of the random walker provides a measure of the functional relatedness between genes, with higher probabilities indicating stronger associations with the seed genes.

Molecular Networks as Biological Scaffolds

Network-based methods require molecular networks that represent interactions between genes or proteins. The most commonly used networks include:

- Protein-Protein Interaction (PPI) Networks: These represent physical interactions between proteins and can be sourced from databases like BioGRID, I2D, and STRING [19].

- Functional Association Networks: These include both physical interactions and functional associations derived from various evidence types, such as gene co-expression, phylogenetic profiles, and text mining. STRING and FunCoup are prominent examples of such networks [19] [4].

These networks serve as the scaffolding upon which prioritization algorithms operate, providing the relational context that enables the inference of gene-disease associations. The choice of network can significantly impact prioritization results, as different networks vary in coverage, quality, and the types of interactions they represent [19].

Methodological Approaches

Neighborhood-Based Methods

Neighborhood-based methods constitute the most straightforward approach to network-based gene prioritization. These methods operate on the principle of direct connectivity, assuming that genes causing similar diseases often interact directly with each other in molecular networks.

Neighborhood Rough Set (NRS) Reduction carries the neighborhood idea into a different space. Rather than network neighbors, it considers neighborhoods of samples in gene expression space and selects informative gene subsets according to how well those neighborhoods discriminate between classes. The neighborhood of a sample s_i under a gene subset B is defined as:

δ_B(s_i) = {s_j | s_j ∈ S, Δ_B(s_i, s_j) ≤ δ}

where δ is a threshold and Δ_B(s_i, s_j) is a distance function in the gene subspace B [31]. The method evaluates the quality of gene subsets by calculating the dependency degree of the decision attribute (e.g., disease class) on the gene subset:

γ_B(D) = |Pos_B(D)| / |S|

where Pos_B(D), the positive region, contains the samples whose entire δ-neighborhood belongs to a single decision class. These samples can therefore be classified unambiguously using the gene subset B [31]. This approach allows for the selection of minimal gene subsets that maintain high classification accuracy while reducing noise and redundancy.
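The neighborhood and dependency-degree definitions translate directly into NumPy. This minimal sketch assumes Euclidean distance as Δ_B and a samples-by-genes expression matrix. It adds a simple greedy forward search that keeps adding the gene that most increases γ_B(D). That search is a common way to use the dependency degree for reduction, though not necessarily the exact search used in [31]. Function names and the toy data are hypothetical.

```python
import numpy as np


def neighborhood(expr: np.ndarray, subset: list, i: int, delta: float) -> np.ndarray:
    """Indices of samples within Euclidean distance delta of sample i in the gene subspace `subset`."""
    diffs = expr[:, subset] - expr[i, subset]
    return np.flatnonzero(np.sqrt((diffs ** 2).sum(axis=1)) <= delta)


def dependency_degree(expr: np.ndarray, labels: np.ndarray, subset: list, delta: float) -> float:
    """gamma_B(D) = |Pos_B(D)| / |S|: share of samples whose whole neighborhood has one class."""
    n = expr.shape[0]
    positive = [i for i in range(n) if np.all(labels[neighborhood(expr, subset, i, delta)] == labels[i])]
    return len(positive) / n


def greedy_nrs(expr: np.ndarray, labels: np.ndarray, delta: float, max_genes: int):
    """Forward selection: add the gene that most increases gamma; stop when gamma no longer improves."""
    selected, best = [], 0.0
    while len(selected) < max_genes:
        candidates = [g for g in range(expr.shape[1]) if g not in selected]
        if not candidates:
            break
        top_score, top_gene = max((dependency_degree(expr, labels, selected + [g], delta), g) for g in candidates)
        if top_score <= best:
            break
        selected.append(top_gene)
        best = top_score
    return selected, best


# Toy data: gene 0 separates the two classes; genes 1 and 2 are noise.
expr = np.array([[0.0, 0.5, 0.3], [0.1, 0.9, 0.3], [0.2, 0.1, 0.7],
                 [1.0, 0.4, 0.7], [1.1, 0.8, 0.3], [1.2, 0.2, 0.7]])
labels = np.array([0, 0, 0, 1, 1, 1])
selected, gamma = greedy_nrs(expr, labels, delta=0.3, max_genes=3)
```

The search keeps only gene 0, because adding either noise gene cannot raise γ above 1.0.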

Direct Neighborhood Ranking is a simpler approach that combines a gene's differential expression with the average differential expression of its direct neighbors in a functional association or PPI network. This method leverages the observation that disease genes often reside in network neighborhoods characterized by coherent differential expression patterns [19].

Diffusion-Based Methods

Diffusion-based methods extend the concept of neighborhood by considering the global structure of the network and simulating the propagation of information or influence across multiple steps. These methods are particularly effective at identifying disease genes that may not be direct neighbors of known disease genes but reside in broader network modules.

Heat Kernel Diffusion employs the heat kernel of a graph to simulate a diffusion process. The heat kernel is defined as:

H = e^{-αL}

where L is the Laplacian matrix of the network and α is a diffusion parameter that controls the rate of diffusion [19]. This method assigns scores to genes by considering the differential expression patterns in their extended network neighborhoods, with the influence of genes decreasing with their network distance from the target gene.
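Both the exact heat kernel and a discrete approximation of it can be sketched with NumPy. This is an illustration under stated assumptions, not the implementation in [19]. It assumes an undirected network with combinatorial Laplacian L = D - A and uses α = 0.5, the typical value cited for Heat Kernel Diffusion Ranking. The discrete version applies explicit Euler steps of dx/dt = -Lx, which converge to e^{-αL}x as the number of steps grows. Function names and the toy path network are hypothetical.

```python
import numpy as np


def laplacian(adj: np.ndarray) -> np.ndarray:
    return np.diag(adj.sum(axis=1)) - adj


def heat_kernel_scores(adj: np.ndarray, de: np.ndarray, alpha: float = 0.5) -> np.ndarray:
    """Exact diffusion H @ de with H = exp(-alpha * L), via eigendecomposition of symmetric L."""
    vals, vecs = np.linalg.eigh(laplacian(adj))
    kernel = vecs @ np.diag(np.exp(-alpha * vals)) @ vecs.T
    return kernel @ de


def discrete_heat_scores(adj: np.ndarray, de: np.ndarray, alpha: float = 0.5, steps: int = 1000) -> np.ndarray:
    """Discrete approximation: repeated explicit Euler steps of dx/dt = -L x up to time alpha."""
    lap = laplacian(adj)
    x = np.asarray(de, dtype=float)
    for _ in range(steps):
        x = x - (alpha / steps) * (lap @ x)
    return x


# Hypothetical 5-gene path network 0-1-2-3-4 with differential expression at both ends.
adj = np.zeros((5, 5))
for a in range(4):
    adj[a, a + 1] = adj[a + 1, a] = 1.0
de = np.array([1.0, 0.0, 0.0, 0.0, 2.0])
exact = heat_kernel_scores(adj, de)
approx = discrete_heat_scores(adj, de)
```

Because every column of L sums to zero, diffusion redistributes the differential expression signal without changing its total. Larger α spreads it further from the source genes.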

Kernel Ridge Regression Ranking uses kernel-based machine learning to smooth differential expression signals across the network. This approach constructs a similarity matrix between genes using network diffusion kernels, such as the Laplacian exponential diffusion kernel or regularized Laplacian kernel, and then applies kernel ridge regression to predict gene-disease associations [19].

Arnoldi Diffusion Ranking utilizes the Arnoldi algorithm, a Krylov subspace method, to approximate the diffusion process in large networks efficiently. This method is particularly useful for large-scale networks where explicit computation of matrix exponentials is computationally prohibitive [19].

Random Walk Methods

Random walk methods provide a powerful framework for capturing the complex relational patterns in biological networks by simulating a random walker that traverses the network according to defined transition probabilities.

Random Walk with Restart (RWR) is the most prominent random walk approach for gene prioritization. In RWR, a random walker starts from a set of seed nodes (known disease genes) and at each step either moves to a neighboring node or restarts from one of the seed nodes. The RWR algorithm can be formalized as:

p_{t+1} = (1 - r)Mp_t + rp_0

where p_t is the probability vector at time t, M is the column-normalized adjacency matrix of the network, r is the restart probability, and p_0 is the initial probability vector with equal probabilities for all seed genes [30]. The stationary distribution of this process provides a measure of proximity to the seed genes, with higher probabilities indicating stronger functional relatedness.
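The update rule can be iterated to convergence in a few lines of NumPy. This sketch assumes an undirected network given as a dense adjacency matrix. The restart probability r = 0.7 is an illustrative choice, not a value taken from [30], and the function name and toy path network are hypothetical.

```python
import numpy as np


def random_walk_with_restart(adj: np.ndarray, seeds, restart: float = 0.7,
                             tol: float = 1e-10, max_iter: int = 1000) -> np.ndarray:
    """Iterate p_{t+1} = (1 - r) M p_t + r p_0 until the L1 change falls below tol."""
    col_sums = adj.sum(axis=0)
    m = np.divide(adj, col_sums, out=np.zeros(adj.shape), where=col_sums > 0)  # column-normalized
    p0 = np.zeros(adj.shape[0])
    p0[list(seeds)] = 1.0 / len(seeds)  # equal probability on each seed gene
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * (m @ p) + restart * p0
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p


# Hypothetical path network 0-1-2-3-4 with gene 0 as the only known disease gene.
adj = np.zeros((5, 5))
for a in range(4):
    adj[a, a + 1] = adj[a + 1, a] = 1.0
p = random_walk_with_restart(adj, seeds=[0])
candidates = sorted((g for g in range(5) if g != 0), key=lambda g: -p[g])  # rank non-seed genes
```

A higher restart probability keeps the walker closer to the seeds and emphasizes local neighborhoods. A lower one lets more distant genes accumulate probability.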

RWR has been successfully applied to prioritize lymphoma-associated genes by mining raw candidate genes from a PPI network and subsequently filtering them through permutation, linkage, and enrichment tests to control false positives [30]. This approach identified 108 inferred genes with strong associations to lymphoma pathogenesis, including RAC3, TEC, IRAK2/3/4, and SMAD3.

Biological Random Walk (BRW) is an advanced variant that incorporates biological information into the random walk process. Unlike standard RWR, which typically uses uniform transition probabilities, BRW biases the random walk based on biological knowledge, such as gene expression or functional annotations, leading to more biologically informed prioritization [3].

Performance Comparison and Benchmarking

Quantitative Performance Metrics

Evaluating the performance of gene prioritization methods requires appropriate metrics that capture their ability to rank true disease genes highly. Commonly used metrics include:

- Area Under the ROC Curve (AUC): Measures the overall ranking performance, with values closer to 1.0 indicating better performance [19] [4].

- Partial AUC (pAUC): Focuses on a specific region of the ROC curve, typically the initial high-ranking portion, which is more relevant for practical applications where only the top candidates are considered [4].

- Median Rank Ratio (MedRR): Calculates the ratio between the median rank of true positive genes and the total number of candidates, with lower values indicating better performance [4].

- Normalized Discounted Cumulative Gain (NDCG): A measure from information retrieval that emphasizes the importance of retrieving true positives early in the ranked list [4].

Comparative Performance Analysis

Table 1: Performance comparison of network-based gene prioritization methods

| Method | Category | Average Rank | AUC | Error Reduction vs. Simple Expression Ranking |
|---|---|---|---|---|
| Simple Expression Ranking | Baseline | 17 | 83.7% | - |
| Heat Kernel Diffusion Ranking | Diffusion-based | 8 | 92.3% | 52.8% |
| Kernel Ridge Regression Ranking | Diffusion-based | ~12* | ~89%* | ~30%* |
| Arnoldi Diffusion Ranking | Diffusion-based | ~13* | ~88%* | ~25%* |
| Direct Neighborhood Ranking | Neighborhood-based | ~15* | ~85%* | ~10%* |
| Random Walk with Restart | Random Walk | Varies by implementation | Varies by implementation | Varies by implementation |

Note: Values marked with * are approximate based on reported results in [19].

A large-scale benchmark study based on Gene Ontology terms and the FunCoup network compared state-of-the-art gene prioritization algorithms, including network diffusion methods and MaxLink, a neighborhood-based method [4]. The study demonstrated that network-based methods consistently outperform simple expression-based ranking, with diffusion-based methods generally showing superior performance compared to neighborhood-based approaches.

The performance of these methods can be influenced by several factors, including the quality and completeness of the underlying molecular network, the choice of parameters (e.g., diffusion rate, restart probability), and the specific characteristics of the disease under investigation [19] [4]. Methods like Heat Kernel Diffusion have shown particularly strong performance, achieving an average rank position of 8 out of 100 genes compared to 17 for simple expression ranking, with an AUC value of 92.3% versus 83.7% for the baseline approach [19].
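The error-reduction column in Table 1 is consistent with defining the ranking error as 1 - AUC. This interpretation is assumed here because the text does not restate the definition. Under that assumption, the Heat Kernel Diffusion figure follows from the two AUC values:

```latex
\text{Error reduction}
  = \frac{(1 - \mathrm{AUC}_{\text{baseline}}) - (1 - \mathrm{AUC}_{\text{method}})}{1 - \mathrm{AUC}_{\text{baseline}}}
  = \frac{0.163 - 0.077}{0.163} \approx 0.528
```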

Experimental Protocols

Protocol 1: Gene Prioritization Using Random Walk with Restart

Purpose: To prioritize candidate genes for a specific disease using Random Walk with Restart on a protein-protein interaction network.

Materials and Reagents:

- Known disease genes (seed genes) from databases such as DisGeNET

- Protein-protein interaction network from STRING or BioGRID

- Computing environment with R or Python

Procedure:

- Prepare Seed Genes: Compile a list of known disease-associated genes from curated databases. For lymphoma, this might include 1,458 known lymphoma-associated genes [30].

- Preprocess Network: Download and preprocess a PPI network, filtering interactions by confidence score if necessary. For STRING networks, consider using a confidence threshold (e.g., score > 700) [30].

- Construct Normalized Adjacency Matrix: Create an adjacency matrix A from the network, then compute the normalized matrix M by dividing each column by its sum.

- Set Initial Probability Vector: Create vector p₀ with equal probabilities for all seed genes (summing to 1) and zeros for all other genes.

- Run Iterative Random Walk: Iterate the equation p_{t+1} = (1 - r) M p_t + r p_0 until convergence, i.e., until the change between p_t and p_{t+1} (e.g., their L1 distance) falls below a threshold such as 10⁻⁸.

- Filter Results: Apply permutation, linkage, and enrichment tests to control false positives. For lymphoma, this process identified 108 high-confidence candidate genes [30].

- Validate Findings: Search scientific literature and analyze protein interaction partners to assess the biological relevance of top-ranked candidates.
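The matrix construction, initialization, and iteration steps of Protocol 1 can be sketched in NumPy as follows. The sketch makes these assumptions:

- The adjacency matrix is dense and small enough to fit in memory. A genome-scale STRING network would call for a sparse matrix.
- The restart probability r = 0.7 is illustrative only.
- Convergence is measured with the L1 norm.

The permutation, linkage, and enrichment filters are not included.

```python
import numpy as np


def random_walk_with_restart(adj, seed_idx, r=0.7, tol=1e-8, max_iter=10_000):
    """Return the stationary RWR probability of every gene given the seed genes.

    adj: symmetric (optionally weighted) adjacency matrix of the PPI network.
    seed_idx: indices of known disease genes.
    r: restart probability; 0.7 is an illustrative value, not one taken from [30].
    """
    adj = np.asarray(adj, dtype=float)
    col_sums = adj.sum(axis=0)
    col_sums[col_sums == 0] = 1.0          # isolated genes keep an all-zero column
    M = adj / col_sums                      # column-normalized adjacency matrix

    p0 = np.zeros(adj.shape[0])
    p0[seed_idx] = 1.0 / len(seed_idx)      # equal probability on each seed gene
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - r) * (M @ p) + r * p0
        if np.abs(p_next - p).sum() < tol:  # L1 change between iterations
            return p_next
        p = p_next
    raise RuntimeError("random walk did not converge")


# Toy network: genes 0 and 1 are seeds; gene 2 touches both, gene 3 only gene 1.
edges = [(0, 2), (1, 2), (1, 3), (3, 4)]
adj = np.zeros((5, 5))
for i, j in edges:
    adj[i, j] = adj[j, i] = 1.0
scores = random_walk_with_restart(adj, seed_idx=[0, 1])
ranking = sorted([2, 3, 4], key=lambda g: -scores[g])
print(ranking)
```

Gene 2 outranks gene 3 because it is adjacent to both seeds. Gene 4 ranks last because it is two steps away from the nearest seed.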

Protocol 2: Diffusion-Based Prioritization Using Heat Kernel

Purpose: To prioritize candidate genes using heat kernel diffusion on a functional association network.

Materials and Reagents:

- Gene expression data from case-control studies

- Functional association network (e.g., STRING, FunCoup)

- Differential expression statistics

Procedure:

- Compute Differential Expression: Calculate differential expression measures (e.g., log2 ratio, test statistics) between case and control samples. Preselect top p genes (e.g., 300) based on statistical significance to reduce noise [19].

- Construct Network Laplacian: Build the Laplacian matrix L of the functional association network, defined as L = D - A, where A is the weighted adjacency matrix and D is the diagonal matrix of weighted node degrees (D_ii = Σ_j A_ij).

- Compute Heat Kernel: Calculate the heat kernel matrix H = e^{-αL} using matrix exponentiation, where α is the diffusion parameter (typically around 0.5) [19].

- Smooth Expression Signals: Compute smoothed expression scores by multiplying the heat kernel matrix with the vector of differential expression values.

- Rank Candidate Genes: Rank genes based on their smoothed expression scores, with higher scores indicating stronger association with the disease.

- Evaluate Performance: Assess prioritization performance using cross-validation on known disease genes if available.
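A minimal sketch of the Laplacian, heat-kernel, and smoothing steps of Protocol 2 follows. It assumes a dense symmetric network and uses α = 0.5 as an example value. Because L is symmetric, the matrix exponential can be computed exactly from its eigendecomposition; `scipy.linalg.expm` would give the same matrix.

```python
import numpy as np


def heat_kernel_scores(adj, de_scores, alpha=0.5):
    """Smooth per-gene differential-expression scores with H = exp(-alpha * L).

    adj: symmetric weighted adjacency matrix; de_scores: one value per gene,
    e.g. absolute t-statistics. L = D - A is symmetric, so exp(-alpha * L) is
    computed from the eigendecomposition L = V diag(w) V^T.
    """
    adj = np.asarray(adj, dtype=float)
    laplacian = np.diag(adj.sum(axis=1)) - adj       # L = D - A
    w, V = np.linalg.eigh(laplacian)
    H = (V * np.exp(-alpha * w)) @ V.T               # heat kernel matrix
    return H @ np.asarray(de_scores, dtype=float)    # smoothed scores


# Genes 1 and 2 are equally differentially expressed, but only gene 1
# is linked to a strongly differentially expressed gene (gene 0).
adj = np.zeros((4, 4))
adj[0, 1] = adj[1, 0] = 1.0
adj[2, 3] = adj[3, 2] = 1.0
smoothed = heat_kernel_scores(adj, [3.0, 1.0, 1.0, 0.0])
ranking = np.argsort(-smoothed)
```

Gene 1 ends up with a smoothed score of about 1.63, versus about 0.68 for gene 2, because the kernel passes part of gene 0's signal to its neighbour. The total score is conserved, since the columns of the heat kernel sum to 1.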

Protocol 3: Neighborhood-Based Prioritization Using Rough Sets

Purpose: To select minimal gene subsets with high discriminative power using neighborhood rough sets.

Materials and Reagents:

- Normalized gene expression dataset

- Class labels (e.g., disease subtypes)

- Computing environment with MATLAB or Python

Procedure:

- Normalize Data: Normalize expression measurements per gene by subtracting the minimum and dividing by the range, scaling values to [0,1] [31].

- Preselect Genes: Perform initial gene preselection using statistical tests (e.g., Kruskal-Wallis rank sum test for multi-class problems) and select top p genes (e.g., 300) [31].

- Define Neighborhoods: For each candidate gene subset, define neighborhoods for each sample using a distance metric (e.g., Manhattan distance) and a threshold δ.

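- Compute Dependency Degree: Calculate the dependency degree γ_B(D) = |Pos_B(D)| / |S|. Here B is the candidate gene subset, D denotes the class labels, and S is the set of all samples. The positive region Pos_B(D) contains the samples whose neighborhoods consistently belong to a single class [31].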

- Search for Reducts: Implement a breadth-first heuristic search to find minimal gene subsets (reducts) that maintain high dependency degree.

- Prioritize Genes: Rank genes based on their significance (sig) parameter, which reflects their occurrence frequency in multiple selected gene subsets [31].

- Validate Classifiers: Build classifiers using the selected gene subsets and evaluate their classification accuracy on test datasets.
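The normalization and dependency-degree steps of Protocol 3 can be sketched as below. The sketch assumes samples in rows and genes in columns, Manhattan distance, and an example threshold δ = 0.2. The breadth-first reduct search and the sig-based ranking are not shown. Such a search would call the hypothetical helper `dependency_degree` on candidate subsets and keep the smallest subsets whose γ remains high.

```python
import numpy as np


def min_max_scale(X):
    """Scale each gene (column) to [0, 1]: (x - min) / (max - min)."""
    X = np.asarray(X, dtype=float)
    lo = X.min(axis=0)
    span = X.max(axis=0) - lo
    span[span == 0] = 1.0                      # constant genes map to 0 instead of NaN
    return (X - lo) / span


def dependency_degree(X, y, genes, delta=0.2):
    """gamma_B(D) = |Pos_B(D)| / |S| for the gene subset B given by `genes`.

    X: samples x genes matrix scaled to [0, 1]; y: class label of each sample.
    A sample lies in the positive region Pos_B(D) when every sample within
    Manhattan distance delta of it (measured on genes B) has the same class.
    """
    Xb = np.asarray(X, dtype=float)[:, genes]
    y = np.asarray(y)
    dist = np.abs(Xb[:, None, :] - Xb[None, :, :]).sum(axis=2)  # pairwise Manhattan distances
    neighbours = dist <= delta                                   # each sample is its own neighbour
    in_pos_region = [np.all(y[neighbours[i]] == y[i]) for i in range(len(y))]
    return float(np.mean(in_pos_region))


# Six samples, two classes: gene 0 separates the classes, gene 1 does not.
raw = np.array([[1, 5], [2, 7], [3, 9],
                [9, 5], [10, 7], [11, 9]])
labels = np.array([0, 0, 0, 1, 1, 1])
X = min_max_scale(raw)
gamma_gene0 = dependency_degree(X, labels, [0])
gamma_gene1 = dependency_degree(X, labels, [1])
```

Gene 0 alone gives γ = 1.0, because no sample has a neighbour of the other class within δ. Gene 1 gives γ = 0.0, because every sample has an identical twin in the other class.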

Visualization of Workflows

Figure: Random Walk with Restart gene prioritization workflow

Figure: Network diffusion-based gene prioritization methodology

Research Reagent Solutions

Table 2: Essential research reagents and resources for network-based gene prioritization

| Resource Type | Specific Examples | Function in Analysis | Key Characteristics |
|---|---|---|---|
| Protein Interaction Networks | STRING, BioGRID, I2D, FunCoup | Provides scaffolding for network analyses; represents functional relationships between genes | Varying coverage and confidence scores; STRING includes functional associations beyond physical interactions [30] [19] [4] |
| Disease Gene Databases | DisGeNET, OMIM | Sources of known disease genes for seed sets and validation | Curated associations with evidence levels; DisGeNET contains 1,458 lymphoma-associated genes [30] |
| Gene Ontology Annotations | GO Biological Process, Molecular Function, Cellular Component | Provides functional context and feature vectors for machine learning approaches | Hierarchical structure with parent-child relationships; enables robust benchmarking [4] [3] |
| Gene Expression Data | GEO, ArrayExpress | Input for differential expression analysis and network propagation | Requires normalization (MAS5, RMA, GCRMA); differential measures include log2 ratio, test statistics [19] |
| Prioritization Algorithms | RWR, Heat Kernel, NRS Reduction | Core computational methods for ranking candidate genes | Varying parameters: restart probability (r), diffusion rate (α), neighborhood threshold (δ) [31] [30] [19] |
| Validation Frameworks | Cross-validation, Permutation Tests | Performance assessment and false positive control | Metrics: AUC, pAUC, MedRR, NDCG; three-fold cross-validation recommended [4] |

Network-based strategies, including neighborhood, diffusion, and random walk methods, have become central to candidate gene prioritization because they exploit the guilt-by-association principle. Genes that interact with known disease genes, or lie close to them in a molecular network, are more likely to share their phenotype. These approaches propagate sparse evidence, such as a few seed genes or a set of differentially expressed genes, across the network. The result is a genome-wide ranking that helps researchers select the most promising candidates for experimental validation. Because protein interactions, gene expression, and functional annotations can all be represented within a single network framework, these methods also offer a natural way to integrate multiple data types when studying gene-disease associations.

As the field advances, several trends are shaping the development of network-based prioritization methods. The incorporation of deep learning approaches, particularly graph convolutional networks, represents a promising direction that can capture complex network patterns and integrate heterogeneous data sources [3]. Additionally, the emergence of single-cell technologies and multi-omics integration presents new opportunities and challenges for network-based analysis [29]. The development of robust benchmarking frameworks, such as those based on Gene Ontology terms, will be crucial for objectively evaluating new methods and guiding their application to specific research contexts [4].

For researchers and drug development professionals, the choice of prioritization strategy should be guided by the specific research question, data availability, and biological context. Neighborhood methods are simple and interpretable but consider only direct interactions. Diffusion methods propagate signals smoothly across the whole network. Random walk methods capture indirect, multi-step proximity to the seed genes, with the restart probability controlling how far the walk reaches. By weighing these strengths and limitations, researchers can choose the strategy best suited to accelerating the discovery of disease-associated genes and the development of novel therapeutics.

Application Notes: Machine Learning for Gene Prioritization

Candidate gene prioritization is a critical step in bioinformatics. By identifying the genes most likely to be associated with a disease or phenotype, it accelerates the translation of genomic discoveries into therapeutic insights. Machine learning (ML) has substantially advanced this field by enabling the integration and analysis of diverse, high-dimensional biological data. Below, we summarize the core machine learning paradigms used in gene prioritization, their key applications, and quantitative performance comparisons.

Table 1: Machine Learning Paradigms in Gene Prioritization

| ML Paradigm | Definition & Principle | Key Applications in Gene Prioritization | Representative Tools/Methods |
|---|---|---|---|
| Supervised Learning | Trains models on labeled input-output pairs to predict outputs for new, unseen data. [32] | Classification of genes as disease-associated or not; regression for estimating association scores. [3] [32] | PROSPECTR (Decision Trees), Support Vector Machines (SVMs), Random Forests [3] [32] |
| Semi-Supervised Learning | Leverages a small amount of labeled data and a large amount of unlabeled data to improve learning performance. [3] [33] | Gene-disease association prediction using labeled seed genes and unlabeled candidate genes in a network. [3] | Graph Convolutional Networks (GCNs) with pseudo-labeling [3] [33] |
| Deep Learning on Graphs | A subset of deep learning that uses neural network architectures on graph-structured data. [3] [34] | Prioritizing genes by learning from biological networks (e.g., PPI) and node features. [3] [35] | GCNs, regX (Mechanism-informed DNN), DeepGenePrior (VAE) [3] [36] [35] |

The performance of these methods is typically evaluated using metrics such as precision, the area under the ROC curve (AUC), and F1-score. [3]
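Precision and F1-score require a cut-off that turns a ranking into a set of predictions. The sketch below assumes one common convention: the top-k candidates are treated as predicted disease genes. The cited studies may define their cut-offs differently, and the gene symbols are placeholders.

```python
def precision_recall_f1_at_k(ranked_genes, known_genes, k):
    """Treat the top-k ranked candidates as predicted disease genes."""
    predicted = set(ranked_genes[:k])
    known = set(known_genes)
    tp = len(predicted & known)                  # known disease genes recovered in the top k
    precision = tp / k
    recall = tp / len(known)
    f1 = 0.0 if tp == 0 else 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical gene symbols; G2 and G5 are known disease genes ranked 2nd and 5th.
ranking = ["G1", "G2", "G3", "G4", "G5"]
known = ["G2", "G5", "G7"]
print(precision_recall_f1_at_k(ranking, known, k=5))
```

At k = 5 this gives precision 0.4, recall 2/3, and F1 0.5. G7 counts against recall because it does not appear in the ranking at all.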

Table 2: Comparative Performance of Selected Gene Prioritization Methods

| Method | ML Paradigm | Key Data Sources | Reported Performance |
|---|---|---|---|
| GCN with GO features [3] | Semi-Supervised Learning | Protein-Protein Interaction (PPI) network, Gene Ontology (GO) terms | Achieved best results in terms of precision, AUC, and F1-score across 16 diseases when compared to eight state-of-the-art methods. [3] |
| DeepGenePrior [36] | Deep Learning (Variational Autoencoder) | Copy Number Variants (CNVs) | Showed a 12% increase in fold enrichment in brain-expressed genes and a 15% increase in genes associated with mouse nervous system phenotypes compared to other tools. [36] |
| Semi-Supervised Learning with Pseudo-labeling [33] | Semi-Supervised Learning | DNA sequences from human and other mammalian genomes, with labels derived from assays such as ChIP-seq and ATAC-seq | Improved predictive performance in regulatory genomics compared to standard supervised learning, especially for transcription factors with very little binding data. [33] |

Experimental Protocols

Protocol 1: Semi-Supervised Gene Prioritization using Graph Convolutional Networks (GCNs)

Objective: To prioritize candidate disease genes by training a semi-supervised Graph Convolutional Network on a protein-protein interaction network integrated with Gene Ontology features. [3]
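For orientation, the sketch below shows a two-layer forward pass using the widely used GCN propagation rule of Kipf and Welling, H' = σ(D̃^(-1/2) Ã D̃^(-1/2) H W) with Ã = A + I. Whether [3] uses exactly this rule is an assumption. The network, GO feature matrix, and weights are toy placeholders, and the step of training the weights on labeled seed genes is omitted.

```python
import numpy as np


def normalized_adjacency(adj):
    """D~^(-1/2) (A + I) D~^(-1/2): adjacency with self-loops, symmetrically normalized."""
    a_tilde = np.asarray(adj, dtype=float) + np.eye(len(adj))
    d_inv_sqrt = 1.0 / np.sqrt(a_tilde.sum(axis=1))
    return a_tilde * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def gcn_forward(adj, features, w1, w2):
    """Two GCN layers: ReLU hidden layer, then per-gene softmax over {non-disease, disease}."""
    a_hat = normalized_adjacency(adj)
    hidden = np.maximum(a_hat @ features @ w1, 0.0)      # layer 1 + ReLU
    logits = a_hat @ hidden @ w2                          # layer 2
    logits = logits - logits.max(axis=1, keepdims=True)   # stabilise the softmax
    probs = np.exp(logits)
    return probs / probs.sum(axis=1, keepdims=True)


rng = np.random.default_rng(0)
n_genes, n_go_terms, n_hidden = 5, 8, 4
adj = np.zeros((n_genes, n_genes))
for i in range(n_genes - 1):                               # toy PPI path 0-1-2-3-4
    adj[i, i + 1] = adj[i + 1, i] = 1.0
features = rng.integers(0, 2, size=(n_genes, n_go_terms)).astype(float)  # binary GO annotations
w1 = rng.normal(scale=0.1, size=(n_go_terms, n_hidden))   # untrained placeholder weights
w2 = rng.normal(scale=0.1, size=(n_hidden, 2))
disease_prob = gcn_forward(adj, features, w1, w2)[:, 1]   # ranking score once weights are trained
```

Each layer mixes a gene's GO features with those of its interaction partners. This is how the semi-supervised model can score unlabeled candidates from a small set of labeled seed genes.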

Research Reagent Solutions

Table 3: Essential Materials for GCN Protocol

| Item | Function/Description | Example Source |
|---|---|---|