FAIR Principles for Biological Data Integration: A Complete Guide for Biomedical Researchers

Abstract

This comprehensive guide explores the critical role of FAIR (Findable, Accessible, Interoperable, Reusable) principles in biological data integration for research and drug development. We demystify the core concepts, provide actionable methodological frameworks for implementation, address common technical and cultural challenges, and validate approaches through comparative analysis of tools and platforms. Designed for researchers, scientists, and drug development professionals, this article equips you to transform disparate biological data into a powerful, integrated, and machine-actionable knowledge asset that accelerates discovery.

What Are FAIR Principles? Demystifying the Foundation for Modern Biological Data Integration

The integration of biological data across disparate sources is a cornerstone of modern biomedical research, enabling discoveries in genomics, proteomics, and drug development. The FAIR Guiding Principles—Findable, Accessible, Interoperable, and Reusable—have emerged as a critical framework to address data fragmentation and siloing. This whitepaper provides an in-depth technical guide to the FAIR principles, framed within the thesis that systematic implementation of FAIR is not merely a data management concern but a foundational requirement for scalable, reproducible, and integrative biological research. By dissecting each component with technical rigor, this document aims to equip researchers and drug development professionals with the methodologies and tools necessary for practical implementation.

The FAIR Principles: A Technical Decomposition

Findable

The first step to data reuse is discovery. Findability is predicated on machine-actionable, rich metadata and persistent, unique identifiers.

Core Requirements:

- Globally Unique and Persistent Identifiers (PIDs): Data and metadata must be assigned a PID (e.g., DOI, ARK, accession number) that outlives the initial location or creator.

- Rich Metadata: Data must be described with a plurality of accurate and relevant attributes (metadata).

- Metadata Indexing in a Searchable Resource: Metadata records must be registered or indexed in a searchable resource (e.g., a repository, data catalog).

- Clear Data Identifier in Metadata: The PID for the described data must be explicitly included within the metadata record itself.

Experimental Protocol for Ensuring Findability:

- Pre-registration: Prior to data generation, register your study in a registry (e.g., ClinicalTrials.gov for clinical studies) to obtain a study-level PID.

- Repository Selection: Deposit data in a certified, domain-specific repository (e.g., ENA/NCBI SRA for sequences, PRIDE for proteomics, BioStudies for multi-omics) that issues PIDs.

- Metadata Schema Application: Describe the dataset using a community-agreed metadata standard (e.g., MIAME for microarray, ISA-Tab as a general framework).

- Harvestable Exposure: Ensure repository metadata is exposed via standard protocols (e.g., OAI-PMH) for harvesting by broader search engines like Google Dataset Search or the European Open Science Cloud (EOSC) portal.
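
The findability requirements above can be checked mechanically before deposition. The following is a minimal sketch, not a repository's actual validation logic: the field names (`identifier`, `data_identifier`, `indexed_in`) and the list of required attributes are assumptions made for illustration, and the DOIs are placeholders.

```python
from typing import Any

# Hypothetical minimum set of descriptive attributes; a real project would
# take this list from its community metadata standard.
REQUIRED_ATTRIBUTES = ("title", "description", "creators", "keywords")


def findability_gaps(record: dict[str, Any]) -> list[str]:
    """Return human-readable gaps; an empty list means none were found."""
    gaps = []
    if not record.get("identifier"):
        gaps.append("metadata record has no PID of its own")
    if not record.get("data_identifier"):
        gaps.append("metadata does not name the PID of the data it describes")
    for attribute in REQUIRED_ATTRIBUTES:
        if not record.get(attribute):
            gaps.append(f"missing descriptive attribute: {attribute}")
    if not record.get("indexed_in"):
        gaps.append("record is not registered in a searchable resource")
    return gaps


example = {
    "identifier": "doi:10.1234/example-metadata",   # placeholder, not a real DOI
    "data_identifier": "doi:10.1234/example-data",  # placeholder, not a real DOI
    "title": "RNA-seq of patient-derived cell lines",
    "description": "Control vs. disease expression profiles.",
    "creators": ["Example Lab"],
    "keywords": [],  # empty on purpose, so the check reports one gap
    "indexed_in": ["example-catalog"],
}

print(findability_gaps(example))
```

A check like this is only a pre-flight aid; the repository's own submission validator remains the authority on what is required.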

Accessible

Once found, data and metadata must be retrievable by standardized, open, and free protocols.

Core Requirements:

- Standardized Communication Protocol: Data is retrieved using a standardized, open, and universally implementable protocol (e.g., HTTP(S), FTP).

- Authentication & Authorization: The protocol should allow for an authentication and authorization procedure, where necessary.

- Metadata Persistence: Metadata must remain accessible even if the underlying data is no longer available (e.g., due to legal, technical, or privacy constraints).

Experimental Protocol for Ensuring Accessibility:

- Protocol Selection: Use HTTPS for public data access. For large-scale data transfers, consider protocols like Aspera or GridFTP, but ensure an HTTPS fallback for metadata.

- Access Tier Definition: Define clear access tiers: a) Open (public), b) Registered (basic login), c) Controlled (e.g., Data Access Committee approval for human genomic data under GA4GH standards).

- Metadata Archiving: Submit metadata to an archival resource independent of the data storage system. Use services that provide metadata-PID persistence (e.g., DataCite).
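
The access tiers and the metadata persistence rule can be expressed as a small decision function. This is a sketch under stated assumptions: the `Dataset` class, the `resolve` function, and the returned status strings are hypothetical and do not correspond to any GA4GH or repository API.

```python
from dataclasses import dataclass
from enum import Enum


class AccessTier(Enum):
    OPEN = "open"              # public
    REGISTERED = "registered"  # basic login
    CONTROLLED = "controlled"  # Data Access Committee (DAC) approval


@dataclass
class Dataset:
    pid: str
    tier: AccessTier
    metadata: dict
    data_available: bool = True


def resolve(dataset: Dataset, logged_in: bool = False, dac_approved: bool = False) -> dict:
    """Decide what a requester may retrieve; metadata is returned in every case."""
    if not dataset.data_available:
        data_access = "withdrawn"
    elif dataset.tier is AccessTier.OPEN:
        data_access = "granted"
    elif dataset.tier is AccessTier.REGISTERED:
        data_access = "granted" if logged_in else "login required"
    else:
        data_access = "granted" if dac_approved else "DAC approval required"
    return {"pid": dataset.pid, "metadata": dataset.metadata, "data_access": data_access}


genomes = Dataset("example:0001", AccessTier.CONTROLLED, {"title": "Human WGS cohort"})
print(resolve(genomes)["data_access"])
```

Note that the withdrawn case still returns the metadata record, which mirrors the requirement that metadata outlive the data.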

Interoperable

Data must integrate with other data and applications for analysis, storage, and processing.

Core Requirements:

- Use of Formal, Accessible, Shared Language: Use controlled vocabularies, ontologies, and knowledge graphs (e.g., GO, ChEBI, SNOMED CT, OBO Foundry ontologies).

- Use of Qualified References: Metadata should include qualified references to other (meta)data using PIDs and relationship descriptors.

Experimental Protocol for Ensuring Interoperability:

- Ontology Annotation: Map all key metadata attributes to terms from public ontologies. Use tools like the Ontology Lookup Service (OLS) or Zooma.

- Semantic Enrichment: Use text-mining tools (e.g., Whatizit, NCBO Annotator) to annotate free-text descriptions with ontology terms.

- Linked Data Modeling: Structure metadata as Linked Data using schemas like Schema.org in JSON-LD format, creating explicit RDF triples (Subject-Predicate-Object) that link your dataset to external resources (e.g., linking a gene identifier in your data to its entry in Ensembl).

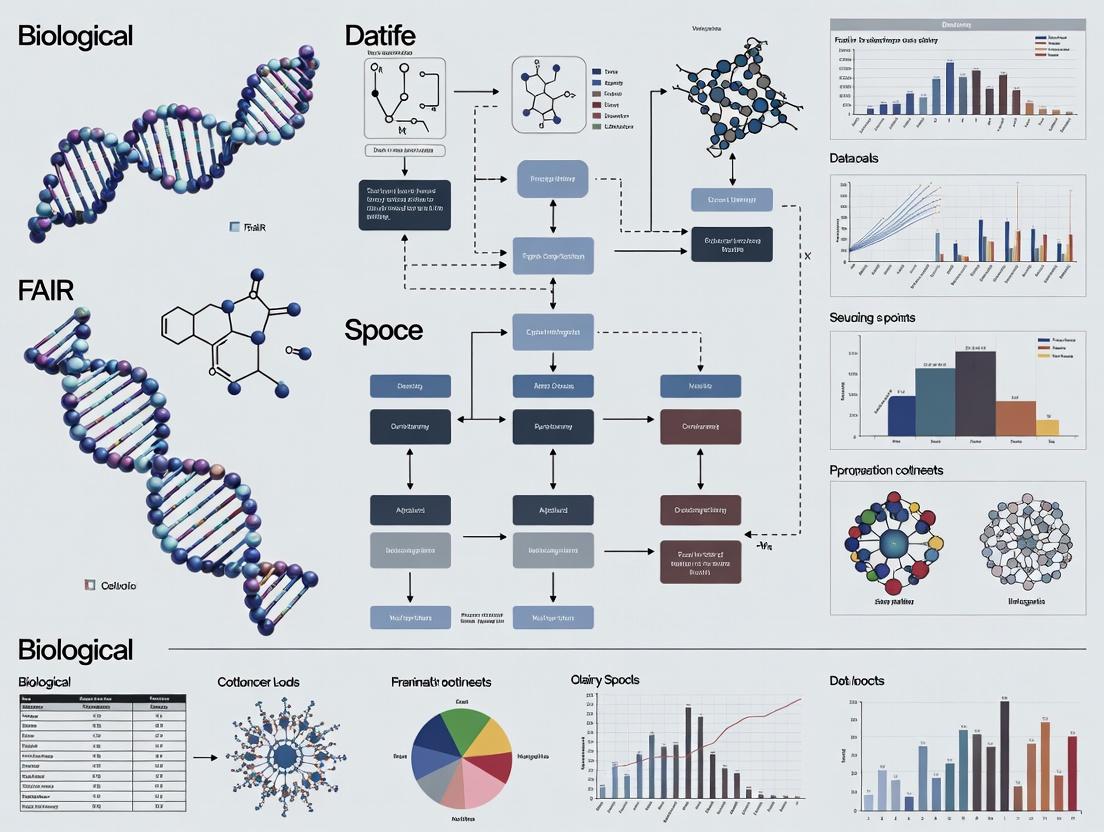

Diagram Title: Semantic Interoperability Workflow
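
To make the Linked Data step concrete, the sketch below describes a dataset as a Schema.org `Dataset` in JSON-LD and flattens it into subject-predicate-object triples. The dataset URL is a placeholder, and `to_triples` is a deliberately simplified expansion written for this example; a production pipeline would more likely use a full JSON-LD processor.

```python
import json

dataset = {
    "@context": "https://schema.org/",
    "@type": "Dataset",
    "@id": "https://example.org/dataset/42",  # placeholder URL
    "name": "EGFR expression in lung cell lines",
    "license": "https://creativecommons.org/licenses/by/4.0/",
    # Link to the Ensembl gene record for EGFR via identifiers.org.
    "about": {"@id": "https://identifiers.org/ensembl:ENSG00000146648"},
}


def to_triples(doc: dict) -> list[tuple[str, str, str]]:
    """Flatten a one-level JSON-LD document into (subject, predicate, object)."""
    subject = doc["@id"]
    vocabulary = doc["@context"]  # assumes the context is a single vocabulary URL
    triples = []
    for key, value in doc.items():
        if key.startswith("@"):
            continue  # JSON-LD keywords are not properties
        obj = value["@id"] if isinstance(value, dict) else value
        triples.append((subject, vocabulary + key, obj))
    return triples


print(json.dumps(dataset, indent=2))
for triple in to_triples(dataset):
    print(triple)
```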

Reusable

The ultimate goal is the optimal reuse of data. This requires comprehensive, accurate, and domain-relevant metadata providing clear context and license.

Core Requirements:

- Plurality of Relevant Attributes: Metadata is described with a plurality of precise and relevant attributes, defined by community standards.

- Clear Usage License: Data has a clear and accessible data usage license (e.g., CC0, CC BY 4.0).

- Detailed Provenance: Data is associated with detailed provenance (how it was generated, processed, and modified).

- Domain Community Standards: Data meets domain-relevant community standards (e.g., MINSEQE for sequencing, MIBBI guidelines).

Experimental Protocol for Ensuring Reusability:

- Adopt a Checklist: Use the FAIR Cookbook or RDMkit checklist relevant to your domain.

- Provenance Tracking: Use a workflow management system (e.g., Nextflow, Snakemake, Galaxy) that automatically captures and exports provenance in a standard format like PROV-O.

- License Attachment: Explicitly attach a machine-readable license (e.g., from Creative Commons or Open Data Commons) to both data and metadata.

- Readme File Creation: Create a comprehensive README file or a Data Descriptor document following templates like the "Dataset_README" from Cornell University.
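
A machine-readable license and a provenance statement can travel together in one small JSON-LD document. The sketch below uses terms from the W3C PROV-O namespace (`prov:Entity`, `prov:Activity`, `prov:used`, `prov:wasGeneratedBy`) and an SPDX license identifier. The function name, the `example:` identifiers, and the choice to map `license` to the Schema.org property are assumptions for illustration, not what any workflow system exports.

```python
import json

PROV = "http://www.w3.org/ns/prov#"


def provenance_record(output_id: str, input_ids: list[str], activity_id: str, license_id: str) -> dict:
    """Describe one processing step: which inputs an activity used and what it generated."""
    return {
        "@context": {"prov": PROV, "license": "https://schema.org/license"},
        "@graph": [
            {
                "@id": activity_id,
                "@type": "prov:Activity",
                "prov:used": [{"@id": input_id} for input_id in input_ids],
            },
            {
                "@id": output_id,
                "@type": "prov:Entity",
                "prov:wasGeneratedBy": {"@id": activity_id},
                "license": license_id,  # SPDX identifier, e.g. "CC-BY-4.0"
            },
        ],
    }


record = provenance_record(
    output_id="example:counts-matrix",    # hypothetical identifiers
    input_ids=["example:raw-reads"],
    activity_id="example:quantification-run-1",
    license_id="CC-BY-4.0",
)
print(json.dumps(record, indent=2))
```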

Quantitative Impact of FAIR Implementation

Table 1: Comparative Analysis of Data Reuse Efficiency

| Metric | Non-FAIR Aligned Data | FAIR-Aligned Data | Measurement Source / Study |

|---|---|---|---|

| Data Discovery Time | 50-80% of project time spent searching & validating | Reduced to <20% of project time | Data Science Journal (2023), Survey of 500 Bio-researchers |

| Inter-Study Integration Success Rate | ~30% success in automated integration attempts | >85% success in automated integration attempts | Nature Scientific Data (2022), Analysis of 100+ omics studies |

| Citation & Reuse Rate | 17% average reuse citation for generic repository data | 42% average reuse citation for certified FAIR repositories | PLOS ONE (2023), Meta-analysis of dataset citations |

| Reproducibility of Analysis | <25% of studies fully reproducible from deposited data | >70% reproducibility when linked to computational workflows | EMBO Reports (2024), Case study on cancer genomics pipelines |

Table 2: FAIR Maturity Levels & Key Indicators (Simplified Model)

| Maturity Level | Findability (PID) | Accessibility (Protocol) | Interoperability (Ontology) | Reusability (License & Provenance) |

|---|---|---|---|---|

| Initial (F0-A0-I0-R0) | None. Local filename. | Local file system only. | None. Free-text only. | None specified. |

| Managed (F1-A1-I1-R1) | Internal project ID. | Available on request via email. | Basic keywords/tags. | Readme file with contact. |

| Defined (F2-A2-I2-R2) | Public, non-persistent URL. | Direct download via HTTPS. | Some use of community keywords. | Basic license (e.g., "Free to use"). |

| Quantitatively Managed (F3-A3-I3-R3) | Repository-assigned PID (e.g., Accession). | Standard protocol, metadata always available. | Key metadata mapped to ontologies. | Clear license + human-readable provenance. |

| Optimizing (F4-A4-I4-R4) | Multiple PIDs for data subsets. | Standard protocol with authentication/authorization. | Rich, qualified references as Linked Data. | Machine-readable license + provenance (PROV-O). |
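
The simplified model in Table 2 lends itself to a compact scoring helper. The sketch below assumes each dimension is scored from 0 to 4 and summarizes a dataset by its weakest dimension; that "weakest link" rule is an assumption of this example, not part of the table.

```python
LEVEL_NAMES = ("Initial", "Managed", "Defined", "Quantitatively Managed", "Optimizing")


def maturity_summary(scores: dict[str, int]) -> tuple[str, str]:
    """Return the F-A-I-R code and the level name of the weakest dimension."""
    for dimension in "FAIR":
        level = scores[dimension]
        if not 0 <= level < len(LEVEL_NAMES):
            raise ValueError(f"{dimension} level must be between 0 and 4, got {level}")
    code = "-".join(f"{dimension}{scores[dimension]}" for dimension in "FAIR")
    weakest = min(scores[dimension] for dimension in "FAIR")
    return code, LEVEL_NAMES[weakest]


print(maturity_summary({"F": 3, "A": 2, "I": 1, "R": 3}))
```

Reporting the weakest dimension keeps attention on the bottleneck: a dataset with a repository PID but free-text metadata is still hard to integrate automatically.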

Case Study: Implementing FAIR in a Multi-Omics Drug Target Discovery Project

Thesis Context: This case exemplifies the core thesis that FAIR is a prerequisite for integrative analysis, enabling the connection of genomic variants to cellular phenotypes and compound interactions.

Experimental Protocol for FAIR Data Generation:

- Study Design: Use the ISA (Investigation-Study-Assay) framework to structure the experimental design metadata from the outset.

- Data Generation: Perform whole-genome sequencing (WGS) and RNA-seq on patient-derived cell lines (control vs. disease). Assay drug response via high-throughput screening (HTS).

- Metadata Curation:

  - Sample: Link to biospecimen ontology (BRENDA tissue, Cell Ontology).

  - Sequencing: Use MINSEQE standards, reference genome GRCh38.p13 with PID.

  - HTS: Use CRISP guidelines; annotate compounds with PubChem CIDs and ChEBI IDs.

- Data Deposition:

  - Sequence data → European Nucleotide Archive (ENA: ERPxxxxxx).

  - Processed transcriptomics → ArrayExpress (E-MTAB-xxxx).

  - HTS dose-response data & analysis → BioStudies (S-BSSTxxxx).

- Integration & Analysis: Use the PIDs and ontology terms to programmatically fetch and integrate the three datasets into a knowledge graph for target identification.
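
The integration step can be sketched as joining the three deposits on shared sample identifiers and emitting triples for a knowledge graph. Everything below is illustrative: the accessions are the elided placeholders from the protocol, and the sample IDs, the `ex:` predicates, and the annotation values are invented for the example.

```python
def build_graph(datasets):
    """datasets: list of (accession, {sample_id: {predicate: value}}) pairs."""
    triples = set()
    for accession, records in datasets:
        for sample_id, annotations in records.items():
            # Record which deposit each sample annotation came from.
            triples.add((sample_id, "ex:describedIn", accession))
            for predicate, value in annotations.items():
                triples.add((sample_id, predicate, value))
    return triples


def samples_in_all(datasets):
    """Sample IDs present in every dataset, i.e. usable for joint analysis."""
    sample_sets = [set(records) for _, records in datasets]
    return set.intersection(*sample_sets) if sample_sets else set()


datasets = [
    ("ERPxxxxxx", {"SAMPLE:001": {"ex:hasVariant": "variant-A"},
                   "SAMPLE:002": {"ex:hasVariant": "variant-B"}}),
    ("E-MTAB-xxxx", {"SAMPLE:001": {"ex:expressionProfile": "profile-1"}}),
    ("S-BSSTxxxx", {"SAMPLE:001": {"ex:drugResponse": "sensitive"}}),
]

print(samples_in_all(datasets))
print(len(build_graph(datasets)))
```

The join only works because all three deposits use the same sample identifiers, which is the practical payoff of assigning PIDs at the study design stage.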

Diagram Title: FAIR Multi-Omics Integration Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Tools & Resources for FAIR Implementation

| Category | Item / Solution | Function / Purpose |

|---|---|---|

| Metadata & Standards | ISA Tools Suite | Provides format and software to manage metadata from planning to public deposition using the ISA framework. |

| Metadata & Standards | FAIR Cookbook | A live, online resource with hands-on recipes to make and keep data FAIR. |

| Metadata & Standards | RDMkit | Research Data Management toolkit providing domain-specific guidance, including for life sciences. |

| Identifiers & Registries | DataCite | Provides persistent Digital Object Identifiers (DOIs) for research data and other research outputs. |

| Identifiers & Registries | identifiers.org | A central resolution service for life science identifiers, providing stable redirection. |

| Ontologies & Mapping | OLS (Ontology Lookup Service) | A repository for biomedical ontologies that facilitates browsing, visualization, and mapping. |

| Ontologies & Mapping | ZOOMA | A tool for mapping strings to ontology terms based on curated annotations from EBI databases. |

| Repositories | BioStudies | A generic repository for complex multi-omics and imaging datasets, linking related data. |

| Repositories | Zenodo | A general-purpose open repository supported by CERN and the EU, issuing DOIs. |

| Provenance & Workflow | Nextflow / Snakemake | Workflow management systems that ensure reproducibility and automatically capture provenance. |

| Provenance & Workflow | PROV-O | The W3C standard ontology for representing provenance information. |

| Evaluation | FAIR Data Maturity Model | A set of core assessment criteria for evaluating the FAIRness of a digital resource. |

| Evaluation | FAIR Evaluator | A web service that can run community-defined FAIR assessment tests against a digital resource. |

The FAIR principles represent a paradigm shift from data as a passive output to data as a primary, active, and reusable research asset. As argued in the overarching thesis, the integration of complex biological data for translational research and drug development is untenable without a systematic FAIR approach. This guide has detailed the technical specifications, protocols, and tooling required to operationalize each facet of FAIR. The quantitative evidence demonstrates tangible gains in efficiency, reproducibility, and reuse. Ultimately, moving from theory to practice requires embedding these protocols into the research lifecycle, supported by institutional policy, infrastructure investment, and a culture that values data stewardship as integral to the scientific endeavor.

The modern biomedical research enterprise is generating data at an unprecedented scale and complexity. However, the potential of this data deluge is being severely undercut by systemic issues in data management. This whitepaper, framed within the broader thesis on FAIR (Findable, Accessible, Interoperable, Reusable) principles for biological data integration, details the urgent need for systemic reform. The proliferation of data silos, the ongoing reproducibility crisis, and the resulting missed insights represent a critical impediment to scientific progress and therapeutic development.

The Scope of the Problem: Quantitative Evidence

Recent analyses quantify the scale of data fragmentation and reproducibility challenges.

Table 1: Quantifying Data Silos in Public Repositories

| Repository | Estimated % of Datasets with Incomplete Metadata | % Lacking Standardized Formats | Common Data Types Affected |

|---|---|---|---|

| Gene Expression Omnibus (GEO) | ~30-40% | ~25% | RNA-seq, Microarray |

| Sequence Read Archive (SRA) | ~20-30% | ~15% (missing adapters) | Genomic, Metagenomic |

| ProteomeXchange | ~25-35% | ~20% | Mass Spectrometry |

| Generalist (e.g., Figshare) | ~50-60% | ~40% | Mixed, Supplementary |

Table 2: Economic & Efficiency Costs of Non-FAIR Data

| Metric | Estimated Impact | Source/Calculation |

|---|---|---|

| Annual cost of irreproducible preclinical research | ~$28 Billion USD | Freedman et al., PLoS Biol (2015) extrapolation |

| Researcher time spent finding/formatting data | ~30-50% of analysis time | Recent researcher surveys |

| Duplication of data generation efforts | ~15-20% of grant budgets | NIH/Wellcome Trust estimates |

| Failed clinical trial rate (linked to preclinical data) | ~85% (oncology) | Hay et al., Nature Biotechnol (2014) update |

Core Experimental Protocol: A Case Study in Integrated Analysis

The following protocol illustrates a typical multi-omics integration study hampered by non-FAIR data, and how FAIR practices resolve it.

Protocol Title: Integrated Analysis of Transcriptomic and Proteomic Data for Biomarker Discovery in Non-Small Cell Lung Cancer (NSCLC).

Objective: To identify a unified protein-RNA signature predictive of response to PD-1 inhibitor therapy.

Pre-FAIR Scenario Challenges:

- Findability: Publicly deposited RNA-seq data (GSE123456) lacks crucial sample phenotype labels (e.g., "responder" vs "non-responder").

- Accessibility: Corresponding proteomics data sits on a university FTP server and can only be obtained by individual email request.

- Interoperability: Proteomics data is in a proprietary software output format (.raw); RNA-seq counts are in a non-standard matrix.

- Reusability: Manuscript methods section states "data normalized as previously described," with no code.

FAIR-Compliant Experimental Protocol:

Step 1: Data Acquisition with Persistent Identifiers.

- Retrieve RNA-seq data using its DOI from a FAIR-compliant repository (e.g., Zenodo or GEO with detailed metadata).

- Access proteomics data via its unique accession (PXDXXXXX) from ProteomeXchange.

- Link clinical metadata using a controlled vocabulary (e.g., CDISC standards) from a separate, linked repository.

Step 2: Standardized Preprocessing.

- RNA-seq: Execute quantification via salmon or kallisto using a referenced version of the transcriptome (GRCh38.p13, GENCODE v35). Record all parameters in a JSON or CWL workflow file.

- Proteomics: Process .raw files using MaxQuant (version 2.1.0.0) with the same reference proteome. Deposit the search parameters file (.xml) with the data.

- Code: Implement both pipelines in a containerized environment (Docker/Singularity). Share code via a public Git repository with an open license (e.g., MIT).
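
Recording parameters in JSON, as the preprocessing step asks, can be as simple as the sketch below. It stores the tool name, version, parameters, and a SHA-256 checksum of the input so a later reader can confirm which file was processed. The record layout and the parameter names are assumptions for illustration; they are not the format of any tool's own parameter file.

```python
import hashlib
import json


def run_record(tool: str, version: str, parameters: dict, input_bytes: bytes) -> dict:
    """Capture what was run, with which settings, on which exact input."""
    return {
        "tool": tool,
        "version": version,
        "parameters": parameters,
        "input_sha256": hashlib.sha256(input_bytes).hexdigest(),
    }


def to_json(record: dict) -> str:
    # sort_keys gives a stable layout, so records diff cleanly under version control.
    return json.dumps(record, indent=2, sort_keys=True)


record = run_record(
    tool="MaxQuant",
    version="2.1.0.0",
    parameters={"reference": "GRCh38.p13", "quantification": "LFQ"},  # illustrative keys
    input_bytes=b"stand-in for the contents of a .raw file",
)
print(to_json(record))
```

In practice the bytes would be read from the input file in chunks rather than held in memory; the in-memory form is used here only to keep the example self-contained.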

Step 3: Integrative Statistical Analysis.

- Load normalized RNA expression (TPM) and protein abundance (LFQ) matrices into R/Python.

- Use the MOFA2 R package for multi-omics factor analysis.

- Key Method: Apply canonical correlation analysis (CCA) to identify shared variance components between omics layers. Test for association with the clinical outcome variable (response status) using a linear mixed model.

- Reproducibility Step: Set a random seed at the start of the analysis script. Use renv (R) or poetry (Python) to capture exact package dependencies.

Step 4: Result Deposition.

- Deposit the final, tidy combined analysis matrix (features x samples) in a public repository.

- Publish the computational workflow on a platform like workflowhub.eu or Dockstore.

- Register the project with a resource identifier (RRID) in the Resource Identification Portal.

Diagram Title: FAIR Multi-omics Analysis Workflow

The Scientist's Toolkit: Key Reagent Solutions

Table 3: Essential Tools for FAIR Data Implementation

| Tool / Resource Category | Specific Example(s) | Function in FAIR Protocol |

|---|---|---|

| Persistent Identifiers | DOI, RRID, Accession Numbers (PXD, GSE) | Ensures permanent findability and citability of datasets, antibodies, cell lines. |

| Metadata Standards | MIAME, MIAPE, CDISC, ISA-Tab | Provides structured, machine-readable context for data, enabling interoperability. |

| Controlled Vocabularies/Ontologies | EDAM, OBI, GO, SNOMED CT | Uses standard terms for concepts (e.g., 'heart'), making data searchable and linkable. |

| Containerization | Docker, Singularity | Packages software, dependencies, and environment to guarantee reproducible execution. |

| Workflow Management | Nextflow, Snakemake, CWL | Defines, executes, and shares multi-step computational pipelines. |

| Data Repositories | Zenodo, Figshare, GEO, ProteomeXchange | Provides curated, long-term storage with metadata requirements and access controls. |

| Code Repositories | GitHub, GitLab, Bitbucket (with DOI via Zenodo) | Enables version control, collaboration, and sharing of analysis scripts. |

Diagram Title: Cycle of Non-FAIR Data Consequences

A Pathway to Resolution: Implementing FAIR

The transition to FAIR requires a cultural and technical shift. Key actions include:

- Mandating FAIR Data Management Plans in grant applications.

- Investing in data curation and biocurator roles as essential research staff.

- Adopting interoperable, open-source tools and platforms that embed FAIR principles by design.

- Recognizing data sharing and software production as valuable research outputs in tenure and promotion reviews.

The urgency for FAIR is not merely technical; it is foundational to the integrity, pace, and societal return on investment of biomedical research. By dismantling silos, restoring reproducibility, and enabling data fusion, we can unlock the transformative insights currently hidden within disconnected datasets, accelerating the path from discovery to cure.

The FAIR principles (Findable, Accessible, Interoperable, Reusable) were established to guide data stewardship toward computational use. Within biological data integration research, the original thesis positioned FAIR as a catalyst for human-driven discovery. However, the rapid ascent of artificial intelligence and machine learning necessitates an evolution of this thesis: FAIR must be re-contextualized as a foundational framework for machine-actionability and AI readiness. This whitepaper provides a technical guide for transforming FAIR from a compliance checklist into an engineered infrastructure that enables autonomous agents and advanced AI models to find, interpret, and reason over complex biological data at scale.

Deconstructing Machine-Actionability Across the FAIR Spectrum

True machine-actionability requires each FAIR principle to be implemented with precision, leveraging specific technologies and standards.

Table 1: Technical Specifications for Machine-Actionable FAIR

| FAIR Principle | Human-Centric Implementation | Machine-Actionable & AI-Ready Implementation | Key Enabling Standards/Technologies |

|---|---|---|---|

| Findable | Data has a human-readable title and a persistent identifier (PID). | PIDs are resolvable via APIs returning structured metadata (e.g., JSON-LD). Rich metadata is indexed in knowledge graphs using ontologies. | DOI, ARK, compact identifiers; Schema.org, Bioschemas; Elasticsearch, SPARQL endpoints. |

| Accessible | Data is downloadable via a standard web link, possibly with login. | Data is retrievable via standardized, anonymous APIs (e.g., REST, GraphQL). Authentication uses machine-friendly protocols (OAuth, API keys). Metadata is always available. | HTTPS, RESTful APIs, GA4GH DRS (Data Repository Service); OAuth 2.0. |

| Interoperable | Data formats are common (e.g., CSV, PDF). Metadata uses free-text descriptions. | Data uses open, structured, and semantically defined formats. Metadata uses formal, shared vocabularies/ontologies with explicit URIs. | JSON, XML, RDF; OWL, RDFS; EDAM, OBO Foundry ontologies, UMLS. |

| Reusable | Data has a human-readable license and basic provenance. | License is expressed in machine-readable form (e.g., SPDX). Provenance follows a formal model (e.g., W3C PROV-O). Domain-relevant community standards are used. | SPDX license identifiers, W3C PROV-O, MIAME, CIMC. |

Experimental Protocols for Validating AI Readiness

To assess and implement AI-ready FAIR data, specific experimental and validation protocols are required.

Protocol 3.1: Automated Metadata Completeness and Ontology Coverage Audit

Objective: Quantify the richness and semantic interoperability of dataset metadata for AI consumption.

- Metadata Harvesting: Use a script to call the dataset's PID resolution API or OAI-PMH endpoint to collect all available metadata.

- Completeness Check: Validate against a target metadata schema (e.g., Bioschemas Dataset profile). Report the percentage of mandatory/recommended properties present.

- Ontology Term Extraction: Parse the metadata for terms linked to known ontology URIs (e.g., from EDAM, SBO, NCIT).

- Coverage Metric Calculation:

  - Vocabulary Saturation: (Number of properties using ontology terms) / (Total number of properties) * 100%.

  - Graph Connectivity: Map extracted ontology terms to a knowledge base (e.g., EMBL-EBI's OLS) to determine if they form a connected subgraph, indicating semantic coherence.
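
Both coverage metrics can be computed with the standard library. The sketch below treats a property as "using an ontology term" when its value starts with a known ontology URI prefix, and tests connectivity with a breadth-first search over term-to-term edges. The prefix list and the edges are assumptions: in a real audit the edges would be looked up in a knowledge base such as OLS rather than supplied by hand.

```python
from collections import deque

ONTOLOGY_PREFIXES = ("http://purl.obolibrary.org/obo/", "http://edamontology.org/")


def vocabulary_saturation(metadata: dict) -> float:
    """Percentage of properties whose value is an ontology term URI."""
    if not metadata:
        return 0.0
    linked = sum(
        1 for value in metadata.values()
        if isinstance(value, str) and value.startswith(ONTOLOGY_PREFIXES)
    )
    return 100.0 * linked / len(metadata)


def is_connected(terms, edges) -> bool:
    """True if the terms form a single connected subgraph under the given edges."""
    terms = set(terms)
    if len(terms) <= 1:
        return True
    adjacency = {term: set() for term in terms}
    for a, b in edges:
        if a in terms and b in terms:
            adjacency[a].add(b)
            adjacency[b].add(a)
    start = next(iter(terms))
    seen, queue = {start}, deque([start])
    while queue:
        for neighbour in adjacency[queue.popleft()] - seen:
            seen.add(neighbour)
            queue.append(neighbour)
    return seen == terms


metadata = {
    "organism": "http://purl.obolibrary.org/obo/NCBITaxon_9606",
    "tissue": "http://purl.obolibrary.org/obo/UBERON_0002048",
    "title": "Lung RNA-seq",
    "contact": "lab@example.org",
}
print(vocabulary_saturation(metadata))
```

Here two of four properties carry ontology URIs, so the saturation is 50%.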

Protocol 3.2: Machine Agent Retrieval and Integration Test

Objective: Evaluate the end-to-end machine-actionability of a data resource.

- Agent Definition: Configure a simple autonomous agent (e.g., a Python script using the requests and rdflib libraries) with a query: "Find all datasets related to Homo sapiens CRISPR screening for gene EGFR in lung cancer cell lines."

- Discovery Phase: Agent queries a public data index (e.g., a BioCatalogue for APIs, Google Dataset Search) using structured keywords and ontology terms (e.g., organism: "Homo sapiens", technique: "CRISPR screen", target: "EGFR", cell line: "A549").

- Retrieval & Parsing: Agent accesses the identified dataset via its standardized API (e.g., GA4GH DRS), retrieves metadata in JSON-LD, and parses the license and provenance information automatically.

- Integration Simulation: Agent "integrates" the dataset's metadata with a mock local knowledge graph by aligning its ontology terms with the local graph's schema. Success is measured by the agent's ability to complete the process without human intervention and correctly assert the dataset's properties into the graph.
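
The parsing and integration phases of this test can be prototyped offline before any network calls are added. In the sketch below a hard-coded JSON-LD string stands in for the API response, and a hand-written alignment table maps Schema.org property names to predicates in a mock local graph. The `local:` predicates, the dataset URLs, and the success rule (no unmapped properties) are assumptions made for this example.

```python
import json

# Hypothetical alignment between incoming property names and the local schema.
TERM_ALIGNMENT = {
    "name": "local:title",
    "license": "local:hasLicense",
    "isBasedOn": "local:derivedFrom",
}

# Stand-in for metadata an agent would retrieve from a repository API.
MOCK_RESPONSE = json.dumps({
    "@context": "https://schema.org/",
    "@type": "Dataset",
    "@id": "https://example.org/dataset/crispr-egfr",
    "name": "CRISPR screen targeting EGFR in A549 cells",
    "license": "https://creativecommons.org/licenses/by/4.0/",
    "isBasedOn": "https://example.org/dataset/raw-screen",
})


def integrate(metadata_json: str, graph: set) -> list[str]:
    """Assert aligned properties into the graph; return the properties left unmapped."""
    document = json.loads(metadata_json)
    subject = document["@id"]
    unmapped = []
    for key, value in document.items():
        if key.startswith("@"):
            continue  # JSON-LD keywords, not dataset properties
        predicate = TERM_ALIGNMENT.get(key)
        if predicate is None:
            unmapped.append(key)
        else:
            graph.add((subject, predicate, value))  # values assumed to be plain strings
    return unmapped


local_graph = set()
leftovers = integrate(MOCK_RESPONSE, local_graph)
print("success" if not leftovers else f"needs human review: {leftovers}")
```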

Diagram Title: Machine Agent Workflow for FAIR Data Retrieval and Integration

Signaling Pathways as FAIR, Computable Knowledge

A critical application is representing biological pathways—canonical sources of drug target insight—as AI-ready knowledge.

Table 2: Comparison of Pathway Representation Formats for AI Readiness

| Format | Human Readability | Machine-Actionability | Semantic Richness | Query & Reasoning Support |

|---|---|---|---|---|

| PDF/Image | High | None | None | No |

| Simple List (CSV) | Medium | Low (structured) | Low | Basic Filtering |

| Biological Pathway Exchange (BioPAX) | Medium (via viewers) | High | High (standard ontology) | Yes (via pathway databases) |

| Systems Biology Markup Language (SBML) | Low | High (simulation-ready) | Medium | Yes (constrained to models) |

| Knowledge Graph (RDF/OWL) | Low (requires tools) | Very High | Very High (any ontology) | Yes (powerful SPARQL, inference) |

Implementing a pathway as a FAIR knowledge graph involves:

- Entity Identification: Each protein, complex, and small molecule is assigned a URI from authoritative sources (e.g., UniProt, ChEBI).

- Relationship Assertion: Interactions (phosphorylates, inhibits) are defined using predicates from ontologies like SIO or RO, creating subject-predicate-object triples.

- Contextual Annotation: Cellular compartment (GO), tissue (UBERON), and disease (MONDO) terms are linked to relevant entities.

Diagram Title: FAIR Knowledge Graph Representation of a Signaling Pathway Fragment
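
The three implementation steps map directly onto a set of triples. The sketch below encodes a two-protein fragment using plain tuples: entities take identifiers.org and OBO PURL forms, while the predicates live in a placeholder `example.org` namespace because the exact RO or SIO terms should be chosen and verified against those ontologies. The accessions shown (UniProt P00533 for EGFR, P62993 for GRB2, GO:0005886 for plasma membrane, UBERON:0002048 for lung) should likewise be checked against the source databases before reuse.

```python
UNIPROT = "https://identifiers.org/uniprot:"
OBO = "http://purl.obolibrary.org/obo/"
EX = "https://example.org/predicate/"  # placeholder; substitute verified RO/SIO terms

EGFR = UNIPROT + "P00533"
GRB2 = UNIPROT + "P62993"

pathway = {
    # Relationship assertion
    (EGFR, EX + "interactsWith", GRB2),
    # Contextual annotation
    (EGFR, EX + "locatedIn", OBO + "GO_0005886"),
    (EGFR, EX + "expressedIn", OBO + "UBERON_0002048"),
}


def objects(graph, subject, predicate):
    """All objects linked to a subject by a predicate, in sorted order."""
    return sorted(o for s, p, o in graph if s == subject and p == predicate)


print(objects(pathway, EGFR, EX + "interactsWith"))
```

Tuples in a set are enough to show the data model; a triple store or an RDF library adds SPARQL querying and inference on top of the same structure.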

The Scientist's Toolkit: Research Reagent Solutions for FAIRification

Implementing AI-ready FAIR data requires a suite of tools and resources.

Table 3: Essential Toolkit for Creating & Validating AI-Ready FAIR Data

| Tool/Resource Category | Specific Tool/Service | Function in FAIRification Process |

|---|---|---|

| Metadata Schema & Ontology | Bioschemas, ISA framework, OBO Foundry ontologies | Provides templates and standardized vocabularies for annotating data with machine-understandable semantics. |

| PID & Metadata Registry | DataCite, ePIC, bio.tools, Fairsharing.org | Generates persistent identifiers and registers datasets/tools with rich, searchable metadata. |

| Data Repository (FAIR-native) | Zenodo, Figshare, EBRAINS, SPARC Data Portal | Hosting platforms that natively implement FAIR principles, including standardized APIs and metadata support. |

| FAIR Assessment Tool | FAIR Evaluator, F-UJI, FAIR-Checker | Automated services that score the FAIRness of a digital object by testing its metadata and accessibility. |

| Knowledge Graph Construction | Protégé, RDFLib (Python), Biolink Model | Software for building, managing, and querying semantic knowledge graphs from biological data. |

| Workflow & Provenance | Common Workflow Language (CWL), W3C PROV-O, Nextflow | Captures the precise computational methods and data lineage in a machine-executable and interpretable format. |

| Standardized API | GA4GH DRS & TRS APIs, BRAPI (Plant breeding) | Provides uniform, programmatic interfaces for retrieving data (DRS) and analysis tools/workflows (TRS). |

The evolution of the FAIR principles from a guide for human-centric data integration to a framework for machine-actionability represents a paradigm shift. For researchers and drug development professionals, this transition is not merely technical but strategic. By engineering biological data resources to be AI-ready—through rigorous ontology use, standardized APIs, and computable knowledge representations—we lay the groundwork for the next generation of discovery: where AI agents can autonomously generate hypotheses, identify novel targets, and integrate across previously siloed domains. The future of biological research hinges not just on data being FAIR, but on it being FAIR for Machines.

Within the broader thesis on FAIR (Findable, Accessible, Interoperable, and Reusable) principles for biological data integration, two pivotal actors, GO-FAIR and ELIXIR, have emerged as foundational forces. Their initiatives, coupled with a rapidly evolving regulatory environment, are shaping the infrastructure and governance of global life science data. This technical guide examines their core architectures, synergistic roles, and the experimental protocols that underpin FAIR data implementation in drug development and biomedical research.

Core Actors: Architectural and Operational Analysis

GO-FAIR Initiative

GO-FAIR is a bottom-up, stakeholder-driven movement that facilitates the implementation of the FAIR principles. It operates through a decentralized network of Implementation Networks (INs).

Key Structural Components:

- FAIR Principles: The non-negotiable framework.

- Implementation Networks (INs): Thematic or disciplinary communities co-creating FAIR solutions.

- GO FAIR Foundation: Provides coordination and support.

- FAIR Digital Objects (FDOs): A core technical concept where data, metadata, and identifiers are encapsulated.

Experimental Protocol: Establishing a FAIR Implementation Network

- Community Mobilization: Identify a disciplinary community with a shared data challenge.

- Statement of Intent: Draft and sign a Memorandum of Understanding outlining the IN's goals.

- FAIRification Plan: Map current data flows and define target FAIR metrics.

- Tool & Standard Selection: Choose persistent identifiers (PIDs, e.g., DOIs), semantic artifacts (ontologies), and repositories.

- Pilot Execution: Apply the plan to a representative dataset; measure FAIRness increase.

- Documentation & Scaling: Publish workflows and encourage broader adoption within the discipline.

ELIXIR Infrastructure

ELIXIR is an intergovernmental organization that builds and coordinates a sustainable European infrastructure for biological data. It provides actual platforms, tools, and standards.

Key Structural Components:

- Nodes: National centers of excellence (e.g., EMBL-EBI, SIB, CSC).

- Platforms: Technical domains: Data, Tools, Interoperability, Compute, and Training.

- Communities: Focused on specific life science domains (e.g., Human Data, Marine Metagenomics).

- Core Data Resources: Financially supported fundamental biomolecular databases.

Experimental Protocol: Deploying a Tool via ELIXIR Tools Platform

- Containerization: Package the analysis tool using Docker or Singularity.

- Metadata Registration: Describe the tool in the ELIXIR Tool Registry (bio.tools) using the EDAM ontology.

- Workflow Integration: Optionally package as a CWL or Nextflow workflow for the ELIXIR Workflow Hub.

- GA4GH Standard Adoption: Implement standards like TRS for tool execution or DRS APIs for data access.

- Deployment to Cloud: Utilize the ELIXIR Cloud (EGA, TESK) for scalable execution.

- Training Material: Deposit tutorials in the ELIXIR Training Platform (TeSS).

Quantitative Comparison of Core Functions

Table 1: Comparative Analysis of GO-FAIR and ELIXIR

| Feature | GO-FAIR | ELIXIR |

|---|---|---|

| Primary Role | Advocacy, coordination, and methodology for FAIR implementation. | Operation and integration of a sustained data infrastructure. |

| Governance Model | Distributed, community-driven (via Implementation Networks). | Centralized coordination of decentralized national nodes. |

| Key Output | FAIRification frameworks, guides, and community standards. | Core Data Resources, registries (bio.tools, TeSS), platforms (EGA), and production services. |

| Technical Focus | Conceptual framework, FAIR Digital Objects, semantic interoperability. | Practical deployment, compute orchestration, tool interoperability, and long-term data preservation. |

| Funding Model | Project-based funding, membership fees for the Foundation. | National node contributions, EU project funding (e.g., H2020, Horizon Europe), and institutional support. |

The Evolving Regulatory Landscape

Regulatory bodies are increasingly recognizing FAIR data as a catalyst for innovation and transparency. Key drivers include:

- In Vitro Diagnostic Regulation (IVDR) / Medical Device Regulation (MDR): Demands rigorous clinical evidence, bolstering the need for FAIR clinical and performance data.

- European Health Data Space (EHDS): Aims to enable secondary use of health data for research, requiring FAIR-aligned interoperability and governance.

- FDA Modernization Act 2.0 & ICH M11: Encourage computer models and structured data, aligning with FAIR principles for regulatory submission.

Experimental Protocol: Preparing a Regulatory Submission with FAIR-Aligned Data

- Data Curation: Annotate all datasets (clinical, omics, safety) using controlled vocabularies (e.g., SNOMED CT, EDAM).

- Identifier Assignment: Assign globally unique, persistent identifiers (PIDs) to key entities (samples, protocols, analysts).

- Metadata Specification: Create machine-readable metadata following a structured schema (e.g., ISA model, CEDAR templates).

- Repository Deposition: Deposit raw and processed data in a FAIR-aligned, recognized repository (e.g., EGA for human data, BioStudies for project data).

- Submission Dossier Linkage: In the eCTD dossier, explicitly link to the deposited datasets using their PIDs and accession numbers.

- Computable Analysis: Where possible, provide the analysis workflow (e.g., Nextflow/Snakemake script) in a public registry like WorkflowHub.

Visualization of Relationships and Workflows

Diagram 1: FAIR Ecosystem Actors and Interactions

Diagram 2: FAIRification Protocol Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Tools for FAIR Data Implementation

| Item | Function in FAIR Data Pipeline | Example/Provider |

|---|---|---|

| Persistent Identifiers (PIDs) | Globally unique and persistent labels for datasets, samples, or researchers, ensuring findability and reliable citation. | DOI (DataCite), Handle, RRID for antibodies, ORCID for researchers. |

| Metadata Standards & Templates | Structured schemas to capture machine-readable metadata, enabling interoperability and reuse. | ISA model, CEDAR templates, MIAME (microarrays), MINSEQE (sequencing). |

| Semantic Artefacts (Ontologies) | Controlled vocabularies and relationships that define terms, enabling data integration and machine-actionability. | EDAM (operations), OBI (investigations), CHEBI (chemicals), SNOMED CT (clinical terms). |

| Containerization Platforms | Packages software and its dependencies into standardized units for reproducible execution across compute environments. | Docker, Singularity, Podman. |

| Workflow Languages | Scripts that define, execute, and share complex data analysis pipelines in a portable and reproducible manner. | Common Workflow Language (CWL), Nextflow, Snakemake. |

| FAIR Repositories | Data archives that comply with FAIR principles by providing PIDs, rich metadata, and standardized access protocols. | European Genome-phenome Archive (EGA), BioStudies, Zenodo, ArrayExpress. |

| Tool/Workflow Registries | Curated catalogs describing bioinformatics tools and workflows with standardized metadata, enhancing findability and reuse. | ELIXIR's bio.tools, WorkflowHub. |

| Data Access APIs | Standardized programmatic interfaces for querying and retrieving data, enabling automated and interoperable access. | GA4GH DRS & TES APIs, EGA's Beacon API. |

This whitepaper delineates the tangible benefits derived from implementing FAIR (Findable, Accessible, Interoperable, Reusable) principles for biological data integration. Within the modern research ecosystem, FAIRification is not merely a conceptual framework but a critical enabler for accelerating drug discovery pipelines, facilitating robust multi-omics studies, and powering sophisticated computational analyses. The systematic application of these principles ensures that data generated from disparate sources—genomic, transcriptomic, proteomic, and metabolomic—can be seamlessly integrated, queried, and reused, thereby transforming raw data into actionable biological insight.

FAIR Data Integration: Core to Modern Discovery

The FAIR principles provide a scaffold for data management that maximizes its utility for both human and machine-driven discovery. In the context of drug discovery and multi-omics, this translates to specific technical implementations.

Key FAIR Implementation Pillars:

- Findable: Use of globally unique and persistent identifiers (PIDs), such as digital object identifiers (DOIs), for datasets, together with rich metadata registered in searchable resources.

- Accessible: Data is retrievable by their identifier using a standardized, open, and free communication protocol, with metadata remaining accessible even if the data is not.

- Interoperable: Use of formal, accessible, shared, and broadly applicable knowledge representation languages and vocabularies (e.g., ontologies like GO, CHEBI, MONDO).

- Reusable: Data are described with a plurality of accurate and relevant attributes, clear usage licenses, and detailed provenance.

Accelerating Drug Discovery

FAIR data integration directly shortens preclinical development timelines by enabling predictive in silico modeling and reducing costly experimental repetition.

Table 1: Impact of FAIR Data on Drug Discovery Metrics

| Metric | Pre-FAIR (Traditional) | Post-FAIR Implementation | Quantitative Benefit |

|---|---|---|---|

| Target Identification Time | 12-18 months | 6-9 months | ~50% reduction |

| Lead Compound Screening Cycle | 4-6 weeks per iterative cycle | 1-2 weeks via integrated virtual screening | 70-80% faster iteration |

| Preclinical Attrition Rate | ~90% failure rate from target to IND | Potential reduction to ~80% with better models | ~10% absolute risk reduction |

| Data Re-use Efficiency | <20% of historical data is readily reusable | >70% of data is FAIR and machine-actionable | 3.5x increase in asset utilization |

Experimental Protocol: Integrated In Silico Target Validation

This protocol leverages FAIR-integrated data to prioritize and validate novel therapeutic targets.

- Data Assembly: Query federated databases (e.g., EBI RDF, IDG Knowledge Graph) using SPARQL to retrieve FAIR data on gene-disease associations (from DisGeNET), protein structures (from PDBe), known ligands (from ChEMBL), and expression profiles (from GTEx).

- Target Prioritization: Apply a machine learning classifier (e.g., Random Forest or GNN) trained on known successful/failed target attributes. Features include druggability scores, genetic constraint metrics, pathway essentiality, and safety profiles (from FAIR safety pharmacology data).

- Computational Validation:

- Perform molecular docking of the target's predicted structure against virtual libraries of drug-like compounds (ZINC20).

- Run systems biology simulations (using COPASI or Tellurium) to model target perturbation within a FAIR-curated pathway model (from Reactome).

- Output: A ranked list of targets with associated confidence scores, predicted binding compounds, and simulated phenotypic impacts.
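The prioritization and output steps above can be illustrated with a deliberately simplified sketch. It swaps the trained classifier the protocol describes for a fixed weighted sum, purely to show how a ranked list with scores might be produced. The feature names, weights, and gene labels are hypothetical and not taken from any real model or dataset.

```python
from dataclasses import dataclass

# Hypothetical feature weights; a real pipeline would learn these from
# labelled successful/failed targets (e.g., with a Random Forest).
WEIGHTS = {
    "druggability": 0.4,
    "genetic_constraint": 0.3,
    "pathway_essentiality": 0.2,
    "safety": 0.1,
}


@dataclass
class Target:
    gene_id: str    # placeholder label, e.g., an Ensembl gene ID in practice
    features: dict  # feature name -> score scaled to [0, 1]


def score(target: Target) -> float:
    """Weighted sum of features; a missing feature counts as 0."""
    return sum(w * target.features.get(name, 0.0) for name, w in WEIGHTS.items())


def rank_targets(targets):
    """Return (gene_id, score) pairs sorted from highest to lowest score."""
    scored = [(t.gene_id, round(score(t), 3)) for t in targets]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    candidates = [
        Target("GENE_A", {"druggability": 0.9, "genetic_constraint": 0.5,
                          "pathway_essentiality": 0.7, "safety": 0.8}),
        Target("GENE_B", {"druggability": 0.4, "genetic_constraint": 0.9,
                          "pathway_essentiality": 0.3, "safety": 0.6}),
    ]
    for gene_id, value in rank_targets(candidates):
        print(gene_id, value)
```

A transparent weighted score is easy to audit, but it cannot capture feature interactions; that is one reason the protocol proposes a learned classifier instead.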

Title: FAIR Data Workflow for In Silico Target Validation

Enabling Multi-Omics Studies

FAIR principles are foundational for integrative multi-omics, allowing researchers to superimpose data layers to derive a systems-level understanding.

Table 2: Multi-Omics Integration Enabled by FAIR Data Standards

| Data Layer | Key FAIR Resource | Standard Identifier | Primary Integration Utility |

|---|---|---|---|

| Genomics | ENA, dbSNP, gnomAD | ENSEMBL ID, rsID | Variant calling, population frequency |

| Transcriptomics | GEO, ArrayExpress, ENCODE | ENSEMBL Gene ID, SRA ID | Differential expression, splicing events |

| Proteomics | PRIDE, PeptideAtlas | UniProtKB ID | Protein abundance, post-translational modifications |

| Metabolomics | MetaboLights, HMDB | InChIKey, CHEBI ID | Metabolic pathway mapping, flux analysis |

| Epigenomics | ICGC, Roadmap Epigenomics | GEO Accession, UCSC loci | Methylation patterns, chromatin state |

Experimental Protocol: Cross-Omic Pathway Perturbation Analysis

A detailed protocol for analyzing the impact of a genetic variant across molecular layers.

- Sample Preparation: Process matched samples (e.g., control vs. treated, disease vs. healthy) for WGS, RNA-seq, and LC-MS/MS proteomics using standardized SOPs. Assign a unique Sample ID linked to all data outputs.

- FAIR Data Generation:

- Genomics: Call variants (GATK best practices). Annotate using ENSEMBL VEP. Store raw FASTQ in ENA (ERP ID) and variants in dbSNP (submitter SNP IDs).

- Transcriptomics: Align RNA-seq reads (STAR). Quantify gene expression (Salmon). Deposit in GEO (GSE ID).

- Proteomics: Process spectra (MaxQuant). Identify proteins using UniProtKB reference proteome. Deposit in PRIDE (PXD ID).

- Data Integration:

- Map all data to common identifiers: Genomic coordinates (for variants), ENSEMBL Gene ID (for RNA), UniProtKB ID (for protein).

- Use a resource like OmicsDI or a custom R/Python pipeline to join tables based on these IDs and associated ontology terms (e.g., GO biological process).

- Analysis: Perform causality inference using tools like MEMo or PARADIGM. Visualize concordance/discordance across omics layers for genes in a perturbed pathway (e.g., MAPK signaling).
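The identifier-based join in the Data Integration step might look roughly like the following. This is a minimal sketch that uses plain dictionaries rather than OmicsDI or a full R/Python pipeline. All identifiers and values are placeholders, and the gene-to-protein mapping is assumed to have been obtained beforehand (for example from a UniProt ID mapping export).

```python
def join_omics(variants, expression, proteins, gene_to_uniprot):
    """Join per-layer results on shared identifiers.

    variants:        list of dicts with keys 'variant' and 'gene_id'
    expression:      dict gene_id -> RNA log2 fold change
    proteins:        dict uniprot_id -> protein log2 fold change
    gene_to_uniprot: dict gene_id -> uniprot_id (assumed precomputed)
    """
    rows = []
    for v in variants:
        gene = v["gene_id"]
        uniprot = gene_to_uniprot.get(gene)
        rows.append({
            "variant": v["variant"],
            "gene_id": gene,
            "uniprot_id": uniprot,
            "rna_log2fc": expression.get(gene),
            "protein_log2fc": proteins.get(uniprot) if uniprot else None,
        })
    return rows


def concordant(row):
    """True if RNA and protein change in the same direction, None if a layer is missing."""
    rna, prot = row["rna_log2fc"], row["protein_log2fc"]
    if rna is None or prot is None:
        return None
    return (rna > 0) == (prot > 0)


if __name__ == "__main__":
    # Placeholder identifiers, not real accessions.
    table = join_omics(
        variants=[{"variant": "var1", "gene_id": "GENE1"},
                  {"variant": "var2", "gene_id": "GENE2"}],
        expression={"GENE1": 1.8, "GENE2": -0.6},
        proteins={"PROT1": 1.1},
        gene_to_uniprot={"GENE1": "PROT1"},
    )
    for row in table:
        print(row["variant"], row["gene_id"], concordant(row))
```

Keeping missing layers as `None` instead of dropping rows makes gaps in coverage visible, which matters when judging concordance across omics layers.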

Title: Multi-Omic FAIR Data Integration Workflow

Powering Computational Analysis

FAIR data is inherently computable, serving as high-quality fuel for artificial intelligence and large-scale simulation.

Table 3: Computational Models Powered by FAIR Data

| Model Type | Example Use Case | FAIR Data Requirement | Performance Gain with FAIR Data |

|---|---|---|---|

| Graph Neural Networks (GNN) | Drug-target interaction prediction | Knowledge graphs with ontology-based relationships | 15-25% higher AUC compared to non-integrated data |

| Generative AI | De novo molecule design | Standardized chemical representations (SMILES, InChI) with bioactivity annotations | 2-3x increase in synthesizable, bioactive candidates |

| Mechanistic Simulation | Whole-cell model | Parameterized reaction data with consistent units and identifiers | Model accuracy improved by >30% |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents & Materials for Featured Experiments

| Item | Function | Example Product/Catalog # |

|---|---|---|

| Poly(A) mRNA Magnetic Beads | Isolation of polyadenylated RNA for RNA-seq library prep. | NEBNext Poly(A) mRNA Magnetic Isolation Module (E7490) |

| Trypsin/Lys-C Mix, MS Grade | High-specificity enzymatic digestion of proteins for LC-MS/MS analysis. | Promega Trypsin/Lys-C Mix, Mass Spec Grade (V5073) |

| Streptavidin-Coated Magnetic Beads | Pull-down of biotinylated molecules in target validation assays. | Dynabeads MyOne Streptavidin C1 (65001) |

| Single-Cell 3' Gel Bead Kit | Partitioning and barcoding for single-cell RNA-seq. | 10x Genomics Chromium Next GEM Chip J (1000127) |

| TMTpro 16plex Label Reagent Set | Multiplexed isobaric labeling for quantitative proteomics. | Thermo Scientific TMTpro 16plex Label Reagent Set (A44520) |

| Protein A/G Magnetic Beads | Immunoprecipitation of antibody-antigen complexes for interactome studies. | Protein A/G Magnetic Beads (B23202) |

| DNase I, RNase-free | Removal of genomic DNA contamination from RNA preps. | DNase I, RNase-free (EN0521) |

| PhosSTOP Phosphatase Inhibitor Cocktail | Preservation of protein phosphorylation states in lysates. | PhosSTOP (4906845001) |

Implementing FAIR: A Step-by-Step Methodology for Integrating Biological Data

The imperative for reproducible and integrative biological research has crystallized around the FAIR principles—Findable, Accessible, Interoperable, and Reusable. This guide addresses the foundational first pillar: Findability. In biological data integration research, a dataset's utility is zero if it cannot be discovered. Findability is engineered through the synergistic application of Persistent Identifiers (PIDs), rich, structured metadata, and indexed discovery portals. This step is the critical gateway upon which all subsequent data integration and drug development workflows depend.

Persistent Identifiers (PIDs): The Digital DNA of Data

A Persistent Identifier (PID) is a long-lasting reference to a digital resource—a dataset, sample, publication, or researcher. It resolves to a current location and metadata, even if the underlying data moves.
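To make "resolves to a current location" concrete, the sketch below turns a few PID types into resolver URLs. It assumes the public resolvers doi.org, hdl.handle.net, and n2t.net, and the `doi:`/`hdl:` prefixes are an input convention chosen for this example. The function only builds the URL; it does not perform any network request.

```python
def pid_to_url(pid: str) -> str:
    """Map a DOI, Handle, or ARK string to a resolver URL (no network access)."""
    pid = pid.strip()
    lower = pid.lower()
    if lower.startswith("doi:"):
        return "https://doi.org/" + pid[4:]
    if lower.startswith("10.") and "/" in pid:
        # Bare DOI such as 10.5281/zenodo.1234567
        return "https://doi.org/" + pid
    if lower.startswith("ark:"):
        return "https://n2t.net/" + pid
    if lower.startswith("hdl:"):
        return "https://hdl.handle.net/" + pid[4:]
    raise ValueError(f"Unrecognized PID scheme: {pid!r}")


if __name__ == "__main__":
    for example in ("10.5281/zenodo.1234567",
                    "ark:/13030/m5br8st1",
                    "hdl:21.T11981/example"):
        print(example, "->", pid_to_url(example))
```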

Key PID Systems in Life Sciences

| PID System | Administering Body | Example | Primary Use Case |

|---|---|---|---|

| Digital Object Identifier (DOI) | Crossref, DataCite, others | 10.5281/zenodo.1234567 | Citing published datasets, software, articles. |

| Archival Resource Key (ARK) | California Digital Library, INRIA | ark:/13030/m5br8st1 | Identifying objects held in archival systems. |

| Life Science Identifiers (LSID) | TDWG (Discontinued but in use) | urn:lsid:example.org:taxname:12345 | Identifying biological taxonomy, specimens. |

| Persistent URL (PURL) | Internet Archive | purl.org/example/123 | Redirecting to the current URL of a resource. |

| Handle System | DONA Foundation | 21.T11981/example | Underlying technology for DOIs; general-purpose. |

| RRID (Research Resource ID) | SciCrunch | RRID:SCR_007358 | Identifying antibodies, model organisms, software. |

| BioSample / BioProject | NCBI | SAMN00123456 | Identifying biological samples and project contexts. |

Quantitative Comparison of Major PID Providers

Table 1: Comparison of DOI Registration Agencies for Biological Data.

| Feature / Agency | DataCite | Crossref | Zenodo (uses DataCite) |

|---|---|---|---|

| Primary Focus | Research data, software | Scholarly publications | Multidisciplinary repository |

| Cost Model | Membership-based | Membership-based | Free for up to 50GB/dataset |

| Metadata Schema | DataCite Metadata Schema | Crossref Metadata Schema | DataCite Schema |

| Required Fields | Identifier, Creator, Title, Publisher, PublicationYear, ResourceType | Similar, publication-focused | Similar to DataCite |

| Integration with | Repositories (Zenodo, Dryad), ORCID | Journals, ORCID | GitHub, ORCID, CERN infra |

| Total DOIs Issued (Approx.) | ~15 million (2025) | ~150 million (2025) | ~2 million (2025) |

Protocol: Minting a DOI via DataCite for a Biological Dataset

Objective: Assign a persistent, citable DOI to a transcriptomics dataset.

Materials: Data files, metadata description, account with a DataCite member repository (e.g., Zenodo, Dryad).

Procedure:

- Prepare Data: Clean and format data (e.g., FASTQ, count matrix). Use open, non-proprietary formats (e.g., .fastq, .tsv).

- Prepare Rich Metadata: Compose a `datacite.json` file. Mandatory fields include: `identifier` (will be assigned), `creators` (with ORCID PIDs), `titles`, `publisher`, `publicationYear`, `resourceType` (e.g., "Dataset"), `subject` (from EDAM Ontology).

  - Crucial for Bioscience: Add fields for `geoLocation`, `relatedIdentifier` (linking to BioProject), and `description` with experimental protocol.

- Upload: Log into your chosen repository. Upload the data files and the metadata file, or fill in the web form.

- Reserve DOI: Use the repository's "Reserve DOI" function. This creates a placeholder (e.g., `10.5072/zenodo.123`).

- Publish: Finalize and publish the dataset. The reserved DOI becomes active and resolves to the dataset landing page.

- Validate: Test the DOI by resolving it with `https://doi.org/[your-doi]`.
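Before upload, the metadata step can be checked with a small script along these lines. This is a sketch only: the field names follow the list in the procedure above, and the exact JSON layout that DataCite or a given repository expects may differ, so treat the structure as an assumption. The ORCID, BioProject accession, and titles are placeholders.

```python
import json

# Mandatory fields as listed in the procedure; 'identifier' is omitted
# because the repository assigns it at reservation time.
MANDATORY = ("creators", "titles", "publisher", "publicationYear", "resourceType")


def missing_fields(metadata: dict) -> list:
    """Return the mandatory fields that are absent or empty."""
    return [name for name in MANDATORY if not metadata.get(name)]


record = {
    "creators": [{"name": "Doe, Jane",
                  "nameIdentifier": "https://orcid.org/0000-0000-0000-0000"}],  # placeholder ORCID
    "titles": [{"title": "Example liver RNA-seq count matrix"}],
    "publisher": "Example Repository",
    "publicationYear": 2025,
    "resourceType": "Dataset",
    "subject": ["Transcriptomics"],  # ideally an EDAM topic term
    "relatedIdentifier": [{"relatedIdentifier": "PRJNAxxxxxx",  # placeholder BioProject
                           "relationType": "IsPartOf"}],
    "description": "Experimental protocol summary goes here.",
}

if __name__ == "__main__":
    problems = missing_fields(record)
    if problems:
        raise SystemExit(f"Missing mandatory fields: {problems}")
    with open("datacite.json", "w", encoding="utf-8") as handle:
        json.dump(record, handle, indent=2)
    print("datacite.json written")
```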

Rich Metadata: The Semantic Enrichment Layer

Metadata is structured information that describes, explains, locates, or otherwise makes data findable and usable. For FAIRness, metadata must be rich, standardized, and machine-readable.

Essential Metadata Standards for Biological Data

Table 2: Core Metadata Standards for Bioscience Data Integration.

| Standard / Schema | Scope | Key Fields for Findability | Governance |

|---|---|---|---|

| DataCite Metadata Schema | General-purpose for citation | Identifier, Creator, Title, Publisher, Subject (ontology), RelatedIdentifier | DataCite |

| ISA (Investigation-Study-Assay) | Life sciences experimental metadata | Study design, protocols, sample characteristics, technology type | ISA Community |

| MIAME / MINSEQE | Transcriptomics data | Experimental design, sample characteristics, array/layout, sequencing protocol | FGED, SeqBio |

| BioCompute Object | Computational workflows | Computational workflow provenance, parameters, input/output specs | IEEE-2791-2020 |

| EDAM Ontology | Bioscience data & operations | Topic, operation, data format, identifier (as ontology terms) | ELIXIR |

Protocol: Annotating a Proteomics Dataset Using MIAPE and Ontologies

Objective: Create rich, machine-actionable metadata for a mass-spectrometry proteomics dataset.

Materials: Raw spectra files (.raw, .mgf), identification files (.dat, .mzid), sample information sheet.

Reagent Solutions:

- Proteomics Standards Initiative (PSI) Formats: Standardized data formats (mzML, mzIdentML) ensure interoperability.

- ProteomeXchange Submission Tool: Enforces MIAPE guidelines and uploads to public repositories.

- Ontology Lookup Service (OLS): API to fetch controlled vocabulary terms (e.g., from PSI-MS, UO, NCBI Taxon).

Procedure:

- Convert Data: Convert raw instrument files to standard mzML format using `msConvert` (ProteoWizard).

- Describe Investigation: Create an ISA-Tab configuration. In the i_investigation.txt file, define the overall study goals.

- Annotate Samples: In the s_study.txt ISA file, for each sample, list:

  - `Source Name`: Biological source (e.g., "liver tissue").

  - `Characteristics[]`: Annotate with ontology terms (e.g., `Characteristics[organism]` = "Mus musculus" (NCBI:txid10090); `Characteristics[cell type]` = "hepatocyte" (CL:0000182)).

  - `Protocol REF`: Link to sample preparation protocol.

- Describe Assay: In the a_assay.txt file, specify:

  - `Technology Type`: "mass spectrometry" (OBI:0000470).

  - `Assay Name`: Descriptive name.

  - `Raw Data File`: Link to mzML file.

- Validate and Submit: Use the `isatab-validator` and then submit the ISA archive and data files to the ProteomeXchange consortium via the PX Submission Tool, which will assign a dataset identifier (e.g., PXDxxxxxx).
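The sample annotation step can be sketched as a tab-delimited study table built with the standard library. The column headers follow ISA-Tab conventions, and the ontology terms come from the example above. The `NCBITAXON` and `CL` source labels, the protocol name, and the sample name are assumptions made for illustration. Dedicated ISA tooling would normally generate and validate these files.

```python
import csv
import io

# ISA-Tab style header; each Characteristics column is followed by the
# ontology source and accession for the chosen term.
HEADER = [
    "Source Name",
    "Characteristics[organism]", "Term Source REF", "Term Accession Number",
    "Characteristics[cell type]", "Term Source REF", "Term Accession Number",
    "Protocol REF",
    "Sample Name",
]


def study_row(source, organism, taxon_id, cell_type, cell_type_id, protocol, sample):
    """Build one study row in the same column order as HEADER."""
    return [source, organism, "NCBITAXON", taxon_id,
            cell_type, "CL", cell_type_id, protocol, sample]


def to_isatab(rows):
    """Serialize the header plus rows as tab-delimited text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, delimiter="\t", lineterminator="\n")
    writer.writerow(HEADER)
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    row = study_row("liver tissue", "Mus musculus", "10090",
                    "hepatocyte", "CL:0000182",
                    "sample preparation", "liver_sample_01")
    print(to_isatab([row]), end="")
```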

Discovery Portals: The Federated Search Interface

Discovery portals aggregate metadata from distributed repositories using open APIs, providing a single search point. They are the user-facing manifestation of findability.

Key Portals for Biological and Drug Development Research

Table 3: Comparison of Major Data Discovery Portals.

| Portal Name | Scope | Data Sources | Key Features |

|---|---|---|---|

| NCBI Data Discovery | Biomedical & genomic | SRA, GEO, dbGaP, PubChem, Protein | Federated search, filters by organism, assay type. |

| EMBL-EBI Search | Life sciences | ArrayExpress, ENA, UniProt, PRIDE, ChEMBL | Powerful API (EBI Search), ontology-based linking. |

| Google Dataset Search | Cross-domain | Any site using schema.org/Dataset | Broad crawl, link to data location and papers. |

| DataCite Commons | Research outputs | All DataCite DOIs (data, software) | PID graph, affiliation/ORCID filters, citation counts. |

| ClinicalTrials.gov | Clinical research | Trial registrations worldwide | Advanced search by condition, intervention, location. |

| OpenTargets Platform | Drug target discovery | Genomics, drugs, disease data | Integrative evidence for target-disease association. |
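Portals such as Google Dataset Search index landing pages that embed schema.org/Dataset markup. The sketch below builds such a JSON-LD record. The property names (`name`, `description`, `identifier`, `license`, `keywords`) are standard schema.org properties, while the dataset values are placeholders.

```python
import json


def dataset_jsonld(name, description, doi, license_url, keywords):
    """Return a schema.org/Dataset description as a JSON-serializable dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Dataset",
        "name": name,
        "description": description,
        "identifier": f"https://doi.org/{doi}",
        "license": license_url,
        "keywords": list(keywords),
    }


if __name__ == "__main__":
    markup = dataset_jsonld(
        name="Example liver proteomics dataset",
        description="Placeholder description of samples, assay, and processing.",
        doi="10.5281/zenodo.1234567",  # example DOI from the PID table
        license_url="https://creativecommons.org/licenses/by/4.0/",
        keywords=["proteomics", "liver"],
    )
    # Embed the output in the landing page inside a
    # <script type="application/ld+json"> element.
    print(json.dumps(markup, indent=2))
```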

Architecture of a FAIR Data Discovery Portal

Title: Architecture of a FAIR Data Discovery Portal

The Scientist's Toolkit: Research Reagent Solutions for Data Findability

Table 4: Essential Tools and Resources for Implementing Findability.

| Tool / Resource | Category | Function / Purpose |

|---|---|---|

| ORCID ID | Researcher PID | Provides a persistent, unique identifier for researchers, disambiguating names and linking to contributions. |

| DataCite DOI | Data PID | A citable, persistent identifier specifically designed for research data and other outputs. |

| ISA framework Tools | Metadata Creation | Suite of software (ISAcreator, isatools API) for creating and managing ISA-Tab formatted metadata. |

| EDAM Ontology | Controlled Vocabulary | Provides bioscience-specific terms for annotating data types, formats, topics, and operations. |

| Bioconductor AnVIL | Cloud Workspace | Integrates data discovery (via Data Explorer) with analysis tools for genomic data, leveraging PIDs. |

| FAIRsharing.org | Standards Registry | A curated portal to discover and select appropriate metadata standards, repositories, and policies. |

| EBI Search API | Programmatic Discovery | Enables building custom search applications over EMBL-EBI's vast data resources. |

| CWL / WDL | Workflow Language | Describes computational workflows in a reusable way, linking to input/output data via PIDs for provenance. |

Achieving Findability, as mandated by the FAIR principles, is a technical and cultural endeavor requiring the systematic application of PIDs, rich metadata, and discoverable portals. For biological data integration research and drug development, this triad ensures that valuable data assets are not siloed but become accessible starting points for integrative analysis, meta-studies, and machine learning, thereby accelerating the pace of scientific discovery and therapeutic innovation.

Within the FAIR (Findable, Accessible, Interoperable, Reusable) principles for scientific data, Accessible (A1) is explicitly defined: (Meta)data are retrievable by their identifier using a standardized communications protocol. A1.1 requires the protocol to be open, free, and universally implementable. A1.2 further mandates that the protocol allows for an authentication and authorization procedure, where necessary. This pillar ensures that data, once found, can be reliably and securely retrieved. For biomedical and life sciences research, where data sensitivity and ethical constraints are paramount, implementing robust Authentication (AuthN), Authorization (AuthZ), and standardized Open Protocols (APIs) is not merely technical but a foundational requirement for collaborative, integrative research and drug development.

This guide provides a technical framework for implementing these components in biological data integration platforms, ensuring seamless yet secure access for researchers, scientists, and professionals.

Core Concepts: AuthN, AuthZ, and APIs

- Authentication (AuthN): The process of verifying the identity of a user or system. It answers the question "Who are you?"

- Authorization (AuthZ): The process of determining what permissions an authenticated identity has. It answers "What are you allowed to do?"

- API (Application Programming Interface): A set of defined rules and protocols that allow different software applications to communicate with each other. Open, standards-based APIs are the technical embodiment of the FAIR A1 principle.

Quantitative Comparison of Common Access Protocols & Standards

The choice of protocol depends on data sensitivity, use case, and community standards.

Table 1: Common Data Access Protocols in Biomedical Research

| Protocol/Standard | Primary Use Case | AuthN/AuthZ Support | Open/Free (A1.1) | Common in Life Sciences |

|---|---|---|---|---|

| HTTPS/RESTful API | General-purpose data retrieval & submission. | High (OAuth 2.0, API Keys, JWT) | Yes | Ubiquitous (e.g., GA4GH APIs, NCBI E-utilities) |

| OIDC (OpenID Connect) | Federated user authentication. | High (Built for AuthN) | Yes | Increasingly used for cross-institutional login (e.g., ELIXIR, NIH) |

| SAML 2.0 | Enterprise/Institutional single sign-on. | High | Yes, but often enterprise-bound | Common in academic institutions |

| FTP / SFTP | Bulk file transfer. | Low (Basic) / Med (SSH Keys) | Yes | Legacy genomic data repositories |

| GA4GH Passports | Standardized, visa-based authorization. | High (for AuthZ) | Yes | Emerging standard for multi-resource access (e.g., Dockstore, AnVIL) |

| WebDAV | Collaborative web-based editing. | Med (Basic, Digest) | Yes | Certain data management platforms |

Table 2: Standardized APIs for Biological Data (GA4GH Driver Project Examples)

| API Standard | Governed By | Purpose | Key Endpoints (Examples) |

|---|---|---|---|

| DRS (Data Repository Service) | GA4GH | Fetch data objects (files) by a global ID. | /objects/{object_id}, /objects/{object_id}/access |

| WES (Workflow Execution Service) | GA4GH | Execute and manage analysis workflows. | /runs, /runs/{run_id} |

| TES (Task Execution Service) | GA4GH | Execute discrete tasks. | /tasks, /tasks/{task_id} |

| Beacon API | GA4GH | Query for the presence of specific genetic variants. | /query, /info |

| htsget API | GA4GH | Stream genomic read data (BAM/CRAM) by genomic region. | /reads/{id}, /variants/{id} |
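As an illustration of how a DRS identifier maps onto the `/objects/{object_id}` endpoint in Table 2, the sketch below converts a hostname-based `drs://` URI into an HTTPS URL. It assumes the `/ga4gh/drs/v1` path prefix used by DRS version 1 servers, and the hostname is a placeholder. No request is sent.

```python
from urllib.parse import quote, urlsplit

DRS_PREFIX = "/ga4gh/drs/v1"  # assumed base path of a DRS v1 server


def drs_uri_to_url(drs_uri: str) -> str:
    """Translate drs://<hostname>/<object_id> into the HTTPS object endpoint."""
    parts = urlsplit(drs_uri)
    object_id = parts.path.lstrip("/")
    if parts.scheme != "drs" or not parts.netloc or not object_id:
        raise ValueError(f"Not a hostname-based DRS URI: {drs_uri!r}")
    return f"https://{parts.netloc}{DRS_PREFIX}/objects/{quote(object_id, safe='')}"


if __name__ == "__main__":
    print(drs_uri_to_url("drs://drs.example.org/abc123"))
```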

Experimental Protocol: Implementing a Secure, FAIR-Compliant Data Access Endpoint

This protocol details the setup of a data access service using a RESTful API with OAuth 2.0 authorization, mirroring real-world implementations in projects like the NHLBI BioData Catalyst.

Title: Protocol for Deploying a Secure DRS-Compatible API Server

Objective: To deploy a microservice that provides secure, programmatic access to genomic dataset files, compliant with the GA4GH DRS specification and FAIR A1 principles.

Materials & Software:

- Server (Cloud VM or physical)

- Linux OS (Ubuntu 22.04 LTS)

- Docker & Docker Compose

- PostgreSQL database

- Identity Provider (e.g., Keycloak for testing, or ELIXIR AAI for production)

- DRS API server software (e.g., `bond/drs-server` or custom Flask/Django implementation)

Methodology:

Infrastructure Provisioning:

- Launch a virtual machine with a public IP address. Configure firewall rules to allow HTTPS (443) and SSH (22) traffic only.

Identity Provider (IdP) Configuration:

- Deploy a Keycloak instance via Docker.

- Create a new realm (e.g., `genomics-lab`).

- Register a new client for the DRS API. Set `Access Type` to `confidential`.

- Configure valid redirect URIs (e.g., `https://your-drs-api.org/*`).

- Define user roles (e.g., `public_user`, `registered_user`, `privileged_user`) and assign them to test users.

DRS API Server Deployment:

- Clone a reference DRS implementation: `git clone https://github.com/elixir-cloud/bond.git`

- Navigate to the `drs-server` directory.

- Configure the `docker-compose.yml` and environment variables to point to the PostgreSQL database and the Keycloak endpoint (for `OIDC_ISSUER` and `OIDC_AUDIENCE`).

- Populate the database with metadata for test data objects, mapping each object to access URLs and necessary authorization scopes.

Access Policy Definition (AuthZ Logic):

- In the API server code, implement middleware that maps the OAuth 2.0 `access_token`'s claims (e.g., `roles`, `scope`) to permissions.

- Example Policy:

  - Public Data: `GET /objects/{public_id}` → No token required.

  - Controlled-Access Data: `GET /objects/{controlled_id}` → Requires token with scope `drs:read` and role `registered_user`.

  - Write Operations: `POST /objects/` → Requires token with scope `drs:write` and role `privileged_user`.
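The example policy can be expressed as a small, framework-independent check that middleware would call after validating the token. This is a sketch under stated assumptions: claims are taken to be an already-verified dict with a space-delimited `scope` string and a flat `roles` list. Keycloak, for instance, nests realm roles under `realm_access.roles` by default, so a real deployment would need to adapt the lookup.

```python
def required_grant(method: str, access_level: str):
    """Return (scope, role) needed for a request, or None if it is public."""
    if method == "POST":
        return ("drs:write", "privileged_user")
    if method == "GET" and access_level == "public":
        return None
    if method == "GET" and access_level == "controlled":
        return ("drs:read", "registered_user")
    raise ValueError(f"No policy for {method} on {access_level} objects")


def is_allowed(method: str, access_level: str, claims=None) -> bool:
    """Decide whether verified token claims satisfy the example policy."""
    grant = required_grant(method, access_level)
    if grant is None:
        return True   # public data: no token required
    if not claims:
        return False  # protected resource requested without a token
    scope, role = grant
    scopes = set(claims.get("scope", "").split())
    roles = set(claims.get("roles", []))
    return scope in scopes and role in roles


if __name__ == "__main__":
    user = {"scope": "openid drs:read", "roles": ["registered_user"]}
    print(is_allowed("GET", "public"))            # no token needed
    print(is_allowed("GET", "controlled", user))  # scope and role both present
    print(is_allowed("POST", "controlled", user)) # lacks drs:write
```

Keeping the decision logic separate from the web framework makes the policy easy to unit-test before it is wired into Flask or Django middleware.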

Testing & Validation:

- Use `curl` or Postman to simulate client requests.

- Test 1: Retrieve a public object ID without a token. Expected: HTTP 200 with DRS object metadata.

- Test 2: Request a download URL for a controlled-access object without a token. Expected: HTTP 401/403.

- Test 3: Obtain a client credentials grant token from Keycloak. Use it to request the download URL for the controlled-access object. Expected: HTTP 200 with a signed, time-limited URL to the data in object storage (e.g., AWS S3).
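For scripted testing, the requests behind Test 3 could be assembled as follows. The sketch only constructs the HTTP requests and does not send them. The token endpoint path follows the layout of recent Keycloak releases (older releases add an `/auth` prefix), and the hostnames, client ID, and secret are placeholders.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_token_request(idp_base, realm, client_id, client_secret):
    """Build the OAuth 2.0 client credentials token request (POST, form-encoded)."""
    url = f"{idp_base.rstrip('/')}/realms/{realm}/protocol/openid-connect/token"
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
    }).encode("ascii")
    return Request(url, data=body,
                   headers={"Content-Type": "application/x-www-form-urlencoded"})


def build_object_request(api_base, object_id, token=None):
    """Build a GET request for a DRS object, with a bearer token if given."""
    headers = {"Authorization": f"Bearer {token}"} if token else {}
    return Request(f"{api_base.rstrip('/')}/objects/{object_id}", headers=headers)


if __name__ == "__main__":
    token_req = build_token_request("https://idp.example.org", "genomics-lab",
                                    "drs-client", "placeholder-secret")
    print(token_req.get_method(), token_req.full_url)
    object_req = build_object_request("https://your-drs-api.org",
                                      "controlled_id", token="placeholder-token")
    print(object_req.get_method(), object_req.full_url)
    # Passing either request to urllib.request.urlopen would send it.
```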

Visualizing the Authentication & Data Access Workflow

Diagram Title: OAuth 2.0 Client Credentials Flow for Secure DRS API Access

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools for Implementing FAIR-Accessible Data Services

| Tool / Reagent | Category | Function in the Experiment / Field |

|---|---|---|

| Keycloak | Identity & Access Management (IAM) | Open-source IdP for testing and managing users, clients, and tokens. Acts as the OAuth 2.0 / OIDC server. |

| ELIXIR AAI | Federated Authentication | Production-grade federated identity service for life sciences. Allows researchers to use their home institution credentials to access many resources. |

| GA4GH DRS API Specification | API Standard | Blueprint for building interoperable file access services. Ensures compatibility with a global ecosystem of clients (e.g., Terra, Seven Bridges). |

| Gen3 Services | Data Platform Stack | An open-source software suite that provides out-of-the-box DRS, authentication, and authorization services for managing large-scale biomedical data. |

| OAuth 2.0 / OIDC Libraries (e.g., oauthlib, pyoidc) | Software Development Kit (SDK) | Pre-built code modules to integrate OAuth 2.0 and OIDC functionality into custom API servers or client applications. |

| Postman / curl | API Testing Client | Tools used to manually test API endpoints, construct HTTP requests with proper headers, and debug authentication flows during development. |

| JWT (JSON Web Token) | Security Token Format | A compact, URL-safe means of representing claims to be transferred between parties. A widely used (though not mandated) format for OAuth 2.0 access tokens. |

Within the framework of FAIR (Findable, Accessible, Interoperable, Reusable) principles for biological data integration, achieving true interoperability is the most technically demanding step. It requires moving beyond simple data exchange to semantically meaningful integration. This involves the coordinated use of community-developed ontologies, rigorous reporting standards like ISA and MIAME, and the implementation of semantic frameworks that allow machines to unambiguously interpret and reason across disparate datasets.

Foundational Components of Interoperability

Ontologies: The Semantic Backbone

Ontologies are formal, machine-readable representations of knowledge within a domain, consisting of concepts, relationships, and constraints. They provide the shared vocabulary necessary for semantic interoperability.

Key Biological Ontologies:

- Gene Ontology (GO): Describes gene functions (Molecular Function, Biological Process, Cellular Component).

- Sequence Ontology (SO): Describes features and attributes of biological sequences.

- Chemical Entities of Biological Interest (ChEBI): Focuses on small molecular compounds.

- Ontology for Biomedical Investigations (OBI): Provides terms for describing biological and clinical investigations.

Experimental Protocol: Ontology Annotation of Transcriptomic Data

- Data Input: Start with a differentially expressed gene list (e.g., from RNA-Seq analysis).

- Term Mapping: Use an API (e.g., EMBL-EBI's QuickGO, Ontology Lookup Service) to map each gene identifier to its associated GO terms.

- Enrichment Analysis: Employ tools like clusterProfiler (R) or g:Profiler to perform statistical over-representation analysis of GO terms against a background set (e.g., all expressed genes).

- Annotation Curation: Filter results for significance (adjusted p-value < 0.05) and relevance. Use the ontology's hierarchical structure to infer broader or more specific biological interpretations.

- Output: Generate a structured annotation table linking genes, GO terms, evidence codes, and p-values for downstream integration.
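The statistics behind the enrichment and curation steps can be sketched in pure Python: a one-sided hypergeometric test for over-representation of one GO term, followed by Benjamini-Hochberg adjustment across terms. Tools such as clusterProfiler or g:Profiler implement this with additional refinements, so the code below is illustrative only and the counts in the demo are made up.

```python
from math import comb


def hypergeom_pvalue(k: int, n: int, K: int, N: int) -> float:
    """P(X >= k): chance of seeing at least k annotated genes in a list of n,
    when K of the N background genes carry the GO term."""
    upper = min(n, K)
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return favourable / comb(N, n)


def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, pvalues[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


if __name__ == "__main__":
    # Made-up counts: 200 DE genes from 10,000 expressed genes; per term,
    # (genes in list with term, genes in background with term).
    terms = {"term_A": (12, 100), "term_B": (3, 150), "term_C": (8, 90)}
    raw = [hypergeom_pvalue(k, 200, K, 10_000) for k, K in terms.values()]
    for name, p, q in zip(terms, raw, benjamini_hochberg(raw)):
        print(name, f"p={p:.3g}", f"adj={q:.3g}", "significant" if q < 0.05 else "")
```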

Standards: The Structural Framework

Standards ensure data is consistently structured and reported, enabling reliable aggregation and comparison.

- ISA (Investigation-Study-Assay) Framework: A generic, modular framework for describing experimental metadata from biological studies. It structures information hierarchically: an Investigation (the overall project context) contains one or more Studies (a unit of research) which employ one or more Assays (analytical measurements).

- MIAME (Minimum Information About a Microarray Experiment): A pioneer standard defining the minimum information required to unambiguously interpret and potentially reproduce a microarray experiment. It has inspired many other "MI" standards (e.g., MINSEQE for sequencing).

Table 1: Comparison of Key Reporting Standards in Life Sciences

| Standard | Full Name | Primary Scope | Core Requirements (Summary) | Governance Body |

|---|---|---|---|---|

| MIAME | Minimum Information About a Microarray Experiment | Microarray gene expression data | Raw data, processed data, experimental design, sample annotations, platform details, protocols. | FGED Society |

| MINSEQE | Minimum Information about a High-Throughput SEQuencing Experiment | Next-generation sequencing data | Similar to MIAME, with specifics for sequencing (e.g., read lengths, alignment software). | FGED Society |

| MIAPE | Minimum Information About a Proteomics Experiment | Proteomics data | Instrument configuration, data processing parameters, identified molecules, confidence metrics. | HUPO-PSI |

| ARRIVE | Animal Research: Reporting of In Vivo Experiments | Pre-clinical animal studies | Study design, sample size, ethical statements, animal details, results interpretation. | NC3Rs |

Experimental Protocol: Implementing the ISA Framework for a Multi-Omics Study

- Investigation-Level Metadata: Define the project title, description, submission date, and overall personnel/contacts.

- Study-Level Design: For each cohort or experimental group, create a study descriptor. Define the sources (e.g., human subjects, cell lines) and their characteristics. Document the sample collection protocol.

- Assay-Level Annotation: For each analytical technique (e.g., RNA-Seq, LC-MS proteomics), create a separate assay file.

- Map each sample to its respective data file (raw FASTQ, .raw mass spec file).

- Describe the detailed technical protocol: instrument model, library preparation kit, data processing pipeline with software versions and key parameters.

- Tool Usage: Utilize the ISAcreator software or the isatools Python library to populate the ISA-Tab format (a set of tab-delimited files: i_*.txt, s_*.txt, a_*.txt).

- Validation & Submission: Use the ISA validator to check compliance, then submit the structured metadata alongside data to a public repository like MetaboLights or PRIDE.
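To make the Investigation, Study, and Assay hierarchy concrete, the following standard-library sketch models the three levels and renders simplified tab-delimited files. It deliberately does not use the isatools API. The class names, the reduced set of column labels (modeled loosely on ISA-Tab), and all sample and file names are assumptions for illustration, and the output is not a spec-complete ISA-Tab submission.

```python
import csv
import io
from dataclasses import dataclass, field


@dataclass
class Assay:
    filename: str           # e.g. a_rnaseq.txt
    measurement: str
    technology: str
    sample_to_file: dict    # sample name -> raw data file


@dataclass
class Study:
    filename: str           # e.g. s_cohort.txt
    title: str
    samples: dict           # sample name -> source name
    assays: list = field(default_factory=list)


@dataclass
class Investigation:
    identifier: str
    title: str
    studies: list = field(default_factory=list)


def to_tsv(rows):
    """Serialize a list of rows as tab-delimited text."""
    buf = io.StringIO()
    csv.writer(buf, delimiter="\t", lineterminator="\n").writerows(rows)
    return buf.getvalue()


def render(inv):
    """Return {filename: text} for one investigation, its studies and assays."""
    files = {}
    inv_rows = [["Investigation Identifier", inv.identifier],
                ["Investigation Title", inv.title]]
    for study in inv.studies:
        inv_rows.append(["Study File Name", study.filename])
        study_rows = [["Source Name", "Sample Name"]]
        study_rows += [[src, smp] for smp, src in sorted(study.samples.items())]
        files[study.filename] = to_tsv(study_rows)
        for assay in study.assays:
            unknown = set(assay.sample_to_file) - set(study.samples)
            if unknown:  # every assayed sample must be declared in the study
                raise ValueError(f"{assay.filename}: unknown samples {sorted(unknown)}")
            inv_rows.append(["Study Assay File Name", assay.filename])
            inv_rows.append(["Study Assay Measurement Type", assay.measurement])
            inv_rows.append(["Study Assay Technology Type", assay.technology])
            assay_rows = [["Sample Name", "Raw Data File"]]
            assay_rows += [list(pair) for pair in sorted(assay.sample_to_file.items())]
            files[assay.filename] = to_tsv(assay_rows)
    files[f"i_{inv.identifier}.txt"] = to_tsv(inv_rows)
    return files


# Hypothetical multi-omics example.
study = Study("s_cohort.txt", "Cohort A",
              samples={"sample1": "donor1", "sample2": "donor2"})
study.assays.append(Assay("a_rnaseq.txt", "transcription profiling", "RNA-Seq",
                          {"sample1": "s1.fastq.gz", "sample2": "s2.fastq.gz"}))
study.assays.append(Assay("a_proteomics.txt", "protein expression profiling",
                          "LC-MS", {"sample1": "s1.raw"}))
inv = Investigation("multiomics01", "Hypothetical multi-omics study",
                    studies=[study])

files = render(inv)
for name in sorted(files):
    print(name)
    print(files[name])
```

Keeping one assay file per analytical technique, all keyed on the same sample names, is what lets the RNA-Seq and proteomics measurements be joined later.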

Semantic Frameworks: The Integration Engine

Semantic frameworks, such as knowledge graphs and RDF (Resource Description Framework) triples, combine ontologies and standards to create interconnected, queryable webs of data.

Core Technology Stack:

- RDF: A graph-based data model representing information as subject-predicate-object triples (e.g., "Gene A - is_involved_in - Pathway B").

- SPARQL: The query language for RDF databases, enabling complex, federated queries across multiple data sources.

- Linked Data: A set of best practices for publishing and connecting structured data on the web using URIs and RDF.

Integrated Workflow for FAIR Interoperability

Diagram 1: Semantic interoperability workflow.

The Scientist's Toolkit: Research Reagent Solutions for Interoperability

Table 2: Essential Tools & Resources for Achieving Semantic Interoperability

| Tool/Resource Name | Category | Function | Key Features / Use Case |

|---|---|---|---|

| ISAcreator / isatools | Metadata Management | Assists in creating, editing, and validating ISA-Tab formatted metadata. | Guided forms, configurable templates, validation against community standards. |

| Ontology Lookup Service (OLS) | Ontology Service | A repository for searching and browsing biomedical ontologies via API. | Centralized access to 200+ ontologies, term auto-suggestion, JSON-LD output. |

| RO-Crate | Packaging Framework | A method for packaging research data with their metadata in a machine-readable way. | Uses schema.org JSON-LD, creates self-contained, FAIR research objects. |

| Bioconductor (AnnotationHub) | Bioinformatics Platform | Provides unified R-based interfaces to vast genomic annotation resources. | Programmatic access to genomic coordinates, gene IDs, and ontology mappings. |

| Protégé | Ontology Engineering | An open-source platform for building and editing ontologies and knowledge bases. | Visual modeling, logical consistency checking, export to OWL/RDF formats. |

| SPARQL Endpoint | Query Interface | A web service that accepts SPARQL queries and returns results (e.g., from Wikidata, EBI RDF). | Allows federated queries across linked open data sources directly from code. |

| LinkML (Linked Data Modeling Language) | Modeling Framework | A modeling language for generating schemas, validation tools, and conversion frameworks for linked data. | Converts simple YAML schemas into OWL, JSON-Schema, or Python data classes. |

Case Study: Integrating Drug Response and Genomic Data

Objective: Enable semantic queries like "Find all drugs that target pathways containing genes mutated in patients resistant to Compound X."

Protocol:

- Data Standardization:

- Genomic Data: Store somatic variant calls (VCF files) annotated with HUGO gene symbols and Sequence Ontology (SO) terms (e.g., SO:0001583 for missense variant) using ISA-Tab.

- Drug Response Data: Store IC50 values from dose-response assays, annotated with ChEBI identifiers for compounds and Cell Line Ontology (CLO) IDs.

- Ontology Alignment: Map all gene symbols to NCBI Gene identifiers. Map all drug targets to their respective UniProt IDs.

- Knowledge Graph Construction:

- Use RDF to create triples:

<Patient001> <has_variant_in> <Gene:TP53>.

<Drug:Doxorubicin> <has_target> <Protein:TOP2A>.

<Gene:TP53> <is_part_of> <Pathway:p53_signaling>.

- Semantic Querying: Execute a SPARQL query to join data across these relationships, inferring connections not explicitly stated in the original datasets.
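The join behind such a query can be illustrated without an RDF store. The sketch below keeps triples in a Python set and evaluates a list of triple patterns in the way a SPARQL basic graph pattern is matched. It is a toy evaluator, not SPARQL itself. The first three triples come from the protocol above. The remaining ones (`resistant_to`, `Drug:CompoundX`, `Drug:DrugY`, `Protein:TargetY`) are hypothetical additions so that the query has something to find.

```python
triples = {
    # Triples taken from the protocol above.
    ("Patient001", "has_variant_in", "Gene:TP53"),
    ("Drug:Doxorubicin", "has_target", "Protein:TOP2A"),
    ("Gene:TP53", "is_part_of", "Pathway:p53_signaling"),
    # Hypothetical triples added for illustration only.
    ("Patient001", "resistant_to", "Drug:CompoundX"),
    ("Protein:TargetY", "is_part_of", "Pathway:p53_signaling"),
    ("Drug:DrugY", "has_target", "Protein:TargetY"),
}


def solve(patterns, graph):
    """Return every variable binding that satisfies all patterns.

    Terms starting with '?' are variables; anything else must match exactly.
    """
    solutions = [{}]
    for pattern in patterns:
        extended = []
        for binding in solutions:
            for triple in graph:
                candidate = dict(binding)
                matched = True
                for term, value in zip(pattern, triple):
                    if term.startswith("?"):
                        # Bind the variable, or check an existing binding.
                        if candidate.setdefault(term, value) != value:
                            matched = False
                            break
                    elif term != value:
                        matched = False
                        break
                if matched:
                    extended.append(candidate)
        solutions = extended
    return solutions


# "Find all drugs that target pathways containing genes mutated in
#  patients resistant to Compound X."
query = [
    ("?patient", "resistant_to", "Drug:CompoundX"),
    ("?patient", "has_variant_in", "?gene"),
    ("?gene", "is_part_of", "?pathway"),
    ("?target", "is_part_of", "?pathway"),
    ("?drug", "has_target", "?target"),
]

drugs = sorted({solution["?drug"] for solution in solve(query, triples)})
print(drugs)
```

Doxorubicin is not returned because its target is not linked to the pathway in this toy graph. The result depends entirely on which relationships have been asserted, which is why the ontology alignment step matters.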

Diagram 2: Knowledge graph for drug-genome integration.

Achieving interoperability under the FAIR principles is not a single task but a layered approach involving the mandatory use of standards for structure, ontologies for meaning, and semantic frameworks for integration. This technical infrastructure transforms isolated datasets into a connected, queryable knowledge ecosystem, ultimately accelerating hypothesis generation and validation in biomedical research and drug development. The protocols and tools outlined here provide a concrete starting point for researchers to implement these principles in their data management workflows.

Within the FAIR principles (Findable, Accessible, Interoperable, Reusable) for biological data integration, Reusability (R1) is the ultimate objective, dependent on the first three. It mandates that data and metadata are sufficiently well-described to allow replication and integration in new research. This step focuses on the three pillars enabling this: rigorous Provenance, clear Licensing, and the use of Community-Approved Formats. Without these, integrated datasets become "black boxes," unusable for downstream validation or novel discovery in translational research and drug development.

Pillar 1: Provenance (R1, R1.2)

Provenance, or the documentation of data lineage, is critical for assessing data quality, reproducibility, and trust. It addresses FAIR principles R1 (metadata are richly described with a plurality of accurate and relevant attributes) and R1.2 (metadata are associated with detailed provenance).

Minimum Information Standards

Community-developed Minimum Information (MI) standards ensure datasets are reported with sufficient experimental and analytical context.

Table 1: Key Minimum Information Standards for Biological Data

| Standard | Scope | Primary Use Case | Reference |

|---|---|---|---|

| MIAME | Microarray experiments | Transcriptomics data submission to ArrayExpress, GEO. | Brazma et al., 2001 |

| MINSEQE | Sequencing experiments | Next-Generation Sequencing (NGS) data reporting. | Sequence Read Archive (SRA) |

| MIAPE | Proteomics experiments | Mass spectrometry and protein interaction data. | Taylor et al., 2007 |

| ARRIVE | In vivo experiments | Reporting animal research for reproducibility. | Percie du Sert et al., 2020 |

| ISA-Tab | General-purpose framework | Structuring metadata from diverse omics technologies. | Sansone et al., 2012 |

Protocol: Capturing Computational Provenance with Research Object Crate (RO-Crate)

RO-Crate is a method for packaging research data with machine-readable metadata, explicitly capturing provenance.

Materials:

- Dataset files (raw, processed).

- Code scripts (analysis, preprocessing).

- Workflow description (e.g., CWL, Nextflow, or plain-text).

- RO-Crate Python library (rocrate).

Methodology:

- Installation: pip install rocrate

- Crate Initialization: Create a new directory and initialize the RO-Crate.

- Add Data Entities: Add all relevant files, tagging their roles.

- Define Provenance Relationships: Link entities using the wasGeneratedBy and wasDerivedFrom predicates.

- Export: The crate's ro-crate-metadata.json file now provides a machine-actionable provenance record.
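As a rough picture of what the exported metadata can look like, the sketch below assembles a minimal ro-crate-metadata.json structure with the standard library only, rather than through the rocrate package. It assumes the RO-Crate 1.1 JSON-LD layout and records provenance as a schema.org CreateAction whose `object` is the input and whose `result` is the output. Reading this as the counterpart of the wasDerivedFrom and wasGeneratedBy relationships is an interpretation, not something the protocol states. All file paths and names are hypothetical, and the specification asks for further root properties (such as a description, publication date, and license) that are omitted here.

```python
import json


def build_crate_metadata(name, raw_file, script_file, result_file):
    """Assemble a minimal RO-Crate style JSON-LD document as a dict."""
    graph = [
        # Metadata descriptor pointing at the root dataset.
        {"@id": "ro-crate-metadata.json", "@type": "CreativeWork",
         "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
         "about": {"@id": "./"}},
        # Root dataset listing the packaged files.
        {"@id": "./", "@type": "Dataset", "name": name,
         "hasPart": [{"@id": f} for f in (raw_file, script_file, result_file)]},
        {"@id": raw_file, "@type": "File", "name": "Raw input data"},
        {"@id": script_file, "@type": ["File", "SoftwareSourceCode"],
         "name": "Analysis script"},
        {"@id": result_file, "@type": "File", "name": "Processed result"},
        # Provenance: the script turned the raw file into the result file.
        {"@id": "#run-1", "@type": "CreateAction", "name": "Run analysis",
         "instrument": {"@id": script_file},
         "object": [{"@id": raw_file}],
         "result": [{"@id": result_file}]},
    ]
    return {"@context": "https://w3id.org/ro/crate/1.1/context", "@graph": graph}


def sources_of(metadata, file_id):
    """List the inputs of every CreateAction that produced file_id."""
    sources = []
    for entity in metadata["@graph"]:
        if entity.get("@type") == "CreateAction" and \
                {"@id": file_id} in entity.get("result", []):
            sources.extend(ref["@id"] for ref in entity.get("object", []))
    return sources


metadata = build_crate_metadata(
    "Hypothetical RNA-Seq analysis",
    raw_file="data/counts.tsv",
    script_file="scripts/de_analysis.py",
    result_file="results/de_genes.tsv",
)

print(json.dumps(metadata, indent=2))
print(sources_of(metadata, "results/de_genes.tsv"))
```

Because the provenance is ordinary JSON-LD, a downstream user can trace a result back to its inputs with a few lines of code, as `sources_of` does.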

Diagram Title: Computational Provenance Captured in RO-Crate

Pillar 2: Licensing (R1.1)

A clear license is non-negotiable for reuse. It removes ambiguity about how data can be accessed, used, modified, and redistributed.

Table 2: Common Licenses for Biomedical Data and Code

| License | Type | Key Terms for Re-users | Best For |

|---|---|---|---|

| CC0 | Public Domain Dedication | No restrictions; waives all rights. | Maximal data reuse, database integration. |

| CC BY 4.0 | Attribution License | Must give appropriate credit. | Most research data, encouraging reuse with credit. |

| ODC BY | Open Data Commons Attribution | Similar to CC BY, tailored for databases. | Databases and data collections. |

| MIT / BSD | Permissive Software License | Free use/modify/distribute, with disclaimer. | Analysis code, software tools. |

| GPL v3 | Copyleft Software License | Derivative works must be open under GPL. | Tools where derivatives must remain open. |

| Restrictive | Custom Institutional | Often for non-commercial use only; requires MTA. | Sensitive data (e.g., patient cohorts). |

Protocol: Applying a License to a Dataset in a Public Repository

Methodology:

- Select a License: Choose based on intended reuse (e.g., CC BY 4.0 for general data).

- Create a LICENSE File: In the dataset's root directory, create a plain-text file named LICENSE.txt or LICENSE.md. Copy the full license text from the official source (e.g., creativecommons.org).

- Embed in Metadata:

- For Zenodo: Use the "Licenses" dropdown during upload. The license is automatically appended to the record.

- For BioStudies/BioSamples: Select from provided license options in the submission form.

- For GitHub: Use the built-in license selector when creating a repository, which generates the LICENSE file.

- Cite in README: Explicitly state the license in the README.md file: "This dataset is licensed under the Creative Commons Attribution 4.0 International License (CC BY 4.0)."
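Before uploading, the file-level parts of this protocol can be checked automatically. The helper below is a small sketch under the assumption that the dataset lives in a local directory. It verifies only that a LICENSE file exists and that README.md names the chosen license. The function name and the accepted file names are illustrative choices, and repository-side metadata (Zenodo, BioStudies, GitHub) is out of scope.

```python
import tempfile
from pathlib import Path

# File names accepted as the license file (an assumption of this sketch).
LICENSE_NAMES = ("LICENSE", "LICENSE.txt", "LICENSE.md")


def license_problems(dataset_dir, license_name):
    """Return a list of human-readable problems; an empty list means OK."""
    root = Path(dataset_dir)
    problems = []
    if not any((root / name).is_file() for name in LICENSE_NAMES):
        problems.append("no LICENSE file in the dataset root")
    readme = root / "README.md"
    if not readme.is_file():
        problems.append("no README.md")
    elif license_name.lower() not in readme.read_text(encoding="utf-8").lower():
        problems.append(f"README.md does not mention {license_name}")
    return problems


# Demonstration on a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    before = license_problems(tmp, "CC BY 4.0")
    Path(tmp, "LICENSE.txt").write_text(
        "Full license text copied from the official source goes here.\n",
        encoding="utf-8")
    Path(tmp, "README.md").write_text(
        "This dataset is licensed under the Creative Commons Attribution 4.0 "
        "International License (CC BY 4.0).\n",
        encoding="utf-8")
    after = license_problems(tmp, "CC BY 4.0")

print(before)
print(after)
```

A check like this fits naturally into a pre-submission script or a continuous integration job, so that a dataset is never published without an explicit license statement.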

Pillar 3: Community-Approved Formats (I1, I2, R1.3)

Formats that are open, documented, and widely adopted are essential for Interoperability (I1, I2) and long-term Reusability (R1.3).

Table 3: Community-Approved vs. Closed Formats in Biology

| Data Type | Community-Approved Format | Closed/Problematic Format | Reason for Preference |

|---|---|---|---|

| Sequencing Data | FASTQ, BAM, CRAM | Proprietary sequencer output (e.g., .bcl) | Open standard, tool-agnostic. |

| Genomic Variants | VCF, gVCF | Excel (.xlsx) tables | Structured, defined schema, handles complex alleles. |

| Protein Structures | PDB, mmCIF | Chemical sketch files (.cdx) | Standardized atomic coordinates, rich metadata. |

| Microarray Data | MIAME-compliant SOFT/TXT | Native scanner image files | Contains required MIAME metadata for reuse. |

| General Tables | TSV/CSV with schema (JSON Schema) | Word documents (.docx) | Machine-readable, parsable, schema defines columns. |

| Workflows | CWL, Nextflow, Snakemake | Graphical UI saved binaries | Portable, reproducible, version-controllable. |
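The "General Tables" row is the easiest to demonstrate: a delimited file becomes far more reusable once its columns are declared and checked. The sketch below validates a TSV against a small column schema using only the standard library. A plain dictionary of column names and Python types stands in for a real JSON Schema document, and the column names and values are hypothetical.

```python
import csv
import io

# Stand-in for a JSON Schema: column name -> expected Python type.
SCHEMA = {
    "gene_id": str,
    "log2_fold_change": float,
    "adjusted_p_value": float,
}


def validate_tsv(text, schema):
    """Return a list of validation errors for tab-delimited text."""
    reader = csv.DictReader(io.StringIO(text), delimiter="\t")
    missing = [col for col in schema if col not in (reader.fieldnames or [])]
    if missing:
        return [f"missing columns: {', '.join(missing)}"]
    errors = []
    # Data rows start on line 2 because line 1 is the header.
    for line_no, row in enumerate(reader, start=2):
        for column, caster in schema.items():
            try:
                caster(row[column])
            except (TypeError, ValueError):
                errors.append(
                    f"line {line_no}: {column}={row[column]!r} "
                    f"is not {caster.__name__}")
    return errors


good = "gene_id\tlog2_fold_change\tadjusted_p_value\nTP53\t-1.8\t0.003\n"
bad = "gene_id\tlog2_fold_change\tadjusted_p_value\nTP53\t-1.8\tn/a\n"

print(validate_tsv(good, SCHEMA))
print(validate_tsv(bad, SCHEMA))
```

The same table saved as a word-processor document or a hand-edited spreadsheet offers no such check, which is the practical reason behind the preference stated in the table.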

Diagram Title: Decision Tree for Assessing Data Format Reusability

Integrated Case Study: Publishing a FAIR Multi-Omics Dataset

Scenario: A study integrating RNA-Seq (transcriptomics) and LC-MS/MS (proteomics) to identify therapeutic targets in a rare cancer cell line.