From Sequence to Significance: A Comprehensive Guide to Functional Validation of Genetic Variants


Abstract

The rapid expansion of next-generation sequencing has generated a deluge of genetic variants of uncertain significance (VUS), creating a critical bottleneck in research and clinical diagnostics. This article provides a comprehensive roadmap for researchers, scientists, and drug development professionals to navigate the complex landscape of functional validation. We explore the foundational challenge of VUS interpretation, detail cutting-edge methodological approaches from single-cell multi-omics to CRISPR-based screens, and provide strategies for troubleshooting and optimizing experimental workflows. Finally, we establish a framework for validating functional evidence and integrating it into standardized variant classification systems, empowering confident translation of genetic findings into biological insights and therapeutic applications.

The VUS Challenge: Understanding the Imperative for Functional Validation

Next-generation sequencing (NGS) has revolutionized clinical genetics, but its unprecedented capacity to detect genetic variants has also created a significant diagnostic bottleneck: the proliferation of variants of uncertain significance (VUS). A VUS is a genetic alteration whose clinical impact cannot be definitively determined, leaving patients and clinicians without clear guidance [1]. This article examines the scale of the VUS challenge and explores how functional studies provide the critical evidence needed to resolve these uncertainties and advance precision medicine.

The NGS Revolution and the Inevitability of VUS

The core advantage of NGS—its ability to sequence millions of DNA fragments in parallel—is also the source of the VUS challenge. Compared to traditional Sanger sequencing, NGS is thousands of times faster and has reduced the cost of sequencing a human genome from billions of dollars to under $1,000 [2] [3]. This democratization of sequencing has led to widespread testing, but the interpretation of the vast number of discovered variants has not kept pace with the technology's detection capabilities.

The Scale of the Problem

The VUS problem is pervasive, particularly in the realm of rare diseases. A descriptive analysis of the ClinVar database using the term 'rare diseases' revealed that, of the 94,287 variants identified, the majority were categorized as VUS [1]. This high volume of uncertain results complicates clinical decision-making, can lead to inappropriate management, and causes psychological distress for patients [4].

The following table summarizes the key differences between traditional and next-generation sequencing that have contributed to the VUS bottleneck.

| Feature | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Throughput | Low (single fragment per reaction) [2] | Ultra-high (millions to billions of fragments per run) [2] [3] |
| Cost per Genome | ~$3 billion (Human Genome Project) [2] | Under $1,000 [2] [3] |
| Speed | Slow (days for individual genes) [3] | Rapid (whole genomes in days) [3] |
| Typical Use Case | Targeted confirmation of specific variants [2] | Unbiased discovery across the whole exome or genome [5] [1] |
| Primary Output Challenge | Limited data volume | Interpretation of millions of variants, leading to a high rate of VUS [1] |

Beyond Bioinformatics: The Critical Role of Functional Assays

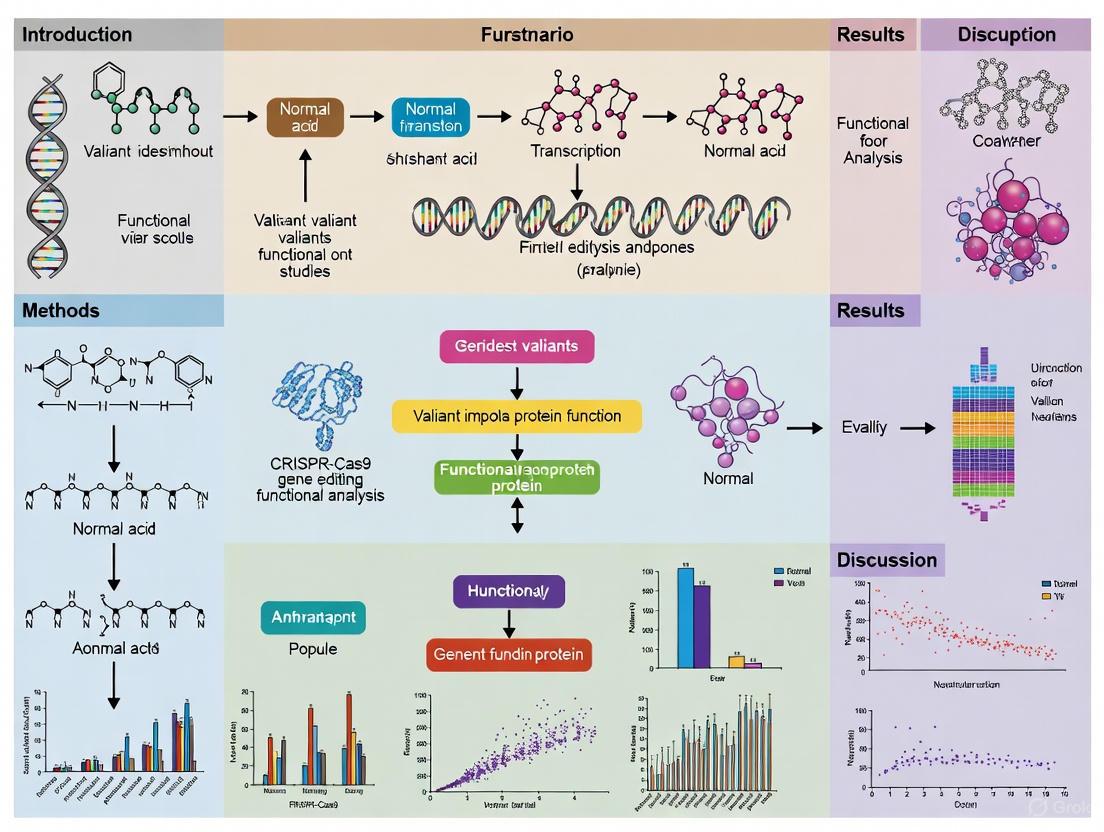

Bioinformatic prediction tools are the first step in variant interpretation, but they are often insufficient for classifying a variant as pathogenic or benign. Functional validation is essential for translating genetic findings into clinical practice [5] [6]. The following diagram illustrates the pathway from NGS discovery to clinical resolution of a VUS.

Key Experimental Methodologies for Functional Validation

Researchers employ a diverse toolkit of experimental methods to determine the functional consequences of a VUS. The table below details several key protocols and their applications as demonstrated in recent studies.

| Experimental Method | Protocol Summary | Key Application Example |
|---|---|---|
| Mini-Gene Splicing Assay | A segment of the patient's gene containing the VUS is cloned into a vector and transfected into cells. RNA is then extracted and analyzed to see if the variant causes abnormal splicing [5] [6]. | Used to confirm that a splicing variant (c.1217+2T>A) in the DEPDC5 gene disrupts alternative splicing, causing familial focal epilepsy [5] [6]. |
| Enzyme Activity Assay | The mutant protein is expressed, and its catalytic activity is measured and compared to the wild-type protein using spectrophotometry or mass spectrometry [5] [6]. | A splicing mutation in the HMBS gene (c.648_651+1delCCAGG) was shown to reduce HMBS enzyme activity, leading to acute intermittent porphyria [5] [6]. |
| Cell Viability / Functional Genomics Platform | Wild-type and mutant genes are expressed in cell lines (e.g., MCF10A, Ba/F3). Oncogenic potential is assessed by measuring growth factor-independent cell proliferation [7]. | A study of 438 VUS found that 106 (24%) increased cell viability. Variants pre-classified as "Potentially actionable" were 3.94 times more likely to be oncogenic than "Unknown" ones [7]. |
| Metabolic Marker Analysis | Mass spectrometry is used to quantify metabolite levels in patient plasma or urine. Elevated markers can indicate a pathogenic block in a metabolic pathway [5] [6]. | In methylmalonic acidemia (MMA), patients with VUS in MMUT/MMACHC had significantly higher levels of C3, C3/C0, and C3/C2 metabolites than non-carriers [5] [6]. |

From VUS to Actionability: Clinical Impact and Reclassification

The ultimate goal of functional studies is to resolve diagnostic uncertainty and improve patient care. Successful reclassification has direct clinical implications.

Case Studies in Reclassification

- Tumor Suppressor Genes: A 2025 study reassessed VUS in seven tumor suppressor genes (NF1, TSC1, TSC2, RB1, PTCH1, STK11, FH) using updated ClinGen guidance that places greater weight on phenotype specificity. The updated criteria allowed 31.4% of the remaining VUS (32 variants) to be reclassified as Likely Pathogenic, with the highest reclassification rate in STK11 (88.9%) [4].

- Actionability in Oncology: A rule-based actionability framework developed by MD Anderson's PODS team classifies VUS in actionable genes as either "Unknown" or "Potentially" actionable based on their location in functional domains or proximity to known oncogenic variants. When tested, variants categorized as "Potentially actionable" were significantly more likely to be functionally oncogenic (37%) than those categorized as "Unknown" (13%) [7].
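This article quotes the same result two ways: as 37% versus 13%, and as a 3.94-fold difference. A short calculation shows how the two figures can be reconciled. The sketch below assumes the fold difference is an odds ratio rather than a ratio of proportions. That reading is an inference from the rounded percentages and is not stated in [7].

```python
def relative_risk(p_group: float, p_reference: float) -> float:
    """Ratio of two proportions (also called the risk ratio)."""
    return p_group / p_reference


def odds_ratio(p_group: float, p_reference: float) -> float:
    """Ratio of the odds p / (1 - p) in each group."""
    odds_group = p_group / (1 - p_group)
    odds_reference = p_reference / (1 - p_reference)
    return odds_group / odds_reference


# Rounded proportions quoted in the text above.
p_potentially_actionable = 0.37
p_unknown = 0.13

print(round(relative_risk(p_potentially_actionable, p_unknown), 2))
print(round(odds_ratio(p_potentially_actionable, p_unknown), 2))
```

With the rounded inputs, the ratio of proportions is about 2.85 and the odds ratio is about 3.93. The second value is close to the reported 3.94, which suggests the fold difference is best read as a difference in odds.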

The Scientist's Toolkit: Essential Reagents for Functional Studies

| Research Reagent / Tool | Critical Function in VUS Validation |
|---|---|
| Induced Pluripotent Stem Cells (iPSCs) | Allows creation of patient-specific cell models to study the impact of a VUS in relevant cell types (e.g., neurons, cardiomyocytes) [5] [6]. |
| Clustered Regularly Interspaced Short Palindromic Repeats (CRISPR) Gene Editing | Enables precise introduction or correction of a VUS in cell lines to establish a direct causal link between the genotype and observed phenotype [8]. |
| Transposon System | Facilitates the stable integration of genetic constructs into a host genome, useful for long-term expression of a mutant gene for functional analysis [5] [6]. |
| Plasmid Vectors for Mini-Gene Assays | Serve as the backbone for cloning gene fragments containing splice-site VUS to study their impact on mRNA processing outside of the patient's native genomic context [5] [6]. |

The field is moving towards more integrated and scalable solutions. Emerging trends for 2025 include the use of artificial intelligence (AI) to analyze multiomic datasets and the continued refinement of disease-specific variant classification guidelines [8] [9] [4]. The convergence of genomic data, functional assays, and advanced computational tools is paving the way for a more definitive resolution of the VUS bottleneck.

In conclusion, while NGS has created a diagnostic challenge through the proliferation of VUS, it has also provided the data necessary to tackle it. Functional studies are no longer a niche research activity but a fundamental component of modern genetic diagnosis. By systematically characterizing the functional impact of VUS, the scientific community is building the evidence base required to translate genetic data into precise diagnoses and effective personalized treatments.

The American College of Medical Genetics and Genomics and the Association for Molecular Pathology (ACMG/AMP) guidelines established a crucial framework for standardizing variant classification. Automated computational tools built on these guidelines have significantly improved the efficiency of variant interpretation, yet they face substantial limitations in clinical practice. A comprehensive 2025 analysis of automated variant interpretation tools revealed that while they demonstrate high accuracy for clearly pathogenic or benign variants, they show significant limitations with variants of uncertain significance (VUS) [10]. Despite advances in automation, expert oversight remains essential in clinical contexts, particularly for challenging VUS interpretation [10].

The fundamental challenge lies in the fact that computational tools primarily automate the evaluation of established criteria within guidelines, but struggle with nuanced cases requiring integrated biological understanding. As the field evolves with updated frameworks like the forthcoming ACMG Version 4 guidelines—which introduce a points-based system for more nuanced interpretation—the integration of functional validation becomes increasingly critical for resolving ambiguous cases [11]. This article examines the specific scenarios where computational predictions fall short and demonstrates how functional studies provide the necessary evidence to advance beyond uncertainty.

Performance Gaps in Automated Interpretation Tools

Comparative Accuracy of Automated Tools

Table 1: Performance Comparison of Variant Interpretation Methods

| Method | Reported Accuracy or Key Result | Effect on VUS or Diagnostic Yield | Key Limitations |
|---|---|---|---|
| ACMG-2015 Guidelines | 65.6% | Baseline | Qualitative approach, subjective interpretation |
| ClinGen-Revised Guidelines | 89.2% | 8% reduction in VUS classifications | Limited for non-coding variants |
| Automated Tools (General) | High for clear pathogenic/benign | Significant limitations | Struggles with VUS, requires expert oversight |
| popEVE AI Model | Identified 123 novel disease-gene links | Diagnosed ~33% of previously undiagnosed cases | Requires further clinical validation |

Recent studies directly comparing interpretation methodologies reveal crucial performance differences. When analyzing the same variant sets, the ClinGen-Revised protocol demonstrated significantly improved accuracy (89.2%) compared to the original ACMG-2015 criteria (65.6%) [12]. The updated framework also achieved an 8% overall reduction in VUS classifications, thereby refining the prioritization of actionable variants for clinical decision-making [12].

Despite these improvements, comprehensive analyses of automated interpretation tools show they maintain critical weaknesses. A 2025 evaluation of tools through comparison with ClinGen Expert Panel interpretations for 256 cardiomyopathy, hereditary cancer, and monogenic diabetes variants found that while tools performed well for straightforward classifications, they showed substantial limitations with VUS interpretation [10]. This performance gap underscores the continued necessity of expert oversight when using these tools in clinical settings, particularly for ambiguous cases [10].
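A comparison of this kind can be summarized as per-class concordance between a tool and the expert panel. The following is a minimal sketch of that calculation. The function name, the class labels, and the toy data are all hypothetical and are not taken from [10].

```python
from collections import defaultdict


def concordance_by_class(pairs):
    """Fraction of variants per expert class where the tool agrees.

    pairs: iterable of (expert_label, tool_label) tuples.
    Returns a dict mapping each expert label to its agreement fraction.
    """
    totals = defaultdict(int)
    agreements = defaultdict(int)
    for expert_label, tool_label in pairs:
        totals[expert_label] += 1
        if expert_label == tool_label:
            agreements[expert_label] += 1
    return {label: agreements[label] / totals[label] for label in totals}


# Hypothetical toy data: (expert panel call, automated tool call).
example_calls = [
    ("P", "P"), ("P", "P"),
    ("B", "B"),
    ("VUS", "LP"), ("VUS", "VUS"), ("VUS", "LB"), ("VUS", "LP"),
]

for label, fraction in concordance_by_class(example_calls).items():
    print(label, fraction)
```

Reporting agreement separately for each expert class, rather than as one overall accuracy, makes a weakness that is confined to VUS visible even when overall agreement looks high.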

Emerging AI models like popEVE attempt to bridge this gap by predicting variant pathogenicity through integrated evolutionary and population data. In testing, this model successfully distinguished pathogenic from benign variants and identified 123 previously unknown genes linked to developmental disorders [13]. However, even advanced models require further validation before they can independently support clinical decisions without functional confirmation.

Key Failure Points of Computational Prediction

Computational tools face several specific challenges that limit their clinical utility:

Over-reliance on Population Frequency Data: Tools often overweight population allele frequency in isolation from functional context, which can lead them to misclassify ultra-rare variants that are truly pathogenic [12].

Inadequate Handling of Conflicting Evidence: When pathogenic and benign evidence coexists, automated systems struggle with balanced interpretation, frequently defaulting to VUS classifications rather than nuanced assessment [10] [11].

Limited Incorporation of Functional Evidence: Most automated tools insufficiently integrate functional data from transcriptomic, proteomic, or metabolic studies, creating interpretation gaps that persist without experimental validation [14].

The evolution toward quantitative, points-based systems in ACMG V4 guidelines represents a positive shift, but simultaneously highlights the need for more sophisticated computational approaches that can incorporate diverse evidence types, including functional data [11].

Experimental Pathways for Functional Validation

Single-Cell Multi-Omic Validation Workflow

Functional validation bridges the gap between computational prediction and biological impact. Single-cell DNA–RNA sequencing (SDR-seq) represents a cutting-edge approach that simultaneously profiles genomic DNA loci and gene expression in thousands of single cells [15]. This method enables accurate determination of variant zygosity alongside associated gene expression changes, providing direct evidence of functional impact.

Diagram: SDR-seq Functional Validation Workflow

Experimental Protocol: SDR-seq for Variant Functional Phenotyping

Cell Preparation and Fixation

- Prepare single-cell suspension from target tissue or cell line

- Fix cells using glyoxal (superior to paraformaldehyde, PFA, for nucleic acid preservation)

- Permeabilize cells to enable reagent access [15]

In Situ Reverse Transcription

- Perform reverse transcription using custom poly(dT) primers

- Add unique molecular identifiers (UMIs), sample barcodes (BCs), and capture sequences (CSs) to cDNA molecules

- Maintain cell integrity throughout process [15]

Droplet-Based Partitioning and Amplification

- Load cells onto microfluidics platform (e.g., Tapestri from Mission Bio)

- Generate first droplet emulsion followed by cell lysis and proteinase K treatment

- Perform multiplexed PCR with target-specific primers in droplets

- Amplify both gDNA and RNA targets simultaneously [15]

Library Preparation and Sequencing

- Separate gDNA and RNA libraries using distinct overhangs on reverse primers

- Prepare sequencing libraries optimized for each data type

- Sequence using appropriate NGS platforms [15]

Data Integration and Analysis

- Process gDNA data to determine variant zygosity with high confidence

- Analyze RNA data for gene expression changes linked to genotypes

- Correlate specific variants with functional transcriptional outcomes [15]
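The data integration step can be sketched as two small functions: one calls zygosity per cell from reference and alternate read counts, and one averages a target gene's UMI count within each genotype group. This is an illustration only. The depth and allele-fraction thresholds, the field names, and the toy data are assumptions, not values from the SDR-seq protocol [15].

```python
from statistics import mean


def call_zygosity(ref_reads, alt_reads, min_depth=10, het_low=0.2, het_high=0.8):
    """Call a per-cell genotype from allele read counts (thresholds are assumed)."""
    depth = ref_reads + alt_reads
    if depth < min_depth:
        return "no_call"  # too few reads to genotype this cell
    alt_fraction = alt_reads / depth
    if alt_fraction < het_low:
        return "hom_ref"
    if alt_fraction > het_high:
        return "hom_alt"
    return "het"


def mean_expression_by_genotype(cells):
    """Average the target gene's UMI count across cells sharing a genotype."""
    groups = {}
    for cell in cells:
        genotype = call_zygosity(cell["ref"], cell["alt"])
        if genotype == "no_call":
            continue
        groups.setdefault(genotype, []).append(cell["umi_count"])
    return {genotype: mean(counts) for genotype, counts in groups.items()}


# Hypothetical cells: allele read counts at one locus plus UMIs for one gene.
toy_cells = [
    {"ref": 30, "alt": 0, "umi_count": 12},
    {"ref": 28, "alt": 1, "umi_count": 10},
    {"ref": 15, "alt": 14, "umi_count": 6},
    {"ref": 12, "alt": 16, "umi_count": 8},
    {"ref": 0, "alt": 25, "umi_count": 1},
    {"ref": 3, "alt": 2, "umi_count": 9},  # depth 5, excluded as no_call
]

print(mean_expression_by_genotype(toy_cells))
```

A real analysis would also need to model allelic dropout and test the expression differences statistically. The sketch only shows the shape of the genotype-to-expression link.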

This methodology enables researchers to confidently associate coding and noncoding variants with distinct gene expression patterns in their endogenous genomic context, providing direct functional evidence that surpasses computational prediction alone.

Research Reagent Solutions for Functional Studies

Table 2: Essential Research Reagents for Functional Validation

| Reagent / Tool | Function | Application in Validation |
|---|---|---|
| SDR-seq Platform | Simultaneous DNA+RNA profiling | Links genotype to phenotype at single-cell level |
| Hybridization Capture Panels | Target enrichment for specific genomic regions | Enables focused analysis of candidate variants |
| CRISPR-Cas9 Systems | Precise genome editing | Creates isogenic controls for functional comparison |
| ADAR-Based RNA Editing | Reversible RNA modification | Assesses impact of specific RNA changes without DNA alteration |
| REVEL Algorithm | Ensemble variant pathogenicity prediction | Provides pre-validation prioritization of variants for functional study |

The selection of appropriate research reagents critically impacts the success of functional validation studies. The REVEL algorithm has emerged as a preferred in silico prediction tool, recommended in the upcoming ACMG V4 guidelines, providing consistent computational evidence to prioritize variants for functional analysis [11]. For experimental validation, single-cell multi-omics platforms like SDR-seq enable comprehensive functional phenotyping by linking variant zygosity to transcriptional consequences in thousands of individual cells simultaneously [15].

Advanced gene editing tools, particularly CRISPR-Cas systems, facilitate the creation of isogenic cell lines that differ only by the variant of interest, enabling controlled functional comparisons [16]. Meanwhile, RNA editing technologies utilizing ADAR enzymes offer reversible modulation of genetic information, allowing researchers to test the functional impact of specific changes without permanent genomic alteration [16]. These reagents collectively form a toolkit for comprehensive functional validation that extends beyond computational prediction.

Integrating Functional Evidence into Classification Frameworks

The transition toward more quantitative variant classification frameworks creates opportunities for tighter integration of functional evidence. The upcoming ACMG V4 guidelines introduce a points-based system that allows for more nuanced interpretation and better accommodation of functional data [11]. This evolution addresses key limitations of previous versions by enabling more granular distinctions within criteria and facilitating the balancing of pathogenic and benign evidence [11].

Functional validation studies directly support several specific ACMG/AMP criteria:

PS3/BS3 (Functional Data): Evidence from SDR-seq or other functional assays provides direct support for these criteria, with quantitative data strengthening the evidence level [12].

PM1 (Variant Location): Functional studies can confirm whether variants in mutational hotspots or critical domains actually disrupt protein function [12].

PP1/BS4 (Segregation Evidence): Functional data can strengthen or weaken familial segregation evidence by providing mechanistic explanations [11].
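A toy calculation shows how a points-based scheme lets functional evidence move a variant out of the VUS range. The point values and category thresholds below are assumptions borrowed from the Bayesian points scale published by Tavtigian and colleagues in 2020. The final ACMG V4 values may differ, and the example variant and its evidence list are hypothetical.

```python
# Assumed points per evidence strength (not confirmed ACMG V4 values).
POINTS = {"supporting": 1, "moderate": 2, "strong": 4, "very_strong": 8}


def total_points(evidence):
    """Sum signed points; pathogenic evidence adds, benign evidence subtracts.

    evidence: list of (criterion, direction, strength) tuples.
    """
    total = 0
    for _criterion, direction, strength in evidence:
        if direction not in ("pathogenic", "benign"):
            raise ValueError(f"unknown direction: {direction}")
        sign = 1 if direction == "pathogenic" else -1
        total += sign * POINTS[strength]
    return total


def classify(points):
    """Map a point total to a category using the assumed thresholds."""
    if points >= 10:
        return "Pathogenic"
    if points >= 6:
        return "Likely pathogenic"
    if points >= 0:
        return "Uncertain significance"
    if points >= -6:
        return "Likely benign"
    return "Benign"


# Hypothetical variant before and after a functional assay result (PS3).
before = [
    ("PM1", "pathogenic", "moderate"),
    ("PM2", "pathogenic", "supporting"),
    ("PP3", "pathogenic", "supporting"),
]
after = before + [("PS3", "pathogenic", "strong")]

print(total_points(before), classify(total_points(before)))
print(total_points(after), classify(total_points(after)))
```

In this example the variant starts at 4 points, inside the uncertain range, and a strong functional result raises it to 8 points. Because benign evidence carries negative points, conflicting evidence is balanced by simple addition rather than by case-by-case combination rules.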

Recent research demonstrates that systematic integration of functional evidence significantly improves classification accuracy. The ClinGen-Revised guidelines, which incorporate more structured functional evidence assessment, achieved accuracy approximately 24 percentage points higher than the ACMG-2015 criteria (89.2% versus 65.6%) [12]. This improvement was particularly notable for variants with conflicting computational predictions, where functional data helped resolve classification uncertainties.

Computational prediction tools built upon ACMG/AMP guidelines have transformed variant interpretation, but their limitations in handling variants of uncertain significance necessitate a complementary approach incorporating functional validation. The evolving landscape of variant interpretation—with updated guidelines, advanced functional assays, and integrated AI models—points toward a future where computational prediction and experimental validation work synergistically.

For researchers and clinicians, this integrated approach offers the most robust pathway for resolving ambiguous variants and advancing precision medicine. Functional studies provide the critical biological context needed to transform computational predictions into clinically actionable knowledge, particularly for rare variants and those with conflicting evidence. As single-cell multi-omics and gene editing technologies continue to advance, their systematic integration with computational tools will be essential for unlocking the full potential of genomic medicine.

The post-genomic era has generated an unprecedented volume of genetic data, with genome-wide association studies (GWAS) identifying thousands of genetic variants associated with human diseases and traits. However, a significant challenge persists: the majority of disease-associated variants are merely correlated with disease states rather than proven to be causal. This correlation-causation gap represents a critical bottleneck in translating genetic discoveries into mechanistic biological insights and therapeutic applications. Functional evidence provides the essential experimental bridge that connects genetic associations to biological mechanisms, enabling researchers to move beyond statistical links to demonstrate how specific genetic variants directly influence molecular pathways, cellular functions, and ultimately, phenotypic expression. This guide objectively compares the performance of current methodologies for generating functional evidence, providing researchers with a structured framework for selecting appropriate strategies based on their specific research contexts and objectives.

Comparative Analysis of Functional Validation Approaches

The following analysis compares the performance, applications, and limitations of predominant methodologies used in functional genomics, synthesizing data from recent studies and technological assessments.

Table 1: Comparison of Major Functional Validation Approaches for Genetic Variants

| Methodology | Key Applications | Throughput | Key Strengths | Major Limitations | Supporting Evidence |
|---|---|---|---|---|---|
| In vitro functional assays (Western blot, luciferase reporter, immunofluorescence) | Characterization of coding variant effects on protein function and signaling pathways | Low to medium | Direct measurement of protein and pathway activity; well-established protocols; quantitative results | May oversimplify complex cellular environments; lower throughput; requires variant-specific assay development | LRP6 variant study demonstrated impaired β-catenin expression and reduced TCF/LEF transcriptional activity [17] |
| Single-cell multi-omics (SDR-seq) | Simultaneous profiling of DNA variants and transcriptomic consequences in single cells | High | Links genotype to gene expression at single-cell resolution; captures cellular heterogeneity; works in primary patient samples | Higher technical complexity; substantial computational requirements; expensive per sample | Simultaneous measurement of 480 genomic DNA loci and genes in thousands of single cells [15] |
| Computational prediction (in silico tools) | Prioritization of potentially deleterious variants from large datasets | Very high | Extremely scalable; low cost; rapid results for variant prioritization | Predictive rather than demonstrative; variable accuracy; limited to predefined parameters | 13-tool pipeline identified deleterious missense SNPs in RAAS genes; requires experimental validation [18] |
| CRISPR-based screening | High-throughput functional assessment of coding and non-coding variants | High | Endogenous genomic context; massive parallelization; precise editing | Potential off-target effects; variable editing efficiency; complex experimental design | Enables precise editing and interrogation of gene function in health and disease [14] |

Detailed Experimental Protocols for Key Methodologies

In vitro Functional Characterization of Coding Variants

The protocol for functional characterization of missense and truncating variants in the LRP6 gene provides a robust template for studying coding variants in disease contexts [17]. This comprehensive approach employs multiple orthogonal methods to build compelling evidence for variant pathogenicity:

Whole-exome sequencing and variant identification: Genomic DNA is extracted from patient peripheral blood samples using commercial kits (e.g., Beijing Tiangen Biochemical Technology). Libraries are prepared and sequenced on platforms such as the Illumina NovaSeq 6000. Variants are filtered by population frequency (<0.01 in population databases) and by predicted pathogenicity using tools like SIFT, PolyPhen-2, and MutationTaster [17].

Subcellular localization analysis: Immunofluorescence microscopy is performed to determine whether variants alter protein trafficking and cellular distribution. Cells transfected with wild-type or variant constructs are fixed, permeabilized, and incubated with primary antibodies against the target protein, followed by fluorophore-conjugated secondary antibodies. Nuclei are counterstained with DAPI, and localization patterns are visualized by confocal microscopy [17].

Western blot analysis of signaling pathways: Protein lysates are separated by SDS-PAGE, transferred to membranes, and probed with antibodies against pathway components (e.g., β-catenin for WNT signaling). Detection is performed using chemiluminescent substrates, with quantification of band intensity normalized to loading controls [17].

Dual-luciferase reporter assays: The TOP-Flash/FOP-Flash system is used to measure TCF/LEF transcriptional activity as a readout of WNT/β-catenin pathway function. Cells are co-transfected with variant constructs and reporter plasmids, followed by lysis and measurement of firefly and Renilla luciferase activities. Results are expressed as TOP/FOP flash ratios to quantify pathway activity [17].
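The arithmetic behind the dual-luciferase readout is short enough to sketch. Firefly signal is first normalized to Renilla signal within each well, the normalized TOP-Flash value is divided by the normalized FOP-Flash value, and the variant's ratio is then expressed relative to wild type. The function names and luminescence readings below are hypothetical.

```python
def normalized_activity(firefly, renilla):
    """Firefly signal corrected for transfection efficiency via Renilla."""
    return firefly / renilla


def top_fop_ratio(top_well, fop_well):
    """TOP/FOP ratio from two (firefly, renilla) readings."""
    return normalized_activity(*top_well) / normalized_activity(*fop_well)


def relative_to_wild_type(variant_ratio, wild_type_ratio):
    """Express a variant's pathway activity as a fraction of wild type."""
    return variant_ratio / wild_type_ratio


# Hypothetical raw luminescence readings: (firefly, renilla).
wild_type = top_fop_ratio(top_well=(80000, 2000), fop_well=(4000, 2000))
variant = top_fop_ratio(top_well=(30000, 2000), fop_well=(4000, 2000))

print(wild_type)
print(variant)
print(relative_to_wild_type(variant, wild_type))
```

In this toy case the wild-type ratio is 20, the variant ratio is 7.5, and the variant retains 37.5% of wild-type activity. In practice each condition would be measured in replicate wells and compared statistically.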

Single-cell DNA-RNA Sequencing (SDR-seq) for Unified Genotype-Phenotype Analysis

The SDR-seq protocol enables simultaneous assessment of genetic variants and their transcriptional consequences in thousands of single cells [15]:

Cell preparation and fixation: Cells are dissociated into single-cell suspensions and fixed with either paraformaldehyde (PFA) or glyoxal. Glyoxal fixation provides superior RNA recovery due to reduced nucleic acid cross-linking [15].

In situ reverse transcription: Fixed and permeabilized cells undergo reverse transcription using custom poly(dT) primers containing unique molecular identifiers (UMIs), sample barcodes, and capture sequences, effectively labeling each cDNA molecule with its cellular origin [15].

Droplet-based partitioning and amplification: Cells are loaded onto microfluidic platforms (e.g., Mission Bio Tapestri) where they are encapsulated into droplets with barcoding beads. Following lysis, a multiplexed PCR simultaneously amplifies targeted genomic DNA loci and cDNA molecules, with cell barcoding achieved through complementary capture sequence overhangs [15].

Library preparation and sequencing: gDNA and RNA amplicons are separated using distinct overhangs on reverse primers (R2N for gDNA, R2 for RNA) and converted into sequencing libraries. This allows optimized sequencing conditions for each data type: full-length coverage for gDNA variants and transcript-barcode information for RNA targets [15].
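Once RNA reads carry a cell barcode and a UMI, expression is typically quantified by counting distinct UMIs per gene within each cell, so that PCR duplicates are counted once. The sketch below assumes reads have already been parsed into (cell barcode, gene, UMI) tuples. It uses exact-match deduplication with no UMI error correction and does not represent the published SDR-seq pipeline.

```python
from collections import defaultdict


def count_umis(reads):
    """Count distinct UMIs per (cell, gene).

    reads: iterable of (cell_barcode, gene, umi) tuples.
    Returns {cell_barcode: {gene: number_of_unique_umis}}.
    """
    seen = defaultdict(set)
    for cell_barcode, gene, umi in reads:
        seen[(cell_barcode, gene)].add(umi)  # duplicates collapse in the set

    counts = defaultdict(dict)
    for (cell_barcode, gene), umis in seen.items():
        counts[cell_barcode][gene] = len(umis)
    return dict(counts)


# Hypothetical parsed reads; the second read is a PCR duplicate of the first.
toy_reads = [
    ("CELL01", "GENE_A", "AACG"),
    ("CELL01", "GENE_A", "AACG"),
    ("CELL01", "GENE_A", "TTGC"),
    ("CELL01", "GENE_B", "AACG"),
    ("CELL02", "GENE_A", "GGTA"),
]

print(count_umis(toy_reads))
```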

Computational Prediction Pipelines for Variant Prioritization

Large-scale functional studies typically begin with computational prioritization to identify the most promising candidates from thousands of variants:

Multi-tool consensus approach: Variants are analyzed through a pipeline of 13 computational tools including SIFT, PolyPhen-2, and MutationTaster to predict deleterious effects. Variants consistently classified as damaging across multiple tools receive higher priority [18].

Protein stability and conservation analysis: Tools such as I-Mutant 3.0, MUpro, and DynaMut2 predict impacts on protein stability, while ConSurf evaluates evolutionary conservation of variant positions [18].

Structural modeling and analysis: Project HOPE and similar tools model variant effects on protein structure, assessing changes in charge, size, hydrophobicity, and secondary structure elements [18].

Functional annotation with aggregator tools: Ensembl Variant Effect Predictor (VEP) and ANNOVAR provide comprehensive annotation of variants, mapping them to genomic features and integrating functional predictions from multiple databases [19].
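The consensus idea can be sketched as a filter over variants whose predictions have already been collected from each tool. The code does not call SIFT, PolyPhen-2, or MutationTaster; their names appear only as dictionary keys. The 0.01 frequency cutoff mirrors the filter described earlier in this article. The 0.75 consensus threshold, the field names, and the toy variants are assumptions made for illustration.

```python
def consensus_score(predictions):
    """Fraction of tools with a call that predict the variant as damaging.

    predictions: dict mapping tool name to True, False, or None (no call).
    Returns None when no tool produced a call.
    """
    calls = [call for call in predictions.values() if call is not None]
    if not calls:
        return None
    return sum(calls) / len(calls)


def prioritize(variants, max_allele_frequency=0.01, min_consensus=0.75):
    """Keep rare variants with high tool agreement, best score first."""
    kept = []
    for variant in variants:
        score = consensus_score(variant["predictions"])
        if score is None:
            continue
        if variant["allele_frequency"] < max_allele_frequency and score >= min_consensus:
            kept.append((variant["id"], score))
    return sorted(kept, key=lambda item: item[1], reverse=True)


# Hypothetical variants with pre-collected per-tool predictions.
toy_variants = [
    {"id": "var_A", "allele_frequency": 0.0002,
     "predictions": {"SIFT": True, "PolyPhen-2": True, "MutationTaster": True}},
    {"id": "var_B", "allele_frequency": 0.0005,
     "predictions": {"SIFT": True, "PolyPhen-2": False, "MutationTaster": None}},
    {"id": "var_C", "allele_frequency": 0.05,
     "predictions": {"SIFT": True, "PolyPhen-2": True, "MutationTaster": True}},
    {"id": "var_D", "allele_frequency": 0.001,
     "predictions": {"SIFT": True, "PolyPhen-2": True,
                     "MutationTaster": False, "tool_4": True}},
]

print(prioritize(toy_variants))
```

Scoring only the tools that returned a call keeps a missing prediction from being counted as a benign vote. The ranked output is a shortlist for experimental follow-up, not a classification.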

Visualizing Functional Genomics Workflows and Pathways

Integrated Functional Validation Pipeline for Genetic Variants

Diagram: Functional Genomics Validation Workflow

WNT/β-Catenin Signaling Pathway Impact

Diagram: WNT Signaling Pathway Disruption by LRP6 Variants

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Resources for Functional Genomics

| Resource Category | Specific Tools/Platforms | Primary Applications | Key Features/Benefits |
|---|---|---|---|
| Sequencing Technologies | Illumina NovaSeq X, Oxford Nanopore | Whole genome/exome sequencing, targeted sequencing | High-throughput, long-read capabilities, variant discovery [14] |
| Functional Annotation Databases | Ensembl VEP, ANNOVAR, DECIPHER | Variant effect prediction, clinical interpretation | Comprehensive annotation, integration with clinical data [19] [20] |
| Cell Line Resources | Human induced pluripotent stem cells (iPSCs) | Disease modeling, differentiation to relevant cell types | Patient-specific genetic background, reprogramming capability [15] |
| Gene Editing Tools | CRISPR-Cas9, base editing, prime editing | Precise variant introduction, functional screening | High precision, modularity, scalability [14] |
| Pathway Analysis Reagents | TOP/FOP-Flash luciferase system, pathway-specific antibodies | Signaling pathway assessment, protein quantification | Pathway-specific readouts, quantitative results [17] |
| Single-Cell Platforms | Mission Bio Tapestri, 10x Genomics | Single-cell multi-omics, cellular heterogeneity analysis | Combined DNA-RNA profiling, high cellular throughput [15] |
| Computational Resources | DeepVariant, FINEMAP, SuSiE | Variant calling, statistical fine-mapping, prioritization | AI-powered accuracy, Bayesian inference frameworks [14] [21] |

The evolving landscape of functional genomics presents researchers with multiple validated pathways for connecting genetic variants to disease mechanisms. The most robust conclusions emerge not from reliance on a single methodology, but from the strategic integration of complementary approaches: computational predictions to prioritize candidates, single-cell technologies to capture cellular context, and targeted functional assays to establish mechanistic causality. As noted in recent assessments of variant classification, functional evidence represents "unprecedented value for genomic diagnostics" yet challenges remain in standardized application and interpretation [22]. The continuing development of higher-throughput functional assays, more sophisticated computational predictions, and unified multi-omics platforms promises to further accelerate the transformation of genetic correlations into validated biological mechanisms with therapeutic potential.

The interpretation of genetic variants represents a significant challenge in modern genomics, particularly with the proliferation of data from Whole Genome Sequencing (WGS) and Genome-Wide Association Studies (GWAS) [19]. While over 90% of disease-associated variants from GWAS are located in non-coding regions, their functional impact is often elusive [15]. Functional assays provide the critical bridge between genetic observation and biological understanding, enabling researchers to move beyond correlation to establish causal relationships between variants and phenotypic outcomes. For drug development professionals and researchers, the strategic selection and implementation of these assays directly impact the efficiency of target validation, the prediction of clinical trial success, and the understanding of disease mechanisms. This guide provides a comparative analysis of the current technological landscape for functional validation, offering detailed methodological insights and performance data to inform evidence-based decision-making in genetic research and therapeutic development.

The Validation Spectrum: From Clinical Correlation to Definitive Assays

The confidence in variant pathogenicity spans a continuum, from initial clinical observations to definitive functional proof. The table below outlines this spectrum, highlighting the types of evidence and their respective strengths and limitations.

Table: The Spectrum of Evidence for Variant Validation

| Evidence Level | Description | Key Strengths | Principal Limitations |

|---|---|---|---|

| Clinical Correlation | Statistical associations from patient cohorts (e.g., GWAS, family studies). | Identifies variants of potential clinical relevance; provides population-level data. | Cannot establish causality; often confounded by linkage disequilibrium and population structure [19]. |

| Computational Prediction | In silico assessment of variant impact using bioinformatic tools (e.g., VEP, ANNOVAR). | High-throughput; cost-effective for initial variant prioritization [19]. | Prone to false positives/negatives; provides predictions, not empirical evidence. |

| Intermediate Functional Data | Evidence from non-native systems (e.g., reporter assays, heterologous overexpression). | Can isolate specific molecular functions (e.g., promoter activity); scalable. | May lack the native genomic and cellular context; results can be misleading [15]. |

| Definitive Functional Assays | Direct measurement of gene product function in a biologically relevant environment. | Provides direct, mechanistic evidence; highly specific for the disease mechanism. | Often lower throughput; requires specialized expertise and validation [23] [24]. |

The following diagram illustrates the logical workflow for progressing through these evidence levels to achieve validated status for a genetic variant.

Comparative Analysis of Functional Assay Technologies

Established vs. Emerging Functional Assay Platforms

The field of functional genomics utilizes a diverse array of platforms, each with specific applications and performance characteristics. The following table provides a structured comparison of key technologies.

Table: Comparative Performance of Functional Assay Platforms

| Assay Platform | Primary Application | Throughput | Key Performance Metrics | Regulatory Acceptance |

|---|---|---|---|---|

| Classical Cell-Based | Protein function, signaling pathways (e.g., cell invasion, aggregation). | Low to Medium | Varies by specific assay; requires strict validation (replicates, controls) [23]. | Used by multiple VCEPs (e.g., CDH1, RASopathy); accepted with strong validation [24]. |

| CRISPR Screens | High-throughput gene disruption to identify essential genes. | Very High | Functional hit identification; depends on gRNA efficiency and coverage. | Emerging; not yet standard for single-variant interpretation. |

| Massively Parallel Reporter Assays (MPRAs) | High-throughput testing of non-coding variant effects on gene regulation. | Very High | Effects on transcriptional activation/repression. | Limited for clinical interpretation due to episomal, non-native context [15]. |

| Single-Cell DNA-RNA Sequencing (SDR-seq) | Linking genotype to phenotype at single-cell resolution for coding/non-coding variants. | Medium | High multiplexing (up to 480 gDNA and RNA targets), >80% of gDNA targets detected in >80% of cells, low cross-contamination (gDNA <0.16%, RNA 0.8-1.6% on average) [15]. | Emerging as a powerful method for endogenous variant phenotyping. |

Quantitative Assay Performance Metrics

Robust validation of any functional assay requires the calculation of specific performance metrics to ensure reliability and reproducibility. The table below defines key parameters used in assay validation.

Table: Key Performance Metrics for Functional Assay Validation

| Metric | Formula/Definition | Interpretation | Optimal Value |

|---|---|---|---|

| Z′ Factor | \( Z' = 1 - \frac{3(\sigma_{sample} + \sigma_{control})}{\lvert \mu_{sample} - \mu_{control} \rvert} \) | Measure of assay quality and separation between positive/negative controls [25]. | > 0.5 is excellent; > 0 is acceptable. |

| Signal Window (SW) | \( SW = \frac{\lvert \mu_{sample} - \mu_{control} \rvert}{\sqrt{\sigma_{sample}^2 + \sigma_{control}^2}} \) | Dynamic range between controls, normalized for variability. | Larger values indicate better separation. |

| Assay Variability Ratio (AVR) | Related to the coefficient of variation. | Measure of assay precision. | Smaller values indicate lower variability. |
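As a worked illustration of the first two metrics, the following minimal Python sketch computes the Z′ factor and the signal window exactly as they are defined in the table above. The function names and the plate readouts are hypothetical and chosen only for illustration; they are not data from the cited studies.

```python
from statistics import mean, stdev


def z_prime(sample, control):
    """Z' = 1 - 3 * (sd_sample + sd_control) / |mean_sample - mean_control|."""
    separation = abs(mean(sample) - mean(control))
    if separation == 0:
        raise ValueError("means are identical; Z' is undefined")
    return 1 - 3 * (stdev(sample) + stdev(control)) / separation


def signal_window(sample, control):
    """SW as defined in the table: |mean difference| / sqrt(sd_s^2 + sd_c^2)."""
    pooled_sd = (stdev(sample) ** 2 + stdev(control) ** 2) ** 0.5
    return abs(mean(sample) - mean(control)) / pooled_sd


# Hypothetical readouts in arbitrary units (illustrative only).
positive_wells = [98.0, 102.0, 100.0, 101.0, 99.0]
negative_wells = [10.0, 12.0, 11.0, 9.0, 13.0]

zp = z_prime(positive_wells, negative_wells)
sw = signal_window(positive_wells, negative_wells)
print(f"Z' = {zp:.3f}, SW = {sw:.1f}")
```

Both functions use the sample standard deviation; an assay with few control wells per plate will give noisy estimates, so the metrics are best computed across several runs.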

Detailed Experimental Protocols

SDR-seq for Endogenous Variant Phenotyping

Single-cell DNA–RNA sequencing (SDR-seq) is a cutting-edge method that simultaneously profiles genomic DNA loci and transcriptomes in thousands of single cells, enabling accurate determination of variant zygosity alongside associated gene expression changes [15]. The detailed workflow is as follows.

Key Materials and Reagents:

- Cells: Fixed and permeabilized human induced pluripotent stem (iPS) cells or primary patient cells (e.g., B cell lymphoma).

- Fixative: Glyoxal (recommended over PFA for superior RNA target detection and UMI coverage) [15].

- Primers: Custom panels for gDNA loci (e.g., 480 targets) and RNA transcripts. Reverse primers contain distinct overhangs (R2N for gDNA, R2 for RNA).

- Enzymes: Reverse transcriptase, proteinase K, thermostable DNA polymerase.

- Platform: Tapestri microfluidics system (Mission Bio) for droplet generation and barcoding.

Critical Steps and Validation Parameters:

- Panel Design: Include shared gDNA and RNA targets across panels of different sizes (e.g., 120, 240, 480-plex) for cross-panel performance comparison.

- Species-Mixing Control: Perform a control experiment mixing human and mouse cells (e.g., WTC-11 iPS and NIH-3T3) to quantify and account for ambient RNA cross-contamination, which can be mitigated using sample barcode information [15].

- Data Analysis: After sequencing, separate gDNA and RNA reads based on their overhangs. Filter high-quality cells and remove doublets using sample barcode information. For gDNA analysis, expect >80% of targets detected in >80% of cells. For RNA, expression levels should correlate highly with bulk RNA-seq data (e.g., R² > 0.9) [15].
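The gDNA benchmark above (more than 80% of targets detected in more than 80% of cells) can be checked with a few lines of Python. This is a minimal sketch that assumes a cell-by-target matrix of read counts has already been produced upstream; the function name, the `min_reads` cutoff, and the toy matrix are assumptions for illustration, not part of the published pipeline.

```python
def fraction_of_targets_passing(counts, min_reads=1, cell_fraction=0.8):
    """Return the fraction of targets detected in more than `cell_fraction` of cells.

    counts: list of per-cell lists; counts[c][t] is the read count of target t in cell c.
    A target counts as detected in a cell when it has at least `min_reads` reads.
    """
    n_cells = len(counts)
    n_targets = len(counts[0])
    passing = 0
    for t in range(n_targets):
        detected_cells = sum(1 for cell in counts if cell[t] >= min_reads)
        if detected_cells / n_cells > cell_fraction:
            passing += 1
    return passing / n_targets


# Toy matrix: 5 cells x 4 gDNA targets (hypothetical counts).
toy_counts = [
    [5, 3, 0, 7],
    [2, 4, 0, 1],
    [6, 1, 0, 3],
    [1, 2, 1, 4],
    [3, 5, 0, 2],
]

passing_fraction = fraction_of_targets_passing(toy_counts)
meets_benchmark = passing_fraction > 0.8
print(passing_fraction, meets_benchmark)
```

In this toy example the third target drops out in most cells, so the panel falls short of the benchmark and that amplicon would be a candidate for redesign.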

ClinGen-Compliant Cell-Based Assay

For clinical variant interpretation, the ClinGen consortium has established guidelines for "well-established" functional assays. The following protocol outlines a general framework for a cell-based assay compliant with these standards.

Methodology:

- Assay Selection: Choose an assay that directly reflects the disease mechanism for the gene of interest (e.g., cell aggregation assay for CDH1, myristoylation assay for RASopathy genes) [23] [24].

- Vector Construction: Clone the wild-type and variant sequences into an appropriate expression vector. Prefer endogenous knock-in strategies over overexpression to maintain native genomic context [15].

- Cell Transfection: Use a relevant cell line and transfect with wild-type, variant, and empty vector controls. Include a known pathogenic variant as a positive control and a known benign variant as a negative control.

- Functional Readout: Perform the specific functional measurement (e.g., cell invasion, protein-protein interaction, signaling activity) with a minimum of three experimental replicates.

- Statistical Analysis: Compare variant activity to wild-type and control runs using pre-defined statistical thresholds (e.g., <30% residual activity for loss-of-function). The assay must demonstrate a clear separation between known pathogenic and benign controls [23].

Validation Parameters as per VCEPs:

- Replicates: A minimum of three independent experimental replicates.

- Controls: Inclusion of both positive (pathogenic) and negative (benign) controls in each run.

- Thresholds: Pre-specified, statistically robust thresholds for classifying abnormal function.

- Blinding: Ideally, perform experiments blinded to the variant's clinical status.
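The replicate, control, and threshold requirements can be expressed as a short analysis sketch. The Python below assumes activity readouts on a common scale and uses the example loss-of-function cutoff of 30% residual activity mentioned above; the function names, the replicate values, and the simple mean-ratio statistic are illustrative assumptions rather than a ClinGen-prescribed procedure.

```python
from statistics import mean


def residual_activity(variant_reps, wild_type_reps, min_replicates=3):
    """Mean variant readout as a fraction of the mean wild-type readout."""
    if min(len(variant_reps), len(wild_type_reps)) < min_replicates:
        raise ValueError(f"need at least {min_replicates} replicates per condition")
    return mean(variant_reps) / mean(wild_type_reps)


def run_is_valid(pathogenic_ctrl, benign_ctrl, wild_type, lof_threshold=0.30):
    """A run is usable only if the controls fall on opposite sides of the threshold."""
    return (residual_activity(pathogenic_ctrl, wild_type)
            < lof_threshold
            <= residual_activity(benign_ctrl, wild_type))


def classify(variant_reps, wild_type_reps, lof_threshold=0.30):
    activity = residual_activity(variant_reps, wild_type_reps)
    if activity < lof_threshold:
        return activity, "abnormal (loss of function)"
    return activity, "not abnormal at this threshold"


# Hypothetical readouts from three independent replicates (arbitrary units).
wild_type = [100.0, 95.0, 105.0]
pathogenic_control = [10.0, 12.0, 8.0]
benign_control = [90.0, 100.0, 95.0]
test_variant = [20.0, 25.0, 15.0]

valid = run_is_valid(pathogenic_control, benign_control, wild_type)
activity, label = classify(test_variant, wild_type)
print(valid, round(activity, 2), label)
```

Fixing the threshold as a default argument before any variant is measured mirrors the requirement that thresholds be pre-specified rather than tuned after the fact.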

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful implementation of functional assays relies on a suite of critical reagents and platforms. The following table catalogs key solutions for the functional genomics researcher.

Table: Essential Research Reagent Solutions for Functional Genomics

| Tool Category | Specific Examples | Function in Validation |

|---|---|---|

| Bioinformatic Annotation Tools | Ensembl VEP, ANNOVAR [19] | Provides initial variant impact prediction and functional genomic context to prioritize variants for experimental testing. |

| Functional Antibodies | Antibodies for FACS, ELISA, Western Blot, Immunofluorescence (e.g., from Precision Antibody) [26] | Enable quantification of protein expression, localization, and post-translational modifications in cell-based assays. |

| CRISPR Reagents | Cas9 nucleases, base editors, gRNA libraries [14] | Facilitate precise genome editing for creating isogenic cell lines with specific variants for functional testing. |

| Cell-Based Assay Kits | Cell invasion, reporter gene, protein-protein interaction kits | Provide standardized, off-the-shelf systems for measuring specific molecular functions relevant to disease mechanisms. |

| Multi-Omic Profiling Platforms | SDR-seq [15], Single-cell RNA-seq, ATAC-seq | Allow for the integrated measurement of multiple molecular layers (DNA, RNA) from the same sample or single cell. |

The landscape of functional assay development is rapidly evolving to address the critical need for validating the deluge of genetic variants identified in sequencing studies. While classical cell-based assays, when rigorously validated, remain the gold standard for clinical interpretation by expert panels like ClinGen [23] [24], emerging technologies are pushing the boundaries of scale and resolution. SDR-seq represents a significant advance by enabling the simultaneous readout of hundreds of genomic loci and the transcriptome in single cells, directly linking genotype to phenotype in an endogenous context and for both coding and non-coding variants [15].

The future of functional validation lies in the integration of these advanced technologies with artificial intelligence and machine learning to handle the scale and complexity of genomic data [14]. Furthermore, the emphasis on standardized performance metrics, such as the Z′ factor, and adherence to regulatory guidelines will be paramount for ensuring that functional data reliably informs drug development and clinical decision-making [26] [25]. By strategically selecting from the spectrum of available assays—from clinical correlation to definitive functional tests—researchers and drug developers can build a robust, evidence-based case for variant pathogenicity, ultimately accelerating the development of targeted therapies and precision medicine.

The Functional Genomics Toolbox: From Single-Cell Multi-omics to Genome-Wide Screens

The validation of genetic variants and their role in disease pathogenesis is a cornerstone of modern functional studies research. For years, this field has been hampered by models that rely on artificial overexpression systems, which can misrepresent protein stoichiometry, localization, and function. The integration of induced pluripotent stem cells (iPSCs) with CRISPR-based genome editing has emerged as a transformative solution, enabling the precise manipulation of genes within their native genomic and cellular context. This synergy allows for the creation of advanced cellular models that recapitulate patient-specific genetics and are isogenic, thereby isolating the functional impact of a single variant. This guide provides an objective comparison of the current platforms and methodologies for generating these models, focusing on their performance in validating genetic variants for research and drug development.

Technology Comparison: Editing Platforms and Their Performance

CRISPR-edited iPSC models are developed using various editing strategies, each with distinct strengths and applications in functional genomics. The table below compares the core technologies.

Table 1: Comparison of CRISPR Genome Editing Platforms for iPSC Engineering

| Editing Platform | Primary Editing Outcome | Key Advantage | Typical Efficiency in iPSCs | Ideal Application in Functional Studies |

|---|---|---|---|---|

| CRISPR-Cas9 Nuclease [27] | Insertions/Deletions (Indels) causing gene knockout | Simplicity; effective for complete gene disruption | Variable; highly dependent on guide RNA design [28] | Initial gene-disease linkage studies and pathway analysis [28] |

| CRISPR-Cas9 HDR [29] | Introduction of specific point mutations or small tags via Homology-Directed Repair | Precision; enables knock-in of specific variants | 25% to >90% (with optimized protocols) [29] | Precise modeling of single nucleotide polymorphisms (SNPs) and patient-specific mutations [30] [31] |

| Base Editing [32] | Direct conversion of one DNA base into another without double-stranded breaks | High efficiency; reduced indel byproducts | Not quantified in the cited source; reported as "high" | Introducing or correcting point mutations with minimal on-target artifacts |

| Prime Editing [32] | Versatile editing including all 12 possible base-to-base conversions, small insertions, and deletions | Unprecedented versatility without double-stranded breaks | Not quantified in the cited source; reported as "high" | Modeling complex mutations beyond single nucleotide changes |

| Multi-Guide "XDel" Strategy [28] | Deletion of a defined genomic fragment between two guide RNA sites | Highly consistent and reproducible knockouts; minimizes incomplete editing | >95% (on-target editing efficiency) [28] | Generating robust, high-confidence knockout pools for high-throughput screening |

Experimental Data and Protocol Comparison

The performance of a CRISPR-iPSC system is ultimately measured by its editing efficiency and the fidelity of the resulting model. The following table summarizes key experimental data and the methodologies used to obtain them.

Table 2: Summary of Experimental Data and Methodologies from Key Studies

| Study Focus / Application | Key Quantitative Result | Cell Line / Model Used | Critical Methodological Insight |

|---|---|---|---|

| High-Efficiency Point Mutation [29] | HDR efficiency increased from 4% to 25% (6-fold) and from 2.8% to 59.5% (21-fold) for different SNPs. | Human iPSCs (multiple lines) | Co-transfection with p53 shRNA plasmid and use of pro-survival small molecules (CloneR). |

| Endogenous Protein Tagging [31] | Successful C-terminal HA-tagging of endogenous α-synuclein (SNCA) without affecting neuronal electrophysiology. | Human iPSCs (healthy control line) | Use of C-terminal tagging strategy to preserve protein function and avoid degradation by-products. |

| Multi-Guide Knockout Efficiency [28] | Multi-guide (XDel) strategy showed significantly higher and more consistent on-target editing efficiency compared to single-guide RNAs across 7 target loci. | Immortalized and iPSC lines | Employing up to 3 sgRNAs for a single gene to induce a predictable fragment deletion. |

| Single-Cell Multi-Omic Editing Analysis [33] | CRAFTseq method enabled concurrent DNA, RNA, and protein (ADT) analysis in thousands of single cells, identifying genotype-dependent outcomes. | Primary human T cells and cell lines (Jurkat, Daudi) | Plate-based method for targeted genomic DNA sequencing alongside transcriptome and surface protein profiling. |

Detailed Experimental Protocol: High-Efficiency Knock-in

The following workflow, based on a highly efficient published method [29], details the steps for introducing a point mutation in iPSCs.

Key Protocol Steps:

- Cell Preparation: Use iPSCs maintained under feeder-free conditions in StemFlex or mTeSR Plus medium on Matrigel. Switch to cloning media (StemFlex with 1% RevitaCell and 10% CloneR) one hour before nucleofection [29].

- RNP Complex Formation: Combine synthetic modified sgRNA (e.g., from IDT) with a high-fidelity Cas9 nuclease (e.g., Alt-R S.p. HiFi Cas9 Nuclease V3) and incubate at room temperature for 20-30 minutes to form the ribonucleoprotein (RNP) complex [29] [28].

- Nucleofection Mix: The RNP complex is combined with a single-stranded oligonucleotide (ssODN) repair template, a fluorescent marker plasmid (e.g., pmaxGFP), and a plasmid for transient p53 knockdown (e.g., pCXLE-hOCT3/4-shp53-F) [29].

- Nucleofection and Recovery: Electroporation is performed using an appropriate nucleofector system. Cells are immediately plated in cloning media supplemented with a ROCK inhibitor to enhance survival [29].

- Validation and Subcloning: After expansion, editing efficiency is analyzed in the bulk cell pool using next-generation sequencing (NGS) and tools like ICE (Inference of CRISPR Edits) analysis. Successfully edited pools are then subcloned to generate single-cell clones, which are validated through karyotyping and pluripotency tests [29] [28].
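To make the bulk-pool validation step concrete, the sketch below tallies amplicon reads into intended-edit, wild-type, and other outcomes and reports the fold improvements quoted in Table 2. It is a deliberately simplified stand-in and not the ICE algorithm: it assumes reads have already been trimmed to the same amplicon window, and the sequences and function names are hypothetical.

```python
from collections import Counter


def summarize_editing(reads, wild_type, intended_edit):
    """Classify trimmed amplicon reads by exact match and return outcome fractions."""
    counts = Counter()
    for read in reads:
        if read == intended_edit:
            counts["hdr"] += 1
        elif read == wild_type:
            counts["wild_type"] += 1
        else:
            counts["other"] += 1  # indels, partial edits, sequencing errors
    total = sum(counts.values())
    return {key: counts[key] / total for key in ("hdr", "wild_type", "other")}


def fold_change(before_pct, after_pct):
    """Fold improvement in HDR efficiency between two protocol conditions."""
    return after_pct / before_pct


# Hypothetical 8-nt amplicon window with a single A>T knock-in at position 5.
WT = "ACGTACGT"
EDIT = "ACGTTCGT"
toy_reads = [EDIT] * 5 + [WT] * 3 + ["ACGTCGT"] * 2

outcome = summarize_editing(toy_reads, WT, EDIT)
print(outcome)

# Efficiencies reported for the optimized protocol in Table 2 [29].
print(fold_change(4.0, 25.0), fold_change(2.8, 59.5))
```

Exact-match classification is strict by design; a production analysis would align reads and tolerate sequencing errors outside the edit site.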

The Scientist's Toolkit: Essential Reagents and Solutions

Successful generation of CRISPR-edited iPSC models relies on a suite of specialized reagents. The table below details key solutions used in the featured experiments.

Table 3: Essential Research Reagent Solutions for CRISPR-iPSC Workflows

| Reagent / Solution | Function | Example Product / Component |

|---|---|---|

| High-Fidelity Cas9 Nuclease [29] | Reduces off-target editing effects while maintaining high on-target activity. | Alt-R S.p. HiFi Cas9 Nuclease V3 |

| Chemically Modified sgRNA [28] | Enhances stability and editing efficiency of the RNP complex. | Synthetic modified sgRNA (e.g., from IDT or EditCo's proprietary design) |

| Pro-Survival Supplement [29] | Improves cell survival post-nucleofection, critical for sensitive iPSCs. | CloneR (STEMCELL Technologies) |

| p53 Suppression Tool [29] | Transiently inhibits p53-mediated cell death in response to DNA double-strand breaks, dramatically boosting HDR efficiency. | pCXLE-hOCT3/4-shp53-F plasmid (Addgene #27077) |

| Cloning Media Supplement [29] | A defined supplement that improves cell recovery and survival after cloning and single-cell passage. | RevitaCell (Gibco) |

| NGS-Based QC Analysis Software [28] | A computational tool for analyzing sequencing data to determine the spectrum and frequency of indels in edited cell pools. | ICE (Inference of CRISPR Edits) Analysis (Synthego) |

Analysis of Model Applications in Key Disease Contexts

The combination of CRISPR and iPSCs is particularly powerful for studying complex diseases. The following diagram illustrates the logical workflow from gene editing to disease modeling and therapeutic discovery.

Specific Disease Contexts:

- Neurodegenerative Diseases (Alzheimer's, Parkinson's): CRISPR-iPSCs are used to introduce mutations in genes like APP, PSEN1, PSEN2, and SNCA to model disease pathology in derived neurons and glial cells [34] [32] [31]. These models recapitulate key features like Aβ accumulation, Tau hyperphosphorylation, and α-synuclein aggregation, providing platforms for drug screening [35].

- Monogenic Disorders (Xeroderma Pigmentosum, Thalassemia): Precisely engineered iPSCs, such as those with homozygous mutations in the XPA gene, create isogenic models for studying DNA repair defects [30]. Similarly, editing iPSCs to correct globin gene mutations offers a path for autologous cell therapy in thalassemia [36].

- Cancer Immunotherapy: iPSCs are edited using CRISPR to generate hypoimmunogenic CAR-T cells, where genes triggering immune rejection are knocked out. This creates an "off-the-shelf" cellular product that can evade the host immune system while maintaining anti-tumor activity [27].

The objective comparison of advanced cellular models reveals a clear trajectory towards highly precise, efficient, and functionally relevant systems. While standard CRISPR-Cas9 nuclease editing remains a powerful tool for gene knockout, newer methods like optimized HDR, base editing, and multi-guide deletion strategies offer researchers a refined toolkit for specific applications. The choice of platform depends critically on the experimental goal: knock-in for precise variant modeling or knockout for gene function studies. The experimental data underscores that protocol optimization—particularly the transient suppression of p53 and the use of pro-survival factors—is no longer optional but essential for achieving the high efficiencies required for robust functional studies. As these technologies continue to mature, they will undoubtedly solidify the role of endogenously contextualized iPSC models as the gold standard for validating genetic variants and accelerating therapeutic discovery.

The systematic study of how genetic variants influence gene expression and drive disease mechanisms has long been hampered by technological limitations. While over 95% of disease-associated variants occur in non-coding regions of the genome, existing single-cell methods have struggled to confidently link these variants to their functional consequences in the same cell [37] [38]. Single-cell DNA-RNA sequencing (SDR-seq) represents a transformative approach that enables simultaneous profiling of genomic DNA loci and gene expression in thousands of single cells, finally enabling researchers to determine variant zygosity alongside associated gene expression changes with high precision and scalability [15] [39]. This technological advancement provides a powerful platform for dissecting regulatory mechanisms encoded by genetic variants, advancing our understanding of gene expression regulation and its implications for human disease [15].

Technical Foundations of SDR-seq

Core Methodology and Workflow

SDR-seq combines in situ reverse transcription of fixed cells with a multiplexed PCR in droplets using Tapestri technology [15] [40]. The experimental workflow proceeds through several critical stages:

- Cell Preparation: Cells are dissociated into a single-cell suspension, fixed, and permeabilized. Fixation methods have been optimized, with glyoxal demonstrating advantages over paraformaldehyde due to reduced nucleic acid cross-linking, resulting in improved RNA target detection [15].

- In Situ Reverse Transcription: Custom poly(dT) primers perform reverse transcription, adding unique molecular identifiers (UMIs), sample barcodes, and capture sequences to cDNA molecules [15].

- Droplet Generation and Lysis: Single cells are encapsulated in first droplets using the Tapestri platform, then lysed and treated with proteinase K [15].

- Multiplexed PCR Amplification: A second droplet generation step incorporates reverse primers for each gDNA or RNA target, forward primers with capture sequence overhangs, PCR reagents, and barcoding beads with cell barcode oligonucleotides. A multiplexed PCR simultaneously amplifies both gDNA and RNA targets within each droplet [15].

- Library Preparation and Sequencing: Distinct overhangs on reverse primers enable separate sequencing library generation for gDNA and RNA, allowing optimized sequencing for each data type [15].

The following diagram illustrates the complete SDR-seq experimental workflow:

Key Technological Innovations

SDR-seq incorporates several innovations that address limitations of previous multi-omic methods:

- High-Sensitivity Dual Modality Detection: The platform achieves high coverage across both DNA and RNA targets, detecting 82% of gDNA targets in the vast majority of cells, with RNA expression levels varying across targets in line with expected patterns [15].

- Reduced Cross-Contamination: Species-mixing experiments demonstrated minimal cross-contamination between cells, with gDNA cross-contamination below 0.16% on average, and most ambient RNA contamination removable using sample barcode information [15].

- Accurate Zygosity Determination: Unlike previous droplet-based approaches that suffered from high allelic dropout rates (>96%), SDR-seq provides confident determination of whether a variant is present on one or both copies of a gene [15] [37].
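Zygosity determination ultimately rests on per-cell allele counts at each targeted locus. The following Python sketch shows one simple way such a call could be made from reference and alternate read counts. The depth cutoff and the allele-fraction boundaries are assumptions chosen for illustration and are not the thresholds used in the SDR-seq publication.

```python
def call_zygosity(ref_reads, alt_reads, min_depth=10, het_range=(0.2, 0.8)):
    """Call a per-cell genotype at one locus from allele-specific read counts.

    Returns "no_call" when coverage is too low to distinguish a true
    homozygote from allelic dropout.
    """
    depth = ref_reads + alt_reads
    if depth < min_depth:
        return "no_call"
    alt_fraction = alt_reads / depth
    if alt_fraction < het_range[0]:
        return "hom_ref"
    if alt_fraction > het_range[1]:
        return "hom_alt"
    return "het"


# Hypothetical (ref, alt) read counts for four cells at the same locus.
cells = {"cell_1": (40, 1), "cell_2": (22, 19), "cell_3": (0, 35), "cell_4": (3, 2)}
calls = {name: call_zygosity(ref, alt) for name, (ref, alt) in cells.items()}
print(calls)
```

The explicit "no_call" outcome matters: when one allele fails to amplify, a heterozygous cell looks homozygous, which is why low allelic dropout is a prerequisite for trusting the homozygous calls.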

Performance Comparison: SDR-seq vs. Alternative Methodologies

Technical Capabilities Comparison

The table below compares the key technical capabilities of SDR-seq against other single-cell multi-omic technologies:

Table 1: Technical capabilities comparison of SDR-seq versus alternative methodologies

| Technology | Max Targets | Variant Zygosity Detection | Non-Coding Variant Analysis | Throughput (Cells) | Key Advantages | Key Limitations |

|---|---|---|---|---|---|---|

| SDR-seq | 480 gDNA/RNA targets [15] | Accurate determination [15] | Comprehensive capability [37] | Thousands [15] | Endogenous context, scalable targeted approach | Targeted, not whole genome |

| Droplet-based scDNA+scRNA | Not specified | High ADO rates (>96%) [15] | Limited [15] | Thousands | Whole-genome capability | Cannot determine zygosity confidently |

| Perturb-seq | Genome-scale | Indirect via gRNAs [15] | Limited to CRISPR-targetable regions | Thousands | Genome-scale screening | Requires exogenous perturbation |

| Massively Parallel Reporter Assays | High throughput | Not applicable | Limited to constructed sequences [15] | N/A | High-throughput variant screening | Lacks endogenous genomic context |

Scalability and Sensitivity Performance

SDR-seq demonstrates remarkable scalability with only minimal sensitivity loss as panel size increases. Experimental testing with panels of 120, 240, and 480 targets (with equal gDNA and RNA targets) showed consistent performance across different panel sizes [15]:

Table 2: SDR-seq performance metrics across different panel sizes

| Performance Metric | 120-Panel | 240-Panel | 480-Panel | Experimental Details |

|---|---|---|---|---|

| gDNA Target Detection | >80% targets detected in >80% cells [15] | >80% targets detected in >80% cells [15] | >80% targets detected in >80% cells [15] | iPS cells, shared targets between panels |

| RNA Target Detection | High detection | Minor decrease vs. 120-panel [15] | Minor decrease vs. 120-panel [15] | Targets chosen based on expression level range |

| Cross-contamination (gDNA) | <0.16% on average [15] | <0.16% on average [15] | <0.16% on average [15] | Species-mixing experiment |

| Cross-contamination (RNA) | 0.8-1.6% on average [15] | 0.8-1.6% on average [15] | 0.8-1.6% on average [15] | Species-mixing experiment |
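The cross-contamination figures in the table come from species-mixing experiments, in which reads from the "wrong" species within a cell barcode indicate contamination. A minimal sketch of that calculation is shown below, assuming per-barcode counts of human-mapped and mouse-mapped reads are already available. The doublet cutoff and the toy counts are assumptions for illustration only.

```python
def mean_cross_contamination(cells, doublet_cutoff=0.2):
    """Average minority-species read fraction across singlet cell barcodes.

    cells: list of (human_reads, mouse_reads) tuples, one per barcode.
    Barcodes whose minority fraction exceeds `doublet_cutoff` are treated
    as likely human-mouse doublets and excluded from the average.
    """
    fractions = []
    for human, mouse in cells:
        total = human + mouse
        if total == 0:
            continue
        minority_fraction = min(human, mouse) / total
        if minority_fraction <= doublet_cutoff:
            fractions.append(minority_fraction)
    if not fractions:
        raise ValueError("no singlet barcodes with reads")
    return sum(fractions) / len(fractions)


# Hypothetical barcodes: two human cells, one mouse cell, one doublet.
toy_cells = [(990, 10), (5, 995), (500, 500), (1000, 0)]
contamination = mean_cross_contamination(toy_cells)
print(f"{contamination:.2%}")
```

Excluding doublets before averaging keeps genuine two-cell droplets from inflating the contamination estimate.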

Experimental Applications and Validation

Functional Phenotyping of Genetic Variants

In proof-of-concept experiments, SDR-seq successfully associated both coding and noncoding variants with distinct gene expression patterns in human induced pluripotent stem cells [15] [39]. The technology demonstrated particular strength in profiling primary patient samples, as evidenced by its application to B-cell lymphoma [15] [38]. In these primary samples, researchers discovered that cancer cells with higher mutational burden exhibited elevated B-cell receptor signaling and tumorigenic gene expression programs [37] [38]. This finding directly links specific variant profiles to disease-relevant cellular states, highlighting SDR-seq's ability to connect genotype to phenotype in clinically relevant contexts.

The diagram below illustrates how SDR-seq enables functional validation of genetic variants by linking genotype to cellular phenotype:

Comparison with Other Single-Cell Clustering Methods

While SDR-seq focuses on DNA-RNA multi-omic integration, other computational approaches have been developed for clustering single-cell data. A comprehensive benchmarking study evaluated 28 clustering algorithms across 10 paired transcriptomic and proteomic datasets [41]. The top-performing methods for transcriptomic data included scDCC, scAIDE, and FlowSOM, with these same methods also performing best for proteomic data, demonstrating their strong generalization across modalities [41]. This independent benchmarking provides context for where SDR-seq fits within the broader landscape of single-cell analysis tools, specializing in genotype-to-phenotype linking rather than general clustering tasks.

Implementation of SDR-seq requires several key reagents and computational resources, as detailed below:

Table 3: Essential research reagents and resources for SDR-seq implementation

| Category | Reagent/Resource | Specification/Function | Application Notes |

|---|---|---|---|

| Platform | Tapestri Technology (Mission Bio) | Microfluidic droplet generation | Enables single-cell encapsulation and barcoding [15] |

| Cell Preparation | Glyoxal fixative | Cell fixation without nucleic acid cross-linking | Superior to PFA for RNA target detection [15] |

| Primer Design | Custom poly(dT) primers | In situ reverse transcription with UMI, sample barcode | Critical for target-specific amplification [15] |

| Computational Tools | Custom barcode deconvolution | Decodes complex DNA barcoding system | Developed by Stegle group at EMBL [38] |

| Target Panels | Multiplexed PCR panels | Targeted amplification of genomic loci | Scalable to 480 total gDNA and RNA targets [15] |

Discussion and Future Perspectives

SDR-seq represents a significant advancement in single-cell multi-omic technology, specifically addressing the critical challenge of linking genetic variants to their functional consequences in the same cell. Its targeted approach provides a practical balance between scalability and sensitivity, enabling studies that were previously impossible due to technical limitations [15] [37].

The technology's ability to profile non-coding variants in their endogenous genomic context is particularly valuable, as these regions harbor the vast majority of disease-associated variants but have been notoriously difficult to study functionally [37] [38]. As noted by lead developer Dominik Lindenhofer, "In this non-coding space, we know there are variants related to things like congenital heart disease, autism, and schizophrenia that are vastly unexplored" [38]. SDR-seq directly addresses this exploration challenge.

For the research community, SDR-seq offers a powerful tool to advance our understanding of gene expression regulation and its implications for disease. According to senior author Lars Steinmetz, "This capability opens up a wide range of biology that we can now discover. If we can discern how variants actually regulate disease and understand that disease process better, it means we have a better opportunity to intervene and treat it" [38]. As the technology sees broader adoption, it promises to accelerate the functional validation of genetic variants across diverse biological contexts and disease states.

Deep Mutational Scanning (DMS) and CRISPR Base Editing (BE) represent two powerful technological approaches for high-throughput functional characterization of genetic variants. While DMS establishes a gold standard for comprehensive variant phenotyping using library-based overexpression, BE enables direct genome modification in native genomic contexts. Recent head-to-head comparisons reveal that with optimized experimental design, BE screens can achieve a surprising degree of correlation with DMS datasets, supporting its utility for functional variant annotation at scale. The choice between these methodologies depends critically on research objectives, with DMS providing exhaustive mutational coverage and BE offering physiological relevance through endogenous genome modification.

Table 1: Fundamental Characteristics of DMS and Base Editing Screens

| Feature | Deep Mutational Scanning (DMS) | CRISPR Base Editing (BE) |
|---|---|---|
| Core Principle | Introduction of saturation mutagenesis libraries via cDNA constructs [42] | Direct, precise conversion of endogenous DNA bases using CRISPR-guided deaminases [43] |
| Genomic Context | Ectopic expression (often from cDNA); may use safe harbor "landing pads" [42] | Endogenous genomic locus [42] [43] |
| Mutation Types | All possible amino acid changes at each position; comprehensive [42] | Primarily transition mutations (C>T or A>G) [42] |
| Phenotype Measurement | Direct sequencing of variant alleles from cDNA [42] | Traditionally, surrogate measurement via sgRNA sequencing; can directly sequence edits [42] [44] |
| Key Advantage | Unbiased, comprehensive measurement of variant effects [42] [44] | Studies protein function in its native regulatory context [43] |

Direct Performance Comparison: Experimental Evidence

A landmark 2024 study conducted the first direct side-by-side comparison of DMS and BE in the same laboratory and cell line (Ba/F3 cells), providing unprecedented quantitative evidence for their relative performance [42] [44].

Table 2: Summary of Key Comparative Findings from Sokirniy et al. [42] [44]

| Comparison Metric | Findings | Experimental Support |
|---|---|---|
| Overall Correlation | "Surprisingly high degree of correlation" between BE and gold-standard DMS [42] [44] | Direct comparison of a BCR-ABL kinase domain DMS dataset with a tiling BE screen. |
| Impact of sgRNA Filtering | Focusing on sgRNAs producing single edits within their editing window dramatically enhanced agreement with DMS [42] [44] | Applied filters for most likely predicted edits and highest efficiency sgRNAs. |
| Handling Multi-edit Guides | When multi-edit guides are unavoidable, directly measuring the variants created in the pool recovers high-quality data [42] | Used error-corrected sequencing to directly quantify edited variants rather than relying on sgRNA abundance. |
| Data Quality | A simple filter for single-edit guides could sufficiently annotate a large proportion of variants directly from sgRNA sequencing [44] | Analysis of sgRNA depletion/enrichment explained by predicted edits. |

Detailed Experimental Protocols

Deep Mutational Scanning (DMS) Workflow

- Library Design & Construction: A saturating mutagenesis library is generated, typically encompassing all possible single amino acid changes across the protein domain of interest. For example, Sokirniy et al. used a library targeting the N-lobe of the ABL kinase domain [42].

- Library Delivery: The cDNA library is cloned into a lentiviral vector (e.g., pUltra) and transfected into packaging cells (e.g., HEK293Ts). Viral supernatant is then used to infect target cells (e.g., Ba/F3 cells) at a low multiplicity of infection to ensure single-copy integration [42].

- Cell Sorting & Selection: Successfully transduced cells are often enriched using fluorescence-activated cell sorting (FACS) if the vector contains a fluorescent marker like EGFP. The screen proceeds by applying a selective pressure (e.g., cytokine withdrawal in Ba/F3 cells) over multiple days [42].

- Variant Abundance Quantification: Genomic DNA is harvested at baseline and endpoint timepoints. A key modern step is the use of Unique Molecular Identifiers (UMIs) and single-strand consensus sequencing to generate error-corrected reads. This allows for accurate calculation of mutant growth rates based on changes in mutant allele frequency over time, accounting for cell dilution [42].
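The growth-rate calculation in the last step can be sketched as follows. This is a minimal illustration rather than the published analysis pipeline of [42]: it assumes exponential growth between two timepoints, and the function name, the `fold_expansion` parameter (total population expansion after correcting for passaging dilutions), and the example numbers are all hypothetical.

```python
import math


def mutant_growth_rate(freq_start, freq_end, fold_expansion, hours):
    """Estimate a variant's exponential growth rate (per hour).

    freq_start, freq_end: error-corrected mutant allele frequencies at
        baseline and endpoint.
    fold_expansion: dilution-corrected fold change of the whole culture
        (assumed input; e.g. 8.0 if the population grew eightfold overall).
    hours: elapsed time between the two samples.
    """
    if min(freq_start, freq_end, fold_expansion, hours) <= 0:
        raise ValueError("all inputs must be positive")
    # Fold change in the absolute number of mutant cells:
    # (frequency change) x (change in total population size).
    mutant_fold_change = (freq_end / freq_start) * fold_expansion
    return math.log(mutant_fold_change) / hours


# Hypothetical variant that rises from 0.1% to 0.4% of alleles while the
# culture expands eightfold over 72 hours.
enriched = mutant_growth_rate(0.001, 0.004, fold_expansion=8.0, hours=72)
# A neutral variant keeps its frequency and grows at the population rate.
neutral = mutant_growth_rate(0.001, 0.001, fold_expansion=8.0, hours=72)
print(f"enriched: {enriched:.4f} per hour, neutral: {neutral:.4f} per hour")
```

Multiplying the frequency ratio by the population expansion is what separates a variant that truly grows faster from one whose frequency only appears stable because the whole culture is expanding.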

Base Editing (BE) Workflow

- sgRNA Library Design: Design a tiling library of sgRNAs targeting the genomic regions of interest using tools like CHOPCHOP, specifying the appropriate PAM (e.g., 'NGN' for SpG base editors) [42].

- Base Editor Stable Cell Line: Generate a cell line stably expressing the base editor (e.g., ABE8e SpG for A>G edits) under antibiotic selection to ensure consistent editing efficiency [42].

- sgRNA Library Delivery & Screening: The pooled sgRNA library is delivered via lentiviral transduction at a low MOI. Cells are then subjected to the phenotypic selection of interest [42].

- Outcome Measurement (two methods):

  - Traditional (Indirect): Genomic DNA is harvested, sgRNAs are amplified and sequenced. Variant effects are inferred from sgRNA abundance changes [42].

  - Enhanced (Direct): To overcome limitations of multi-edit guides, the edited genomic regions are directly sequenced using amplicon sequencing. Error-corrected sequencing (e.g., CRISPR-DS) can be applied to precisely quantify the frequency of each specific variant in the population before and after selection [42] [44].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents and Resources for DMS and BE Screens

| Reagent / Resource | Function | Example Sources / Systems |
|---|---|---|
| Lentiviral Vectors | Efficient delivery of cDNA or sgRNA libraries into target cells. | pUltra (Addgene #24129) for cDNA; lenti-sgRNA hygro (Addgene #104991) for sgRNAs [42] |
| Base Editor Plasmids | Engineered fusion proteins (nCas9 + deaminase) for precise base conversion. | ABE8e SpG (for A>G edits; Addgene #179099), CBEd SpG (for C>T edits) [42] |
| sgRNA Design Tools | In silico design and ranking of guide RNAs for optimal on-target efficiency. | CHOPCHOP [42] |
| Error-Corrected Sequencing | High-fidelity quantification of variant frequencies from complex pools. | Single Strand Consensus Sequencing with UMIs [42]; genoTYPER-NEXT [45] |
| Cell Models | Appropriate in vitro systems for screening, often with selectable phenotypes. | Ba/F3 (murine pro-B cell line with cytokine dependence) [42], haploid cell lines, diverse cancer lines [46] |

DMS and BE are complementary, not competing, technologies in the functional genomics toolkit. DMS remains the most exhaustive method for characterizing protein sequence-function relationships in a single experiment. In contrast, BE provides a path to study variants in their native genomic and regulatory context, which can be critical for understanding subtle effects on splicing, regulation, and protein stoichiometry [47] [43].

The emerging frontier lies in computational integration of these large-scale perturbation data. Models like the Large Perturbation Model (LPM) are being developed to integrate heterogeneous data from DMS, BE, and other perturbation types to predict the outcomes of unobserved experiments and generate novel biological insights [48]. Furthermore, newer technologies such as prime editing sensor libraries are being established to overcome the restricted mutation spectrum of BE, which is limited largely to transitions, by enabling high-throughput study of all possible SNVs and indels. These approaches promise even greater scalability and precision in variant functional annotation [47].

This guide objectively compares the performance of modern mechanism-specific assays, which are crucial for validating genetic variants in functional studies and drug development.

Splicing Reporter Assays

Splicing reporters are engineered systems that detect changes in alternative splicing, a process disrupted in many genetic diseases.

Comparative Performance of Splicing Reporters

Table 1: Comparison of Splicing Reporter Technologies

| Reporter Type | Key Features | Throughput | Sensitivity/Performance | Primary Applications |
|---|---|---|---|---|
| Dual Nano/Firefly Luciferase [49] | Dual detector cassette with frameshift; PEST degradation sequences for reduced background | High-throughput; screen of ~95,000 compounds [49] | Highly sensitive, linear detection; Nano luciferase luminescence ~150-fold brighter than other luciferases [49] | Screening small molecule modulators of splicing (e.g., for autism, cancer) |
| Dual Fluorescence | Frameshift design with two fluorescent proteins | Moderate | Subject to false positives from modulators affecting protein expression independently [49] | General splicing modulation studies |
| GFP-Based [50] | Single fluorescent protein output | Lower | Visualized by fluorescence microscopy; suitable for live cells [50] | Basic splicing analysis in cultured mammalian cells |
| RT-PCR/Multiplexed Assays [49] [51] | Direct measurement of spliced mRNA products | Challenging to scale beyond a few thousand compounds [49] | High information content (e.g., detects multiple isoforms) | Targeted analysis of specific splicing events |

Principle: A target alternative exon is engineered with a single-nucleotide frameshift. Exon inclusion or skipping produces mRNA that translates into either Firefly (FLuc) or Nano Luciferase (NLuc), respectively. The ratio of luminescence signals quantifies splicing efficiency.

Key Workflow Steps:

- Reporter Design: Clone the target alternative exon (e.g., the 21 nt microexon from Mef2d) into a vector upstream of dual luciferase open reading frames.

- Cell Line Generation: Stably integrate the reporter construct into the genome of mammalian cells (e.g., mouse Neuro-2a) using a system like Flp-In to ensure consistent expression.

- Compound Treatment/Transfection: Treat cells with a library of small molecules, or transfect them with splicing factor expression vectors (e.g., SRRM4). Use a doxycycline-inducible promoter for controlled expression.

- Luminescence Measurement: Lyse cells and sequentially measure Firefly and Nano luciferase activities using their respective substrates.

- Data Analysis: Express the inclusion-reporter signal (Firefly in the design described above) as a percentage of the combined Firefly and Nano luminescence to estimate the percent spliced-in (PSI) metric. Validate with RT-PCR.
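The data-analysis step can be illustrated with a short calculation. This is a sketch under stated assumptions, not the analysis used in [49]: it takes Firefly as the inclusion readout and Nano as the skipping readout, as in the principle above, and it assumes each raw signal is first scaled by a calibration value from hypothetical control constructs (`fluc_max` for full inclusion, `nluc_max` for full skipping), since the two luciferases differ greatly in brightness. All numbers are invented.

```python
def percent_spliced_in(fluc, nluc, fluc_max=1.0, nluc_max=1.0):
    """Estimate PSI (0-100) from dual-luciferase readings.

    fluc, nluc: background-subtracted Firefly and Nano luminescence.
    fluc_max, nluc_max: signals from assumed 100%-inclusion and
        100%-skipping control constructs, used to put both reporters
        on a comparable scale.
    """
    if fluc < 0 or nluc < 0 or fluc_max <= 0 or nluc_max <= 0:
        raise ValueError("signals must be non-negative and controls positive")
    inclusion = fluc / fluc_max
    skipping = nluc / nluc_max
    total = inclusion + skipping
    if total == 0:
        raise ValueError("no signal in either channel")
    return 100.0 * inclusion / total


# Hypothetical well: each reporter reads 30% of its control maximum.
psi = percent_spliced_in(fluc=3_000, nluc=450_000,
                         fluc_max=10_000, nluc_max=1_500_000)
print(f"PSI = {psi:.1f}%")
```

Without the calibration step the brighter Nano signal would dominate the ratio, so raw percentages are best treated as relative readouts and confirmed by RT-PCR, as the workflow recommends.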

Critical Reagents:

- Cell Line: Neuro-2a (N2A) rtTA Flp-In [49]

- Reporters: Dual NLuc/FLuc constructs with PEST sequences [49]

- Inducer: Doxycycline [49]

- Detection Kit: Dual-Luciferase or Nano-Glo Assay systems

Enzyme Activity Assays

Enzyme assays measure catalytic activity, essential for characterizing metabolic variants and enzyme-targeting therapeutics.

Comparative Performance of Enzyme Assay Platforms

Table 2: Comparison of Enzyme Activity Assay Methods

| Method | Detection Principle | Throughput | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Spectrophotometer | Absorption change of substrates/products [52] | Low | Low cost; widely used [52] | Manual steps; inconsistent results; several variables to control [52] |
| Microplate Photometry | Absorption in 96-/384-well plates [52] | High | High-throughput; small assay volumes (200 µL) [52] | Temperature instability; pathlength correction needed; "edge effect" evaporation [52] |
| Discrete Analyzer (Gallery Plus) | Absorption in individual cuvettes [52] | High | Superior temperature control (25-60°C); no edge effects; flexible parameters [52] | Higher initial instrument cost |
| HPLC-Based | Separation and quantification of product [52] | Low | High specificity; can be used when reaction must be stopped [52] | Slow (30 min/analysis); complex operation [52] |

Principle: Monitor the rate of substrate conversion or product formation under well-defined conditions to determine enzyme activity.

Key Workflow Steps:

- Solution Preparation: Prepare buffer, substrate, and cofactor solutions. Strictly control pH, ionic strength, and temperature.

- Reaction Initiation: Mix enzyme with substrate to start the reaction. For automated systems, this is done in disposable cuvettes.

- Kinetic Measurement: Continuously monitor the change in absorbance or fluorescence at specific wavelengths over time.

- Data Calculation: Determine the initial reaction velocity (V₀). One unit of enzyme activity is defined as the amount converting 1 µmol of substrate per minute.
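The kinetic measurement and unit calculation can be sketched as follows for an absorbance-based assay. This is a minimal example under stated assumptions: it uses the Beer-Lambert law to convert an absorbance slope into a concentration change, takes the widely used NADH extinction coefficient at 340 nm (6220 M⁻¹ cm⁻¹) as the example chromophore, and fits a least-squares line to readings assumed to lie in the initial linear phase. The function names and the readings are hypothetical.

```python
def slope_per_minute(times_min, absorbances):
    """Least-squares slope of absorbance versus time (absorbance units/min)."""
    n = len(times_min)
    if n < 2 or n != len(absorbances):
        raise ValueError("need at least two paired readings")
    mean_t = sum(times_min) / n
    mean_a = sum(absorbances) / n
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    sxy = sum((t - mean_t) * (a - mean_a)
              for t, a in zip(times_min, absorbances))
    return sxy / sxx


def enzyme_units(slope_abs_per_min, epsilon_m_cm, path_cm, volume_ml):
    """Convert an absorbance slope to enzyme units (µmol substrate per min).

    Beer-Lambert: A = epsilon * path * concentration, so the rate of
    concentration change is |slope| / (epsilon * path) in mol/L/min.
    """
    molar_per_min = abs(slope_abs_per_min) / (epsilon_m_cm * path_cm)
    moles_per_min = molar_per_min * (volume_ml / 1000.0)  # mL to L
    return moles_per_min * 1e6  # mol to µmol


# Hypothetical NADH-consuming reaction read at 340 nm every 30 seconds.
times = [0.0, 0.5, 1.0, 1.5, 2.0]
readings = [1.000, 0.969, 0.938, 0.907, 0.876]
v0_slope = slope_per_minute(times, readings)
units = enzyme_units(v0_slope, epsilon_m_cm=6220, path_cm=1.0, volume_ml=1.0)
print(f"slope = {v0_slope:.3f} A/min, activity = {units:.5f} U")
```

For microplate readers the `path_cm` value is not 1 cm and depends on the fill volume, which is why the comparison table lists pathlength correction as a limitation of that format.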

Critical Parameters:

- Temperature: Maintain within ±0.1°C; a 1°C change causes a 4-8% variation in activity [52].

- pH: Use the optimal pH for the specific enzyme; pH affects enzyme activity as well as the charge and shape of the substrate [52].

- Substrate Concentration: Use saturating levels for Vmax determination.
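To make "saturating" concrete, the short calculation below assumes the enzyme follows simple Michaelis-Menten kinetics, v = Vmax·[S] / (Km + [S]), which is an assumption for illustration and does not hold for every enzyme (for example, allosteric or substrate-inhibited ones). The function name is hypothetical.

```python
def fraction_of_vmax(substrate, km):
    """Fraction of Vmax reached at a given substrate concentration.

    Assumes Michaelis-Menten kinetics; substrate and km must share units.
    """
    if substrate < 0 or km <= 0:
        raise ValueError("substrate must be >= 0 and km must be > 0")
    return substrate / (km + substrate)


# Express substrate as a multiple of Km (Km set to 1.0 for convenience).
for multiple in (1, 5, 10, 20):
    fraction = fraction_of_vmax(multiple, 1.0)
    print(f"[S] = {multiple} x Km -> {fraction:.1%} of Vmax")
```

Under this model a substrate concentration of ten times Km still gives only about 91% of Vmax, so the measured rate approaches Vmax gradually rather than reaching it at a sharp threshold.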

Biomarker Profiling Assays

Biomarker profiling identifies and validates molecular signatures for disease diagnosis, prognosis, and treatment response.

Comparative Performance of Biomarker Profiling Modalities

Table 3: Comparison of Biomarker Profiling Technologies

| Technology | Biomarker Type | Applications in Drug Development | Performance / Validation Level |
|---|---|---|---|
| RNA Splicing Biomarkers [53] | Alternative Splicing (AS) Events (PSI values) | Host-response diagnosis for infectious disease (e.g., COVID-19); earlier detection than pathogen tests [53] | 98.4% accuracy for SARS-CoV-2 diagnosis; superior to gene-expression signatures [53] |
| Gene Expression Signatures | Differential Gene Expression (mRNA levels) | Patient stratification; therapeutic response prediction [53] | Outperformed by AS biomarkers in cross-cohort accuracy [53] |
| Proteogenomics (Splicify) [54] | Protein Isoforms from RNA-Seq & Mass Spec | Identification of cancer-specific protein biomarkers from aberrant splicing [54] | Detected 2172 differentially expressed isoforms upon SF3B1 knockdown; peptide confirmation [54] |
| Known Valid Genomic Biomarkers [55] | Specific genetic variants (e.g., HER2, K-RAS) | Patient selection for targeted therapies (e.g., Trastuzumab for HER2+ breast cancer) [55] | "Known Valid" status: widespread agreement in scientific community on clinical significance [55] |

Principle: Leverage RNA alternative splicing events in blood, which have potential normalization and platform stability advantages over gene expression, for diagnostic assay development.

Key Workflow Steps:

- Cohort & Sequencing: Collect whole-blood specimens from a prospective cohort (e.g., infected vs. healthy). Perform RNA sequencing (RNA-seq).

- Splicing Quantification: Compute Percent Spliced In (PSI) values for alternative splicing events from RNA-seq data.

- Statistical Modeling: Use linear mixed models to identify disease-associated splicing events, controlling for covariates.

- Classifier Training: Train a logistic regression classifier using PSI values of significant differential splicing events.