GBLUP in Biomedical Research: Demystifying Assumptions, Limitations, and Best Practices for Genetic Prediction


Abstract

This article provides a comprehensive exploration of the Genomic Best Linear Unbiased Prediction (GBLUP) model, a cornerstone in genomic prediction for biomedical research. Tailored for researchers, scientists, and drug development professionals, it systematically dissects the model's core mathematical assumptions, practical application methodologies, common pitfalls, and validation strategies. We clarify when GBLUP is the optimal choice, its inherent limitations regarding complex trait architecture, and how it compares to alternative models like Bayesian methods and machine learning. The content offers actionable guidance for troubleshooting, optimizing predictions, and validating results to ensure robust, reproducible outcomes in studies of complex diseases, pharmacogenomics, and quantitative trait analysis.

What is GBLUP? Core Concepts and Foundational Assumptions for Researchers

This technical guide details the evolution from Best Linear Unbiased Prediction (BLUP) to Genomic BLUP (GBLUP), a cornerstone model in genomic selection. Framed within a broader thesis on model assumptions and limitations, this document provides an in-depth analysis of the methodological foundations, computational protocols, and contemporary applications in plant, animal, and biomedical research, including drug development.

Best Linear Unbiased Prediction (BLUP) is a mixed-model technique originally developed by C. R. Henderson for the genetic evaluation of livestock. It predicts random effects (e.g., breeding values) by combining pedigree-based relationship information with phenotypic data. The core model is:

y = Xb + Zu + e

Where:

- y is a vector of phenotypic observations.

- b is a vector of fixed effects (e.g., herd, year).

- u is a vector of random genetic effects ~N(0, Aσ²ᵤ), where A is the additive genetic relationship matrix derived from pedigree.

- X and Z are incidence matrices.

- e is the residual error ~N(0, Iσ²ₑ).

The advent of high-density genome-wide single nucleotide polymorphism (SNP) markers enabled a paradigm shift. The Genomic BLUP (GBLUP) model replaces the pedigree-based A matrix with a genomic relationship matrix (G), constructed directly from marker data. The random effects are now ~N(0, Gσ²ᵍ), where G quantifies the genomic similarity between individuals based on allele sharing.

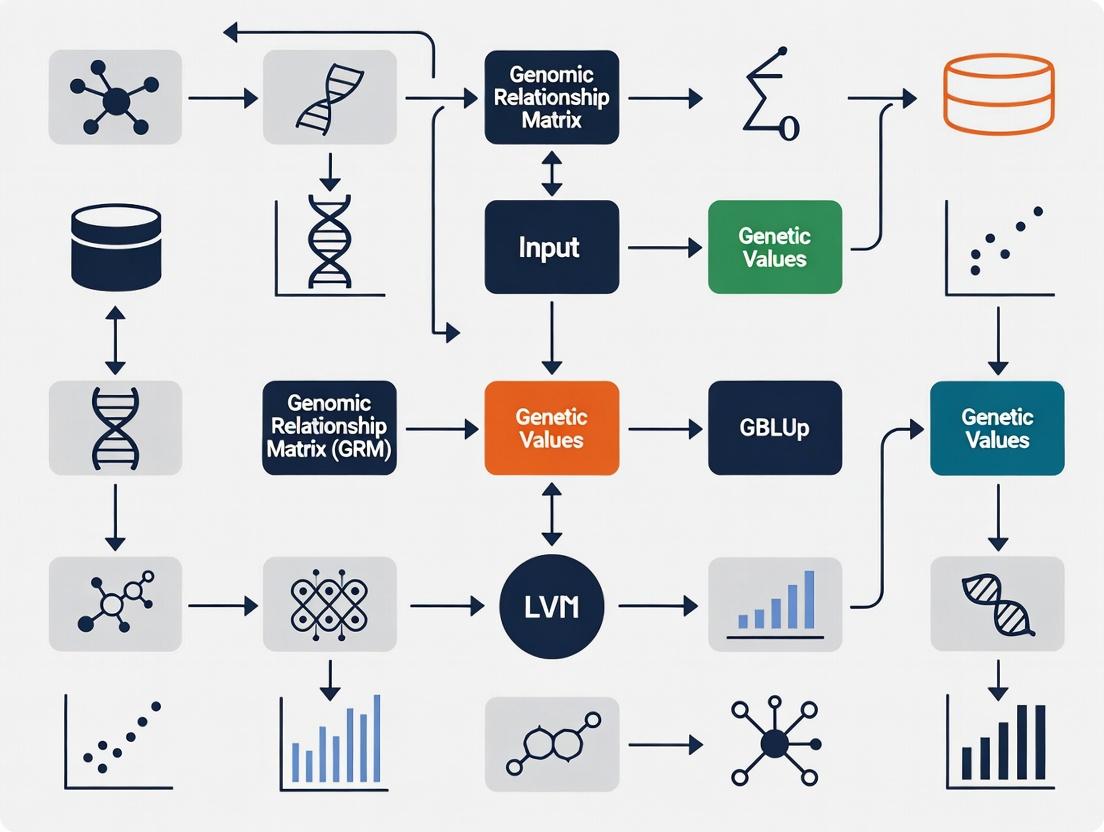

Logical Workflow: From Phenotype to Genomic Prediction

Core Methodologies and Protocols

Construction of the Genomic Relationship Matrix (G)

The G matrix is fundamental to GBLUP. The standard method (VanRaden, 2008) is:

G = (M - P)(M - P)' / [2 ∑ⱼ pⱼ(1 - pⱼ)]

- M is an n × m matrix of marker alleles for n individuals and m SNPs, coded as 0, 1, or 2 for the number of reference alleles.

- P is an n × m matrix of allele frequencies in which every entry of column j equals 2pⱼ, with pⱼ being the frequency of the reference allele for SNP j.

- The denominator scales G to be analogous to the pedigree-based A matrix.
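The formula above can be sketched in a few lines of NumPy. This is a minimal illustration, assuming complete genotypes (no missing calls) and allele frequencies estimated from the sample itself; the function name `vanraden_g` and the simulated genotypes are hypothetical and not part of any package.

```python
import numpy as np

def vanraden_g(M):
    """Genomic relationship matrix from an n x m genotype matrix coded 0/1/2."""
    M = np.asarray(M, dtype=float)
    p = M.mean(axis=0) / 2.0              # reference-allele frequency p_j per SNP
    Z = M - 2.0 * p                       # M - P: every entry of column j of P is 2 * p_j
    denom = 2.0 * np.sum(p * (1.0 - p))   # 2 * sum_j p_j (1 - p_j)
    return Z @ Z.T / denom

# Simulated genotypes for illustration only
rng = np.random.default_rng(1)
freqs = rng.uniform(0.1, 0.9, size=500)         # hypothetical allele frequencies
M = rng.binomial(2, freqs, size=(20, 500))      # 20 individuals, 500 SNPs
G = vanraden_g(M)
print(G.shape, round(float(np.mean(np.diag(G))), 2))
```

Because the columns are centered on the sample frequencies, every row of G sums to zero, so this G is singular. That is one reason the quality check in the protocol that follows matters.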

Protocol: Building the G Matrix

- Genotype Quality Control: Filter SNPs based on call rate (>95%), minor allele frequency (MAF >0.01-0.05), and Hardy-Weinberg equilibrium (p > 10⁻⁶). Filter individuals for call rate (>90%).

- Centering and Coding: Adjust the genotype matrix M by subtracting the column means (P) to center on zero.

- Calculation: Compute the cross-product of the centered matrix and scale by the expected variance under Hardy-Weinberg equilibrium.

- Quality Check: Ensure G is positive definite. If not, apply blending (e.g., G* = 0.95G + 0.05A) or bounding methods.
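A rough sketch of the quality-control and quality-check steps, under several stated assumptions: missing calls are encoded as NaN, the Hardy-Weinberg test is omitted for brevity, and, because no pedigree is available in this toy example, the identity matrix stands in for A in the blending step (A equals I only for unrelated, non-inbred individuals). The helper names `qc_filter`, `is_positive_definite`, and `blend` are hypothetical.

```python
import numpy as np

def qc_filter(M, snp_call=0.95, ind_call=0.90, maf_min=0.01):
    """Filter an n x m genotype matrix coded 0/1/2 with NaN for missing calls."""
    M = np.asarray(M, dtype=float)
    called = ~np.isnan(M)
    n_called = called.sum(axis=0)
    # Allele frequency from called genotypes only (guard against empty columns)
    p = np.where(called, M, 0.0).sum(axis=0) / np.maximum(2 * n_called, 1)
    maf = np.minimum(p, 1.0 - p)
    keep_snp = (called.mean(axis=0) > snp_call) & (maf > maf_min)
    M = M[:, keep_snp]
    keep_ind = (~np.isnan(M)).mean(axis=1) > ind_call
    return M[keep_ind], keep_snp, keep_ind

def is_positive_definite(G):
    """Cholesky succeeds only for (numerically) positive definite matrices."""
    try:
        np.linalg.cholesky(G)
        return True
    except np.linalg.LinAlgError:
        return False

def blend(G, K, w=0.95):
    """G* = w G + (1 - w) K; K is A in the protocol, the identity in this sketch."""
    return w * G + (1.0 - w) * K

rng = np.random.default_rng(2)
M = rng.binomial(2, 0.3, size=(30, 200)).astype(float)
M[:, 0] = np.nan       # SNP with no calls
M[:, 1] = 0.0          # monomorphic SNP
M[0, :100] = np.nan    # individual with about half of its calls missing
Mq, keep_snp, keep_ind = qc_filter(M)

p = Mq.mean(axis=0) / 2
Z = Mq - 2 * p
G = Z @ Z.T / (2 * np.sum(p * (1 - p)))
G_star = blend(G, np.eye(len(G)))
print(Mq.shape, is_positive_definite(G_star))
```

In a real pipeline any genotypes still missing after filtering would need imputation before G is computed; the toy data are constructed so that none remain.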

Solving the GBLUP Model

The GBLUP model is solved via Henderson's Mixed Model Equations (MME):

\[
\begin{bmatrix} X'X & X'Z \\ Z'X & Z'Z + G^{-1}\lambda \end{bmatrix}
\begin{bmatrix} \hat{b} \\ \hat{u} \end{bmatrix}
=
\begin{bmatrix} X'y \\ Z'y \end{bmatrix}
\]

Where λ = σ²ₑ/σ²ᵍ (the ratio of residual to genomic variance). Solutions are obtained computationally via:

- Direct Inversion: Feasible only for small G matrices (n < ~10,000).

- Iterative Methods: Preferred for large datasets. Standard protocols use Preconditioned Conjugate Gradient (PCG) or Gauss-Seidel methods iterating until solutions converge (e.g., relative change < 10⁻⁶).

- Single-Step GBLUP (ssGBLUP): A key advancement that jointly uses genotyped and non-genotyped individuals by constructing a combined relationship matrix H that merges A and G.
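For small problems the direct approach can be written out explicitly. The sketch below builds Henderson's mixed model equations for an intercept-only model and checks the solution against the equivalent generalized least squares and BLUP expressions based on V = ZGZ'σ²ᵍ + Iσ²ₑ. It assumes the variance components are known, uses simulated data, and adds a small identity blend so that G can be inverted; all of these are illustrative choices rather than recommendations.

```python
import numpy as np

rng = np.random.default_rng(3)
n, m = 50, 300
M = rng.binomial(2, 0.4, size=(n, m)).astype(float)
p = M.mean(axis=0) / 2
Zc = M - 2 * p
G = Zc @ Zc.T / (2 * np.sum(p * (1 - p)))
G = 0.99 * G + 0.01 * np.eye(n)          # small blend so G is invertible (assumption)

X = np.ones((n, 1))                      # fixed effects: intercept only
Z = np.eye(n)                            # one record per individual
sigma2_g, sigma2_e = 0.6, 0.4            # variance components assumed known here
lam = sigma2_e / sigma2_g
y = (10.0
     + rng.multivariate_normal(np.zeros(n), G * sigma2_g)
     + rng.normal(0.0, np.sqrt(sigma2_e), n))

# Henderson's mixed model equations, solved directly
lhs = np.block([[X.T @ X, X.T @ Z],
                [Z.T @ X, Z.T @ Z + np.linalg.inv(G) * lam]])
rhs = np.concatenate([X.T @ y, Z.T @ y])
sol = np.linalg.solve(lhs, rhs)
b_hat, u_hat = sol[:1], sol[1:]

# Cross-check with the V-based formulas
V = Z @ G @ Z.T * sigma2_g + np.eye(n) * sigma2_e
Vi = np.linalg.inv(V)
b_gls = np.linalg.solve(X.T @ Vi @ X, X.T @ Vi @ y)
u_blup = sigma2_g * G @ Z.T @ Vi @ (y - X @ b_gls)

print(np.allclose(b_hat, b_gls, atol=1e-6), np.allclose(u_hat, u_blup, atol=1e-6))
```

The two routes agree because the MME are an algebraic rearrangement of the V-based solution that avoids inverting V; iterative solvers work on the same left-hand side without forming any inverse explicitly.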

Experimental Validation Protocol

The predictive accuracy of GBLUP is validated via cross-validation.

Protocol: k-Fold Cross-Validation for GBLUP

- Population Partitioning: Randomly divide the phenotyped and genotyped reference population into k subsets (folds), typically k=5 or 10.

- Iterative Prediction: For each fold i:

- Designate fold i as the validation set.

- Combine the remaining k-1 folds as the training set.

- Fit the GBLUP model using only the training set's phenotypes and genotypes.

- Use the estimated model to predict Genomic Estimated Breeding Values (GEBVs) for individuals in validation set i.

- Accuracy Calculation: After all folds are processed, correlate the predicted GEBVs with the observed phenotypes that were withheld from model fitting in each validation set. The Pearson correlation (r) is the primary accuracy metric.
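The cross-validation protocol can be sketched as follows. This is a simplified illustration on simulated data: the only fixed effect is an intercept estimated as the training-set mean, and λ is treated as known rather than re-estimated by REML in each fold. The function names `gblup_predict` and `kfold_accuracy` are hypothetical.

```python
import numpy as np

def gblup_predict(G, y, train, test, lam):
    """Predict values for `test` individuals using only `train` phenotypes."""
    mu = y[train].mean()                                # intercept-only fixed effect
    G_tt = G[np.ix_(train, train)]
    G_vt = G[np.ix_(test, train)]
    alpha = np.linalg.solve(G_tt + lam * np.eye(len(train)), y[train] - mu)
    return mu + G_vt @ alpha

def kfold_accuracy(G, y, k=5, lam=1.0, seed=0):
    """Pooled Pearson correlation between predictions and withheld phenotypes."""
    n = len(y)
    folds = np.array_split(np.random.default_rng(seed).permutation(n), k)
    pred = np.empty(n)
    for test in folds:
        train = np.setdiff1d(np.arange(n), test)
        pred[test] = gblup_predict(G, y, train, test, lam)
    return np.corrcoef(pred, y)[0, 1]

# Simulated additive trait for illustration
rng = np.random.default_rng(4)
n, m, h2 = 300, 200, 0.8
M = rng.binomial(2, 0.5, size=(n, m)).astype(float)
p = M.mean(axis=0) / 2
Zc = M - 2 * p
G = Zc @ Zc.T / (2 * np.sum(p * (1 - p)))
g = Zc @ rng.normal(size=m)
g = g / g.std() * np.sqrt(h2)
y = g + rng.normal(0.0, np.sqrt(1 - h2), n)

r = kfold_accuracy(G, y, k=5, lam=(1 - h2) / h2)
print(round(float(r), 2))
```

Phenotypes of the validation fold never enter the fit; only their genotypes are used, through the off-diagonal block of G that links validation and training individuals.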

Quantitative Data & Performance

Table 1: Representative Predictive Accuracies of GBLUP in Various Species

| Species/Trait | Number of Individuals | Number of SNPs | Validation Method | Predictive Accuracy (r) | Source/Study Context |

|---|---|---|---|---|---|

| Dairy Cattle (Milk Yield) | 15,000 | 45,000 | 5-Fold CV | 0.65 - 0.75 | Standard for highly polygenic traits. |

| Wheat (Grain Yield) | 600 | 15,000 | Leave-One-Out CV | 0.50 - 0.60 | Accuracy influenced by population structure. |

| Swine (Backfat Thickness) | 3,500 | 60,000 | 10-Fold CV | 0.55 - 0.70 | Moderate heritability trait. |

| Humans (Height) | 10,000 | 1,000,000 | Independent Cohort | 0.40 - 0.55 | Demonstrates portability to human complex traits. |

| Model Comparison: GBLUP vs. BLUP | GBLUP Accuracy Gain | Assumption | Implication |

|---|---|---|---|

| Dairy Cattle (Young Bulls) | +20% to +40% | Pedigree vs. Genomic Links | Earlier, more accurate selection. |

Table 2: Key Assumptions and Their Potential Limitations in GBLUP

| Assumption | Mathematical Form | Practical Implication | Potential Violation & Consequence |

|---|---|---|---|

| Linearity | y = Xb + Zu + e | Additive gene action. | Non-additive (dominance, epistasis) effects can be missed, limiting accuracy. |

| Infinitesimal Model | u ~ N(0, Gσ²ᵍ) | All markers explain some variance; many small effects. | Fails if trait is controlled by a few large-effect QTLs; Bayesian methods may be superior. |

| Homogeneous Variance | Var(u) = Gσ²ᵍ | Genetic variance is constant across groups. | Population stratification or heterogeneity can bias predictions. |

| Known G Matrix | G is fixed & correct | G perfectly captures genomic relationships. | Poor QC, rare alleles, or differing allele frequencies can distort G, reducing accuracy. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for a GBLUP Genomic Selection Pipeline

| Item/Category | Function/Description | Example/Specification |

|---|---|---|

| DNA Extraction Kit | High-throughput, high-quality genomic DNA isolation from tissue/blood. | Qiagen DNeasy 96 Kit, magnetic bead-based platforms. |

| SNP Genotyping Array | Genome-wide variant profiling. Key determinant of G matrix quality. | Illumina BovineSNP50 (Cattle), Illumina Infinium WheatHD (Plants), Affymetrix Axiom arrays. |

| Genotype Imputation Service | Infers missing genotypes or projects from low- to high-density, increasing G resolution. | Minimac4, Beagle software; requires a reference haplotype panel. |

| Mixed-Model Solver Software | Computationally solves MME for GBLUP. Critical for large-scale analysis. | BLUPF90 family (e.g., AIREMLF90, PREGSF90), ASReml, GNU R sommer package. |

| Variance Ratio (λ) | Ratio of residual to genomic variance (λ = σ²ₑ/σ²ᵍ); must be estimated or supplied. | Estimated via REML using AIREMLF90 or within cross-validation. |

Advanced Extensions and Current Frontiers

GBLUP serves as a platform for advanced models. Key extensions include:

- ssGBLUP Workflow: Integrating all available data.

- rrBLUP (Ridge Regression BLUP): Models marker-specific effects with a common normal prior that shrinks them toward zero; when G is built from the same markers, it yields the same predictions as GBLUP.

- GBLUP-CN: Incorporating copy number variants (CNVs) or structural variations by adding alternate relationship matrices.

- Integrating Omics Data: Using transcriptomic or metabolic data as intermediate phenotypes to enhance prediction for complex traits.
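The relationship between the marker-effect model in the rrBLUP item and GBLUP can be checked numerically. The sketch below assumes a centered genotype matrix W, G = WW'/k, a common normal prior on marker effects with variance σ²ᵍ/k, and a centered phenotype with no other fixed effects; the data and the value of λ are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(5)
n, m = 40, 120
M = rng.binomial(2, 0.3, size=(n, m)).astype(float)
p = M.mean(axis=0) / 2
W = M - 2 * p                           # centered genotypes
k = 2 * np.sum(p * (1 - p))             # scaling constant of G
G = W @ W.T / k

y = rng.normal(size=n)
y = y - y.mean()                        # centered phenotype
lam = 1.5                               # sigma2_e / sigma2_g, arbitrary for the demo

# GBLUP: genetic values predicted directly from G
u_gblup = G @ np.linalg.solve(G + lam * np.eye(n), y)

# Marker-effect ridge regression: penalty k * lam, then sum the marker effects
beta = np.linalg.solve(W.T @ W + k * lam * np.eye(m), W.T @ y)
u_rr = W @ beta

print(np.allclose(u_gblup, u_rr))
```

The choice between the two forms is mostly computational: the GBLUP system is n × n and the marker-effect system is m × m, so the cheaper one depends on whether individuals or markers are more numerous.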

GBLUP represents a critical implementation of genomic prediction, translating dense marker data into actionable genetic values. Its strength lies in its computational efficiency and robustness for highly polygenic traits. However, as outlined in this thesis context, its assumptions of linearity, additivity, and the infinitesimal model define its limitations. Future directions focus on overcoming these constraints through non-linear kernels, integrative omics, and sophisticated validation frameworks, ensuring its continued evolution in precision agriculture and biomedicine.

This whitepaper provides an in-depth technical deconstruction of the Genomic Best Linear Unbiased Prediction (GBLUP) model's core linear mixed model (LMM) equation: y = Xb + Zu + e. Framed within a broader thesis on GBLUP's assumptions and limitations, this analysis is critical for researchers, scientists, and drug development professionals applying genomic prediction in complex trait analysis, pharmacogenomics, and personalized medicine. The reliance of modern genomic selection and prediction on this model necessitates a rigorous understanding of its components, underlying statistical assumptions, and the practical consequences when these assumptions are violated.

Deconstructing the Equation: Terms and Assumptions

The LMM for GBLUP partitions the observed phenotypic data (y) into components explained by fixed effects, random genetic effects, and residual error.

Table 1: Core Components of the GBLUP LMM Equation

| Component | Symbol | Description | Key Assumptions | Dimension |

|---|---|---|---|---|

| Phenotype Vector | y | A vector of observed phenotypic values (e.g., disease severity, drug response) for n individuals. | Measured without systematic error; approximately normally distributed conditional on the model. | n x 1 |

| Design Matrix for Fixed Effects | X | An incidence matrix linking observations to fixed effects (e.g., trial location, sex, dosage cohort). | Known without error; full column rank is typically assumed. | n x p |

| Vector of Fixed Effects | b | Unknown constants to be estimated (e.g., mean effect of a treatment). | These are population parameters, not random variables. | p x 1 |

| Design Matrix for Random Effects | Z | An incidence matrix, often an identity matrix I, linking observations to random genetic effects. | Known without error; usually a simple structure. | n x n |

| Vector of Random Genetic Effects | u | Unobserved additive genetic values for each individual. | u ~ N(0, Gσ²ᵤ); Follows a multivariate normal distribution with covariance matrix G. | n x 1 |

| Residual Error Vector | e | Unobserved environmental and non-additive genetic noise. | e ~ N(0, Iσ²ₑ); Independent and identically distributed normal errors. | n x 1 |

The Genomic Relationship Matrix (G)

The G matrix is the cornerstone of GBLUP, quantifying the genetic similarity between individuals based on marker data. It is typically constructed as G = WW' / k, where W is a centered and scaled genotype matrix of markers, and k is a scaling constant (e.g., the number of markers). This matrix defines the covariance structure for u: Var(u) = Gσ²ᵤ. The critical assumption is that G accurately captures all relevant additive genetic relationships and that the markers explain the genetic variance.

Methodological Protocols for Key Experiments

Protocol: Estimating Variance Components (σ²ᵤ and σ²ₑ)

Objective: To estimate the genetic (σ²ᵤ) and residual (σ²ₑ) variance components using Restricted Maximum Likelihood (REML).

- Inputs: Phenotype vector y, fixed effects design matrix X, genomic relationship matrix G.

- Model Fitting: Employ REML algorithms (e.g., Average Information (AI), Expectation-Maximization (EM)) to maximize the restricted log-likelihood function, integrating over the fixed effects.

- Iteration: Iteratively solve the mixed model equations (MME) until convergence of variance component estimates.

- Output: Estimates of σ²ᵤ, σ²ₑ, and subsequently, the heritability h² = σ²ᵤ / (σ²ᵤ + σ²ₑ).
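To make the REML objective concrete, the sketch below evaluates the profiled restricted log-likelihood on a grid of h² values and picks the maximum. It deliberately swaps the AI or EM iterations named in the protocol for a grid search, which is easier to read but less precise; it assumes Z = I (one record per individual) and uses simulated data. The function name `reml_grid` is hypothetical.

```python
import numpy as np

def reml_grid(y, X, G, grid=None):
    """Grid-search REML for y = Xb + u + e with Var(y) = sigma2 * [h2*G + (1-h2)*I]."""
    grid = np.linspace(0.01, 0.99, 99) if grid is None else grid
    n, p = X.shape
    s, U = np.linalg.eigh(G)                 # rotate so that V becomes diagonal
    yr, Xr = U.T @ y, U.T @ X
    best = None
    for h2 in grid:
        d = h2 * s + (1.0 - h2)              # eigenvalues of V / sigma2
        XtVi = (Xr / d[:, None]).T           # X' V^{-1}
        XtViX = XtVi @ Xr
        b = np.linalg.solve(XtViX, XtVi @ yr)        # GLS fixed effects
        res = yr - Xr @ b
        sigma2 = np.sum(res**2 / d) / (n - p)        # profiled total variance
        ll = -0.5 * ((n - p) * np.log(sigma2) + np.sum(np.log(d))
                     + np.linalg.slogdet(XtViX)[1])
        if best is None or ll > best[0]:
            best = (ll, h2, sigma2)
    _, h2, sigma2 = best
    return h2 * sigma2, (1.0 - h2) * sigma2, h2      # sigma2_u, sigma2_e, h2

# Simulated additive trait with true h2 = 0.6
rng = np.random.default_rng(6)
n, m, h2_true = 400, 300, 0.6
M = rng.binomial(2, 0.5, size=(n, m)).astype(float)
p = M.mean(axis=0) / 2
W = M - 2 * p
G = W @ W.T / (2 * np.sum(p * (1 - p)))
g = W @ rng.normal(size=m)
g = g / g.std() * np.sqrt(h2_true)
y = 5.0 + g + rng.normal(0.0, np.sqrt(1 - h2_true), n)
X = np.ones((n, 1))

s2u_hat, s2e_hat, h2_hat = reml_grid(y, X, G)
print(round(float(h2_hat), 2))
```

The eigen-decomposition of G is computed once, so each grid point costs only a few vector operations; production software reaches the same optimum with derivative-based updates and also reports standard errors.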

Protocol: Cross-Validation for Prediction Accuracy

Objective: To empirically evaluate the predictive ability of the GBLUP model.

- Partitioning: Randomly divide the phenotyped and genotyped reference population into k folds (e.g., 5 or 10).

- Iterative Prediction: For each fold i:

- Use individuals in all other folds as the training set.

- Fit the GBLUP model (y = Xb + Zu + e) to the training data to estimate b and predict u.

- Apply the estimated effects to the genotypic data of individuals in fold i (the validation set) to obtain predicted genetic values (ĝ).

- Validation: Correlate the predicted values (ĝ) with the observed phenotypes (y) in the validation set across all folds.

- Output: The mean correlation coefficient (r) serves as the estimate of prediction accuracy.

Protocol: Testing for Model Assumption Violations

Objective: To diagnose departures from LMM assumptions that may bias GBLUP results.

- Normality of Residuals: Generate a Q-Q plot of the studentized residuals from the fitted model. Systematic deviations from the diagonal line suggest non-normality.

- Homoscedasticity: Plot residuals against fitted values. A funnel-shaped pattern indicates heteroscedasticity.

- Genetic Architecture: Perform a GWAS using the residuals from a GBLUP model fitted with only an intercept as a fixed effect. An excess of small p-values may signal major-effect loci not captured by the infinitesimal G matrix.

Assumptions, Limitations, and Research Implications

Table 2: Key Assumptions and Their Practical Limitations in GBLUP

| Assumption | Theoretical Justification | Common Violations & Consequences | Mitigation Strategies in Research |

|---|---|---|---|

| Infinitesimal Genetic Architecture | All markers explain a small amount of variance; trait controlled by many QTLs. | Presence of large-effect QTLs leads to biased h² estimates and suboptimal predictions. | Use Bayesian models (e.g., BayesR) or incorporate known major genes as fixed effects. |

| Additive Gene Action | The G matrix models only additive effects. | Non-additive (dominance, epistasis) effects inflate residual variance and reduce accuracy. | Construct separate dominance/epistasis relationship matrices; use non-linear ML models. |

| Correctly Specified G Matrix | G perfectly captures co-ancestry and Mendelian sampling. | Population structure, ascertainment bias in markers, or close relatives lead to inaccurate G. | Use pedigree A matrix, adjust G for allelic frequency, or use LD-adjusted G. |

| Homogeneous Residual Variance | Residual error (σ²ₑ) is constant across all observations. | Heteroscedasticity due to uneven measurement error or genotype-by-environment interaction. | Apply transformations (log, Box-Cox) to y; use weighted analyses. |

| No Correlation Between u and e | Genetic and environmental effects are independent. | Presence of gene-environment correlation (e.g., relatives sharing a common environment) biases h² estimates. | Explicitly model the correlation structure if the causative environment is known. |

Visualizing the GBLUP Framework and Workflow

Diagram: GBLUP Analysis Workflow.

Diagram: Decomposition of Phenotypic Value in LMM.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools and Resources for GBLUP Research

| Item (Software/Package) | Function & Application | Key Features for GBLUP |

|---|---|---|

| R with sommer/rrBLUP | Primary statistical environment for fitting LMMs and GBLUP. | Efficient REML solvers, direct handling of G matrix, cross-validation utilities. |

| GCTA (Genome-wide Complex Trait Analysis) | Standalone tool for variance component estimation and genomic prediction. | Specialized for large-scale genetic data, advanced G matrix options (GRM). |

| PLINK 2.0 | Whole-genome association analysis and data management. | Quality control, genotype filtering, and creation of input files for G matrix calculation. |

| Python numpy/scipy + pygwas | Custom scripting and analysis for non-standard model extensions. | Flexible linear algebra for prototyping new relationship matrices or algorithms. |

| BLUPF90 Suite | High-performance computing suite for animal breeding models. | Extremely efficient for very large n (millions), parallel processing capabilities. |

| TASSEL | Integrated platform for trait analysis and association mapping. | User-friendly GUI and pipeline for GBLUP, integrates with plant genomics datasets. |

| High-Performance Computing (HPC) Cluster | Infrastructure for computationally intensive REML analysis on large cohorts. | Essential for datasets with >10k individuals and >100k markers. |

Within the genomic best linear unbiased prediction (GBLUP) framework, the infinitesimal model is a foundational assumption. It posits that complex traits are influenced by a very large number of genetic variants, each with an exceedingly small, additive effect, and that these effects are normally distributed. This whitepaper provides a technical examination of this assumption, its evidence, implications for GBLUP, and methodologies for its validation in the context of modern genomic research and drug target discovery.

Theoretical Foundation and GBLUP Implications

The GBLUP model relies on the relationship matrix (G) to estimate genomic breeding values. The G matrix is built under the infinitesimal assumption, implying that all markers contribute to the genetic relationship and, by extension, to the trait variance. A key limitation arises when this assumption is violated; for instance, the presence of large-effect variants or substantial non-additive variance can reduce the accuracy of GBLUP predictions and bias heritability estimates.

Empirical Evidence and Quantitative Data

Recent genome-wide association studies (GWAS) and whole-genome sequencing projects provide data on the distribution of variant effects for complex traits.

Table 1: Summary of Variant Effect Distributions for Selected Complex Traits (Based on Recent Large-Scale GWAS Meta-Analyses)

| Trait | Approx. Number of Associated Loci (p<5e-8) | Estimated Proportion of SNP Heritability Explained by Top Loci | Estimated Number of Causal Variants (Infinitesimal) | Largest Reported Effect Size (OR / Beta) | Primary Study Source |

|---|---|---|---|---|---|

| Schizophrenia | ~290 | ~20% | Tens of thousands | OR ~1.2 | Trubetskoy et al., Nature, 2022 |

| Height | ~12,000 | ~25% | >100,000 | ~1 cm / allele | Yengo et al., Nature, 2022 |

| Coronary Artery Disease | ~300 | ~15% | Thousands | OR ~1.7 | Aragam et al., Nature Genetics, 2022 |

| Type 2 Diabetes | ~1,300 | ~15-20% | Thousands | OR ~1.7 | Suzuki et al., Nature, 2024 |

| Educational Attainment | ~3,900 | ~10% | Tens of thousands | ~0.02 SD / allele | Okbay et al., Nature Genetics, 2022 |

Table 2: Methods for Evaluating the Infinitesimal Assumption

| Method | Principle | What it Tests | Key Output |

|---|---|---|---|

| Linkage Disequilibrium Score Regression (LDSC) | Uses GWAS summary statistics and LD structure | Polygenicity vs. bias; whether trait heritability is spread across many variants. | Intercept (confounding bias), h² SNP, Mean χ². |

| Mixture Models (e.g., BGMIX, FINEMAP) | Bayesian sparse modeling | Proportion of variants with non-zero effects and their distribution. | Posterior inclusion probabilities (PIPs), effect size distributions. |

| Genomic Partitioning/Annotation | Stratifies heritability by functional annotations | Whether heritability is enriched in specific genomic regions, contradicting uniform infinitesimal spread. | Enrichment statistics per annotation. |

| GBLUP Prediction Accuracy Comparison | Compares models using all SNPs vs. pruned/filtered SNP sets | Sensitivity of prediction to inclusion of many small-effect variants. | Prediction accuracy (r²) in hold-out validation sets. |

Experimental Protocols for Validation

Protocol: LD Score Regression to Assess Polygenicity

Objective: Quantify the degree of polygenicity and test for deviation from a pure infinitesimal model.

Inputs: GWAS summary statistics for the trait of interest, pre-computed LD scores for a reference population (e.g., 1000 Genomes EUR).

Software: LDSC (v1.0.1 or higher).

Steps:

- Data Preparation: Ensure the summary statistics file contains SNP ID, effect allele, other allele, effect size (beta or OR), standard error, p-value, and sample size. Use the provided munge_sumstats.py script to harmonize it with the reference LD scores.

- Run LDSC: Execute ldsc.py --h2 [sumstats.gz] --ref-ld-chr [baselineLD_v.x.x/] --w-ld-chr [weights.] --out [output_prefix].

- Interpretation: An intercept well above 1 indicates confounding (e.g., population stratification). The SNP-based heritability (h² SNP) scaled by the number of SNPs indicates average variant contribution. A low "mean χ²" relative to total h² suggests many very small effects (infinitesimal), while high values suggest fewer, larger effects.
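The regression behind these outputs can be illustrated on simulated summary statistics. Under the LD Score regression model, the expected χ² statistic of SNP j is N·h²·ℓⱼ/M plus an intercept, where ℓⱼ is the LD score, N the sample size, and M the number of SNPs; the intercept equals 1 when there is no confounding. The sketch below is not the LDSC software: it uses plain unweighted least squares, invented LD scores, and no block jackknife, so it only shows where the slope and intercept come from.

```python
import numpy as np

rng = np.random.default_rng(7)
M_snps, N, h2_true = 20000, 20000, 0.5

ld = rng.uniform(1, 50, size=M_snps)               # hypothetical LD scores
expected = 1.0 + N * h2_true * ld / M_snps         # E[chi2_j] with no confounding
# z_j ~ N(0, E[chi2_j]), so z_j^2 is E[chi2_j] times a 1-df chi-square draw
chi2 = expected * rng.chisquare(1, size=M_snps)

# Unweighted regression of chi2 on LD score
A = np.column_stack([np.ones(M_snps), ld])
(intercept, slope), *_ = np.linalg.lstsq(A, chi2, rcond=None)
h2_hat = slope * M_snps / N

print(round(float(h2_hat), 2), round(float(chi2.mean()), 2))
```

In this toy setting the slope carries the polygenic signal, while confounding that affects all SNPs equally would raise the intercept instead. That separation is what the interpretation step relies on.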

Protocol: Evaluating GBLUP Prediction Accuracy with SNP Subsets

Objective: Empirically test if prediction accuracy deteriorates when excluding small-effect variants.

Population: Genotyped and phenotyped cohort (n > 2000 recommended).

Software: PLINK, GCTA, or R packages like rrBLUP.

Steps:

- Data Split: Randomly divide the population into a training set (e.g., 80%) and a validation set (20%).

- GWAS on Training Set: Perform GWAS on the training set to obtain per-SNP p-values and effect sizes.

- Create SNP Subsets: From the full SNP set, create subsets:

- Set A: All SNPs (standard infinitesimal assumption).

- Set B: SNPs with GWAS p-value < 0.05.

- Set C: Top k independent SNPs (e.g., 1000) via clumping (PLINK --clump).

- Build GRMs: Construct Genomic Relationship Matrices (GRMs) in GCTA for each SNP subset (--make-grm).

- Run GBLUP: Estimate GEBVs for the validation set using each GRM from the training set.

- Calculate Accuracy: Correlate predicted GEBVs with observed phenotypes in the validation set. A gradual drop in accuracy from Set A to Set C supports the infinitesimal model's requirement for many variants.
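The comparison can be prototyped in NumPy before running it at scale with PLINK and GCTA. The sketch below is a simplified stand-in for that pipeline: the marginal association test uses a normal approximation (|t| > 1.96 for p < 0.05), clumping is skipped because the simulated SNPs are independent, the top-k set uses k = 50, and the variance ratio is assumed known. All function names are hypothetical.

```python
import numpy as np

def grm(M):
    """GRM from genotypes coded 0/1/2, centered on sample allele frequencies."""
    p = M.mean(axis=0) / 2
    W = M - 2 * p
    return W @ W.T / (2 * np.sum(p * (1 - p)))

def predict(G, y, train, test, lam):
    """GBLUP prediction for `test` using `train` phenotypes only."""
    mu = y[train].mean()
    a = np.linalg.solve(G[np.ix_(train, train)] + lam * np.eye(len(train)),
                        y[train] - mu)
    return mu + G[np.ix_(test, train)] @ a

rng = np.random.default_rng(8)
n, m, h2 = 400, 1000, 0.5
M = rng.binomial(2, 0.5, size=(n, m)).astype(float)
g = (M - M.mean(axis=0)) @ rng.normal(size=m)       # every SNP has a small effect
y = g / g.std() * np.sqrt(h2) + rng.normal(0.0, np.sqrt(1 - h2), n)

idx = rng.permutation(n)
train, test = idx[:320], idx[320:]                  # 80% / 20% split

# Marginal association in the training set only
Mt = M[train] - M[train].mean(axis=0)
yt = y[train] - y[train].mean()
r = (Mt.T @ yt) / np.sqrt((Mt**2).sum(axis=0) * (yt**2).sum())
t = r * np.sqrt((len(train) - 2) / (1 - r**2))

subsets = {
    "A (all SNPs)": np.arange(m),
    "B (p < 0.05)": np.flatnonzero(np.abs(t) > 1.96),
    "C (top 50)": np.argsort(-np.abs(t))[:50],
}
accuracy = {}
for name, cols in subsets.items():
    pred = predict(grm(M[:, cols]), y, train, test, lam=(1 - h2) / h2)
    accuracy[name] = float(np.corrcoef(pred, y[test])[0, 1])

for name, value in accuracy.items():
    print(name, round(value, 2))
```

SNP selection uses the training set only; selecting SNPs on the full sample would leak validation phenotypes into the GRM and inflate the apparent accuracy of Sets B and C.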


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Infinitesimal Architecture Research

| Item | Function/Description | Example/Provider |

|---|---|---|

| High-Density Genotyping Arrays | Capture common SNP variation across the genome for large cohort studies. | Illumina Global Screening Array, Affymetrix Axiom Biobank Array. |

| Whole Genome Sequencing Services | Provide complete variant calling for assessing ultra-rare and structural variation contributions. | Illumina NovaSeq, PacBio HiFi, BGI platforms. |

| GWAS Summary Statistics | Pre-computed association data for meta-analysis and polygenicity testing. | Public repositories: GWAS Catalog, PGS Catalog, IEU OpenGWAS. |

| LD Reference Panels | Population-specific linkage disequilibrium data for heritability and fine-mapping analyses. | 1000 Genomes Phase 3, UK Biobank reference, gnomAD. |

| LDSC Software Suite | Primary tool for LD Score regression to estimate heritability, polygenicity, and genetic correlation. | Available from the Broad Institute (github.com/bulik/ldsc). |

| GCTA Software | Tool for genome-wide complex trait analysis, including GRM construction and GBLUP. | Yang et al., Nature Genetics, 2011 (cnsgenomics.com/software/gcta). |

| Functional Annotation Databases | Annotate SNPs by genomic context to partition heritability. | ANNOVAR, Ensembl Variant Effect Predictor (VEP), Roadmap Epigenomics, GTEx. |

| High-Performance Computing (HPC) Cluster | Essential for processing large genomic datasets, running mixed models, and simulations. | Local institutional clusters or cloud solutions (AWS, Google Cloud). |

Within the Genomic Best Linear Unbiased Prediction (GBLUP) model framework, the Genomic Relationship Matrix (G) is a foundational component, central to the assumption that genomic relationships capture the additive genetic covariance between individuals. The accuracy and interpretability of GBLUP directly hinge on the correct specification and calculation of G. This technical guide examines the methodologies for constructing G, its interpretation as a measure of genetic similarity, and the implications of its calculation on the broader GBLUP model assumptions, particularly regarding population structure and the equivalence of genomic and pedigree-based relationships.

Core Methodologies for Calculating G

The standard G matrix is an n x n symmetric matrix, where n is the number of genotyped individuals. Each element Gᵢⱼ represents the genomic relationship between individuals i and j. The predominant method, proposed by VanRaden (2008), has several key variants.

Experimental Protocol for Constructing G (VanRaden Method 1):

- Genotype Data Preparation: Obtain a matrix M of dimensions n x m, where m is the number of biallelic markers. Code genotypes as 0, 1, or 2 for the number of copies of a designated reference allele.

- Allele Frequency Calculation: Compute the allele frequency pₖ for the reference allele at locus k across all individuals.

- Centering the Matrix: Create a matrix Z by centering M: Z = M - P, where P is a matrix where column k contains 2pₖ.

- Normalization: Calculate the scaling factor D = Σ [2pₖ(1-pₖ)] across all k markers.

- Matrix Computation: Compute the Genomic Relationship Matrix as: G = (Z Zᵀ) / D.

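Alternative Calculation (VanRaden Method 2): Instead of dividing by a single pooled denominator D, this method scales each marker by its own expected variance: G = Z D₂ Zᵀ, where D₂ is a diagonal matrix with elements 1 / [m · 2pₖ(1-pₖ)]. Rare alleles therefore receive more weight than in Method 1. In both methods, the choice between observed allele frequencies and base-population frequencies affects the scaling of relationships relative to the pedigree base population.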
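The two scalings can be compared side by side. In this sketch Method 1 divides the cross-product by the pooled sum of 2pₖ(1-pₖ), while Method 2 weights each marker by the reciprocal of its own 2pₖ(1-pₖ) and averages over markers, as commonly described for VanRaden (2008). The data are simulated, allele frequencies are taken from the sample, and monomorphic SNPs are dropped because Method 2 would divide by zero for them.

```python
import numpy as np

def g_method1(M):
    """Pooled scaling: Z Z' / sum_k 2 p_k (1 - p_k)."""
    p = M.mean(axis=0) / 2
    Z = M - 2 * p
    return Z @ Z.T / np.sum(2 * p * (1 - p))

def g_method2(M):
    """Per-marker scaling: each SNP weighted by 1 / (2 p_k (1 - p_k)), averaged over m."""
    p = M.mean(axis=0) / 2
    Z = M - 2 * p
    w = 1.0 / (2 * p * (1 - p))            # requires polymorphic SNPs
    return (Z * w) @ Z.T / M.shape[1]

rng = np.random.default_rng(9)
freqs = rng.uniform(0.05, 0.5, size=2000)           # hypothetical allele frequencies
M = rng.binomial(2, freqs, size=(50, 2000)).astype(float)
M = M[:, M.std(axis=0) > 0]                         # drop monomorphic SNPs

G1, G2 = g_method1(M), g_method2(M)
off = ~np.eye(len(G1), dtype=bool)
print(round(float(np.mean(np.diag(G1))), 2), round(float(np.mean(np.diag(G2))), 2))
print(round(float(np.corrcoef(G1[off], G2[off])[0, 1]), 2))
```

Both matrices average close to 1 on the diagonal in random-mating data; they differ mainly in how much individual pairs are driven by sharing of low-frequency alleles.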

Table 1: Effect of Allele Frequency and Marker Density on Genomic Relationship Estimates

| Parameter | Value/Range Tested | Impact on G Matrix Element (Gᵢⱼ) | Key Reference |

|---|---|---|---|

| Minor Allele Frequency (MAF) Filter | No filter vs. MAF > 0.01 | Inclusion of rare alleles inflates diagonal elements (self-relationships). Standardization reduces this bias. | Yang et al., 2010 |

| Number of Markers (m) | 50K, 500K, Whole Genome Seq | Precision of Gᵢⱼ increases roughly as √m and plateaus at high density; higher m reduces sampling error and better approximates true IBD. | Habier et al., 2013 |

| Base Allele Frequency | Current vs. Historical | Using historical pₖ anchors G to a defined genetic base. Using observed pₖ centers on the sample mean. | VanRaden, 2008 |

| Standardization Method | VanRaden Method 1 vs. Method 2 | Method 2 produces relationships more comparable to pedigree A-matrix under selection. | Forneris et al., 2016 |

Interpretation and Critical Assumptions

The G matrix is interpreted as an empirical estimate of the proportion of the genome that is identical by descent (IBD) between two individuals. Its use in GBLUP rests on critical assumptions:

- Additivity: G captures only additive genetic effects.

- Representativeness: The markers adequately cover the genome and are in linkage disequilibrium with causal variants.

- Population Definition: The choice of allele frequencies defines the base population to which relationships are referenced. Incorrect specification can lead to biased heritability estimates and predictions.

Visualization of G Matrix Calculation and Role in GBLUP

Workflow for Constructing the G Matrix

Role of G Matrix within the GBLUP Framework

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Genomic Relationship Analysis

| Item / Reagent | Function / Purpose | Example Vendor/Software |

|---|---|---|

| High-Density SNP Array | Provides standardized, genome-wide genotype data for constructing G. | Illumina BovineHD (777K), Affymetrix Axiom |

| Whole Genome Sequencing (WGS) Data | Gold standard for marker discovery and ultimate density for IBD estimation. | Illumina NovaSeq, PacBio HiFi |

| PLINK Software | Industry-standard toolkit for processing raw genotype data, QC, and preliminary G matrix calculations. | Purcell et al., 2007 |

| GCTA Software | Specialized tool for advanced genetic analyses, including computing G matrix and estimating genomic heritability. | Yang et al., 2011 |

| PREGSF90 (BLUPF90 Suite) | Standard in animal breeding for large-scale genomic prediction, implements G matrix in mixed model equations. | Misztal et al., 2002 |

| R rrBLUP Package | Comprehensive R package for genomic prediction, including G matrix construction and GBLUP model fitting. | Endelman, 2011 |

| MAF Filter Scripts | Custom scripts or software parameters to remove rare variants, stabilizing G matrix estimates. | Typically implemented in PLINK/GCTA |

Within the genomic best linear unbiased prediction (GBLUP) model, the assumption of normality for the random genetic effects (u) and the residuals (e) is a foundational statistical premise. The core mixed model equation is y = Xb + Zu + e, where y is the vector of phenotypic observations, X and Z are design matrices, and b is the vector of fixed effects. The assumptions are u ~ N(0, Gσ²ᵤ) and e ~ N(0, Iσ²ₑ), where G is the genomic relationship matrix. Violations of these normality assumptions can lead to biased variance component estimates, inaccurate standard errors, and suboptimal predictive accuracy. This in turn compromises the validity of genomic heritability estimates, selection decisions in breeding, and genetic risk prediction in pharmaceutical development.

Quantitative Impact of Normality Violations

The following table summarizes key findings from simulation studies on the consequences of non-normal u and e.

Table 1: Consequences of Violating Normality Assumptions in GBLUP

| Violation Type | Impact on Variance Component (σ²ᵤ) | Impact on Predictive Ability (r̂) | Impact on Model Fit (Log-Likelihood) | Primary Reference Method |

|---|---|---|---|---|

| Heavy-tailed e (t-distribution) | Overestimation | Moderate decrease (~5-15%) | Significant decrease | Bayesian Robust Regression |

| Skewed u (Exponential) | Underestimation | Minor decrease (~2-8%) | Decrease | Data Transformation (e.g., Box-Cox) |

| Contaminated e (Mixture) | Severe overestimation | Substantial decrease (~10-25%) | Severe decrease | Mixture Models / Trimming |

| Normal u, Non-normal e | Bias propagates to σ²ᵤ | Variable, often reduced | Unreliable | Residual Bootstrap |

Experimental Protocols for Assessment

Protocol 1: Graphical Diagnostics (Quantile-Quantile Plots)

- Model Fitting: Fit the GBLUP model using REML or Bayesian methods to obtain predictions for û and residuals ê.

- Standardization: Standardize the predicted random effects and residuals by their estimated standard errors.

- Plot Generation: Create a Q-Q plot of the standardized values against the theoretical quantiles of a standard normal distribution.

- Interpretation: Systematic deviations from the diagonal line (e.g., S-shapes, heavy tails) indicate departures from normality. This is a fundamental first step in any model diagnostics suite.
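The coordinates of such a Q-Q plot are simple to compute by hand, which helps when a plotting library is not available or when the points feed into a later check. The sketch below standardizes by the sample mean and standard deviation, a simplification of the per-element standard errors named in the protocol, and uses simulated residuals; `qq_points` is a hypothetical helper.

```python
import numpy as np
from statistics import NormalDist

def qq_points(values):
    """Return (theoretical, observed) quantiles for a normal Q-Q plot."""
    values = np.asarray(values, dtype=float)
    obs = np.sort((values - values.mean()) / values.std(ddof=1))
    n = len(obs)
    probs = (np.arange(1, n + 1) - 0.5) / n            # plotting positions
    theo = np.array([NormalDist().inv_cdf(q) for q in probs])
    return theo, obs

# Simulated residuals: one normal set, one heavy-tailed set (t with 3 df)
rng = np.random.default_rng(10)
examples = {
    "normal": rng.normal(size=500),
    "heavy-tailed": rng.standard_t(df=3, size=500),
}
for name, res in examples.items():
    theo, obs = qq_points(res)
    # Largest vertical distance from the diagonal, a crude summary of the plot
    print(name, round(float(np.max(np.abs(obs - theo))), 2))
```

Plotting `obs` against `theo` gives the usual diagram; heavy tails show up as points bending away from the diagonal at both ends.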

Protocol 2: Formal Hypothesis Testing (Shapiro-Wilk / Mardia’s Test)

- Residual Collection: Extract the vector of residuals ê from the fitted GBLUP model.

- Univariate Test: Apply the Shapiro-Wilk test to ê. A significant p-value (e.g., p < 0.01) rejects the null hypothesis of normality.

- Multivariate Test (for u): For the predicted random effects û, test for multivariate normality using Mardia’s test (skewness and kurtosis).

- Limitation Note: These tests are sensitive to large sample sizes common in genomics, where even trivial deviations may be flagged as significant.

Protocol 3: Simulation-Based Assessment (Parametric Bootstrap)

- Simulate under H₀: Generate a new phenotype vector y_sim using the estimated variance components (σ̂²_u, σ̂²_e) under the assumptions u ~ N(0, Gσ̂²_u) and e ~ N(0, Iσ̂²_e).

- Refit Model: Fit the GBLUP model to y_sim and store the standardized û_sim and ê_sim.

- Repeat: Perform steps 1-2 for B iterations (e.g., B=1000).

- Generate Envelope: Calculate empirical confidence intervals (e.g., 95%) for the Q-Q plot at each quantile from the bootstrap distribution.

- Compare: Overlay the observed Q-Q plot from the real data. Observed points falling outside the simulation envelope indicate a violation of the normality assumption.
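The envelope construction in Protocol 3 can be sketched in Python. This is a simplified illustration under stated assumptions, not a full implementation: to stay self-contained it draws each bootstrap replicate directly from a standard normal instead of simulating y_sim and refitting the GBLUP model. The function name `qq_envelope`, its arguments, and the plotting positions (i - 0.5)/n are hypothetical choices made for the example. In a real analysis, each replicate would be the standardized û_sim or ê_sim from a refitted model.

```python
import numpy as np
from statistics import NormalDist


def qq_envelope(std_values, n_boot=1000, level=0.95, seed=0):
    """Pointwise simulation envelope for a normal Q-Q plot.

    std_values: standardized effects or residuals from the fitted model.
    Returns theoretical quantiles, sorted observations, lower and upper
    envelope bounds, and a boolean mask of points outside the envelope.
    """
    rng = np.random.default_rng(seed)
    obs = np.sort(np.asarray(std_values, dtype=float))
    n = obs.size
    # Theoretical standard-normal quantiles at plotting positions (i - 0.5) / n
    probs = (np.arange(1, n + 1) - 0.5) / n
    nd = NormalDist()
    theo = np.array([nd.inv_cdf(p) for p in probs])
    # Assumption: replicates come straight from N(0, 1); a full parametric
    # bootstrap would simulate y, refit the model, and standardize instead.
    sims = np.sort(rng.standard_normal((n_boot, n)), axis=1)
    alpha = 1.0 - level
    lower = np.quantile(sims, alpha / 2, axis=0)
    upper = np.quantile(sims, 1 - alpha / 2, axis=0)
    outside = (obs < lower) | (obs > upper)
    return theo, obs, lower, upper, outside


# Usage: a deliberately skewed sample should leave the envelope in the tails.
rng = np.random.default_rng(1)
skewed = rng.exponential(size=500)
skewed = (skewed - skewed.mean()) / skewed.std()
theo, obs, lower, upper, outside = qq_envelope(skewed)
print(f"{outside.sum()} of {obs.size} points fall outside the 95% envelope")
```

Because the envelope is pointwise, a few points outside it are expected even under normality; systematic excursions in the tails are the signal of interest.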

Visualization of Diagnostic Workflow

Diagram 1: Normality Assessment Workflow for GBLUP.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Normality Diagnostics

| Tool/Reagent | Function in Diagnostics | Example Implementation / Package |

|---|---|---|

| REML/Bayesian Software | Fits the GBLUP model to obtain variance components and predictions of u and e. | sommer (R), ASReml-R, BLR (R), stan |

| Statistical Test Suite | Provides formal tests for univariate and multivariate normality. | stats::shapiro.test (R), MVN::mardiaTest (R) |

| Visualization Library | Creates diagnostic plots (Q-Q plots, histograms, density overlays). | ggplot2::geom_qq, car::qqPlot (R) |

| Bootstrap Engine | Automates simulation-based validation and confidence interval construction. | boot (R), custom for-loops with MASS::mvrnorm |

| Data Transformer | Applies transformations to phenotypes to mitigate non-normality in e. | forecast::BoxCox (R), bestNormalize (R) |

| Robust Modeling Package | Fits alternative models that are less sensitive to non-normal tails (e.g., using t-distributions). | robustBLUP (R), MCMCglmm (R, Bayesian) |

Within the genomic best linear unbiased prediction (GBLUP) framework, Assumption 4 posits that phenotypic variation is explained by the additive sum of individual genetic marker effects, with negligible contribution from non-additive epistatic interactions (gene-gene interactions). This assumption simplifies the complex architecture of quantitative traits, enabling computationally efficient genomic predictions. This whitepaper critically examines the biological validity, mathematical formulation, and practical implications of this assumption in the context of modern genomic selection and drug target discovery.

Mathematical Foundation of Additivity in GBLUP

The standard GBLUP model is represented as:

y = Xβ + Zu + e

Where:

- y is the vector of phenotypic observations.

- X is the design matrix for fixed effects (β).

- Z is the design matrix relating observations to random genetic effects.

- u is the vector of random additive genetic effects, assumed ~N(0, Gσ²ₐ).

- e is the vector of residual errors, ~N(0, Iσ²ₑ).

The G matrix is the genomic relationship matrix, calculated from marker data, and captures additive genetic covariances between individuals. The explicit omission of an epistatic variance component (σ²ᵢ) is the core of Assumption 4.

Variance Component Partitioning Under the Additive Model

Under GBLUP, the total genetic variance (σ²_g) is assumed to be fully additive (σ²_g = σ²ₐ), so the phenotypic variance decomposes as:

σ²ₚ = σ²ₐ + σ²ₑ

where σ²ₚ is the phenotypic variance. In reality, the total genetic variance may be partitioned as:

σ²_g = σ²ₐ + σ²_d + σ²ᵢ

where σ²_d represents dominance variance and σ²ᵢ represents the total epistatic variance, itself a sum of interaction terms such as additive×additive (σ²_{a×a}) and additive×dominance (σ²_{a×d}).

Table 1: Comparative Variance Component Estimates for Complex Traits

| Trait/Disease | Estimated Additive Variance (σ²ₐ) | Estimated Epistatic Variance (σ²ᵢ) | Study/Model | Reference |
|---|---|---|---|---|
| Human Height (UK Biobank) | ~80% of σ²_g | < 5% of σ²_g | GREML with SNP-based GRM | [1] |
| Dairy Cattle Milk Yield | 85-90% of σ²_g | 2-8% of σ²_g | Extended GBLUP with epistatic GRM | [2] |
| Maize Grain Yield | 60-70% of σ²_g | 10-20% of σ²_g | Non-parametric kernel methods | [3] |
| Arabidopsis thaliana (Fitness) | ~40% of σ²_g | ~25% of σ²_g | Diallel crossing experiment | [4] |

Experimental Protocols for Detecting Epistasis

Genome-Wide Epistasis Scan via Variance Heterogeneity

This protocol tests for genetic interactions by detecting loci whose effects depend on the genetic background.

1. Sample & Genotyping: Use a population of N individuals with high-density SNP genotypes and precise phenotype measurements.

2. Phenotype Residualization: Regress the phenotype on fixed covariates (e.g., age, sex, principal components for population structure). Save the standardized residuals (y_res).

3. Variance Association Test: For each SNP i:
   a. Split the sample into genotype groups (e.g., AA, Aa, aa).
   b. Perform Levene's test or a similar test to compare the variance of y_res across genotype groups.
   c. A significant result (p < α, adjusted for multiple testing) flags the SNP as a variance QTL ("vQTL") and a candidate for epistatic interactions. Variance heterogeneity can also arise from gene-by-environment interaction or from the measurement scale of the phenotype, so it is suggestive rather than conclusive evidence of epistasis.

4. Follow-up Interaction Modeling: For significant vQTLs, explicitly test for pairwise interactions between the vQTL SNP and all other genome-wide SNPs using a regression model: y ~ SNP_vQTL + SNP_j + SNP_vQTL×SNP_j.
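The variance association test in step 3 can be sketched as follows. This is a minimal illustration that computes the Brown-Forsythe statistic (the median-centred variant of Levene's test) with NumPy only; the function name and the simulated data are assumptions made for the example. Under the null hypothesis the statistic approximately follows an F distribution with (k - 1, n - k) degrees of freedom, from which a p-value would be obtained in practice.

```python
import numpy as np


def brown_forsythe_stat(y, genotypes):
    """Brown-Forsythe statistic comparing phenotype variance across genotype groups."""
    y = np.asarray(y, dtype=float)
    genotypes = np.asarray(genotypes)
    groups = [y[genotypes == g] for g in np.unique(genotypes)]
    groups = [grp for grp in groups if grp.size > 1]
    k = len(groups)
    n = sum(grp.size for grp in groups)
    if k < 2:
        return float("nan")
    # Absolute deviations from each group's median
    z = [np.abs(grp - np.median(grp)) for grp in groups]
    z_bar = np.concatenate(z).mean()
    between = sum(zi.size * (zi.mean() - z_bar) ** 2 for zi in z)
    within = sum(((zi - zi.mean()) ** 2).sum() for zi in z)
    return (n - k) / (k - 1) * between / within


# Usage on simulated residuals: one SNP changes the variance, one does not.
rng = np.random.default_rng(3)
n = 3000
g_vqtl = rng.binomial(2, 0.5, size=n)
g_null = rng.binomial(2, 0.5, size=n)
y_res = rng.standard_normal(n) * (1.0 + 0.5 * g_vqtl)  # SD grows with genotype
w_vqtl = brown_forsythe_stat(y_res, g_vqtl)
w_null = brown_forsythe_stat(y_res, g_null)
print(f"vQTL SNP statistic: {w_vqtl:.1f}, null SNP statistic: {w_null:.2f}")
```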

Epistatic Genomic Relationship Matrix (Gᵢ) Construction

This methodology quantifies and incorporates epistatic variance into a prediction model.

1. Calculate Additive GRM (G): Standard method using the centered and scaled SNP matrix M: G = (MM') / k, where k is the number of SNPs.

2. Construct Epistatic GRM (Gᵢ): For additive×additive epistasis, the Hadamard product is used: Gᵢ = G ∘ G (element-wise multiplication). This matrix represents expected covariances due to pairwise multiplicative interactions.

3. Extended GBLUP Model Fitting: Fit the model: y = Xβ + Z₁u + Z₂v + e, where u ~ N(0, Gσ²ₐ) and v ~ N(0, Gᵢσ²ᵢ). Variance components σ²ₐ and σ²ᵢ are estimated via REML.

4. Model Comparison: Compare the model fit (via log-likelihood or prediction accuracy) to the standard additive GBLUP to assess the importance of epistasis.
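Steps 1 and 2 reduce to a few lines of NumPy. The sketch below uses simulated genotypes and true allele frequencies for centering and scaling, both of which are assumptions made for illustration; REML fitting of the extended model (step 3) is left to dedicated software.

```python
import numpy as np

rng = np.random.default_rng(42)
n, k = 50, 500                                  # individuals, SNPs (illustrative sizes)
p = rng.uniform(0.1, 0.9, size=k)               # assumed allele frequencies
X = rng.binomial(2, p, size=(n, k)).astype(float)

# Step 1: centre and scale each SNP, then form the additive GRM
M = (X - 2 * p) / np.sqrt(2 * p * (1 - p))
G = M @ M.T / k

# Step 2: additive x additive epistatic GRM as the Hadamard (element-wise) product
G_i = G * G

print("mean diagonal of G:  ", round(float(np.diag(G).mean()), 3))
print("mean diagonal of G_i:", round(float(np.diag(G_i).mean()), 3))
```

Some implementations rescale Gᵢ so that its mean diagonal equals 1, which puts σ²ₐ and σ²ᵢ on comparable scales; that step is optional and omitted here.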

Visualizing Genetic Architecture and Experimental Workflows

Diagram 1: Contrasting Additive and Epistatic Models

Diagram 2: Epistasis Detection & Modeling Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Tools for Studying Genetic Effects

| Item/Category | Function & Relevance to Additivity/Epistasis Research | Example Product/Technology |

|---|---|---|

| High-Density SNP Arrays | Genotype thousands to millions of markers genome-wide to construct accurate G and Gᵢ matrices. Essential for partitioning variance. | Illumina Global Screening Array, Affymetrix Axiom arrays. |

| Whole Genome Sequencing (WGS) | Provides complete genetic variant data, enabling the most comprehensive search for causal variants and their potential interactions. | Illumina NovaSeq, PacBio HiFi. |

| Phenotypic Automation | High-throughput, precise phenotyping is critical for detecting subtle interaction effects and reducing environmental noise (σ²ₑ). | Li-Cor photosynthesis systems, automated cell counters (e.g., Countess 3). |

| Statistical Genetics Software | Fits complex variance component models (REML), performs GWAS and epistasis scans. | GCTA (GREML analysis), PLINK (epistasis tests), R packages (sommer, rrBLUP). |

| CRISPR-Cas9 Gene Editing | Enables functional validation of predicted epistatic interactions by creating specific multi-gene knockouts/knockins in model systems. | Synthego engineered kits, IDT Alt-R system. |

| Epistasis Network Visualization | Software to map and interpret complex interaction networks identified from genome-scale data. | Cytoscape with dedicated plugins (e.g., aMat). |

Implications for Drug Development

The additive assumption carries significant implications for pharmacogenomics and polygenic risk scores (PRS). If disease susceptibility involves non-negligible epistasis, PRS based on additive models will have limited portability across diverse populations with different allele frequencies and thus different interaction landscapes. In drug target discovery, assuming additivity may overlook synergistic pathways where targeting an interacting pair of genes yields a therapeutic effect greater than the sum of individual inhibitions. Validating Assumption 4 is therefore not merely a statistical exercise but a prerequisite for robust translational genomics.

[1] Yang et al., Nature Genetics, 2015. "Genetic variance estimation with imputed variants finds negligible missing heritability for human height."

[2] Jiang et al., Genetics Selection Evolution, 2017. "The extent of linkage disequilibrium and epistasis in breeding populations."

[3] Technow et al., Genetics, 2014. "Genomic prediction of hybrid performance in maize with models incorporating dominance and epistasis."

[4] Huang et al., Nature, 2022. "Context-dependent genetic architecture in Arabidopsis thaliana."

Within the broader thesis examining the assumptions and limitations of the Genomic Best Linear Unbiased Prediction (GBLUP) model, a pivotal question arises: how can a model predicated on genomic relationships accurately predict complex traits without identifying causal variants? The answer lies in Linkage Disequilibrium (LD)—the non-random association of alleles at different loci. GBLUP exploits the pervasive structure of LD across the genome, using dense marker panels as proxies for causal quantitative trait loci (QTL). This whitepaper provides an in-depth technical guide on LD as the mechanistic engine enabling GBLUP, detailing the underlying principles, experimental validation, and practical implications for researchers and drug development professionals.

Theoretical Foundation: LD, Genomic Relationships, and GBLUP

The GBLUP Model Formulation

The standard GBLUP model is represented as:

y = Xβ + Zg + e

Where:

- y is the vector of phenotypic observations.

- X is the design matrix for fixed effects (β).

- Z is the design matrix relating individuals to their genomic random effect (g).

- g is the vector of genomic breeding values, assumed g ~ N(0, Gσ²_g).

- e is the vector of residual errors, e ~ N(0, Iσ²_e).

The core of the model is the Genomic Relationship Matrix (G), calculated from a dense set of m genome-wide markers: G = (MM') / m, where M is the centered (and sometimes scaled) genotype matrix. The fundamental assumption is that G captures the true additive genetic relationships and, by extension, the co-inheritance of chromosomal segments harboring causal variants.
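To make the model concrete, the sketch below builds G = MM'/m from simulated genotypes and solves Henderson's mixed model equations for the case Z = I. It assumes the variance ratio is known (supplied through a heritability h2) instead of being estimated by REML, and it adds a small ridge to G because a centered G is singular. The function name, the ridge value, and the simulation settings are all assumptions for illustration.

```python
import numpy as np


def gblup_solve(y, X, G, h2, ridge=0.01):
    """Solve the mixed model equations for y = Xb + g + e with Z = I.

    h2 is an assumed heritability; lam = sigma2_e / sigma2_g = (1 - h2) / h2.
    """
    n, p = X.shape
    lam = (1.0 - h2) / h2
    G_reg = G + ridge * np.eye(n)          # ridge keeps G invertible
    G_inv = np.linalg.inv(G_reg)
    lhs = np.block([[X.T @ X, X.T],
                    [X, np.eye(n) + lam * G_inv]])
    rhs = np.concatenate([X.T @ y, y])
    sol = np.linalg.solve(lhs, rhs)
    return sol[:p], sol[p:]                # fixed effects, genomic values


# Simulated example
rng = np.random.default_rng(0)
n, m, h2 = 100, 1000, 0.5
freq = rng.uniform(0.1, 0.9, size=m)
geno = rng.binomial(2, freq, size=(n, m)).astype(float)
M = (geno - 2 * freq) / np.sqrt(2 * freq * (1 - freq))   # centred and scaled
G = M @ M.T / m
g_true = M @ rng.normal(0.0, np.sqrt(h2 / m), size=m)
y = 2.0 + g_true + rng.normal(0.0, np.sqrt(1 - h2), size=n)
X = np.ones((n, 1))

b_hat, g_hat = gblup_solve(y, X, G, h2)
print("estimated mean:", round(float(b_hat[0]), 2))
print("corr(g_hat, g_true):", round(float(np.corrcoef(g_hat, g_true)[0, 1]), 2))
```

Solving the mixed model equations is equivalent to computing ĝ = G V⁻¹ (y − Xb̂) with V = G + λI, which is often more convenient when n is small.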

LD as the Proxy Mechanism

GBLUP does not require markers to be causal. Instead, it requires that markers be in LD with one or more QTL. The statistical relationship can be summarized as follows:

- Marker-QTL LD: A marker allele M1 is in LD with a QTL allele Q1. The effect of M1 estimated by GBLUP is not its own biological effect but a reflection of the average effect of Q1, with which it co-segregates in the population.

- Genomic Relationships as LD Summaries: The relationship between two individuals i and j in G is essentially the correlation of their marker genotypes across the genome. High genomic relationship implies they share many chromosomal segments identical-by-descent (IBD). These shared segments are, by definition, stretches of high LD. Therefore, if two individuals share a marker allele, they are also likely to share the linked QTL allele, leading to similar phenotypic values.

- Prediction as an LD-Weighted Average: The genomic estimated breeding value (GEBV) for an individual is a weighted sum of the effects of all markers, where the weights are the individual's genotypes. Since marker effects are correlated (via LD) with nearby QTL effects, the GEBV effectively approximates the sum of effects of all QTL in LD with the marker panel.
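A small simulation illustrates the proxy mechanism. In this sketch, which rests entirely on assumed settings (allele frequency 0.3, a marker that copies the QTL allele on 90% of haplotypes, a QTL effect of 1.0), a marker in LD with the QTL picks up most of the QTL effect in a simple regression, while an unlinked marker picks up essentially none. LD is measured as the squared correlation of allele counts.

```python
import numpy as np


def genotype_r2(a, b):
    """Squared correlation between two vectors of allele counts."""
    return float(np.corrcoef(a, b)[0, 1] ** 2)


def to_genotypes(haplotypes):
    """Pair consecutive haplotypes into diploid allele counts (0/1/2)."""
    return haplotypes.reshape(-1, 2).sum(axis=1)


rng = np.random.default_rng(7)
n_hap = 4000                                        # 2000 diploid individuals
qtl_hap = rng.binomial(1, 0.3, size=n_hap)
copied = rng.random(n_hap) < 0.9                    # marker copies QTL allele 90% of the time
linked_hap = np.where(copied, qtl_hap, rng.binomial(1, 0.3, size=n_hap))
unlinked_hap = rng.binomial(1, 0.3, size=n_hap)

qtl = to_genotypes(qtl_hap)
linked = to_genotypes(linked_hap)
unlinked = to_genotypes(unlinked_hap)

y = 1.0 * qtl + rng.standard_normal(qtl.size)       # only the QTL is causal

r2_linked = genotype_r2(linked, qtl)
r2_unlinked = genotype_r2(unlinked, qtl)
slope_linked = float(np.polyfit(linked, y, 1)[0])
slope_unlinked = float(np.polyfit(unlinked, y, 1)[0])
print(f"linked marker:   r2 = {r2_linked:.2f}, estimated effect = {slope_linked:.2f}")
print(f"unlinked marker: r2 = {r2_unlinked:.3f}, estimated effect = {slope_unlinked:.2f}")
```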

Experimental Evidence and Protocols

Key experiments have validated the central role of LD in GBLUP's predictive ability.

Protocol: Simulating Traits with Known Architecture to Decouple LD and Relationship

Objective: To isolate the contribution of LD from that of overall pedigree relationship. Methodology:

- Generate a Founder Population: Simulate a large historical population with random mating for many generations to establish realistic LD patterns.

- Create a Recent Pedigree: From the last generation of the historical population, create a structured pedigree (e.g., half-sib families).

- Define Causal Variants and Markers: Randomly select a set of loci as true QTL. A separate, denser set of SNPs is used as markers.

- Assign Effects: Draw QTL effects from a specified distribution (e.g., normal, mixture).

- Calculate True Breeding Value (TBV): TBV = Σ(QTLgenotype * QTLeffect).

- Phenotype Simulation: Phenotype = TBV + environmental noise.

- Analysis:

- Scenario A (LD Present): Use the dense marker panel (in LD with QTL) to construct G and run GBLUP.

- Scenario B (LD Broken): "Shuffle" the marker alleles across chromosomes within the recent pedigree generation, destroying marker-QTL LD while retaining the overall genomic relationship structure. Run GBLUP.

- Compare prediction accuracies (correlation between GEBV and TBV) between Scenarios A and B.

Protocol: Varying Marker Density and Population History

Objective: To demonstrate that prediction accuracy scales with the extent of LD captured. Methodology:

- Use real or simulated genotypes from populations with different effective sizes (Ne). The extent of LD is inversely related to Ne (small Ne = long LD blocks, large Ne = short LD blocks).

- For each population, subset markers to create panels of varying density (e.g., 1K, 50K, 500K, Whole Genome Sequence).

- Apply GBLUP for a simulated or real trait.

- Measure prediction accuracy in a validation set not used in model training.

Table 1: Impact of LD and Marker Density on GBLUP Accuracy

| Experimental Factor | Condition | Typical Impact on GBLUP Accuracy | Key Implication |
|---|---|---|---|
| Marker-QTL LD | High LD (Markers close to QTL) | High | LD is necessary for marker to proxy QTL. |
| Marker-QTL LD | No LD (Markers unlinked to QTL) | Near Zero | Accuracy collapses without LD, even with correct relationships. |
| Marker Density | Low Density (< Required for LD coverage) | Low | Insufficient markers to "cover" all QTL via LD. |
| Marker Density | High Density (Saturating LD coverage) | High, plateaus | Additional markers provide diminishing returns. |
| Population Effective Size (Ne) | Small Ne (Long-range LD) | High with fewer markers | Fewer markers needed to tag QTLs. |
| Population Effective Size (Ne) | Large Ne (Short-range LD) | Requires many more markers | Need high density (e.g., sequencing) to capture short LD blocks. |
| Training-Validation Relationship | Closely Related (Share recent LD blocks) | High | Shared chromosome segments enable accurate prediction. |
| Training-Validation Relationship | Distantly/Unrelated (LD blocks not shared) | Low | Prediction relies on LD being consistent across subpopulations. |

Visualizing the Core Concepts

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for GBLUP/LD Research

| Item | Function in Research | Example/Specification |

|---|---|---|

| High-Density SNP Array | Genotyping platform to obtain marker data for G matrix construction. Provides the necessary density to capture LD. | Illumina BovineHD (777K), Illumina Infinium Global Screening Array (GSA), Affymetrix Axiom arrays. |

| Whole Genome Sequencing (WGS) Data | Gold-standard for identifying all variants. Used to study the limits of LD-based prediction and the role of rare variants. | Short-read sequencing (Illumina NovaSeq), Long-read sequencing (PacBio, Oxford Nanopore). |

| Genotype Imputation Software | Increases marker density and sample size by inferring ungenotyped variants from a reference panel, enhancing LD coverage. | Beagle5, Minimac4, IMPUTE5. |

| GBLUP/REML Software | Fits the mixed linear model, estimates variance components (σ²g, σ²e), and solves for GEBVs. | GCTA, BLUPF90 family, ASReml, R packages (rrBLUP, sommer). |

| LD Calculation & Visualization Tool | Quantifies and visualizes pairwise LD between loci to assess population structure and marker coverage. | PLINK (--r2), Haploview, R (genetics, LDheatmap). |

| Genetic Relationship/Kinship Matrix Calculator | Constructs G from genotype data, often with options for different standardization methods. | PLINK (--make-rel), GCTA (--make-grm), PREGSF90. |

| Phenotypic Database | Curated, high-quality trait measurements for training and validating the GBLUP model. | Requires robust data management systems (e.g., LIMS) and standardized protocols. |

| Simulation Software | Generates synthetic genomes and phenotypes with known genetic architectures to test theoretical assumptions about LD. | QMSim, XSim, R/sim1000G, AlphaSimR. |

Implications and Limitations within the GBLUP Thesis

The reliance on LD shapes GBLUP's performance and limitations:

- Within-Population vs. Across-Population Prediction: Accuracy is high when predicting individuals within the same population as the training set because LD patterns (marker-QTL phase) are consistent. Prediction across divergent populations often fails because marker-QTL linkage phase and the extent of LD differ between populations (low persistence of LD phase).

- Long-Range LD from Selection: In breeding populations, selection can create long-range, persistent LD around major QTL, which GBLUP leverages effectively. However, this may inflate predictions for relatives if not properly validated.

- Non-Additive and Rare Variants: GBLUP's additive relationship matrix G primarily captures additive effects tagged by common markers in LD. Non-additive effects or rare variants not in LD with common markers are poorly captured, representing a model limitation.

- A Black Box? While GBLUP works without knowing causal variants, this is also a critique: it provides little biological insight into the trait's architecture. The effects are smeared across markers in LD, making marker interpretation difficult.

Linkage Disequilibrium is the fundamental engine that powers the GBLUP framework. By leveraging the correlation structure among markers induced by LD, the genomic relationship matrix G serves as a practical proxy for the genetic covariance arising from shared causal variants. This allows for accurate genetic prediction entirely from marker data, bypassing the formidable challenge of QTL identification. Understanding this mechanism is crucial for researchers and drug development professionals to correctly apply GBLUP, interpret its results, anticipate its limitations (particularly in cross-population prediction), and strategically design studies (e.g., choosing marker density and training population structure) to harness the power of LD effectively.

Implementing GBLUP: A Step-by-Step Guide for Biomedical Data Analysis

The Genomic Best Linear Unbiased Prediction (GBLUP) model is a cornerstone of modern genomic prediction and genome-wide association studies (GWAS). Its validity and predictive accuracy are fundamentally contingent upon the quality of input data. Violations of core GBLUP assumptions—including, but not limited to, Hardy-Weinberg equilibrium, minimal genotyping error, a homogeneous population structure, and properly normalized phenotypic distributions—can lead to biased variance component estimates, inflated type I error rates, and reduced predictive ability. This guide details the rigorous data preparation pipeline necessary to ensure these assumptions are reasonably met, thereby underpinning robust genomic analyses.

Genotype Quality Control (QC)

The primary goal of genotype QC is to filter out unreliable markers and samples to minimize technical artifacts.

Experimental Protocol for Genotype QC

A standard QC protocol, executed on raw genotype array data prior to imputation, involves sequential steps:

- Initial Data Format: Start with PLINK binary files (.bed, .bim, .fam).

- Sample-Level QC:

- Call Rate: Remove individuals with a genotype call rate < 0.98 (--mind 0.02 in PLINK).

- Sex Discrepancy: Compare reported sex with sex inferred from X chromosome heterozygosity. Discordant samples are flagged for exclusion or correction.

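- Relatedness & Duplicates: Calculate pairwise identity-by-descent (IBD) using a pruned set of autosomal SNPs. Expected pi-hat is about 1 for duplicates or monozygotic twins, 0.5 for first-degree relatives, 0.25 for second-degree relatives, and 0.125 for third-degree relatives. Remove one sample from each pair with pi-hat > 0.1875 (midway between second- and third-degree, which excludes duplicates and relatives up to second degree) or, more strictly, > 0.125 (third-degree), depending on study design.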

- Population Stratification: Perform multidimensional scaling (MDS) or principal component analysis (PCA) on a linkage disequilibrium (LD)-pruned SNP set. Visually inspect plots to identify and remove outliers from the main cluster.

- Variant-Level QC:

- Call Rate: Exclude SNPs with a call rate < 0.98 (--geno 0.02).

- Hardy-Weinberg Equilibrium (HWE): In case/control studies, test in controls only; otherwise test in the full sample. Remove SNPs with HWE p-value < 1e-06 (--hwe 1e-06).

- Minor Allele Frequency (MAF): Remove SNPs with MAF < 0.01 (--maf 0.01) to reduce noise and imputation errors.
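The three variant-level filters can be expressed compactly on a genotype matrix. The sketch below is an illustration, not a replacement for PLINK: the function name and return values are hypothetical, and the HWE p-value comes from a 1-degree-of-freedom chi-square approximation, whereas PLINK's `--hwe` uses an exact test by default, so p-values for rare variants will differ.

```python
import math
import numpy as np


def variant_qc(geno, min_call=0.98, min_maf=0.01, hwe_p=1e-6):
    """Variant-level QC on an (n_samples, n_snps) matrix of 0/1/2, np.nan = missing.

    Returns (keep mask, call rate, MAF, HWE p-value) per SNP.
    """
    geno = np.asarray(geno, dtype=float)
    n_samples = geno.shape[0]
    n_aa = np.sum(geno == 0, axis=0)
    n_ab = np.sum(geno == 1, axis=0)
    n_bb = np.sum(geno == 2, axis=0)
    n_called = n_aa + n_ab + n_bb
    call_rate = n_called / n_samples
    p = (2 * n_bb + n_ab) / np.maximum(2 * n_called, 1)
    maf = np.minimum(p, 1 - p)
    # Chi-square goodness-of-fit to Hardy-Weinberg proportions (approximation)
    observed = np.vstack([n_aa, n_ab, n_bb]).astype(float)
    expected = n_called * np.vstack([(1 - p) ** 2, 2 * p * (1 - p), p ** 2])
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(expected > 0, (observed - expected) ** 2 / expected, 0.0)
    chi2 = terms.sum(axis=0)
    # Survival function of chi-square with 1 df: erfc(sqrt(x / 2))
    p_hwe = np.array([math.erfc(math.sqrt(c / 2.0)) for c in chi2])
    keep = (call_rate >= min_call) & (maf >= min_maf) & (p_hwe >= hwe_p)
    return keep, call_rate, maf, p_hwe


# Usage: four toy SNPs, one per failure mode
good = np.repeat([0.0, 1.0, 2.0], [98, 84, 18])   # exact HWE proportions at p = 0.3
low_call = good.copy()
low_call[:20] = np.nan                            # 10% missing
monomorphic = np.zeros(200)
all_het = np.ones(200)                            # extreme HWE violation
geno = np.column_stack([good, low_call, monomorphic, all_het])
keep, call_rate, maf, p_hwe = variant_qc(geno)
print(keep)
```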

Table 1: Standard Quality Control Filters and Thresholds

| QC Level | Metric | Typical Threshold | Rationale |

|---|---|---|---|

| Sample | Individual Call Rate | ≥ 98% | Excludes low-quality DNA samples |

| Sample | Sex Discrepancy | Discordance Flagged | Ensures sample identity integrity |

| Sample | Relatedness (pi-hat) | < 0.1875 (or 0.125) | Controls for cryptic relatedness |

| Variant | SNP Call Rate | ≥ 98% | Removes poorly performing assays |

| Variant | Minor Allele Frequency (MAF) | ≥ 1% | Removes rare, poorly estimated variants |

| Variant | Hardy-Weinberg P-value | > 1e-06 | Flags genotyping errors, stratification |

Genotype Imputation

Imputation infers ungenotyped variants using a reference haplotype panel, increasing marker density and enabling meta-analysis.

Experimental Protocol for Imputation

- Pre-Imputation Preparation: Liftover genomic coordinates to the reference panel build (e.g., GRCh38). Pre-phase haplotypes using software like Eagle or SHAPEIT to improve accuracy.

- Reference Panel Selection: Choose a large, population-matched panel (e.g., TOPMed, HRC, 1000 Genomes Phase 3).

- Imputation Execution: Use a computationally efficient tool like Minimac4 (for Michigan/TOPMed server) or Beagle5. Split the genome into chunks (e.g., by chromosome) for parallel processing.

- Post-Imputation QC:

- Info Score/RSQ: Filter imputed variants with an imputation quality score < 0.7 (for dosage data).

- MAF Filtering: Apply a MAF filter appropriate for the analysis (e.g., MAF > 0.005).

- Format Conversion: Convert output (.vcf) to analysis-ready formats (e.g., PLINK2, BGEN).

Table 2: Key Metrics for Evaluating Imputation Quality

| Metric | Description | Acceptable Threshold | Interpretation |

|---|---|---|---|

| Info / RSQ | Estimated squared correlation between imputed and true genotype | > 0.7 (0.8 for fine-mapping) | Primary measure of imputation accuracy. Higher is better. |

| Allele Frequency Correlation | Correlation between imputed and reference panel MAF | > 0.95 | Indicates frequency calibration. |

| Properly Called % | Percentage of genotypes with highest probability > 0.9 | High percentage | Reflects certainty of the imputed calls. |

Phenotypic Standardization

Phenotype processing is critical for GBLUP, which assumes residuals are normally distributed.

Experimental Protocol for Phenotype Processing

- Outlier Detection & Treatment: For continuous traits, identify outliers (e.g., beyond ±4 SD from the mean). Winsorize or set to missing based on biological plausibility.

- Covariate Adjustment: Fit a linear model: Phenotype ~ Age + Sex + Genotyping_PC1 + ... + PCk + Other_Relevant_Covariates. Use the residuals for genomic analysis.

- Normalization: Apply an inverse normal transformation (INT) to the adjusted residuals. This maps the marginal distribution of the adjusted phenotype onto a standard normal distribution, which reduces the influence of extreme values and brings the data closer to the GBLUP normality assumption. It does not by itself guarantee that the model's genetic effects and residuals are normally distributed, so diagnostics on the fitted model remain worthwhile.

- Binary Traits: For case/control studies, ensure sufficient sample size and account for prevalence in variance component estimation. Covariate adjustment remains essential.
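For a continuous trait, the covariate adjustment and normalization steps can be sketched as below. The rank-based transform uses the Blom offset c = 3/8, which is one common convention and an assumption here; the function names and the simulated covariates are also illustrative. Ties are broken by sort order and missing values are not handled.

```python
import numpy as np
from statistics import NormalDist


def residualize(y, covariates):
    """Residuals from an ordinary least-squares fit of y on covariates plus intercept."""
    y = np.asarray(y, dtype=float)
    X = np.column_stack([np.ones(len(y)), covariates])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return y - X @ beta


def inverse_normal_transform(x, c=3.0 / 8.0):
    """Rank-based inverse normal transform with offset c (Blom: c = 3/8)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    order = x.argsort()
    ranks = np.empty(n)
    ranks[order] = np.arange(1, n + 1)
    probs = (ranks - c) / (n - 2 * c + 1)
    nd = NormalDist()
    return np.array([nd.inv_cdf(p) for p in probs])


# Usage on a simulated, right-skewed phenotype
rng = np.random.default_rng(11)
n = 500
age = rng.uniform(20, 70, size=n)
sex = rng.integers(0, 2, size=n).astype(float)
phenotype = 0.05 * age + 0.5 * sex + rng.lognormal(0.0, 0.75, size=n)

resid = residualize(phenotype, np.column_stack([age, sex]))
z = inverse_normal_transform(resid)
print("mean:", round(float(z.mean()), 4), "SD:", round(float(z.std()), 3))
```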

Table 3: Impact of Phenotypic Standardization Steps on Distribution

| Processing Step | Goal | Effect on Distribution | Relevance to GBLUP |

|---|---|---|---|

| Covariate Adjustment | Remove non-genetic variance | Reduces skew/kurtosis induced by covariates | Minimizes confounding, improves heritability estimation. |

| Inverse Normal Transform | Enforce normality | Maps residuals to a standard normal distribution (mean=0, SD=1) | Brings the data closer to the model's normality assumption; does not guarantee normal model residuals. |

| Winsorization | Mitigate outlier impact | Limits extreme values without discarding data | Reduces the influence of erroneous or non-representative data points. |

Visualizations

Diagram 1: Genomic Data Preparation Workflow for GBLUP

Diagram 2: Relationship Between Data Issues & GBLUP Assumption Violations

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for Genomic Data Preparation

| Item / Software | Primary Function | Key Application in Pipeline |

|---|---|---|

| PLINK 2.0 | Whole-genome association analysis toolset. | Core tool for genotype QC, format conversion, and basic association testing. |

| BCFtools | Utilities for variant calling file (VCF) manipulation. | Filtering, querying, and managing VCF files pre- and post-imputation. |

| Eagle / SHAPEIT | Haplotype phasing algorithms. | Accurate phasing of genotypes prior to imputation, improving accuracy. |

| Minimac4 / Beagle5 | Genotype imputation engines. | Inferring untyped variants using a reference haplotype panel. |

| QCTOOL / SNPTEST | Tool for processing genetic data and computing summary statistics. | Calculating QC metrics (e.g., info scores) and post-imputation analysis. |

| R / Python (statsmodels) | Statistical programming environments. | Phenotype standardization, covariate adjustment, inverse normal transformation, and visualization. |

| Reference Panels (TOPMed, HRC) | Curated collections of whole-genome sequenced haplotypes. | Essential resource for accurate genotype imputation. Population-matched panel is critical. |

| High-Performance Computing (HPC) Cluster | Distributed computing environment. | Necessary for computationally intensive steps: phasing, imputation, and GBLUP model fitting. |

Within the Genomic Best Linear Unbiased Prediction (GBLUP) framework, the Genomic Relationship Matrix (G) is a cornerstone, replacing the traditional pedigree-based numerator relationship matrix. The construction of G profoundly impacts the accuracy and bias of genomic estimated breeding values (GEBVs). This technical guide details prevalent methods, with a critical focus on their embedded assumptions and implications for GBLUP model performance in genomic selection and pharmaceutical trait mapping.

The VanRaden Method: Formulations and Protocol

The VanRaden (2008) method is the most widely adopted approach. It estimates genomic relationships by comparing individuals' marker genotypes to a defined base population.

Protocol 1: Constructing G via VanRaden Method 1

- Genotype Coding: Code genotypes for n individuals and m biallelic SNPs. For each SNP, assign values of 0, 1, or 2 representing the number of copies of a reference allele.

- Allele Frequency Calculation: Calculate the allele frequency p_i for the reference allele at the i-th SNP from the current population or a specified base population.

- Matrix Construction: Create an n x m matrix Z whose element for individual j and SNP i is (x_ji - 2p_i), where x_ji is the genotype code of individual j at SNP i.

- Normalization: Compute the genomic relationship matrix G as: G = (Z Z') / (2 Σ_i p_i(1 - p_i)). The denominator scales G to be analogous to the pedigree-based relationship matrix.

Protocol 2: Constructing G via VanRaden Method 2 (Weighting Markers by Expected Variance)

- Follow steps 1-3 from Protocol 1.

- Alternative Normalization: Compute G as: G = Z D Z', where D is a diagonal matrix with D_ii = 1 / [m · 2p_i(1-p_i)] and m is the number of SNPs. Each SNP is divided by its expected genotype variance, so this weighting gives SNPs with low minor allele frequency more influence than they have under Method 1.
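Both protocols can be sketched in one short function. The example follows VanRaden's (2008) scaling, which for Method 2 includes division by the number of markers m; allele frequencies are estimated from the sample when no base-population frequencies are supplied. The function name and simulated data are assumptions, and monomorphic SNPs must be removed beforehand because they would produce a zero denominator.

```python
import numpy as np


def vanraden_g(X, p=None, method=1):
    """Genomic relationship matrix from an (n, m) matrix of 0/1/2 genotype codes.

    p: reference-allele frequencies per SNP; estimated from X if None.
    method 1: G = Z Z' / (2 * sum p(1-p)); method 2: G = Z D Z' with
    D_ii = 1 / (m * 2 p_i (1 - p_i)).
    """
    X = np.asarray(X, dtype=float)
    n, m = X.shape
    if p is None:
        p = X.mean(axis=0) / 2.0
    Z = X - 2.0 * p                     # centre each SNP by twice its allele frequency
    het = 2.0 * p * (1.0 - p)           # expected genotype variance per SNP
    if method == 1:
        return Z @ Z.T / het.sum()
    if method == 2:
        return (Z / het) @ Z.T / m
    raise ValueError("method must be 1 or 2")


# Usage on simulated unrelated individuals
rng = np.random.default_rng(5)
n, m = 200, 2000
p_true = rng.uniform(0.1, 0.9, size=m)
X = rng.binomial(2, p_true, size=(n, m))
G1 = vanraden_g(X, method=1)
G2 = vanraden_g(X, method=2)
print("mean diagonal, method 1:", round(float(np.diag(G1).mean()), 3))
print("mean diagonal, method 2:", round(float(np.diag(G2).mean()), 3))
```

With sample-based frequencies each column of Z sums to zero, so every row of G sums to zero and G is singular; this is why a small value is often added to the diagonal, or G is blended with another matrix, before inversion.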

Workflow for VanRaden G Matrix Construction

Alternative Methods and Modifications

Method of Moments (Astle & Balding, 2009)

This approach accounts for population structure by estimating locus-specific weights.

Protocol:

- Compute the covariance matrix of SNP allele counts.

- Estimate the sharing of alleles identical-by-descent between individuals j and k at SNP i using a moment estimator.

- Average these estimates across all SNPs to form the overall genomic relationship matrix.

Weighted G Matrices (e.g., GBLUP|GA)

SNP-specific weights (e.g., based on prior variance estimates or GWAS results) can be incorporated into G.

Protocol:

- Obtain a weight w_i for each SNP i.

- Construct a diagonal weight matrix W with W_ii = w_i.

- Compute the weighted G matrix as: G_w = (Z W Z') / trace(W).
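The weighted construction is a one-line change from the unweighted case. In this minimal sketch the weights are arbitrary illustrative values, not estimates from any real analysis, and the function name is hypothetical. Multiplying the columns of Z by w avoids forming the m x m diagonal matrix W explicitly.

```python
import numpy as np


def weighted_g(Z, w):
    """Weighted genomic relationship matrix G_w = Z W Z' / trace(W), W = diag(w)."""
    Z = np.asarray(Z, dtype=float)
    w = np.asarray(w, dtype=float)
    if np.any(w < 0):
        raise ValueError("weights must be non-negative")
    return (Z * w) @ Z.T / w.sum()


# Usage: up-weight the first 50 SNPs (purely illustrative weights)
rng = np.random.default_rng(9)
n, m = 30, 400
p = rng.uniform(0.1, 0.9, size=m)
Z = rng.binomial(2, p, size=(n, m)) - 2 * p
w = np.ones(m)
w[:50] = 5.0
G_w = weighted_g(Z, w)
G_equal = weighted_g(Z, np.ones(m))     # equal weights reduce to Z Z' / m
print(G_w.shape, round(float(np.diag(G_w).mean()), 3))
```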

Correcting for Allele Frequency Extremes (Endpoint Coding)

To reduce the influence of rare alleles, endpoints can be recoded (e.g., set genotypes for SNPs with minor allele frequency < threshold to heterozygous).

The Single-Step GBLUP (ssGBLUP) and H Matrix

The H matrix blends G and the pedigree-based relationship matrix A to allow for the simultaneous evaluation of genotyped and non-genotyped individuals.

Protocol:

- Construct G (scaled to be compatible with A22, the sub-matrix of A for genotyped animals).

- Compute the blended inverse relationship matrix: H⁻¹ = A⁻¹ + [ [0, 0], [0, (G⁻¹ - A22⁻¹)] ].
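The blended inverse can be assembled directly from the formula. The sketch below uses a hypothetical four-animal pedigree (two unrelated parents and two full-sib offspring, with only the offspring genotyped) and made-up values for G; it inverts dense matrices for clarity, whereas production software builds A⁻¹ from the pedigree without forming A.

```python
import numpy as np


def h_inverse(A, G, genotyped):
    """H^-1 = A^-1 + [[0, 0], [0, G^-1 - A22^-1]] for the genotyped subset.

    genotyped: indices (in A's ordering) of the genotyped individuals,
    given in the same order as the rows of G.
    """
    A = np.asarray(A, dtype=float)
    idx = np.asarray(genotyped)
    block = np.ix_(idx, idx)
    H_inv = np.linalg.inv(A)
    A22_inv = np.linalg.inv(A[block])
    H_inv[block] += np.linalg.inv(G) - A22_inv
    return H_inv


# Pedigree relationships: animals 0 and 1 are unrelated parents of full sibs 2 and 3
A = np.array([[1.0, 0.0, 0.5, 0.5],
              [0.0, 1.0, 0.5, 0.5],
              [0.5, 0.5, 1.0, 0.5],
              [0.5, 0.5, 0.5, 1.0]])
genotyped = [2, 3]
G = np.array([[1.05, 0.45],
              [0.45, 0.98]])             # illustrative genomic relationships

H_inv = h_inverse(A, G, genotyped)
H = np.linalg.inv(H_inv)
print(np.round(H, 3))
```

A useful check is that the genotyped block of H equals G, and that H⁻¹ collapses to A⁻¹ when G equals A22.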

Single-Step GBLUP Relationship Integration

Comparative Analysis of Methods

Table 1: Comparison of Genomic Relationship Matrix Construction Methods

| Method | Core Formula | Key Assumption | Primary Limitation | Impact on GBLUP |
|---|---|---|---|---|
| VanRaden 1 | G = Z Z' / (2Σp(1-p)) | SNPs contribute equally; base p_i known. | Sensitive to allele frequency estimation. | Can bias GEBVs if base pop mis-specified. |
| VanRaden 2 | G = Z D Z' | SNP variance ~ 1/[2p(1-p)]. | Overweights low-MAF SNPs, increasing sampling variance. | May overfit, reducing prediction accuracy. |
| Method of Moments | Iterative moment estimation | Population structure inflates covariance. | Computationally intensive for large m. | Reduces bias under population stratification. |
| Weighted GBLUP | G_w = (Z W Z')/trace(W) | Prior weights reflect true QTL effects. | Weight accuracy is critical; can introduce error. | Can improve accuracy if weights are informative. |
| ssGBLUP (H Matrix) | H⁻¹ = A⁻¹ + [[0,0],[0,G⁻¹-A22⁻¹]] | G and A22 are compatible. | Requires careful scaling and blending. | Enables unified analysis, leveraging all data. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Genomic Relationship Matrix Construction

| Item / Reagent | Function in G Matrix Research |

|---|---|

| High-Density SNP Array | Provides standardized genome-wide marker genotypes (e.g., Illumina BovineHD, PorcineGGP) for constructing Z. |

| Whole Genome Sequencing Data | Offers the most comprehensive variant discovery for creating custom, high-resolution G matrices. |

| Genotype Imputation Software (e.g., Minimac4, Beagle) | Infers missing or ungenotyped markers, ensuring complete genotype matrices for all individuals. |

| Allele Frequency Database | Provides external base population allele frequencies (p_i) for proper centering in VanRaden Method 1. |

| BLUPF90 Family Software | Industry-standard suite (e.g., PREGSF90, BLUPF90) for efficiently computing G and solving GBLUP models. |

| High-Performance Computing (HPC) Cluster | Enables the manipulation of large n x m genotype matrices and the inversion of large n x n G matrices. |

| Pedigree Management Software | Maintains accurate pedigree records essential for constructing the A matrix and its inverse for ssGBLUP. |

The Genomic Best Linear Unbiased Prediction (GBLUP) model is a cornerstone of modern genetic evaluation, enabling the prediction of breeding values from dense genome-wide marker data. Its core assumption is that marker effects are independent and identically distributed draws from a single normal distribution, which implies an infinitesimal genetic architecture. This whitepaper examines popular software packages for implementing GBLUP and related mixed models. It is framed within a critical thesis on the model's inherent assumptions (homogeneous variance across loci, sufficient linkage disequilibrium between markers and QTL, and a genomic relationship matrix (GRM) that adequately captures all genetic variance) and on their practical limitations in complex trait prediction and drug target identification.

Core Software Packages: A Technical Comparison

The following table summarizes key quantitative and functional attributes of major software toolkits used for GBLUP analysis, based on current sources (2024-2025).

Table 1: Comparison of Popular Software Packages for GBLUP and Mixed Models

| Feature | GCTA | ASReml | R sommer | BLUPF90 Suite |

|---|---|---|---|---|

| Primary Use Case | Genome-wide Complex Trait Analysis; GRM estimation, REML, GWAS. | Commercial standard for REML variance component estimation & prediction. | Flexible mixed model fitting within R, including multi-trait & non-normal data. | High-throughput genetic evaluation for large-scale animal breeding. |

| License & Cost | Free (GPL). | Commercial (substantial license fee). | Free (CRAN R package). | Free for research. |

| Core Algorithm | REML via AI/EM, GRM built from SNPs. | REML via Average Information (AI). | REML via Efficient Mixed Model Association (EMMA)/AI. | Iterative solving (PCG, Gauss-Seidel) for BLUP. |

| GBLUP Implementation | Yes, via --reml and GRM. | Yes, via custom variance structures. | Yes, via mmer() with vsr() for random effects. | Yes, standard in blupf90/remlf90. |

| Handling Large-N | Moderate (efficient GRM construction). | Good with sparse matrices. | Moderate to good for R. | Excellent (optimized for >1 million animals). |

| Key Strength | GRM tools, LD Score regression, complex trait analysis. | Model flexibility, robustness, and diagnostic tools. | User-friendly R interface, complex covariance structures. | Unmatched speed for large models, extensive suite of programs. |

| Noted Limitation | Less efficient for ultra-large N. | Closed source, cost-prohibitive. | Memory-bound within R environment. | Steeper learning curve, less accessible for non-animal breeders. |

| Typical Runtime Benchmark | ~2 hrs for REML on 10k individuals, 50k SNPs. | ~1 hr for similar model. | ~3 hrs for similar model. | ~30 mins for similar model (using remlf90). |

Experimental Protocols for GBLUP Analysis

A standard GBLUP analysis protocol is detailed below, common across the reviewed software with implementation-specific variations.

Protocol 1: Standard GBLUP for Genomic Prediction

Objective: Estimate genomic breeding values (GEBVs) and the proportion of variance explained by markers.

Materials: Phenotypic data file, genotypic data (e.g., SNP array data in PLINK format), relevant fixed effects data (e.g., year, location, batch).

Procedure:

- Quality Control (QC): Filter genotypic data for minor allele frequency (MAF > 0.01), call rate (> 0.95 per SNP and individual), and Hardy-Weinberg equilibrium (p > 1e-6). This is often done with PLINK or within GCTA.

- Genomic Relationship Matrix (GRM) Calculation: Compute the GRM (G) using the filtered SNP data. A common method (VanRaden Method 1) is:

G = (M − P)(M − P)' / (2 ∑ p_j(1 − p_j)), where M is the n × m matrix of allele counts (0, 1, 2) and P is the matrix whose column j holds 2p_j, with p_j the allele frequency of SNP j. GCTA's default GRM instead scales each SNP by its own 2p_j(1 − p_j) and averages over SNPs.

- Model Fitting: Fit the GBLUP mixed linear model: y = Xb + Zg + e, where y is the vector of phenotypes; X is the design matrix for the fixed effects (b); Z is the design matrix relating phenotypic records to the genetic effects (g); g ~ N(0, Gσ²_g) is the vector of genomic breeding values; and e ~ N(0, Iσ²_e) is the vector of residuals.

- Variance Component Estimation: Use REML (via AI, EM, or a similar algorithm) to estimate σ²_g and σ²_e. Heritability is derived as h² = σ²_g / (σ²_g + σ²_e).

- GEBV Prediction: Solve the mixed model equations (MME) to obtain BLUP solutions for g. These are the GEBVs.

- Validation: Perform k-fold cross-validation (e.g., 5-fold) to assess prediction accuracy as the correlation between predicted GEBVs and observed phenotypes in the validation set.
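
The prediction step of this protocol can be sketched as follows, assuming the variance components have already been estimated. The function name `gblup_solve` is hypothetical, and Z is taken to be the identity (one record per genotyped individual). The sketch uses the generalized least squares form with V = Gσ²_g + Iσ²_e, which gives the same solutions as the mixed model equations when G is invertible and avoids inverting G.

```python
import numpy as np

def gblup_solve(y, X, G, sigma2_g, sigma2_e):
    """BLUE of fixed effects and BLUP of genomic values for y = Xb + g + e.

    Assumes Z = I and known variance components (both are assumptions
    of this sketch, not requirements of GBLUP in general).
    """
    y = np.asarray(y, dtype=float)
    X = np.asarray(X, dtype=float)
    n = y.shape[0]
    V = sigma2_g * G + sigma2_e * np.eye(n)           # phenotypic covariance
    Vinv_y = np.linalg.solve(V, y)
    Vinv_X = np.linalg.solve(V, X)
    b_hat = np.linalg.solve(X.T @ Vinv_X, X.T @ Vinv_y)              # GLS fixed effects
    g_hat = sigma2_g * G @ np.linalg.solve(V, y - X @ b_hat)         # GEBVs
    h2 = sigma2_g / (sigma2_g + sigma2_e)
    return b_hat, g_hat, h2

# Hypothetical toy data: 8 individuals, intercept-only fixed effects.
rng = np.random.default_rng(1)
B = rng.normal(size=(8, 30))
G_toy = B @ B.T / 30 + 0.05 * np.eye(8)     # positive definite stand-in for a GRM
X_toy = np.ones((8, 1))
y_toy = rng.normal(size=8)
b_hat, gebv, h2 = gblup_solve(y_toy, X_toy, G_toy, sigma2_g=2.0, sigma2_e=1.0)
print("intercept:", b_hat[0])
print("GEBVs:", np.round(gebv, 3))
```

For large n, production software solves the mixed model equations iteratively rather than forming V explicitly; the dense solve here is only practical for small examples.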

Protocol 2: Single-Step GBLUP (ssGBLUP) Protocol

Objective: Integrate genotyped and non-genotyped individuals into a single analysis for more accurate evaluations.

Procedure:

- Construct H⁻¹ Matrix: Build the inverse combined relationship matrix H⁻¹ = A⁻¹ + [ 0 0; 0 (G⁻¹ - A₂₂⁻¹) ], where A is the pedigree-based numerator relationship matrix, and A₂₂ is the block for genotyped individuals.

- Model Fitting: Replace A⁻¹ with H⁻¹ in the standard animal model MME.

- Solve & Predict: Solve the MME to obtain GEBVs for all animals, leveraging information from both pedigree and genomic data. ssGBLUP is implemented in the BLUPF90 suite (blupf90) and in other packages such as MiX99.
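
The construction of H⁻¹ can be illustrated on a five-animal pedigree. This is a minimal sketch under stated assumptions: the helper names `numerator_relationship` and `h_inverse`, the pedigree, and the numerical values of G are all made up for illustration, and no blending or scaling of G toward A₂₂ is applied.

```python
import numpy as np

def numerator_relationship(sire, dam):
    """Tabular method for A. Parents must precede offspring; -1 means unknown."""
    n = len(sire)
    A = np.zeros((n, n))
    for i in range(n):
        s, d = sire[i], dam[i]
        # Diagonal: 1 + inbreeding (half the relationship between the parents).
        A[i, i] = 1.0 + (0.5 * A[s, d] if s >= 0 and d >= 0 else 0.0)
        for j in range(i):
            a = 0.0
            if s >= 0:
                a += 0.5 * A[j, s]
            if d >= 0:
                a += 0.5 * A[j, d]
            A[i, j] = A[j, i] = a
    return A

def h_inverse(A, G, genotyped):
    """H^-1 = A^-1 + [[0, 0], [0, G^-1 - A22^-1]] for the genotyped block."""
    Hinv = np.linalg.inv(A)
    idx = np.ix_(genotyped, genotyped)
    Hinv[idx] += np.linalg.inv(G) - np.linalg.inv(A[idx])
    return Hinv

# Hypothetical pedigree: animals 0 and 1 are founders, 2 and 3 are full sibs,
# and 4 is the offspring of 2 and 3. Animals 2, 3, 4 are genotyped.
sire = [-1, -1, 0, 0, 2]
dam = [-1, -1, 1, 1, 3]
genotyped = [2, 3, 4]
A = numerator_relationship(sire, dam)
G = np.array([[1.02, 0.45, 0.78],      # made-up genomic relationships
              [0.45, 0.98, 0.70],
              [0.78, 0.70, 1.20]])
Hinv = h_inverse(A, G, genotyped)
print(np.round(Hinv, 3))
```

A useful sanity check is that inverting H⁻¹ returns G in the genotyped block, and that supplying G = A₂₂ returns A⁻¹ unchanged.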

Visualization of Core Workflows and Relationships

Title: Standard GBLUP Analysis Workflow

Title: GBLUP Model Assumptions and Linked Limitations

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagents and Computational Materials

| Item | Function in GBLUP Experiments | Example/Specification |

|---|---|---|

| High-Density SNP Array | Provides genome-wide marker data for GRM construction. | Illumina BovineHD (777k), PorcineGGP50K, HumanCoreExome. |

| Whole Genome Sequencing (WGS) Data | Provides the most comprehensive variant set for constructing more accurate GRMs. | Short-read sequencing (e.g., Illumina) at >10x coverage. |

| Phenotypic Database | Curated, high-quality trait measurements for model training and validation. | Should include fixed effects covariates (age, season, treatment batch). |

| Pedigree Information | Required for constructing the numerator relationship matrix (A) in ssGBLUP. | Three+ generations of lineage records. |

| High-Performance Computing (HPC) Cluster | Essential for REML estimation and solving large mixed models (N > 10,000). | Nodes with high RAM (>256GB) and multi-core processors. |

| Quality Control (QC) Pipeline Software | Filters raw genotype data to ensure analysis robustness. | PLINK, GCTA's --make-bed and --geno/--maf options. |

| Cross-Validation Scripts | Automates the partitioning of data to assess prediction accuracy unbiasedly. | Custom R/Python scripts for k-fold or leave-one-out schemes. |

This guide details the practical computational workflow for deriving Genomic Estimated Breeding Values (GEBVs) using the Genomic Best Linear Unbiased Prediction (GBLUP) model. It is framed within a broader thesis examining the foundational assumptions and limitations of the GBLUP model in research and applied breeding. The GBLUP model assumes an infinitesimal genetic architecture, where all markers explain equal genetic variance, and relies on the construction of a genomic relationship matrix (GRM) that presupposes Hardy-Weinberg and linkage equilibrium. Violations of these assumptions, such as major genes or population stratification, are key limitations discussed in the wider thesis, and they inform the critical steps and quality controls in this workflow.

Core Workflow and Methodology

Data Preparation and Quality Control (QC)

The initial phase transforms raw genotype data into a curated dataset suitable for genomic prediction.

Experimental Protocol for Genotype QC:

- Input: Raw genotype files (e.g., PLINK .bed/.bim/.fam, VCF).

- Sample QC: Remove individuals with call rates < 0.95, excessive heterozygosity (>3 SD from mean), or mismatches between genetic and reported sex.

- Variant QC: Exclude single nucleotide polymorphisms (SNPs) with:

- Call rate < 0.95 across all samples.

- Minor allele frequency (MAF) < 0.01.

- Significant deviation from Hardy-Weinberg equilibrium (HWE p-value < 10⁻⁶).

- Population Stratification: Perform principal component analysis (PCA) on an LD-pruned SNP set to identify and, if necessary, account for population outliers.

- Output: A clean, high-quality genotype matrix M of dimensions n × m (n individuals, m SNPs).
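
The call-rate and MAF filters in this protocol can be sketched as below. The function name `genotype_qc` and the simulated data are illustrative assumptions; missing calls are assumed to be coded as NaN, and the HWE, heterozygosity, sex-check, and PCA steps are deliberately left out (in practice these are usually run in PLINK).

```python
import numpy as np

def genotype_qc(M, sample_cr=0.95, snp_cr=0.95, maf_min=0.01):
    """Filter an n x m allele-count matrix (0/1/2, NaN = missing call).

    Returns the filtered matrix plus boolean masks for kept samples and SNPs.
    The SNP mask is computed after low-call-rate samples have been removed.
    """
    M = np.asarray(M, dtype=float)
    keep_ind = (~np.isnan(M)).mean(axis=1) >= sample_cr      # sample call rate
    M = M[keep_ind]
    called = ~np.isnan(M)
    snp_call_rate = called.mean(axis=0)
    n_called = np.maximum(called.sum(axis=0), 1)             # avoid division by zero
    p = np.nansum(M, axis=0) / (2.0 * n_called)              # counted-allele frequency
    maf = np.minimum(p, 1.0 - p)
    keep_snp = (snp_call_rate >= snp_cr) & (maf >= maf_min)
    return M[:, keep_snp], keep_ind, keep_snp

# Hypothetical data: 40 samples, 50 SNPs, with three planted problems.
rng = np.random.default_rng(1)
M = rng.binomial(2, 0.3, size=(40, 50)).astype(float)
M[:, 1] = 0.0            # monomorphic SNP (fails MAF)
M[1:11, 2] = np.nan      # SNP with low call rate
M[0, :] = np.nan         # failed sample
M_clean, keep_ind, keep_snp = genotype_qc(M)
print("samples kept:", int(keep_ind.sum()), "SNPs kept:", int(keep_snp.sum()))
```

Filtering samples before SNPs, as done here, prevents a few failed samples from pushing otherwise good SNPs below the call-rate threshold.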

Construction of the Genomic Relationship Matrix (GRM)

The GRM (G) is central to the GBLUP model, quantifying genetic similarity between individuals based on genome-wide markers.

Methodology for GRM Calculation (VanRaden Method 1): The GRM is computed using the formula: \[ \mathbf{G} = \frac{\mathbf{W}\mathbf{W}'}{2 \sum_{j=1}^{m} p_j(1-p_j)} \] where \(\mathbf{W}\) is the n × m matrix of centered genotypes. For each SNP j, the genotype codes (0, 1, 2 for the reference homozygote, heterozygote, and alternate homozygote) are centered by subtracting \(2p_j\), where \(p_j\) is the frequency of the counted allele. Method 1 does not standardize individual SNPs; dividing each centered column by \(\sqrt{2p_j(1-p_j)}\) and averaging over the m SNPs gives VanRaden Method 2 instead. The denominator scales the matrix so that the average diagonal is approximately 1 + F, where F is the average inbreeding coefficient.

Phenotype Data Preparation and Modeling

Protocol:

- Collection: Gather phenotype data for the target trait(s), ensuring accurate matching of individual IDs with genotype data.

- Adjustment: Apply fixed effects corrections (e.g., for year, location, age, sex) using a linear model to obtain residuals or directly include these as fixed effects in the mixed model.

- Normalization: For non-normally distributed traits, apply appropriate transformations (e.g., log, Box-Cox).

Implementing the GBLUP Model

The statistical model for GBLUP is: \[ \mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \mathbf{Z}\mathbf{u} + \mathbf{e} \] where:

- \(\mathbf{y}\) is the vector of (adjusted) phenotypes.

- \(\mathbf{X}\) is the design matrix for fixed effects (e.g., mean, contemporary groups).

- \(\boldsymbol{\beta}\) is the vector of fixed effects solutions.

- \(\mathbf{Z}\) is the design matrix relating phenotypic records to individuals.

- \(\mathbf{u}\) is the vector of genomic breeding values, assumed \(\mathbf{u} \sim N(\mathbf{0}, \mathbf{G}\sigma^2_u)\).

- \(\mathbf{e}\) is the vector of random residuals, assumed \(\mathbf{e} \sim N(\mathbf{0}, \mathbf{I}\sigma^2_e)\).

Variance components (\(\sigma^2_u\) and \(\sigma^2_e\)) are estimated via Restricted Maximum Likelihood (REML) using the GRM and the phenotype data. The GEBV for each individual i is the solution \(\hat{u}_i\) from the mixed model equations.
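
To make the REML step concrete, the sketch below profiles the restricted log-likelihood over a grid of h² values after an eigendecomposition of G. This is an illustrative simplification, not the AI or EM algorithms used by production software: the function name `reml_grid` is hypothetical, Z is assumed to be the identity, and the data are simulated with both variance components set to 1.

```python
import numpy as np

def reml_grid(y, X, G, grid=np.linspace(0.01, 0.99, 99)):
    """Grid-search REML for y = X beta + u + e with V = s2 * (h2*G + (1-h2)*I).

    Returns (sigma2_u, sigma2_e, h2) at the grid point with the highest
    restricted log-likelihood (total variance s2 is profiled out).
    """
    n, q = X.shape
    d, U = np.linalg.eigh(G)              # G = U diag(d) U'
    yr, Xr = U.T @ y, U.T @ X             # rotate so that V becomes diagonal
    best = None
    for h2 in grid:
        w = 1.0 / (h2 * d + (1.0 - h2))   # eigenvalues of the scaled V inverse
        XtWX = Xr.T @ (Xr * w[:, None])
        beta = np.linalg.solve(XtWX, Xr.T @ (w * yr))
        resid = yr - Xr @ beta
        s2 = (w * resid**2).sum() / (n - q)
        # Restricted log-likelihood up to an additive constant.
        ll = -0.5 * ((n - q) * np.log(s2) - np.log(w).sum()
                     + np.linalg.slogdet(XtWX)[1])
        if best is None or ll > best[0]:
            best = (ll, h2, s2)
    _, h2, s2 = best
    return h2 * s2, (1.0 - h2) * s2, h2

# Hypothetical simulation: 400 individuals, 100 SNPs, true h2 = 0.5.
rng = np.random.default_rng(42)
n, m = 400, 100
p = rng.uniform(0.2, 0.5, size=m)
M = rng.binomial(2, p, size=(n, m)).astype(float)
W = M - 2.0 * p                                        # centered genotypes
c = (2.0 * p * (1.0 - p)).sum()
G = W @ W.T / c
u = W @ rng.normal(0.0, np.sqrt(1.0 / c), size=m)      # u ~ N(0, G * 1.0)
y = 2.0 + u + rng.normal(0.0, 1.0, size=n)
X = np.ones((n, 1))
s2u, s2e, h2 = reml_grid(y, X, G)
print(f"sigma2_u = {s2u:.2f}, sigma2_e = {s2e:.2f}, h2 = {h2:.2f}")
```

The eigendecomposition is done once, so each grid point costs only O(n) work beyond the small fixed-effects solve; a finer grid or a one-dimensional optimizer could refine the estimate.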

Validation and Accuracy Estimation

Protocol for k-fold Cross-Validation:

- Randomly partition the genotyped and phenotyped population into k subsets (folds).

- For each fold i (i = 1, ..., k):

- Designate fold i as the validation set. The remaining k − 1 folds form the training set.

- Fit the GBLUP model using only data from the training set.

- Predict GEBVs for individuals in the validation set using their genotypes and the GRM derived from the entire population (or using a separate relationship matrix approach).

- Correlate the predicted GEBVs with the observed (adjusted) phenotypes in the validation set across all folds.

- The prediction accuracy is reported as the Pearson correlation coefficient (r). The unbiasedness is assessed by regressing observed values on predictions (target slope = 1).
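
The cross-validation protocol above can be sketched as follows. Several simplifications are assumptions of this example rather than part of the protocol: the function name `kfold_gblup` is hypothetical, the only fixed effect is an intercept, h² is supplied as a fixed input instead of being re-estimated within each training set, and the GRM is computed once from all individuals.

```python
import numpy as np

def kfold_gblup(y, G, h2, k=5, seed=0):
    """k-fold cross-validation of GBLUP with an intercept-only model.

    Validation GEBVs are G[val, trn] @ (G[trn, trn] + lam*I)^-1 @ (y_trn - mu),
    with lam = (1 - h2) / h2 and mu the GLS mean of the training set.
    """
    n = len(y)
    lam = (1.0 - h2) / h2
    folds = np.array_split(np.random.default_rng(seed).permutation(n), k)
    gebv = np.empty(n)
    for val in folds:
        trn = np.setdiff1d(np.arange(n), val)
        K = G[np.ix_(trn, trn)] + lam * np.eye(len(trn))
        ones = np.ones(len(trn))
        mu = (ones @ np.linalg.solve(K, y[trn])) / (ones @ np.linalg.solve(K, ones))
        gebv[val] = G[np.ix_(val, trn)] @ np.linalg.solve(K, y[trn] - mu)
    r = np.corrcoef(gebv, y)[0, 1]        # correlation pooled across folds
    slope = np.polyfit(gebv, y, 1)[0]     # regression of observed on predicted
    return r, slope, gebv

# Hypothetical simulation: 200 individuals, 50 SNPs, true h2 = 0.5.
rng = np.random.default_rng(7)
n, m = 200, 50
p = rng.uniform(0.2, 0.5, size=m)
M = rng.binomial(2, p, size=(n, m)).astype(float)
W = M - M.mean(axis=0)
c = (2.0 * p * (1.0 - p)).sum()
G = W @ W.T / c
y = W @ rng.normal(0.0, np.sqrt(1.0 / c), size=m) + rng.normal(0.0, 1.0, size=n)
r, slope, gebv = kfold_gblup(y, G, h2=0.5, k=5)
print(f"r = {r:.2f}, slope = {slope:.2f}")
```

In a real analysis the variance components would be re-estimated on each training set, and any phenotype adjustment would also be fitted on training data only, so that no information from the validation fold leaks into the predictions.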

Title: GBLUP Workflow from Genotypes to GEBVs

Title: GBLUP Model Assumptions and Limitations

Key Data Tables

Table 1: Standard Quality Control Thresholds for Genotype Data

| Parameter | Typical Threshold | Rationale |

|---|---|---|

| Individual Call Rate | > 95% | Excludes low-quality DNA samples. |

| SNP Call Rate | > 95% | Removes poorly performing assays. |

| Minor Allele Frequency (MAF) | > 1% | Removes uninformative rare variants. |

| Hardy-Weinberg Equilibrium (HWE) p-value | > 10⁻⁶ | Flags genotyping errors or selection. |

| Heterozygosity Rate | Mean ± 3 SD | Identifies sample contamination. |

Table 2: Example GBLUP Analysis Output Metrics

| Component | Estimated Value | Interpretation |

|---|---|---|

| Genetic Variance (σ²_u) | 12.5 | Estimated variance due to genomic markers. |

| Residual Variance (σ²_e) | 7.2 | Estimated variance due to environment/error. |

| Genomic Heritability (h²) | 0.63 | σ²_u / (σ²_u + σ²_e). Proportion of phenotypic variance explained by markers. |

| Prediction Accuracy (r) | 0.72 | Correlation between predicted GEBV and observed phenotype in validation. |

| Regression Slope (b) | 0.98 | Slope of observed on predicted; measures bias (ideal = 1). |
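
The heritability row in Table 2 follows directly from the two variance components, as the short check below shows. The final line goes beyond the table: dividing the phenotypic correlation by √h² is a commonly used approximation of accuracy with respect to true breeding values, and it is included here as an assumption for illustration only.

```python
import math

# Example values taken from Table 2.
sigma2_u, sigma2_e = 12.5, 7.2
r_pheno = 0.72

h2 = sigma2_u / (sigma2_u + sigma2_e)     # genomic heritability
r_adj = r_pheno / math.sqrt(h2)           # assumed approximation, not in the table

print(f"h2 = {h2:.2f}")
print(f"r / sqrt(h2) = {r_adj:.2f}")
```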

The Scientist's Toolkit: Research Reagent Solutions