Integrating NGS Data for Novel Gene Discovery: Strategies, Tools, and Validation for Biomedical Research

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the integrated analysis of Next-Generation Sequencing (NGS) data to uncover novel disease-associated genes.

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the integrated analysis of Next-Generation Sequencing (NGS) data to uncover novel disease-associated genes. We explore the foundational principles of multi-omics integration, detail current methodological workflows and computational tools for data synthesis, address common analytical pitfalls and optimization strategies, and present rigorous frameworks for validating and benchmarking discovered genes. The content bridges exploratory bioinformatics with translational research, offering actionable insights to accelerate the journey from genomic data to therapeutic targets.

The Core Concepts: Why NGS Data Integration is Key to Unlocking Novel Biology

Novel gene discovery has evolved from reliance on single-omics datasets (e.g., genomics-only) to the mandatory integration of multi-omics data. This paradigm shift, driven by Next-Generation Sequencing (NGS) technologies, acknowledges that biological complexity arises from the dynamic interplay between the genome, epigenome, transcriptome, proteome, and metabolome. The core thesis is that true discovery of functionally novel genes—including non-coding RNA genes, small open reading frames (smORFs), and context-specific isoforms—requires the triangulation of evidence across multiple molecular layers. Single-dataset analyses are prone to high false discovery rates and functional misinterpretation. Effective integration resolves this by distinguishing technical artifact from biological signal, placing candidate genes within functional pathways, and revealing regulatory mechanisms.

The following table summarizes the primary omics layers integral to modern gene discovery (NGS-based assays plus the mass-spectrometry layers they are integrated with), their key outputs, and typical scale in a human cohort study.

Table 1: Core NGS Omics Modalities for Integrated Gene Discovery

| Omics Layer | Key Technology | Primary Output | Scale (Typical Human Study) | Role in Gene Discovery |
|---|---|---|---|---|
| Genomics | Whole Genome Sequencing (WGS) | Germline & somatic variants, structural variation | 3 billion bp/genome | Provides DNA blueprint; identifies novel loci, gene-disrupting variants. |
| Epigenomics | ChIP-Seq, ATAC-Seq, WGBS | Transcription factor binding, chromatin accessibility, DNA methylation | ~20-50 million reads/assay (ChIP/ATAC), typically yielding tens of thousands of peaks | Defines regulatory elements; links non-coding regions to potential regulatory genes. |
| Transcriptomics | RNA-Seq (bulk/single-cell) | Gene expression levels, splice isoforms, fusion transcripts | 20-50 million reads/sample (bulk) | Identifies expressed transcripts; reveals unannotated RNAs and isoform diversity. |
| Proteomics | Mass Spectrometry (w/ NGS-guided databases) | Peptide sequences, protein abundance, post-translational modifications | 5,000-10,000 proteins/sample | Validates translational output of novel coding genes; detects novel microproteins. |
| Metabolomics | LC/MS, GC/MS | Small molecule metabolite identity & abundance | Hundreds to thousands of metabolites | Provides phenotypic readout; connects gene function to biochemical pathways. |

Integrated Analysis Workflow and Protocol

This protocol outlines a systematic, multi-omics workflow for novel gene discovery from sample processing to candidate prioritization.

Protocol 3.1: Multi-Omics Data Generation and Integration for Novel Gene Discovery

I. Sample Preparation & Parallel Sequencing (Weeks 1-4)

- Objective: Generate matched multi-omics data from the same biological system (e.g., patient tissue, cell line perturbation).

- Materials: Fresh or snap-frozen tissue/cells, DNA/RNA/protein extraction kits, library prep kits for WGS, RNA-Seq, ATAC-Seq.

- Procedure:

- Aliquot sample for parallel nucleic acid and protein extraction.

- DNA: Perform WGS library prep (e.g., Illumina DNA PCR-Free). Sequence to ≥30x coverage.

- RNA: Perform ribosomal RNA-depleted total RNA-Seq (to capture non-coding RNAs) and/or poly-A selected RNA-Seq. Sequence to depth of 40-100 million paired-end reads/sample.

- Chromatin: Perform ATAC-Seq on nuclei to profile open chromatin. Sequence to depth of 50-100 million reads/sample.

- Proteins: Perform tryptic digestion and prepare peptides for liquid chromatography-tandem mass spectrometry (LC-MS/MS).

II. Omics-Specific Processing & Novel Feature Detection (Weeks 5-8)

- Objective: Generate a comprehensive list of potential novel genomic elements from each dataset independently.

- Bioinformatic Tools: StringTie, Salmon (transcriptomics); MACS2, MEME-ChIP (epigenomics); Proteomic search engines (MaxQuant, FragPipe).

- Procedure:

- Transcriptomics: Align RNA-Seq reads to reference genome (STAR). Use de novo transcript assembly (StringTie, Cufflinks) to identify unannotated transcripts. Filter for those with ≥2 splice junctions and expression >1 FPKM.

- Epigenomics: Call peaks from ATAC-Seq/ChIP-Seq data (MACS2). Intersect peaks with unannotated genomic regions. Use motif analysis (HOMER) to predict transcription factor binding.

- Proteomics: Search MS/MS spectra against a custom database containing both the reference proteome and in silico translations of the novel transcripts assembled in the transcriptomics step above. Accept novel peptides at a Percolator-estimated FDR < 0.01.

- Genomics: Perform de novo genome assembly (where applicable) or structural variant calling (Manta, Delly) to identify novel genomic regions absent from reference.

III. Multi-Omic Integration & Candidate Prioritization (Weeks 9-12)

- Objective: Integrate evidence to generate a high-confidence list of novel functional genes.

- Bioinformatic Tools: Bedtools, R/Bioconductor (GenomicRanges), custom Python scripts.

- Procedure:

- Evidence Aggregation: Create a unified genomic coordinate file. Annotate each novel transcript with supporting evidence: presence of open chromatin (ATAC-Seq peak), coding potential (CPC2, PhyloCSF), translational evidence (MS/MS peptide), and evolutionary conservation.

- Triangulation Scoring: Implement a scoring system (see Table 2). Assign points for each supporting omics evidence type.

- Prioritization Filter: Rank candidates by total score. Apply a mandatory filter: candidates must have evidence from at least two different omics layers (e.g., transcribed + translated, or transcribed + regulated by open chromatin).

- Contextualization: Correlate expression of high-confidence novel genes with phenotype (e.g., disease state) from matched samples. Perform co-expression network analysis (WGCNA) to infer functional associations.

Table 2: Candidate Gene Prioritization Scoring Matrix

| Evidence Type | Assay | Supporting Finding | Points | Rationale |
|---|---|---|---|---|
| Transcription | RNA-Seq | Multi-exonic, unannotated transcript | +3 | Primary evidence of expression. |
| Translation | MS/MS Proteomics | High-confidence peptide match | +4 | Definitive evidence of protein production. |
| Regulatory Potential | ATAC-Seq/ChIP-Seq | Peak in promoter region | +2 | Suggests regulated transcription. |
| Coding Potential | In silico analysis | PhyloCSF score > 50 | +2 | Evolutionary evidence of selection. |
| Genetic Association | WGS | Linked to phenotype-associated SNP | +3 | Connects genotype to phenotype. |
| Conservation | Comparative Genomics | Conserved in ≥2 mammalian species | +1 | Indicates functional importance. |
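
The scoring matrix and the two-layer filter from the prioritization step can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the point values come from the table above, while the evidence labels, the `OMICS_LAYER` mapping (which treats in silico coding potential and conservation as not counting toward the "two omics layers" requirement), and the candidate names are assumptions made for the example.

```python
# Point values copied from the scoring matrix; the dictionary keys are hypothetical labels.
EVIDENCE_POINTS = {
    "transcription": 3,
    "translation": 4,
    "regulatory": 2,
    "coding_potential": 2,
    "genetic_association": 3,
    "conservation": 1,
}

# Assumption: only assay-backed evidence counts as a distinct omics layer.
OMICS_LAYER = {
    "transcription": "transcriptomics",
    "translation": "proteomics",
    "regulatory": "epigenomics",
    "genetic_association": "genomics",
}


def score_candidate(evidence):
    """Return (total points, number of distinct omics layers) for a set of evidence labels."""
    total = sum(EVIDENCE_POINTS[e] for e in evidence)
    layers = {OMICS_LAYER[e] for e in evidence if e in OMICS_LAYER}
    return total, len(layers)


def prioritize(candidates, min_layers=2):
    """Drop candidates supported by fewer than min_layers layers, then rank by score."""
    ranked = []
    for name, evidence in candidates.items():
        total, n_layers = score_candidate(evidence)
        if n_layers >= min_layers:
            ranked.append((name, total))
    return sorted(ranked, key=lambda item: (-item[1], item[0]))


# Toy candidates (hypothetical names).
candidates = {
    "novel_tx_A": {"transcription", "translation", "coding_potential"},
    "novel_tx_B": {"transcription", "conservation"},
    "novel_tx_C": {"transcription", "regulatory", "genetic_association"},
}
ranked = prioritize(candidates)
print(ranked)
```

Here `novel_tx_B` is removed by the mandatory filter because only one assay-backed layer supports it, even though it carries a non-zero score.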

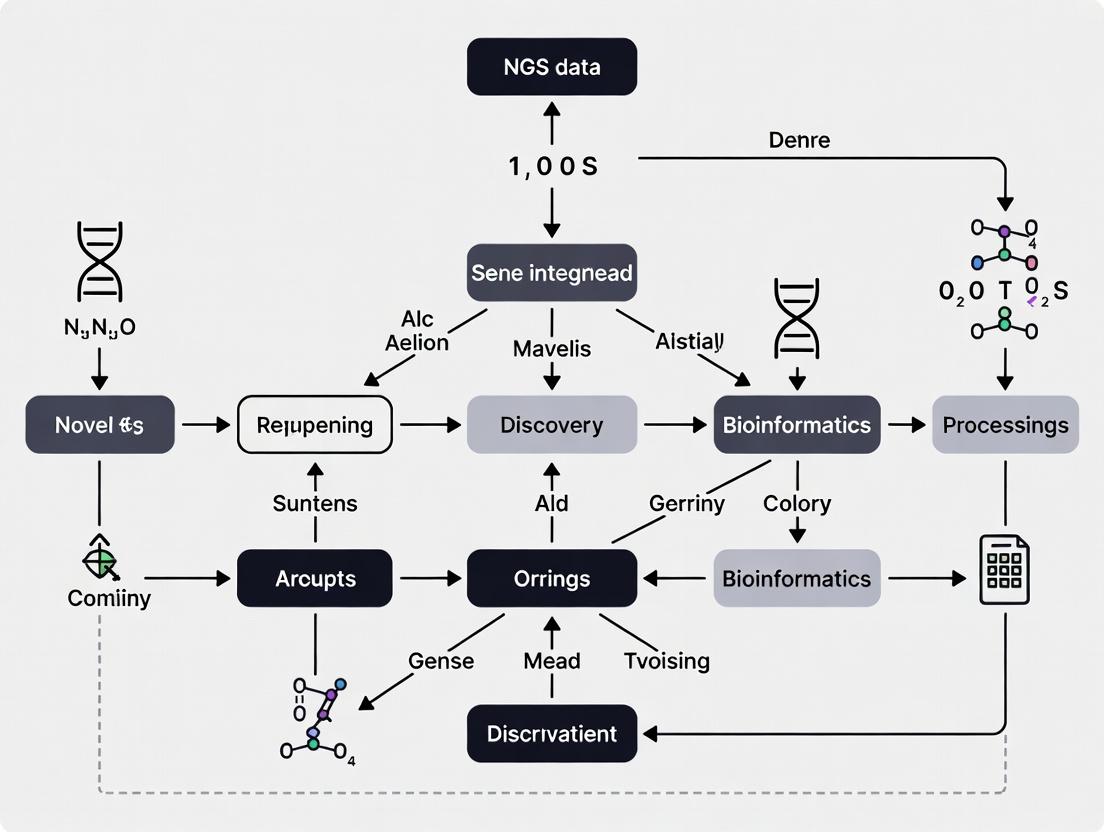

Visualizing the Integrated Workflow and Logic

Diagram 1: Multi-Omics Integration Workflow for Gene Discovery

Diagram 2: Logic of Multi-Omics Evidence Triangulation

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents and Materials for Multi-Omics Gene Discovery

| Item | Category | Example Product/Kit | Function in Workflow |
|---|---|---|---|
| Ribosomal RNA Depletion Kit | Transcriptomics | Illumina Ribo-Zero Plus, NEBNext rRNA Depletion | Removes abundant rRNA, enriching for mRNA and non-coding RNA, crucial for detecting novel transcripts. |
| Ultra II FS DNA Library Prep Kit | Genomics/Epigenomics | NEBNext Ultra II FS DNA Library Prep | Prepares sequencing libraries from low-input DNA for WGS or ATAC-Seq. |
| Tn5 Transposase | Epigenomics | Illumina Tagment DNA TDE1 Enzyme | Enzymatically fragments DNA and adds sequencing adapters in ATAC-Seq, mapping open chromatin. |
| Trypsin, MS Grade | Proteomics | Trypsin Gold, Mass Spectrometry Grade | High-purity protease for digesting proteins into peptides for LC-MS/MS analysis. |
| TMTpro 16plex | Proteomics | Thermo Fisher TMTpro 16plex Label Reagent Set | Allows multiplexed quantitative proteomics of up to 16 samples in one MS run, improving throughput. |
| NovaSeq S4 Flow Cell | Sequencing | Illumina NovaSeq S4 Flow Cell (300 cycles) | High-output sequencing platform for generating the deep coverage required for multi-omics integration. |
| Poly(A) RNA Selection Beads | Transcriptomics | Dynabeads mRNA DIRECT Purification Kit | Isolates polyadenylated RNA for standard mRNA-seq library preparation. |
| Cross-link Reversal Buffer | Epigenomics | ChIP Elution Buffer (Active Motif) | Reverses formaldehyde cross-linking after ChIP-Seq to release immunoprecipitated DNA for sequencing. |

Advancing novel gene discovery in the post-genomic era requires moving beyond single-omics analyses. The integration of Genomics (DNA sequence), Transcriptomics (RNA expression), Epigenomics (chromatin state/DNA methylation), and Proteomics (protein abundance/post-translational modification) provides a multi-dimensional view of cellular function and dysfunction. This holistic approach is critical for elucidating complex genotype-phenotype relationships, identifying robust therapeutic targets, and understanding disease mechanisms in systems biology and precision medicine initiatives.

Key Quantitative Findings in Integrated Omics Studies

Table 1: Summary of Key Findings from Recent Integrated Omics Studies in Cancer Research

| Study Focus | Genomics Finding | Transcriptomics Correlation | Epigenomics Driver | Proteomics Validation | Novel Insight |
|---|---|---|---|---|---|
| Resistance in NSCLC (2023) | EGFR T790M mutation (100% allele freq in selected cells) | Upregulation of AXL kinase (Log2FC=+4.2) | Hypomethylation of AXL promoter (Δβ=-0.45) | AXL protein overexpression (3.8-fold increase) | Epigenetically driven bypass signaling axis identified. |
| Metastatic Prostate Cancer (2024) | AR gene amplification (65% of cases) | AR-V7 splice variant detected (20% of amplifications) | H3K27ac peaks at AR-V7 cryptic enhancer | AR-V7 protein detectable; phospho-proteome shift | Integrated view of canonical and variant androgen signaling. |
| Immune Therapy Response (2023) | High Tumor Mutational Burden (TMB >10 mut/Mb) | IFN-γ signature elevated (GEP score >0.8) | Open chromatin at PD-L1 locus (ATAC-seq peak) | PD-L1 protein high (H-score >150) | Multi-modal biomarker panel predicts response with 89% accuracy. |

Application Notes: A Multi-Omic Workflow for Novel Oncogene Discovery

Aim: To identify and validate novel driver genes in a pan-cancer cohort by correlating copy number alterations (Genomics) with transcriptional output, epigenetic regulation, and downstream protein signaling.

1. Genomic & Epigenomic Layer Integration

- Protocol: Concurrent Whole Genome Sequencing (WGS) and Assay for Transposase-Accessible Chromatin using sequencing (ATAC-seq) on flash-frozen tissue.

- Methodology:

- Extract high-molecular-weight DNA and native nuclei from the same tissue aliquot using a dual-purpose extraction kit.

- Perform 30x WGS. Process data through a somatic variant/CNV pipeline (e.g., GATK, Control-FREEC).

- In parallel, perform ATAC-seq on 50,000 nuclei using the Omni-ATAC protocol. Sequence libraries and call peaks (using MACS2). Annotate peaks to gene promoters/enhancers.

- Integration Point: Overlap regions of focal genomic amplification with significantly accessible chromatin peaks (FDR < 0.01) to shortlist cis-regulated candidate genes.
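
The overlap described in the integration point above can be illustrated with a naive interval comparison. This is a sketch under stated assumptions: the tuple layouts, gene names, and coordinates are hypothetical, intervals are treated as half-open, and a production pipeline would more likely use Bedtools or GenomicRanges on real peak files.

```python
def overlaps(a_start, a_end, b_start, b_end):
    """Half-open intervals [start, end) overlap when each starts before the other ends."""
    return a_start < b_end and b_start < a_end


def shortlist_genes(amplified_regions, peaks, max_fdr=0.01):
    """Return genes whose significant accessible peak lies in a focally amplified region.

    amplified_regions: list of (chrom, start, end)
    peaks: list of (chrom, start, end, fdr, annotated_gene)
    """
    shortlisted = []
    for chrom, p_start, p_end, fdr, gene in peaks:
        if fdr >= max_fdr:
            continue  # keep only peaks passing the FDR < 0.01 cutoff
        for a_chrom, a_start, a_end in amplified_regions:
            if chrom == a_chrom and overlaps(p_start, p_end, a_start, a_end):
                shortlisted.append(gene)
                break  # one overlapping amplification is enough
    return shortlisted


# Toy inputs (hypothetical coordinates and gene labels).
amplified = [("chr8", 127_000_000, 128_000_000)]
peaks = [
    ("chr8", 127_735_000, 127_736_000, 0.001, "GENE_A"),  # significant, inside amplification
    ("chr8", 127_900_000, 127_900_500, 0.200, "GENE_B"),  # inside, but not significant
    ("chr1", 127_735_000, 127_736_000, 0.001, "GENE_C"),  # significant, wrong chromosome
]
shortlist = shortlist_genes(amplified, peaks)
print(shortlist)
```

The nested loop is quadratic and only suitable for small inputs; sorted or indexed interval structures are the usual choice at genome scale.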

2. Transcriptomic & Proteomic Correlation

- Protocol: Stranded total RNA-seq and Data-Independent Acquisition (DIA) Mass Spectrometry (MS) from adjacent tissue section.

- Methodology:

- Extract total RNA and perform rRNA depletion. Prepare libraries and sequence to a depth of 40M paired-end reads. Quantify expression (e.g., via Salmon).

- From matched protein lysates, perform tryptic digestion, fractionate peptides, and acquire spectra on a high-resolution LC-MS/MS system using a DIA method (e.g., 32-variable window setup).

- Analyze DIA data using a spectral library (constructed from pooled samples) via Spectronaut or DIA-NN.

- Integration Point: Correlate mRNA expression (Log2 TPM) with corresponding protein abundance (Log2 intensity) for candidate genes from Step 1. Genes showing strong concordance (Pearson r > 0.7) are prioritized as consistently deregulated.
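
As a small illustration of the concordance filter above, the following sketch computes a per-gene Pearson correlation between log2 TPM and log2 protein intensity across samples and keeps genes with r > 0.7. The gene names and values are made up for the example.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def concordant_genes(mrna, protein, min_r=0.7):
    """Return genes whose mRNA and protein profiles correlate above min_r."""
    return [g for g in mrna if g in protein and pearson_r(mrna[g], protein[g]) > min_r]


# Toy per-sample values (hypothetical): log2 TPM and log2 MS intensity for five samples.
mrna = {
    "GENE_X": [1.0, 2.0, 3.0, 4.0, 5.0],
    "GENE_Y": [1.0, 2.0, 3.0, 4.0, 5.0],
}
protein = {
    "GENE_X": [10.1, 11.0, 12.2, 12.9, 14.1],
    "GENE_Y": [12.0, 10.0, 13.0, 11.0, 12.0],
}
prioritized = concordant_genes(mrna, protein)
print(prioritized)
```

With only a handful of samples a correlation estimate is noisy, so the threshold is best read alongside the cohort size.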

3. Causal Validation via Epigenomic Perturbation

- Protocol: CRISPR-interference (CRISPRi) targeting candidate gene enhancers followed by multi-omic readout.

- Methodology:

- Design guide RNAs targeting the amplified/open chromatin region identified in Step 1.

- Transduce relevant cell lines with dCas9-KRAB lentivirus and sgRNAs. Perform FACS selection.

- Assess phenotype (proliferation/apoptosis).

- Post-perturbation, perform RT-qPCR (transcriptomics), western blot (proteomics), and targeted ATAC-seq (epigenomics) on the same cell pool.

- Integration Point: Confirmation of a direct epigenetic-regulatory mechanism is achieved if enhancer repression leads to concomitant downregulation of candidate gene mRNA and protein, and a phenotypic shift.

Visualization of the Integrated Workflow and Signaling

Multi-omics Discovery Workflow

Integrated View of Oncogene Activation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for Integrated Multi-Omic Studies

| Item Name | Category | Function in Workflow |
|---|---|---|
| AllPrep DNA/RNA/Protein Mini Kit | Nucleic Acid/Protein Extraction | Simultaneous co-extraction of genomic DNA, total RNA, and protein from a single tissue sample, preserving multi-omic integrity. |
| Nextera DNA Flex Library Prep Kit | Genomics | Prepares high-quality sequencing libraries from low-input DNA for WGS, compatible with FFPE and fresh-frozen samples. |
| Chromatin Prep Module for ATAC-seq | Epigenomics | Provides optimized reagents for tagmentation and library preparation from nuclei, ensuring consistent chromatin accessibility profiles. |
| KAPA RNA HyperPrep Kit with RiboErase | Transcriptomics | Enables stranded RNA-seq library construction from total RNA with rRNA depletion, ideal for degraded or low-input samples. |
| TMTpro 16plex Label Reagent Set | Proteomics | Allows multiplexed quantitative analysis of up to 16 samples in a single MS run, reducing batch effects and instrument time. |
| dCas9-KRAB Lentiviral Vector | Functional Validation | Enables stable, inducible transcriptional repression (CRISPRi) of candidate enhancers or promoters for causal testing. |
| CellTiter-Glo 3D Cell Viability Assay | Phenotypic Screening | Measures cell viability/proliferation in 3D culture post-perturbation, correlating multi-omic changes with functional outcome. |

Within the thesis on NGS data integration for novel gene discovery, three key biological signal classes emerge as critical: splice variants, non-coding regulatory elements, and rare variants. This document provides application notes and detailed protocols for their identification and integration in a multi-omics context, enabling the prioritization of novel therapeutic targets and biomarkers.

Application Notes

Splice Variants

Alternative splicing contributes significantly to proteomic diversity. In disease contexts, particularly cancer and neurological disorders, aberrant splicing creates novel isoforms that can serve as drug targets or biomarkers. Integrated analysis requires aligning RNA-Seq data to a reference genome/transcriptome, followed by isoform quantification and differential splicing analysis. Key challenges include distinguishing biological variants from technical artifacts and achieving accurate quantification in complex loci.

Non-Coding Elements

Non-coding regions harbor regulatory elements (enhancers, promoters, silencers) and functional RNAs (lncRNAs, miRNAs) that govern gene expression. Integrative analysis combines chromatin accessibility assays (ATAC-Seq, ChIP-Seq for histone marks) with expression data (RNA-Seq) to link regulatory elements to target genes. The central challenge is the identification of causal variants within these elements that contribute to disease phenotypes.

Rare Variants

Rare variants, especially those with moderate-to-high penetrance, are a significant source of disease risk and Mendelian disorders. Their discovery requires large-scale sequencing studies and sophisticated burden testing. Integration with functional genomics data is essential to interpret the pathogenicity of variants of unknown significance (VUS), particularly those in non-coding regions.

Table 1: Key Statistical Tools for Signal Detection

| Signal Type | Primary Tool(s) | Typical Input Data | Key Output Metric |
|---|---|---|---|
| Splice Variants | rMATS, MAJIQ, LeafCutter | RNA-Seq (paired-end) | Percent Spliced In (PSI), ΔPSI |
| Non-Coding Elements | HOMER, MEME-ChIP, LISA | ATAC-Seq, ChIP-Seq, Hi-C | Motif Enrichment p-value, Regulatory Potential Score |
| Rare Variants | PLINK/SEQ, SKAT, STAAR | WGS/WES VCF files | Burden Test p-value, Gene-based Association Score |

Table 2: Recommended Sequencing Depth for Signal Detection

| Assay | Minimum Recommended Depth | Depth for Rare Variant Calling | Notes |
|---|---|---|---|
| Whole Genome Sequencing (WGS) | 30x | 60x+ | 60x enables >95% sensitivity for rare SNVs. |
| Whole Exome Sequencing (WES) | 100x | 200x+ | Focus on coding regions; depth varies by capture kit. |
| RNA-Seq (Bulk) | 50M reads | N/A | 100M+ reads improves splice junction detection. |
| ATAC-Seq | 50M reads | N/A | Depth scales with genome size and complexity. |
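
The fold-coverage figures in the table translate into read counts through the relation coverage = (number of reads × read length) / genome size. The sketch below applies that arithmetic for paired-end 150 bp sequencing, assuming a genome of roughly 3 billion bp and ignoring duplicates, unmapped reads, and uneven coverage, so real runs need some headroom above these numbers.

```python
def read_pairs_for_coverage(target_coverage, genome_size_bp, read_length_bp):
    """Read pairs needed so that sequenced bases / genome size reaches the target coverage."""
    bases_per_pair = 2 * read_length_bp
    needed_bases = target_coverage * genome_size_bp
    return -(-needed_bases // bases_per_pair)  # ceiling division on integers


def mean_coverage(read_pairs, read_length_bp, genome_size_bp):
    """Mean fold coverage delivered by a given number of read pairs."""
    return read_pairs * 2 * read_length_bp / genome_size_bp


GENOME_SIZE = 3_000_000_000  # approximate human genome size used in this article

pairs_30x = read_pairs_for_coverage(30, GENOME_SIZE, 150)
pairs_60x = read_pairs_for_coverage(60, GENOME_SIZE, 150)
print(pairs_30x, pairs_60x)
```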

Experimental Protocols

Protocol 1: Integrated Detection of Disease-Associated Splice Variants

Objective: To identify and validate aberrant splice variants from integrated RNA-Seq and WGS data.

Materials:

- High-quality total RNA (RIN > 8) and matched genomic DNA.

- Strand-specific poly-A selected RNA-Seq library prep kit.

- WGS library prep kit.

- Illumina-compatible sequencing platforms.

Procedure:

- Library Preparation & Sequencing:

- Prepare RNA-Seq libraries using a stranded poly-A enrichment protocol. Sequence to a minimum depth of 100 million paired-end 150bp reads per sample.

- Prepare WGS libraries from matched gDNA. Sequence to a minimum depth of 30x coverage.

- Bioinformatic Analysis:

- a. RNA-Seq Processing:

- Align reads to the human reference (GRCh38) using a splice-aware aligner (e.g., STAR).

- Generate a transcriptome assembly per sample using StringTie2.

- Merge assemblies to create a unified transcript catalog.

- b. Splice Variant Calling:

- Use rMATS (v4.1.2) to detect significant differential splicing events between case/control groups. Key parameters: `--readLength 150 --tstat 10`.

- Filter results for events with |ΔPSI| > 0.1 and FDR < 0.05.

- c. Variant Integration:

- Call SNPs/Indels from WGS using GATK Best Practices.

- Intersect variant coordinates with splice junction boundaries (±50 bp) and splicing regulatory motifs from public databases (e.g., SpliceAid2).

- Annotate potential splice-disrupting variants (SDVs) using SnpEff/SpliceAI.

- Validation:

- Design primers flanking the alternative exon. Perform RT-PCR and analyze products via capillary electrophoresis or Sanger sequencing.
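
To make the ΔPSI filter in the splice variant calling step concrete, the sketch below computes a length-normalized PSI for a skipped-exon event and applies the |ΔPSI| > 0.1 and FDR < 0.05 cutoffs. It is a simplified illustration rather than the rMATS statistical model: the read counts, effective lengths, event identifiers, and FDR values are all hypothetical.

```python
def psi(inclusion_reads, skipping_reads, inclusion_len, skipping_len):
    """Percent spliced in: length-normalized inclusion signal over total signal."""
    inc = inclusion_reads / inclusion_len
    skip = skipping_reads / skipping_len
    if inc + skip == 0:
        return None  # event not covered in this sample
    return inc / (inc + skip)


def significant_events(events, min_abs_dpsi=0.1, max_fdr=0.05):
    """Keep events passing both the effect-size and the FDR cutoff."""
    return [
        e["id"]
        for e in events
        if abs(e["delta_psi"]) > min_abs_dpsi and e["fdr"] < max_fdr
    ]


# Toy example: the inclusion isoform has twice the effective length of the skipping isoform.
psi_case = psi(60, 20, inclusion_len=2, skipping_len=1)
psi_control = psi(20, 40, inclusion_len=2, skipping_len=1)

events = [
    {"id": "SE_1", "delta_psi": psi_case - psi_control, "fdr": 0.001},
    {"id": "SE_2", "delta_psi": 0.05, "fdr": 0.001},   # effect too small
    {"id": "SE_3", "delta_psi": -0.30, "fdr": 0.20},   # not significant
]
hits = significant_events(events)
print(hits)
```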

Protocol 2: Linking Non-Coding Variants to Target Genes

Objective: To connect non-coding rare variants from WGS to dysregulated genes via regulatory element mapping.

Materials:

- Frozen tissue or nuclei.

- ATAC-Seq assay kit (e.g., Illumina Tagment DNA TDE1 Enzyme).

- Chromatin conformation capture (Hi-C) or H3K27ac HiChIP library prep reagents.

Procedure:

- Assay for Transposase-Accessible Chromatin (ATAC-Seq):

- Isolate nuclei. Perform tagmentation reaction.

- Purify and amplify library. Sequence (paired-end 75bp). Aim for 50-100 million reads.

- Regulatory Element Identification:

- Process ATAC-Seq data: align (Bowtie2), call peaks (MACS2), and identify consensus open chromatin regions (OCRs).

- Annotate OCRs with HOMER (`annotatePeaks.pl`) to associate each with its nearest TSS and known motifs.

- Chromatin Conformation Mapping (Optional but Recommended):

- Perform Hi-C or targeted H3K27ac HiChIP to capture enhancer-promoter contacts.

- Process data using HiC-Pro or HiChIP pipeline to generate significant interaction loops.

- Integration with Genetic Data:

- Overlap rare variant coordinates (from WGS) with OCRs and/or chromatin interaction anchors.

- For each variant-containing regulatory element, assign a candidate target gene using 1) closest TSS (nearest gene), 2) chromatin interaction target (Hi-C), and 3) correlation of chromatin accessibility and target gene expression (eQTL-like analysis).

- Functional Validation (CRISPR-based):

- Design sgRNAs to target the wild-type and variant-containing regulatory element in a relevant cell model.

- Use CRISPRi (dCas9-KRAB) to repress the element or CRISPRa (dCas9-VPR) to activate it.

- Measure expression changes of the candidate target gene(s) via qRT-PCR.
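
The first of the three target-assignment rules in the integration step, closest TSS, can be sketched with a binary search over sorted TSS positions. The TSS coordinates and gene labels below are hypothetical, and the function handles a single chromosome; chromatin-contact and correlation-based assignment would be layered on top of this baseline.

```python
import bisect


def nearest_tss(position, tss_sorted):
    """Return (gene, distance) for the TSS closest to a variant position.

    tss_sorted: list of (tss_position, gene) tuples for one chromosome, sorted by position.
    Ties are resolved in favor of the upstream (lower-coordinate) TSS.
    """
    positions = [p for p, _ in tss_sorted]
    i = bisect.bisect_left(positions, position)
    # Only the neighbors on either side of the insertion point can be nearest.
    neighbors = tss_sorted[max(i - 1, 0): i + 1]
    tss_pos, gene = min(neighbors, key=lambda t: abs(t[0] - position))
    return gene, abs(tss_pos - position)


# Toy TSS annotation for one chromosome (hypothetical).
tss = [(1_000, "GENE_A"), (5_000, "GENE_B"), (9_000, "GENE_C")]

print(nearest_tss(5_600, tss))
print(nearest_tss(100, tss))
print(nearest_tss(20_000, tss))
```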

Protocol 3: Rare Variant Burden Testing in Cohort Studies

Objective: To perform gene-based association tests for rare variants in a case-control cohort.

Materials:

- WES or WGS data for entire cohort.

- High-quality phenotype data with clear case/control definitions.

Procedure:

- Variant Calling & Quality Control:

- Process WGS/WES data through a unified pipeline (GATK). Joint calling is recommended.

- Apply stringent QC: sample call rate > 98%, variant call rate > 95%, HWE p > 1e-6 in controls.

- Annotate variants using ANNOVAR or VEP.

- Variant Selection for Burden Testing:

- Filter for rare variants (MAF < 0.01 or < 0.001) in a population-matched gnomAD subset.

- Focus on predicted functional variants: protein-truncating (PTVs), missense (use REVEL score > 0.7), or splice-disrupting (SpliceAI score > 0.8).

- Statistical Burden Testing:

- Use the STAAR (variant-Set Test for Association using Annotation infoRmation) pipeline.

- Construct variant sets per gene, including flanking regulatory regions (e.g., ±10 kb).

- Run the SKAT, burden, and ACAT-V tests within the STAAR framework (combined into the omnibus STAAR-O p-value) to calculate gene-based p-values, adjusting for covariates (population PCs, sex).

- Apply Bonferroni correction for the number of genes tested.

- Integration with Functional Evidence:

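- Integrate significant genes (p < 2.5e-6, i.e., 0.05 Bonferroni-corrected for ~20,000 genes) with prior evidence: overlap with known disease genes (OMIM), haploinsufficiency/triplosensitivity (HI/TS) scores from ClinGen, gene constraint metrics from gnomAD, and results from Protocol 2 (non-coding links).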

- Prioritize genes with convergent evidence across splice variant, regulatory, and rare variant signals.
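
The logic of gene-based burden testing and the Bonferroni threshold can be illustrated with a much simpler stand-in for STAAR: a collapsing test that counts carriers of at least one qualifying rare variant per gene and compares cases with controls using a one-sided Fisher's exact test. This sketch does not adjust for covariates and is not the STAAR method; the gene names and carrier counts are invented for the example.

```python
from math import comb


def fisher_one_sided(case_carriers, n_cases, control_carriers, n_controls):
    """P(at least this many carriers among cases) under the hypergeometric null."""
    total_carriers = case_carriers + control_carriers
    n_total = n_cases + n_controls
    upper = min(total_carriers, n_cases)
    numerator = sum(
        comb(total_carriers, k) * comb(n_total - total_carriers, n_cases - k)
        for k in range(case_carriers, upper + 1)
    )
    return numerator / comb(n_total, n_cases)


def bonferroni_threshold(alpha, n_tests):
    """Per-test significance threshold controlling the family-wise error rate."""
    return alpha / n_tests


threshold = bonferroni_threshold(0.05, 20_000)  # about 2.5e-6 for ~20,000 genes

# Toy carrier counts per gene: (case carriers, control carriers); 1,000 cases and controls.
carrier_counts = {"GENE_A": (30, 2), "GENE_B": (5, 4)}
p_values = {
    gene: fisher_one_sided(cases, 1_000, controls, 1_000)
    for gene, (cases, controls) in carrier_counts.items()
}
significant = [gene for gene, p in p_values.items() if p < threshold]
print(threshold, significant)
```

Collapsing tests lose power when a gene carries both risk and protective variants, which is one motivation for the variance-component and combined tests used in frameworks such as STAAR.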

Diagrams

Diagram 1: Integrated Analysis Workflow for Novel Gene Discovery

Diagram 2: From Non-Coding Variant to Target Gene Hypothesis

The Scientist's Toolkit

Table 3: Essential Research Reagents & Resources

| Item | Function/Description | Example Product/Resource |
|---|---|---|
| Stranded mRNA-Seq Kit | Prepares RNA-Seq libraries preserving strand information, crucial for antisense transcript and overlapping gene analysis. | Illumina Stranded mRNA Prep |
| Tagment DNA TDE1 Enzyme (Tn5) | Enzyme for ATAC-Seq library prep. Simultaneously fragments DNA and adds sequencing adapters in open chromatin regions. | Illumina Tagment DNA TDE1 Enzyme |
| dCas9-KRAB Effector | CRISPR interference (CRISPRi) protein. Fused dCas9 represses transcription of target regulatory elements for functional validation. | Addgene #71236 |
| SpliceAI Plugin | In-silico tool to predict variant impact on splicing. Provides delta scores for acceptor/donor gain/loss. | Available for ANNOVAR/VEP |
| STAAR Software Package | Comprehensive rare variant association test framework that integrates variant functional annotations. | R STAAR package on CRAN |
| REVEL Score Database | Meta-predictor for pathogenicity of missense variants. Scores >0.7 indicate likely pathogenic. | dbNSFP database |
| HOMER Suite | Software for motif discovery and functional genomics analysis (ChIP-Seq, ATAC-Seq). | http://homer.ucsd.edu |

Quantitative Data Comparison of Major Public Repositories

Table 1: Core Characteristics of Featured Consortia

| Consortium/Repository | Primary Focus | Approximate Cohort Size (as of 2024) | Key Data Types | Primary Access Mechanism |
|---|---|---|---|---|
| GTEx (Genotype-Tissue Expression) | Tissue-specific gene expression & regulation | ~17,000 samples from 54 tissues (948 donors) | RNA-Seq, WGS, Histology | dbGaP authorized access; GTEx Portal for bulk data & eQTLs. |
| TCGA (The Cancer Genome Atlas) | Comprehensive molecular characterization of cancer | >20,000 primary tumor and matched normal samples across 33 cancer types | WGS, WES, RNA-Seq, DNA Methylation, Proteomics | Open-access processed data via NCI Genomic Data Commons (GDC) Data Portal; controlled access (dbGaP) for raw sequence data. |
| UK Biobank | Population-scale health, genetics, & deep phenotyping | 500,000 participants (aged 40-69 at recruitment) | WGS/WES on 500k, GWAS array, Imaging, EHR, Biomarkers | Registered researcher application via UK Biobank Access Management System (AMS). |
| All of Us | Building a diverse, longitudinal US health cohort | ~400,000+ participants with whole genome sequences (~245,000+ released) | WGS, EHR, Surveys, Wearable Data (e.g., Fitbit) | Registered researcher access via the Researcher Workbench (Controlled Tier). |

Table 2: NGS Data Scale & Integration Utility for Novel Gene Discovery

| Data Type | GTEx | TCGA | UK Biobank | All of Us | Discovery Application |
|---|---|---|---|---|---|
| Whole Genome Seq (WGS) | ~1,000 donors | Selected Pan-Cancer | 500,000 participants (ongoing) | ~245,000+ released | Non-coding variant discovery, SV analysis, population-specific alleles. |
| Whole Exome Seq (WES) | Limited | Primary for tumors | Subset (~200k) | - | Coding variant association with traits/disease (case-control). |
| RNA-Seq (Bulk) | 54 tissues, 17k samples | Tumor & matched normal (limited) | Limited, emerging | Limited, pilot phases | eQTL mapping, splicing QTLs, novel transcript discovery, pathway dysregulation. |
| Epigenomics | Limited (ATAC-Seq on subset) | DNA Methylation array | Emerging (subsets) | Planned | Identifying regulatory regions impacted by non-coding variants. |
| Linked Phenotype | Post-mortem tissue pathology | Clinical outcomes, histology | Deep & longitudinal (EHR, imaging) | Extensive & longitudinal | Connecting molecular profiles to complex, quantitative health trajectories. |

Experimental Protocols for Integrated Discovery

Protocol 2.1: Cross-Consortia Expression Quantitative Trait Locus (eQTL) Meta-Analysis for Novel Gene-Trait Association

Objective: To discover novel gene-disease associations by identifying trait-associated genetic variants that colocalize with tissue/cell-type-specific expression QTLs from GTEx, leveraging large-scale GWAS summary statistics from UK Biobank or All of Us.

Materials & Software:

- GWAS Summary Statistics: From UK Biobank or All of Us Researcher Workbench analysis.

- eQTL Catalog/Data: GTEx v8 or later eQTL summary statistics (via GTEx Portal or eQTL Catalogue).

- Compute Environment: High-performance computing cluster or cloud (e.g., Terra, DNAnexus).

- Software/Tools: `coloc` (R package), `QTLtools`, `LocusCompareR`, `PLINK`, `FUMA`.

Procedure:

- Data Harmonization: Extract GWAS summary statistics for a genomic region of interest (e.g., 1 Mb around a lead SNP). Lift over coordinates to GRCh38 if necessary.

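- eQTL Extraction: For the same region, select genes with a significant cis-eQTL (e.g., FDR < 0.05) in relevant GTEx tissues (e.g., artery, heart, liver for cardiovascular traits), then extract the full eQTL summary statistics for every variant in the region for those genes. Colocalization needs the complete association profile, not only the significant variants.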

- Colocalization Analysis: Using the `coloc` R package, perform Bayesian colocalization analysis between the GWAS and each tissue's eQTL dataset. Treat a posterior probability PP4 > 0.80 as support for both signals sharing a single causal variant.

- Validation in Disease Context: For colocalized signals, query the TCGA data via the GDC API to assess if the candidate gene shows differential expression or somatic mutation patterns in related cancer phenotypes (e.g., colocalized gene for lipid trait in liver -> check in HCC).

- Functional Enrichment: Use tools like `GENE2FUNC` from FUMA to test enrichment of colocalized genes in known biological pathways.
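
To show what the PP4 statistic in the colocalization step measures, the following Python sketch re-implements the approximate-Bayes-factor calculation behind single-causal-variant colocalization. It is an illustrative re-derivation, not the `coloc` package itself: the prior probabilities (p1 = p2 = 1e-4, p12 = 1e-5) and prior effect standard deviation (0.15) are assumed to match commonly cited defaults, and the three-variant region is a toy input.

```python
import math


def log_sum(values):
    """Numerically stable log(sum(exp(v)))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def log_diff(a, b):
    """log(exp(a) - exp(b)); returns -inf when the difference is not positive."""
    ratio = math.exp(b - a) if a > b else 1.0
    if ratio >= 1.0:
        return float("-inf")
    return a + math.log1p(-ratio)


def wakefield_labf(beta, se, prior_sd=0.15):
    """Log approximate Bayes factor for one variant (Wakefield's approximation)."""
    z = beta / se
    r = prior_sd ** 2 / (prior_sd ** 2 + se ** 2)
    return 0.5 * (math.log(1 - r) + r * z * z)


def coloc_posteriors(labf1, labf2, p1=1e-4, p2=1e-4, p12=1e-5):
    """Posterior probabilities [PP0, PP1, PP2, PP3, PP4] for two traits in one region."""
    s1, s2 = log_sum(labf1), log_sum(labf2)
    s12 = log_sum([a + b for a, b in zip(labf1, labf2)])
    log_h = [
        0.0,                                                  # H0: no association
        math.log(p1) + s1,                                    # H1: trait 1 only
        math.log(p2) + s2,                                    # H2: trait 2 only
        math.log(p1) + math.log(p2) + log_diff(s1 + s2, s12), # H3: distinct causal variants
        math.log(p12) + s12,                                  # H4: shared causal variant
    ]
    total = log_sum(log_h)
    return [math.exp(h - total) for h in log_h]


# Toy region with three variants; all standard errors 0.05 (hypothetical values).
se = 0.05
gwas = [wakefield_labf(b, se) for b in (0.30, 0.0, 0.0)]
eqtl_shared = [wakefield_labf(b, se) for b in (0.30, 0.0, 0.0)]
eqtl_distinct = [wakefield_labf(b, se) for b in (0.0, 0.30, 0.0)]

pp_shared = coloc_posteriors(gwas, eqtl_shared)
pp_distinct = coloc_posteriors(gwas, eqtl_distinct)
print(round(pp_shared[4], 3), round(pp_distinct[3], 3))
```

When both signals peak at the same variant, nearly all posterior mass falls on H4; when they peak at different variants, it shifts to H3, which is why PP4 alone is used as the colocalization criterion.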

Protocol 2.2: Splicing-QTL (sQTL) Discovery in Disease Using Integrated RNA-Seq from GTEx and TCGA

Objective: To identify genetic variants associated with alternative splicing events in normal tissue (GTEx) and investigate their dysregulation in matched tumor tissue (TCGA).

Materials & Software:

- RNA-Seq BAMs/Aligned Data: From GTEx (via dbGaP) and TCGA (via GDC).

- Genotype Data: Corresponding donor genotypes (VCF files).

- Software/Tools: `LeafCutter` (for splicing quantification), `QTLtools` (for sQTL mapping), `sQTLseekeR2`, `STAR` aligner, `FastQC`, `MultiQC`.

Procedure:

- Splicing Phenotype Quantification:

- Process RNA-Seq BAMs through `LeafCutter` to identify intron excision events and calculate percent spliced in (PSI) values per sample.

- Create a phenotype matrix of cluster PSI values.

- sQTL Mapping in Normal Tissue (GTEx):

- Using `QTLtools cis` mode, test association between genotypes (within 1 Mb of cluster) and PSI values per GTEx tissue.

- Apply multiple testing correction (e.g., permutation testing).

- Differential Splicing Analysis in Tumor Tissue (TCGA):

- Group TCGA samples by the genotype of the lead sQTL variant identified in GTEx (using matched normal genotypes where available).

- Compare PSI values between genotype groups in the tumor tissue using a non-parametric test (Mann-Whitney U). Adjust for tumor purity and key covariates.

- Survival Analysis: For significant differential splicing events in TCGA, perform Kaplan-Meier survival analysis (overall/disease-free survival) based on splicing cluster usage (dichotomized by median PSI).
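
The survival step above can be sketched as a median split on PSI followed by a Kaplan-Meier estimate per group. The code below is a minimal pure-Python illustration with invented follow-up times and event indicators; it omits confidence intervals and the log-rank test that a real analysis would report.

```python
from statistics import median


def split_by_median(psi_values):
    """Label each sample 'high' or 'low' relative to the median PSI."""
    m = median(psi_values)
    return ["high" if v > m else "low" for v in psi_values]


def kaplan_meier(times, events):
    """Return [(time, survival probability)] at each distinct event time.

    events: 1 if the event (e.g., death) was observed, 0 if the sample was censored.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = removed = 0
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= removed  # both events and censored samples leave the risk set
    return curve


# Toy cohort (hypothetical): PSI, follow-up in months, event indicator.
psi_values = [0.1, 0.2, 0.3, 0.8, 0.9, 0.7]
times = [30, 25, 40, 5, 8, 12]
events = [0, 1, 0, 1, 1, 1]

groups = split_by_median(psi_values)
km = {}
for label in ("high", "low"):
    idx = [i for i, g in enumerate(groups) if g == label]
    km[label] = kaplan_meier([times[i] for i in idx], [events[i] for i in idx])
print(km)
```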

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 3: Key Reagent Solutions and Computational Platforms for Integrated Consortium Analysis

| Item / Solution | Function / Purpose in Analysis |

|---|---|

| dbGaP Authorized Access | Mandatory data access gateway for controlled genomic data from NIH-funded projects (GTEx, some TCGA). Requires institutional certification and project approval. |

| NCI Genomic Data Commons (GDC) Data Portal | Unified platform for downloading TCGA and other cancer genomics data. Provides harmonized, analysis-ready data, APIs, and visualization tools. |

| UK Biobank Research Analysis Platform (RAP) | Cloud-based environment (DNAnexus) allowing approved researchers to analyze the full WGS/array and phenotypic data without massive local download. |

| All of Us Researcher Workbench | Cloud-based, controlled-tier workspace with cohort building tools, Jupyter notebooks, RStudio, and direct access to curated datasets (EHR, WGS, surveys). |

| Terra / AnVIL Cloud Platform | Broad Institute/NHLBI cloud platform hosting GTEx, TOPMed, and other datasets. Enables scalable workflow execution (WDL/Cromwell) and collaborative analysis. |

| Coloc R Package | Core statistical tool for Bayesian colocalization testing, assessing whether two genetic traits share a common causal variant. |

| QTLtools | Efficient and flexible command-line tool suite for QTL mapping in various molecular phenotypes (eQTL, sQTL, caQTL). Handles large-scale datasets. |

| GATK Best Practices Pipelines | Industry-standard workflows (implemented in WDL on Terra, etc.) for germline and somatic variant discovery from WGS/WES data, ensuring reproducibility. |

Visualization Diagrams (Graphviz DOT Scripts)

Title: Integrated sQTL Discovery Workflow Using GTEx and TCGA

Title: Gene-Trait Colocalization and Validation Pipeline

The discovery of novel genes and therapeutic targets from Next-Generation Sequencing (NGS) data requires a rigorous, multi-stage pipeline that moves from computational observation to biologically testable hypotheses. This process bridges large-scale omics data with mechanistic molecular biology. The core challenge is to transform statistical associations derived from integrated genomic, transcriptomic, or epigenomic datasets into hypotheses with clear biological plausibility, which can then be validated experimentally to drive drug discovery.

Core Quantitative Data Landscape from Integrated NGS Studies

The following tables summarize key quantitative benchmarks and data types central to hypothesis generation in novel gene discovery.

Table 1: Typical Output Metrics from Integrated Multi-Omics Analysis Pipelines

| Data Type | Typical Volume per Sample | Key Analytical Metrics | Common Significance Threshold |

|---|---|---|---|

| Whole Genome Sequencing (WGS) | 90-150 GB (30-50x coverage) | Coverage Depth, SNP/Indel Count, SV Count | Q-score >30, p-adj < 0.05 |

| RNA-Seq (Transcriptome) | 20-40 GB (50-100M reads) | Differentially Expressed Genes (DEGs), FPKM/TPM | \|log2FC\| > 1, p-adj < 0.05 |

| ChIP-Seq (Epigenomics) | 30-50 GB (50M reads) | Peak Count, Peak-Gene Associations | p-value < 1e-5, FDR < 0.01 |

| ATAC-Seq (Chromatin Access) | 20-30 GB (50M reads) | Accessible Region Count | p-value < 1e-5 |

| Single-Cell RNA-Seq | 50-100 GB (10k cells) | Cells Clustered, DEGs per Cluster | p-adj < 0.05 |

Table 2: Statistical Benchmarks for Prioritizing Candidate Genes from NGS Integration

| Prioritization Filter | Typical Cut-off Value | Rationale |

|---|---|---|

| Association p-value (GWAS/eQTL) | < 5 x 10^-8 (GWAS), < 1e-5 (eQTL) | Genome-wide significance |

| Pathway Enrichment FDR | < 0.05 | Corrects for multiple hypothesis testing |

| Protein-Protein Interaction (PPI) Degree | Top 10% of network | Indicates hub gene status |

| Evolutionary Conservation (PhyloP) | Score > 2.0 | Indicates functional constraint |

| Loss-of-Function (LoF) Observed/Expected | < 0.35 | Suggests intolerance to inactivation |
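As a rough illustration of how the cut-offs in Table 2 might be applied together, the sketch below counts how many filters each candidate passes and ranks genes by that count. The gene names and values are hypothetical, the PPI-degree filter is omitted because it needs a full network, and counting passed filters is just one simple way to combine the criteria.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    gene: str
    gwas_p: float          # association p-value
    enrichment_fdr: float  # pathway enrichment FDR
    phylop: float          # conservation score
    lof_oe: float          # LoF observed/expected ratio

def filters_passed(c):
    """Return the names of the Table 2 cut-offs this candidate satisfies."""
    checks = {
        "gwas_p < 5e-8": c.gwas_p < 5e-8,
        "enrichment_fdr < 0.05": c.enrichment_fdr < 0.05,
        "phylop > 2.0": c.phylop > 2.0,
        "lof_oe < 0.35": c.lof_oe < 0.35,
    }
    return [name for name, ok in checks.items() if ok]

# Hypothetical candidates for illustration only.
candidates = [
    Candidate("XYZ", 3e-9, 0.01, 3.1, 0.20),
    Candidate("ABC", 2e-6, 0.20, 0.4, 0.90),
    Candidate("DEF", 1e-8, 0.03, 1.2, 0.50),
]

# Rank by number of filters passed (stable sort keeps input order on ties).
ranked = sorted(candidates, key=lambda c: len(filters_passed(c)), reverse=True)
ranked_genes = [c.gene for c in ranked]
```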

Application Notes & Detailed Protocols

Protocol 1: Integrated NGS Data Analysis for Hypothesis Generation

Objective: To identify a high-confidence, novel candidate gene from integrated genomic and transcriptomic data for a disease phenotype.

Materials:

- NGS datasets (e.g., case-control WGS, RNA-Seq).

- High-performance computing cluster.

- Bioinformatics software (see Toolkit).

Procedure:

- Data Alignment & Processing:

- Align WGS reads to a reference genome (e.g., GRCh38) using BWA-MEM or similar. Call variants (SNVs, Indels, SVs) using GATK best practices.

- Align RNA-Seq reads using STAR. Quantify gene expression with featureCounts or RSEM.

- Differential Analysis & Integration:

- Perform differential expression analysis (DESeq2, edgeR). Identify DEGs.

- Integrate genomic variants (e.g., non-coding variants in regulatory regions from WGS) with DEGs using tools like QTL mapping (cis-eQTL analysis) or regulatory prediction (HaploReg, RegulomeDB).

- Prioritize genes that are both differentially expressed and contain/have linked variants of high impact in the patient cohort.

- Functional Enrichment & Network Analysis:

- Subject the prioritized gene list to pathway enrichment analysis (g:Profiler, Enrichr).

- Construct a protein-protein interaction (PPI) network around the candidate genes using STRING or BioGRID. Identify key hub genes.

- Cross-reference with known disease genes from databases (OMIM, DisGeNET).

- Hypothesis Formulation:

- Synthesize results into a testable hypothesis. Example: "Recurrent non-coding variants in enhancer region E123 are associated with decreased expression of novel gene XYZ, which functions within the TGF-β signaling pathway, contributing to disease pathogenesis."

Expected Outcome: A shortlist of 3-5 high-priority novel candidate genes with integrated genomic support and hypothesized biological roles.
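The prioritization step in Protocol 1 (differentially expressed and carrying linked high-impact variants) can be sketched as follows. This is a minimal sketch under stated assumptions: the gene names, fold changes, and p-values are hypothetical; the Benjamini-Hochberg adjustment is written out by hand for transparency, whereas DESeq2 or edgeR would normally supply adjusted p-values directly.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical differential expression results.
genes = ["XYZ", "ABC", "DEF", "GHI"]
log2fc = [-1.8, 0.4, 2.2, -1.2]
pvals = [0.005, 0.01, 0.03, 0.04]
padj = benjamini_hochberg(pvals)

# DEG definition from Table 1: |log2FC| > 1 and adjusted p < 0.05.
degs = {g for g, fc, q in zip(genes, log2fc, padj) if abs(fc) > 1 and q < 0.05}

# Hypothetical genes with linked high-impact variants from the WGS arm.
genes_with_variants = {"XYZ", "GHI", "JKL"}

prioritized = sorted(degs & genes_with_variants)
```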

Protocol 2: In Vitro Functional Validation of a Novel Candidate Gene

Objective: To experimentally test the biological plausibility of a hypothesis generated from Protocol 1, focusing on gene XYZ.

Materials:

- Cell line relevant to disease.

- siRNA/shRNA or CRISPR-Cas9 components for knockdown/knockout.

- qPCR reagents, Western blot supplies.

- Functional assay kits (e.g., proliferation, apoptosis, migration).

Procedure:

- Modulate Candidate Gene Expression:

- Knockdown/Knockout: Transfect cells with siRNA targeting XYZ or use CRISPR-Cas9 to generate a knockout clonal line. Include negative control (scramble siRNA/non-targeting gRNA).

- Overexpression: Clone XYZ cDNA into an expression vector and transfect.

- Confirm Modulation:

- Assess mRNA level by qRT-PCR (48-72h post-transfection).

- Assess protein level by Western blot.

- Phenotypic Assays:

- Perform assays pertinent to the hypothesized pathway/disease mechanism (e.g., CellTiter-Glo for proliferation, Caspase-3/7 assay for apoptosis, transwell assay for migration).

- Compare phenotypes between XYZ-modulated and control cells.

- Rescue Experiment:

- In XYZ-knockdown cells, re-express a siRNA-resistant wild-type XYZ cDNA.

- Demonstrate that reintroduction of XYZ restores the wild-type phenotype, confirming specificity.

Expected Outcome: Data supporting or refuting the hypothesized functional role of XYZ in the relevant cellular phenotype.
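For the qRT-PCR confirmation step, knockdown efficiency is commonly summarized with the comparative Ct (2^-ΔΔCt) calculation. The protocol above does not name a quantification method, so treating it as ΔΔCt is an assumption, as are the Ct values, the use of a single reference gene, and the implied near-100% primer efficiency.

```python
def relative_expression(ct_target_treated, ct_ref_treated,
                        ct_target_control, ct_ref_control):
    """Fold change of the target gene in treated vs control cells (2^-ddCt)."""
    delta_treated = ct_target_treated - ct_ref_treated
    delta_control = ct_target_control - ct_ref_control
    return 2 ** -(delta_treated - delta_control)

# Hypothetical mean Ct values: XYZ siRNA vs scramble control,
# normalized to a housekeeping reference gene.
fold_change = relative_expression(
    ct_target_treated=26.0, ct_ref_treated=18.0,
    ct_target_control=24.0, ct_ref_control=18.0,
)
knockdown_percent = (1 - fold_change) * 100
```

With these illustrative numbers, the target is detected two cycles later in knockdown cells after normalization, which corresponds to a quarter of control expression.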

Visualization of Core Concepts

Title: The NGS Hypothesis Generation & Validation Pipeline

Title: Hypothesized Role of Novel Gene in a Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Hypothesis-Driven NGS Research

| Category | Item/Reagent | Function & Application |

|---|---|---|

| NGS Library Prep | Poly(A) Selection / Ribosomal Depletion Kits | Isolates mRNA or total RNA for RNA-Seq to analyze gene expression. |

| NGS Library Prep | Tagmentase (Tn5) & Kits (e.g., Illumina Nextera) | Facilitates rapid, simultaneous fragmentation and tagging of DNA for WGS or ATAC-Seq libraries. |

| Functional Validation | CRISPR-Cas9 Ribonucleoprotein (RNP) Complex | Enables precise knockout of candidate genes for loss-of-function studies. |

| Functional Validation | siRNA/shRNA Libraries & Transfection Reagents | For rapid, transient knockdown of gene expression to assess phenotypic consequences. |

| Functional Validation | Lentiviral Expression Vectors | For stable overexpression or knockdown of candidate genes in hard-to-transfect cells. |

| Phenotyping | Cell Viability/Proliferation Assays (e.g., CellTiter-Glo) | Quantifies changes in cell number/metabolic activity upon gene modulation. |

| Phenotyping | Apoptosis Detection Kits (Annexin V, Caspase) | Measures programmed cell death, a key phenotype in many diseases. |

| Phenotyping | Pathway-Specific Reporter Assays (Luciferase, GFP) | Tests the activity of specific signaling pathways hypothesized to involve the novel gene. |

| Analysis | Commercial NGS Analysis Suites (e.g., Partek Flow, QIAGEN CLC) | User-friendly, integrated platforms for multi-omics data processing and statistical analysis. |

| Analysis | Cloud Computing Credits (AWS, Google Cloud) | Provides scalable computational power for large-scale NGS data alignment and variant calling. |

Building the Pipeline: A Step-by-Step Guide to Integrated NGS Analysis

Within the paradigm of novel gene discovery through Next-Generation Sequencing (NGS) data integration, the construction of a robust, reproducible, and multi-modal computational workflow is paramount. This architecture must transform raw sequencing data (FASTQ) into integrated, multi-layer feature matrices that encapsulate genomic, transcriptomic, and epigenomic states. These matrices serve as the foundational data structure for downstream integrative bioinformatics and machine learning analyses aimed at identifying novel gene candidates and their regulatory mechanisms in disease contexts, directly informing target discovery in drug development.

Application Notes: Core Workflow Architecture

The end-to-end pipeline is modular, allowing for parallel processing and integration at key junctions. The primary layers are: Primary Analysis (Sequencing to Aligned Reads), Secondary Analysis (Aligned Reads to Core Features), and Tertiary Analysis (Feature Integration & Matrix Construction).

Table 1: Quantitative Metrics for Key Workflow Stages

| Workflow Stage | Key Tool/Platform | Typical Compute Time* | Key Output | Critical QC Metric |

|---|---|---|---|---|

| Raw Data QC | FastQC, MultiQC | 0.5-2 hrs / sample | HTML Report | Per base sequence quality > Q28 |

| Read Trimming | Trimmomatic, fastp | 1-3 hrs / sample | Trimmed FASTQ | >90% reads retained post-trim |

| Alignment (DNA-seq) | BWA-MEM2, Bowtie2 | 2-8 hrs / sample | BAM/SAM file | Alignment Rate > 85% |

| Alignment (RNA-seq) | STAR, HISAT2 | 1-4 hrs / sample | Sorted BAM | Exonic vs Intronic reads ratio |

| Variant Calling | GATK Best Practices | 4-12 hrs / cohort | VCF file | Transition/Transversion Ratio ~2.1 |

| Gene Expression | featureCounts, HTSeq | 0.5-1 hr / sample | Count Matrix | R^2 > 0.9 in sample correlation |

| Peak Calling (ATAC/ChIP) | MACS2 | 1-3 hrs / sample | BED file | FRiP score > 0.01 (ChIP), > 0.2 (ATAC) |

| Feature Matrix Integration | R/Python (custom) | Variable | Multi-omics Matrix | Concordance (e.g., eQTL overlap) |

*Times are approximate for mammalian genomes on high-performance compute nodes.

Experimental Protocols

Protocol 3.1: Integrated RNA-seq & ATAC-seq Processing for Regulatory Feature Matrix

Objective: To generate a coordinated gene expression and chromatin accessibility feature matrix from matched RNA-seq and ATAC-seq data.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Parallel Sample Processing:

- RNA-seq: Trim raw FASTQs (Trimmomatic: ILLUMINACLIP:TruSeq3-PE-2.fa:2:30:10, LEADING:3, TRAILING:3, SLIDINGWINDOW:4:15, MINLEN:36). Align to the reference genome (STAR --runMode alignReads --genomeDir [Index] --readFilesIn [R1] [R2]). Generate gene-level counts (featureCounts -T 8 -p -t exon -g gene_id -a [GTF] -o counts.txt [BAM]).

- ATAC-seq: Trim adapters (fastp --detect_adapter_for_pe). Align (bowtie2 -X 2000 --very-sensitive -x [Index] -1 [R1] -2 [R2]). Filter for properly paired, high-quality reads (samtools view -f 2 -F 1804 -q 30) and exclude mitochondrial alignments in a separate step, since these flags alone do not remove them. Shift reads for Tn5 offset (alignmentSieve --ATACshift). Call peaks (macs2 callpeak -t [shiftedBAM] -f BAMPE --nomodel --shift -100 --extsize 200 -n [output]). Note that in BAMPE mode MACS2 builds the pileup from the observed fragments, so the --shift and --extsize settings are expected to take effect only with -f BAM; check the behavior of the installed MACS2 version before relying on them.

- Quality Control & Normalization:

- RNA-seq: Assess with MultiQC. Normalize counts using DESeq2's median of ratios method (for between-sample) or convert to Transcripts Per Million (TPM) for gene-level features.

- ATAC-seq: Calculate Fraction of Reads in Peaks (FRiP). Create a consensus peakset across all samples (bedtools merge). Generate a raw count matrix of reads in consensus peaks per sample.

- Feature Matrix Construction:

- Create a sample-by-feature data frame.

- Layer 1 (Expression): Incorporate normalized gene expression values (e.g., log2(TPM+1)) for all annotated genes.

- Layer 2 (Accessibility): Incorporate normalized (e.g., DESeq2 varianceStabilizingTransformation) chromatin accessibility counts for all consensus peaks.

- Layer 3 (Linked Features): Annotate ATAC-seq peaks to genes (e.g., using ChIPseeker) and create derived features such as "promoter accessibility" by averaging normalized counts of peaks within ±2kb of each gene's TSS.

- The final matrix is an [N_samples x (M_genes + K_peaks + L_linked_features)] table, saved in HDF5 or tab-separated format for downstream analysis.
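The three layers of the feature matrix can be illustrated for a single sample as below. This is a sketch only: the gene names, coordinates, and signal values are hypothetical, the column-naming scheme (expr:, peak:, promoter_acc:) is an invented convention, and peak signals are assumed to be already normalized.

```python
import math

def promoter_accessibility(tss, peaks, window=2000):
    """Mean signal of peaks overlapping TSS +/- window on the same chromosome."""
    chrom, pos = tss
    signals = [sig for (p_chrom, start, end, sig) in peaks
               if p_chrom == chrom and start <= pos + window and end >= pos - window]
    return sum(signals) / len(signals) if signals else float("nan")

def build_row(tpm_by_gene, peaks, tss_by_gene):
    """Assemble one sample's features: expression, peaks, linked features."""
    row = {}
    for gene, tpm in tpm_by_gene.items():           # Layer 1: log2(TPM+1)
        row[f"expr:{gene}"] = math.log2(tpm + 1)
    for chrom, start, end, sig in peaks:             # Layer 2: peak signal
        row[f"peak:{chrom}:{start}-{end}"] = sig
    for gene, tss in tss_by_gene.items():            # Layer 3: promoter accessibility
        row[f"promoter_acc:{gene}"] = promoter_accessibility(tss, peaks)
    return row

# Hypothetical inputs for one sample.
tpm = {"XYZ": 7.0, "ABC": 0.0}
peaks = [("chr1", 9500, 10200, 4.0),
         ("chr1", 11500, 11900, 6.0),
         ("chr1", 50000, 50400, 9.0)]
tss = {"XYZ": ("chr1", 10000), "ABC": ("chr2", 500)}

row = build_row(tpm, peaks, tss)
```

Stacking one such row per sample gives the N_samples table described above; genes with no peak near the TSS receive a missing value rather than zero so that absence of a peak call is not confused with measured inaccessibility.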

Protocol 3.2: Somatic Variant Integration into a Pan-Omics Matrix

Objective: To integrate somatic single nucleotide variants (SNVs) and small indels with expression and accessibility features.

Procedure:

- Variant Calling: For tumor/normal pairs, follow GATK Mutect2 best practices for WES/WGS data to generate a high-confidence somatic VCF.

- Variant Annotation: Use SnpEff or VEP to annotate variants with genomic consequences (e.g., missense, stop_gained, splice_region).

- Feature Encoding:

- Create a binary (0/1) matrix layer of shape [N_samples x P_genes], where 1 indicates the presence of a predicted damaging somatic variant (missense, nonsense, frameshift) in that gene for that sample.

- Create a separate quantitative layer for variant allele frequency (VAF) for selected high-impact variants.

- Matrix Merging: Horizontally concatenate the variant binary/VAF matrices with the matrix from Protocol 3.1 using sample IDs as the primary key. Missing values (e.g., a sample without variant data) are coded as NA.
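The binary encoding and sample-keyed merge can be sketched as follows. The sample IDs, genes, and variant calls are hypothetical; the consequence labels follow Sequence Ontology terms as reported by VEP, and Python's None stands in for NA.

```python
# Consequence terms treated as damaging (missense, nonsense, frameshift).
DAMAGING = {"missense_variant", "stop_gained", "frameshift_variant"}

def encode_variants(calls, samples, genes):
    """calls: iterable of (sample, gene, consequence) -> sample -> gene -> 0/1."""
    matrix = {s: {g: 0 for g in genes} for s in samples}
    for sample, gene, consequence in calls:
        if sample in matrix and gene in matrix[sample] and consequence in DAMAGING:
            matrix[sample][gene] = 1
    return matrix

def merge_layers(base, variant_matrix, genes):
    """Append mutation columns to each sample row, keyed on sample ID."""
    merged = {}
    for sample, features in base.items():
        row = dict(features)
        for g in genes:
            # Samples without variant data get None (NA), not 0.
            row[f"mut:{g}"] = variant_matrix[sample][g] if sample in variant_matrix else None
        merged[sample] = row
    return merged

# Hypothetical annotated somatic calls; T3 has no variant data at all.
genes = ["TP53", "KRAS"]
calls = [("T1", "TP53", "missense_variant"),
         ("T2", "TP53", "synonymous_variant"),
         ("T2", "KRAS", "stop_gained")]
variant_matrix = encode_variants(calls, samples=["T1", "T2"], genes=genes)

# Hypothetical rows from the Protocol 3.1 matrix.
base = {"T1": {"expr:XYZ": 3.0}, "T2": {"expr:XYZ": 1.2}, "T3": {"expr:XYZ": 2.4}}
merged = merge_layers(base, variant_matrix, genes)
```

Keeping NA distinct from 0 matters downstream: a 0 asserts that the gene was assayed and found wild-type, while NA records that the sample was never profiled.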

Visualizations

Diagram 1: End-to-End Workflow Architecture

Diagram Title: End-to-End NGS Feature Matrix Pipeline.

Diagram 2: Multi-Layer Feature Matrix Structure

Diagram Title: Multi-Layer Feature Matrix Composition.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Computational Tools for Feature Matrix Generation

| Item | Supplier / Platform | Function in Workflow |

|---|---|---|

| Illumina Sequencing Kits (NovaSeq 6000) | Illumina | Generate raw paired-end FASTQ files. Foundation of all data streams. |

| TruSeq RNA Library Prep Kit | Illumina | Prepares stranded RNA-seq libraries for transcriptome analysis. |

| Nextera DNA Library Prep Kit | Illumina | Used for ATAC-seq library preparation, enabling chromatin accessibility profiling. |

| KAPA HyperPrep Kit | Roche | Robust library preparation for WES/WGS, ensuring uniform coverage for variant calling. |

| IDT for Illumina - Unique Dual Indexes | Integrated DNA Technologies | Enables high-plex, sample multiplexing to reduce batch effects in multi-sample matrices. |

| RNA Integrity Number (RIN) Reagents (Bioanalyzer RNA Kit) | Agilent Technologies | Assess RNA quality pre-library prep; critical for reliable expression features. |

| Cell Lysis & Transposition Buffer (for ATAC-seq) | Homemade or Commercial | Lyses cells and uses Tn5 transposase to fragment accessible DNA, the key ATAC-seq reaction. |

| Reference Genome & Annotation (GRCh38.p14, GENCODE v44) | GENCODE Consortium | Provides the coordinate and feature framework for alignment, quantification, and annotation. |

| High-Performance Compute Cluster (Linux, Slurm/SGE) | Local HPC or Cloud (AWS, GCP) | Essential computational infrastructure for parallel processing of NGS data. |

| Containerized Software (Docker/Singularity Images) | BioContainers, Docker Hub | Ensures version control, reproducibility, and portability of all tools in the workflow. |

Within the context of a thesis on NGS data integration for novel gene discovery, the challenge lies in synthesizing heterogeneous high-dimensional data (e.g., transcriptomics, epigenomics, proteomics) to uncover coherent biological signals. This document provides application notes and protocols for three core computational tools: MOFA+, Similarity Network Fusion (SNF), and Multi-View Clustering, which are pivotal for integrative analysis.

Application Notes & Protocols

MOFA+ (Multi-Omics Factor Analysis v2)

Application Note: MOFA+ is a statistical framework for the unsupervised integration of multi-omic data sets. It disentangles the variation in the data into a set of (potentially) interpretable latent factors, each capturing a distinct source of biological or technical variability. In novel gene discovery, it identifies factors associated with disease states that are driven by coordinated changes across omics layers, highlighting key regulatory genes.

Quantitative Data Summary:

Table 1: Typical MOFA+ Output Metrics for a Multi-Omics NGS Study

| Metric | Description | Typical Range/Value in Analysis |

|---|---|---|

| Number of Factors (K) | Latent dimensions explaining data variance | 5-15 (data-dependent) |

| Total Variance Explained (R²) | Sum of variance explained across all views & factors | 50-80% |

| Variance Explained per View | Proportion of variance explained in each data type (e.g., RNA-seq, ATAC-seq) | 10-40% per view |

| Factor 1 Variance | Variance captured by the primary factor, often linked to key phenotype | 15-30% |

| Number of Strongly Loaded Features | Features (genes, peaks) with significant weight on a factor | 100s-1000s per factor |

Experimental Protocol: MOFA+ Analysis for Gene Discovery

- Input Data Preparation: For each omics view (e.g., RNA-seq, methylation), generate a samples x features matrix. Features are genes or genomic regions. Normalize and scale data appropriately (e.g., log-CPM for RNA-seq, M-values for methylation).

- Model Training: Use the mofapy2 or MOFA2 (R package) interface.

- Factor Interpretation: Correlate factor values with sample metadata (e.g., disease status) to annotate factors. Extract features with high absolute weights (factor_loadings) for each factor.

- Downstream Analysis: For a disease-associated factor, perform pathway enrichment analysis on top-weighted genes from each view. Intersect top features across views to identify multi-omics driver genes.
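The interpretation and downstream steps can be illustrated on exported factor values and weights without calling the MOFA+ packages themselves. Everything below is hypothetical: the factor values, weights, and gene names are invented, and the ATAC-seq weights are assumed to have been mapped to gene identifiers beforehand so that views can be intersected.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def top_features(weights, n):
    """Names of the n features with the largest absolute weight."""
    return sorted(weights, key=lambda f: abs(weights[f]), reverse=True)[:n]

# Hypothetical factor values for six samples and their disease status.
disease_status = [0, 0, 0, 1, 1, 1]
factors = {
    "Factor1": [-1.2, -0.8, -1.0, 0.9, 1.1, 1.0],
    "Factor2": [0.3, -0.2, 0.1, 0.2, -0.3, -0.1],
}
correlations = {name: pearson(vals, disease_status) for name, vals in factors.items()}
disease_factor = max(correlations, key=lambda f: abs(correlations[f]))

# Hypothetical per-view weights on the disease-associated factor.
rna_weights = {"XYZ": 0.9, "ABC": -0.1, "DEF": -0.7}
atac_gene_weights = {"XYZ": -0.8, "DEF": 0.05, "GHI": 0.6}

shared_drivers = set(top_features(rna_weights, 2)) & set(top_features(atac_gene_weights, 2))
```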

MOFA+ Integration Workflow for Gene Discovery

Similarity Network Fusion (SNF)

Application Note: SNF constructs a single patient similarity network by fusing networks computed from different omics data types. It is robust to noise and scale differences. In cohort-based NGS studies, SNF enables the identification of disease subtypes that are consistent across multiple molecular layers, within which novel subtype-specific genes can be discovered.

Quantitative Data Summary:

Table 2: SNF Algorithm Parameters and Typical Outcomes

| Parameter/Outcome | Description | Common Setting/Value |

|---|---|---|

| K (Neighbor Size) | Number of nearest neighbors for affinity graph | 20-30 |

| μ (Hyperparameter) | Scaling factor for weight normalization | 0.3-0.8 |

| Iteration (T) | Number of fusion iterations | 10-20 |

| Final Network Clusters | Number of patient subgroups identified | 3-5 (phenotype-dependent) |

| Silhouette Score | Measure of cluster cohesion/separation | >0.2 indicates structure |

Experimental Protocol: Subtyping via SNF

- Similarity Network Construction: For each omics view, calculate a patient-to-patient similarity matrix (e.g., using Euclidean distance converted to affinity via a heat kernel).

- Network Fusion: Apply the SNF algorithm to iteratively fuse the networks.

- Clustering: Perform spectral clustering on the fused network to obtain patient subgroups.

- Differential Analysis: For each identified subtype vs. others, perform differential expression/analysis on each omics data type separately. Integrate differential gene lists to find consensus markers.
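The first protocol step, converting Euclidean distances into affinities with a locally scaled heat kernel, can be sketched as below. This follows the general form of the scaled exponential kernel used in SNF, but the exact normalization in SNFtool or snfpy may differ, so it should be read as an illustrative assumption. The patient profiles are hypothetical, and the cross-network diffusion and spectral clustering steps are not shown.

```python
from math import dist, exp

def affinity_matrix(profiles, k=2, mu=0.5):
    """Patient-by-patient affinities from Euclidean distance with local scaling."""
    n = len(profiles)
    d = [[dist(profiles[i], profiles[j]) for j in range(n)] for i in range(n)]
    # Mean distance from each patient to its k nearest neighbors (self excluded).
    knn_mean = [sum(sorted(d[i][j] for j in range(n) if j != i)[:k]) / k
                for i in range(n)]
    w = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            # Local scale combines both neighborhoods and the pair distance.
            eps = (knn_mean[i] + knn_mean[j] + d[i][j]) / 3
            w[i][j] = exp(-d[i][j] ** 2 / (mu * eps)) if eps > 0 else 1.0
    return w

# Hypothetical two-feature profiles for four patients in one omics view.
patients = [[0.0, 0.1], [0.1, 0.0], [5.0, 5.1], [5.1, 5.0]]
w = affinity_matrix(patients, k=2, mu=0.5)
```

One such matrix is built per omics view; the fusion step then iteratively updates each view's network using the others until they converge on a shared structure.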

SNF-Based Multi-Omics Subtyping Process

Multi-View Clustering

Application Note: This encompasses a class of algorithms (e.g., co-regularized, deep learning-based) that perform clustering directly on multiple views of data, seeking a consensus partition. It is highly flexible and can handle non-linear relationships. For gene discovery, it provides a direct pipeline from integrated data to patient groups and their defining molecular features.

Quantitative Data Summary:

Table 3: Comparison of Multi-View Clustering Methods

| Method | Type | Key Strength | Consideration for NGS |

|---|---|---|---|

| Co-Regularized Spectral | Spectral | Theoretical guarantees, clear optimization | Prone to noise in high dimensions |

| Multi-Kernel Learning | Kernel | Handles diverse data structures | Kernel choice critical |

| Deep Embedded Clustering (DEC) | Deep Learning | Captures complex non-linear patterns | Requires larger sample sizes, tuning |

Experimental Protocol: Consensus Clustering with Regularization

- View-Specific Clustering: Generate base clusterings from each omics view.

- Consensus Optimization: Apply a multi-view clustering algorithm that minimizes the disagreement between view-specific clusterings while preserving data fidelity.

- Validation: Use internal indices (e.g., Davies-Bouldin Index) and stability measures across algorithms.

- Biomarker Extraction: For each consensus cluster, identify features that are both discriminative and consistent across views using statistical tests (e.g., ANOVA, Kruskal-Wallis) and consistency metrics.
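Disagreement between view-specific clusterings, which the consensus step seeks to minimize, can be quantified with a pairwise agreement measure such as the Rand index. The sketch below uses hypothetical cluster labels for six patients; the choice of the Rand index is an illustrative assumption, and adjusted variants are usually preferred when cluster sizes are unbalanced.

```python
from itertools import combinations

def rand_index(labels_a, labels_b):
    """Fraction of sample pairs on which two clusterings agree."""
    pairs = list(combinations(range(len(labels_a)), 2))
    agree = sum(
        (labels_a[i] == labels_a[j]) == (labels_b[i] == labels_b[j])
        for i, j in pairs
    )
    return agree / len(pairs)

# Hypothetical base clusterings of six patients from three omics views.
rna_clusters = [0, 0, 1, 1, 2, 2]
methylation_clusters = [1, 1, 0, 0, 2, 2]   # same partition, different label names
atac_clusters = [0, 0, 0, 1, 1, 1]

ri_rna_methylation = rand_index(rna_clusters, methylation_clusters)
ri_rna_atac = rand_index(rna_clusters, atac_clusters)
```

Because the index compares co-membership rather than label names, the RNA and methylation clusterings score as identical even though their cluster IDs differ.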

Multi-View Clustering Consensus Pathway

The Scientist's Toolkit

Table 4: Key Research Reagent Solutions for Computational NGS Integration

| Item | Function in Analysis | Example/Note |

|---|---|---|

| Normalized NGS Count Matrices | Primary input for all tools. Requires careful pre-processing (QC, normalization, batch correction). | RNA-seq: TPM or DESeq2 vst; Methylation: M-values; ATAC-seq: peak counts. |

| High-Performance Computing (HPC) Environment | Essential for running iterative algorithms on large matrices. | Cloud instances (AWS, GCP) or local clusters with sufficient RAM (>64GB recommended). |

| MOFA2 / mofapy2 Package | Implements the MOFA+ model for factor analysis. | Available via Bioconductor (R) and PyPI (Python). |

| SNFtool / snfpy Library | Provides functions for Similarity Network Fusion. | R SNFtool and Python snfpy are standard. |

| Multi-View Clustering Libraries | Implement various consensus clustering algorithms. | R: MultiAssayExperiment, mogsa; Python: mvlearn, scikit-multiview. |

| Spectral Clustering Solver | For partitioning similarity networks (SNF) or in multi-view algorithms. | arpack in R; sklearn.cluster.SpectralClustering in Python. |

| Pathway Analysis Database | For functional interpretation of discovered gene lists. | MSigDB, KEGG, Reactome used with tools like clusterProfiler (R). |

| Interactive Visualization Suite | To explore factors, networks, and clusters. | shiny (R), plotly, or UCSC Xena for cohort-level visualization. |

Application Notes: Integrating NGS Data for Novel Gene Discovery

The integration of diverse Next-Generation Sequencing (NGS) datasets (e.g., bulk/single-cell RNA-seq, ATAC-seq, WGS, epigenetics) presents a high-dimensional, multi-modal challenge. This note details the application of three advanced computational frameworks to distill biological signals and identify novel gene candidates.

Bayesian Methods provide a principled probabilistic framework for integrating prior knowledge (e.g., from pathway databases) with noisy NGS data. They are particularly valuable for quantifying uncertainty in gene-disease associations and modeling complex hierarchical structures in biological data.

Tensor Decomposition offers a natural mathematical structure for simultaneously analyzing multi-way data (e.g., genes × samples × experimental conditions × omics types). It can uncover latent patterns and interactions that are obscured in matrix-based analyses.

Graph Neural Networks (GNNs) operate directly on biological networks (protein-protein interaction, gene co-expression, knowledge graphs). They learn meaningful representations of genes by aggregating information from their network neighbors, enabling the prediction of novel gene functions in context.

Table 1: Comparative Summary of Key Methodological Approaches

| Approach | Core Strength | Typical NGS Data Input | Primary Output for Gene Discovery |

|---|---|---|---|

| Bayesian Models | Quantifies uncertainty, incorporates prior knowledge. | Gene expression counts, variant calls, prior probability distributions. | Posterior probabilities of gene-disease association, credible intervals. |

| Tensor Decomposition | Joint analysis of multi-modal, multi-condition data. | Expression matrices from multiple omics layers and conditions stacked into a tensor. | Latent components representing gene-programmes active across conditions/omics. |

| Graph Neural Networks | Learns from relational/network structure. | Gene expression data mapped onto a biological network (nodes=genes, edges=interactions). | Low-dimensional node embeddings for gene function prediction or prioritization. |

Experimental Protocols

Protocol 2.1: Bayesian Differential Expression & Pathway Integration

Objective: To identify differentially expressed genes (DEGs) with robust uncertainty estimates and prior pathway knowledge.

- Data Preparation: Generate a gene count matrix from RNA-seq data (e.g., using STAR/HTSeq). Compile a prior gene list from relevant pathway databases (e.g., KEGG, Reactome).

- Model Specification: Implement a Bayesian hierarchical model. Use a Negative Binomial likelihood for counts. Place a weakly informative prior (e.g., Cauchy) on log fold-changes. For genes in the prior pathway list, adjust the prior mean toward non-zero.

- Inference: Perform Markov Chain Monte Carlo (MCMC) sampling using tools like Stan, PyMC, or brms. Run 4 chains for 4000 iterations each.

- Diagnostics & Analysis: Check chain convergence (R-hat < 1.05). Calculate the posterior probability that the absolute log2 fold-change > 0.5. Genes with probability > 0.95 are high-confidence DEGs. Analyze the posterior distribution of pathway enrichment.
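Once posterior draws are available, the decision rule in the final step reduces to counting draws. In the sketch below the draws are simulated from normal distributions purely as stand-ins for pooled MCMC output; gene names, means, and spreads are hypothetical, and no sampler is actually run.

```python
import random

def prob_abs_lfc_exceeds(draws, threshold=0.5):
    """Fraction of posterior draws with |log2FC| above the threshold."""
    return sum(abs(d) > threshold for d in draws) / len(draws)

# Stand-in posterior draws of log2 fold-change (simulated, not from a real model).
rng = random.Random(0)
posterior_draws = {
    "XYZ": [rng.gauss(1.5, 0.3) for _ in range(4000)],
    "ABC": [rng.gauss(0.1, 0.3) for _ in range(4000)],
}

probabilities = {g: prob_abs_lfc_exceeds(d) for g, d in posterior_draws.items()}

# Protocol rule: posterior probability > 0.95 marks a high-confidence DEG.
high_confidence = [g for g, p in probabilities.items() if p > 0.95]
```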

Protocol 2.2: Multi-Omic Integration via Tensor Decomposition

Objective: To decompose a multi-omic data tensor into interpretable latent factors.

- Tensor Construction: Align samples across m omics assays (e.g., RNA-seq, ATAC-seq, methylation). For each assay, create a genes (or genomic regions) × samples matrix. Stack matrices to form a 3D tensor: Genes × Samples × Omics Assays.

- Preprocessing & Imputation: Log-transform and Z-score normalize each frontal slice (omics assay). Use tensor completion algorithms (e.g., via scikit-tensor) to impute any missing values.

- Decomposition: Apply Canonical Polyadic (CP) or Tucker decomposition using libraries (e.g., TensorLy). Determine the number of components (rank) via cross-validation or stability analysis.

- Interpretation: For each latent component, extract the gene, sample, and omics loadings. Genes with high absolute loading in a component define a multi-omic programme. Correlate sample loadings with phenotypes to identify biologically relevant programmes for downstream validation.
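Tensor construction and per-slice normalization can be sketched with nested lists before any decomposition library is involved. The values are hypothetical and assumed to be log-transformed already; Z-scoring each gene across samples within an assay is one reading of the normalization step, and the CP or Tucker decomposition itself is not shown.

```python
from statistics import mean, pstdev

def zscore_rows(matrix):
    """Z-score each row (gene) across samples; constant rows become zeros."""
    out = []
    for row in matrix:
        m, s = mean(row), pstdev(row)
        out.append([(v - m) / s if s > 0 else 0.0 for v in row])
    return out

def build_tensor(slices):
    """Stack genes x samples matrices into tensor[gene][sample][assay]."""
    normalized = [zscore_rows(s) for s in slices]
    n_genes, n_samples = len(slices[0]), len(slices[0][0])
    return [[[normalized[a][g][s] for a in range(len(slices))]
             for s in range(n_samples)]
            for g in range(n_genes)]

# Hypothetical matched matrices: 2 genes x 3 samples for two assays.
rna = [[1.0, 2.0, 3.0], [4.0, 4.0, 7.0]]
atac = [[10.0, 10.0, 10.0], [0.0, 5.0, 10.0]]

tensor = build_tensor([rna, atac])
shape = (len(tensor), len(tensor[0]), len(tensor[0][0]))
```

The nested list can be converted to an array type accepted by the chosen decomposition library; all slices must share the same gene and sample ordering for the stacking to be meaningful.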

Protocol 2.3: Gene Prioritization with Graph Neural Networks

Objective: To prioritize novel candidate genes for a phenotype using a biological knowledge graph.

- Graph Construction: Build a heterogeneous graph with nodes representing genes, diseases, and GO terms. Edges represent known relationships (e.g., PPI, gene-disease associations, gene-GO annotations). Integrate node features from NGS (e.g., mean expression, differential expression statistics).

- Model Training: Implement a Graph Convolutional Network (GCN) or Graph Attention Network (GAT) using PyTorch Geometric or DGL. Formulate task as semi-supervised node classification: a subset of genes are labeled (e.g., known disease-associated vs. non-associated).

- Training Loop: Split gene nodes into train/validation/test sets. Train the GNN to predict the gene label. Use cross-entropy loss and Adam optimizer.

- Prioritization: Apply the trained model to all unlabeled genes. Rank genes by the predicted probability of belonging to the disease-associated class. The top-ranked genes are novel candidates.
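The neighbor-aggregation idea underlying GCN-style models can be shown with a single untrained message-passing round. This is a deliberately simplified sketch: it uses mean aggregation with self-loops and no trainable weights or nonlinearity, whereas a real GCN or GAT layer learns those from labeled genes. The gene names, the one-dimensional feature, and the edges are hypothetical.

```python
def mean_aggregate(features, edges):
    """One message-passing round: average each node's features with its neighbors'."""
    neighbors = {node: {node} for node in features}   # self-loop for every node
    for u, v in edges:
        neighbors[u].add(v)
        neighbors[v].add(u)
    dim = len(next(iter(features.values())))
    return {
        node: [sum(features[n][k] for n in nbrs) / len(nbrs) for k in range(dim)]
        for node, nbrs in neighbors.items()
    }

# Hypothetical feature: 1.0 for known disease genes, 0.0 otherwise.
features = {"KNOWN1": [1.0], "KNOWN2": [1.0], "CAND": [0.0], "ISOLATED": [0.0]}
edges = [("KNOWN1", "CAND"), ("KNOWN2", "CAND")]

updated = mean_aggregate(features, edges)

# Rank previously unlabeled genes by their aggregated signal.
ranking = sorted(["CAND", "ISOLATED"], key=lambda g: updated[g][0], reverse=True)
```

The candidate connected to two known disease genes picks up signal from them, while the isolated gene does not, which is the intuition behind network-based prioritization.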

Diagrams

DOT Script for Figure 1: NGS Data Integration Workflow

Title: NGS Data Integration for Gene Discovery

DOT Script for Figure 2: GNN Message Passing on a Gene Network

Title: GNN-based Gene Function Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools & Resources for NGS Data Integration

| Item | Function/Description | Example Tool/Resource |

|---|---|---|

| Bayesian Inference Engine | Software for specifying and sampling from probabilistic models. | Stan (with CmdStanPy/brms), PyMC3 |

| Tensor Computation Library | Enables construction, decomposition, and manipulation of multi-dimensional arrays. | TensorLy (Python), rTensor (R) |

| Deep Graph Learning Framework | Provides optimized primitives for building and training GNNs. | PyTorch Geometric, Deep Graph Library (DGL) |

| Biological Network Database | Curated source of gene/protein interactions and functional associations. | STRING, BioGRID, HumanNet |

| Multi-omic Data Harmonizer | Tools for normalizing and aligning different genomic data types. | MOFA2, mixOmics, Seurat (for single-cell) |

| High-Performance Computing (HPC) / Cloud Resource | Necessary computational power for large-scale Bayesian inference, tensor operations, and GNN training. | SLURM cluster, Google Cloud AI Platform, AWS SageMaker |

Within a thesis on NGS data integration for novel gene discovery, the primary challenge is distilling hundreds of candidate genetic variants into a shortlist of high-probability disease genes. This document outlines a robust, two-tiered computational strategy that combines Functional Annotation Scoring (FAS) with Network Propagation (NP) to achieve this prioritization. The method is designed to integrate diverse genomic data types (e.g., WGS, RNA-seq) and biological knowledge, moving beyond simple variant filtering to a systems-level analysis.

Core Concept: Functional annotation provides a direct, gene-centric view of biological relevance, while network propagation contextualizes genes within the complex interactome, identifying genes that are functionally related to known disease mechanisms even if their individual variant scores are modest.

Table 1: Functional Annotation Sources & Scoring Metrics

| Annotation Category | Data Source (Example) | Priority Score Weight | Interpretation |

|---|---|---|---|

| Variant Pathogenicity | Combined Annotation Dependent Depletion (CADD), REVEL | 0.30 | Higher score = more deleterious predicted impact. |

| Gene Constraint | pLI (gnomAD), LOEUF | 0.15 | Low LOEUF / high pLI = intolerance to variation, suggesting essentiality. |

| Expression Relevance | GTEx (Tissue-specific TPM), Human Protein Atlas | 0.20 | High expression in disease-relevant tissues increases priority. |

| Functional Evidence | OMIM, ClinGen, Mouse KO phenotype (MGI) | 0.25 | Known disease association or model organism phenotype provides strong support. |

| Literature & Pathway | Gene Ontology (GO), Reactome, PubMed co-citation | 0.10 | Enrichment in relevant biological processes or pathways. |

Table 2: Network Propagation Parameters & Outcomes

| Parameter | Typical Setting | Impact on Results |

|---|---|---|

| Network | Human Protein Reference Database (HPRD), STRING, HuRI | Determines biological relationships (PPI, signaling, co-expression). |

| Restart Probability | 0.7 - 0.9 | Higher value keeps propagation closer to seed genes; lower value explores network more broadly. |

| Seed Genes | High-confidence FAS genes, known disease genes from OMIM | Quality and relevance of seeds directly dictates propagation output. |

| Propagation Output | "Heat" score per gene (0-1) | Genes with high scores are topologically central to the seed module. |

Experimental Protocols

Protocol 1: Functional Annotation Scoring Pipeline

- Input: List of candidate genes from NGS variant calling (e.g., from rare variant burden test).

- Data Aggregation: For each gene, programmatically query APIs/databases (Ensembl, gnomAD, GTEx) to collect metrics listed in Table 1.

- Normalization: Min-Max normalize each metric to a [0,1] scale per gene list.

- Composite Scoring: Calculate the weighted sum: FAS_gene = Σ (Normalized_Metric_i * Weight_i).

- Ranking: Sort genes by descending FAS score. Select top 20% as high-priority seeds for Protocol 2.
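The normalization and composite scoring steps can be sketched using the weights from Table 1. The gene names and raw metric values are hypothetical, and each metric is assumed to be oriented so that larger values mean stronger support (for example, using pLI rather than LOEUF for constraint).

```python
# Weights taken from Table 1; the metric key names are illustrative labels.
WEIGHTS = {
    "pathogenicity": 0.30,
    "constraint": 0.15,
    "expression": 0.20,
    "functional_evidence": 0.25,
    "literature": 0.10,
}

def min_max(values):
    """Scale values to [0, 1] within the gene list; constant lists map to 0."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) if hi > lo else 0.0 for v in values]

def fas_scores(metrics):
    """Weighted sum of min-max normalized metrics per gene."""
    genes = list(metrics)
    scores = {g: 0.0 for g in genes}
    for name, weight in WEIGHTS.items():
        normalized = min_max([metrics[g][name] for g in genes])
        for g, v in zip(genes, normalized):
            scores[g] += weight * v
    return scores

# Hypothetical raw metrics for three candidate genes.
metrics = {
    "XYZ": {"pathogenicity": 28.0, "constraint": 0.99, "expression": 40.0,
            "functional_evidence": 1.0, "literature": 3.0},
    "ABC": {"pathogenicity": 12.0, "constraint": 0.10, "expression": 5.0,
            "functional_evidence": 0.0, "literature": 1.0},
    "DEF": {"pathogenicity": 20.0, "constraint": 0.55, "expression": 40.0,
            "functional_evidence": 0.0, "literature": 3.0},
}

scores = fas_scores(metrics)
ranking = sorted(scores, key=scores.get, reverse=True)
```

Because normalization is done per gene list, scores are relative to the candidates being compared and are not transferable between analyses.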

Protocol 2: Network Propagation Analysis

- Network Preparation: Download a comprehensive, high-quality PPI network (e.g., from STRING, confidence score >700). Represent as an adjacency matrix.

- Seed Definition: Input the high-priority FAS genes and a set of known disease-related genes (positive controls) as seed nodes. Assign initial probability of 1.0 to seeds, 0 to all other nodes.

- Random Walk with Restart (RWR) Execution:

a. Define the transition probability matrix W by column-normalizing the adjacency matrix.

b. Initialize the probability vector p₀ for all nodes.

c. Iterate the equation: p_{t+1} = (1 - r) * W * p_t + r * p₀, where r is the restart probability (e.g., 0.8).

d. Run until convergence (||p_{t+1} - p_t|| < 1e-10).

- Output Interpretation: The steady-state probability vector p_∞ contains the "heat" for each gene. Rank all genes by this score. Prioritize genes with high network heat, even if their FAS score was moderate.
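The RWR iteration of Protocol 2 can be sketched in plain Python as below. Several choices here are assumptions rather than part of the protocol: the network is an unweighted, undirected adjacency dictionary, the seed vector p₀ is rescaled so that it sums to 1, and convergence is measured with the L1 norm. The function name and the toy network are hypothetical.

```python
from typing import Dict, Set


def random_walk_with_restart(
    adjacency: Dict[str, Set[str]],
    seeds: Set[str],
    r: float = 0.8,
    tol: float = 1e-10,
    max_iter: int = 10_000,
) -> Dict[str, float]:
    """Iterate p_{t+1} = (1 - r) * W * p_t + r * p0 until the L1 change < tol.

    W is the column-normalized adjacency matrix, so node j passes an equal
    share of its probability to each neighbour. Every neighbour must also be
    a key of `adjacency`. Nodes without neighbours pass nothing on, which
    loses probability mass; remove isolated nodes beforehand.
    """
    nodes = sorted(adjacency)
    seed_nodes = [n for n in nodes if n in seeds]
    if not seed_nodes:
        raise ValueError("no seed gene is present in the network")

    # Assumption: seeds share the restart mass equally so that p0 sums to 1.
    p0 = {n: 0.0 for n in nodes}
    for n in seed_nodes:
        p0[n] = 1.0 / len(seed_nodes)

    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: r * p0[n] for n in nodes}  # restart term
        for j in nodes:
            neighbours = adjacency[j]
            if not neighbours:
                continue
            share = (1.0 - r) * p[j] / len(neighbours)  # column j of W
            for i in neighbours:
                nxt[i] += share
        change = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if change < tol:
            break
    return p


# Toy path network A - B - C - D with a single seed.
toy_network = {"A": {"B"}, "B": {"A", "C"}, "C": {"B", "D"}, "D": {"C"}}
heat = random_walk_with_restart(toy_network, seeds={"A"})
for gene in sorted(heat, key=heat.get, reverse=True):
    print(gene, round(heat[gene], 4))
```

For a genome-scale STRING network a sparse-matrix implementation would be more practical; the dictionary form is used here only to keep the update rule visible.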

Diagram Title: Two-Tier Gene Prioritization Workflow

Diagram Title: Network Propagation via Random Walk

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Prioritization Pipeline |

|---|---|

| Ensembl VEP (Variant Effect Predictor) | Annotates variant consequences (missense, LOF) on genes, transcripts, and regulatory regions. Critical first step. |

| CADD & REVEL Scores | Pre-computed, integrative metrics predicting the deleteriousness of genetic variants. Used in FAS. |

| gnomAD Browser | Provides population allele frequencies and gene constraint metrics (pLI, LOEUF) to filter common and tolerant variants. |

| STRING/BIANA Database | Sources of curated and predicted protein-protein interaction data for constructing the biological network. |

| Cytoscape with CytoRWR Plugin | GUI-based platform for network visualization and running/visualizing RWR propagation results. |

| R/Bioconductor (igraph, dnet) | Programming environment for scripting the entire prioritization pipeline, especially the RWR algorithm. |

| Google Colab / Jupyter Notebook | Platform for creating reproducible, documented computational protocols shared across research teams. |

Within the framework of a thesis on NGS data integration for novel gene discovery, this application note details a systematic protocol for identifying novel oncogenes from large-scale, heterogeneous pan-cancer next-generation sequencing (NGS) datasets. The approach integrates genomic, transcriptomic, and clinical data to prioritize high-confidence candidate genes for functional validation in drug development pipelines.

Core Data Analysis Workflow

Data Acquisition & Preprocessing

The initial phase involves collating multi-modal NGS data from public repositories and institutional sources. Key datasets include whole-exome/genome sequencing (WES/WGS), RNA-Seq, and single-nucleotide variant (SNV) calls.

Table 1: Representative Pan-Cancer NGS Data Sources (Current as of 2024)

| Data Source | Data Type | Approx. Sample Count | Key Use |

|---|---|---|---|

| The Cancer Genome Atlas (TCGA) | WES, RNA-Seq, Clinical | >11,000 patients across 33 cancers | Foundational somatic variant & expression analysis |

| International Cancer Genome Consortium (ICGC) | WGS, WES | ~25,000 genomes | Discovery of low-frequency variants |

| Genomics Evidence Neoplasia Information Exchange (GENIE) | Targeted Panel Seq | >150,000 tumors | Real-world clinical genomic data |

| Cancer Cell Line Encyclopedia (CCLE) | WES, RNA-Seq | ~1,000 cell lines | In vitro experimental model data |

Integrated Bioinformatics Pipeline

A multi-step computational pipeline is implemented to filter and prioritize candidate oncogenes.

Table 2: Key Filtering Criteria for Oncogene Identification

| Filtering Step | Metric/Threshold | Rationale |

|---|---|---|

| Mutation Significance | Recurrence (≥5 cases in cohort); Missense/Truncating Mutations | Identifies genes mutated more frequently than background. |

| Functional Impact | Combined Annotation Dependent Depletion (CADD) score >20 | Predicts deleteriousness of genetic variants. |

| Expression Outliers | Z-score >2 in ≥10% of tumor samples per cancer type | Identifies genes with aberrant overexpression. |

| Copy Number Gain | GISTIC 2.0 q-value <0.05; Amplification frequency >5% | Highlights genomic regions of significant amplification. |

| Correlation with Proliferation | Positive correlation (Pearson r >0.3) with Ki-67/MKI67 expression | Links gene to a core cancer phenotype. |
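As one illustration of the criteria in Table 2, the expression-outlier filter might be sketched as follows. The table does not say which distribution the Z-score is computed against, so this sketch assumes a separate reference cohort (for example matched normal samples) supplies the mean and standard deviation. The function name and the toy values are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def is_expression_outlier(
    tumor: Sequence[float],
    reference: Sequence[float],
    z_cutoff: float = 2.0,
    min_fraction: float = 0.10,
) -> bool:
    """True if at least `min_fraction` of tumor samples have Z > `z_cutoff`.

    Z-scores are computed against the reference cohort (an assumption).
    """
    mu = mean(reference)
    sigma = stdev(reference)
    if sigma == 0:
        return False  # no spread in the reference, Z-score undefined
    n_high = sum(1 for value in tumor if (value - mu) / sigma > z_cutoff)
    return n_high / len(tumor) >= min_fraction


# Toy expression values for one gene in one cancer type.
reference_expr = [1.0, 2.0, 3.0, 4.0, 5.0]
tumor_expr = [3.0] * 9 + [10.0]  # 1 of 10 samples is strongly overexpressed
print(is_expression_outlier(tumor_expr, reference_expr))
```

The same pattern applies per cancer type: call the function once for each gene and cohort, then intersect the result with the other filters in the table.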

Diagram Title: Oncogene Discovery Bioinformatics Pipeline

Experimental Protocols for Functional Validation

Protocol: CRISPR-Cas9 Knockout Proliferation Assay

Objective: To assess the dependency of cancer cell lines on a prioritized candidate gene.

Materials:

- Cancer cell lines (e.g., from CCLE) harboring candidate gene amplification/mutation.

- Lentiviral vectors for sgRNA delivery (e.g., lentiCRISPRv2).

- Puromycin for selection.

- CellTiter-Glo Luminescent Cell Viability Assay kit.

Methodology:

- Design sgRNAs: Design three unique sgRNAs targeting exons of the candidate gene using the Broad Institute GPP Portal.

- Lentivirus Production: Co-transfect HEK293T cells with lentiCRISPRv2-sgRNA construct and packaging plasmids (psPAX2, pMD2.G). Harvest virus supernatant at 48 and 72 hours.

- Cell Line Transduction: Transduce target cancer cell lines with viral supernatant plus polybrene (8 µg/mL). Select with puromycin (2 µg/mL) for 7 days.

- Proliferation Assay: Seed 500 cells/well in 96-well plates. Measure viability using CellTiter-Glo reagent at days 0, 3, 6, and 9 post-seeding. Normalize luminescence to day 0.

- Analysis: Calculate relative proliferation rate compared to non-targeting control (NTC) sgRNA. A significant decrease (>50%) indicates oncogene dependency.
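The normalization and comparison in the Analysis step can be sketched as below. This is a simplified illustration under stated assumptions: each condition is represented by one mean luminescence value per time point, the comparison is made at day 9, and statistical testing across replicates is left out. The function names and readings are hypothetical.

```python
from typing import Dict


def fold_growth(luminescence_by_day: Dict[int, float]) -> Dict[int, float]:
    """Normalize each reading to the day 0 reading of the same condition."""
    baseline = luminescence_by_day[0]
    return {day: value / baseline for day, value in luminescence_by_day.items()}


def relative_proliferation(
    sgrna: Dict[int, float], ntc: Dict[int, float], day: int = 9
) -> float:
    """Fold growth of the knockout divided by fold growth of the NTC control."""
    return fold_growth(sgrna)[day] / fold_growth(ntc)[day]


def is_dependency(
    sgrna: Dict[int, float],
    ntc: Dict[int, float],
    day: int = 9,
    max_relative: float = 0.5,
) -> bool:
    """True if proliferation drops by more than 50% relative to NTC."""
    return relative_proliferation(sgrna, ntc, day) < max_relative


# Toy luminescence readings (arbitrary units) at days 0, 3, 6, and 9.
ntc_reads = {0: 1000.0, 3: 4000.0, 6: 16000.0, 9: 64000.0}
sg1_reads = {0: 1000.0, 3: 2000.0, 6: 4000.0, 9: 8000.0}
print(relative_proliferation(sg1_reads, ntc_reads))
print(is_dependency(sg1_reads, ntc_reads))
```

In practice the call would be repeated for each of the three sgRNAs, and a dependency would normally be accepted only if the effect is consistent across guides and replicates.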

Protocol: In Vivo Tumorigenesis Assay

Objective: To evaluate the oncogenic potential of candidate gene overexpression in vivo.

Materials:

- Immunocompromised mice (e.g., NSG).

- Immortalized human epithelial cells (e.g., HBEC).

- Lentiviral construct for candidate gene overexpression.

- Matrigel for subcutaneous injections.

- Calipers for tumor measurement.

Methodology:

- Generate Stable Overexpression Line: Transduce HBEC cells with lentivirus overexpressing the candidate gene or empty vector control. Select with appropriate antibiotic.

- Xenograft Formation: Resuspend 1x10^6 cells in 100 µL of 1:1 PBS:Matrigel. Inject subcutaneously into flanks of 8-week-old NSG mice (n=8 per group).

- Tumor Monitoring: Measure tumor dimensions twice weekly using calipers. Calculate volume: (length x width^2) / 2.

- Endpoint: Euthanize mice at 6 weeks or when tumor burden reaches IACUC limit. Harvest tumors for weight measurement and molecular analysis (IHC, Western blot).

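- Analysis: Compare final tumor volume/weight between groups using a two-tailed Student's t-test. Tumor incidence is a proportion and latency is a time-to-event measure, so compare them with Fisher's exact test and a log-rank test, respectively.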
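The volume formula from the Tumor Monitoring step and the test statistic behind the Student's t-test can be sketched as follows. The sketch assumes caliper measurements in millimetres and equal variances between groups, and it stops at the t statistic because the standard library has no t distribution for the p-value. Function names and measurements are hypothetical.

```python
from math import sqrt
from statistics import mean, variance
from typing import Sequence


def tumor_volume_mm3(length_mm: float, width_mm: float) -> float:
    """Volume estimate from caliper measurements: (length x width^2) / 2."""
    return (length_mm * width_mm ** 2) / 2.0


def student_t_statistic(a: Sequence[float], b: Sequence[float]) -> float:
    """Two-sample Student's t statistic with pooled variance."""
    n1, n2 = len(a), len(b)
    pooled = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return (mean(a) - mean(b)) / sqrt(pooled * (1.0 / n1 + 1.0 / n2))


# Toy endpoint measurements as (length, width) pairs in mm.
overexpression = [(12.0, 8.0), (11.0, 9.0), (13.0, 8.5), (12.5, 9.5)]
empty_vector = [(5.0, 4.0), (6.0, 4.5), (5.5, 4.0), (6.5, 5.0)]

vol_oe = [tumor_volume_mm3(length, width) for length, width in overexpression]
vol_ev = [tumor_volume_mm3(length, width) for length, width in empty_vector]
print(round(mean(vol_oe), 1), round(mean(vol_ev), 1))
print(round(student_t_statistic(vol_oe, vol_ev), 2))
```

The degrees of freedom for this statistic are n1 + n2 - 2, which gives 14 for the n=8 per group design in the protocol.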

Diagram Title: Functional Validation Decision Flowchart

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Oncogene Discovery & Validation

| Reagent/Tool | Supplier Examples | Function in Protocol |

|---|---|---|

| lentiCRISPRv2 Vector | Addgene (#52961) | Lentiviral backbone for delivery of sgRNA and Cas9 for knockout studies. |

| CellTiter-Glo 2.0 | Promega (G9242) | Luminescent assay for quantifying viable cells based on ATP content. |

| Matrigel Basement Membrane Matrix | Corning (356231) | Provides a 3D substrate for in vivo tumor cell engraftment and growth. |

| Puromycin Dihydrochloride | Thermo Fisher (A1113803) | Selection antibiotic for cells transduced with puromycin-resistant vectors. |

| TruSeq RNA Exome Kit | Illumina (20020189) | Target enrichment for exome sequencing from RNA, linking variants to expression. |

| CAS9 Protein (HiFi) | Integrated DNA Technologies | High-fidelity Cas9 protein for precise in vitro editing prior to screening. |

| Human Phospho-Kinase Array | R&D Systems (ARY003C) | Multiplex immunoblotting to identify signaling pathways activated by candidate oncogene. |

Pathway Analysis & Therapeutic Implications

Following validation, mapping the candidate oncogene into known signaling networks is crucial. A commonly implicated pathway is the Receptor Tyrosine Kinase (RTK)/MAPK axis.

Diagram Title: Candidate Oncogene in RTK/MAPK/PI3K Pathway

Conclusion: This integrated protocol, from pan-cancer data mining to in vivo validation, provides a robust framework for discovering novel oncogenes. Successfully validated genes become high-priority targets for the development of small-molecule inhibitors or antibody-based therapies, directly feeding into translational drug development pipelines.

This application note, framed within a thesis on NGS data integration for novel gene discovery, details the practical implementation of two major software suites, OmicSoft (by Qiagen) and Galaxy. The focus is on constructing a reproducible, end-to-end analysis pipeline for RNA-Seq data to identify differentially expressed genes and prioritize candidates for functional validation.

Table 1: Core Feature Comparison of OmicSoft and Galaxy

| Feature | OmicSoft Suite | Galaxy Platform |

|---|---|---|

| Primary Model | Commercial, desktop/client-server. | Open-source, web-based. |

| Core Strength | Curated multi-omics databases (OmicSoft Land) & integrated analysis. | Extensible, reproducible workflow system with vast tool repository. |

| Data Management | Proprietary structured project and array server. | History-based data management with data libraries. |

| Workflow Creation | Visual workflow designer (OWS) with predefined protocols. | Graphical workflow editor from tool history. |

| Primary User Base | Biopharma, translational researchers. | Academia, core facilities, individual researchers. |

| Reproducibility | Project snapshots and protocol saves. | Publicly shareable histories, workflows, and interactive pages. |

| Key for NGS Integration | Built-in normalization across 1000s of public/private experiments. | Unified interface for 1000+ bioinformatics tools. |

Application Note: RNA-Seq Analysis for Novel Gene Discovery

Objective: To identify and prioritize novel gene candidates from tumor vs. normal RNA-Seq data.

Overall Experimental Workflow:

Diagram Title: End-to-End RNA-Seq Analysis Workflow for Gene Discovery

Protocol 1: Analysis Using OmicSoft Suite

Methodology:

Data Ingestion & Curation:

- Create a new project in Array Studio.

- Import paired-end FASTQ files via File | Import | NGS Data. Associate metadata (e.g., Tumor/Normal).

- Alternatively, query the OmicSoft Land database to download curated public datasets relevant to your disease context for integrative analysis.

Alignment and Quantification:

- Navigate to NGS | RNA-Seq | Align and Quantify.

- Select the FASTQ data. Choose the Spliced Transcripts Alignment to a Reference (STAR) aligner and an appropriate genome build (e.g., hg38).

- Set gene/transcript model to Ensembl or RefSeq. Execute the pipeline.

Differential Expression (DE) Analysis:

- Select the resulting Gene Count object.

- Go to Analysis | Statistical Analysis | Two Group Comparison.

- Define the contrast (Tumor vs. Normal). Select DESeq2 or edgeR as the statistical test.

- Apply a significance filter (e.g., FDR < 0.05, |Fold Change| > 2). Export the DE list.
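Outside the GUI, the significance filter from the DE step (FDR < 0.05, |Fold Change| > 2) can be sketched as below. The sketch assumes the exported table uses DESeq2-style columns, `log2FoldChange` and `padj`, so a two-fold change corresponds to |log2FC| > 1. Rows with a missing adjusted p-value are skipped. The function name and the example rows are hypothetical.

```python
import math
from typing import Dict, List, Optional, Union

Row = Dict[str, Union[str, float, None]]


def filter_de_genes(
    rows: List[Row], fdr_cutoff: float = 0.05, fold_cutoff: float = 2.0
) -> List[str]:
    """Return genes passing both the FDR and the absolute fold-change filter."""
    log2_cutoff = math.log2(fold_cutoff)  # fold change of 2 -> log2FC of 1
    kept: List[str] = []
    for row in rows:
        padj: Optional[float] = row["padj"]
        if padj is None:
            continue  # no adjusted p-value reported for this gene
        if padj < fdr_cutoff and abs(row["log2FoldChange"]) > log2_cutoff:
            kept.append(row["gene"])
    return kept


# Toy DE table with hypothetical gene identifiers.
de_table = [
    {"gene": "G1", "log2FoldChange": 2.0, "padj": 0.001},
    {"gene": "G2", "log2FoldChange": -1.5, "padj": 0.01},
    {"gene": "G3", "log2FoldChange": 0.5, "padj": 0.001},
    {"gene": "G4", "log2FoldChange": 3.0, "padj": 0.2},
    {"gene": "G5", "log2FoldChange": 3.0, "padj": None},
]
print(filter_de_genes(de_table))
```

Taking the absolute value keeps both up- and down-regulated genes; for oncogene discovery one might restrict the filter to positive log2 fold changes instead.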

Integration and Prioritization:

- Use the Pathway Studio module (or built-in enrichment tools). Load the DE gene list.

- Perform pathway enrichment (GO, KEGG), and overlay results with potential mutation (e.g., TCGA) or protein interaction data from Land.

- Filter for genes in significantly dysregulated pathways with low prior characterization; these are the novel candidates.

Protocol 2: Analysis Using Galaxy Platform

Methodology:

Data Upload & QC:

- Upload FASTQ files via Get Data | Upload File from your computer or via FTP.

- Run FastQC (Toolbox | NGS: QC and manipulation) to assess read quality.

- Use Trimmomatic or fastp to trim adapters and low-quality bases.

Alignment and Quantification:

- Use RNA STAR (NGS: RNA Analysis) to align trimmed reads to the human reference genome (e.g., hg38).

- Run featureCounts (NGS: RNA Analysis) or Salmon to generate gene-level counts, using a GTF annotation file.

Differential Expression Analysis:

- Use the DESeq2 tool (Transcriptomics Analysis). Input the count matrix and a sample condition file.

- Define the factor and condition (e.g., condition: Tumor, Normal). Execute.