Mastering GBLUP: A Practical Guide to Parameter Tuning Across Trait Heritabilities for Genomic Prediction

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on tuning Genomic Best Linear Unbiased Prediction (GBLUP) parameters for traits with varying heritabilities. We explore the foundational relationship between heritability and genomic models, detail step-by-step methodological approaches for parameter optimization, address common computational and statistical challenges, and validate strategies through comparative analysis with alternative methods. The guide synthesizes current best practices to enhance the accuracy and reliability of genomic predictions in complex trait analysis and precision medicine initiatives.

Understanding the Core: How Trait Heritability Fundamentally Shapes GBLUP Model Performance

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My GBLUP model training fails with a "Matrix is not positive definite" error when using the genomic relationship matrix (G). What should I do? A1: This error indicates that G is singular or ill-conditioned. Centering the genotypes removes one degree of freedom, so G has rank at most n − 1 even with dense markers. The problem becomes worse when the number of markers (p) is less than the number of individuals (n) or when markers are highly correlated. Solutions include:

- Add a Small Ridge Parameter: Modify G to G* = G + δI, where δ is a small value (e.g., 0.01). This stabilizes the matrix for inversion. This δ is a numerical constant and is distinct from the variance ratio λ used elsewhere in this guide.

- Quality Control (QC): Re-check your genotype data. Remove markers with high missing rates (>10%) or low minor allele frequency (MAF < 0.01). Consider pruning for high linkage disequilibrium (LD).

- Use a Different G-Matrix Construction Method: If using the first method described by VanRaden (2008), try the second method, which can be more stable in certain population structures.
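The ridge adjustment from the first bullet can be sketched as follows. This is a minimal illustration, not a prescribed implementation: the function name `stabilize_grm`, the toy genotypes, and the simple marker-count scaling of G are assumptions made for the example.

```python
import numpy as np

def stabilize_grm(G, delta=0.01):
    """Add a small ridge to G and test whether a Cholesky factorization succeeds.

    `delta` is a hypothetical default; choose it for your own data.
    """
    G_star = G + delta * np.eye(G.shape[0])
    try:
        np.linalg.cholesky(G_star)  # succeeds only for positive definite input
        ok = True
    except np.linalg.LinAlgError:
        ok = False
    return G_star, ok

# Toy data: 6 individuals, 4 markers, so G cannot have full rank.
rng = np.random.default_rng(0)
X = rng.integers(0, 3, size=(6, 4)).astype(float)
M = X - X.mean(axis=0)          # column-centered genotypes
G = M @ M.T / X.shape[1]        # simple scaling, for illustration only

min_eig_before = np.linalg.eigvalsh(G).min()
G_star, ok = stabilize_grm(G, delta=0.01)
min_eig_after = np.linalg.eigvalsh(G_star).min()
```

Comparing `min_eig_before` with `min_eig_after` shows how the ridge lifts the smallest eigenvalue away from zero while leaving the off-diagonal relationships untouched.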

Q2: The estimated genomic heritability from GBLUP is vastly different from the pedigree-based estimate. Is this a bug? A2: Not necessarily. Discrepancies are informative and often stem from biological or methodological factors.

- Genetic Architecture: GBLUP captures only the additive genetic variance tagged by your marker panel. If important causal variants are not in high LD with your markers, the genomic heritability will be underestimated.

- Population Structure: GBLUP estimates are specific to the population used to build G. Ensure your training and validation sets are from the same population.

- Marker Density: Low-density panels may not capture all trait-relevant genomic segments. Check if increasing marker density changes the estimate.

- Quality of Pedigree Data: Pedigree errors can inflate traditional heritability estimates.

Q3: How do I tune the GBLUP model parameters for traits with different heritabilities (e.g., low h² < 0.2 vs. high h² > 0.5)? A3: Tuning is crucial for prediction accuracy. The core parameter is the ratio of residual to genetic variance (λ = σ²e/σ²g = (1-h²)/h²).

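- Low Heritability Traits (h² < 0.2): λ is large. Use stronger regularization. Consider:

  - Increasing the weight on the residual variance.

  - Using a Bayesian approach (e.g., BayesA/B/C) that allows for marker-specific variances. These models help most when some loci have comparatively large effects; for a highly polygenic trait with many small effects, GBLUP's equal-variance assumption is usually adequate.

  - Pre-selecting markers via GWAS or feature selection before GBLUP, with the selection performed inside the training set only so that no validation information leaks into the model.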

- High Heritability Traits (h² > 0.5): λ is small. GBLUP performs very well. Ensure your G-matrix accurately reflects relationships. Parameter tuning is less critical, but cross-validation remains essential.
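As a quick numerical check of the relationship λ = (1 − h²)/h², the sketch below converts a heritability into the variance ratio. The function name `variance_ratio` is an assumption for illustration.

```python
def variance_ratio(h2):
    """Return lambda = sigma2_e / sigma2_g = (1 - h2) / h2 for 0 < h2 < 1."""
    if not 0.0 < h2 < 1.0:
        raise ValueError("h2 must lie strictly between 0 and 1")
    return (1.0 - h2) / h2

# Low, moderate, and high heritability examples
ratios = {h2: variance_ratio(h2) for h2 in (0.1, 0.3, 0.5, 0.7)}
```

The ratio falls steeply as h² rises (roughly 9 at h² = 0.1 versus 1 at h² = 0.5), which is why low-heritability traits are shrunk so much more strongly.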

Q4: What is the recommended experimental workflow for validating GBLUP model performance in a new population? A4: A robust validation protocol is essential for credible research.

- Data Partitioning: Randomly split your phenotyped and genotyped population into a Training Set (~70-80%) and a Validation Set (~20-30%). Ensure families are not split across sets to avoid biased accuracy.

- Model Training: Fit the GBLUP model only on the Training Set to estimate marker effects or breeding values.

- Prediction: Use the trained model to predict the genetic merit of individuals in the Validation Set.

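- Accuracy Assessment: Correlate the GBLUP-predicted values with the observed phenotypes (or corrected phenotypes) in the Validation Set. This correlation is the predictive ability (r). Because phenotypes contain environmental noise, dividing r by the square root of heritability (r / √h²) gives an estimate of prediction accuracy, the correlation between predicted and true genetic values. The square of that accuracy approximates the proportion of genetic variance captured.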

Q5: Can GBLUP handle non-additive genetic effects (dominance, epistasis)? A5: The standard GBLUP model is strictly additive. To model non-additive effects, you must extend the framework.

- Dominance: Construct a separate dominance relationship matrix (D) using specific coding for heterozygous genotypes. The model becomes: y = 1μ + Zg + Zd + e, where g and d are additive and dominance effects.

- Epistasis: Include an epistatic relationship matrix, often constructed as the Hadamard product (element-wise multiplication) of G with itself (G#G). This rapidly increases model complexity and computational demand.
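The two extensions can be sketched as below. The dominance coding used here (−2q², 2pq, −2p² for genotypes 2, 1, 0) is one commonly used genotypic parameterization and is an assumption of this example, since the text above does not specify a coding; the function names are likewise hypothetical.

```python
import numpy as np

def dominance_matrix(X):
    """Dominance relationship matrix from 0/1/2 allele counts (n x m).

    Assumed coding: genotype 2 -> -2q^2, genotype 1 -> 2pq, genotype 0 -> -2p^2,
    where p is the frequency of the counted allele and q = 1 - p.
    """
    X = np.asarray(X, dtype=float)
    p = X.mean(axis=0) / 2.0
    q = 1.0 - p
    H = np.where(X == 2, -2 * q**2, np.where(X == 1, 2 * p * q, -2 * p**2))
    return H @ H.T / np.sum((2 * p * q) ** 2)

def epistatic_matrix(G):
    """Additive-by-additive relationship matrix as the Hadamard product G # G."""
    return G * G  # element-wise product in NumPy
```

Each additional relationship matrix adds a variance component to estimate, so in practice these terms are usually introduced one at a time and compared by cross-validation.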

Key Data Tables

Table 1: Impact of Heritability (h²) on GBLUP Parameter Tuning & Performance

| Heritability (h²) Category | Variance Ratio (λ) | Recommended Method | Key Tuning Focus | Expected Prediction Accuracy* |

|---|---|---|---|---|

| Very Low (0.0 – 0.1) | High (>9) | Bayesian Methods (BayesR), Feature Selection | Strong shrinkage, prior distributions | Low (0.1 - 0.3) |

| Low (0.1 – 0.3) | Medium-High (2.3 – 9) | GBLUP with optimized λ, rrBLUP | λ optimization, quality of G-matrix | Moderate (0.3 - 0.5) |

| Moderate (0.3 – 0.5) | Medium (1 – 2.3) | Standard GBLUP | Training population size, relationship to validation set | Good (0.5 - 0.65) |

| High (> 0.5) | Low (<1) | Standard GBLUP, Single-step GBLUP | Marker density, genotype quality | High (0.65 - 0.9) |

*Accuracy ranges are illustrative and highly dependent on population size, structure, and genetic architecture.

Table 2: GBLUP Model Components and Their Definitions

| Component | Symbol | Formula/Description | Role in the Model |
|---|---|---|---|
| Phenotype Vector | y | y = [y₁, y₂, ..., yₙ]ᵀ | Observed trait values for n individuals. |
| Mean | μ | Scalar intercept | Overall population mean. |
| Incidence Matrix | Z | Design matrix linking random effects to phenotypes. | Typically an identity matrix for GBLUP. |
| Additive Genetic Effects | g | g ~ N(0, Gσ²_g) | Random effects following a multivariate normal distribution with covariance G. |
| Genomic Relationship Matrix | G | (MMᵀ) / [2∑p_j(1-p_j)] (VanRaden Method 1) | M is the centered genotype matrix, p_j is the allele frequency at marker j. Captures realized genetic similarity. |
| Residual Error | e | e ~ N(0, Iσ²_e) | Random, uncorrelated environmental noise and measurement error. |
| Variance Ratio | λ | λ = σ²_e / σ²_g = (1-h²)/h² | Critical parameter determining the shrinkage of marker effects. |

Experimental Protocols

Protocol 1: Constructing the Genomic Relationship Matrix (G)

Objective: To compute the G matrix as per VanRaden's first method.

Materials: Genotype data in 0,1,2 format (allele counts) for n individuals and p biallelic SNP markers.

Steps:

- Create Genotype Matrix X: Dimensions n x p, where element X_ij ∈ {0,1,2}.

- Calculate Allele Frequencies: For each marker j, compute the frequency of the counted allele: p_j = sum(X_column_j) / (2*n).

- Center the Matrix: Create matrix M, where M_ij = X_ij - 2p_j. This centers on the current population mean.

- Compute G: G = (M * Mᵀ) / [2 * sum_over_j( p_j * (1-p_j) )]. The denominator scales G to be analogous to the numerator relationship matrix from pedigree.
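The four steps of this protocol translate almost line for line into NumPy. The sketch below assumes complete genotype data (no missing calls) and uses the hypothetical function name `vanraden_g`.

```python
import numpy as np

def vanraden_g(X):
    """Genomic relationship matrix following the steps above (VanRaden Method 1).

    X: (n individuals x m markers) array of 0/1/2 allele counts, no missing values.
    """
    X = np.asarray(X, dtype=float)
    p = X.mean(axis=0) / 2.0                 # allele frequency per marker
    M = X - 2.0 * p                          # center each marker column
    denom = 2.0 * np.sum(p * (1.0 - p))      # scaling factor
    if denom == 0:
        raise ValueError("all markers are monomorphic; G is undefined")
    return M @ M.T / denom
```

Because every column of M sums to zero, each row of the resulting G also sums to zero, which is a convenient sanity check after construction.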

Protocol 2: k-Fold Cross-Validation for GBLUP

Objective: To estimate the prediction accuracy of a GBLUP model without an independent validation set.

Materials: Phenotype vector y, Genotype matrix X (to compute G).

Steps:

- Randomly partition the n individuals into k folds (e.g., k=5 or 10).

- For fold i (i=1 to k):

  a. Assign individuals in fold i as the validation set. All other (k-1) folds are the training set.

  b. Compute G using all genotype data (to maintain relationships), but fit the GBLUP model only on the training set phenotypes.

  c. Predict the genetic values for individuals in the validation set (ĝ_val).

  d. Calculate the correlation between ĝ_val and the observed phenotypes (y_val).

- Average the correlation coefficients across all k folds to obtain the cross-validated prediction accuracy.
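A compact sketch of this cross-validation loop is shown below. It assumes an intercept-only fixed effect, Z = I, and a variance ratio λ that is already known (for example from a prior REML fit); the function names are hypothetical.

```python
import numpy as np

def gblup_predict(G, y, train, test, lam):
    """Predict validation individuals from training phenotypes.

    Uses g_hat_val = G_vt (G_tt + lam*I)^-1 (y_t - mu), with mu the GLS mean.
    """
    V = G[np.ix_(train, train)] + lam * np.eye(len(train))
    ones = np.ones(len(train))
    Vinv_y = np.linalg.solve(V, y[train])
    Vinv_1 = np.linalg.solve(V, ones)
    mu = (ones @ Vinv_y) / (ones @ Vinv_1)   # generalized least squares intercept
    alpha = Vinv_y - mu * Vinv_1             # equals V^-1 (y_t - mu)
    return mu + G[np.ix_(test, train)] @ alpha

def kfold_accuracy(G, y, lam, k=5, seed=0):
    """Mean correlation between predictions and phenotypes over k folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    cors = []
    for i in range(k):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        pred = gblup_predict(G, y, train, test, lam)
        cors.append(np.corrcoef(pred, y[test])[0, 1])
    return float(np.mean(cors))
```

G is computed once from all genotypes, as step (b) describes, while only training phenotypes enter the solve, so no phenotype information leaks from the validation fold.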

Visualizations

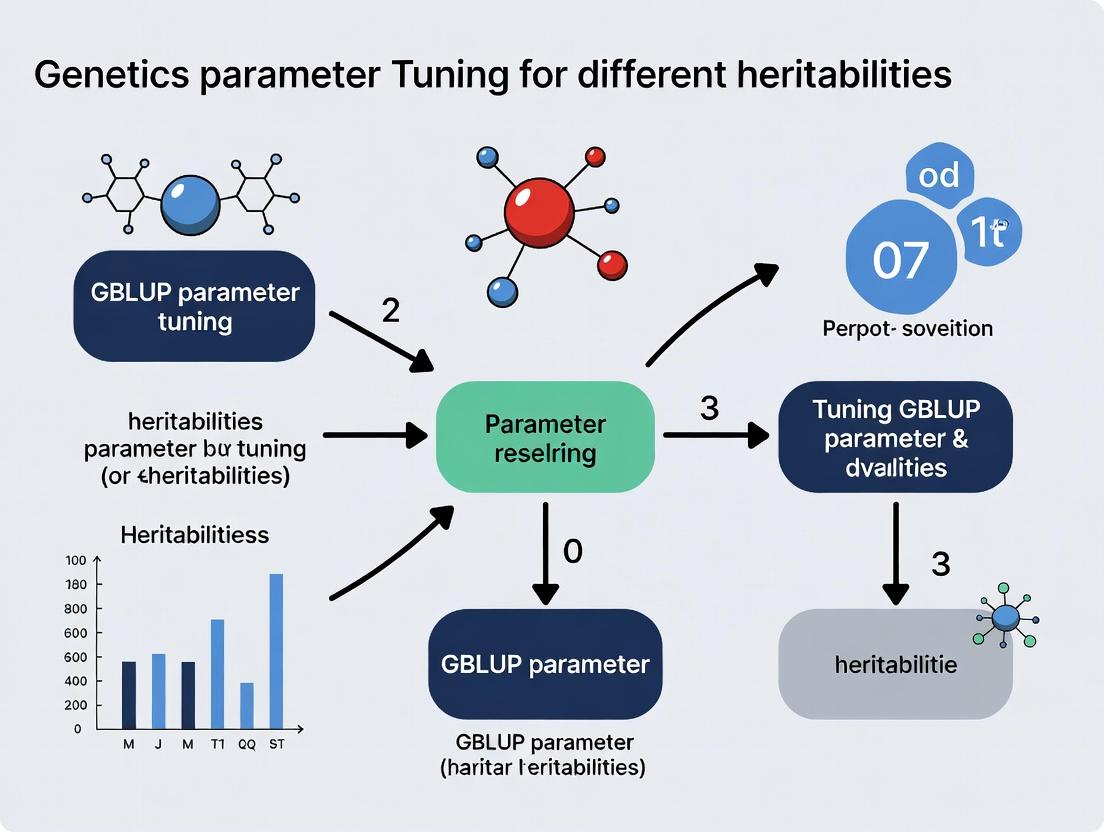

Title: GBLUP Model Implementation and Tuning Workflow

Title: Relationship Between Key Players in Genomic Prediction

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in GBLUP Research |

|---|---|

| High-Density SNP Genotyping Array | Provides the raw genotype data (0,1,2 calls) for constructing the Genomic Relationship Matrix (G). Density must be appropriate for the species (e.g., 50K-800K for livestock, 600K-1.2M for humans). |

| Genotype Imputation Software (e.g., Beagle, Minimac4) | Infers missing genotypes and allows harmonization of data from different genotyping arrays to a common set of markers, increasing sample size and marker density. |

| Mixed Model Solver Software | Core computational engine. BLUPF90, ASReml, GCTA, and R packages (e.g., rrBLUP, sommer) are used to solve the large systems of mixed model equations efficiently. |

| High-Performance Computing (HPC) Cluster | Essential for computationally intensive tasks like constructing G for large n (>10,000), running cross-validation, or employing advanced Bayesian methods. |

| Genetic Variance Component Estimation Software | Tools like GCTA-GREML or Bayesian samplers are used to estimate the genetic variance (σ²_g) and heritability (h²) from genomic data prior to GBLUP. |

| Phenotype Data Management System | Securely stores and manages trait measurements, experimental designs, and covariates, ensuring accurate merging with genotype data for analysis. |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: My GBLUP model shows near-zero genetic variance estimates, leading to poor predictive ability. What is the most likely cause and how do I fix it?

- Answer: This is typically a mis-specification issue, either in the scaling of the relationship matrix (K) or in the assumed prior or starting values for the variance components. If K is on the wrong scale, or an informative heritability prior conflicts strongly with the signal in the data, the genetic variance estimate can be driven to the zero boundary. A small training set or a genuinely low heritability can produce the same symptom.

- Solution: Re-estimate the population-level heritability using a REML approach on a subset of your data before full GBLUP analysis. Use this estimate to inform the scaling of K or the prior for the variance components. For K, ensure it is scaled as G = ZZ' / p (where Z here denotes the centered and scaled genotype matrix, not the incidence matrix, and p is the number of markers) so that it reflects the expected genomic relationships, with an average diagonal close to 1.

FAQ 2: How does population structure within my training set artificially inflate heritability estimates and distort GBLUP predictions?

- Answer: Population stratification creates genomic covariance that is non-causal, causing the model to attribute phenotypic similarity due to shared ancestry to genetic effects. This inflates the estimated genomic variance (σ²g) and thus the heritability.

- Solution: Implement a two-step correction. First, perform a Principal Component Analysis (PCA) on the genomic relationship matrix (G). Second, include the top k principal components (PCs) as fixed-effect covariates in your GBLUP model: y = Xβ + Zu + ε, where X is the matrix of PCs. This adjusts for stratification, leading to a more accurate σ²g and heritability.
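The two-step correction can be sketched as an eigen-decomposition of G followed by assembly of the fixed-effect design matrix. The function names and the choice to include an intercept column are assumptions for illustration.

```python
import numpy as np

def top_pcs(G, k):
    """Return the k leading eigenvectors (PCs) of G and their eigenvalues."""
    vals, vecs = np.linalg.eigh(G)           # eigenvalues in ascending order
    order = np.argsort(vals)[::-1][:k]       # indices of the k largest
    return vecs[:, order], vals[order]

def design_with_pcs(G, k):
    """Fixed-effect design matrix X = [intercept, PC1, ..., PCk]."""
    pcs, _ = top_pcs(G, k)
    return np.column_stack([np.ones(G.shape[0]), pcs])
```

The returned matrix plays the role of X in y = Xβ + Zu + ε. The number of PCs k is a tuning choice and should be justified, for example with a scree plot.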

FAQ 3: When tuning GBLUP for a low-heritability trait, what specific parameter adjustments are critical compared to a high-heritability trait?

- Answer: The core adjustment is in the variance component ratio. The key parameter is λ = σ²e / σ²g (the ratio of residual to additive genetic variance), which is directly determined by heritability: λ = (1 - h²) / h².

- Solution: For low h², λ is large. This strongly shrinks estimated breeding values (EBVs) toward zero, so a much larger training population size (N) is needed to achieve reasonable accuracy. Prioritize increasing N over marker density. With Z = I, the GBLUP solution û = σ²g * G * (σ²g * G + σ²e * I)^-1 * (y - Xβ) = G * (G + λI)^-1 * (y - Xβ) shows how a large σ²e (relative to σ²g) reduces the weight given to the genomic relationships in G.
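One way to see the shrinkage is through the eigenvalues of G. Writing the breeding-value solution as G(G + λI)⁻¹ applied to the corrected phenotypes, each eigen-direction of G with eigenvalue d is multiplied by d / (d + λ). The sketch below computes these factors; the function name is hypothetical.

```python
import numpy as np

def shrinkage_factors(G, h2):
    """Per-eigenvalue shrinkage d / (d + lambda), with lambda = (1 - h2) / h2."""
    lam = (1.0 - h2) / h2
    d = np.clip(np.linalg.eigvalsh(G), 0.0, None)   # guard against tiny negatives
    return d / (d + lam)

# Toy relationship matrix for two related individuals
G_toy = np.array([[1.0, 0.5], [0.5, 1.0]])
low = shrinkage_factors(G_toy, h2=0.1)    # lambda = 9
high = shrinkage_factors(G_toy, h2=0.6)   # lambda ~ 0.67
```

Every factor under h² = 0.1 is smaller than its counterpart under h² = 0.6, which is the strong shrinkage toward zero described above.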

FAQ 4: I have reliable heritability estimates from previous studies. What is the most efficient way to incorporate this prior knowledge into GBLUP parameterization?

- Answer: Use a Bayesian or BLUP framework with informed priors for the variance components.

- Solution: Instead of using default or data-derived REML estimates, set the prior distributions for σ²g and σ²e based on your known h² and phenotypic variance (σ²p). For example, set: σ²g ~ Scale * h² and σ²e ~ Scale * (1-h²), where Scale is related to your prior for σ²p. This is implemented in software like BLR or BUGS.

Table 1: Impact of Heritability (h²) on GBLUP Parameterization and Outcomes

| Heritability (h²) | Variance Ratio (λ = σ²e/σ²g) | Required Training Pop. Size (N) for Target Accuracy (~0.7) | Relative Weight on Genomic Info (in BLUP) | Primary Tuning Lever |

|---|---|---|---|---|

| High (0.6) | Low (0.67) | Moderate (1,000-2,000) | High | Marker density, G matrix construction |

| Moderate (0.3) | Moderate (2.33) | Large (3,000-5,000) | Moderate | N, λ specification |

| Low (0.1) | High (9.0) | Very Large (>8,000) | Low | N, accurate h² prior, fixed effects |

Table 2: Common GBLUP Parameterization Errors and Corrections

| Error Symptom | Probable Cause | Diagnostic Check | Correction Protocol |

|---|---|---|---|

| Zero genetic variance | K matrix misscaling, wrong h² prior | Compare REML h² vs. prior h² | Re-scale G to average diagonal ~1. Use REML h². |

| Predictions mirror phenotype mean | Very high λ (very low assumed h²) | Check trait h² from literature. | Re-estimate variance components with uninformative priors. |

| Overfitting in training, fails in validation | Population structure inflating h² | PCA on G, plot phenotypes vs. PC1. | Include top PCs as fixed covariates in model. |

Experimental Protocols

Protocol 1: Empirical Derivation of Heritability for GBLUP Parameterization

Objective: To obtain a trait- and population-specific heritability estimate for accurate GBLUP parameter tuning.

- Genotyping & Phenotyping: Obtain high-density SNP genotypes and precise phenotypic records for a representative sample (N > 1000).

- Construct Genomic Relationship Matrix (G): Use the method of VanRaden (2008): G = (M-P)(M-P)' / [2∑pᵢ(1-pᵢ)], where M is the allele count matrix (0,1,2), P is a matrix of twice the allele frequencies, and pᵢ is the frequency of the counted allele at marker i.

- Variance Component Estimation: Fit a linear mixed model: y = Xβ + Zu + ε, where u ~ N(0, Gσ²g) and ε ~ N(0, Iσ²e). Use REML (via AIREML or GREML) to estimate σ²g and σ²e.

- Calculate Genomic Heritability: Compute h² = σ²g / (σ²g + σ²e). Use this h² to calculate λ for GBLUP.
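For small datasets, the variance component step can be illustrated with a profile REML search over a grid of h² values. This is a teaching sketch under stated assumptions (Z = I, G scaled to an average diagonal near 1, dense linear algebra), not a substitute for AI-REML software; the function name and grid are hypothetical.

```python
import numpy as np

def reml_h2_grid(y, G, X=None, grid=None):
    """Grid-search REML for V = sigma2 * (h2*G + (1-h2)*I).

    Returns (h2_hat, sigma2_g_hat, sigma2_e_hat).
    """
    y = np.asarray(y, dtype=float)
    n = len(y)
    X = np.ones((n, 1)) if X is None else np.asarray(X, dtype=float)
    q = X.shape[1]
    grid = np.linspace(0.01, 0.99, 99) if grid is None else grid
    best_ll, best_h2, best_s2 = -np.inf, None, None
    for h2 in grid:
        H = h2 * G + (1.0 - h2) * np.eye(n)
        Hinv_X = np.linalg.solve(H, X)
        XtHinvX = X.T @ Hinv_X
        beta = np.linalg.solve(XtHinvX, Hinv_X.T @ y)   # GLS fixed effects
        r = y - X @ beta
        sigma2 = (r @ np.linalg.solve(H, r)) / (n - q)  # profiled total variance
        _, logdet_H = np.linalg.slogdet(H)
        _, logdet_X = np.linalg.slogdet(XtHinvX)
        ll = -0.5 * ((n - q) * np.log(sigma2) + logdet_H + logdet_X)
        if ll > best_ll:
            best_ll, best_h2, best_s2 = ll, float(h2), float(sigma2)
    return best_h2, best_h2 * best_s2, (1.0 - best_h2) * best_s2
```

The estimated h² then gives λ = (1 − h²)/h² for the GBLUP fit. An estimate that lands on the edge of the grid suggests the boundary problems discussed elsewhere in this guide.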

Protocol 2: Stratification-Adjusted GBLUP Implementation

Objective: To perform GBLUP corrected for population stratification.

- Perform Protocol 1, Steps 1-2 to get G.

- Principal Component Analysis: Decompose the centered G matrix with svd(G) to obtain eigenvectors (PCs).

- Determine Significant PCs: Use the Tracy-Widom test or a scree plot to select top k PCs (typically 3-10) capturing population structure.

- Fit Stratified GBLUP Model: Fit the model: y = Xβ + Zu + ε, where X now contains the k PCs as covariates. Estimate variance components via REML.

- Predict Breeding Values: Solve for û using the adjusted model. Validate predictive accuracy on an independent, stratified-held-out set.

Mandatory Visualization

Title: Workflow for Heritability-Informed GBLUP Parameter Tuning

Title: Logical Relationship: Heritability Drives GBLUP Outcomes

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in GBLUP Parameter Tuning | Example/Note |

|---|---|---|

| High-Density SNP Array | Provides genotype data (M matrix) to construct the Genomic Relationship Matrix (G). Essential for capturing linkage disequilibrium with QTLs. | Illumina Infinium, Affymetrix Axiom. Density should match effective population size. |

| REML Optimization Software | Estimates variance components (σ²g, σ²e) for heritability calculation. Core to parameter tuning. | GCTA (GREML), R packages (sommer, rrBLUP, ASReml-R). |

| Population Genetics Package | Handles QC, PCA, and construction of the G matrix. Corrects for stratification. | PLINK, GCTA, R (SNPRelate, gaston). |

| Bayesian Analysis Suite | Allows incorporation of informed priors for variance components based on known h². | BLR R package, Stan, BUGS. |

| Phenotyping Platform | Generates precise, replicated trait measurements (y vector). Accuracy is critical for valid h². | High-throughput phenotyping systems, controlled environment facilities. |

| Validation Cohort | An independent set of genotyped and phenotyped individuals. Used to test the predictive accuracy of the tuned GBLUP model. | Must be representative of the target breeding or prediction population. |

Technical Support Center: GBLUP Parameter Tuning for Different Heritabilities

FAQ & Troubleshooting Guide

Q1: My GBLUP model yields low genomic prediction accuracy (r<0.3) for a trait presumed to have high heritability. What are the primary troubleshooting steps? A: This common issue often stems from parameter or data mismatch. Follow this protocol:

- Verify Heritability Estimate: Re-estimate narrow-sense heritability (h²) using an independent method (e.g., REML in a linear mixed model with a pedigree-based relationship matrix) on your phenotypic data. Ensure your estimate aligns with "high" (h² > 0.4).

- Check Genomic Data Quality: Run a Quality Control (QC) pipeline:

- Sample QC: Remove individuals with call rate < 0.95.

- Marker QC: Exclude SNPs with call rate < 0.95, minor allele frequency (MAF) < 0.01, and significant deviation from Hardy-Weinberg Equilibrium (p < 1e-6).

- Imputation: Fill missing genotypes using a reference panel; discard SNPs with imputation accuracy (R²) < 0.7.

- Re-tune GBLUP Parameters:

  - The core GBLUP model is: y = Xb + Zu + e, where u ~ N(0, Gσ²_g) and e ~ N(0, Iσ²_e). The key parameter is the ratio λ = σ²_e / σ²_g = (1-h²)/h².

  - Action: If your verified h² is 0.7, but you used a default λ=1.0, force λ = (1-0.7)/0.7 ≈ 0.43 in your solver. Use cross-validation to find the optimal λ around this theoretical value.

Q2: For low-heritability complex traits, how should I adjust the GBLUP workflow to stabilize estimates? A: Low heritability (h² < 0.2) implies high environmental noise. Standard GBLUP is prone to overfitting.

- Increase Sample Size: This is the most effective solution. Use power calculations to determine the required N. For h²=0.1, you may need >5000 samples for reliable estimation.

- Incorporate Fixed Effects & Covariates: Rigorously account for batch effects, age, sex, and principal components of ancestry in the Xb term to reduce σ²_e.

- Consider Alternative GRMs: Instead of the standard VanRaden G-matrix, try:

  - Weighted GRMs: Weight SNPs by initial GWAS p-values or functional annotations.

  - Tuning Parameter: Introduce a scaling parameter τ for the GRM: G* = G * τ. Predictions depend on τ and λ only through the ratio λ/τ, because τG(τG + λI)^-1 = G(G + (λ/τ)I)^-1, so fix one of the two and use cross-validation to optimize the other.

Q3: What is the recommended experimental protocol for generating phenotype data suitable for comparative heritability category analysis? A: Standardized Phenotyping Protocol for Heritability Estimation

- Objective: To collect reproducible phenotypic data for genetic analysis.

- Materials: See "Research Reagent Solutions" table.

- Methodology:

- Cohort Design: Ensure a large, genetically diverse cohort with minimal relatedness to avoid confounding. Record all relevant covariates (age, sex, batch, technical replicates).

- Trait Measurement: For quantitative traits, use calibrated instruments. For disease status, apply consistent diagnostic criteria. All measurements should be performed blinded to genotype.

- Data Transformation: Apply appropriate transformations (e.g., log, Box-Cox) to achieve normality of residuals, as required by the linear mixed model.

- Outlier Handling: Use robust statistical methods (e.g., Tukey's fences) to identify and winsorize technical outliers, but retain biological extremes.

- Replication: Include replicated measurements for a subset to estimate and correct for measurement error variance.
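The outlier-handling step can be sketched with Tukey's fences as follows. The function name and the conventional multiplier k = 1.5 are assumptions. The code cannot tell technical outliers from biological extremes, so flagged values should be reviewed before winsorizing.

```python
import numpy as np

def winsorize_tukey(x, k=1.5):
    """Clip values outside [Q1 - k*IQR, Q3 + k*IQR] to the fence values."""
    x = np.asarray(x, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    flagged = (x < lower) | (x > upper)      # candidates for manual review
    return np.clip(x, lower, upper), flagged, (lower, upper)

# Example: one implausible reading among otherwise regular values
values = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 100.0])
clipped, flagged, fences = winsorize_tukey(values)
```

Returning the `flagged` mask alongside the clipped data keeps a record of which measurements were altered, which helps when deciding whether an extreme value is biological.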

Q4: How do I choose the correct genetic relationship matrix (GRM) model for my heritability scenario? A: Refer to the decision workflow below and the comparison table.

GBLUP Analysis Workflow for Heritability Categories

Table 1: GBLUP Configuration Guide by Heritability Category

| Heritability Category | Typical h² Range | Recommended Min. Sample Size | Key GBLUP Tuning Parameter | Suggested GRM Type | Expected Prediction Accuracy (r) Range |
|---|---|---|---|---|---|
| Low | < 0.2 | 5,000 - 10,000 | High λ (λ > 4.0), Consider τ | Weighted (e.g., by MAF, p-value) | 0.1 - 0.3 |
| Medium | 0.2 - 0.4 | 1,000 - 2,000 | Moderate λ (λ = 1.5 - 4.0) | Standard VanRaden (G = XX'/k) | 0.3 - 0.5 |
| High | > 0.4 | 500 - 1,000 | Low λ (λ < 1.5) | Standard VanRaden or GCTA-GRM | 0.5 - 0.8 |

Table 2: Research Reagent Solutions for Key Experiments

| Item | Function | Example Product/Catalog # (Illustrative) |

|---|---|---|

| Genotyping Array | Genome-wide SNP profiling for GRM construction. | Illumina Global Screening Array v3.0, Affymetrix Axiom Biobank Plus |

| Whole Genome Sequencing Service | Provides highest density variant data for custom GRMs. | Illumina NovaSeq X Plus, PacBio Revio |

| DNA Extraction Kit (Blood/Tissue) | High-yield, high-purity genomic DNA isolation. | Qiagen DNeasy Blood & Tissue Kit #69504 |

| Phenotype Assay Kit (e.g., LDL-C) | Precise quantitative measurement of a biochemical trait. | Roche Diagnostics LDL Cholesterol Gen.3 #11820958 |

| Statistical Software | For REML heritability estimation & GBLUP analysis. | R (sommer, rrBLUP), GCTA, Python (pygwas), BLUPF90+ |

| High-Performance Computing (HPC) Cluster | Essential for large-scale genomic matrix operations and CV. | Slurm or Kubernetes cluster with >256GB RAM & multi-core nodes |

Signaling Pathway for Pharmacogenetic Trait Integration in GBLUP

Technical Support Center: Troubleshooting GBLUP Parameter Tuning

Troubleshooting Guides

Issue 1: Poor Predictive Ability in Low Heritability Scenarios

- Symptoms: Genomic Estimated Breeding Values (GEBVs) show near-zero variance, model explains little to no phenotypic variance, validation accuracy is at or near random.

- Diagnosis: The genomic relationship matrix (G) is receiving insufficient weight. The REML algorithm is over-shrinking the genomic variance component (σ²_g) towards zero because the low population h² signal is weak relative to the residual noise.

- Resolution: Increase the weight of the G matrix. Consider: 1) Using a tuned G* matrix (e.g., blending G with a small percentage of the identity matrix I to improve conditioning). 2) Applying a Bayesian approach (e.g., BayesA, BayesB) that allows marker-specific variances, which can be more powerful for detecting sparse signals in low h² traits. 3) Ensuring the panel has sufficient marker density to capture LD with causal variants.

Issue 2: Overfitting and Inflation of Predictions in High Heritability Scenarios

- Symptoms: GEBV variance approaches phenotypic variance, training accuracy is very high but validation accuracy on unrelated individuals collapses, observed dispersion (regression coefficient) in validation is much less than 1.

- Diagnosis: The model is over-relying on G, capturing family-specific or even environmental covariances as genetic signal. The G matrix is not being sufficiently "shrunken" or regularized.

- Resolution: Increase shrinkage. Consider: 1) Using a compressed G matrix (e.g., G scaled by h²). 2) Introducing a polygenic effect with an A matrix (pedigree) to separate out familial noise. 3) Applying cross-validation strictly to tune the blending parameter if using a combined G* matrix. 4) Using a stricter prior for σ²_g in a Bayesian framework.

Issue 3: Unstable or Non-Convergent Variance Component Estimates

- Symptoms: REML iterations fail to converge, estimates for σ²_g and σ²_e oscillate or hit boundaries (e.g., σ²_g = 0).

- Diagnosis: Often related to ill-conditioning of the mixed model equations, frequently exacerbated by an extreme h² context interacting poorly with the G matrix structure (e.g., a near-singular G).

- Resolution: 1) Check the eigenvalue distribution of G. If small/zero eigenvalues exist, apply a minimal Tikhonov regularization: G* = G + δI, where δ is small (e.g., 0.01). 2) Re-scale the G matrix to have an average diagonal of 1.0. 3) Verify the quality control of genotype data; high missingness or inappropriate MAF thresholds can distort G.
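The eigenvalue check and diagonal re-scaling can be sketched as below. The function names and the near-zero tolerance are assumptions chosen for illustration.

```python
import numpy as np

def grm_diagnostics(G, tol=1e-8):
    """Summarize the scale and conditioning of a relationship matrix."""
    eig = np.linalg.eigvalsh(G)              # ascending eigenvalues
    return {
        "mean_diag": float(np.mean(np.diag(G))),
        "min_eig": float(eig[0]),
        "max_eig": float(eig[-1]),
        "n_near_zero": int(np.sum(eig < tol)),
    }

def rescale_mean_diag(G):
    """Re-scale G so that its average diagonal element equals 1.0."""
    return G / np.mean(np.diag(G))

# Toy matrix with one zero eigenvalue and an average diagonal of 4/3
G_toy3 = np.diag([2.0, 2.0, 0.0])
report = grm_diagnostics(G_toy3)
G_rescaled = rescale_mean_diag(G_toy3)
```

A non-zero `n_near_zero` count is the signal to apply the small δI regularization before refitting. Re-scaling alone does not remove singularity.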

Frequently Asked Questions (FAQs)

Q1: How does the heritability (h²) of a trait theoretically influence the optimal blending weight (w) when blending G with an identity matrix (I)?

A: The optimal blending weight w in G* = w*G + (1-w)*I tends to increase with h². Note that w is distinct from the variance ratio λ = σ²_e/σ²_g. For low h² traits, w should be smaller (more weight on I) to increase shrinkage and stabilize the inversion, preventing overfitting to noise. For high h² traits, w can be larger (more weight on the original G) to allow the strong genetic signal to be fully captured.

Q2: My validation accuracy plateaus or drops when I use the standard G matrix for a high h² trait. Why?

A: This is a classic sign of the G matrix capturing non-additive or family-specific effects that do not generalize to more distant relatives in the validation set. The model needs more shrinkage. Consider using a weighted G matrix or a combined G-A approach to better reflect the expected relationships based on the trait's architecture.

Q3: What is the most critical step in preparing the G matrix for consistent performance across different h² levels?

A: Consistent and rigorous quality control of the genomic data is paramount. This includes standardizing imputation accuracy, applying appropriate MAF filters (e.g., 0.01-0.05), and controlling for genotype call rate. Anomalies in G (extreme eigenvalues) have differential impacts depending on h², making a clean, well-calibrated G the essential foundation.

Q4: Are there standard diagnostic plots to check if the G influence is appropriate for my estimated h²?

A: Yes. Two key plots are: 1) Dispersion plot: Plot predicted GEBVs vs. observed phenotypes in a validation set. The slope indicates inflation/deflation. 2) Eigenvalue distribution plot: Plot the eigenvalues of G. The distribution should be examined relative to the estimated σ²_g/σ²_e ratio; a large ratio (high h²) paired with many near-zero eigenvalues can signal instability.

Data Presentation

Table 1: Expected Impact of Trait Heritability (h²) on GBLUP Components and Recommended Tuning Actions

| Heritability (h²) Context | Influence on Genomic Variance Component (σ²_g) | Expected Behavior of G Matrix | Risk | Recommended Tuning Action |

|---|---|---|---|---|

| Low (h² < 0.2) | Severe shrinkage towards zero by REML. | Low weight, minimal influence on predictions. | Underfitting; failure to capture genetic signal. | Use blended G* = w*G + (1-w)*I with w tuned by cross-validation. Consider alternative (Bayesian) models. |

| Moderate (0.2 ≤ h² ≤ 0.5) | Moderate, stable estimation. | Balanced weight with residual variance. | Minimal, model is generally robust. | Standard G matrix often performs optimally. Focus on accurate h² estimation. |

| High (h² > 0.5) | Estimated with high confidence, may be inflated. | Dominates the mixed model equations. | Overfitting to familial noise; poor generalizability. | Increase shrinkage via scaled G or add a polygenic (A) effect. Use strict cross-validation. |

Table 2: Summary of Key Research Reagent Solutions for GBLUP Experiments

| Item | Function/Description | Key Consideration for h² Studies |
|---|---|---|
| Genotyping Array/Sequencing Data | Raw genomic variants (SNPs) for constructing the GRM. | Density must be sufficient to capture LD, especially for low h² polygenic traits. |
| Quality-Checked Phenotypic Data | Trait measurements for training and validation sets. | Accurate estimation of population h² is critical for setting expectations. |
| G Matrix Calculation Software (e.g., PLINK, GCTA) | Computes the genomic relationship matrix (VanRaden Method 1 or 2). | Ensure consistent scaling (average diagonal = 1). Check for outliers. |
| Mixed Model Solver (e.g., BLUPF90, ASReml, sommer in R) | Fits the GBLUP model and estimates variance components. | Must allow user-defined covariance matrices (G, G*, A). |
| Cross-Validation Pipeline Script | Automates the partitioning of data and assessment of prediction accuracy. | Essential for empirically tuning parameters (e.g., blending weight w) for a given h². |

Experimental Protocols

Protocol 1: Empirical Tuning of the G Matrix Blending Weight (w)

Objective: To determine the optimal weight (w) for blending G with I (G* = w*G + (1-w)*I) for a trait with a given estimated heritability (h²).

- Estimate Base Parameters: Using the full dataset and the standard G matrix, estimate the genomic (σ²_g) and residual (σ²_e) variance components via REML. Calculate h² = σ²_g / (σ²_g + σ²_e).

- Define w Grid: Create a sequence of w values from 0 to 1 (e.g., 0, 0.1, 0.2, ..., 1.0). At w = 0, G* = I carries no genomic information, so every validation individual receives the same prediction and this point serves only as a baseline.

- Cross-Validation Loop: For each w value:

  a. Construct G*_w = w*G + (1-w)*I.

  b. Perform 5-fold or 10-fold cross-validation using G*_w as the relationship matrix in the GBLUP model.

  c. Record the average prediction accuracy (correlation between GEBV and observed phenotype) across validation folds.

- Identify Optimal w: Plot prediction accuracy against w. The w yielding the highest accuracy is optimal for that trait and population structure.

- Validation: Apply the optimal w to create a final G* matrix and run a final model on the complete training set for deployment.
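The grid search in this protocol can be sketched as follows. For brevity the example uses the training-set mean as the intercept and takes h² as given; both are simplifying assumptions, as are the function names. The grid starts at 0.1 because w = 0 yields constant predictions, for which a correlation is undefined.

```python
import numpy as np

def cv_accuracy(K, y, lam, folds):
    """Mean validation correlation for relationship matrix K over given folds."""
    cors = []
    for test in folds:
        train = np.setdiff1d(np.arange(len(y)), test)
        V = K[np.ix_(train, train)] + lam * np.eye(len(train))
        mu = y[train].mean()                       # simple intercept (assumption)
        pred = mu + K[np.ix_(test, train)] @ np.linalg.solve(V, y[train] - mu)
        cors.append(np.corrcoef(pred, y[test])[0, 1])
    return float(np.mean(cors))

def tune_blend_weight(G, y, h2, weights=None, k=5, seed=0):
    """Evaluate G* = w*G + (1-w)*I over a grid of w and return the best w."""
    weights = np.arange(0.1, 1.01, 0.1) if weights is None else weights
    lam = (1.0 - h2) / h2
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)   # same folds for every w
    eye = np.eye(len(y))
    acc = {round(float(w), 2): cv_accuracy(w * G + (1 - w) * eye, y, lam, folds)
           for w in weights}
    best_w = max(acc, key=acc.get)
    return best_w, acc
```

Reusing the same fold assignment for every w keeps the comparison fair, so differences in accuracy reflect the blending weight rather than the random partition.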

Protocol 2: Diagnosing G Matrix Over/Under-Influence via Validation Dispersion

Objective: To assess whether the influence of the G matrix in the fitted model is appropriate.

- Split Data: Divide the data into a training set (≥70%) and a strictly independent validation set (≤30%). Ensure no close relatives across sets.

- Train Model: Fit the GBLUP model on the training set using your chosen G (or G*) matrix.

- Predict & Record: Predict GEBVs for the validation set individuals. Record both the predicted GEBVs and their observed phenotypes.

- Analyze Dispersion: Regress the observed phenotypes on the predicted GEBVs (validation set only). Estimate the regression coefficient (slope).

- Interpret: A slope ≈ 1 indicates appropriate influence. A slope > 1 suggests GEBVs are under-dispersed (over-shrinkage, G under-weighted). A slope < 1 suggests GEBVs are over-dispersed/inflated (under-shrinkage, G over-weighted).
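The dispersion regression amounts to a simple least-squares fit of observed phenotypes on predicted GEBVs. In the sketch below, the function names and the ±0.1 tolerance band around a slope of 1 are assumptions for illustration.

```python
import numpy as np

def dispersion_slope(y_obs, gebv):
    """Regress observed phenotypes on predicted GEBVs; return (slope, intercept)."""
    slope, intercept = np.polyfit(np.asarray(gebv, float), np.asarray(y_obs, float), 1)
    return float(slope), float(intercept)

def interpret_slope(slope, tol=0.1):
    """Map the slope to the interpretation given in the protocol."""
    if slope > 1 + tol:
        return "under-dispersed (over-shrinkage)"
    if slope < 1 - tol:
        return "over-dispersed (under-shrinkage)"
    return "appropriate"

# Toy validation set in which phenotypes vary twice as much as the GEBVs
gebv_val = np.array([0.0, 1.0, 2.0, 3.0])
y_val = 2.0 * gebv_val + 1.0
slope, intercept = dispersion_slope(y_val, gebv_val)
```

In real validation sets the slope has sampling error, so its standard error is worth reporting before concluding that predictions are inflated or deflated.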

Mandatory Visualizations

Title: GBLUP Tuning Decision Flow Based on Trait Heritability

Title: Experimental Workflow for Tuning the Genomic Relationship Matrix

A Step-by-Step Pipeline for GBLUP Tuning: From Heritability Estimation to Model Fitting

Troubleshooting Guides & FAQs

Q1: My REML estimate for SNP-based heritability is near the boundary (0 or 1). What could be the cause and how can I resolve it? A: This often indicates data or model issues.

- Causes: Small sample size, highly stratified sample causing confounding, mis-specified GRM (e.g., using all SNPs without LD pruning), or severe population structure.

- Solutions:

- Verify sample quality control (QC) and kinship checks.

- Use a GRM constructed from LD-pruned, autosomal SNPs only.

- Include principal components (PCs) as fixed effects to control for stratification.

- Consider using a constrained optimization algorithm to keep estimates within [0,1].

- For GBLUP tuning, a boundary estimate suggests the prior heritability for the tuning run should be set cautiously (e.g., use 0.5 as a neutral start point).

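Q2: LD Score Regression intercept is significantly >1 (e.g., 1.2) or <1 (e.g., 0.8). How does this affect heritability estimation and downstream GBLUP? A: With a freely estimated intercept, LDSC separates polygenic signal (captured by the slope) from confounding (captured by the intercept). An intercept well above 1 points to confounding such as population stratification or cryptic relatedness. An intercept below 1 usually indicates that the summary statistics were over-corrected, for example by genomic control.

- Impact: LDSC derives h² from the slope, so an estimate obtained with a free intercept is not inflated by confounding that the intercept absorbs. Bias arises when the intercept is constrained to a value that does not match the data, or when genomic-control correction has deflated the test statistics. Using a biased h² to tune GBLUP will lead to suboptimal predictive performance.

- Resolution: For intercept >1, keep the intercept unconstrained and, where individual-level data are available, strengthen the control of stratification in the GWAS (e.g., additional PCs). For intercept <1, check whether genomic control was applied and, if so, rerun LDSC on uncorrected statistics. The `--intercept-h2` option in LDSC fixes the intercept at a value you supply, so use it only when that value is well justified. Where you compute both, report the free-intercept and constrained-intercept estimates; the free-intercept estimate is generally the safer input for GBLUP parameterization.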

Q3: Discrepancy between REML and LD Score Regression heritability estimates. Which one should I trust for setting GBLUP parameters? A: This is common. Each method has different assumptions and sensitivities.

- REML (via GCTA): Sensitive to sample structure and relatedness. Prone to inflation with familial relatedness or stratification if not properly controlled.

- LD Score Regression: Robust to population stratification but sensitive to LD score mis-specification and requires a large, well-matched reference panel.

- Recommendation for GBLUP Tuning: Use the LD Score Regression estimate as your primary prior if your sample has complex population structure. If your sample is relatively homogeneous and well-controlled for PCs, the REML estimate may be more precise. Consider designing your GBLUP tuning experiment to test a range of h² values centered on both estimates.

Q4: What are the key QC steps for summary statistics before running LD Score Regression? A: Poor QC leads to unreliable estimates.

- Allele Frequency Alignment: Ensure allele frequencies from your summary stats match those in the LD reference panel. Mismatches cause major bias.

- Strand Ambiguity: Remove ambiguous A/T, C/G SNPs unless you can resolve strands via a reference panel.

- Indels: Filter out indels and structural variants; keep only bi-allelic SNPs.

- INFO Score: Filter on imputation quality (e.g., INFO > 0.9 for imputed data).

- MAF Filter: Apply a minor allele frequency filter (e.g., MAF > 0.01) consistent with your analysis.

- Munge Steps: Follow the `munge_sumstats.py` protocol from the LDSC toolkit exactly, paying attention to column name mapping.

Table 1: Comparison of Pre-Model Heritability Estimation Methods

| Method | Software/Tool | Input Data | Key Assumptions | Output for GBLUP Tuning | Common Pitfalls |

|---|---|---|---|---|---|

| REML (Variance Component) | GCTA (GREML), GEMMA | Individual-level genotypes & phenotypes | Linear mixed model is correct; population structure is controlled via PCs/GRM. | Direct estimate of h² on the observed scale. | Sensitive to sample relatedness, stratification; can hit parameter boundaries. |

| LD Score Regression | LDSC, SumHer | GWAS Summary Statistics + LD Reference Panel | Heritability is proportional to LD score; confounding is constant across SNPs. | SNP-based h² (liability scale if requested), intercept (confounding measure). | Requires well-matched LD reference; sensitive to mis-specified LD scores. |

Table 2: Typical Parameter Ranges for GBLUP Tuning Informed by Pre-Estimated h²

| Pre-Estimated h² Range | Suggested GBLUP Heritability Prior Range for Tuning | Recommended Tuning Grid Density | Expected Impact on GBLUP Accuracy |

|---|---|---|---|

| Low (0.0 - 0.2) | 0.05, 0.1, 0.15, 0.2, 0.25 | Fine grid near estimate | High sensitivity; optimal prior is critical. |

| Moderate (0.2 - 0.5) | 0.15, 0.25, 0.35, 0.45, 0.55 | Coarse-to-medium grid | Moderate sensitivity. |

| High (0.5 - 0.8) | 0.45, 0.55, 0.65, 0.75, 0.85 | Coarse grid | Lower sensitivity; model is robust. |

Experimental Protocols

Protocol 1: Estimating Heritability via REML using GCTA

Objective: To estimate the proportion of phenotypic variance explained by all SNPs using individual-level data.

Materials: Phenotype file, PLINK binary genotype files, GCTA software.

Procedure:

- Data QC: Prune SNPs for LD (`plink --indep-pairwise 50 5 0.2`). Generate a GRM using the pruned SNPs (`gcta --make-grm`).

- Model Specification: Run the GREML analysis: `gcta --grm [GRM] --pheno [PHENO] --reml --out [OUTPUT]`. Include covariates with `--qcovar` (quantitative, e.g., age, PCs) or `--covar` (categorical, e.g., sex).

- Output Interpretation: The `V(G)/Vp` row in the `.hsq` file is the estimated SNP-based heritability. Check its standard error and the P-value from the likelihood ratio test.

Protocol 2: Estimating Heritability via LD Score Regression

Objective: To estimate SNP-based heritability and quantify confounding from GWAS summary statistics.

Materials: GWAS summary statistics file, pre-computed LD scores (e.g., from the 1000 Genomes European reference), LDSC software.

Procedure:

- Munge Summary Statistics: `munge_sumstats.py --sumstats [INPUT.sumstats] --out [MUNGED] --merge-alleles [w_hm3.snplist]`.

- Run LD Score Regression: `ldsc.py --h2 [MUNGED.sumstats.gz] --ref-ld-chr [LD_SCORE_FILES] --w-ld-chr [LD_SCORE_FILES] --out [RESULTS]`.

- Output Interpretation: Key results are `Total Observed scale h2` (the SNP-heritability estimate), `Lambda GC` (genomic inflation factor), `Intercept` (confounding), and `Ratio`, defined as (intercept − 1)/(mean χ² − 1), which is the share of test-statistic inflation attributed to confounding rather than polygenicity. The `--intercept-h2` flag fixes the intercept at a value you supply instead of estimating it.

Visualizations

Pre-Model Heritability Estimation Workflow

Relationship Between h² Estimates & GBLUP Tuning

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Pre-Model Heritability Estimation

| Item | Function/Description | Example/Tool |

|---|---|---|

| LD-Pruned SNP List | A set of SNPs in approximate linkage equilibrium to build a genetically interpretable GRM, reducing artifact inflation. | Generated via PLINK: --indep-pairwise 50 5 0.2. |

| Pre-computed LD Scores | Essential reference data for LD Score Regression. Contains, for each SNP, the sum of squared LD correlations (r²) with the surrounding SNPs. | Downloaded from LDSC website (e.g., based on 1000 Genomes European subset). |

| HapMap3 SNP List | A curated list of ~1.2 million SNPs for standardizing analyses and munging summary statistics in LDSC. | File: w_hm3.snplist. Ensures allele alignment and reduces batch effects. |

| Principal Components (PCs) | Covariates derived from genotype data to control for population stratification in REML analyses. | Calculated via PLINK/GCTA on a pruned SNP set, typically first 10-40 PCs. |

| High-Quality LD Reference Panel | A population-matched genotype panel used to compute accurate LD scores. Critical for LDSC accuracy. | 1000 Genomes Project Phase 3, UK Biobank (restricted access), or ancestry-specific panels. |

| Genetic Relationship Matrix (GRM) | An NxN matrix of genomic similarity between all individuals, the core input for REML methods. | Generated by GCTA (--make-grm) or PLINK (--make-rel). |

Technical Support Center

FAQ & Troubleshooting Guide

Q1: I am setting up a GBLUP model for a trait with an assumed heritability (h²) of 0.3. How do I calculate the initial variance component ratios for the genetic (σ²g) and residual (σ²e) variances? A: The variance components are derived from the heritability definition: h² = σ²g / (σ²g + σ²e). Set the total phenotypic variance (σ²p = σ²g + σ²e) to 1 for scaling. Therefore:

- σ²g = h² = 0.3

- σ²e = 1 - h² = 0.7

The initial variance ratio is σ²g : σ²e = 0.3 : 0.7. Use these as starting points for REML iteration.
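The arithmetic above is easy to wrap in a helper that also returns the ridge parameter λ = σ²e/σ²g. The function name `initial_variance_components` and its signature are hypothetical, introduced only for this sketch.

```python
def initial_variance_components(h2, sigma2_p=1.0):
    """Split a phenotypic variance into genetic and residual parts for a given h2."""
    if not 0.0 < h2 < 1.0:
        raise ValueError("h2 must lie strictly between 0 and 1")
    sigma2_g = h2 * sigma2_p
    sigma2_e = (1.0 - h2) * sigma2_p
    return sigma2_g, sigma2_e, sigma2_e / sigma2_g   # last value is lambda

for h2 in (0.1, 0.3, 0.5, 0.7):
    sg, se, lam = initial_variance_components(h2)
    print(f"h2={h2:.1f}  sigma2_g={sg:.2f}  sigma2_e={se:.2f}  lambda={lam:.2f}")
```

Values of h² at exactly 0 or 1 are rejected because the ratio becomes undefined or zero, which are the boundary cases that tend to cause REML trouble.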

Q2: My REML estimation fails to converge when using my initial parameters. What are common causes and solutions? A: This is often due to unrealistic starting values.

- Cause 1: Initial variance ratio is too extreme (e.g., h² set to 0.95, leading to σ²g >> σ²e).

- Solution: Use a more conservative starting point (e.g., h²=0.5). Ensure the ratio is plausible for your trait.

- Cause 2: The genetic relationship matrix (G) is not positive definite.

- Solution: Check G for singularity. Apply a bending procedure (add a small constant to the diagonal) or ensure proper quality control on genotype data.

- Cause 3: Inadequate sample size for the number of markers.

- Solution: Ensure the number of individuals (N) is sufficiently large relative to the number of markers (M). A rule of thumb is N > M/10 for stability.

Q3: How do I validate that my tuned parameters are producing reliable Genomic Estimated Breeding Values (GEBVs)? A: Implement a cross-validation protocol.

- Partition your data into k folds (e.g., 5).

- For each fold, tune parameters using the remaining k-1 folds as the training set.

- Predict GEBVs for the validation fold.

- Calculate the correlation between predicted and observed (or pre-corrected) phenotypes in the validation sets. This predictive accuracy validates your parameter tuning.

Q4: For a low-heritability trait (h²=0.1), my GEBV accuracy is very poor. How can I adjust my experimental or analytical approach? A: Low heritability is challenging.

- Experimental Protocol: Increase phenotypic recording accuracy and replication. Use experimental designs that better control environmental noise (e.g., augmented block designs, more environments).

- Analytical Protocol: Consider using a model that accounts for more variance sources, such as a GBLUP with a secondary genetic effect (e.g., dominance) or a reaction norm model for genotype-by-environment interaction. Ensure your relationship matrix is built from markers that are in linkage disequilibrium with causal variants relevant to your population.

Data Presentation

Table 1: Initial Variance Component Parameters Based on Target Heritability (h²) (Assuming Total Phenotypic Variance σ²p = 1)

| Target Heritability (h²) | Genetic Variance (σ²g) | Residual Variance (σ²e) | Initial Ratio (σ²g : σ²e) | Recommended Use Case |

|---|---|---|---|---|

| 0.1 (Low) | 0.10 | 0.90 | 1 : 9 | Complex, polygenic traits highly influenced by environment. |

| 0.3 (Moderate) | 0.30 | 0.70 | 3 : 7 | Standard starting point for many agronomic or disease traits. |

| 0.5 (Medium-High) | 0.50 | 0.50 | 1 : 1 | Traits with known major genes and moderate environmental influence. |

| 0.7 (High) | 0.70 | 0.30 | 7 : 3 | Morphological traits or highly heritable disease resistances. |

Table 2: Cross-Validation Results for Parameter Tuning (Example)

| Heritability Scenario | Training Population Size | Validation Population Size | Predictive Accuracy (Mean r) | Optimal Tuned σ²g | Optimal Tuned σ²e |

|---|---|---|---|---|---|

| h² = 0.3 (Simulated) | 800 | 200 | 0.42 ± 0.03 | 0.28 | 0.72 |

| h² = 0.5 (Simulated) | 800 | 200 | 0.58 ± 0.02 | 0.49 | 0.51 |

| h² = 0.1 (Simulated) | 800 | 200 | 0.18 ± 0.05 | 0.09 | 0.91 |

Experimental Protocols

Protocol 1: Setting Initial Parameters and Running GBLUP with REML

- Define Heritability: Obtain a prior h² estimate from literature, previous studies, or pedigree-based analysis.

- Scale Variance: Set total phenotypic variance (σ²p) to 1. Calculate σ²g = h² and σ²e = 1 - h².

- Prepare Inputs:

- Phenotype File: Corrected phenotypes (BLUEs or adjusted means).

- Genotype File: SNP matrix coded as 0, 1, 2 for homozygous reference, heterozygous, and homozygous alternate genotypes.

- GRM: Compute the Genomic Relationship Matrix (G) using the first method of VanRaden (2008).

- Model Specification: Fit the model: y = Xb + Zu + e, where y is phenotype, b is fixed effects, u ~ N(0, Gσ²g) is random genetic effects, and e ~ N(0, Iσ²e) is residual.

- REML Iteration: Use software (e.g., ASReml, GCTA, sommer) to estimate variance components via REML, using the values from Step 2 as initial parameters.

- Output: Extract estimated σ²g and σ²e, compute derived h², and obtain GEBVs (u).

Protocol 2: k-Fold Cross-Validation for Model Validation

- Randomly partition the entire dataset of N individuals into k subsets (folds) of roughly equal size.

- For i = 1 to k: a. Designate fold i as the validation set. The remaining k-1 folds form the training set. b. Run the GBLUP model (Protocol 1) using only the training set data to estimate variance components and predict GEBVs. c. Apply the model from (b) to predict GEBVs for the individuals in validation fold i. d. Calculate the predictive accuracy as the Pearson correlation (r) between the predicted GEBVs and the pre-corrected observed phenotypes in the validation set.

- Average the correlation coefficients from all k folds to obtain the overall predictive accuracy. The standard deviation across folds indicates stability.
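A compact sketch of this protocol follows. It assumes one record per individual and an intercept-only fixed effect, and it replaces a full REML optimizer with a grid search over h² on the profiled REML log-likelihood; `reml_h2`, `kfold_gblup`, the grid, and the simulated data are all illustrative assumptions. Dedicated software (ASReml, GCTA, sommer) would normally handle the REML step.

```python
import numpy as np

def reml_h2(K, y, grid=None):
    """Grid-search REML estimate of h2 for y = 1*mu + u + e, var(u) proportional to K."""
    grid = np.linspace(0.05, 0.95, 19) if grid is None else grid
    n, ones = len(y), np.ones(len(y))
    best_h2, best_ll = None, -np.inf
    for h2 in grid:
        H = h2 * K + (1.0 - h2) * np.eye(n)            # V up to the scale sigma_p^2
        Hinv_y, Hinv_1 = np.linalg.solve(H, y), np.linalg.solve(H, ones)
        xhx = ones @ Hinv_1
        mu = (ones @ Hinv_y) / xhx                     # GLS estimate of the mean
        rss = (y - mu) @ (Hinv_y - mu * Hinv_1)        # (y - mu)' H^-1 (y - mu)
        ll = -0.5 * ((n - 1) * np.log(rss / (n - 1))
                     + np.linalg.slogdet(H)[1] + np.log(xhx))
        if ll > best_ll:
            best_h2, best_ll = float(h2), ll
    return best_h2

def kfold_gblup(G, y, k=5, seed=0):
    """Return (mean r, SD of r, per-fold r) with h2 re-estimated in each training set."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), k)
    accs = []
    for i, test in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        Gtt = G[np.ix_(train, train)]
        h2 = reml_h2(Gtt, y[train])                    # training data only
        lam = (1.0 - h2) / h2
        mu = y[train].mean()                           # simplification: training mean
        alpha = np.linalg.solve(Gtt + lam * np.eye(len(train)), y[train] - mu)
        pred = mu + G[np.ix_(test, train)] @ alpha
        accs.append(float(np.corrcoef(pred, y[test])[0, 1]))
    return float(np.mean(accs)), float(np.std(accs, ddof=1)), accs

# Simulated toy data (illustration only)
rng = np.random.default_rng(2)
n, m = 150, 100
p = rng.uniform(0.1, 0.9, m)
M = rng.binomial(2, p, size=(n, m)).astype(float)
W = M - 2.0 * p
G = W @ W.T / (2.0 * np.sum(p * (1.0 - p)))
g = W @ rng.normal(size=m)
y = np.sqrt(0.5) * g / g.std() + rng.normal(scale=np.sqrt(0.5), size=n)

mean_r, sd_r, fold_r = kfold_gblup(G, y)
print(f"accuracy: {mean_r:.2f} (SD across folds {sd_r:.2f})")
```

Estimating h² inside each training fold, never on the full data, keeps the validation individuals out of the tuning step.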

Mandatory Visualization

GBLUP Parameter Tuning and Validation Workflow

Variance Components in GBLUP Model

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for GBLUP Analysis

| Item | Function & Explanation |

|---|---|

| Genotype Data (SNP Array/Seq) | Raw genetic information. Quality-controlled SNP data (MAF, call rate, Hardy-Weinberg) is essential for building an accurate Genomic Relationship Matrix (GRM). |

| Phenotype Data (BLUEs/BLUPs) | Corrected trait measurements. Best Linear Unbiased Estimators (BLUEs) from multi-environment trials or de-regressed breeding values are ideal response variables (y) to minimize residual noise. |

| Genomic Relationship Matrix (G) | Quantifies genetic similarity between all pairs of individuals based on markers. The cornerstone of GBLUP, determining how genetic effects are correlated. |

| REML Software (e.g., ASReml, GCTA, sommer R package) | Computational engines that perform Restricted Maximum Likelihood estimation to optimally partition phenotypic variance into genetic and residual components. |

| Cross-Validation Script (R/Python) | Custom scripts to automate data partitioning, iterative model training, and calculation of predictive accuracy, which is critical for validating tuning success. |

| High-Performance Computing (HPC) Cluster | REML and cross-validation are computationally intensive. Access to HPC resources is often necessary for analyses involving thousands of individuals and markers. |

Troubleshooting Guides & FAQs

Q1: During Grid Search for GBLUP genomic heritability (h²) tuning, the cross-validation error plateaus and does not improve regardless of the parameter grid density. What is the likely cause and solution?

A: This is often due to an incorrectly defined parameter search space that does not encompass the true optimal value. First, run a preliminary coarse grid search over a very wide range (e.g., h² from 0.01 to 0.99). Analyze the CV error curve; if the minimum error is at the boundary of your grid, expand the grid in that direction. If the curve is flat, the trait may have very low heritability, or the genomic relationship matrix (GRM) may be poorly calibrated. Verify the GRM construction and consider alternative scaling methods.

Q2: When performing k-fold Cross-Validation for model evaluation, I observe high variance in the prediction accuracy across different random folds. How can I stabilize these estimates?

A: High variance suggests that your dataset may be limited in size or have underlying population structure. To stabilize estimates:

- Increase the number of folds (k): Use Leave-One-Out CV (LOOCV) for very small sample sizes (<100).

- Use stratified sampling: Ensure each fold maintains the same proportion of individuals from different families or sub-populations.

- Repeat the CV process: Perform the k-fold partitioning multiple times with different random seeds (e.g., 50-100 repeats) and report the mean and standard deviation of the accuracy.

- Increase sample size: If possible, this is the most direct solution.

Q3: My Bayesian optimization for tuning the GBLUP ridge parameter (λ = (1-h²)/h²) gets stuck in a local minimum. How can I improve the search?

A: Bayesian optimization uses an acquisition function (e.g., Expected Improvement) to balance exploration and exploitation. If stuck:

- Adjust the acquisition function: Increase the `kappa` or `xi` parameter to favor exploration over exploitation in early iterations.

- Use a different kernel: Switch from a standard Matérn kernel to an Exponential kernel for a less smooth surrogate, encouraging exploration.

- Inject random points: Interleave random parameter selections among the Bayesian suggestions (e.g., 10-20% of iterations).

- Restart from random points: If using a package like `scikit-optimize`, use the `Optimizer` class with `base_estimator="GP"` and `acq_func="gp_hedge"` so that it can switch between acquisition strategies.

Q4: For a polygenic trait with low heritability (h² ~ 0.1), which tuning strategy is most computationally efficient and reliable?

A: For low heritability traits, the parameter space where models perform non-randomly is constrained. A Bayesian approach is typically most efficient, as it can quickly home in on the small region of relevant λ values. Grid Search requires a very fine grid to pinpoint the optimum, incurring high computational cost. Ensure your Bayesian prior is set appropriately (e.g., centered over lower h² values).

Q5: I encounter a "matrix is not positive definite" error when testing certain h² values during tuning. What does this mean and how do I resolve it?

A: This error arises when the total variance-covariance matrix V = Z G Z' σ_g² + I σ_e² becomes ill-conditioned. This typically happens when the specified genomic variance (σ_g²) is too high relative to the residual variance (σ_e²) for the given GRM (G).

- Solution 1: Constrain the search space for h² to avoid extreme values very close to 1.0 (e.g., max h² = 0.98).

- Solution 2: Apply a "bending" or regularization to the GRM by adding a small value to its diagonal (e.g., 0.01) before the analysis to ensure positive definiteness across the parameter range.
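Both solutions can be checked numerically before a tuning run. The sketch below assumes one record per individual (Z = I) and uses a deliberately rank-deficient toy GRM built from fewer markers than individuals; the helper names are hypothetical.

```python
import numpy as np

def min_eigenvalue(A):
    """Smallest eigenvalue of a symmetric matrix."""
    return float(np.linalg.eigvalsh(A).min())

def is_positive_definite(A):
    """True if a Cholesky factorization succeeds."""
    try:
        np.linalg.cholesky(A)
        return True
    except np.linalg.LinAlgError:
        return False

def bend(G, delta=0.01):
    """Add `delta` to the diagonal of G (simple ridge-type bending)."""
    return G + delta * np.eye(G.shape[0])

def v_matrix(G, h2, sigma2_p=1.0):
    """V = G*sigma_g^2 + I*sigma_e^2, assuming Z = I."""
    return sigma2_p * (h2 * G + (1.0 - h2) * np.eye(G.shape[0]))

# Toy GRM with fewer markers (20) than individuals (60), hence singular
rng = np.random.default_rng(3)
M = rng.binomial(2, 0.3, size=(60, 20)).astype(float)
p = M.mean(axis=0) / 2.0
W = M - 2.0 * p
G = W @ W.T / (2.0 * np.sum(p * (1.0 - p)))

print("min eigenvalue of G:      ", min_eigenvalue(G))
print("min eigenvalue after bend:", min_eigenvalue(bend(G)))
print("V positive definite at h2=0.98:", is_positive_definite(v_matrix(G, 0.98)))
```

Because the residual term contributes (1 − h²) to every eigenvalue of V, capping h² below 1 keeps V invertible even when G itself is singular, while bending protects analyses that invert G directly.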

Table 1: Comparison of Tuning Strategy Performance for Different Heritability Scenarios

| Heritability (h²) | Optimal Tuning Strategy | Avg. Comp. Time (min) | Prediction Accuracy (r) ± SD | Key Parameter (λ) Range |

|---|---|---|---|---|

| Low (0.1-0.3) | Bayesian Optimization | 15.2 | 0.31 ± 0.04 | 9.0 - 2.33 |

| Medium (0.4-0.6) | 5-Fold Repeated CV | 42.5 | 0.67 ± 0.03 | 1.5 - 0.67 |

| High (0.7-0.9) | Coarse-to-Fine Grid Search | 38.7 | 0.85 ± 0.02 | 0.43 - 0.11 |

Table 2: Impact of Sample Size on Tuning Stability (for h² ~ 0.5)

| Sample Size (n) | CV Strategy | Std. Dev. of Estimated h² | Recommended Min. Folds (k) |

|---|---|---|---|

| n < 100 | LOOCV or 10-fold, 100 Repeats | 0.12 | 5 or LOOCV |

| 100 ≤ n < 500 | 5-fold, 50 Repeats | 0.07 | 5 |

| n ≥ 500 | 5-fold, 10 Repeats | 0.03 | 5 |

Experimental Protocols

Protocol 1: Implementing a Coarse-to-Fine Grid Search for GBLUP

- Define Coarse Grid: Create a sequence of heritability (h²) values: `c(0.01, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.99)`.

- Calculate λ: For each h², compute the ridge parameter λ = (1-h²)/h².

- Initial CV: Perform 5-fold cross-validation for each λ using your GBLUP solver (e.g., `rrBLUP`, `BGLR`). Record prediction accuracy (correlation between predicted and observed).

- Identify Region: Locate the h² region yielding the highest accuracy.

- Define Fine Grid: Create a dense sequence of 20 h² values within ±0.15 of the best coarse value.

- Final CV: Repeat 5-fold CV on the fine grid. The parameter with the highest accuracy is optimal.
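The two-stage search can be sketched as follows. This is an illustration under stated assumptions rather than the solver-based workflow named above: a hand-written fixed-λ GBLUP predictor stands in for `rrBLUP` or `BGLR`, the intercept is the training mean, the fine grid is clipped to [0.01, 0.99], and the data are simulated. All function names are hypothetical.

```python
import numpy as np

def cv_accuracy(G, y, h2, k=5, seed=0):
    """Mean k-fold prediction accuracy of GBLUP at a fixed h2."""
    lam = (1.0 - h2) / h2                              # ridge parameter
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), k)
    accs = []
    for i, test in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        mu = y[train].mean()                           # simplification: training mean
        lhs = G[np.ix_(train, train)] + lam * np.eye(len(train))
        pred = mu + G[np.ix_(test, train)] @ np.linalg.solve(lhs, y[train] - mu)
        accs.append(np.corrcoef(pred, y[test])[0, 1])
    return float(np.mean(accs))

def coarse_to_fine(G, y, half_width=0.15, n_fine=20):
    """Return (best fine h2, its accuracy, best coarse h2)."""
    coarse = [0.01, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.99]
    coarse_acc = {h2: cv_accuracy(G, y, h2) for h2 in coarse}
    best_coarse = max(coarse_acc, key=coarse_acc.get)
    lo = max(0.01, best_coarse - half_width)           # keep the grid inside (0, 1)
    hi = min(0.99, best_coarse + half_width)
    fine_acc = {float(h2): cv_accuracy(G, y, float(h2))
                for h2 in np.linspace(lo, hi, n_fine)}
    best_fine = max(fine_acc, key=fine_acc.get)
    return best_fine, fine_acc[best_fine], best_coarse

# Simulated toy data (illustration only)
rng = np.random.default_rng(4)
n, m = 120, 80
p = rng.uniform(0.1, 0.9, m)
M = rng.binomial(2, p, size=(n, m)).astype(float)
W = M - 2.0 * p
G = W @ W.T / (2.0 * np.sum(p * (1.0 - p)))
g = W @ rng.normal(size=m)
y = np.sqrt(0.4) * g / g.std() + rng.normal(scale=np.sqrt(0.6), size=n)

best_h2, best_acc, coarse_best = coarse_to_fine(G, y)
print(f"coarse optimum {coarse_best}, fine optimum {best_h2:.3f}, r = {best_acc:.2f}")
```

Using the same fold split for every grid value makes the accuracy curve comparable across h² values.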

Protocol 2: Nested Cross-Validation for Unbiased Evaluation

- Outer Loop (Evaluation): Split the full dataset into 5 outer folds. For each outer fold:

- Hold Out Test Set: Designate one fold as the test set.

- Inner Loop (Tuning): Use the remaining 4 folds (training+validation set) to perform a complete tuning protocol (e.g., Grid Search, Bayesian Optimization) as described in Protocol 1. This identifies the best h².

- Train Final Model: Train a GBLUP model on the entire training+validation set using the optimal h².

- Predict & Score: Predict the held-out test set and calculate accuracy.

- Aggregate: Repeat for all 5 outer folds. The mean test-set accuracy is the unbiased performance estimate.
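The nesting is easiest to see in code. The sketch below uses a small illustrative h² grid for the inner loop in place of a full coarse-to-fine or Bayesian search, takes the intercept as the training mean, and runs on simulated data; `predict`, `inner_tune`, and `nested_cv` are hypothetical names.

```python
import numpy as np

def predict(G, y, train, test, h2):
    """Fixed-h2 GBLUP prediction of `test` from `train`."""
    lam = (1.0 - h2) / h2
    mu = y[train].mean()                               # simplification: training mean
    lhs = G[np.ix_(train, train)] + lam * np.eye(len(train))
    return mu + G[np.ix_(test, train)] @ np.linalg.solve(lhs, y[train] - mu)

def inner_tune(G, y, train, grid, k, rng):
    """Pick the h2 with the best mean accuracy using only the outer-training data."""
    folds = np.array_split(rng.permutation(train), k)
    score = {}
    for h2 in grid:
        accs = []
        for i, val in enumerate(folds):
            tr = np.concatenate([f for j, f in enumerate(folds) if j != i])
            accs.append(np.corrcoef(predict(G, y, tr, val, h2), y[val])[0, 1])
        score[h2] = np.mean(accs)
    return max(score, key=score.get)

def nested_cv(G, y, grid=(0.1, 0.3, 0.5, 0.7, 0.9), k_outer=5, k_inner=4, seed=0):
    """Return a list of (tuned h2, test accuracy), one entry per outer fold."""
    rng = np.random.default_rng(seed)
    outer = np.array_split(rng.permutation(len(y)), k_outer)
    results = []
    for i, test in enumerate(outer):
        train = np.concatenate([f for j, f in enumerate(outer) if j != i])
        h2 = inner_tune(G, y, train, grid, k_inner, rng)     # test fold never seen here
        acc = np.corrcoef(predict(G, y, train, test, h2), y[test])[0, 1]
        results.append((h2, float(acc)))
    return results

# Simulated toy data (illustration only)
rng = np.random.default_rng(5)
n, m = 150, 100
p = rng.uniform(0.1, 0.9, m)
M = rng.binomial(2, p, size=(n, m)).astype(float)
W = M - 2.0 * p
G = W @ W.T / (2.0 * np.sum(p * (1.0 - p)))
g = W @ rng.normal(size=m)
y = np.sqrt(0.5) * g / g.std() + rng.normal(scale=np.sqrt(0.5), size=n)

results = nested_cv(G, y)
print("tuned h2 per outer fold:", [h for h, _ in results])
print("mean test accuracy:", round(float(np.mean([a for _, a in results])), 2))
```

The tuned h² may differ between outer folds; that variation is itself a useful indicator of how stable the tuning is.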

Visualizations

GBLUP Parameter Tuning Strategy Workflow

Nested Cross-Validation for Unbiased Tuning & Evaluation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in GBLUP Tuning Experiments |

|---|---|

| Genotyping Array or WGS Data | Provides raw marker data (SNPs) for constructing the Genomic Relationship Matrix (GRM), the foundation of GBLUP. |

| High-Quality Phenotypic Data | Measured trait values for the training population. Crucial for accurate heritability estimation and model training. |

| GBLUP Software (e.g., BGLR, rrBLUP, GCTA) | Software packages that implement the mixed model equations for genomic prediction and allow parameter specification. |

| High-Performance Computing (HPC) Cluster | Essential for running computationally intensive tasks like repeated CV and Bayesian optimization on large genomic datasets. |

| Bayesian Optimization Library (e.g., scikit-optimize, GPyOpt) | Provides algorithms for efficient hyperparameter search, reducing the number of model fits needed. |

| Numerical Linear Algebra Library (e.g., Intel MKL, OpenBLAS) | Accelerates the core matrix operations (inversions, decompositions) in GBLUP, significantly speeding up tuning. |

| Data Partitioning Script (Custom R/Python) | Scripts to implement stratified sampling for k-fold CV, ensuring representative folds and stable results. |

Troubleshooting Guides & FAQs

Q1: My sommer (mmer) model in R fails to converge when fitting a GBLUP model with high-dimensional genomic data. What are the primary tuning parameters, and how should I adjust them?

A: Convergence failures in sommer often relate to the Genetic Relatedness Matrix (GRM) being near-singular or the algorithm needing more iterations.

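- Primary Parameters: `tolParConv` (convergence tolerance), `maxIter` (maximum iterations), and `na.method.X`/`na.method.Y` (missing data handling). Argument names differ between sommer versions (sommer 4.x, for example, documents `nIters`, `tolParConvLL`, and `tolParInv` for `mmer()`), so confirm the names and defaults with `?mmer` for your installed version.

- Protocol: 1) Check the GRM condition number (`kappa()`). If it is extremely high (>10^12), consider adding a small constant to the diagonal (e.g., `G = G + diag(0.01, ncol(G))`) or using a reduced-rank approach. 2) Increase `maxIter` (e.g., from 1000 to 5000). 3) Adjust `tolParConv`: a tighter value (e.g., 1e-07 instead of 1e-06) makes the stopping rule stricter, whereas a model that stalls just short of convergence needs a looser value. 4) Ensure missing data is properly handled with `na.method.Y="include"`.

- Thesis Context: For low heritability (h²~0.1) traits, the signal is weak, and models are prone to convergence issues. A more tolerant `tolParConv` (e.g., 1e-05) with higher `maxIter` may be necessary.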

Q2: In rrBLUP, the calculated genomic estimated breeding values (GEBVs) show very low variance, underestimating the predicted genetic gain. What could be wrong?

A: This is commonly due to incorrect scaling or construction of the GRM. rrBLUP's kin.blup expects the GRM (K matrix) to represent additive genetic covariance, often requiring a specific scaling.

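- Protocol: Use the `A.mat()` function within `rrBLUP` to compute the GRM directly from the marker matrix `M` (coded as -1, 0, 1). The standard protocol is to compute `K <- A.mat(M)` and pass it to `kin.blup()` through its `K` argument, with `geno` and `pheno` naming the genotype-ID and trait columns of the data frame.

- Critical Check: If using a pre-computed GRM from another software, ensure it is on the same scale as the `A.mat()` output, which follows the VanRaden form G = WW'/(2Σp_k(1−p_k)), where W is the centered marker matrix and p_k is the allele frequency of marker k. The simpler form G = MM'/p, where p is the number of markers, is on a comparable scale only when the markers have been standardized to unit variance. An improperly scaled GRM will distort variance component estimates.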

Q3: How do I implement a basic GBLUP model in Python, and which libraries are essential?

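A: NumPy is sufficient to construct the GRM from a matrix of allele counts, and scikit-allel can read VCF data and convert genotype calls to that matrix. The mixed model can then be solved with limix or directly with NumPy/SciPy linear algebra.

- Experimental Protocol:

- Compute GRM: Use scikit-allel to obtain the 0/1/2 allele-count matrix, then compute the additive GRM from it.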
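A minimal NumPy-only sketch of these steps is given below. It assumes the genotypes are already available as a 0/1/2 allele-count matrix (loading them with scikit-allel is not shown), one record per individual, an intercept-only fixed effect, and a heritability supplied by the user instead of estimated by REML. The names `vanraden_grm` and `gblup` are hypothetical, and the data are simulated.

```python
import numpy as np

def vanraden_grm(M):
    """Additive GRM, VanRaden (2008) method 1, from an (n x m) 0/1/2 matrix."""
    M = np.asarray(M, dtype=float)
    p = M.mean(axis=0) / 2.0                     # observed allele frequencies
    W = M - 2.0 * p                              # center each marker column
    return W @ W.T / (2.0 * np.sum(p * (1.0 - p)))

def gblup(G, y, h2):
    """GLS mean and BLUP of genetic values for y = 1*mu + u + e at a given h2."""
    n = len(y)
    H = h2 * G + (1.0 - h2) * np.eye(n)          # phenotypic covariance up to scale
    ones = np.ones(n)
    mu = (ones @ np.linalg.solve(H, y)) / (ones @ np.linalg.solve(H, ones))
    gebv = h2 * G @ np.linalg.solve(H, y - mu)   # u_hat = sigma_g^2 G V^-1 (y - mu)
    return float(mu), gebv

# Simulated toy data (illustration only)
rng = np.random.default_rng(6)
n, m = 100, 300
freq = rng.uniform(0.1, 0.9, m)
M = rng.binomial(2, freq, size=(n, m)).astype(float)
g = (M - 2.0 * freq) @ rng.normal(size=m)
y = np.sqrt(0.3) * g / g.std() + rng.normal(scale=np.sqrt(0.7), size=n)

G = vanraden_grm(M)
mu, gebv = gblup(G, y, h2=0.3)
print(f"mu = {mu:.3f}, var(GEBV) = {gebv.var():.3f}, var(y) = {y.var():.3f}")
```

Because the markers are centered with the sample allele frequencies, the rows of G sum to zero, so the GEBVs sum to zero and the GLS mean coincides with the phenotypic mean. The GEBV variance is smaller than the phenotypic variance, which is the shrinkage expected from BLUP.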

Q4: In ASReml-R, how do I correctly specify a heterogeneous residual variance structure across environments in a multi-environment GBLUP analysis?

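A: Define a separate residual variance for each level of a factor (e.g., environment) in the residual model, using `dsum()` in ASReml-R 4 or `at()` in ASReml-R 3.

- Protocol: In ASReml-R 4, specify `residual = ~ dsum(~ units | Env)`; in ASReml-R 3, the equivalent is `rcov = ~ at(Env):units`. Here `Env` stands for your environment factor.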

- Thesis Context: This is crucial when analyzing traits across environments with differing measurement precision. For high heritability (h²>0.5) traits, correctly partitioning residual variance prevents inflation of GxE interaction estimates.

Table 1: Software-Specific Parameters for Variance Component Estimation

| Software | Key Function | Primary Tuning Parameters | Recommended Setting for Low h² (0.1) | Recommended Setting for High h² (0.6) |

|---|---|---|---|---|

| R (sommer) | mmer() | tolParConv, maxIter, na.method.Y | tolParConv=1e-05, maxIter=5000 | tolParConv=1e-06, maxIter=1000 |

| R (rrBLUP) | kin.blup() | K (GRM scaling), geno | Ensure K = A.mat(M) | Ensure K = A.mat(M) |

| Python | Custom / limix | GRM scaling constant, solver tolerance | Add constant (0.05) to G diagonal | Standard scaling typically sufficient |

| ASReml-R | asreml() | maxiter, G.param, R.param | maxiter=50, Complex R.param | maxiter=30, Simple R.param |

Table 2: Comparative Output Metrics Across Software (Simulated Data: n=500, m=10,000)

| Software | Estimated h² (True=0.3) | Time to Convergence (s) | Correlation (GEBV ~ True BV) |

|---|---|---|---|

| sommer v4.3 | 0.29 | 12.4 | 0.72 |

| rrBLUP v4.6.2 | 0.30 | 1.8 | 0.71 |

| limix v3.0.4 | 0.295 | 9.1 | 0.71 |

| ASReml-R v4.2 | 0.29 | 6.5 | 0.72 |

Diagram: GBLUP Software Implementation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for GBLUP Implementation Experiments

| Item | Function/Description | Example/Note |

|---|---|---|

| Genotype Data (SNP Array/WGS) | Raw molecular data to construct GRM. Quality is critical. | Plink (.bed/.bim/.fam) or VCF format. MAF > 0.05 filter recommended. |

| Phenotype Data File | Measured trait values for training population. | CSV file with columns: ID, Trait, Fixed_Effects. |

| Genetic Relationship Matrix (GRM) | The core matrix defining additive genetic covariance between individuals. | Pre-computed using A.mat() (rrBLUP) or G = MM'/p. |

| High-Performance Computing (HPC) Access | REML estimation is computationally intensive for n > 2000. | Cluster with ≥ 32GB RAM and parallel processing capability. |

| R/Python Environment | Software ecosystem with necessary packages installed. | R: sommer, rrBLUP, ASReml-R. Python: numpy, limix, scikit-allel. |

| Variance Component Diagnostic Scripts | Custom code to check model convergence and estimate validity. | Monitor log-likelihood, parameter change, and variance component bounds. |

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: My GBLUP model for a low-heritability biomarker (h² ~0.1) shows near-zero predictive accuracy. Is the model fundamentally unsuitable?

A: Not necessarily. GBLUP can be applied to low-heritability traits, but default parameters often fail. The issue typically lies in the relationship matrix (G-matrix) capturing insufficient signal. First, verify the quality of your genomic data (e.g., SNP call rate, MAF filters). For low h², consider increasing marker density if possible, or switch to a weighted GBLUP (wGBLUP) approach where markers are weighted by their estimated effect sizes from a preliminary analysis to amplify subtle signals.

Q2: How do I decide between a standard G-matrix and a weighted or adjusted one for low h²?

A: The choice depends on genetic architecture. Use this diagnostic table:

| G-Matrix Type | Best For | Key Parameter | Expected Gain for h²~0.1 |

|---|---|---|---|

| Standard VanRaden (G) | Uniform infinitesimal effects | None (default) | Baseline |

| Weighted G (wGBLUP) | Few markers with larger effects | Weighting method (e.g., from GWAS p-values) | Moderate (5-15% accuracy increase) |

| Adjusted G (Gε) | Correcting for sampling error | Scaling parameter (ε) | Stabilizes estimates, reduces overfitting |

| Trait-Specific G (TAG) | Presumed major genes | BLUP-derived weights iteratively | High if architecture is correct |

Protocol: To test, perform 5-fold cross-validation comparing predictive correlation (rMP) across matrix types using the same training/test splits.

Q3: The optimization of the blending parameter (ε) for the G-matrix is computationally intensive. Is there a shortcut?

A: Yes. For low-heritability traits, a pragmatic starting point is to set ε to a value that increases the proportion of the genetic variance explained by the G-matrix itself. A common heuristic is ε = 0.05. Perform a rapid grid search over ε = [0.01, 0.05, 0.10] using a subset of your data (e.g., 30%) and a restricted maximum likelihood (REML) analysis. Monitor the log-likelihood; the peak indicates the optimal ε for stabilizing the matrix inversion.

Q4: How should I partition my dataset for cross-validation when sample size is limited (N<500) and heritability is low?

A: Avoid small test sets (e.g., 10%). Use a repeated k-fold approach (e.g., 5-fold CV repeated 5 times) to reduce variance in accuracy estimates. Ensure families or related individuals are not split across training and test sets to avoid upward bias. The following table summarizes strategies:

| Sample Size (N) | Recommended CV | Min. Test Set Size | Key Consideration |

|---|---|---|---|

| 100-300 | Leave-One-Out (LOO) or 5-Fold (Repeated 10x) | 20-60 | LOO is unbiased but slow; repeated folds give error estimates. |

| 300-500 | 5-Fold (Repeated 5x) | 60-100 | Balance between bias and computation time. |

| >500 | 10-Fold | >50 | Standard approach, sufficient for stable estimates. |

Q5: I'm getting highly inflated genomic estimated breeding values (GEBVs) for the top percentile of individuals. What's the cause and fix?

A: This is often due to overfitting, particularly acute with low h². Solutions are:

- Regularize the G-matrix: Increase the ε blending parameter to add a ridge, shrinking estimates toward the mean.

- Use a bivariate GBLUP model: If you have a correlated, higher-heritability trait (even a proxy), fit it jointly to borrow strength.

- Post-hoc correction: Calibrate GEBVs using the observed heritability and the regression coefficient (b) from validating predictions. If b < 1, shrink GEBVs by multiplying by b.

Key Experimental Protocols

Protocol 1: Tuning the G-Matrix Blending Parameter (ε)

- Inputs: Genotype matrix (M), phenotype vector (y), pedigree (optional).

- Compute G: Calculate the genomic relationship matrix (G) using the VanRaden method 1.

- Blend Matrix: Create Gε = (1-ε) * G + ε * I, where I is the identity matrix.

- Grid Search: Define a sequence for ε (e.g., 0.00, 0.01, 0.02, ..., 0.10).

- Model Fit: For each ε, fit the GBLUP model: y = Xβ + Zg + e, where var(g) = Gε * σ²g. Use REML to estimate σ²g and σ²e.

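- Evaluate: Plot ε against the REML log-likelihood. The optimal ε maximizes this value, indicating the best model fit. Note that when every individual has a single record (Z = I), Gε σ²g + I σ²e = (1-ε) σ²g G + (ε σ²g + σ²e) I, so ε is confounded with the residual variance and the maximized log-likelihood is essentially flat in ε unless a variance estimate reaches a boundary. In that situation the cross-validation comparison is the more informative criterion.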

- Validate: Use cross-validation to confirm that the optimal ε improves predictive accuracy vs. the default (ε=0).

Protocol 2: Implementing Weighted GBLUP (wGBLUP) for Low h²

- Preliminary GWAS: Perform a standard GWAS on the training data to obtain initial SNP effect estimates or p-values.

- Calculate Weights: For each SNP i, calculate weight w_i = 1 / (p-value_i) or w_i = |effect size_i|.

- Construct Weighted G Matrix (Gw): Modify the standard formula: Gw = (M * diag(w) * M') / k, where M is the centered genotype matrix, diag(w) is a diagonal matrix of SNP weights, and k is a scaling constant.

- Model Fitting: Fit the GBLUP model using Gw in place of the standard G.

- Iteration (Optional): Use the resulting GEBVs to re-estimate SNP effects and weights, iterating until convergence (usually 2-3 rounds).
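Steps 1 to 3 of this protocol can be sketched as below. Several choices are assumptions made for the example: single-SNP regression slopes stand in for a full GWAS, weights are the absolute effects rescaled to mean 1, and the scaling constant is taken as k = Σ w_i · 2p_i(1 − p_i), which reduces to the standard VanRaden denominator when all weights equal 1. The function names are hypothetical, and the toy data contain no monomorphic markers.

```python
import numpy as np

def center(M):
    """Center a 0/1/2 genotype matrix by observed allele frequencies."""
    M = np.asarray(M, dtype=float)
    p = M.mean(axis=0) / 2.0
    return M - 2.0 * p, p

def marginal_effects(W, y):
    """Single-SNP regression slopes (assumes no monomorphic markers)."""
    yc = y - y.mean()
    return (W.T @ yc) / np.sum(W ** 2, axis=0)

def weighted_grm(W, p, w):
    """Gw = W diag(w) W' / k with k = sum_i w_i * 2 p_i (1 - p_i) (assumed scaling)."""
    k = np.sum(w * 2.0 * p * (1.0 - p))
    return (W * w) @ W.T / k

# Simulated toy data: 10 of 200 markers carry effects (illustration only)
rng = np.random.default_rng(7)
n, m = 100, 200
freq = rng.uniform(0.1, 0.9, m)
M = rng.binomial(2, freq, size=(n, m)).astype(float)
W, p = center(M)
beta = np.zeros(m)
beta[:10] = rng.normal(size=10)
g = W @ beta
y = np.sqrt(0.3) * g / g.std() + rng.normal(scale=np.sqrt(0.7), size=n)

train = np.arange(80)                                  # weights use training rows only
effects = marginal_effects(W[train], y[train])
w = np.abs(effects) / np.abs(effects).mean()           # weights with mean 1
Gw = weighted_grm(W, p, w)
print("mean diagonal of Gw:", round(float(np.diag(Gw).mean()), 3))
```

Estimating the weights from training individuals only avoids leaking validation phenotypes into the relationship matrix, which would otherwise inflate cross-validated accuracy.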

Visualizations

GBLUP Tuning Workflow for Low Heritability Traits

Logical Framework for Addressing Low Heritability in GBLUP

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in GBLUP Tuning for Low h² |
|---|---|
| High-Density SNP Chip or Whole-Genome Sequencing Data | Provides the genotype matrix (M). Higher density is crucial for capturing subtle polygenic signals in low-h² traits. |
| Quality Control (QC) Pipeline Software (e.g., PLINK, GCTA) | Filters markers based on Minor Allele Frequency (MAF), call rate, and Hardy-Weinberg Equilibrium to ensure a clean G-matrix. |
| GBLUP Fitting Software (e.g., GCTA, BLUPF90, ASReml) | Performs the core REML analysis to estimate variance components and predict GEBVs using the specified G-matrix. |
| Custom Scripting (R, Python) | Essential for automating parameter grids (ε, λ), calculating weighted G-matrices, and running cross-validation loops. |
| K-Fold Cross-Validation Scheduler | Manages data partitioning and model validation to obtain unbiased estimates of prediction accuracy. |
| High-Performance Computing (HPC) Cluster Access | REML and cross-validation are computationally intensive; parallel processing on an HPC drastically reduces runtime. |
| Phenotype Data Standardization Tools | Corrects for major covariates (age, sex, batch effects) to reduce residual error and improve h² estimation. |

Solving Common Pitfalls: Optimization Strategies for Problematic Heritability Ranges

Technical Support Center: GBLUP Parameter Tuning

Troubleshooting Guide: Common Issues with GBLUP for Low-Heritability Traits

Issue 1: Model Overfitting Despite Cross-Validation

- Symptoms: High prediction accuracy within the training set, but a severe drop (>50% relative decrease) in independent validation sets or cross-validation folds. The model captures noise instead of true genetic signal.

- Root Cause: The ratio of the number of markers (p) to the number of phenotyped individuals (n) is too high. The genomic relationship matrix (G) becomes overly parameterized for the limited phenotypic information.

- Immediate Actions:

- Increase the regularization parameter (λ = σe²/σg²). Manually set a higher λ to shrink marker effects more aggressively.

- Apply marker pre-selection or weighting using prior biological knowledge (e.g., QTL regions, functional annotations) to reduce the effective p.

- Consider Bayesian variable-selection methods (BayesB, BayesC), which shrink non-informative markers more strongly. Note that RR-BLUP is statistically equivalent to GBLUP, so switching to it will not by itself reduce overfitting.

Issue 2: Persistently Low Prediction Accuracy (r < 0.2)

- Symptoms: Accuracy remains stubbornly low across all tuning attempts, with high standard errors.

- Root Cause: The trait's low heritability (h² < 0.2) means a large proportion of phenotypic variance is non-additive or environmental, which GBLUP cannot capture with standard settings.

- Immediate Actions:

- Optimize the Relationship Matrix: Incorporate a pedigree matrix (A) alongside G in a single-step GBLUP (ssGBLUP) model to leverage family information.

- Integrate Non-Genetic Data: Explicitly model fixed effects (e.g., batch, year, location) and use high-dimensional environmental covariates in the model.

- Expand Training Population Size (N): For low-h² traits, the required N for moderate accuracy increases dramatically. Aim for N > 5000 if feasible.

Issue 3: High Computational Burden with Large Marker Sets

- Symptoms: Model fitting takes prohibitively long or runs out of memory.

- Root Cause: Inversion of the dense genomic relationship matrix (G) or the mixed model equations has O(n³) complexity.

- Immediate Actions:

- Use algorithm optimizations like the Algorithm for Proven and Young (APY) for ssGBLUP, which uses a core subset of animals to approximate G⁻¹.

- Switch to a SNP-based model (e.g., SNP-BLUP) and solve via iterative methods (e.g., preconditioned conjugate gradient).

- Employ quality-controlled LD-pruning to reduce the marker set to a largely independent subset.

Frequently Asked Questions (FAQs)

Q1: What is the recommended starting value for the regularization parameter (λ) when heritability is suspected to be low (h² ~0.1)? A: Begin with a λ value derived from a conservative heritability estimate. For GBLUP, λ = (1 - h²)/h². For h²=0.1, start with λ = 9. You should then perform a grid search around this value (e.g., λ = 5, 9, 15, 20) using cross-validation to find the optimum that minimizes prediction error.
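The starting value follows directly from the formula in the answer. A small sketch, with hypothetical helper names:

```python
def lambda_from_h2(h2):
    """Regularization parameter lambda = (1 - h2) / h2 = sigma_e^2 / sigma_g^2."""
    if not 0.0 < h2 < 1.0:
        raise ValueError("h2 must lie strictly between 0 and 1")
    return (1.0 - h2) / h2

def h2_from_lambda(lam):
    """Inverse mapping: h2 = 1 / (1 + lambda)."""
    return 1.0 / (1.0 + lam)

start = lambda_from_h2(0.10)   # approximately 9
grid = [5, 9, 15, 20]          # search grid around the starting value, as suggested above
```

The same mapping gives λ ≈ 5.67 for h² = 0.15 and λ = 1 for h² = 0.5, which is why small errors in a low h² estimate translate into large changes in λ.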

Q2: How do I decide between GBLUP and Bayesian methods for a low-heritability trait? A: The choice hinges on the genetic architecture assumption. Use this decision guide:

- GBLUP/RR-BLUP: Assumes an infinitesimal model (all marker effects are drawn from the same normal distribution, so every marker receives the same degree of shrinkage). Best when the trait is highly polygenic with many small-effect QTLs. It is computationally efficient and less prone to overfitting with proper λ tuning.

- Bayesian Methods (BayesB/C): Assume a non-infinitesimal model (many markers have zero effect). They can be more powerful if the trait is driven by a few moderate-effect variants amidst many zeros, but they require more data and are prone to overfitting in low-h², high-p scenarios without strong priors.

Q3: My dataset has significant population structure. How can I adjust the GBLUP model to prevent spurious predictions? A: Population stratification should be accounted for through fixed-effect covariates.

- Perform a PCA on the genotype matrix.

- Include the top K principal components (PCs) that explain significant population structure as covariates in the fixed effects part of your mixed model.

- The model becomes: y = Xβ + Zg + e, where X now includes the PCs. Failure to do this will inflate accuracy estimates within the training population but destroy external validity.
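The PCA step can be sketched with a singular value decomposition of the centered genotype matrix. This is an illustration only: the function name `top_pcs`, the toy genotypes, and the choice K = 3 are assumptions, and in practice K is chosen from a scree plot or a formal test.

```python
import numpy as np

def top_pcs(M, k):
    """Scores of the first k principal components of the column-centered genotype matrix."""
    M = np.asarray(M, dtype=float)
    Z = M - M.mean(axis=0)
    U, S, _ = np.linalg.svd(Z, full_matrices=False)
    return U[:, :k] * S[:k]

rng = np.random.default_rng(2)
M = rng.integers(0, 3, size=(50, 300))            # toy genotypes, 50 individuals
pcs = top_pcs(M, k=3)

# Fixed-effects design matrix: intercept plus the top K PCs.
X = np.column_stack([np.ones(M.shape[0]), pcs])
```

Any other fixed effects (batch, sex, year) would be appended to X as additional columns before fitting y = Xβ + Zg + e.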

Q4: What is the minimal effective training population size for low-heritability traits? A: There is no universal minimum, but the relationship between accuracy (r), heritability (h²), and training size (N) is approximated by r ≈ √(N * h² / (N * h² + Me)), where Me is the effective number of independent chromosome segments, which depends on effective population size and genome length. To achieve r > 0.3 for h²=0.1, you likely need N > 1000. For r > 0.5, N may need to exceed 5000. Always conduct a scaling analysis if possible.
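A simple scaling analysis can be sketched from the accuracy approximation. The commonly cited form of this approximation has the effective number of independent chromosome segments, Me, in the denominator; the value Me = 1000 used below is purely illustrative and is not taken from this guide. Function names are hypothetical.

```python
import math

def expected_accuracy(n, h2, me):
    """r ~ sqrt(N h2 / (N h2 + Me))."""
    return math.sqrt(n * h2 / (n * h2 + me))

def required_n(r, h2, me):
    """Training size needed for a target accuracy, from inverting the formula above."""
    return r ** 2 * me / (h2 * (1.0 - r ** 2))

ME = 1000  # assumed effective number of independent segments (illustrative)

r_at_1000 = expected_accuracy(1000, 0.10, ME)   # about 0.30
n_for_r05 = required_n(0.5, 0.10, ME)           # about 3333
```

Under this assumed Me, h² = 0.1 and N = 1000 give r ≈ 0.30, and reaching r = 0.5 requires roughly 3,300 individuals. Populations with larger Me (for example, more diverse or outbred ones) need proportionally more.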

Table 1: Impact of Heritability and Training Population Size on Prediction Accuracy (Simulation Data)

| Heritability (h²) | Training Population (N) | Mean Accuracy (r) ± SE | Optimal λ Range |
|---|---|---|---|
| 0.10 | 1000 | 0.22 ± 0.05 | 8 - 12 |
| 0.10 | 3000 | 0.35 ± 0.03 | 8 - 12 |
| 0.10 | 5000 | 0.41 ± 0.02 | 9 - 11 |
| 0.25 | 1000 | 0.43 ± 0.04 | 2.5 - 4.0 |
| 0.25 | 3000 | 0.58 ± 0.02 | 2.8 - 3.5 |
| 0.50 | 1000 | 0.62 ± 0.03 | 0.9 - 1.2 |

Table 2: Comparison of Model Performance for a Low-Heritability Trait (h²=0.15)

| Model Type | Key Parameter/Setting | Within-CV Accuracy | Independent Test Accuracy | Computation Time (Relative) |
|---|---|---|---|---|
| Standard GBLUP | λ = 5.67 | 0.40 | 0.18 | 1.0x (Baseline) |
| Tuned GBLUP | λ = 12.5 | 0.30 | 0.25 | 1.0x |
| ssGBLUP | λ = 10, w=0.8* | 0.33 | 0.28 | 1.5x |
| Bayesian Ridge | - | 0.38 | 0.20 | 3.0x |
| BayesB (π=0.95) | - | 0.45 | 0.15 | 5.0x |

Note: w is the weight on the genomic relationship matrix in the combined H matrix for ssGBLUP.

Experimental Protocols

Protocol 1: Cross-Validation Framework for Parameter Tuning

Objective: To reliably estimate the optimal regularization parameter (λ) and prevent overfitting.

- Data Partitioning: Randomly divide the phenotyped and genotyped dataset into K folds (typically K=5 or 10). Maintain consistent family distributions across folds if structure exists.

- Iterative Training/Validation: For each fold k:

  - Designate fold k as the validation set. The remaining K-1 folds form the training set.

  - For each candidate λ value in the search grid (e.g., 1, 5, 10, 15, 20):

    - Fit the GBLUP model on the training set using the candidate λ.

    - Predict breeding values for individuals in validation set k.

    - Calculate the correlation (r) or mean squared error (MSE) between predicted and observed values in the validation set.

- Aggregate Results: Average the performance metric (r or MSE) for each λ value across all K folds.

- Parameter Selection: Choose the λ value that maximizes the average prediction accuracy (r) or minimizes the average MSE. This λ is then used to fit the final model on the entire dataset.
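The protocol above can be sketched in NumPy as follows. This is a simplified illustration rather than a production implementation: the intercept is estimated by the training mean instead of by generalized least squares, folds are assigned at random without regard to family structure, and the data are simulated. All function and variable names are hypothetical.

```python
import numpy as np

def gblup_predict(G, y, train, test, lam):
    """Predict validation individuals from training data at a fixed lambda."""
    mu = y[train].mean()  # simplification: intercept taken as the training mean
    G_tt = G[np.ix_(train, train)]
    G_vt = G[np.ix_(test, train)]
    alpha = np.linalg.solve(G_tt + lam * np.eye(len(train)), y[train] - mu)
    return mu + G_vt @ alpha

def cv_select_lambda(G, y, lambdas, k=5, seed=0):
    """Return (best_lambda, mean_r_per_lambda) from K-fold cross-validation."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    r = np.zeros((k, len(lambdas)))
    for i, test in enumerate(folds):                      # each fold is the validation set once
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        for j, lam in enumerate(lambdas):                 # each candidate lambda
            pred = gblup_predict(G, y, train, test, lam)
            r[i, j] = np.corrcoef(pred, y[test])[0, 1]
    mean_r = r.mean(axis=0)                               # aggregate across folds
    return lambdas[int(np.argmax(mean_r))], mean_r

# Toy simulated data (illustration only)
rng = np.random.default_rng(1)
n, p, h2 = 150, 400, 0.3
M = rng.binomial(2, 0.3, size=(n, p)).astype(float)
freq = M.mean(axis=0) / 2.0
Z = M - 2.0 * freq
G = Z @ Z.T / (2.0 * np.sum(freq * (1.0 - freq)))
g = Z @ rng.normal(size=p)
g = (g - g.mean()) / g.std() * np.sqrt(h2)
y = g + rng.normal(scale=np.sqrt(1.0 - h2), size=n)

grid = [1, 5, 10, 15, 20]
best_lam, mean_r = cv_select_lambda(G, y, grid)
```

To select on MSE instead of correlation, the metric line would be replaced by the mean squared difference between `pred` and `y[test]`, and `argmax` by `argmin`.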

Protocol 2: Implementing Single-Step GBLUP (ssGBLUP)

Objective: To combine pedigree (A) and genomic (G) relationship matrices for improved accuracy, especially when not all individuals are genotyped.

- Data Requirements: A pedigree file for all individuals and a genotype file (e.g., SNP array data) for a subset.

- Matrix Construction:

- Build the numerator relationship matrix (A) from the pedigree.

- Build the genomic relationship matrix (G) from genotypes, scaling it to be compatible with A (e.g., using the method by VanRaden, scaled to the same base population).

- Construct the H Matrix: The inverse of the combined relationship matrix H⁻¹ is built as: H⁻¹ = A⁻¹ + [ 0 0; 0 (G⁻¹ - A₂₂⁻¹) ], where A₂₂ is the sub-matrix of A for genotyped individuals.

- Model Fitting: Fit the mixed model y = Xβ + Za + e, where a ~ N(0, Hσ_g²). The vector a contains breeding values for all animals, with relationships defined by H.

- Validation: Use the cross-validation protocol (Protocol 1), ensuring that the construction of G and H is re-calculated within each training fold to avoid information leakage.
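The H⁻¹ construction can be sketched for a tiny pedigree as below. The example uses dense inverses for clarity, which is only practical for small data; the function name, the four-animal pedigree (two unrelated founders and their two full-sib offspring), and the G values are hypothetical.

```python
import numpy as np

def h_inverse(A, G, genotyped):
    """H^-1 = A^-1 + [[0, 0], [0, G^-1 - A22^-1]], with the block placed at genotyped animals."""
    idx = np.ix_(genotyped, genotyped)
    H_inv = np.linalg.inv(A)
    H_inv[idx] += np.linalg.inv(G) - np.linalg.inv(A[idx])
    return H_inv

# Pedigree relationships: animals 0 and 1 are founders, 2 and 3 are their full-sib offspring.
A = np.array([[1.0, 0.0, 0.5, 0.5],
              [0.0, 1.0, 0.5, 0.5],
              [0.5, 0.5, 1.0, 0.5],
              [0.5, 0.5, 0.5, 1.0]])
genotyped = [2, 3]

# Hypothetical genomic relationships for the two genotyped animals, already scaled to A.
G = np.array([[1.02, 0.45],
              [0.45, 0.98]])

H_inv = h_inverse(A, G, genotyped)
H = np.linalg.inv(H_inv)
```

A useful sanity check is that the genotyped block of H equals G, and that H⁻¹ collapses to A⁻¹ when G is identical to A₂₂.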

Visualizations

Title: GBLUP Parameter Tuning via Cross-Validation Workflow

Title: Single-Step GBLUP Matrix Construction Process

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for GBLUP Experiments

| Item Name/Type | Function & Purpose in Experiment | Key Consideration for Low-h² Traits |
|---|---|---|
| High-Density SNP Array or WGS Data | Provides genotype matrix (M) for constructing the Genomic Relationship Matrix (G). | Coverage and quality are critical. Use stringent QC (MAF, call rate, HWE) to reduce noise. |
| Phenotype Database (Trait & Covariates) | Contains the response variable (y) and fixed effects (e.g., environment, batch). | Must be large-scale (N > 1000). Precise measurement and correction for non-genetic factors is paramount. |
| Pedigree Records | Enables construction of the numerator relationship matrix (A) for ssGBLUP. | Completeness and accuracy improve the blending of genomic and pedigree information. |
| High-Performance Computing (HPC) Cluster | Essential for matrix operations (inversion) and cross-validation with large N and p. | Requires optimized BLAS libraries and parallel processing capabilities for Bayesian methods. |
| GWAS/QTL Summary Statistics | Can be used for weighting SNPs or pre-selecting markers in a weighted GBLUP model. | Prior biological knowledge helps guide the model when phenotypic signal is weak. |
| Mixed Model Solver Software (e.g., BLUPF90, ASReml, BGLR) | Software suite to implement GBLUP, ssGBLUP, and Bayesian models. | Choose software that supports custom variance component ratios (λ) and large-scale data. |

Troubleshooting Guides and FAQs

FAQ 1: Why does my GBLUP model produce consistently overestimated GEBVs for low-heritability traits?

Answer: This bias often stems from improper variance component estimation. In low-heritability scenarios (h² < 0.2), the residual variance dominates, causing the REML algorithm to struggle with partitioning variance correctly. This leads to an upward bias in the estimated additive genetic variance. To mitigate this, ensure your relationship matrix (G-matrix) is built with high-quality, imputed genotypes and consider using a Bayesian approach (e.g., BayesA) for initial variance component estimation to inform your GBLUP starting values.

FAQ 2: My REML estimation fails to converge within the default iteration limit. What are the primary causes?

Answer: Convergence failure in REML for GBLUP is commonly caused by:

- Ill-conditioned G-matrix: Near-singularity due to high collinearity among individuals (e.g., from clones or repeated genotypes).

- Extreme Heritability Boundaries: The algorithm oscillates when true h² is near 0 or 1.

- Insufficient Sample Size: The ratio of individuals to markers is too low, leading to unstable solutions.

Protocol to Resolve:

- Check the condition number of your G-matrix (kappa(G)). If > 10^6, apply a bending procedure (add a small value, e.g., 0.01, to the diagonal).

- Re-scale the G-matrix to be comparable to the pedigree-based A-matrix.

- Increase the maximum number of REML iterations (e.g., to 200).

- Use a more robust optimization algorithm (e.g., AI-REML instead of EM-REML).
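The condition-number check and bending step can be sketched as follows, using the threshold (10^6) and diagonal increment (0.01) from the protocol. The function name and the toy matrix, in which the first two individuals are clones, are hypothetical.

```python
import numpy as np

def bend_if_ill_conditioned(G, threshold=1e6, delta=0.01):
    """Return (G or bent G, original condition number)."""
    cond = np.linalg.cond(G)
    if cond > threshold:
        G = G + delta * np.eye(G.shape[0])   # add a small value to the diagonal
    return G, cond

# Individuals 0 and 1 have identical genotypes, so G is singular.
G = np.array([[1.0, 1.0, 0.2],
              [1.0, 1.0, 0.2],
              [0.2, 0.2, 1.0]])

G_bent, cond_before = bend_if_ill_conditioned(G)
cond_after = np.linalg.cond(G_bent)
```

Bending changes the variance structure slightly, so it is worth confirming that variance component estimates are not sensitive to the chosen increment. Where the ill-conditioning comes from duplicated genotypes, removing the duplicates is usually the cleaner fix.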

FAQ 3: How do I diagnose and correct for inflation/deflation of GEBVs (Genomic Estimated Breeding Values)?

Answer: Inflation (GEBVs more dispersed than the data support, regression slope b < 1) typically indicates overestimated additive variance, i.e., too little shrinkage. Deflation (b > 1) indicates the opposite. Diagnose using the regression of observed phenotypes on GEBVs (slope should be ~1).

Corrective Protocol:

- Calculate the regression coefficient (b) of phenotypes on GEBVs in a validation set.

- If