Mastering Large-Scale Genetic Prediction: A Comprehensive Guide to GBLUP Methodology for Big Data in Biomedical Research

This article provides a comprehensive examination of the Genomic Best Linear Unbiased Prediction (GBLUP) methodology in the context of large reference populations, tailored for researchers and drug development professionals.


Abstract

This article provides a comprehensive examination of the Genomic Best Linear Unbiased Prediction (GBLUP) methodology in the context of large reference populations, tailored for researchers and drug development professionals. It begins by establishing the foundational concepts of GBLUP and its superiority for polygenic trait prediction. The core methodological section details the computational steps, matrix operations, and software implementations required to handle vast genomic datasets. We address critical challenges such as computational bottlenecks, data quality issues, and model optimization strategies. Finally, the article validates GBLUP against alternative methods (like ssGBLUP and Bayesian approaches) and explores its applications in complex disease risk prediction and pharmacogenomics. This guide serves as a strategic resource for leveraging large-scale genomic data to accelerate precision medicine.

GBLUP Unpacked: Core Principles and Why It Dominates Large-Scale Genomic Prediction

This application note is framed within a broader thesis investigating the optimization of Genomic Best Linear Unbiased Prediction (GBLUP) methodology for large reference populations in quantitative genetics and complex trait prediction. The evolution from the traditional BLUP, reliant on pedigree-based relationship matrices (A), to GBLUP, which utilizes genomic relationship matrices (G), represents a paradigm shift in predictive accuracy for breeding values and polygenic risk scores, particularly as reference population sizes (N) scale into the thousands.

Core Methodological Evolution: BLUP to GBLUP

Foundational Models

Two linear mixed models underpin this evolution. They differ only in the covariance structure assumed for the random genetic effects.

Traditional BLUP (Pedigree-Based):

y = Xb + Zu + e

Where:

- y = vector of phenotypes

- b = vector of fixed effects, with design matrix X

- u = vector of random additive genetic effects ~ N(0, Aσ²_a), with incidence matrix Z

- A = Numerator Relationship Matrix derived from pedigree

- e = vector of residuals

Genomic BLUP (Marker-Based):

y = Xb + Zg + e

Where:

- g = vector of random genomic breeding values ~ N(0, Gσ²_g)

- G = Genomic Relationship Matrix, estimated from genome-wide marker data

Quantitative Comparison of Predictive Accuracy

The following table summarizes key metrics documenting the performance gain with GBLUP over BLUP, particularly in large-N scenarios, as supported by recent literature.

Table 1: Comparative Performance of BLUP vs. GBLUP in Various Species/Traits

| Species/Trait | Reference Population Size (N) | BLUP Accuracy (r) | GBLUP Accuracy (r) | Gain (%) | Key Note | Source (Type) |

|---|---|---|---|---|---|---|

| Dairy Cattle (Milk Yield) | ~10,000 | 0.35 - 0.40 | 0.65 - 0.75 | ~80 | Early adoption in genomics-enabled selection. | Simulation & Field Data |

| Humans (Height PRS) | >100,000 | 0.20* | 0.40 - 0.45* | ~100 | *Correlation with held-out phenotype; demonstrates scalability. | Large Cohort Study |

| Maize (Grain Yield) | 1,500 - 2,000 | 0.30 | 0.50 - 0.60 | ~67 | Effective with moderate N, capturing dominance. | Experimental Cross Data |

| Swine (Feed Efficiency) | 3,500 | 0.45 | 0.60 | 33 | GBLUP better controls pedigree errors. | Industry Breeding Program |

| Wheat (Rust Resistance) | 800 | 0.55 | 0.70 | 27 | Even for traits with major genes, GBLUP improves accuracy. | Panel Study |

Experimental Protocols for GBLUP Implementation

Protocol 3.1: Constructing the Genomic Relationship Matrix (G)

This is the fundamental step differentiating GBLUP from BLUP.

Objective: To compute the G matrix from dense SNP genotype data.

Reagents/Materials: Genotype data (e.g., SNP array or imputed sequence data) in PLINK (.bed/.bim/.fam) or VCF format.

Software: R (rrBLUP, AGHmatrix), Python (scikit-allel), or standalone tools (GCTA).

Procedure:

- Data QC: Filter SNPs on minor allele frequency (MAF > 0.01) and call rate (> 95%), and filter individuals on genotype call rate (> 90%). Remove mismatched or duplicate samples.

- Code Genotypes: Convert genotypes (AA, AB, BB) to a numerical matrix M (n x m), where n = individuals, m = markers. Common coding: AA=0, AB=1, BB=2.

- Allele Frequency Calculation: Calculate the frequency pᵢ of the counted allele (B under the coding above) for each SNP i.

- Center the Matrix: Create a centered matrix Z where each element Zⱼᵢ = Mⱼᵢ - 2pᵢ.

- Calculate G Matrix: Use the VanRaden (2008) Method 1:

G = ( Z Zᵀ ) / [ 2 * Σᵢ ( pᵢ (1 - pᵢ) ) ]

Where Zᵀ is the transpose of Z. This yields an n x n symmetric matrix.

- Matrix Adjustment: (Optional) Blend G with a small portion of the identity matrix (e.g., G* = 0.99G + 0.01I) to ensure positive definiteness and invertibility.
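The coding, centering, scaling, and optional blending steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not a replacement for GCTA or rrBLUP: the function name `vanraden_g`, the `blend` argument, and the simulated genotypes are assumptions made for the example, and allele frequencies are estimated from the sample itself.

```python
import numpy as np

def vanraden_g(M, blend=0.0):
    """VanRaden (2008) Method 1 G matrix from an n x m genotype matrix coded 0/1/2."""
    M = np.asarray(M, dtype=float)
    p = M.mean(axis=0) / 2.0                 # frequency of the counted allele at each SNP
    Z = M - 2.0 * p                          # centre each SNP column by 2 * p_i
    denom = 2.0 * np.sum(p * (1.0 - p))      # 2 * sum_i p_i (1 - p_i)
    G = (Z @ Z.T) / denom
    if blend > 0.0:                          # optional blending: (1 - w) * G + w * I
        G = (1.0 - blend) * G + blend * np.eye(G.shape[0])
    return G

# Simulated genotypes (illustrative only): 50 individuals, 500 SNPs
rng = np.random.default_rng(0)
freqs = rng.uniform(0.1, 0.9, size=500)
M = rng.binomial(2, freqs, size=(50, 500))
G = vanraden_g(M, blend=0.01)
print(G.shape, float(np.mean(np.diag(G))))
```

With genotypes close to Hardy-Weinberg proportions the mean diagonal should sit near 1, and the blended matrix has strictly positive eigenvalues, which is what makes the later inversion safe.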

Protocol 3.2: Cross-Validation for Predictive Accuracy Assessment

Objective: To empirically estimate the prediction accuracy of a GBLUP model.

Procedure:

- Data Partitioning: Randomly split the phenotyped and genotyped reference population (N total) into k folds (typically k=5 or 10). For each iteration i:

- Training Set: k-1 folds (e.g., ~80% of N for k=5).

- Validation Set: The remaining 1 fold (e.g., ~20% of N).

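- Model Training: Compute G once from the genotypes of all individuals (training and validation), mask the phenotypes of the validation fold, and fit the GBLUP mixed model using only the training set phenotypes. Genotypes carry no phenotype information, so keeping validation individuals in G does not leak the response, and the training-validation block of G is what allows their GEBVs to be predicted.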

- Prediction: Use the estimated model effects to predict the genomic estimated breeding values (GEBVs) for the individuals in the validation set.

- Accuracy Calculation: For iteration i, calculate the correlation (rᵢ) between the predicted GEBVs and the observed phenotypes (or corrected phenotypes) in the validation set.

- Iteration & Aggregation: Repeat steps 2-4 for all k folds. The overall cross-validation accuracy is the mean of all rᵢ values.
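The cross-validation loop above can be sketched as follows, assuming G has been computed for all genotyped individuals and that a heritability value is supplied rather than estimated by REML in each fold. The helper names (`gblup_predict`, `kfold_accuracy`), the use of the training mean as a stand-in for the generalized least squares intercept, and the simulated data are all assumptions for illustration.

```python
import numpy as np

def gblup_predict(G, y, train, test, h2):
    """GEBVs for `test` individuals using only `train` phenotypes (intercept-only model)."""
    lam = (1.0 - h2) / h2                              # sigma2_e / sigma2_g
    mu = y[train].mean()                               # simple stand-in for the GLS mean
    V = G[np.ix_(train, train)] + lam * np.eye(len(train))
    return G[np.ix_(test, train)] @ np.linalg.solve(V, y[train] - mu)

def kfold_accuracy(G, y, k=5, h2=0.5, seed=0):
    """Mean and per-fold correlation between predicted GEBVs and held-out phenotypes."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), k)
    r = []
    for i in range(k):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        gebv = gblup_predict(G, y, train, test, h2)
        r.append(float(np.corrcoef(gebv, y[test])[0, 1]))
    return float(np.mean(r)), r

# Simulated data (illustrative only): 200 individuals, 100 SNPs, all with small effects
rng = np.random.default_rng(1)
n, m = 200, 100
M = rng.binomial(2, rng.uniform(0.1, 0.9, m), size=(n, m)).astype(float)
p = M.mean(axis=0) / 2.0
Z = M - 2.0 * p
G = Z @ Z.T / (2.0 * np.sum(p * (1.0 - p)))
g_true = Z @ rng.normal(0.0, 1.0, m)
g_true /= g_true.std()
y = g_true + rng.normal(0.0, 1.0, n)                   # heritability close to 0.5
mean_r, fold_r = kfold_accuracy(G, y, k=5, h2=0.5)
print(round(mean_r, 3))
```

In a real analysis the variance ratio would normally be re-estimated within each training fold, so that no information from the validation phenotypes enters the model.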

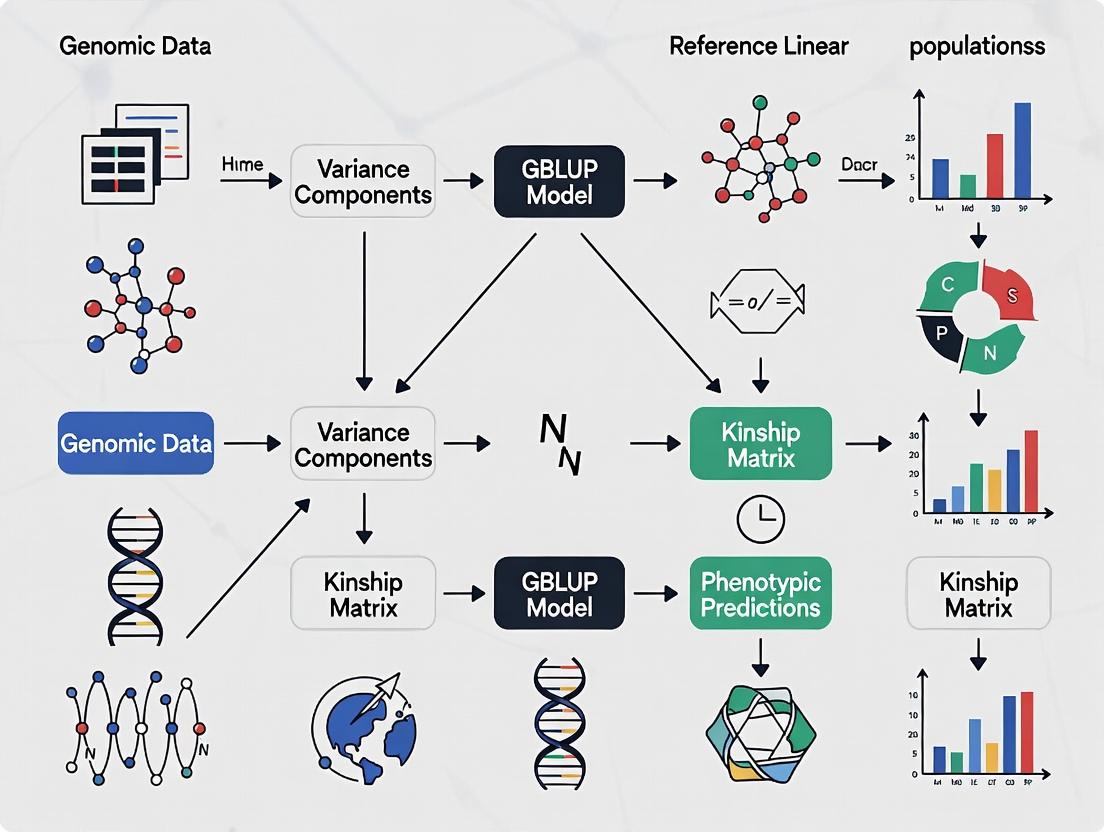

Visualizing the Methodological Workflow

Diagram 1: BLUP to GBLUP Methodological Evolution

Diagram 2: k-Fold Cross-Validation Protocol for GBLUP

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Software for GBLUP Implementation

| Item Name | Category | Function/Brief Explanation |

|---|---|---|

| High-Density SNP Array | Genotyping Reagent | Provides genome-wide marker data (e.g., 50K-800K SNPs) for constructing the G matrix. Cost-effective for large-N studies. |

| Whole Genome Sequence (WGS) Data | Genotyping Reagent | Gold-standard for marker discovery. Used for imputation to increase marker density and improve G matrix resolution. |

| PLINK 2.0 | Bioinformatics Software | Performs essential genotype data Quality Control (QC), filtering, format conversion, and basic population genetics analyses. |

| GCTA (GREML Tool) | Statistical Genetics Software | Specialized software for efficiently constructing G matrices and solving large-scale GBLUP/REML models. |

| R rrBLUP Package | Statistical Software | Comprehensive R package for performing genomic prediction, including GBLUP, ridge regression, and cross-validation. |

| BLUPF90 Family | Statistical Software | Suite of programs (e.g., PREGSF90, POSTGSF90) optimized for industry-scale genomic prediction with iterative solvers. |

| GRM Blending Script | Computational Tool | Custom script to blend G with A or I to stabilize matrix inversion, crucial for large, complex pedigrees. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Essential for computationally intensive steps (matrix construction, inversion, model solving) when N > 10,000. |

Within the context of a Genomic Best Linear Unbiased Prediction (GBLUP) methodology framework for large reference populations, the Genomic Relationship Matrix (G-Matrix) serves as the central genetic kernel. It quantifies the realized genomic similarity between individuals based on marker data, replacing the expected pedigree-based relationships with observed genomic coefficients, thereby increasing the accuracy of genomic estimated breeding values (GEBVs).

Core Concepts & Quantitative Data

The G-matrix (G) is typically calculated from a centered matrix of marker genotypes. Two prevalent methods for its construction are presented below, with their formulas and applications summarized.

Table 1: Common G-Matrix Formulations for GBLUP

| Method | Formula | Key Parameter | Primary Use Case |
|---|---|---|---|
| VanRaden (Method 1) | \( G = \frac{ZZ'}{2\sum_i p_i(1-p_i)} \) | \( p_i \): allele frequency at SNP i; \( Z \): M (0,1,2) matrix centered by \( 2p_i \) | Standardized relationship coefficients. Assumes markers explain all genetic variance. |
| Trace-normalized G | \( G = \frac{ZZ'}{\mathrm{tr}(ZZ')/n} \) | \( Z \): M (0,1,2) matrix centered by \( 2p_i \); \( n \): number of individuals | Mitigates downward bias in genomic heritability by using a different scaling constant. |
| Endelman & Jannink | \( G = \frac{ZZ'}{\lambda} \) | \( \lambda = \sum_i 2p_i(1-p_i) \) | Equivalent to Method 1, explicit normalization. |

Typical dimensions: for a reference population of n=10,000 individuals and m=50,000 SNPs, G is a 10,000 x 10,000 symmetric, positive (semi)-definite matrix.

Table 2: Impact of G-Matrix on GEBV Accuracy (Simulated Data)

| Trait Heritability (h²) | Pedigree BLUP Accuracy | GBLUP (G-Matrix) Accuracy | Relative Gain |

|---|---|---|---|

| 0.3 | 0.42 | 0.67 | +59.5% |

| 0.5 | 0.55 | 0.78 | +41.8% |

| 0.7 | 0.66 | 0.85 | +28.8% |

Accuracy is the correlation between true breeding value and estimated breeding value in validation populations.

Application Notes & Protocols

Protocol 1: Construction of the G-Matrix for Large-Scale GBLUP

Objective: To compute a robust, scalable G-matrix from high-density SNP array data for a reference population of >5,000 individuals.

Materials (Research Reagent Solutions):

- Genotype Data: High-density SNP array (e.g., Illumina BovineHD, 777K SNPs) in PLINK (.bed/.bim/.fam) or VCF format.

- Software: PLINK v2.0, GEMMA v0.98, GCTA v1.94, or custom R/Python scripts with linear algebra libraries (e.g., BLAS/LAPACK).

- Computing Resources: High-performance computing (HPC) cluster with sufficient memory (~80 GB for n=10,000).

Procedure:

- Quality Control (QC): Use PLINK to filter raw genotypes.

- `--mind 0.05`: Exclude individuals with >5% missing calls.

- `--geno 0.05`: Exclude SNPs with >5% missing rate.

- `--maf 0.01`: Exclude SNPs with minor allele frequency <1%.

- `--hwe 1e-6`: Exclude SNPs violating Hardy-Weinberg equilibrium (p < 1e-6).

- Centering & Scaling:

- Calculate allele frequency (p_i) for each retained SNP.

- Construct the n x m genotype matrix M, coded as 0, 1, 2 for homozygous reference, heterozygous, and homozygous alternate genotypes.

- Compute the centered matrix Z = M - P, where column i of P is constant and equal to 2p_i.

- Compute the normalization constant: \( \lambda = \sum_{i=1}^{m} 2p_i(1-p_i) \).

- Matrix Multiplication:

- Compute G = ZZ' / λ. For large n, perform in blocks or use distributed computing frameworks (e.g., Spark).

- Validation:

- Ensure G is symmetric. Check that mean diagonal elements are ~1.0.

- Visualize a random sub-sample via heatmap to confirm structure.
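For large marker panels, the product ZZ' can be accumulated over blocks of SNP columns so that only one centered block is held in memory at a time, and the validation checks above can be automated. The sketch below is one way to do this in NumPy; the function names, the block size, the tolerance on the mean diagonal, and the simulated `int8` genotype matrix are assumptions for the example, not part of any named tool.

```python
import numpy as np

def grm_blockwise(M, block_size=1000):
    """Accumulate G = ZZ' / lambda over blocks of SNP columns to bound peak memory."""
    n, m = M.shape
    G = np.zeros((n, n))
    lam = 0.0
    for start in range(0, m, block_size):
        Mb = np.asarray(M[:, start:start + block_size], dtype=float)
        p = Mb.mean(axis=0) / 2.0                   # allele frequencies in this block
        Zb = Mb - 2.0 * p                           # centered block
        G += Zb @ Zb.T                              # running sum of Z_b Z_b'
        lam += float(np.sum(2.0 * p * (1.0 - p)))   # running normalization constant
    return G / lam

def check_grm(G, tol=0.1):
    """Basic sanity checks: symmetry and mean diagonal close to 1."""
    mean_diag = float(np.mean(np.diag(G)))
    return {"symmetric": bool(np.allclose(G, G.T)),
            "mean_diag": mean_diag,
            "mean_diag_near_one": abs(mean_diag - 1.0) < tol}

# Simulated genotypes stored compactly as int8 (illustrative only)
rng = np.random.default_rng(2)
M = rng.binomial(2, rng.uniform(0.05, 0.95, 2500), size=(200, 2500)).astype(np.int8)
G = grm_blockwise(M, block_size=512)
report = check_grm(G)
print(report)
```

Because both the numerator and λ are sums over SNPs, the blockwise result equals the single-pass calculation up to floating-point rounding, so the same loop can be distributed across workers and the partial sums added at the end.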

Protocol 2: Integrating the G-Matrix into GBLUP Model Fitting

Objective: To solve the GBLUP mixed model equations using the constructed G-matrix to obtain GEBVs.

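Model: The univariate animal model is y = Xb + Zg + e, where y is the vector of phenotypes, b is the vector of fixed effects with design matrix X, g ~ \( N(0, G\sigma^2_g) \) is the vector of genomic breeding values, and e is the residual. Here Z is the incidence matrix linking records to individuals, not the centered genotype matrix of Protocol 1.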

Procedure:

- Variance Component Estimation: Use REML (Restricted Maximum Likelihood) via software like GEMMA or GCTA to estimate \( \sigma^2_g \) and \( \sigma^2_e \).

- Command example (GEMMA), relatedness matrix: `gemma -g genotype -p phenotypes -gk 1 -o g_matrix`

- Command for REML: `gemma -g genotype -p phenotypes -k output/g_matrix.cXX.txt -lmm 4 -o gblup_results`

- Note on file names: with `-gk 1` (centered matrix) GEMMA writes `output/g_matrix.cXX.txt`; the `.sXX.txt` suffix is produced by `-gk 2` (standardized matrix).

- Solving Mixed Model Equations (MME):

- Construct the left-hand side (LHS) and right-hand side (RHS) of the MME: \[ \begin{bmatrix} X'X & X'Z \\ Z'X & Z'Z + G^{-1}\alpha \end{bmatrix} \begin{bmatrix} \hat{b} \\ \hat{g} \end{bmatrix} = \begin{bmatrix} X'y \\ Z'y \end{bmatrix} \] where \( \alpha = \sigma^2_e / \sigma^2_g \).

- Solve for ( \hat{g} ) (GEBVs) using sparse solvers or iterative methods (e.g., Preconditioned Conjugate Gradient).

- Accuracy Assessment: Correlate GEBVs of individuals in a validation set (masked phenotypes) with their adjusted phenotypes or, in simulations, with true breeding values.
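The mixed model equations above can be assembled and solved directly for small problems. The sketch below uses dense NumPy algebra and assumes the variance components are already known; `solve_mme`, the blended G, and the simulated data are illustrative assumptions. Industry-scale software replaces the explicit inverse and dense solve with sparse or iterative methods such as preconditioned conjugate gradient. The last lines cross-check the solution against the equivalent direct BLUP expression based on V = ZGZ'σ²_g + Iσ²_e.

```python
import numpy as np

def solve_mme(y, X, Z, G, sigma2_g, sigma2_e):
    """Solve Henderson's mixed model equations for b_hat and g_hat (GEBVs)."""
    alpha = sigma2_e / sigma2_g
    lhs = np.block([[X.T @ X, X.T @ Z],
                    [Z.T @ X, Z.T @ Z + alpha * np.linalg.inv(G)]])
    rhs = np.concatenate([X.T @ y, Z.T @ y])
    sol = np.linalg.solve(lhs, rhs)
    p = X.shape[1]
    return sol[:p], sol[p:]

# Simulated example (illustrative only): one record per individual, intercept-only model
rng = np.random.default_rng(3)
n, m = 60, 400
M = rng.binomial(2, rng.uniform(0.1, 0.9, m), size=(n, m)).astype(float)
freq = M.mean(axis=0) / 2.0
W = M - 2.0 * freq          # centered genotypes, named W to avoid clashing with incidence Z
G = 0.99 * (W @ W.T) / (2.0 * np.sum(freq * (1.0 - freq))) + 0.01 * np.eye(n)
X = np.ones((n, 1))
Z = np.eye(n)
y = 10.0 + rng.multivariate_normal(np.zeros(n), G) + rng.normal(0.0, 1.0, n)
b_hat, g_hat = solve_mme(y, X, Z, G, sigma2_g=1.0, sigma2_e=1.0)

# Cross-check: GLS estimate of b and BLUP of g from the phenotypic covariance V
V = 1.0 * (Z @ G @ Z.T) + 1.0 * np.eye(n)
Vinv = np.linalg.inv(V)
b_direct = np.linalg.solve(X.T @ Vinv @ X, X.T @ Vinv @ y)
g_direct = 1.0 * G @ Z.T @ Vinv @ (y - X @ b_direct)
print(np.allclose(g_hat, g_direct, atol=1e-6))
```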

Visualizations

GBLUP with G-Matrix Analysis Workflow

G-Matrix Construction Logical Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for G-Matrix & GBLUP Research

| Item / Resource | Function / Purpose | Example / Note |

|---|---|---|

| High-Density SNP Arrays | Provides genome-wide marker data for constructing genomic relationships. | Illumina Infinium, Affymetrix Axiom arrays. Species-specific (e.g., HumanOmni, PorcineSNP60). |

| Genotype QC Pipelines | Ensures data quality before G-matrix calculation; prevents bias from poor markers. | PLINK, SNPRelate, VCFTools. Critical for MAF, missingness, HWE filters. |

| G-Matrix Computation Software | Efficiently performs the large-scale linear algebra operations required. | GCTA, GEMMA, preGSf90 (BLUPF90 suite), R rrBLUP package. |

| REML Solvers | Estimates genetic and residual variance components using the G-matrix. | GCTA-REML, GEMMA, AIREMLF90 (BLUPF90). Requires iterative optimization. |

| High-Performance Computing (HPC) | Provides the necessary memory and CPU power for large population matrices. | Cluster computing with >1TB RAM and parallel processing for n > 50,000. |

| Reference Population Database | Curated collection of genotypes and phenotypes for model training. | Internal breeding program databases, public repositories like CattleGenome or Dryad. |

Complex traits, such as human disease susceptibility, crop yield, or livestock productivity, are controlled by numerous genetic loci, each with a small effect. This polygenic architecture fundamentally contrasts with Mendelian traits driven by one or a few major-effect genes. Genomic Best Linear Unbiased Prediction (GBLUP) has become a cornerstone methodology for predicting genetic merit in complex traits precisely because it aligns with this polygenic paradigm. Within the context of a broader thesis on GBLUP methodology for large reference populations, these application notes detail the theoretical and practical superiority of GBLUP for polygenic traits and provide protocols for its implementation.

Key Conceptual Distinction Table:

| Feature | Major Gene Model | Polygenic Model (GBLUP) |

|---|---|---|

| Genetic Architecture | Few loci with large effects. | Many loci with infinitesimally small effects. |

| Primary Method | Single Marker Regression, GWAS. | Genomic Relationship Matrix (GRM)-based BLUP. |

| Variance Explained | High per locus, low total. | Low per locus, high cumulative total. |

| Prediction Accuracy | High for major genes, poor for complex traits. | High and robust for complex, polygenic traits. |

| Population Assumption | Identifies specific causal variants. | Captures overall relatedness and linkage disequilibrium (LD). |

Logical Flow of Model Selection for Complex Traits:

Title: Decision Tree for Genetic Model Selection

GBLUP operates using a Genomic Relationship Matrix (G) calculated from dense genome-wide marker data (e.g., SNP chips). This matrix quantifies the genetic similarity between individuals based on observed alleles, effectively capturing the collective effect of all markers. The model is expressed as:

y = Xb + Zu + e

Where y is the vector of phenotypic observations, b is the vector of fixed effects, u ~ N(0, Gσ²_g) is the vector of genomic breeding values, and e is the residual. The G matrix centralizes the polygenic "infinitesimal model" assumption, allowing the prediction of an individual's total genetic merit by borrowing information across all markers and related individuals in the reference population.

Quantitative Performance Comparison (Summary from Recent Studies):

| Study (Trait) | Population Size | Major Gene Model Accuracy (r) | GBLUP Accuracy (r) | Key Insight |

|---|---|---|---|---|

| Human Height | ~250,000 | 0.12-0.25 (Top GWAS SNPs) | 0.40-0.65 | GBLUP captures ~50% of additive variance vs. <10% by top SNPs. |

| Dairy Cattle Milk Yield | ~20,000 bulls | 0.10-0.30 (Prior GWAS-based) | 0.65-0.75 | G matrix accounts for family structure and LD, improving selection. |

| Wheat Grain Yield | ~5,000 lines | 0.20-0.35 (MAS) | 0.50-0.70 | Accuracy scales with reference population size and trait heritability. |

| Psychiatric Disorders | Case-Control Cohorts | Low (AUC ~0.55-0.60) | AUC ~0.65-0.72 | Polygenic risk scores (derived from GBLUP logic) show utility. |

Experimental Protocol: Implementing GBLUP for Complex Trait Prediction

Protocol 1: Constructing the Genomic Relationship Matrix (G) and Running GBLUP

Objective: To predict genomic estimated breeding values (GEBVs) for a complex quantitative trait in a large plant/animal reference population.

Materials & Computational Tools:

- Genotype Data: High-density SNP array data (e.g., Illumina, Affymetrix) in PLINK (.bed/.bim/.fam) or VCF format.

- Phenotype Data: Cleaned, pre-adjusted phenotypic records for the target trait(s) with associated fixed effects (e.g., herd, year, batch).

- Software: `PLINK 2.0` for QC, `GCTA` or `BLUPF90` suite for GRM calculation and GBLUP, `R` with `rrBLUP` or `sommer` packages for analysis and validation.

- High-Performance Computing (HPC) Cluster: Essential for large-scale GRM computation (>10K individuals).

Procedure:

Step 1: Data Quality Control (QC)

- Filter genotypes for call rate (>95%), minor allele frequency (MAF > 0.01-0.05), and Hardy-Weinberg equilibrium (p > 1e-6).

- Remove individuals with excessive missing data (>10%) and duplicated or near-identical samples (pairwise identity-by-descent > 0.99).

- Impute missing genotypes using software like `BEAGLE` or `Minimac4`.

Step 2: Construct the Genomic Relationship Matrix (G)

- Using `GCTA`: `gcta64 --bfile [QCed_data] --autosome --make-grm --out [G_matrix]`

- This creates the G matrix where element G_ij is proportional to the genetic covariance between individuals i and j based on SNPs.

Step 3: Perform GBLUP Analysis

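- Using the `BLUPF90` family: Prepare parameter and data files specifying the mixed model equations incorporating the G matrix as the covariance structure for random animal effects.

- Using `R` (`rrBLUP` package): fit the model, for example with `mixed.solve`, supplying G as the covariance matrix (argument `K`) of the random effect; the returned `u` then holds the GEBVs.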
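To make the variance component step concrete, the following Python sketch profiles the restricted likelihood over a grid of heritability values for the model y = Xb + g + e with one record per individual. It is written in Python rather than R and is not the rrBLUP implementation; the grid search, the function name `reml_h2_grid`, and the simulated data are assumptions chosen for readability over speed.

```python
import numpy as np

def reml_h2_grid(y, X, G, grid=None):
    """Profile REML over h2 = sigma2_g / (sigma2_g + sigma2_e) for y = Xb + g + e."""
    n, p = X.shape
    grid = np.linspace(0.01, 0.99, 99) if grid is None else np.asarray(grid)
    best = (-np.inf, None, None)
    for h2 in grid:
        V = h2 * G + (1.0 - h2) * np.eye(n)          # covariance up to the total variance
        Vinv = np.linalg.inv(V)
        XtVinvX = X.T @ Vinv @ X
        P = Vinv - Vinv @ X @ np.linalg.solve(XtVinvX, X.T @ Vinv)
        s2 = float(y @ P @ y) / (n - p)              # REML estimate of the total variance
        logdet_V = np.linalg.slogdet(V)[1]
        logdet_X = np.linalg.slogdet(XtVinvX)[1]
        ll = -0.5 * ((n - p) * np.log(s2) + logdet_V + logdet_X)  # constants dropped
        if ll > best[0]:
            best = (ll, float(h2), s2)
    ll, h2, s2 = best
    return {"h2": h2, "sigma2_g": h2 * s2, "sigma2_e": (1.0 - h2) * s2, "loglik": float(ll)}

# Simulated data (illustrative only), generated with heritability near 0.5
rng = np.random.default_rng(4)
n, m = 150, 300
M = rng.binomial(2, rng.uniform(0.1, 0.9, m), size=(n, m)).astype(float)
freq = M.mean(axis=0) / 2.0
W = M - 2.0 * freq
G = (W @ W.T) / (2.0 * np.sum(freq * (1.0 - freq)))
g = W @ rng.normal(0.0, 1.0, m)
g /= g.std()
y = 5.0 + g + rng.normal(0.0, 1.0, n)
X = np.ones((n, 1))
fit = reml_h2_grid(y, X, G)
print(fit["h2"])
```

Production software uses derivative-based algorithms (for example average-information REML) instead of a grid, but the likelihood being maximized is the same.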

Step 4: Cross-Validation and Accuracy Assessment

- Partition the reference population into k folds (e.g., 5-fold).

- Iteratively use k-1 folds to train the GBLUP model and predict GEBVs for the remaining validation fold.

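- Correlate predicted GEBVs with adjusted phenotypes in the validation set. This correlation is the predictive ability. Because phenotypes are only a noisy proxy for true breeding values, divide the correlation by the square root of the trait heritability (r / h) to estimate accuracy relative to the true breeding values.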

Protocol 2: Single-Step GBLUP (ssGBLUP) for Combined Genomic-Pedigree Analysis

Objective: To integrate genomic and pedigree data for more accurate predictions, especially when not all individuals are genotyped.

Procedure:

- Construct the inverse of the combined relationship matrix H. H⁻¹ equals the pedigree-based A⁻¹ with (G⁻¹ - A₂₂⁻¹) added to the block for genotyped individuals, where A₂₂ is the pedigree relationship matrix among the genotyped individuals.

- Use software like `BLUPF90` (`preGSf90` and `blupf90`) or `THRGIBBSF90`, which are optimized for single-step analyses.

- The model simultaneously uses information from genotyped and non-genotyped relatives, maximizing the use of all available data.
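The construction of H⁻¹ can be illustrated on a toy pedigree. The sketch below uses the standard single-step form, in which (G⁻¹ - A₂₂⁻¹) is added to the genotyped block of A⁻¹; the four-animal pedigree (two unrelated parents and two full sibs), the choice of which animals are genotyped, and the values in G are hypothetical. Blending and scaling of G toward A₂₂, which production pipelines usually apply, are omitted.

```python
import numpy as np

def h_inverse(A, G, genotyped):
    """H^-1 = A^-1 + [[0, 0], [0, G^-1 - A22^-1]], added at the genotyped indices."""
    genotyped = np.asarray(genotyped)
    Hinv = np.linalg.inv(A)
    idx = np.ix_(genotyped, genotyped)
    Hinv[idx] += np.linalg.inv(G) - np.linalg.inv(A[idx])
    return Hinv

# Pedigree relationships: animals 0 and 1 are unrelated parents of full sibs 2 and 3
A = np.array([[1.0, 0.0, 0.5, 0.5],
              [0.0, 1.0, 0.5, 0.5],
              [0.5, 0.5, 1.0, 0.5],
              [0.5, 0.5, 0.5, 1.0]])
genotyped = [2, 3]                       # only the two sibs are genotyped (assumption)
G = np.array([[1.02, 0.58],
              [0.58, 0.98]])             # hypothetical genomic relationships
Hinv = h_inverse(A, G, genotyped)
H = np.linalg.inv(Hinv)
print(np.round(H, 3))
```

Two properties are worth checking on any implementation: the genotyped block of H reproduces G, and if G is set equal to A₂₂ the result collapses back to A⁻¹.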

Title: GBLUP Analysis Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item/Category | Function in GBLUP Research | Example/Notes |

|---|---|---|

| High-Density SNP Arrays | Provides genome-wide marker data to construct the Genomic Relationship Matrix (G). | Illumina BovineHD (777K SNPs), Illumina Infinium HTS Human-1M, Thermo Fisher Axiom Wheat Breeder's Genotyping Array. |

| Genotype Imputation Software | Increases marker density and uniformity by inferring missing or ungenotyped variants using a reference haplotype panel. | BEAGLE, Minimac4, Eagle2. Critical for combining datasets from different arrays. |

| GBLUP Analysis Software | Solves the mixed model equations to estimate variance components and predict GEBVs. | BLUPF90 suite (industry standard), GCTA, ASReml, R packages (rrBLUP, sommer). |

| Pedigree Database | Provides historical relationship data for constructing the numerator relationship matrix (A), used in ssGBLUP. | Internally maintained SQL databases; software like PEDIG for managing and checking pedigrees. |

| High-Performance Computing (HPC) Resource | Enables the computationally intensive inversion and manipulation of large G and H matrices (>50,000 individuals). | Linux clusters with sufficient RAM (≥512GB) and multi-core processors. |

| Phenotype Standardization Pipelines | Cleans and adjusts raw phenotypic data for non-genetic fixed effects (environment, management) to improve heritability estimates. | Custom scripts in R or Python using linear models (lm, lmer). |

Application Notes

In genomic prediction using Genomic Best Linear Unbiased Prediction (GBLUP) with large reference populations, three critical assumptions underpin model reliability and accuracy. Violations of these assumptions can lead to biased estimates, reduced prediction accuracy, and flawed biological interpretation.

1. Additivity: The standard GBLUP model assumes that genotypic values result from the sum of individual marker effects, ignoring dominance and epistasis. This simplification is computationally efficient but may misrepresent complex trait architecture.

2. Linkage Disequilibrium (LD): GBLUP relies on LD between markers and quantitative trait loci (QTL). The model assumes that marker-based relationships capture the genetic similarity at causal loci. The decay of LD over generations or across diverse populations can violate this assumption.

3. Population Structure: The model often assumes a homogeneous, randomly mating population. Structured populations (subgroups, breeds, families) can induce spurious associations, confounding genomic relationships with environmental or ancestral similarities.

The following table summarizes the impact of violating these assumptions on GBLUP predictions, based on recent simulation and empirical studies.

Table 1: Impact of Assumption Violations on GBLUP Predictive Ability

| Assumption | State | Primary Impact | Typical Reduction in Predictive Accuracy (r²)* | Mitigation Strategies |
|---|---|---|---|---|
| Additivity | Strictly Additive Trait | Minimal bias; optimal performance. | Baseline (0% reduction) | Standard GBLUP is sufficient. |
| | Non-Additive Trait (Dominance/Epistasis) | Underestimation of genetic variance; biased GEBVs. | 10-25% | Include dominance/epistatic effects in GRM; use non-linear ML methods. |
| Linkage Disequilibrium | Strong, Persistent LD | High accuracy within reference population. | Baseline | Ensure marker density suffices for LD coverage. |
| | Rapid LD Decay (e.g., across generations) | Accuracy declines in subsequent generations. | 15-40% | Use functional annotations; prioritize markers in low-recombination regions. |
| | Inconsistent LD (Cross-Population) | Poor portability of predictions between populations. | 30-60% | Use multi-population reference sets; implement Bayesian variable selection. |
| Population Structure | Homogeneous Population | Accurate, unbiased predictions. | Baseline | Standardize for known covariates. |
| | Unaccounted Stratification | Inflation of GEBVs, false positives. | 5-20% | Include principal components as fixed effects; use adjusted GRM (e.g., PCA-GBLUP). |
| | Admixed/Breed-Specific Effects | Over/under-prediction for underrepresented groups. | 20-50% | Apply breed-specific or weighted GRMs; use meta-analysis frameworks. |

*Percentage reductions are approximate ranges from recent literature (2023-2024) and vary by trait heritability and dataset size.

Experimental Protocols

Protocol 1: Quantifying and Correcting for Population Structure in GBLUP

Objective: To assess and adjust for population stratification within a large reference population to prevent spurious genomic predictions.

Materials: Genotype data (SNP array or WGS), phenotype data, computing cluster with PLINK2, GCTA, and R/Python.

Procedure:

- Quality Control: Filter genotypes for call rate (>95%), minor allele frequency (>1%), and Hardy-Weinberg equilibrium (p > 10⁻⁶).

- Population Genetics Analysis:

- Perform Principal Component Analysis (PCA) on the genotype matrix using PLINK2 (`--pca`).

- Visualize the first 3-5 PCs to identify clusters.

- Calculate genomic inflation factor (λ) from a simple GWAS without correction.

- Model Comparison:

- Model A (Naïve GBLUP): Fit GBLUP using a genomic relationship matrix (GRM) computed from all SNPs. Model: `y = 1μ + Zu + e`, where `u ~ N(0, Gσ²_g)`.

- Model B (PCA-Adjusted GBLUP): Include the top k PCs (sufficient to explain population structure) as fixed covariates. Model: `y = 1μ + Qβ + Zu + e`, where `Q` is the matrix of k PCs.

- Model C (Adjusted GRM): Compute a GRM after regressing out the top k PCs from the genotype matrix (e.g., using `--remove-pc` in GCTA), then fit standard GBLUP.

- Validation: Use 5-fold cross-validation within and across identified sub-populations. Compare the predictive accuracy (correlation between GEBVs and adjusted phenotypes) and bias (regression slope of phenotype on GEBV) of the three models.

- Interpretation: The model minimizing bias across groups while maintaining high within-group accuracy is preferred.
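The PCA and inflation-factor steps of this protocol can be prototyped without external tools. The sketch below computes principal component scores by singular value decomposition of the standardized genotype matrix and estimates λ as the median single-SNP test statistic divided by 0.4549, the median of a 1-df chi-square distribution. The function names and the two-subpopulation simulation, in which the phenotype differs between subpopulations but no SNP is causal, are assumptions for illustration.

```python
import numpy as np

def top_pcs(M, k=2):
    """Top-k principal component scores from a 0/1/2 genotype matrix."""
    M = np.asarray(M, dtype=float)
    p = M.mean(axis=0) / 2.0
    keep = (p > 0.0) & (p < 1.0)                     # drop monomorphic SNPs
    Z = (M[:, keep] - 2.0 * p[keep]) / np.sqrt(2.0 * p[keep] * (1.0 - p[keep]))
    U, S, _ = np.linalg.svd(Z, full_matrices=False)
    return U[:, :k] * S[:k]

def inflation_factor(M, y, covars=None):
    """Genomic inflation factor from per-SNP linear regressions, optionally PC-adjusted."""
    n = len(y)
    C = np.ones((n, 1)) if covars is None else np.column_stack([np.ones(n), covars])

    def resid(A):                                    # residualize on intercept + covariates
        return A - C @ np.linalg.lstsq(C, A, rcond=None)[0]

    yr = resid(np.asarray(y, dtype=float))
    Mr = resid(np.asarray(M, dtype=float))
    norms = np.linalg.norm(Mr, axis=0)
    keep = norms > 1e-12
    r = (Mr[:, keep].T @ yr) / (norms[keep] * np.linalg.norm(yr))
    df = n - C.shape[1] - 1
    chi2 = df * r**2 / (1.0 - r**2)                  # squared t-statistic per SNP
    return float(np.median(chi2) / 0.4549364)

# Two simulated subpopulations with diverged allele frequencies (illustrative only)
rng = np.random.default_rng(5)
n_per, m = 100, 1000
f1 = rng.uniform(0.2, 0.8, m)
f2 = np.clip(f1 + rng.normal(0.0, 0.15, m), 0.05, 0.95)
M = np.vstack([rng.binomial(2, f1, size=(n_per, m)),
               rng.binomial(2, f2, size=(n_per, m))])
pop = np.repeat([0.0, 1.0], n_per)
y = 2.0 * pop + rng.normal(0.0, 1.0, 2 * n_per)      # no causal SNPs, structure only
lam_naive = inflation_factor(M, y)
lam_adj = inflation_factor(M, y, covars=top_pcs(M, k=2))
print(round(lam_naive, 2), round(lam_adj, 2))
```

A λ well above 1 in the unadjusted scan that drops toward 1 after including PCs is the pattern that motivates Model B over Model A.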

Protocol 2: Evaluating the Additivity Assumption via Dominance GRM

Objective: To partition and estimate the proportion of non-additive (dominance) genetic variance for a complex trait.

Materials: High-density SNP data, phenotype records, software: GCTA (for GRM construction) and mixed model solver (e.g., DMU, BLUPF90).

Procedure:

- Compute Relationship Matrices:

- Additive GRM (GA): Compute using standard method (VanRaden, 2008).

- Dominance GRM (GD): Compute using expectations based on Hardy-Weinberg equilibrium (Vitezica et al., 2013). Command example for GCTA, with the input genotype files given explicitly: `gcta64 --bfile [QCed_data] --make-grm-d --autosome-num 29 --out G_D`

- Model Fitting: Fit a series of mixed models via REML:

- `y = Xb + Z_a u_a + e` (Additive only)

- `y = Xb + Z_a u_a + Z_d u_d + e` (Additive + Dominance)

Where `u_a ~ N(0, G_A σ²_a)`, `u_d ~ N(0, G_D σ²_d)`.

- Variance Component Estimation: Estimate σ²_a, σ²_d, and residual variance σ²_e. Calculate the proportion of total genetic variance due to dominance: `σ²_d / (σ²_a + σ²_d)`.

- Prediction Assessment: In a cross-validation scheme, compare the accuracy of GEBVs from the additive-only model vs. the sum of additive and dominance EBVs from the full model.

- Decision Point: If dominance explains >10% of genetic variance and improves prediction accuracy, consider implementing a permanent dominance GRM in the breeding program.
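A dominance relationship matrix of the kind referenced above can be sketched as follows. The coding used here is the Hardy-Weinberg-based dominance deviation coding commonly attributed to Vitezica et al. (2013): with p the frequency of the counted allele and q = 1 - p, genotypes 2, 1, and 0 are coded as -2q², 2pq, and -2p², and the cross-product is scaled by Σ(2pq)². The function names, the simulated genotypes, and the variance values passed to `dominance_fraction` are assumptions for illustration.

```python
import numpy as np

def dominance_grm(M):
    """Dominance GRM from an n x m genotype matrix coded as counts (0/1/2) of one allele."""
    M = np.asarray(M)
    p = M.mean(axis=0) / 2.0                         # frequency of the counted allele
    q = 1.0 - p
    H = np.where(M == 2, -2.0 * q**2,
                 np.where(M == 1, 2.0 * p * q, -2.0 * p**2))
    return (H @ H.T) / np.sum((2.0 * p * q) ** 2)

def dominance_fraction(sigma2_a, sigma2_d):
    """Share of total genetic variance attributed to dominance."""
    return sigma2_d / (sigma2_a + sigma2_d)

# Simulated genotypes (illustrative only)
rng = np.random.default_rng(6)
M = rng.binomial(2, rng.uniform(0.2, 0.8, 500), size=(100, 500))
G_D = dominance_grm(M)
print(G_D.shape, float(np.mean(np.diag(G_D))))
print(dominance_fraction(sigma2_a=0.40, sigma2_d=0.05))   # hypothetical REML estimates
```

Under Hardy-Weinberg proportions each coded column has mean zero and variance (2pq)², which is why the mean diagonal of the resulting matrix should be close to 1.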

Protocol 3: Assessing LD Decay Impact on Multi-Generational Prediction

Objective: To measure the persistence of GBLUP accuracy across generations and link decay to LD breakdown.

Materials: Multi-generational genotype and phenotype data (e.g., grandparents, parents, progeny).

Procedure:

- Define Reference and Validation Sets: Use the earliest generation (Gen0) as the reference population. Successive, non-overlapping generations (Gen1, Gen2) serve as validation sets.

- Calculate LD Metrics: Within the reference population, compute the average pairwise `r²` between SNPs at varying physical distances (e.g., 0-10kb, 10-50kb, 50-100kb). Plot `r²` against distance to visualize LD decay.

- Train and Predict: Fit the GBLUP model on Gen0 phenotypes only, with allele frequencies taken from Gen0. Then either back-solve SNP effects from the Gen0 GEBVs and apply them to the genotypes of later generations, or build the GRM over all generations with Gen1 and Gen2 phenotypes masked and predict their GEBVs directly.

- Accuracy Trajectory: Calculate the predictive accuracy (correlation) for Gen0 (within), Gen1, and Gen2.

- Model LD-Aware Predictions: Reparameterize the GRM by weighting SNPs inversely by regional LD score (to upweight SNPs in low-LD, potentially informative regions) and repeat prediction.

- Analysis: Correlate the decay in predictive accuracy across generations with the rate of LD decay. Assess if LD-aware weighting mitigates accuracy loss.
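The LD decay curve used in this protocol can be approximated from unphased genotypes by squaring the correlation between SNP dosages and averaging within distance bins. The sketch below assumes all SNPs lie on one chromosome, uses dosage correlation as a stand-in for haplotype-based r², and simulates haplotypes with a simple copying process; the function name, positions, and bin edges are hypothetical.

```python
import numpy as np

def ld_decay(M, pos, bins):
    """Mean pairwise r^2 between SNP dosages, binned by physical distance in base pairs."""
    M = np.asarray(M, dtype=float)
    pos = np.asarray(pos)
    R2 = np.corrcoef(M, rowvar=False) ** 2           # m x m squared dosage correlations
    i, j = np.triu_indices(M.shape[1], k=1)          # each SNP pair once
    r2 = R2[i, j]
    dist = np.abs(pos[i] - pos[j])
    out = []
    for lo, hi in zip(bins[:-1], bins[1:]):
        mask = (dist >= lo) & (dist < hi)
        out.append(float(np.nanmean(r2[mask])) if mask.any() else float("nan"))
    return out

# Simulated haplotypes: each SNP copies its left neighbour with probability 0.9
rng = np.random.default_rng(7)
n, m = 200, 60
hap = np.empty((2 * n, m), dtype=int)
hap[:, 0] = rng.integers(0, 2, 2 * n)
for s in range(1, m):
    copy = rng.random(2 * n) < 0.9
    hap[:, s] = np.where(copy, hap[:, s - 1], rng.integers(0, 2, 2 * n))
M = hap[:n] + hap[n:]                                 # unphased 0/1/2 genotypes
pos = np.arange(m) * 5_000                            # one SNP every 5 kb (assumption)
bins = [0, 10_000, 50_000, 100_000]
decay = ld_decay(M, pos, bins)
print([round(v, 3) for v in decay])
```

Running the same function separately on Gen0, Gen1, and Gen2 gives the per-generation curves that can be set against the accuracy trajectory from the protocol.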

Diagrams

Title: GBLUP Assumptions, Violations, and Mitigations

Title: Population Structure Correction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for GBLUP Assumption Testing

| Item | Category | Function & Relevance to Assumptions |

|---|---|---|

| High-Density SNP Array (e.g., Illumina BovineHD, PorcineGGP 80K) | Genotyping Platform | Provides genome-wide marker data. Density is critical for capturing LD and constructing robust GRMs. |

| Whole Genome Sequencing (WGS) Data | Genotyping Platform | Gold standard for variant discovery. Allows precise study of LD patterns and identification of causal variants, testing additivity. |

| PLINK 2.0 | Bioinformatics Software | Performs core QC, PCA, basic association tests, and data management. Primary tool for initial population structure analysis. |

| GCTA (GREML) | Statistical Genetics Software | Computes additive and non-additive GRMs, estimates variance components, and performs REML analysis. Central for testing additivity and LD assumptions. |

| BLUPF90 / DMU | Mixed Model Solver | Efficiently solves large-scale mixed models (GBLUP). Essential for fitting complex models with multiple GRMs and covariates. |

| Pre-Computed LD Scores (e.g., from 1000 Genomes Project) | Reference Data | Enables LD-informed weighting of SNPs in the GRM to account for region-specific LD structure, mitigating LD decay issues. |

| Annotated Genome Reference (e.g., ENSEMBL, UCSC) | Reference Data | Provides functional annotations. Used to prioritize markers in coding/regulatory regions, potentially capturing more additive effects. |

| Kinship Matrix (Pedigree-Based) | Validation Data | Serves as a benchmark for the additive GRM. Large discrepancies can indicate population structure or LD problems. |

| Simulated Data with Known Architecture | Validation Data | Allows controlled testing of assumptions by generating traits with specific additive/dominance variance and population structures. |

Within the broader methodological thesis on Genomic Best Linear Unbiased Prediction (GBLUP), the scalability of the reference population (N) represents a pivotal frontier. This application note delineates the quantitative and mechanistic advantages of large-scale genomic datasets in enhancing the accuracy and robustness of GBLUP predictions for complex traits. The core principle is that increasing N refines the estimation of the genomic relationship matrix (GRM), mitigates overfitting, and improves the portability of models across diverse populations and environments—a critical consideration for pharmaceutical and agricultural trait development.

Quantitative Evidence: The Impact of Reference Population Size

Empirical studies across species consistently report that prediction accuracy rises roughly logarithmically with N and then flattens, with diminishing returns once most of the genetic variance tagged by the markers has been captured.

Table 1: Impact of Reference Population Size on GBLUP Accuracy for Complex Traits

| Species / Study (Source) | Trait Category | Reference Size (N) | Prediction Accuracy (rg) | Key Finding |

|---|---|---|---|---|

| Dairy Cattle (Wiggans et al., 2017) | Milk Yield | 5,000 | 0.45 | Baseline accuracy for moderately heritable trait. |

| 25,000 | 0.68 | ~51% relative increase; GRM estimation significantly improved. | ||

| 100,000+ | 0.75 | Diminishing returns observed; plateau approached. | ||

| Humans (UK Biobank) (Timmerman et al., 2023) | Height (Polygenic) | 50,000 | 0.40 | Accuracy limited for highly polygenic traits. |

| | | 200,000 | 0.55 | Robust increase; better capture of small-effect variants. |

| | | 500,000 | 0.65 | Near-ceiling for current SNP arrays; highlights "Large N" requirement for human genetics. |

| Maize (Crossa et al., 2023) | Grain Yield | 1,000 | 0.30 | High GxE interaction reduces accuracy in small panels. |

| | | 10,000 | 0.52 | Large N enables better modeling of GxE, improving robustness across environments. |

| Swine (Wang et al., 2022) | Disease Resilience | 3,000 | 0.25 | Low accuracy for low-heritability, complex trait. |

| | | 15,000 | 0.41 | Substantial gain; demonstrates necessity of big data for hard-to-measure traits. |

Table 2: Statistical Robustness Metrics vs. Population Size

| Metric | Small N (1k-5k) | Large N (50k+) | Implication for Research |

|---|---|---|---|

| Standard Error of GEBV | High (± 0.15) | Low (± 0.05) | More reliable individual predictions. |

| Bias (Regression Coeff.) | Often < 1.0 (Overfitting) | Closer to 1.0 | Predictions are less inflated and more consistent. |

| Model Portability (r between populations) | 0.1 - 0.4 | 0.5 - 0.7 | Enhanced generalizability in drug development cohorts. |

| GWAS Signal Enhancement (via GREML) | Low-powered | High-powered | Improved variant discovery for target identification. |

Experimental Protocols

Protocol 3.1: Establishing a Large-N Reference Population for GBLUP

Objective: To create a genetically and phenotypically robust reference population for high-accuracy genomic prediction. Materials: See "Scientist's Toolkit" (Section 5). Procedure:

- Cohort Ascertainment: Recruit or access a cohort with N > 50,000. Ensure phenotypic data is standardized (e.g., clinical endpoints, yield measurements) and covariates (age, sex, batch) are recorded.

- Genotyping & QC: Perform genome-wide SNP genotyping (e.g., array-based). Apply quality control: sample call rate > 98%, SNP call rate > 99%, Hardy-Weinberg equilibrium p > 10⁻⁶, minor allele frequency (MAF) > 0.01.

- Phenotype Processing: Correct phenotypes for significant covariates using a linear model. Transform residuals to approximate normality if required.

- Population Stratification: Perform principal component analysis (PCA) on the genotype matrix. Use the first k principal components as fixed effects in the model to control for population structure.

- Data Partitioning: Randomly divide the data into a reference/training set (80-90%) and a validation set (10-20%). Ensure genetic relatedness between sets is representative.

Protocol 3.2: Running Large-Scale GBLUP Analysis

Objective: To estimate genomic breeding values (GEBVs) and model parameters using a large reference population. Software: GCTA, BLUPF90, or custom R/Python scripts using HE-regression libraries. Procedure:

- Compute Genomic Relationship Matrix (GRM): Calculate the GRM using the method of VanRaden (2008): G = (M − P)(M − P)′ / [2 Σⱼ pⱼ(1 − pⱼ)]. M is the n x m allele dosage matrix (coded 0/1/2), P is the matrix whose column j holds twice the allele frequency (2pⱼ), and pⱼ is the frequency of the alternate allele for SNP j.

- Fit the GBLUP Model: Solve the mixed model equations for y = Xβ + Zu + e, where y is the vector of phenotypes, X is the design matrix for fixed effects (e.g., PCs), β is the vector of fixed effect solutions, Z is the design matrix relating individuals to genetic values, u ~ N(0, Gσ²u) is the vector of genomic values, and e ~ N(0, Iσ²e) is the vector of residuals.

- Variance Component Estimation: Use Restricted Maximum Likelihood (REML) via the AI or EM algorithm to estimate σ²u (genetic variance) and σ²e (residual variance).

- Predict GEBVs: Obtain GEBVs as the solutions for û.

- Validate: Correlate GEBVs (from the training set model) with observed phenotypes in the held-out validation set to estimate prediction accuracy. Calculate the regression coefficient of observed on predicted values to assess bias.
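The steps of Protocol 3.2 can be sketched on simulated data. This is a minimal illustration rather than a production pipeline: the variance ratio `lam` = σ²e/σ²u is assumed known instead of being estimated by REML, Z is taken as an identity matrix (one record per individual), and the function names `vanraden_grm` and `gblup_predict` are hypothetical.

```python
import numpy as np

def vanraden_grm(M):
    """VanRaden (2008) GRM from an (n x m) 0/1/2 dosage matrix."""
    p = M.mean(axis=0) / 2.0                  # alternate-allele frequency per SNP
    W = M - 2.0 * p                           # centred dosages, i.e. (M - P)
    return W @ W.T / (2.0 * np.sum(p * (1.0 - p)))

def gblup_predict(G, y, X, train, test, lam):
    """GEBVs for `test` individuals from `train` records (lam assumed known)."""
    V = G[np.ix_(train, train)] + lam * np.eye(len(train))  # Var(y) / sigma2_u
    Xt, yt = X[train], y[train]
    Vinv_X = np.linalg.solve(V, Xt)
    beta = np.linalg.solve(Xt.T @ Vinv_X, Vinv_X.T @ yt)    # GLS fixed effects
    alpha = np.linalg.solve(V, yt - Xt @ beta)
    gebv_test = G[np.ix_(test, train)] @ alpha              # BLUP of u for test set
    return beta, gebv_test

# Simulated example (all values are illustrative assumptions)
rng = np.random.default_rng(0)
n, m = 300, 1000
freqs = rng.uniform(0.1, 0.9, m)
M = rng.binomial(2, freqs, size=(n, m)).astype(float)
g = (M - 2.0 * freqs) @ rng.normal(0.0, 1.0, m)
g = g / g.std()                               # genetic values with variance 1
y = 1.0 + g + rng.normal(0.0, 1.0, n)         # heritability of roughly 0.5
X = np.ones((n, 1))                           # intercept only

G = vanraden_grm(M)
train, test = np.arange(0, 240), np.arange(240, n)
beta, gebv = gblup_predict(G, y, X, train, test, lam=1.0)

accuracy = np.corrcoef(gebv, y[test])[0, 1]   # correlation with held-out phenotypes
slope = np.polyfit(gebv, y[test], 1)[0]       # regression of observed on predicted
print(f"accuracy={accuracy:.3f}, slope={slope:.3f}")
```

With unrelated simulated individuals and a small training set the accuracy will be low and noisy; the point is the order of operations, not the numbers.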

Protocol 3.3: Assessing Robustness via Cross-Population Validation

Objective: To test the generalizability of a GBLUP model trained on a large-N population. Procedure:

- Model Training: Train a GBLUP model on Population A (Large-N source).

- External Validation Cohort: Genotype and phenotype an independent Population B (target population, e.g., a different breed or clinical trial cohort).

- Project GRM: Align SNP data from Population B to Population A. Re-calculate allele frequencies from Population A and use them to center the genotype matrix of Population B before predicting GEBVs using the model from Step 1.

- Evaluate Portability: Calculate the prediction accuracy (correlation) in Population B. Compare to the accuracy achieved when Population B uses its own small-N model. A smaller performance gap indicates higher robustness from the large-N training set.

Visualizations

Diagram Title: Mechanism of Large-N Advantage in GBLUP

Diagram Title: Large-N GBLUP Analysis Protocol Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Large-N GBLUP Research

| Item / Solution | Function in Large-N GBLUP | Example / Specification |

|---|---|---|

| High-Density SNP Genotyping Array | Provides the dense, genome-wide marker data required to construct the GRM. Critical for capturing linkage disequilibrium. | Illumina Global Screening Array, Affymetrix Axiom Genomic Breeding Array. |

| High-Throughput Phenotyping Platform | Enables accurate, standardized, and scalable measurement of complex traits across tens to hundreds of thousands of individuals. | Automated clinical analyzers, imaging-based field scanners, digital health monitoring apps. |

| Biobank/LIMS Software | Manages the massive metadata linking genotype, phenotype, and sample provenance. Essential for data integrity and QC. | FreezerPro, LabVantage, custom SQL databases. |

| High-Performance Computing (HPC) Cluster | Necessary for the computationally intensive steps of GRM calculation (O(N²M)) and REML estimation on large matrices. | Linux cluster with >1TB RAM and parallel processing (e.g., SLURM scheduler). |

| GBLUP/REML Software Suite | Specialized software optimized for solving large mixed models and handling genomic data. | GCTA, BLUPF90 suite, MTG2, or specialized R packages (rrBLUP, sommer). |

| Population Genetics QC Toolkit | For quality control, population stratification analysis, and data formatting. | PLINK, GCTA, EIGENSOFT, bcftools. |

| Secure Cloud Storage & Compute | Alternative to on-premise HPC; provides scalability for data sharing and collaborative analysis of massive cohorts. | AWS S3/EC2, Google Cloud Storage/Compute, with appropriate encryption for human data. |

Building the Engine: A Step-by-Step Guide to Implementing GBLUP for Massive Datasets

The efficacy of Genomic Best Linear Unbiased Prediction (GBLUP) for complex trait prediction in large reference populations is fundamentally contingent on the quality and consistency of the input genomic data. This protocol details the essential preprocessing steps—Quality Control (QC), Genotype Imputation, and Data Scaling—required to transform raw genotype data from hundreds of thousands of samples into a robust, analysis-ready dataset for GBLUP modeling.

Quality Control (QC) Protocol

Initial QC removes low-quality samples and markers to minimize technical artifacts. The following thresholds, optimized for large-scale biobank-level data, should be applied sequentially.

Table 1: Recommended QC Filters for Large-Scale Genomic Data

| QC Step | Target | Exclusion Criterion | Rationale |

|---|---|---|---|

| Sample-level | Call Rate | < 98% | Exclude samples with excessive missingness. |

| | Sex Discrepancy | Inconsistency between reported and genotypic sex | Identify sample swaps or contamination. |

| | Heterozygosity | More than 3 SD from the cohort mean | Identify contaminated samples or inbreeding outliers. |

| Variant-level | Call Rate | < 95% | Exclude markers with high missingness. |

| | Hardy-Weinberg Equilibrium (HWE) | p < 1x10⁻⁶ | Exclude markers with severe deviation, suggesting genotyping errors. |

| | Minor Allele Frequency (MAF) | < 0.0001 (0.01%) | Remove ultra-rare variants unstable for GBLUP. |

Experimental Protocol 1.1: Sample QC using PLINK

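- Input: Raw genotype files in PLINK binary format (`.bed`, `.bim`, `.fam`).

- Calculate Missingness: `plink --bfile [input] --missing --out [output]`

- Identify Sex Discrepancy: `plink --bfile [input] --check-sex ycount 0.2 0.8 --out [output]`

- Calculate Heterozygosity: `plink --bfile [input] --het --out [output]`

- Apply Filters: `plink --bfile [input] --remove [failed_samples.txt] --mind 0.02 --maf 0.0001 --hwe 1e-6 --geno 0.05 --make-bed --out [qc_cohort]`. Here `[failed_samples.txt]` is a placeholder for a list (FID and IID per line) of the samples flagged by the sex check and the heterozygosity filter; `--check-sex` and `--het` only write reports and do not remove samples themselves.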

Genotype Imputation Protocol

Imputation infers ungenotyped markers using a reference haplotype panel, increasing marker density and consistency across genotyping arrays.

Table 2: Comparison of Imputation Workflows for Large Samples

| Software | Primary Use | Strengths for Large N | Key Consideration |

|---|---|---|---|

| Minimac4/IMPUTE5 | Phasing & Imputation | Highly optimized for speed and memory. | Requires pre-phasing (e.g., with Eagle2). |

| Beagle 5.4 | Integrated Phasing & Imputation | User-friendly, all-in-one tool. | Computational demands scale with sample size. |

| GLIMPSE2 | Low-Coverage/Array Imputation | Efficient for large cohort imputation. | Divided into chunk-based workflow. |

Experimental Protocol 2.1: Pre-Phasing and Imputation with Eagle2 & Minimac4

- Data Preparation: Convert QC'd data to VCF format. Align alleles to the orientation of the reference panel (e.g., TOPMed r2).

- Phasing: `eagle --vcfRef [ref.vcf.gz] --vcfTarget [target.vcf] --geneticMapFile [map.txt] --outPrefix [phased]`

- Imputation: `minimac4 --refHaps [ref_panel.m3vcf] --haps [phased.vcf] --format DS,GP --prefix [imputed] --cpus 8`

- Post-Imputation QC: Filter imputed variants for `R² > 0.3` (imputation quality metric) and `MAF > 0.0001`.

Data Scaling & Standardization Protocol

Scaling ensures numerical stability for the GBLUP mixed model equations and prevents over-weighting of variants based on allele frequency.

Experimental Protocol 3.1: Genotype Matrix Scaling for GBLUP

- Input: An n x m genotype matrix G (n = samples, m = variants), coded as 0, 1, 2 for the number of effect alleles.

- Calculate Scaling Factor: For each variant j, compute the scaling factor \( w_j = 1 / \sqrt{2 p_j (1 - p_j)} \), where \( p_j \) is the observed allele frequency.

- Apply Scaling: Generate the scaled matrix Z by centering and scaling each column: \( Z_{\cdot j} = (G_{\cdot j} - 2 p_j) \, w_j \).

- Output: The scaled matrix Z is used directly to construct the Genomic Relationship Matrix (GRM) for GBLUP: \( \mathrm{GRM} = Z Z^T / m \).
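The scaling protocol above can be sketched in a few lines of NumPy. This is an illustrative example on simulated genotypes; the function name `scale_genotypes` and the decision to drop monomorphic variants (for which the scaling factor is undefined) are assumptions, not part of the protocol.

```python
import numpy as np

def scale_genotypes(G):
    """Centre and scale an (n x m) 0/1/2 genotype matrix column by column."""
    p = G.mean(axis=0) / 2.0                  # observed allele frequency p_j
    keep = (p > 0.0) & (p < 1.0)              # assumption: drop monomorphic variants
    p = p[keep]
    w = 1.0 / np.sqrt(2.0 * p * (1.0 - p))    # scaling factor w_j
    Z = (G[:, keep] - 2.0 * p) * w            # Z_.j = (G_.j - 2 p_j) * w_j
    return Z, keep

# Simulated genotypes (illustrative sizes only)
rng = np.random.default_rng(42)
n, m = 500, 2000
G = rng.binomial(2, rng.uniform(0.05, 0.5, m), size=(n, m)).astype(float)

Z, keep = scale_genotypes(G)
grm = Z @ Z.T / Z.shape[1]                    # GRM = Z Z^T / m

print("mean GRM diagonal:", round(float(np.mean(np.diag(grm))), 3))
```

The mean of the GRM diagonal should be close to 1 for genotypes in Hardy-Weinberg proportions, which matches the "Allele Frequency Scaling" row of the table that follows.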

Table 3: Impact of Scaling on GRM Properties

| Scaling Method | GRM Diagonal Mean | Interpretation in GBLUP |

|---|---|---|

| No Scaling | Variable, depends on MAF | Biases genetic variance estimates. |

| Allele Frequency Scaling | ~1.0 | Standardized genetic relationships, assumed in GBLUP. |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Large-Scale Genomic Preprocessing

| Item | Function | Example/Note |

|---|---|---|

| High-Performance Computing Cluster | Provides necessary CPU, memory, and parallel processing for large datasets. | Essential for imputation of >100k samples. |

| Reference Haplotype Panel | Panel of sequenced haplotypes used as a template for imputation. | TOPMed Freeze 8, HRC r1.1, 1000 Genomes Phase 3. |

| Genetic Map File | Specifies recombination hot/cold spots for accurate phasing. | HapMap Phase II b37 genetic map. |

| PLINK 2.0 | Core software for efficient genome-wide association analysis & QC. | Handles large datasets more efficiently than PLINK 1.9. |

| BCFtools | Utilities for variant calling file (VCF/BCF) manipulation, indexing, and query. | Used for post-imputation filtering and format conversion. |

| R/Python with Data.table/Pandas | For scripting custom QC checks, scaling operations, and results aggregation. | Critical for automating pipeline steps and parsing logs. |

Workflow Diagrams

Title: Overall Genomic Data Preprocessing Pipeline for GBLUP

DOT Diagram 2: Detailed Quality Control Steps

Title: Sequential Sample and Variant Quality Control Filters

DOT Diagram 3: Genotype Scaling for GRM Construction

Title: Workflow for Genotype Scaling and GRM Calculation

Within the broader thesis on Genomic Best Linear Unbiased Prediction (GBLUP) methodology for large reference populations, the efficient construction of the Genomic Relationship Matrix (G-Matrix) is a foundational computational bottleneck. As genotyping technologies advance, datasets now routinely contain hundreds of thousands to millions of single nucleotide polymorphisms (SNPs) genotyped on tens or hundreds of thousands of individuals. At this scale, naive double-loop algorithms for G-Matrix calculation, which require O(mn²) operations for m SNPs and n individuals, become prohibitively slow and memory-intensive. This document details modern algorithmic approaches, protocols, and toolkits for efficient G-Matrix construction, directly enabling scalable GBLUP research.

Core Algorithms and Performance Comparison

The standard G-Matrix (G) according to VanRaden (Method 1) is calculated as:

G = ZZ′ / 2∑pᵢ(1-pᵢ)

where Z is an n x m matrix of centered genotype codes (e.g., 0-2p, 1-2p, 2-2p for genotypes AA, AB, BB, with p being the allele frequency), and the denominator is a scaling factor that puts G on the same scale as the pedigree-based relationship matrix.

Efficient algorithms focus on computing ZZ′ without forming the full Z matrix in memory.

Table 1: Comparison of Algorithms for G-Matrix Construction

| Algorithm | Key Principle | Computational Complexity | Memory Complexity | Best Suited For | Key Limitation |

|---|---|---|---|---|---|

| Naive Double Loop | Direct computation of each relationship. | O(mn²) | O(n²) for G | Small n (< 5,000) | Intractable for large n. |

| BLAS-based (SGEMM/DGEMM) | Single call to optimized linear algebra library. | O(mn²) but highly optimized. | O(mn) for Z, O(n²) for G | Moderate n, m (Z fits in RAM). | Requires storing full Z matrix. |

| Blocked Algorithm | Processes Z in contiguous blocks (columns or rows). | O(mn²) | O(bn) for block size b. | Large m, limited RAM. | Requires careful I/O management. |

| Compressed Data Structures | Uses bit-level compression (e.g., 2 bits per call). | O(mn² / k) for compression factor k. | O(mn / k) for Z. | Very large m and n. | Added compression/decompression overhead. |

| Parallel (MPI/OpenMP) | Distributes computations across CPU cores/nodes. | O(mn² / P) for P processors. | Distributed across nodes. | HPC clusters, very large n. | Requires parallel programming expertise. |

| Approximate (Randomized SVD) | Computes a low-rank approximation G ≈ QQ′. | O(mn log k + n k²) for rank k. | O(kn) for Q. | n > 100,000 for dimensionality reduction. | Approximation, not exact G. |

Experimental Protocols for Algorithm Benchmarking

Protocol 3.1: Benchmarking Computational Efficiency

Objective: To compare the runtime and memory usage of different G-matrix construction algorithms on a standardized dataset.

Materials: High-performance computing node (e.g., 32 cores, 256 GB RAM), PLINK 2.0 binary genotype files (.bed), compiled software (e.g., calc_grm from GCTA, custom scripts).

Procedure:

- Data Preparation: Use PLINK2 to generate a standardized test dataset with simulated or real genotypes (n=10,000, m=100,000). Create subsets (e.g., n=1k, 5k, 10k; m=10k, 50k, 100k).

- Algorithm Implementation:

a. Baseline (BLAS): Load the full Z matrix into RAM and compute G = Z Z′ using a single `sgemm`/`dgemm` call from Intel MKL or OpenBLAS.

b. Blocked Algorithm: Implement an algorithm that reads genotypes in batches of b SNPs (e.g., b=1,000). For each batch i, compute a sub-matrix Gᵢ = Zᵢ Zᵢ′ and add it to the accumulating G.

c. Parallel Algorithm (OpenMP): Modify the blocked algorithm to use OpenMP pragmas to parallelize the inner product loops across multiple CPU cores.

- Execution & Profiling: For each algorithm and dataset:

a. Record wall-clock time using the `time` command.

b. Monitor peak memory usage with `/usr/bin/time -v` or a memory profiler.

c. Repeat each run 5 times and calculate the mean and standard deviation.

- Validation: Verify that all algorithms produce identical G matrices (within floating-point tolerance) by comparing a random subset of elements.
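The blocked accumulation and the validation step can be sketched as follows. This is a minimal NumPy illustration, assuming the genotypes are available as an in-memory (or memory-mapped) n x m array of 0/1/2 dosages; the function name `blocked_grm` is hypothetical, and real pipelines would read each block from a `.bed` file instead.

```python
import numpy as np

def blocked_grm(M, block_size=1000):
    """Accumulate G = ZZ' / (2 * sum p(1-p)) over blocks of SNP columns."""
    n, m = M.shape
    G = np.zeros((n, n))
    denom = 0.0
    for start in range(0, m, block_size):
        # Only one (n x b) block is held in floating point at a time
        block = np.asarray(M[:, start:start + block_size], dtype=np.float64)
        p = block.mean(axis=0) / 2.0          # allele frequencies in this block
        Zb = block - 2.0 * p                  # centred block Z_i
        G += Zb @ Zb.T                        # G_i = Z_i Z_i', computed by BLAS
        denom += 2.0 * np.sum(p * (1.0 - p))
    return G / denom

# Validation against the one-shot computation on simulated data
rng = np.random.default_rng(2)
n, m = 200, 5000
M = rng.binomial(2, rng.uniform(0.05, 0.5, m), size=(n, m)).astype(np.int8)

G_blocked = blocked_grm(M, block_size=1000)

p = M.mean(axis=0) / 2.0
W = M - 2.0 * p
G_full = W @ W.T / (2.0 * np.sum(p * (1.0 - p)))

max_abs_diff = float(np.max(np.abs(G_blocked - G_full)))
print("max |G_blocked - G_full| =", max_abs_diff)
```

Storing dosages as `int8` and converting one block at a time keeps peak memory near O(bn) plus the n x n accumulator, which is the trade-off listed for the blocked algorithm in Table 1.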

Protocol 3.2: Validating an Approximate (Low-Rank) G-Matrix

Objective: To assess the fidelity of an approximate G-matrix generated via randomized algorithms for use in GBLUP.

Materials: As in Protocol 3.1, plus software for randomized SVD (e.g., rsvd R package, frovedis library).

Procedure:

- Exact G-Matrix Construction: Compute the exact G using a validated, exact algorithm (e.g., BLAS method) for a moderately large dataset (n=20,000).

- Approximate Construction: Compute the approximate matrix Gₖ.

a. Compute the n x k matrix Q via randomized SVD on Z (target rank k, e.g., k=2,000), taking Q = UₖSₖ (the top k left singular vectors scaled by their singular values) so that Q Q′ ≈ Z Z′.

b. Construct Gₖ = Q Q′, applying the same scaling denominator as for the exact G.

- Quantitative Assessment:

a. Frobenius Norm Error: Calculate ‖G - Gₖ‖F / ‖G‖F.

b. Eigenvalue Spectrum: Compare the top k eigenvalues of G and Gₖ.

c. GBLUP Impact: Perform a genomic prediction analysis using both G and Gₖ as covariance matrices in a simple GBLUP model. Compare the predicted breeding values (PBVs) for a validation population via correlation and mean squared error.
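A small sketch of the approximate construction and the Frobenius-norm check is given below. It implements a basic randomized range finder with power iterations directly in NumPy rather than calling a specific library, so the function name `randomized_factor` and its parameters (`oversample`, `n_iter`) are assumptions for illustration.

```python
import numpy as np

def randomized_factor(W, k, oversample=10, n_iter=2, seed=0):
    """Return Q (n x k) with Q Q' approximating W W' (randomized SVD sketch)."""
    rng = np.random.default_rng(seed)
    n, m = W.shape
    ell = min(k + oversample, n, m)           # sketch width
    Y = W @ rng.standard_normal((m, ell))     # random projection of the range of W
    for _ in range(n_iter):                   # power iterations sharpen the spectrum
        Y, _ = np.linalg.qr(Y)
        Y = W @ (W.T @ Y)
    basis, _ = np.linalg.qr(Y)                # orthonormal basis, n x ell
    B = basis.T @ W                           # small ell x m matrix
    Ub, s, _ = np.linalg.svd(B, full_matrices=False)
    U = basis @ Ub[:, :k]                     # approximate left singular vectors
    return U * s[:k]                          # Q = U_k S_k

# Simulated genotypes (illustrative sizes only)
rng = np.random.default_rng(3)
n, m = 120, 1500
M = rng.binomial(2, rng.uniform(0.1, 0.9, m), size=(n, m)).astype(float)
p = M.mean(axis=0) / 2.0
W = M - 2.0 * p
denom = 2.0 * np.sum(p * (1.0 - p))
G = W @ W.T / denom                           # exact G

errors = {}
for k in (20, 110):
    Q = randomized_factor(W, k) / np.sqrt(denom)
    Gk = Q @ Q.T                              # rank-k approximation of G
    errors[k] = np.linalg.norm(G - Gk) / np.linalg.norm(G)  # relative Frobenius error
print(errors)
```

For unstructured simulated genotypes the eigenvalue spectrum of G is fairly flat, so a small k leaves a large error; real populations with family or breed structure typically concentrate more variance in the leading eigenvalues.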

Visualizations

Title: Workflow for Efficient G-Matrix Construction

Title: Randomized Algorithm for Approximate G

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Libraries for G-Matrix Construction

| Item (Software/Library) | Category | Primary Function & Explanation |

|---|---|---|

| PLINK 2.0 | Data Management | Converts, filters, and manages large-scale SNP data in compressed binary format (.bed), essential for efficient I/O in downstream algorithms. |

| Intel Math Kernel Library (MKL) | Computational Library | Provides highly optimized BLAS routines (e.g., ?GEMM) for the matrix multiplications at the heart of G-matrix calculation, offering massive speedups. |

| OpenBLAS | Computational Library | An open-source alternative to MKL, providing optimized BLAS and LAPACK routines for various CPU architectures. |

| OpenMP/MPI | Parallel Programming | Standards for shared-memory (multi-core) and distributed-memory (multi-node) parallelization, respectively, crucial for scaling to very large n. |

| GCTA Toolkit | Specialized Software | Includes the --make-grm function, which implements efficient, blocked algorithms for constructing the G-matrix directly from PLINK files. |

| PROC GLIMMIX (SAS) | Statistical Modeling | A procedure capable of fitting mixed models with a user-supplied G-matrix, used for final GBLUP analysis after G is constructed. |

| rrBLUP or BGLR R Packages | Statistical Modeling | R packages that can accept a user-computed G-matrix to perform genomic prediction via GBLUP and related models. |

| Randomized SVD Libraries (e.g., rsvd) | Approximate Algorithm | Implements probabilistic algorithms for low-rank matrix decomposition, enabling the approximation of G for ultra-large n. |

| HDF5/NetCDF Formats | Data Storage | File formats for storing large, dense matrices like G in a compressed, chunked manner that supports partial access. |

In the context of Genomic Best Linear Unbiased Prediction (GBLUP) for large reference populations, the core computational challenge is solving the Mixed Model Equations (MMEs). These equations, fundamental for estimating genomic breeding values (GEBVs) and variance components, become intractable with standard linear algebra as population sizes (N) exceed hundreds of thousands. This document details current computational shortcuts and iterative solvers essential for scaling GBLUP to modern genomic datasets.

Core Computational Strategies and Data

Table 1: Comparison of Iterative Solvers for Large-Scale MME

| Solver Algorithm | Convergence Rate | Memory Complexity (approx.) | Best Suited For | Key Limitation |

|---|---|---|---|---|

| Preconditioned Conjugate Gradient (PCG) | Fast (if good preconditioner) | O(N²) for G-inverse | Single-trait, large N | Preconditioner design is critical |

| Modified-Successive Over-Relaxation (MSOR) | Moderate, stable | O(N²) | Multi-trait models | Slower convergence than PCG |

| Direct Sparse Cholesky | N/A (direct) | Depends on sparsity | Structured pedigrees (A-inverse) | Fails for dense genomic relationships (G) |

| Alternating Direction Implicit (ADI) | Fast for partitioned systems | O(N²/k) for k partitions | Partitioned G-matrices | Requires effective data splitting |

| Stochastic Gradient Descent (SGD) | Variable, noisy | O(N) | Extremely large N (>1M) | Results may require careful validation |

Table 2: Computational Shortcuts for GBLUP Components

| Shortcut Method | Formula/Approach | Computational Saving | Applicability |

|---|---|---|---|

| Direct G-inverse via Eigendecomposition | G = UDU', G⁻¹ = UD⁻¹U' | The decomposition itself is still O(N³), but it is computed only once; U and D can then be reused across REML iterations and variance ratios | N < 50,000 |

| SNP-based MME (SNP-BLUP) | Solve for the m marker effects using the SNP matrix Z instead of N genomic values | Avoids explicit construction of dense G; the system is m x m rather than N x N | N >> number of SNPs |

| Low-Rank G Approximation | G ≈ TT' (rank k) | Reduces memory to O(N*k), k << N | When effective population size is small |

| Randomized SVD for G | Approximates eigenvectors/values | Faster eigendecomposition for very large G | Pre-processing step for PCG |

| Parallel Computing (GPU) | Parallelized matrix-vector multiplications | Near-linear speedup for core PCG operations | All iterative methods |

Experimental Protocols

Protocol 3.1: Implementing a Preconditioned Conjugate Gradient (PCG) Solver for Single-Trait GBLUP

Objective: Solve MME Cb = r for genomic EBVs without directly inverting C. Materials: Genotype matrix (Z), phenotype vector (y), computing cluster/GPU access. Procedure:

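- Construct the MME: For model y = Xb + Zu + e with R = Iσ²e, multiplying the equations through by σ²e gives the coefficient matrix C = [[X'X, X'Z]; [Z'X, Z'Z + G⁻¹λ]] and right-hand side r = [X'y; Z'y], where λ = σ²e/σ²u. The solution vector of Cb = r stacks the fixed effects and the genomic values u.

- Choose Preconditioner (M⁻¹): A common choice is a diagonal (Jacobi) or block-diagonal preconditioner built from the diagonal elements or diagonal blocks of C. With a purely diagonal M, applying M⁻¹ reduces to an element-wise division.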

- PCG Initialization:

- Set initial solution vector b⁽⁰⁾ = 0.

- Compute initial residual r⁽⁰⁾ = r - Cb⁽⁰⁾.

- Solve Mz⁽⁰⁾ = r⁽⁰⁾ for z⁽⁰⁾.

- Set initial search direction p⁽⁰⁾ = z⁽⁰⁾.

- Iterate (k=0,1,... until ||r⁽ᵏ⁾|| < tolerance): a. Compute α⁽ᵏ⁾ = (r⁽ᵏ⁾ᵀz⁽ᵏ⁾) / (p⁽ᵏ⁾ᵀCp⁽ᵏ⁾). b. Update solution: b⁽ᵏ⁺¹⁾ = b⁽ᵏ⁾ + α⁽ᵏ⁾p⁽ᵏ⁾. c. Update residual: r⁽ᵏ⁺¹⁾ = r⁽ᵏ⁾ - α⁽ᵏ⁾Cp⁽ᵏ⁾. d. Solve Mz⁽ᵏ⁺¹⁾ = r⁽ᵏ⁺¹⁾. e. Compute β⁽ᵏ⁾ = (r⁽ᵏ⁺¹⁾ᵀz⁽ᵏ⁺¹⁾) / (r⁽ᵏ⁾ᵀz⁽ᵏ⁾). f. Update search direction: p⁽ᵏ⁺¹⁾ = z⁽ᵏ⁺¹⁾ + β⁽ᵏ⁾p⁽ᵏ⁾.

- Output: Converged solution vector b containing fixed effect estimates and GEBVs.
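The PCG loop above can be written compactly in NumPy. The sketch below uses a diagonal (Jacobi) preconditioner and a small simulated single-trait system with R = Iσ²e and Z = I; the function name `pcg`, the small ridge added to G so that it can be inverted, and the value λ = 1 are assumptions for illustration. For clarity the demo forms G⁻¹ and C explicitly, which a large-scale solver would avoid.

```python
import numpy as np

def pcg(C, r, tol=1e-8, max_iter=1000):
    """Jacobi-preconditioned conjugate gradient for C b = r (C symmetric PD)."""
    m_inv = 1.0 / np.diag(C)                  # M^-1 for a diagonal preconditioner
    b = np.zeros_like(r, dtype=float)         # b(0) = 0
    res = r - C @ b                           # r(0)
    z = m_inv * res                           # solve M z = r
    p = z.copy()                              # initial search direction
    rz = res @ z
    r_norm = np.linalg.norm(r)
    for k in range(max_iter):
        if np.linalg.norm(res) <= tol * r_norm:
            return b, k
        Cp = C @ p
        alpha = rz / (p @ Cp)
        b = b + alpha * p                     # update solution
        res = res - alpha * Cp                # update residual
        z = m_inv * res
        rz_new = res @ z
        p = z + (rz_new / rz) * p             # beta = rz_new / rz
        rz = rz_new
    return b, max_iter

# Small simulated MME (sizes and lambda are illustrative)
rng = np.random.default_rng(1)
n, m = 100, 500
M = rng.binomial(2, rng.uniform(0.1, 0.9, m), size=(n, m)).astype(float)
p_hat = M.mean(axis=0) / 2.0
W = M - 2.0 * p_hat
G = W @ W.T / (2.0 * np.sum(p_hat * (1.0 - p_hat))) + 0.01 * np.eye(n)  # ridge: assumption
y = rng.normal(size=n)
X, Z, lam = np.ones((n, 1)), np.eye(n), 1.0

C = np.block([[X.T @ X, X.T @ Z],
              [Z.T @ X, Z.T @ Z + lam * np.linalg.inv(G)]])
rhs = np.concatenate([X.T @ y, Z.T @ y])

solution, n_iter = pcg(C, rhs)
direct = np.linalg.solve(C, rhs)              # reference solution for validation
print("iterations:", n_iter)
```

The first element of `solution` is the fixed-effect estimate and the remaining n elements are the GEBVs.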

Protocol 3.2: Multi-Trait GBLUP using Modified Successive Over-Relaxation (MSOR)

Objective: Solve multi-trait MMEs where the coefficient matrix is large and block-structured. Materials: Multi-trait phenotype data, genetic covariance matrix (G₀), residual covariance matrix (R₀). Procedure:

- Form Block MME: For t traits, the MME system is a t x t block matrix, where each block corresponds to the design matrices scaled by elements of G₀⁻¹ and R₀⁻¹.

- Decompose Coefficient Matrix: Express C as C = D + L + U, where D is block diagonal, L is strictly block lower triangular, U is strictly block upper triangular.

- MSOR Iteration: For iteration v = 0,1,2,...:

- b⁽ᵛ⁺¹⁾ = (D + ωL)⁻¹ [ωr - (ωU + (ω-1)D) b⁽ᵛ⁾]

- where ω is the over-relaxation parameter (typically 1.1 to 1.5 for acceleration).

- Convergence Check: Iterate until the norm of the difference between successive solutions is below a predefined threshold (e.g., 10⁻⁸).
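The iteration can be sketched as an in-place sweep. This simplified example treats every equation as a 1 x 1 block (scalar successive over-relaxation), which is an assumption; a multi-trait implementation would update t x t trait blocks instead. The function name `sor_solve` and the test system are illustrative only.

```python
import numpy as np

def sor_solve(C, r, omega=1.2, tol=1e-8, max_iter=10_000):
    """Successive over-relaxation for C b = r (C symmetric positive definite).

    Each sweep is equivalent to
    b_new = (D + omega*L)^-1 [omega*r - (omega*U + (omega-1)*D) b_old].
    """
    n = len(r)
    b = np.zeros(n)
    for sweep in range(1, max_iter + 1):
        b_old = b.copy()
        for i in range(n):
            # Off-diagonal contribution; b[:i] already holds updated values
            sigma = C[i] @ b - C[i, i] * b[i]
            b[i] = (1.0 - omega) * b[i] + omega * (r[i] - sigma) / C[i, i]
        if np.linalg.norm(b - b_old) < tol:   # convergence check
            return b, sweep
    return b, max_iter

# Well-conditioned SPD test system (illustrative)
rng = np.random.default_rng(5)
A = rng.normal(size=(50, 50))
C = A @ A.T / 50.0 + np.eye(50)
r = rng.normal(size=50)

b_sor, n_sweeps = sor_solve(C, r, omega=1.2)
b_direct = np.linalg.solve(C, r)
print("sweeps:", n_sweeps)
```

For a symmetric positive definite coefficient matrix the sweep converges for any 0 < ω < 2; the value of ω only affects how many sweeps are needed.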

Mandatory Visualizations

Title: GBLUP MME Computational Workflow

Title: Preconditioned Conjugate Gradient (PCG) Algorithm Loop

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Computational Experiment |

|---|---|

| High-Performance Computing (HPC) Cluster | Provides parallel CPU cores and high memory for matrix operations and distributed solving of MMEs. |

| GPU Accelerators (e.g., NVIDIA A100) | Dramatically speeds up the dense matrix-vector multiplications that are the bottleneck of iterative solvers like PCG. |

| Optimized BLAS/LAPACK Libraries (Intel MKL, cuBLAS) | Low-level routines for efficient linear algebra computations, forming the backbone of any custom solver code. |

| Specialized Genetics Software (BLUPF90, MiXBLUP) | Provides validated, production-ready implementations of iterative solvers (PCG, Gauss-Seidel) for standard animal models. |

| Programming Environment (Julia, Python with NumPy/SciPy, C++) | Languages and libraries used to prototype new algorithms or customize existing solvers for specific GBLUP model variations. |

| Preconditioning Matrix (M⁻¹) | A simpler, easily invertible approximation of the MME coefficient matrix (C) that accelerates solver convergence. |

| Genetic Relationship Matrix (G) | The dense N x N matrix of genomic similarities between all individuals, central to forming the MME. |

| Variance Component Estimates (σ²u, σ²e) | Required to compute the scaling factor λ = σ²e/σ²u in the MME; often estimated via AI-REML in an outer loop. |

Within the broader thesis on Genomic Best Linear Unbiased Prediction (GBLUP) methodology for large reference populations, the practical implementation of computational pipelines is paramount. This guide details the core software toolkit—preGSf90, GCTA, and supporting R/Python packages—providing application notes and standardized protocols for researchers and drug development professionals engaged in genomic prediction and variance component estimation.

The table below summarizes the core functions, optimal use cases, and quantitative performance benchmarks for the primary software tools in large-scale GBLUP analyses.

Table 1: Core Software Toolkit for GBLUP Implementation

| Software/Tool | Primary Function | Key Strength | Typical Scale (Samples x SNPs) | Common Outputs |

|---|---|---|---|---|

| preGSf90 | Pre/Post-processing for BLUPF90 suite | Efficient handling of pedigree + genomic relationships, inversion of blended H matrix. | Up to ~1M animals, ~50K-800K SNPs | GRM, inverted relationship matrices, adjusted phenotypes. |

| GCTA | Genome-wide Complex Trait Analysis | Robust REML for variance component estimation, MLMA, GWAS, large GRM construction. | Up to ~500K individuals, ~1M SNPs | Variance components, heritability, genomic predictions, GRM. |

| R: sommer | Mixed model solving in R | Flexible user interface, various covariance structures, integrates with R's stats ecosystem. | Up to ~50K individuals (RAM-limited) | BLUPs, variance components, stability analysis. |

| Python: PyStan | Bayesian mixed models | Customizable prior distributions, full Bayesian inference for uncertainty quantification. | Model complexity-limited (~10-20K individuals) | Posterior distributions of GEBVs and variance parameters. |

Experimental Protocols

Protocol 2.1: Genomic Relationship Matrix (GRM) Construction & Quality Control

Objective: To construct a robust GRM from high-density SNP data for downstream GBLUP analysis. Materials: Genotype data in PLINK binary format (.bed, .bim, .fam). Software: GCTA. Procedure:

- Data QC: Execute `plink --bfile data --maf 0.01 --hwe 1e-6 --geno 0.1 --mind 0.1 --make-bed --out data_qc`.

- GRM Calculation: Run `gcta64 --bfile data_qc --autosome --make-grm --out data_grm`. This computes the GRM from per-SNP standardized genotypes, which corresponds to the second method of VanRaden (2008).

- GRM Quality Check: Use `gcta64 --grm data_grm --grm-cutoff 0.05 --make-grm --out data_grm_clean` to remove one individual from each pair with relatedness > 0.05 to avoid bias.

- GRM Visualization: In R, use the `heatmap()` function on a subset of the GRM (read from the binary `data_grm.grm.bin` and `data_grm.grm.id` files written by GCTA) to inspect structure.

Protocol 2.2: Single-Step GBLUP (ssGBLUP) Implementation

Objective: To integrate genomic and pedigree information for genetic evaluation using a single-step approach.

Materials: Phenotype file (pheno.txt), pedigree file (pedigree.txt), genotype file (geno.txt), parameter file.

Software: preGSf90 & blupf90 family.

Procedure:

- Data Preparation: Format files as per BLUPF90 requirements. The genotype file typically has one row per animal, containing the animal ID followed by its SNP genotypes (animals x SNPs).

- Run preGSf90: Execute `preGSf90 par.txt` to perform quality control on genotypes, construct the inverse of the blended H matrix (H⁻¹ = A⁻¹ + [[0, 0]; [0, G⁻¹ - A₂₂⁻¹]], where A₂₂ is the pedigree relationship block for the genotyped animals), and create sparse formatted files.

- Run blupf90: Execute `blupf90 par.txt` to solve the mixed model equations (y = Xb + Zu + e) using the prepared H⁻¹.

- Post-Processing: Use `postGSf90` to back-solve SNP effects from the GEBV solutions and convert solutions to readable formats. Reliabilities of genomic predictions generally require a separate approximation step.

Mandatory Visualization

Diagram 1: ssGBLUP Software Workflow and Data Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Research Reagents

| Reagent (Software/Module) | Function in Experiment | Key Parameter/Setting |

|---|---|---|

| GCTA `--make-grm` | Constructs the genomic relationship matrix, the core "reagent" for genomic covariance. | `--grm-cutoff` (filter relatedness), `--autosome` (use autosomes only). |

| preGSf90 --prepare | Prepares and inverts the blended relationship matrix (H) for single-step analysis. | Tau & Omega (scaling factors for G⁻¹ and A₂₂⁻¹, respectively). |

| BLUPF90 PARAMETER file | The "reaction buffer" specifying the statistical model, files, and solver settings. | EFFECT (fixed/random), RANDOM (type of covariance structure), OPTION solv_method FSPAK. |

| R asreml or sommer package | Provides a flexible environment for alternative model testing and diagnostics. | `vs()` function for variance structures (sommer), `ginverse` for custom relationships (asreml). |

| Python numpy & pandas | Essential for data manipulation, reformatting, and preliminary summary statistics. | `DataFrame.merge()` (join phenotype and genotype tables), `numpy.linalg` for custom matrix operations. |

Genomic Best Linear Unbiased Prediction (GBLUP) is a cornerstone methodology for predicting complex traits from genome-wide marker data. Within large reference populations, GBLUP leverages a genomic relationship matrix (GRM) to estimate the genetic merit (breeding value) of individuals. Translating these breeding values into clinically interpretable scores for disease risk and drug response is a critical step for precision medicine. This document provides application notes and protocols for this translation process, framed within a thesis on advancing GBLUP for large-scale biomedical cohorts.

Core Quantitative Outputs of GBLUP and Their Interpretation

GBLUP analysis yields several key outputs. The summarized data below provides a comparative overview of their roles in breeding versus clinical contexts.

Table 1: Core GBLUP Outputs and Their Dual Interpretation

| Output | Definition in Breeding/Genetics | Clinical Translation & Interpretation |

|---|---|---|

| Estimated Breeding Value (EBV) | The sum of the effects of all genetic markers, representing an individual's genetic merit for a trait relative to the population mean. | Polygenic Score (PGS) / Genetic Liability: Represents an individual's innate genetic predisposition for a disease or drug metabolism phenotype. Often scaled to a more interpretable risk percentile. |

| Genomic Relationship Matrix (GRM) | A matrix quantifying the genetic similarity between individuals based on shared alleles, correcting for population structure. | Genetic Similarity Network: Informs on population stratification and cryptic relatedness in the cohort, which are confounders for clinical prediction. Essential for validating model assumptions. |

| Variance Components (σ²g, σ²e) | The estimated genetic (σ²g) and residual (σ²e) variances. Heritability (h²) = σ²g / (σ²g + σ²e). | Trait Heritability: Indicates the upper bound of predictive accuracy achievable from genetic data alone. Low h² suggests greater role of environment or more complex genetics. |

| Prediction Accuracy (rgg) | The correlation between genomic estimated breeding values (GEBVs) and true breeding values in validation sets. | Clinical Predictive Performance: Often measured as the correlation between the PGS and the observed clinical endpoint (e.g., ICD code, lab value) or the Area Under the ROC Curve (AUC) for case-control status. |

Table 2: Scaling GBLUP Outputs to Clinical Risk Categories (Hypothetical Example for CVD Risk)

| GBLUP Output (Scaled PGS) | Percentile Range | Proposed Clinical Risk Category | Suggested Action (Example) |
|---|---|---|---|
| High Genetic Risk | > 95th | Significantly Elevated | Aggressive primary prevention (e.g., early statin therapy, lifestyle intervention). |
| Moderate-High Risk | 75th - 95th | Elevated | Enhanced monitoring and standard prevention guidelines. |
| Average Risk | 25th - 75th | Average | Population-based screening and prevention. |
| Low-Moderate Risk | 5th - 25th | Below Average | Reassurance, maintain healthy behaviors. |
| Low Genetic Risk | < 5th | Significantly Below Average | Reassurance. |
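The hypothetical cut-offs in Table 2 can be applied programmatically once percentiles are available. The sketch below is only an illustration: the function name `risk_category` is hypothetical, and the table does not say how to treat a percentile that falls exactly on a cut-off, so the code assumes such values are assigned toward the Average category.

```python
def risk_category(percentile: float) -> str:
    """Map a PGS percentile (0-100) to the hypothetical categories of Table 2.

    Assumption: values exactly on a cut-off (5, 25, 75, 95) are assigned
    toward the 'Average' side, because the table leaves this unspecified.
    """
    if not 0 <= percentile <= 100:
        raise ValueError("percentile must lie between 0 and 100")
    if percentile > 95:
        return "Significantly Elevated"
    if percentile > 75:
        return "Elevated"
    if percentile >= 25:
        return "Average"
    if percentile >= 5:
        return "Below Average"
    return "Significantly Below Average"


if __name__ == "__main__":
    for pct in (2, 10, 50, 80, 97):
        print(pct, risk_category(pct))
```

In a real deployment the thresholds would need to be calibrated against absolute risk in the target population rather than taken from this example table.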

Protocols for Translating GBLUP to Clinical Scores

Protocol 3.1: From Breeding Values to Standardized Polygenic Scores

Objective: Transform raw GBLUP EBVs into standardized, interpretable polygenic scores for clinical evaluation.

- Input: Raw GBLUP EBVs for all individuals in the reference and target cohorts.

- Standardization: Within a representative hold-out subset or the original reference population, standardize the EBVs to have a mean of 0 and a standard deviation of 1.

  PGS_std = (EBV_individual - μ_EBV_reference) / σ_EBV_reference

- Percentile Ranking: Calculate the percentile rank of each individual's PGS_std against the reference distribution.

- Optional Scaling to Liability: For case-control traits, scale the PGS_std using the observed disease prevalence and estimated heritability to place it on a liability threshold scale.

- Output: A table for each individual containing: Individual ID, Raw EBV, PGS_std, PGS_percentile.
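The standardization and percentile-ranking steps of Protocol 3.1 can be sketched with the Python standard library alone. The function name, the dictionary keys, and two choices are assumptions made for illustration: the population standard deviation (`pstdev`) is used for σ_EBV_reference, and the percentile is defined as the share of reference individuals whose score is less than or equal to the individual's score. The optional liability scaling is not shown.

```python
from bisect import bisect_right
from statistics import mean, pstdev


def standardize_and_rank(reference_ebvs, target_ebvs):
    """Standardize EBVs against a reference and attach percentile ranks.

    reference_ebvs: list of raw EBVs from the reference population.
    target_ebvs: dict mapping individual ID -> raw EBV.
    """
    mu = mean(reference_ebvs)
    sigma = pstdev(reference_ebvs)  # assumption: population SD of the reference
    if sigma == 0:
        raise ValueError("reference EBVs have zero variance")

    # Sorted standardized reference scores allow a binary-search percentile lookup.
    ref_sorted = sorted((x - mu) / sigma for x in reference_ebvs)

    table = []
    for ind_id, ebv in target_ebvs.items():
        pgs_std = (ebv - mu) / sigma
        # Share of the reference distribution at or below this score.
        percentile = 100.0 * bisect_right(ref_sorted, pgs_std) / len(ref_sorted)
        table.append({
            "id": ind_id,
            "raw_ebv": ebv,
            "pgs_std": pgs_std,
            "pgs_percentile": percentile,
        })
    return table


if __name__ == "__main__":
    reference = [1.0, 2.0, 3.0, 4.0, 5.0]
    for row in standardize_and_rank(reference, {"A": 3.0, "B": 5.0}):
        print(row)
```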

Protocol 3.2: Validating Clinical Predictive Performance

Objective: Assess the real-world predictive accuracy of the GBLUP-derived PGS.

- Data Partitioning: Split the genotyped and phenotyped cohort into a training set (≥70%) for GBLUP model training and a strictly independent test set (≤30%) for validation.

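- Model Training: Run GBLUP on the training set to estimate variance components and genomic estimated breeding values (GEBVs); marker effects can be back-solved from the GEBVs if needed.

- Score Generation: Apply the trained model to generate PGS for individuals in the test set, for example by applying back-solved marker effects to the test genotypes or by including test individuals in G without their phenotypes.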

- Performance Metrics Calculation:

  - For continuous traits (e.g., LDL cholesterol): Calculate the Pearson correlation (r) between the PGS and the measured phenotype.

  - For binary traits (e.g., Type 2 Diabetes case/control): Calculate the Area Under the Receiver Operating Characteristic Curve (AUC-ROC). Generate the ROC curve by plotting sensitivity vs. (1-specificity) across all PGS thresholds.

- Output: Validation report containing correlation coefficients, AUC, and associated confidence intervals.
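The two performance metrics named in Protocol 3.2 can be computed directly from test-set scores. The following is a minimal sketch with hypothetical function names. The AUC is computed from its probabilistic definition (the chance that a randomly chosen case has a higher PGS than a randomly chosen control, with ties counted as one half), which equals the area under the ROC curve. The pairwise loop is quadratic in cohort size, so a rank-based implementation would be preferable for large test sets. Confidence intervals are not covered here.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between PGS values x and a continuous phenotype y."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def auc_roc(scores, labels):
    """AUC for binary labels (1 = case, 0 = control) via pairwise comparison."""
    cases = [s for s, lab in zip(scores, labels) if lab == 1]
    controls = [s for s, lab in zip(scores, labels) if lab == 0]
    if not cases or not controls:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for case_score in cases:
        for control_score in controls:
            if case_score > control_score:
                wins += 1.0
            elif case_score == control_score:
                wins += 0.5  # ties contribute half a concordant pair
    return wins / (len(cases) * len(controls))


if __name__ == "__main__":
    print(pearson_r([0.1, 0.5, 0.9], [100.0, 120.0, 150.0]))
    print(auc_roc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))
```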

Protocol 3.3: Integrating PGS with Clinical Covariates for Enhanced Prediction

Objective: Build a combined model integrating genetic and non-genetic risk factors.

- Input: PGS (from Protocol 3.1), clinical covariates (e.g., age, sex, BMI, smoking status), and the target clinical endpoint for the test set.

- Model Specification: Fit a multiple logistic (for binary) or linear (for continuous) regression model:

  Clinical Endpoint ~ PGS + Age + Sex + BMI + ...

- Model Comparison: Compare the performance (AUC or r²) of:

  - Model A: Clinical covariates only.

  - Model B: PGS only.

  - Model C: Clinical covariates + PGS.

- Net Reclassification Improvement (NRI): Quantify the improvement in risk classification when adding PGS to the clinical model, focusing on predefined risk categories (see Table 2).

- Output: A final integrated risk score equation, performance metrics for all models, and NRI analysis.
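The categorical NRI used in Protocol 3.3 sums two components: the net proportion of events (cases) moved to a higher risk category, and the net proportion of non-events (controls) moved to a lower one. The sketch below assumes that risk categories are encoded as integers where a larger value means higher risk; the function name and return layout are hypothetical.

```python
def net_reclassification_improvement(old_category, new_category, events):
    """Categorical NRI when moving from an old model to a new model.

    old_category, new_category: integer risk category per individual
        (assumption: larger integer = higher risk).
    events: 1 for individuals with the outcome, 0 otherwise.
    Returns (nri_total, nri_events, nri_nonevents).
    """
    up_events = down_events = up_nonevents = down_nonevents = 0
    n_events = sum(1 for e in events if e == 1)
    n_nonevents = len(events) - n_events
    if n_events == 0 or n_nonevents == 0:
        raise ValueError("need both events and non-events")

    for old, new, event in zip(old_category, new_category, events):
        if new > old:  # reclassified upward
            if event == 1:
                up_events += 1
            else:
                up_nonevents += 1
        elif new < old:  # reclassified downward
            if event == 1:
                down_events += 1
            else:
                down_nonevents += 1

    nri_events = (up_events - down_events) / n_events
    nri_nonevents = (down_nonevents - up_nonevents) / n_nonevents
    return nri_events + nri_nonevents, nri_events, nri_nonevents


if __name__ == "__main__":
    clinical_only = [1, 1, 2, 2, 1, 2]   # categories from Model A
    clinical_pgs = [2, 1, 2, 1, 1, 1]    # categories from Model C
    outcome = [1, 1, 1, 0, 0, 0]
    print(net_reclassification_improvement(clinical_only, clinical_pgs, outcome))
```

Reporting the event and non-event components separately is useful because a single total can hide improvement in one group offset by deterioration in the other.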

Visualizing Workflows and Relationships

Figure: GBLUP to Clinical Score Translation Workflow

Figure: Relationship Between PGS, Environment, and Clinical Outcome

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Tools for GBLUP Clinical Translation Studies

| Item / Reagent | Function / Purpose | Example/Note |
|---|---|---|
| High-Density SNP Genotyping Array | Provides genome-wide marker data to build the Genomic Relationship Matrix (GRM). | Illumina Global Screening Array, UK Biobank Axiom Array. Essential for large-scale cohort genotyping. |
| Whole Genome Sequencing (WGS) Data | Gold standard for variant discovery; enables more comprehensive GRM construction, including rare variants. | Used in top-tier reference populations (e.g., All of Us, Genomics England). |
| GBLUP Software | Performs the core statistical analysis to estimate variance components and breeding values. | GCTA, BLUPF90, MTG2, BGLR. Choice depends on model complexity and dataset size. |
| PLINK | Universal toolset for genome association analysis, data management, and format conversion pre/post-GBLUP. | Critical for QC, filtering, and preparing genotype inputs in .bed/.bim/.fam format. |
| R Statistical Environment with key packages | Data manipulation, statistical analysis, visualization, and clinical metric calculation. | `rrBLUP`, `pROC` or `caret` (for AUC), `PredictABEL` (for NRI), `ggplot2`. The primary platform for protocol execution. |
| Curated Clinical Phenotype Database | Accurate, deep phenotyping of disease status, drug response metrics, and relevant covariates. | EHR-derived phenotypes with expert curation. Quality here limits predictive performance. |
| High-Performance Computing (HPC) Cluster | Necessary for the intensive linear algebra operations (inverting GRM) on cohorts with N > 10,000. | Cloud (AWS, GCP) or on-premise clusters with sufficient RAM and parallel processing capabilities. |

Overcoming Hurdles: Solving Computational and Statistical Challenges in Large GBLUP Analyses

Application Notes

The implementation of Genomic Best Linear Unbiased Prediction (GBLUP) for large reference populations (>100,000 individuals genotyped for >500,000 markers) presents substantial computational challenges. The core bottleneck is the Genomic Relationship Matrix (G): storing it scales quadratically with population size (O(n²)), and inverting it directly scales cubically (O(n³)). The following notes detail strategies to manage these demands.

Table 1: Computational Complexity & Resource Estimates for GBLUP on Large Populations

| Population Size (n) | Markers (m) | Full G Matrix Size (RAM, double precision) | Direct Inversion Time (Est.) | Recommended Approach |
|---|---|---|---|---|
| 50,000 | 500K | ~20 GB | 10-20 hours | Single node, optimized BLAS, 128+ GB RAM |
| 100,000 | 500K | ~80 GB | 2-4 days | Distributed memory (MPI), out-of-core solvers |
| 250,000 | 500K | ~500 GB | Infeasible | Algorithmic reformulation (e.g., SNP-BLUP), Iterative solvers (PCG) |
| 1,000,000 | 50K | ~8 TB | Infeasible | Partitioned GRM, Cloud-based distributed computing |
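The memory column of Table 1 follows from simple arithmetic: a dense n × n matrix of 8-byte doubles occupies 8n² bytes. The short sketch below reproduces those figures (using decimal gigabytes, 1 GB = 10⁹ bytes). The function name and the `symmetric_packed` option, which stores only one triangle of the symmetric matrix, are illustrative assumptions rather than features of any particular GBLUP package.

```python
def grm_memory_gb(n, bytes_per_element=8, symmetric_packed=False):
    """Approximate RAM needed for a dense n x n relationship matrix, in GB.

    bytes_per_element: 8 for double precision, 4 for single precision.
    symmetric_packed: if True, count only the n(n+1)/2 unique elements.
    """
    elements = n * (n + 1) // 2 if symmetric_packed else n * n
    return elements * bytes_per_element / 1e9


if __name__ == "__main__":
    for n in (50_000, 100_000, 250_000, 1_000_000):
        full = grm_memory_gb(n)
        single = grm_memory_gb(n, bytes_per_element=4)
        print(f"n={n:>9,}: {full:>8,.0f} GB double, {single:>8,.0f} GB single")
```

Single precision halves these figures, and packed symmetric storage roughly halves them again, which is why both are common first steps before moving to distributed or matrix-free approaches.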

Key Strategies:

- Memory Management: Beyond n ≈ 50k, holding the full G matrix in RAM becomes increasingly impractical (about 80 GB at n = 100k in double precision). Strategies include:

  - Out-of-Core Computation: Keeping G on fast SSDs and streaming blocks through memory (e.g., memory-mapped arrays or chunked HDF5), so that no operation requires the whole matrix in RAM.

  - On-the-Fly Computation: Computing elements of G as needed within iterative solvers, trading memory for CPU cycles.

- Storage Optimization: Utilizing compressed data formats (e.g., PLINK's .bed, Zarr arrays) for genotype storage. The relationship matrix can be stored in sparse or low-rank formats if applicable.

- Parallel Processing: A hybrid approach is often optimal.

  - MPI (Message Passing Interface): Distributes the genotype matrix or segments of G across multiple nodes for large-scale parallel operations.

  - OpenMP/Threading: Parallelizes operations on shared-memory chunks within a single node (e.g., matrix multiplications).

  - GPU Acceleration: Offloading dense linear algebra operations (BLAS Level 3) to GPUs can yield 10-50x speedups relative to CPU execution, depending on hardware and numerical precision.
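The on-the-fly strategy rests on one identity: if G = ZZ' / k, then the product Gv needed by an iterative solver equals Z(Z'v) / k, which never forms the n × n matrix. The NumPy sketch below illustrates this on a small simulated genotype matrix. It assumes Z is the column-centered allele-count matrix and k = 2Σp(1−p), as in VanRaden's first method; the function names are hypothetical and the data are random, not real genotypes.

```python
import numpy as np


def centered_genotypes(allele_counts):
    """Center an n x m matrix of 0/1/2 allele counts by twice the allele frequency.

    Returns (Z, k) such that G = Z Z' / k, with k = 2 * sum(p * (1 - p)).
    Assumes at least one marker is polymorphic, so that k > 0.
    """
    p = allele_counts.mean(axis=0) / 2.0
    Z = allele_counts - 2.0 * p
    k = 2.0 * np.sum(p * (1.0 - p))
    return Z, k


def grm_matvec(Z, k, v):
    """Compute G v as Z (Z' v) / k: O(n m) work and no n x n storage."""
    return Z @ (Z.T @ v) / k


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    M = rng.integers(0, 3, size=(200, 1000)).astype(float)
    Z, k = centered_genotypes(M)
    v = rng.standard_normal(200)

    explicit = (Z @ Z.T / k) @ v      # forms G explicitly (for comparison only)
    implicit = grm_matvec(Z, k, v)    # never forms G
    print(np.allclose(explicit, implicit))
```

The trade-off is the one described above: each product costs two passes over the genotype matrix instead of one pass over G, which pays off when n is large relative to the memory available.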

Experimental Protocols

Protocol 1: Setting Up a Distributed GBLUP Analysis using Iterative Solvers

Objective: To estimate genomic breeding values for a population of n=120,000 with m=650,000 SNPs using limited cluster resources.

Materials: High-performance computing cluster with MPI, optimized BLAS libraries (e.g., Intel MKL, OpenBLAS), and the software preGSf90 from the BLUPF90 suite.

Procedure:

- Data Preparation: Convert genotype data to a compressed binary format. Calculate the G matrix using a distributed MPI method (e.g., the `--mpi` flag in `preGSf90`).

- Model Formulation: Define the mixed linear model: y = Xb + Zg + e, where g ~ N(0, Gσ²_g).

- Solver Configuration: Employ the Preconditioned Conjugate Gradient (PCG) method. Use a diagonal preconditioner to improve convergence.

- Parallel Execution:

  - Partition the genotype data across P MPI processes.

  - Each process computes its segment of the matrix-vector product Gv required in each PCG iteration.

  - The master process aggregates results, updates the solution vector, and checks convergence.

- Post-analysis: Collect distributed results to estimate SNP effects and breeding values.
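The solver logic of Protocol 1 can be illustrated on a single machine. The sketch below is a serial NumPy version of a diagonally preconditioned conjugate gradient, not the BLUPF90 implementation. It makes several simplifying assumptions: one record per individual (so the incidence matrix Z of the model is the identity), fixed effects already removed from the phenotype (`y_adj`), a known variance ratio `lam` = σ²_e / σ²_g, and G = WW' / k where W is the centered genotype matrix (written W here to avoid clashing with the incidence matrix Z). Under these assumptions the breeding values are ĝ = G (G + λI)⁻¹ y_adj.

```python
import numpy as np


def pcg(matvec, b, diag, tol=1e-9, max_iter=1000):
    """Solve A x = b for symmetric positive definite A with a Jacobi preconditioner.

    matvec: function returning A @ v; diag: diagonal of A.
    Stops when ||A x - b|| / ||b|| < tol. Returns (x, iterations).
    """
    b = np.asarray(b, dtype=float)
    x = np.zeros_like(b)
    b_norm = np.linalg.norm(b)
    if b_norm == 0.0:
        return x, 0
    r = b.copy()          # residual for the zero starting vector
    z = r / diag          # apply the diagonal preconditioner
    p = z.copy()
    rz = r @ z
    for iteration in range(1, max_iter + 1):
        Ap = matvec(p)
        alpha = rz / (p @ Ap)
        x += alpha * p
        r -= alpha * Ap
        if np.linalg.norm(r) / b_norm < tol:
            return x, iteration
        z = r / diag
        rz_new = r @ z
        p = z + (rz_new / rz) * p
        rz = rz_new
    return x, max_iter


def gblup_pcg(W, k, y_adj, lam):
    """Matrix-free GBLUP: g_hat = G (G + lam I)^-1 y_adj with G = W W' / k."""
    def matvec(v):
        return W @ (W.T @ v) / k + lam * v

    diag = np.einsum("ij,ij->i", W, W) / k + lam   # diag(G) + lam
    alpha, iterations = pcg(matvec, y_adj, diag)
    return W @ (W.T @ alpha) / k, iterations


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    M = rng.integers(0, 3, size=(300, 2000)).astype(float)
    p = M.mean(axis=0) / 2.0
    W, k = M - 2.0 * p, 2.0 * np.sum(p * (1.0 - p))
    y = rng.standard_normal(300)
    g_hat, n_iter = gblup_pcg(W, k, y, lam=1.0)
    print(n_iter, g_hat[:3])
```

In a distributed run, the two products inside `matvec` are the parts that would be split across MPI processes, with each process holding a block of W and the partial results summed before the solution vector is updated.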

Protocol 2: Comparative Benchmarking of GBLUP Solver Implementations

Objective: To evaluate the performance and scalability of direct vs. iterative solvers for GBLUP.

Materials: Genotype dataset (n=50,000, m=100,000), compute nodes with 256GB RAM, 32 cores, NVIDIA V100 GPU (optional). Software: BLUPF90 (direct/iterative), GCTA (direct), custom Python/NumPy script with CuPy for GPU.

Procedure:

- Baseline Measurement: Using BLUPF90's direct solver, compute G and its inverse, recording total wall-clock time and peak memory usage (via `/usr/bin/time -v`).

- Iterative Solver Test: Run BLUPF90 with the PCG solver option on the same dataset. Record time per iteration, total iterations to convergence (e.g., ||Ax-b||/||b|| < 10⁻⁹), and total time.

- GPU-Accelerated Test: Implement the core G matrix multiplication kernel (`ZZ' / k`) using CuPy. Benchmark the time for this operation against a NumPy/OpenBLAS CPU implementation.

- Scalability Test: Repeat steps 1-3 on synthetic datasets of size n=10k, 25k, 50k, 75k.

- Data Analysis: Plot time/memory versus n. Determine the crossover point where iterative methods become more efficient than direct inversion.
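A small timing harness shows the shape of the scalability test before committing cluster time. The sketch below is a CPU-only NumPy stand-in and does not call BLUPF90, GCTA, or CuPy. It times the `ZZ' / k` kernel and a direct dense solve on synthetic genotypes of increasing n; the function names, default sizes, and the variance ratio `lam` are illustrative assumptions.

```python
import time

import numpy as np


def best_time(fn, repeats=3):
    """Return the fastest wall-clock time of several calls to fn()."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        best = min(best, time.perf_counter() - start)
    return best


def benchmark_direct(sizes, m=500, lam=1.0, seed=0):
    """Time GRM construction and a direct solve for each population size."""
    rng = np.random.default_rng(seed)
    records = []
    for n in sizes:
        M = rng.integers(0, 3, size=(n, m)).astype(float)  # synthetic genotypes
        p = M.mean(axis=0) / 2.0
        Z = M - 2.0 * p
        k = 2.0 * np.sum(p * (1.0 - p))
        y = rng.standard_normal(n)

        grm_seconds = best_time(lambda: Z @ Z.T / k)
        G = Z @ Z.T / k
        A = G + lam * np.eye(n)
        solve_seconds = best_time(lambda: np.linalg.solve(A, y))

        records.append({
            "n": n,
            "grm_seconds": grm_seconds,
            "solve_seconds": solve_seconds,
            "grm_bytes": G.nbytes,
        })
    return records


if __name__ == "__main__":
    for rec in benchmark_direct([250, 500, 1000]):
        print(rec)
```

Plotting `solve_seconds` against n on a log-log scale should show a slope near 3 for the direct solve, which is the baseline against which an iterative solver's crossover point can be judged.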

Visualization

Figure: GBLUP Computational Workflow Decision Tree

Figure: Hybrid MPI+OpenMP Parallel Architecture

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Large-Scale GBLUP

| Item | Function/Description | Example/Note |
|---|---|---|
| BLAS/LAPACK Libraries | Optimized low-level routines for linear algebra (matrix multiplication, inversion). Critical for performance. | Intel MKL, OpenBLAS, NVIDIA cuBLAS (for GPU). |
| Distributed Computing Framework | Enables parallel processing across multiple compute nodes. | MPI (OpenMPI, MPICH), Apache Spark (for fault-tolerant processing). |
| Iterative Solver Library | Provides robust implementations of Conjugate Gradient and related algorithms. | PETSc, SLEPc (for eigenvalue problems). |
| Compressed Genotype Format | Reduces storage I/O overhead, enabling faster data loading. | PLINK .bed, Zarr array, HDF5 with chunking. |
| High-Performance File System | Fast, parallel storage for handling massive intermediate files (e.g., G matrix). | Lustre, BeeGFS, or cloud object storage with caching. |
| Profiling & Monitoring Tools | Measure resource usage (memory, CPU, I/O) to identify bottlenecks. | perf, vtune, nvprof (for GPU), Slurm job statistics. |
| Containerization Platform | Ensures reproducibility and simplifies software deployment on clusters/cloud. | Docker, Singularity/Apptainer. |

Within the broader thesis on Genomic Best Linear Unbiased Prediction (GBLUP) methodology for large reference populations, optimizing model parameters is critical for enhancing the accuracy of genomic estimated breeding values (GEBVs) and variance component estimation. Two foundational parameters are the trait heritability (h²) and the inclusion of appropriate fixed effects. Mis-specification of these parameters can lead to biased genomic predictions and reduced utility in breeding programs and biomedical trait mapping. This protocol details the methodologies for robust heritability estimation and systematic fixed effect testing within the GBLUP framework.

Application Notes on Heritability Estimation

Heritability, the proportion of phenotypic variance attributable to additive genetic effects, directly influences the shrinkage applied to marker effects in GBLUP. Accurate estimation is paramount.
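The link between heritability and shrinkage can be written out explicitly. The expressions below are a sketch under the assumptions that each individual has one record (so the incidence matrix Z of the mixed model is the identity) and that G is scaled as in g ~ N(0, Gσ²_g); λ denotes the variance ratio that enters the mixed model equations.

```latex
\lambda = \frac{\sigma^2_e}{\sigma^2_g} = \frac{1 - h^2}{h^2},
\qquad
\hat{g} = G\,(G + \lambda I)^{-1}\,(y - X\hat{b})
```

For example, h² = 0.5 gives λ = 1, whereas h² = 0.2 gives λ = 4, so a lower heritability pulls the predicted breeding values more strongly toward zero. An overestimated h² therefore under-shrinks the predictions, and an underestimated h² over-shrinks them.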