Mitigating Technical Variability in RNA-seq Analysis: A 2024 Guide for Biomedical Researchers

Abstract

This comprehensive guide addresses the pervasive challenge of high technical variability in RNA-seq data, which can obscure true biological signals and compromise downstream analysis. We first explore the core sources of technical noise, from library preparation to sequencing platforms. We then detail robust methodological pipelines and normalization strategies specifically designed to handle variable data. The article provides a troubleshooting framework for diagnosing and correcting technical artifacts, and concludes with best practices for validating results and benchmarking analysis tools. Tailored for researchers and drug development professionals, this resource offers actionable strategies to enhance the reliability and reproducibility of transcriptomic studies.

Understanding the Sources: What Drives High Technical Variability in RNA-seq?

In RNA-seq data analysis, distinguishing between technical and biological variability is crucial for deriving accurate biological conclusions. Technical variability arises from measurement error and protocol inconsistencies, while biological variability reflects true differences between biological subjects or conditions. This guide provides a technical support framework for troubleshooting common issues in experiments with high technical variability.

Troubleshooting Guides & FAQs

Q1: My PCA plot shows batch effects separating my samples by sequencing run, not by treatment group. What should I do?

A: This pattern points to a batch effect, a form of high technical variability. First, verify it with a control that is shared across runs (e.g., the same reference sample or technical replicates sequenced in each run). Proceed with batch effect correction only after confirming that the effect is technical. Use methods such as ComBat-seq or RUVSeq, but apply them cautiously and document the adjustment, because over-correction can remove biological signal.

Q2: I have high replicate-to-replicate variability in gene expression counts. How can I determine if it's technical or biological?

A: Implement an experimental design that includes both technical replicates (same biological sample processed multiple times) and biological replicates (different samples from the same condition). Analyze the variance components.

Key Experiment Protocol: Variance Component Analysis

- Design: For one condition, include 3 distinct biological subjects. From each subject, create 2 library preps (technical replicates). Sequence all 6 libraries.

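- Analysis: Use a linear mixed model (e.g., `lmer` in R) with log-normalized counts for a housekeeping gene: `Expression ~ 1 + (1 | Biological_Subject)`. With one observation per library prep, the random-effect variance estimates the biological component and the residual variance estimates the technical (library prep) component. A separate random effect for each technical replicate would have as many levels as observations and could not be distinguished from the residual.

- Interpretation: A high variance for the technical term relative to the biological term suggests protocol issues dominate.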
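As a rough illustration of the variance component idea, the sketch below uses the classical method-of-moments (one-way random-effects ANOVA) estimator instead of a fitted mixed model. It assumes a balanced design such as the 3 subjects x 2 library preps described above; the function name and the expression values are hypothetical.

```python
from statistics import mean

def variance_components(groups):
    """Method-of-moments estimates for a balanced one-way random-effects design.

    groups: list of lists; each inner list holds the technical replicates
    (log-normalized expression values) of one biological subject.
    Returns (biological_variance, technical_variance).
    """
    k = len(groups)       # number of biological subjects
    n = len(groups[0])    # technical replicates per subject
    if k < 2 or n < 2 or any(len(g) != n for g in groups):
        raise ValueError("need a balanced design with >=2 subjects and >=2 replicates")
    grand = mean(x for g in groups for x in g)
    subject_means = [mean(g) for g in groups]
    # Mean squares between subjects and within subjects
    ms_between = n * sum((m - grand) ** 2 for m in subject_means) / (k - 1)
    ms_within = sum(
        (x - m) ** 2 for g, m in zip(groups, subject_means) for x in g
    ) / (k * (n - 1))
    technical = ms_within
    # Truncate at zero: the moment estimator can go negative by chance
    biological = max(0.0, (ms_between - ms_within) / n)
    return biological, technical

# Hypothetical log2 expression of one housekeeping gene: 3 subjects x 2 preps
example = [[10.0, 10.2], [11.0, 10.8], [9.0, 9.2]]
bio_var, tech_var = variance_components(example)
print(f"biological={bio_var:.3f} technical={tech_var:.3f}")
```

In this made-up example the biological component is far larger than the technical one, which is the pattern expected when the protocol is well controlled.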

Q3: My negative controls (e.g., blank library prep) show non-zero read counts. Is this a problem?

A: Yes, this indicates contamination or index hopping, contributing to technical noise. First, ensure lab hygiene and use of unique dual indices (UDIs) to mitigate index hopping. To diagnose, sequence a negative control (water) in every batch. If counts are high, the entire batch data may be suspect.

Protocol: Monitoring Contamination with Negative Controls

- Include a negative control (nuclease-free water) in every library preparation batch.

- Sequence alongside experimental samples.

- Post-sequencing, map the control reads. A significant number of alignments (>0.01% of total reads in the control) suggests reagent or environmental contamination. Consider re-preparing the affected batch.
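The check in the last step can be scripted. The sketch below is one possible reading of the threshold: it assumes the 0.01% refers to the fraction of each control's reads that align to the reference. The function name, batch labels, and read counts are hypothetical.

```python
def flag_contaminated_batches(controls, threshold=1e-4):
    """Return batches whose negative control exceeds the alignment threshold.

    controls: dict mapping batch id -> (aligned_reads, total_reads) for the
    nuclease-free water control of that batch.
    threshold: fraction of control reads that align (1e-4 == 0.01%).
    """
    flagged = []
    for batch, (aligned, total) in controls.items():
        if total <= 0:
            raise ValueError(f"batch {batch!r} has no control reads")
        if aligned / total > threshold:
            flagged.append(batch)
    return sorted(flagged)

# Hypothetical control statistics for two library preparation batches
controls = {
    "batch_A": (12, 500_000),    # 0.0024% aligned: below threshold
    "batch_B": (900, 450_000),   # 0.2% aligned: above threshold
}
suspect = flag_contaminated_batches(controls)
print(suspect)
```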

Table 1: Typical Variance Contributions in RNA-seq Experiments

| Variability Source | Description | Typical Contribution to Total Variance (Range) | Mitigation Strategy |
|---|---|---|---|
| Biological | Differences between individual organisms or cell lines. | 40% - 70% | Increase biological replication (n>=3). |
| Technical - Library Prep | Variation from RNA extraction, reverse transcription, amplification. | 15% - 35% | Standardize protocols, use automation, include technical replicates for QC. |
| Technical - Sequencing | Flow cell lane effects, read depth variation, cluster generation. | 5% - 20% | Use multiplexing across lanes, sequence to sufficient depth (>20M reads/sample), use UDIs. |
| Technical - Bioinformatics | Read alignment and quantification ambiguity. | 5% - 15% | Use standardized, version-controlled pipelines (e.g., nf-core/rnaseq), and the same reference genome. |

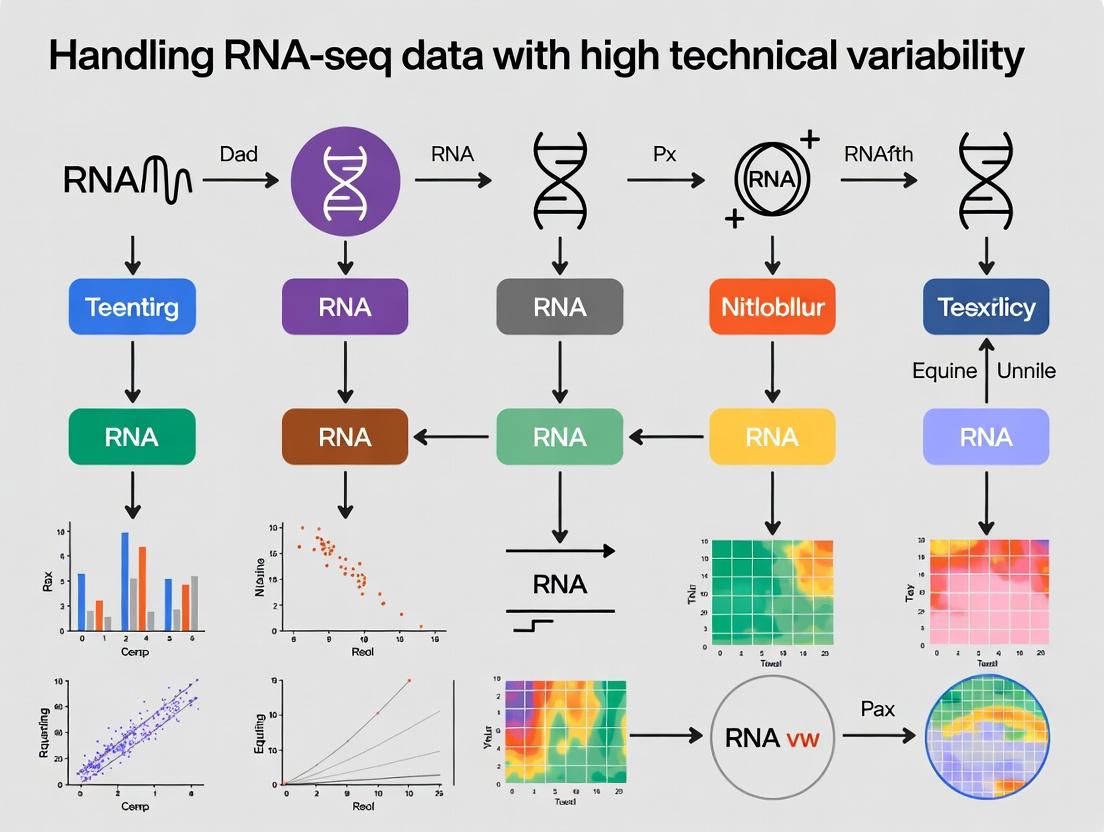

Visualizing the Analysis Workflow

Title: RNA-seq Workflow with Technical Variability Check

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Controlling Technical Variability

| Item | Function in Controlling Variability |
|---|---|
| External RNA Controls Consortium (ERCC) Spike-Ins | Synthetic RNA molecules added to samples in known ratios. They distinguish technical from biological variation by measuring protocol performance. |
| Unique Dual Indexes (UDIs) | Molecular barcodes that uniquely tag each sample, so that reads affected by index hopping (sample cross-talk), a major technical artifact, can be identified and discarded. |
| Ribosomal RNA Depletion Kits | Consistent removal of rRNA is critical for mRNA-seq. Kit lot-to-lot variability can introduce technical noise; track lot numbers. |
| Reverse Transcriptase with High Fidelity | Reduces bias in cDNA synthesis, a major source of technical variation in expression measurements. |
| DNA/RNA Cleanup Beads (Solid Phase Reversible Immobilization) | Standardized bead-based cleanup improves consistency over column-based methods across technicians and batches. |
| Quantification Standard (e.g., dPCR standards) | Provides absolute quantification for calibrating RNA input, reducing preparation variability. |

Troubleshooting Guides & FAQs

RNA Extraction & Quality Control

Q1: My RNA Integrity Number (RIN) is consistently low (<7). What are the major culprits and how can I address them?

A: Low RIN is primarily caused by RNase contamination or improper tissue handling. Key culprits and solutions:

- Culprit: Delay in tissue processing or flash-freezing.

- Solution: Snap-freeze tissue in liquid nitrogen within 5 minutes of collection. Use RNase-inhibiting storage reagents.

- Culprit: Ineffective homogenization leading to incomplete lysis.

- Solution: Use a combination of mechanical disruption (e.g., bead beating) and potent lysis buffers. Validate protocol for your specific tissue type.

- Culprit: Contamination with RNases from the environment, skin, or contaminated equipment.

- Solution: Use dedicated RNase-free consumables, change gloves frequently, and use a designated clean area. Include chaotropic salts (e.g., guanidinium thiocyanate) in the lysis buffer.

Protocol: Rapid Tissue Processing for High-Quality RNA

- Pre-cool a bio-safe container with liquid nitrogen.

- Excise tissue (≤30 mg) and immediately submerge in liquid nitrogen for 10 seconds.

- Transfer frozen tissue to a tube containing 1 mL of QIAzol Lysis Reagent (or equivalent phenol-guanidine isothiocyanate reagent) pre-cooled on dry ice.

- Homogenize using a sterile, RNase-free bead mill (2 cycles of 45 sec at 20 Hz).

- Proceed with standard RNA extraction (e.g., acid-phenol:chloroform separation) and purification on a silica-membrane column.

- Elute in 30-50 µL of RNase-free water and assess RIN on a Bioanalyzer or TapeStation.

Q2: How does RNA quality quantitatively impact downstream sequencing data?

A: Degraded RNA introduces significant technical variability in RNA-seq data, skewing gene expression profiles.

| RNA Quality Metric | Target Value | Impact on Sequencing Data if Suboptimal |
|---|---|---|
| RIN (Agilent Bioanalyzer) | ≥8.5 (Ideal), ≥7 (Acceptable) | Reduced library complexity, 3' bias in read coverage, loss of long transcripts. |
| DV200 (% fragments >200 nt) | ≥70% | Lower library yield, over-representation of short RNAs, inaccurate quantification. |
| 28S/18S rRNA Ratio | ~2.0 (Mammalian) | Deviation indicates degradation; correlates with poor library preparation efficiency. |
| Concentration (Qubit) | ≥20 ng/µL | Low input leads to over-amplification in library prep, increasing duplicate rates and noise. |

Library Preparation & Sequencing

Q3: My library yields are highly variable despite using identical input RNA. What could be the cause?

A: Inconsistent reverse transcription and double-stranded cDNA synthesis are major culprits. Variability in enzymatic reaction efficiency (reverse transcriptase, ligase) is often the source. Ensure precise thermocycler calibration, use master mixes to minimize pipetting error, and strictly control reaction times. The quality of magnetic bead-based cleanups (ratio, temperature, drying time) also significantly impacts yield consistency.

Q4: We observe batch effects correlated with sequencing runs. What run-specific factors should we investigate?

A: Technical variability between sequencing runs is a critical confounder in multi-run studies.

| Sequencing Run Factor | Potential Effect | Monitoring & Mitigation Strategy |
|---|---|---|
| Cluster Density | Deviation >±10% from optimal can affect pass filter rates and demultiplexing efficiency. | Track per-lane density metrics from the sequencing platform. Balance samples across lanes/runs. |
| Phasing/Prephasing | Increase in erroneous base calls, reducing Q30 score. | Check instrument diagnostics. Use balanced libraries and optimal cluster density. |
| Reagent Lot Variation | Batch effects in nucleotide incorporation or flow cell chemistry. | Record reagent lot numbers. Include control samples across batches if possible. |
| Flow Cell Condition | Declining performance over time, leading to low output. | Use flow cell quality control reports. Sequence high-priority samples on newer flow cells. |

Protocol: Spike-in Control Protocol for Batch Normalization

- Select an appropriate external RNA spike-in mix (e.g., ERCC for bulk RNA-seq, SIRV for isoform analysis).

- Add a defined, small volume (e.g., 1 µL of a 1:100,000 dilution) to each sample lysate prior to any RNA extraction step to control for the entire workflow.

- Proceed with standard library preparation.

- During bioinformatics analysis, map reads to the spike-in reference sequences. Use the measured spike-in abundances to correct for technical variation between samples (e.g., using RUVg or similar methods).
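To make the correction step more concrete, here is a deliberately simplified sketch. It is not RUVg: it only derives one scaling factor per sample from the total spike-in signal, under the assumption that every sample received the same amount of spike-in mix. The function name and counts are hypothetical.

```python
import math

def spikein_scaling_factors(spike_counts):
    """Per-sample scaling factors from spike-in read counts.

    spike_counts: dict mapping sample id -> list of read counts for the
    spike-in transcripts. Each factor is the sample's spike-in total divided
    by the geometric mean of all totals, so the factors multiply to 1.
    Dividing a sample's gene counts by its factor puts samples on a common
    technical scale (a simplification of spike-in based methods).
    """
    totals = {sample: sum(counts) for sample, counts in spike_counts.items()}
    if any(total <= 0 for total in totals.values()):
        raise ValueError("every sample needs at least one spike-in read")
    log_mean = sum(math.log(t) for t in totals.values()) / len(totals)
    geo_mean = math.exp(log_mean)
    return {sample: total / geo_mean for sample, total in totals.items()}

# Hypothetical spike-in counts: sample s2 was captured twice as efficiently
factors = spikein_scaling_factors({
    "s1": [100, 200, 700],
    "s2": [200, 400, 1400],
})
print(factors)
```

A single global factor cannot capture transcript-specific or nonlinear technical effects, which is why factor-analysis methods such as RUVg are usually preferred for the actual analysis.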

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |
|---|---|
| RNase Inhibitors (e.g., recombinant porcine) | Protects RNA from degradation during extraction and cDNA synthesis by non-covalently binding RNases. Essential for long or sensitive reactions. |
| Magnetic SPRI Beads | Size-selects nucleic acid fragments via PEG/NaCl precipitation. Critical for library cleanup, adapter dimer removal, and size selection consistency. |
| Dual-Index UMI Adapters | Unique Molecular Identifiers (UMIs) tag original molecules to correct for PCR duplication bias. Dual indexing minimizes index hopping cross-talk between samples. |
| ERCC RNA Spike-In Mix | A set of synthetic RNA transcripts at known concentrations. Added to samples to generate a standard curve for assessing sensitivity, dynamic range, and correcting technical noise. |
| Fragment Analyzer / Bioanalyzer | Microfluidics-based system for precisely assessing RNA/DNA integrity (RIN, DV200) and library fragment size distribution. Superior to spectrophotometry for QC. |
| Ribo-depletion Kits (rRNA removal) | Selectively removes abundant ribosomal RNA (>90% of total RNA) to enrich for mRNA and non-coding RNA, improving sequencing depth of targets. |

Visualizations

Title: High-Quality RNA Extraction Protocol Workflow

Title: Sources and Impact of Sequencing Run Batch Effects

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My PCA plot shows clear separation by sequencing batch, not by treatment group. What is the first step to diagnose this?

A: This is a classic sign of a strong batch effect. First, generate a detailed sample metadata table. Create a visualization of the PCA colored by every technical variable (e.g., sequencing lane, library prep date, technician, RNA integrity number (RIN)) and biological variable. Use this to identify which technical factors correlate with the major principal components.

Q2: I've normalized my RNA-seq counts with TPM, but batch effects remain. What should I do next?

A: TPM corrects for library size and gene length but not for composition and technical bias. Proceed with a dedicated normalization method for differential expression. Use DESeq2 (which employs its own median-of-ratios method) or edgeR (TMM normalization). After this, if batch is known, apply a statistical batch correction tool like ComBat-seq (for count data) or limma's removeBatchEffect (for continuous log-CPM data).
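The difference between TPM and a composition-aware size factor can be seen in a few lines. The sketch below implements TPM and a median-of-ratios size factor in the style DESeq2 describes; it is an illustration under simplified assumptions (genes with a zero count in any sample are skipped), not the package's implementation. Function names and counts are hypothetical.

```python
import math
from statistics import median

def tpm(counts, lengths_kb):
    """Transcripts per million for one sample (gene lengths in kilobases)."""
    rates = [c / length for c, length in zip(counts, lengths_kb)]
    total = sum(rates)
    return [r / total * 1e6 for r in rates]

def median_of_ratios_size_factors(matrix):
    """Size factor per sample from a genes x samples raw count matrix."""
    n_samples = len(matrix[0])
    # Only genes with non-zero counts in every sample have a usable geometric mean
    usable = [row for row in matrix if all(c > 0 for c in row)]
    if not usable:
        raise ValueError("no gene is expressed in all samples")
    log_geo_means = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(row[j]) - g for row, g in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Hypothetical counts: sample 2 is sequenced twice as deep, and the last
# gene is additionally strongly induced in sample 2
matrix = [[10, 20], [100, 200], [50, 100], [5, 400]]
size_factors = median_of_ratios_size_factors(matrix)
tpm_sample1 = tpm([row[0] for row in matrix], [1.0, 2.0, 1.5, 0.5])
print(size_factors)
```

Because the median ignores the one strongly induced gene, the size factors recover the twofold depth difference, whereas a total-count scaling such as TPM would be pulled by that gene.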

Q3: How do I decide between using ComBat-seq and sva for my dataset?

A: The choice depends on your data stage and need.

- ComBat-seq: Use if you want to correct raw count data before differential expression, preserving integer counts. It is effective when batch is known.

- sva (Surrogate Variable Analysis): Use `svaseq` to estimate hidden batch effects or unmodeled covariates when the batch is unknown. It identifies surrogate variables that can be added to your statistical model.

Q4: My negative controls (e.g., saline-treated samples) cluster separately in the batch-corrected data. Is the correction overfitting?

A: Possibly. Over-correction occurs when the batch correction method removes biological signal. To diagnose, check if known housekeeping genes or positive control genes show expected expression. Validate with an external dataset or qPCR on a subset of samples. Using the limma method with block design and duplicateCorrelation is often more conservative and protects biological variation.

Key Experimental Protocols

Protocol 1: Experimental Design to Minimize Batch Effects

- Randomization: Assign samples from different experimental groups (e.g., treatment vs. control) randomly across library preparation batches and sequencing lanes.

- Balancing: Ensure each batch contains a similar proportion of samples from all biological groups.

- Replication: Include technical replicates (the same biological sample processed independently) in at least one batch to assess intra-batch variability.

- Controls: Spike-in exogenous controls (e.g., ERCC RNA Spike-In Mix) at the start of RNA isolation to monitor technical performance across batches.
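The randomization and balancing steps can be combined into a stratified assignment. The following is a minimal sketch, assuming that batch capacity is not a constraint; the function name, sample ids, and seed are hypothetical.

```python
import random
from collections import defaultdict

def assign_batches(sample_groups, n_batches, seed=0):
    """Randomly assign samples to batches while balancing biological groups.

    sample_groups: dict mapping sample id -> biological group label.
    Returns a dict mapping sample id -> batch index (0 .. n_batches - 1).
    """
    rng = random.Random(seed)  # fixed seed so the layout can be documented
    by_group = defaultdict(list)
    for sample, group in sample_groups.items():
        by_group[group].append(sample)
    assignment = {}
    offset = 0
    for group in sorted(by_group):
        members = sorted(by_group[group])
        rng.shuffle(members)  # random order within each group
        for i, sample in enumerate(members):
            # Deal samples round-robin; the offset spreads leftovers over batches
            assignment[sample] = (i + offset) % n_batches
        offset += len(members)
    return assignment

# Hypothetical study: 6 control and 6 treated samples over 3 prep batches
samples = {f"ctrl_{i}": "control" for i in range(6)}
samples.update({f"trt_{i}": "treated" for i in range(6)})
plan = assign_batches(samples, n_batches=3, seed=42)
print(plan)
```

Recording the seed together with the resulting layout keeps the design reproducible and auditable.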

Protocol 2: In-silico Batch Effect Diagnosis using PCA

- Generate a normalized expression matrix (e.g., log2(CPM+1)) from your raw count data.

- Perform PCA using the `prcomp()` function in R on the top 500 most variable genes.

- Plot the first two principal components (PC1 vs. PC2).

- Color the data points by known biological conditions (e.g., disease state) and by technical factors (e.g., batch ID, RIN, sequencing date).

- Interpret: If samples cluster more strongly by technical factors than by biology, a significant batch effect is present.
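For readers who want to see what the diagnosis computes, the sketch below walks through the same steps without any external library: log2(CPM+1) transformation, selection of the most variable genes, and sample scores on the first principal component obtained by power iteration. It illustrates the idea only and is not a replacement for `prcomp()`; the function names and the small count matrix are hypothetical.

```python
import math
from statistics import mean, pvariance

def log_cpm(counts):
    """genes x samples raw counts -> log2(CPM + 1) with the same shape."""
    n_samples = len(counts[0])
    lib_sizes = [sum(row[j] for row in counts) for j in range(n_samples)]
    return [[math.log2(row[j] / lib_sizes[j] * 1e6 + 1) for j in range(n_samples)]
            for row in counts]

def top_variable_genes(expr, k=500):
    """Keep the k rows (genes) with the largest variance across samples."""
    return sorted(expr, key=pvariance, reverse=True)[:k]

def pc1_scores(expr, iterations=200):
    """Sample scores on PC1 via power iteration on the sample Gram matrix."""
    centered = []
    for row in expr:                       # center each gene across samples
        m = mean(row)
        centered.append([x - m for x in row])
    n = len(centered[0])
    gram = [[sum(r[i] * r[j] for r in centered) for j in range(n)]
            for i in range(n)]
    v = [1.0 / (i + 1) for i in range(n)]  # arbitrary non-constant start vector
    for _ in range(iterations):
        w = [sum(gram[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            raise ValueError("expression matrix has no variance")
        v = [x / norm for x in w]
    eigenvalue = sum(v[i] * gram[i][j] * v[j] for i in range(n) for j in range(n))
    return [x * math.sqrt(eigenvalue) for x in v]

# Hypothetical counts: 4 genes x 4 samples; samples 0-1 batch A, 2-3 batch B
counts = [
    [100, 110, 400, 420],
    [200, 190, 50, 60],
    [300, 310, 305, 295],
    [50, 55, 52, 48],
]
scores = pc1_scores(top_variable_genes(log_cpm(counts), k=3))
print([round(s, 2) for s in scores])
```

In this toy matrix the PC1 scores of the two batches fall on opposite sides of zero, which is the pattern the interpretation step warns about. The sign of a principal component is arbitrary, so only the grouping matters.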

Protocol 3: Applying ComBat-seq for Batch Correction

- Input: Prepare a raw read count matrix and a sample information data frame with batch and biological condition.

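- Installation: Install the `sva` package in R/Bioconductor.

- Run ComBat-seq: call `ComBat_seq()` on the raw count matrix, passing the batch labels as `batch` and the biological condition as `group`.

- Output: Use the adjusted integer counts for downstream differential expression analysis with `DESeq2` or `edgeR`.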

Summarized Quantitative Data

Table 1: Impact of Batch Effect Correction on Differential Expression Results

| Metric | Uncorrected Data | After ComBat-seq Correction |
|---|---|---|
| False Discovery Rate (FDR) in null datasets | High (up to 50%) | Reduced (<5%) |
| Number of significant DEGs (p-adj < 0.05) | Often inflated or suppressed | More biologically plausible |
| Cluster purity (separation by biology in PCA) | Low (e.g., 40% variance from batch) | High (e.g., 70% variance from condition) |
| Reproducibility with external study | Low (Jaccard index ~0.2) | Improved (Jaccard index ~0.6) |

Table 2: Comparison of Common Batch Effect Correction Tools

| Tool / Method | Data Type | Batch Knowledge Required | Model Preserves | Best Use Case |
|---|---|---|---|---|
| ComBat-seq | Raw counts | Known | Integer counts | Known, discrete batches |
| limma removeBatchEffect | log-Normalized | Known | Continuous data | Final visualization/PCA |
| svaseq | Any | Unknown | Estimates covariates | Discovering hidden factors |
| DESeq2 with `design = ~ batch + condition` | Raw counts | Known | Statistical model | Direct DE analysis with batch covariate |

Visualizations

Diagram Title: RNA-seq Batch Effect Diagnosis & Correction Workflow

Diagram Title: Interpreting PCA Results Before and After Correction

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Managing Technical Variability

| Item | Function | Example Product |
|---|---|---|
| External RNA Controls (ERC) | Spike-in synthetic RNAs at known concentrations to calibrate measurements across runs and detect technical failures. | ERCC RNA Spike-In Mix (Thermo Fisher) |
| UMI Adapters | Unique Molecular Identifiers (UMIs) attached to each molecule during library prep to correct for PCR amplification bias. | NEBNext Unique Dual Index UMI Adapters |
| Automated Nucleic Acid Extraction System | Standardizes RNA isolation to minimize variability from manual handling. | QIAcube (Qiagen) |
| RNA Integrity Number (RIN) Standard | Provides a consistent reference for evaluating RNA quality on Bioanalyzer/TapeStation. | RNA 6000 Nano Kit (Agilent) |
| Commercial Library Prep Kit | Using the same, optimized kit across all batches reduces protocol-induced variability. | Illumina Stranded mRNA Prep |
| Pooling Normalization Beads | Magnetic beads for accurate and automated normalization of library concentrations before pooling and sequencing. | SPRIselect (Beckman Coulter) |

Troubleshooting Guides & FAQs

FAQ 1: Why does my PCA plot show extreme clustering by sequencing batch rather than biological group?

- Answer: This indicates that high technical variability (a batch effect) is obscuring the biological signal. First, inspect the sample correlation heatmap: high intra-batch and low inter-batch correlations confirm the issue. Then check the RIN values in your metadata; if RIN is consistently high (>8) across all batches, the issue likely originates from library prep or sequencing rather than RNA quality. To troubleshoot, apply a batch correction algorithm such as ComBat-seq after QC but before differential expression. Always keep an uncorrected set for comparison.

FAQ 2: What is an acceptable threshold for sample correlation coefficients in my heatmap?

- Answer: Acceptability is experiment-dependent. Use the following table as a guide:

| Sample Type Comparison | Expected Pearson Correlation (r) Range | Action if Below Range |
|---|---|---|
| Technical Replicates | r ≥ 0.95 | Investigate library prep or quantification errors. |
| Biological Replicates (Same Condition) | 0.8 ≤ r < 0.95 | Expected range. Lower values may indicate high heterogeneity or QC issues. |
| Different Conditions/Groups | Variable, but should cluster by group. | If samples cluster by other factors (e.g., batch), technical variability is too high. |

FAQ 3: Can I proceed with RNA-seq analysis if some samples have a RIN value below 7?

- Answer: Proceeding requires caution and depends on your study's robustness. See the decision framework below:

Experimental Protocols

Protocol 1: Generating PCA for Batch Effect Detection

- Input: Normalized count matrix (e.g., from DESeq2 `varianceStabilizingTransformation` or `rlog`).

- Calculate: Perform PCA on the top 500 most variable genes using the `prcomp()` function in R.

- Visualize: Plot PC1 vs. PC2 and PC1 vs. PC3. Color points by `biological_group` and shape by `sequencing_batch`.

- Interpret: Clustering by shape (batch) indicates a strong technical artifact requiring correction.

Protocol 2: Sample Correlation Heatmap Construction

- Input: Normalized, log-transformed count matrix.

- Calculate: Compute pairwise Pearson correlations between all samples using the `cor()` function in R.

- Cluster & Visualize: Perform hierarchical clustering and plot using `pheatmap()` or `ComplexHeatmap`. Use a blue-white-red color scale.

- Annotate: Add annotation bars for `biological_group`, `batch`, and `RIN` value bin.
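The correlation step, combined with the replicate threshold from the FAQ table (r ≥ 0.95 for technical replicates), can be sketched as follows. This is an illustration in plain Python with hypothetical sample names and values; plotting is left to the heatmap tools named above.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant profile")
    return sxy / math.sqrt(sxx * syy)

def correlation_matrix(profiles):
    """profiles: dict sample -> log-expression values in the same gene order."""
    names = sorted(profiles)
    return {a: {b: pearson(profiles[a], profiles[b]) for b in names}
            for a in names}

def low_replicate_pairs(corr, replicate_pairs, min_r=0.95):
    """Replicate pairs whose correlation falls below the expected minimum."""
    return [(a, b) for a, b in replicate_pairs if corr[a][b] < min_r]

# Hypothetical log-expression profiles over five genes
profiles = {
    "s1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "s1_rep": [1.1, 2.0, 3.1, 3.9, 5.0],
    "s2": [5.0, 1.0, 4.0, 2.0, 3.0],
}
corr = correlation_matrix(profiles)
suspicious = low_replicate_pairs(corr, [("s1", "s1_rep"), ("s1", "s2")])
print(suspicious)
```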

Protocol 3: RIN Integration into Metadata

- Measurement: Obtain RIN values using Agilent Bioanalyzer or TapeStation before library prep.

- Tabulate: Create a sample metadata table including RIN, date of extraction, operator, and batch ID.

- Correlate: Plot RIN vs. key QC metrics (e.g., library yield, % aligned reads) to identify degradation-related technical variability.

- Model: Include RIN as a covariate in differential expression models if it does not correlate with the condition of interest.

Key QC Metrics Relationships Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in QC & Variability Control |
|---|---|
| Agilent Bioanalyzer RNA Nano Kit | Provides accurate RIN and RNA integrity assessment prior to library prep. |
| SPRIselect Beads (Beckman Coulter) | For consistent size selection and library clean-up, reducing prep variability. |
| Kapa mRNA HyperPrep Kit (Roche) | A robust, standardized library prep protocol to minimize technical noise. |
| ERCC RNA Spike-In Mix (Thermo Fisher) | Exogenous controls added to samples to distinguish technical from biological variation. |
| Phusion High-Fidelity DNA Polymerase (NEB) | High-fidelity PCR during library amplification to minimize sequence errors. |
| Unique Dual Indexes (UDIs, Illumina) | Allow index-hopped reads (sample cross-talk), a source of technical variability, to be detected and discarded. |
| RNase Inhibitor (e.g., RNaseOUT) | Protects RNA samples from degradation during handling and storage. |

FAQs & Troubleshooting Guides

Q1: Our PCA plot shows batch effects overwhelming biological groups. What are the first steps to diagnose and correct this?

A: This indicates high technical variability is confounding your differential expression (DE) analysis. First, diagnose using a negative control sample or an SVD plot. For correction, use a linear model-based method like limma's `removeBatchEffect()` or ComBat-seq (for count data).

Critical Protocol: Batch Correction with ComBat-seq

- Input: Raw count matrix (genes x samples) and a batch covariate vector.

- Install: `devtools::install_github("zhangyuqing/ComBat-seq")` (ComBat-seq is also distributed as part of the Bioconductor `sva` package).

- Run in R: call `ComBat_seq()` with the raw count matrix and the batch vector to obtain adjusted counts.

- Validation: Re-run PCA on adjusted counts. Biological clusters should be tighter.

Q2: After batch correction, our DE gene list is unstable: small changes in the model yield very different lists. How do we increase robustness?

A: This is common in high-variability data. Implement a consensus approach.

- Methodology: Run DE analysis using at least two different tools (e.g., `DESeq2`, `edgeR`, `limma-voom`) on the same corrected data.

- Consensus Call: A gene is considered high-confidence DE only if it meets significance (FDR < 0.05) in all tools.

- Supporting Data: See Table 1 for a comparison of tool sensitivities.

Table 1: Comparison of DE Tool Performance in High-Variability Simulations

| Tool | Underlying Model | Key Strength for High Variability | Recommended Use Case |
|---|---|---|---|
| DESeq2 | Negative Binomial | Robust to outliers via Cook's distances | When biological variation is large. |
| edgeR | Negative Binomial | Flexible dispersion estimation (tagwise) | For complex designs with many factors. |
| limma-voom | Linear Model + Precision Weights | Superior speed & stability for n > 5/group | For smaller sample sizes post-correction. |
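The consensus call from Q2 amounts to intersecting the significant gene sets of each tool. A minimal sketch, assuming each tool's results have already been exported as gene-to-adjusted-p-value mappings (the gene names and values below are made up):

```python
def consensus_de(results, fdr_cutoff=0.05):
    """Genes that are significant in every tool.

    results: dict mapping tool name -> dict of gene -> adjusted p-value.
    A gene missing from a tool's output counts as not significant there.
    """
    significant_sets = [
        {gene for gene, padj in genes.items() if padj < fdr_cutoff}
        for genes in results.values()
    ]
    if not significant_sets:
        return []
    return sorted(set.intersection(*significant_sets))

# Hypothetical adjusted p-values exported from three DE tools
results = {
    "DESeq2": {"GENE_A": 0.001, "GENE_B": 0.03, "GENE_C": 0.20},
    "edgeR": {"GENE_A": 0.004, "GENE_B": 0.08, "GENE_C": 0.01},
    "limma-voom": {"GENE_A": 0.010, "GENE_B": 0.02},
}
high_confidence = consensus_de(results)
print(high_confidence)
```

Requiring agreement across all tools trades sensitivity for stability, so the resulting list will typically be shorter than any single tool's output.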

Q3: How can we validate potential biomarkers from a noisy dataset before moving to expensive validation studies?

A: Employ rigorous in-silico and cross-validation filtering.

- Protocol: Biomarker Candidate Prioritization:

  a. Effect Size Filter: From your DE list, filter for `abs(log2FoldChange) > 1`.

  b. Expression Level Filter: Retain genes with baseMean > 50 (or median count > 10).

  c. Machine Learning Filter: Use the filtered gene set in a LASSO regression or Random Forest model with repeated (e.g., 10x) k-fold cross-validation. Genes consistently selected as top predictors across folds are high-priority candidates.

- Diagnostic: If no genes survive step (c), the initial DE signal may be too weak for robust biomarker discovery, suggesting a need for increased sample size.
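Steps (a) and (b) are simple filters, and the "consistently selected" criterion in step (c) can be expressed as a selection frequency across folds. The sketch below assumes the model fitting happens elsewhere and only the per-fold lists of selected genes are available; the 80% frequency cutoff, function names, and data are hypothetical choices for illustration.

```python
from collections import Counter

def filter_candidates(de_table, min_abs_lfc=1.0, min_base_mean=50.0):
    """Apply the effect size and expression level filters (steps a and b).

    de_table: list of dicts with keys 'gene', 'log2FoldChange', 'baseMean'.
    """
    return [
        row["gene"] for row in de_table
        if abs(row["log2FoldChange"]) > min_abs_lfc
        and row["baseMean"] > min_base_mean
    ]

def stable_predictors(selected_per_fold, min_fraction=0.8):
    """Genes selected by the model in at least min_fraction of the folds."""
    n_folds = len(selected_per_fold)
    counts = Counter(g for fold in selected_per_fold for g in set(fold))
    return sorted(g for g, c in counts.items() if c / n_folds >= min_fraction)

# Hypothetical DE results and per-fold selections from a LASSO model
de_table = [
    {"gene": "G1", "log2FoldChange": 2.1, "baseMean": 300.0},
    {"gene": "G2", "log2FoldChange": -1.5, "baseMean": 80.0},
    {"gene": "G3", "log2FoldChange": 0.4, "baseMean": 900.0},
    {"gene": "G4", "log2FoldChange": 3.0, "baseMean": 12.0},
]
candidates = filter_candidates(de_table)
folds = [["G1", "G2"], ["G1"], ["G1", "G2"], ["G1"], ["G1", "G2"]]
priority = stable_predictors(folds)
print(candidates, priority)
```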

Q4: What quality control (QC) metrics are most critical to monitor for biomarker discovery pipelines?

A: Focus on sample-level and gene-level metrics. See Table 2.

Table 2: Critical QC Metrics for High-Variability RNA-seq Studies

| Metric | Target | Indicates a Problem If... | Tool to Check |
|---|---|---|---|
| Library Size | Consistent across samples | Extreme outliers exist (> 3x median) | FastQC, MultiQC |
| Gene Body Coverage | Uniform 5' to 3' coverage | 3' or 5' bias is pronounced | RSeQC |
| PCA on Controls | Control samples cluster tightly | Controls are dispersed, indicating technical drift | Custom R/Python script |
| Dispersion Plot | Dispersion decreases with mean | Dispersion is high for abundant genes | DESeq2::plotDispEsts() |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in High-Variability Context |
|---|---|
| UMI-based Library Prep Kits (e.g., Parse Biosciences) | Eliminates PCR duplication bias, a major source of technical noise. |
| ERCC Spike-In Mix | External RNA controls added to samples to accurately diagnose and calibrate technical variation. |
| RIN-equivalent QC System (e.g., TapeStation) | Ensures high-quality input RNA, reducing degradation-induced variability. |
| Automated Nucleic Acid Purification System | Minimizes sample-handling differences between operators (a common batch effect source). |
| Multiplexed Sequencing Pooling Kit | Allows balanced pooling of many samples in one lane, reducing lane-to-lane batch effects. |

Workflow & Pathway Diagrams

Title: RNA-seq Biomarker Discovery Workflow for Noisy Data

Title: Impact of Technical Variability on DE & Solutions

Building Robust Pipelines: Methods to Correct and Normalize Variable Data

Preprocessing and QC Tools for Identifying Technical Outliers

Frequently Asked Questions (FAQs)

Q1: After aligning my RNA-seq data, my PCA plot shows one sample clustering far from its replicates. What are the first QC metrics I should check?

A1: First, verify the alignment statistics and library complexity metrics for the outlier sample.

- Check Alignment Rate: A sudden drop (e.g., <70% vs >85% for others) indicates poor sequencing or sample degradation.

- Examine Duplication Rate: An unusually high duplication level suggests low library complexity or over-amplification.

- Review Total Read Count: A dramatic difference in total reads can skew expression estimates. Use the table below for benchmark values.

Q2: My samples show large variations in gene body coverage. What does this indicate, and how can I fix it in my analysis?

A2: Inconsistent gene body coverage typically indicates RNA degradation or ribosomal RNA contamination. Degradation in particular introduces a technical 3' bias in read coverage. To address this:

- Diagnosis: Generate gene body coverage plots using tools like `RSeQC` or `Qualimap`. A sharp 3' bias confirms degradation.

- Mitigation: You can filter out severely degraded samples. For moderate cases, use normalization methods (e.g., TMM in edgeR, median-of-ratios in DESeq2) that are robust to such biases, but note they may not fully correct it.

- Protocol Check: Review RNA Integrity Number (RIN) values from the Bioanalyzer; samples with RIN < 7 are of concern.

Q3: I suspect a batch effect from different library preparation dates. Which tools can best identify and visualize this before differential expression analysis?

A3: Use a combination of unsupervised clustering and dedicated batch-effect detectors.

- Primary Tool: PCA Plot. Color samples by the suspected batch variable (e.g., prep date). Clear separation by color signals a batch effect.

- Quantitative Tool: PVCA (Principal Variance Component Analysis). This combines PCA and linear mixed models to quantify the proportion of variance attributable to batch versus biological factors. A batch variance contribution > 20% is a major concern.

- Corrective Action: If identified, include the batch as a covariate in your statistical model (e.g., `~ batch + condition` in DESeq2) or use batch correction tools like `ComBat-seq` (for count data) after careful consideration.

Q4: When using outlier detection tools like arrayQualityMetrics, what specific diagnostic plots are most informative for RNA-seq data derived from high-variability sources?

A4: For high-variability data (e.g., patient biopsies), focus on relative rather than absolute metrics.

- Boxplot of log-expression distributions: Identifies samples with globally shifted medians.

- Distance heatmap: Shows pairwise distances between samples; the outlier will have uniformly high distances to all others.

- MA plots for each sample against the median reference: Highlights genes with consistently biased expression in one sample across the dynamic range.

- Key: The outlier should be evident across multiple plots, not just one.

Troubleshooting Guides

Issue: High Discrepancy in Read Counts Between Technical Replicates

Problem: Technical replicates (same library sequenced across lanes/flowcells) show unexpectedly high variation in gene counts.

Investigation Steps:

- Confirm Technical Replicates: Verify the samples are indeed technical, not biological, replicates.

- Check Sequencing Performance Metrics:

  - Run `FastQC` on each replicate's raw FASTQ files. Compare `Per base sequence quality` and `Sequence duplication levels`.

  - A lane with poor quality scores or an abnormal duplication profile may be the cause.

- Examine GC Bias: Use `Qualimap` to plot mean GC content vs. read count. Strong deviations in the curve for one replicate indicate GC bias, often from PCR artifacts during library prep.

- Action: If a single replicate is faulty, it may be excluded. If all show acceptable QC but high variability, consider using a statistical model that accounts for over-dispersion (e.g., negative binomial in DESeq2/edgeR) and ensure you are not relying on a single replicate for critical conclusions.

Issue: Unexpected Global Expression Shift Identified in Sample-Level QC

Problem: One sample's expression profile is shifted (higher/lower across most genes) compared to others in density plots or boxplots.

Diagnosis and Solution Workflow:

Title: Workflow for diagnosing global expression shifts.

Data Presentation

Table 1: Key RNA-seq QC Metrics and Thresholds for Outlier Detection

| Metric | Tool for Calculation | Typical "Good" Range | Potential Outlier Flag | Indicated Issue |
|---|---|---|---|---|
| Alignment Rate | STAR, HISAT2, Qualimap | >85% (Human/Mouse) | <70% | Poor sequencing, adapter contamination, sample degradation. |
| Duplication Rate | Picard MarkDuplicates | 10-50% (varies with protocol) | >70% (for poly-A) | Low input, over-amplification, PCR bias. |
| Exonic Rate | Qualimap, RSeQC | >60% (for poly-A) | <40% | High ribosomal RNA, immature mRNA, or genomic DNA contamination. |
| 5' to 3' Bias | RSeQC, Qualimap | Gene body coverage slope ~0 | >0.3 or <-0.3 | RNA degradation, biased fragmentation/library prep. |
| Total Genes Detected | FeatureCounts, HTSeq | Depends on depth & tissue | >3 SD from group mean | Library complexity issue or sample mix-up. |
| Library Size (M reads) | Raw FASTQ count | Consistent across project | >2x difference from median | Sequencing depth outlier affecting normalization. |
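Several of the outlier flags in Table 1 are easy to apply automatically once the metrics have been collected (for example from a MultiQC export). The sketch below covers four of them and assumes rates are stored as fractions; it reads ">2x difference from median" as a twofold deviation in either direction, which is an interpretation rather than something the table states. The field names and values are hypothetical.

```python
from statistics import median

def flag_outliers(qc):
    """Return {sample: [reasons]} for samples that trip a Table 1 flag.

    qc: dict mapping sample id -> dict with 'alignment_rate',
    'duplication_rate', 'exonic_rate' (fractions) and 'library_size' (reads).
    """
    median_size = median(m["library_size"] for m in qc.values())
    flags = {}
    for sample, m in qc.items():
        reasons = []
        if m["alignment_rate"] < 0.70:
            reasons.append("alignment rate < 70%")
        if m["duplication_rate"] > 0.70:
            reasons.append("duplication rate > 70%")
        if m["exonic_rate"] < 0.40:
            reasons.append("exonic rate < 40%")
        ratio = m["library_size"] / median_size
        if ratio > 2 or ratio < 0.5:
            reasons.append("library size > 2x from median")
        if reasons:
            flags[sample] = reasons
    return flags

# Hypothetical per-sample QC metrics
qc = {
    "S1": {"alignment_rate": 0.91, "duplication_rate": 0.35,
           "exonic_rate": 0.72, "library_size": 30_000_000},
    "S2": {"alignment_rate": 0.88, "duplication_rate": 0.40,
           "exonic_rate": 0.68, "library_size": 28_000_000},
    "S3": {"alignment_rate": 0.62, "duplication_rate": 0.81,
           "exonic_rate": 0.55, "library_size": 10_000_000},
}
flags = flag_outliers(qc)
print(flags)
```

A flagged sample is a candidate for closer inspection, not automatic exclusion; as noted in the FAQ above, an outlier should be evident across multiple diagnostics.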

Experimental Protocols

Protocol 1: Generating a PCA Plot for Outlier Detection from a Count Matrix

Purpose: To visually identify sample outliers and batch effects using principal component analysis.

Input: Normalized count matrix (e.g., log2(CPM), vst-transformed counts).

Software: R with ggplot2 and ggfortify packages.

Steps:

- Prepare Data: Transform your raw count matrix, for example with DESeq2's `varianceStabilizingTransformation()` or edgeR's `cpm(log=TRUE)` function.

- Perform PCA: Use the R `prcomp()` function on the transposed transformed matrix (samples as rows, genes as columns).

- Extract Variance: Summarize the PCA result to see the variance explained by each PC (`summary(pca_results)`).

- Plot: Use `autoplot(pca_results, data = sample_metadata, colour = 'Group', shape = 'Batch')`. Color by biological condition and shape by technical batch (e.g., prep date).

- Interpretation: A sample distant from its group on PC1 or PC2 (which explain most variance) is a candidate outlier. Clustering by `Batch` instead of `Group` indicates a strong technical effect.
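
The PCA steps of this protocol can be sketched in base R. This is an illustration under stated assumptions, not the protocol's exact code: the expression matrix is simulated to stand in for vst or log-CPM output, the metadata columns `Group` and `Batch` are hypothetical, and base graphics replace `autoplot()` so that no extra packages are needed.

```r
set.seed(1)
n_genes <- 500
meta <- data.frame(
  Group = factor(rep(c("ctrl", "trt"), each = 4)),
  Batch = factor(rep(c("b1", "b2"), times = 4)),
  row.names = paste0("S", 1:8)
)

# Simulated log2-scale expression (genes x samples), standing in for vst/logCPM values.
expr <- matrix(rnorm(n_genes * 8, mean = 8, sd = 0.5), nrow = n_genes,
               dimnames = list(paste0("g", 1:n_genes), rownames(meta)))
# Inject a strong batch shift into the first 200 genes of batch b2.
expr[1:200, meta$Batch == "b2"] <- expr[1:200, meta$Batch == "b2"] + 2

# prcomp() expects samples as rows, so the matrix is transposed.
pca <- prcomp(t(expr), center = TRUE, scale. = FALSE)
var_explained <- pca$sdev^2 / sum(pca$sdev^2)
print(round(var_explained[1:3], 3))

# Colour by biological group, point shape by technical batch.
plot(pca$x[, 1], pca$x[, 2],
     col = as.integer(meta$Group),
     pch = c(16, 17)[as.integer(meta$Batch)],
     xlab = sprintf("PC1 (%.1f%%)", 100 * var_explained[1]),
     ylab = sprintf("PC2 (%.1f%%)", 100 * var_explained[2]))
```

In this simulation PC1 separates the samples by batch rather than by group, which is the pattern the interpretation step warns about.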

Protocol 2: Running PVCA to Quantify Batch Effects

Purpose: To quantitatively attribute variance in the expression data to biological and technical factors.

Input: Normalized expression matrix and a metadata table with factors (e.g., Condition, Batch, RIN).

Software: R with pvca package (or manual implementation via lme4).

Steps:

- Install/Load: Install the `pvca` package from Bioconductor.

- Set Parameters: Define the assay data (normalized matrix) and the metadata factors of interest. Assign a threshold (e.g., 0.6) for the cumulative proportion of variance explained by top principal components.

- Execute Analysis: Run `pvcaBatchAssess()` on the expression set with the chosen factors and threshold, storing the result as `pvcaObj`.

- Plot Results: Use `bp <- barplot(pvcaObj$dat, ...)` to visualize the proportion of variance explained by each factor and their residuals.

- Interpretation: A high variance proportion (>0.2-0.3) for a technical factor like `Batch` or `RIN` confirms it is a major source of technical noise that must be addressed statistically.

The Scientist's Toolkit

Table 2: Essential Research Reagents & Tools for QC and Outlier Management

| Item | Category | Primary Function in QC/Outlier Analysis |

|---|---|---|

| Bioanalyzer/TapeStation | Instrument | Provides RNA Integrity Number (RIN) to assess input RNA quality before library prep, the first defense against degradation outliers. |

| SPRIselect Beads | Reagent | Used for size selection and clean-up during library prep; consistent bead-to-sample ratio is critical for reproducible library yields and preventing adapter dimer contamination. |

| ERCC ExFold RNA Spike-Ins | Control | Artificial RNA molecules added at known ratios to the sample. Deviations from expected fold-changes in the final data identify systematic technical outliers in quantification. |

| PhiX Control Library | Control | Spiked into sequencing runs for cluster generation and calibration; helps monitor lane-to-lane performance variations. |

| RiboMinus/ RiboZero Kits | Reagent | Deplete ribosomal RNA in samples with low poly-A+ content (e.g., bacterial, degraded FFPE). Inconsistent depletion efficiency is a major source of technical variability. |

| Unique Molecular Identifiers (UMIs) | Method | Short random barcodes ligated to each mRNA molecule before PCR amplification, allowing precise removal of PCR duplicates to correct for amplification bias and improve quantification accuracy. |

Diagram: RNA-seq QC and Outlier Analysis Workflow

Title: Core RNA-seq QC workflow for outlier identification.

Troubleshooting Guides & FAQs

Q1: My RNA-seq data shows clear batch effects after PCA. I used ComBat-seq, but the biological groups are now overlapping. What went wrong? A: This is often due to over-correction. ComBat-seq estimates batch parameters using all genes. If your biological signal is strong and confounded with batch, it can be mistakenly removed.

- Solution: Use the `group` argument of the `ComBat_seq` function to specify the biological factor of interest (e.g., `group = disease_status`), or `covar_mod` when several covariates must be preserved. This protects the biological variation while removing batch effects. Also, consider using the `shrink` argument to stabilize estimates for small batches.
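
As an illustration of protecting the biological factor, the sketch below calls `ComBat_seq` from the `sva` package with its `group` argument. The count matrix, `batch`, and `disease_status` vectors are simulated placeholders, so the result only demonstrates the call pattern, not a realistic correction.

```r
library(sva)

set.seed(1)
# Simulated raw counts (genes x samples); purely illustrative.
cts <- matrix(rnbinom(200 * 8, mu = 100, size = 5), nrow = 200,
              dimnames = list(paste0("g", 1:200), paste0("S", 1:8)))
batch          <- rep(c(1, 2), times = 4)  # known technical batch
disease_status <- rep(c(0, 1), each = 4)   # biological factor to protect

# `group` tells ComBat-seq which biological variation to preserve.
adjusted <- ComBat_seq(cts, batch = batch, group = disease_status)

# The output is a count matrix with the same dimensions as the input.
print(dim(adjusted))
```

Note that batch and biological group must not be fully confounded; here every group is represented in both batches.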

Q2: When applying RUVg (using control genes), my results become highly sensitive to the choice of k (number of factors). How do I determine the optimal k? A: There is no universal optimal k. It requires empirical evaluation.

- Protocol:

- Apply RUVg across a range of k values (e.g., 1 to 10).

- For each k, perform PCA on the normalized data.

- Plot the PCA results. The optimal k typically removes batch-associated clustering without collapsing biological replicates.

- Use diagnostics such as the `plotRLE` and `plotPCA` functions (provided by EDASeq, which is loaded together with `RUVSeq`) to visualize the improvement. A k that minimizes the variance between replicate samples is preferred.

Q3: SVA returns "surrogate variables" (SVs). How do I interpret and use them in my downstream differential expression analysis? A: SVs are estimated unmodeled factors of variation.

- Protocol:

- Use the `sva` function from the `sva` package to estimate SVs, specifying your full model (biological interest) and null model (background).

- The SVs are returned as a matrix. You must add them as covariates to your linear model in the differential expression step.

- Example in DESeq2: `dds <- DESeqDataSetFromMatrix(countData, colData, design = ~ SV1 + SV2 + condition)`

- Interpretation: The SVs themselves are unitless factors. Their primary use is as covariates to adjust for hidden confounding. You can correlate SVs with known batch variables (e.g., sequencing lane) to identify their potential source.

Q4: I have a complex design with multiple known batches and biological conditions. Which method is most appropriate? A: For multiple known batches, ComBat-seq or a GLM-based approach within DESeq2/edgeR is often suitable. For unknown or unmodeled factors, RUV or SVA is preferred. For a hybrid approach, see the workflow below.

Key Normalization Method Comparison

| Method | Core Principle | Input Data | Handles Known Batches? | Handles Unknown Factors? | Key Assumption | Best For |

|---|---|---|---|---|---|---|

| RUV (RUVs/RUVg) | Uses control genes/samples to estimate unwanted variation. | Raw counts | Yes (as covariates) | Yes | Control features are not influenced by biological conditions. | Experiments with spike-ins, housekeeping genes, or replicate samples. |

| SVA | Decomposes data matrix to estimate surrogate variables for unmodeled variation. | Normalized log-counts | Yes (in model) | Yes | Major sources of variation are orthogonal to the biological signal. | Complex studies where confounding factors are unknown or unmeasured. |

| ComBat-seq | Empirical Bayes framework to adjust for batch effects in count data. | Raw counts | Yes | No | Batch effect is additive and consistent across genes. | Removing strong, known batch effects (e.g., sequencing run) while preserving counts. |

| RUVg + DESeq2 | Integration of RUV factors into the DESeq2 GLM. | Raw counts | Yes | Yes | Similar to RUV. | Users wanting RUV correction within the robust DESeq2 statistical framework. |

Experimental Protocol: Hybrid Normalization Workflow for High-Variability Data

Title: Integrated Batch Correction and Analysis Protocol.

- Quality Control & Initial Modeling: Generate count matrix. Perform exploratory PCA. Identify known batches (e.g., `Batch_K`).

- Primary Batch Adjustment: Apply ComBat-seq (`sva` package) to the raw count matrix, modeling the biological condition of interest to protect it: `adjusted_counts <- ComBat_seq(counts, batch=Batch_K, group=Condition)`.

- Residual Unwanted Variation Removal: On the ComBat-seq-adjusted counts, use RUVg (`RUVSeq` package) with a set of empirical control genes (e.g., genes with least significance from a first-pass DE analysis).

- Differential Expression Analysis: Run DESeq2 on the counts that were passed to RUVg, including the RUV-estimated factors (W_) as covariates in the design formula: `design = ~ W_1 + W_2 + Condition`. The RUVg-normalized counts are best kept for visualization, because testing them while also adjusting for W corrects for the same factors twice.

- Validation: Post-analysis, visualize final PCA and examine distribution of p-values to ensure batch effect removal and biological signal retention.

Visualization of Method Selection Logic

Title: Decision Workflow for Normalization Method Selection

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Normalization/Experiments |

|---|---|

| External RNA Controls Consortium (ERCC) Spike-in Mix | Artificial RNA sequences added to lysate. Provides an absolute standard for technical variation estimation, crucial for RUV methods. |

| Housekeeping Gene Panels | Genes presumed stable across conditions. Used as negative control genes for RUVg or to assess normalization quality. |

| UMI (Unique Molecular Identifier) Adapters | Molecular barcodes attached to each cDNA molecule during library prep. Enables precise correction for PCR amplification bias, reducing technical noise at source. |

| Poly-A RNA Control Spikes | (e.g., from other species). Monitors mRNA capture efficiency. Can serve as a control set for between-sample normalization. |

| Commercial Normalization Kits | (e.g., based on duplex-specific nuclease). Reduce library complexity variation prior to sequencing, mitigating composition-based bias. |

This guide provides a technical support framework for implementing RUV-seq (Remove Unwanted Variation) within the context of thesis research on handling RNA-seq data with high technical variability. The method uses control genes or samples to estimate and adjust for unwanted technical noise, improving downstream differential expression analysis.

Experimental Protocols

Protocol: Identifying Negative Control Genes for RUVg

Purpose: To select genes not influenced by the biological conditions of interest for estimating unwanted variation factors. Steps:

- Perform a standard differential expression analysis (e.g., using edgeR or DESeq2) on your raw count matrix.

- Sort genes by p-value (from lowest to highest).

- Select the least significant genes (e.g., top 5000-10000 genes with the highest p-values) as empirical negative controls. These genes are likely invariant across your biological conditions.

- Alternatively, use spike-in controls or housekeeping genes if available and validated for your system.
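
The selection of empirical negative controls described above can be sketched with edgeR. The counts and group labels are simulated, and `n_controls` is a hypothetical value scaled down to fit the toy matrix; a real transcriptome would use the thousands of genes suggested in the protocol.

```r
library(edgeR)

set.seed(1)
cts <- matrix(rnbinom(1000 * 6, mu = 50, size = 10), nrow = 1000,
              dimnames = list(paste0("g", 1:1000), paste0("S", 1:6)))
group <- factor(rep(c("ctrl", "trt"), each = 3))

# First-pass DE analysis on the raw counts.
y <- DGEList(counts = cts, group = group)
y <- calcNormFactors(y)
design <- model.matrix(~ group)
y <- estimateDisp(y, design)
fit <- glmFit(y, design)
lrt <- glmLRT(fit, coef = 2)

# Rank all genes by p-value (lowest first) and keep the least significant ones.
tab <- topTags(lrt, n = Inf, sort.by = "PValue")$table
n_controls <- 300  # illustrative size for this toy data set
empirical_controls <- tail(rownames(tab), n_controls)
print(head(empirical_controls))
```

Because these controls are derived from the same data, they are only approximately invariant; spike-ins or validated housekeeping genes remain preferable when available.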

Protocol: Implementing RUVg (Using Control Genes)

Purpose: To remove unwanted variation using a set of negative control genes. Steps:

- Format your data: Create a counts matrix (M genes x N samples) and a design matrix specifying your biological groups.

- Define your set of negative control genes (from the preceding protocol).

- Use the `RUVSeq` package in R to run RUVg with the chosen number of factors k.

- Extract the adjusted counts: `normalizedCounts <- normCounts(set_ruvg)`

- Use the estimated unwanted factors (columns `W_1` to `W_k`) as covariates in your downstream DE analysis model.
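
A minimal sketch of the RUVg step follows, assuming the `RUVSeq` package (which attaches EDASeq and Biobase). The counts are simulated and `control_genes` is a hypothetical control set chosen arbitrarily; in practice it would come from spike-ins or the empirical selection above.

```r
library(RUVSeq)

set.seed(1)
cts <- matrix(rnbinom(500 * 6, mu = 100, size = 10), nrow = 500,
              dimnames = list(paste0("g", 1:500), paste0("S", 1:6)))
pd <- data.frame(group = factor(rep(c("ctrl", "trt"), each = 3)),
                 row.names = colnames(cts))
control_genes <- rownames(cts)[401:500]  # hypothetical negative controls

# Wrap counts and sample annotation, then apply upper-quartile scaling.
set <- newSeqExpressionSet(cts, phenoData = pd)
set <- betweenLaneNormalization(set, which = "upper")

# Estimate k = 1 factor of unwanted variation from the control genes.
set_ruvg <- RUVg(set, control_genes, k = 1)

normalizedCounts <- normCounts(set_ruvg)     # for exploration and plotting
W <- pData(set_ruvg)[, "W_1", drop = FALSE]  # covariate for the DE model
print(head(W))
```

The estimated factor is stored alongside the original sample annotation, so it can be referenced directly in a design formula.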

Protocol: Determining the Optimal k (Number of Factors)

Purpose: To choose the number of unwanted factors to remove. Steps:

- Run RUVg or RUVs across a range of k values (e.g., k=1 to 5).

- Perform Principal Component Analysis (PCA) on the adjusted data for each k.

- Plot the PCA results. The optimal k should remove technical batch effects without obscuring primary biological clustering.

- For a quantitative check, the `RUVcorr` package (for example its `RUVNaiveRidge` function) can be used to assess residual correlation.
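
One way to compare candidate k values numerically is to summarize the relative log expression (RLE) spread after each fit. The sketch below is an assumption-laden illustration: it uses the matrix interface of `RUVg`, simulated counts with a gene-specific batch effect, a hypothetical control set, and a helper `rle_spread()` that is not part of any package.

```r
library(RUVSeq)

set.seed(1)
n_genes <- 500
batch <- rep(c(0, 1), times = 3)
mu <- matrix(100, n_genes, 6)
# Gene-specific multiplicative batch effect in batch 1 samples.
mu[, batch == 1] <- mu[, batch == 1] * runif(n_genes, 0.5, 2)
cts <- matrix(rnbinom(length(mu), mu = as.vector(mu), size = 10), nrow = n_genes,
              dimnames = list(paste0("g", 1:n_genes), paste0("S", 1:6)))
control_genes <- rownames(cts)[301:500]  # hypothetical negative controls

# Hypothetical helper: mean per-sample IQR of the RLE values.
rle_spread <- function(cnt) {
  logc <- log2(cnt + 1)
  rle <- logc - apply(logc, 1, median)  # deviation from each gene's median
  mean(apply(rle, 2, IQR))
}

ks <- 1:3
spread <- sapply(ks, function(k) {
  rle_spread(RUVg(cts, control_genes, k = k)$normalizedCounts)
})
print(data.frame(k = ks, rle_spread = spread))
```

A smaller spread suggests less residual technical variation, but it should be read together with the PCA plots, since a very large k can also flatten biological signal.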

Troubleshooting Guides & FAQs

Q1: My biological groups are collapsing after RUV-seq normalization. What went wrong?

A: This indicates over-correction, likely because k is too high or your control genes are not suitable. Reduce k. Re-evaluate your control genes: ensure they are truly invariant biologically. Consider using spike-ins or switching to the RUVs method if you have replicate samples.

Q2: How do I choose between RUVg, RUVs, and RUVr? A: See the decision table below.

Q3: The RUV-seq analysis is removing all variation, and my DE results are empty. How to fix?

A: This is often due to incorrect specification of the model. Run the DE analysis with edgeR or DESeq2 on the original counts and include the estimated factors of unwanted variation (e.g., W_1, W_2) as covariates in your design formula, rather than testing the RUV-normalized counts on their own. For example, in edgeR the design can be built with `model.matrix(~ W_1 + group)`.
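
The edgeR pattern can be sketched as follows. The counts are simulated, and `W_1` is a random stand-in for a factor that would normally come from `RUVg`; both are assumptions made only to keep the example self-contained.

```r
library(edgeR)

set.seed(1)
cts <- matrix(rnbinom(500 * 6, mu = 100, size = 10), nrow = 500,
              dimnames = list(paste0("g", 1:500), paste0("S", 1:6)))
pd <- data.frame(
  group = factor(rep(c("ctrl", "trt"), each = 3)),
  W_1   = rnorm(6),  # stand-in for the RUV-estimated factor
  row.names = colnames(cts)
)

# Unwanted factor first, variable of interest last.
design <- model.matrix(~ W_1 + group, data = pd)

y <- DGEList(counts = cts, group = pd$group)
y <- calcNormFactors(y)
y <- estimateDisp(y, design)
fit <- glmFit(y, design)

# Test the group effect, adjusted for W_1.
lrt <- glmLRT(fit, coef = ncol(design))
print(topTags(lrt, n = 5))
```

Placing the variable of interest last makes `coef = ncol(design)` select it without hard-coding a column index.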

Q4: Can I use RUV-seq with single-cell RNA-seq data?

A: Direct application is problematic due to data sparsity. Use methods specifically designed for scRNA-seq like RUVIII (in the scMerge package) or fastRUV-III, which use replicate-based controls.

Data Presentation

Table 1: Comparison of RUV-seq Method Variants

| Method | Control Type | Ideal Use Case | Key Assumption |

|---|---|---|---|

| RUVg | Negative control genes (e.g., spike-ins, housekeeping genes). | Experiments with reliable, a priori known invariant genes. | Control genes are not influenced by biology of interest. |

| RUVs | Replicate/negative control samples (technical replicates or sample groups). | Designs with technical replicates or groups where differences are purely technical. | Differences within replicate sets are purely unwanted variation. |

| RUVr | Residuals from a first-pass GLM regression. | When no good controls are available; uses residuals as noise estimate. | Residuals from the initial model primarily represent unwanted variation. |

Table 2: Impact of Parameter k on Differential Expression Results

| k value | Median P-value Distribution | Number of Significant DE Genes (FDR<0.05) | Observed Batch Effect in PCA |

|---|---|---|---|

| k=0 (Raw) | Skewed towards low p-values (inflation) | 1250 | Strong batch clustering |

| k=1 | More uniform, peak near 1 | 850 | Batch effect reduced |

| k=2 | Uniform | 820 | Biological clusters clear, batch minimal |

| k=3 | Slight peak at 1 (conservative) | 780 | Biological clusters slightly diffuse |

Visualizations

Title: RUVg Normalization Workflow

Title: Choosing the Right RUV-seq Method

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for RUV-seq Implementation

| Item | Function in RUV-seq | Example/Note |

|---|---|---|

| Spike-in RNA Controls | Provide known, invariant transcripts for perfect negative controls in RUVg. | ERCC (External RNA Controls Consortium) mixes or SIRV sets. |

| Housekeeping Gene Panels | Candidate endogenous negative control genes for RUVg. | Must be validated per experiment (e.g., GAPDH, ACTB may vary). |

| Technical Replicate Samples | Required for the RUVs method to estimate within-group variation. | Aliquots of the same biological sample processed separately. |

| RUVSeq R/Bioconductor Package | Core software suite implementing RUVg, RUVs, and RUVr algorithms. | Version >= 1.34.0. Primary tool for implementation. |

| EdgeR or DESeq2 Package | Downstream differential expression analysis after RUV adjustment. | Used with the W matrix as a covariate in the design formula. |

| High-Quality RNA-seq Library Prep Kit | Minimizes library-specific technical variation a priori. | Kits with low technical noise (e.g., Illumina Stranded mRNA). |

Integrating Batch Correction into DESeq2 and edgeR Workflows

Troubleshooting Guides & FAQs

Q1: My DESeq2 model fails to converge after adding a batch term. What should I do?

A: This often occurs with small sample sizes or a batch variable with too many levels relative to sample number. First, verify your design formula using `design(dds)`. Consider simplifying the model if you have fewer than 3-4 replicates per group per batch. Raising the number of GLM fitting iterations can also help: `maxit` is an argument of `nbinomWaldTest()`, so run `estimateSizeFactors()`, `estimateDispersions()`, and then `nbinomWaldTest(dds, maxit=1000)` in place of the single `DESeq()` call. Filtering out genes with very low counts before fitting removes many of the rows that fail to converge. Note that `lfcThreshold` in `results()` only changes the hypothesis being tested and has no effect on model fitting. Pre-correcting with `limma::removeBatchEffect` on log2(normalized counts + 1) produces log-scale values that are useful for visualization but are not valid input for DESeq2, which expects raw integer counts, so keep batch in the design instead.

Q2: After batch correction in edgeR, my dispersion estimates are extremely low or zero. Why?

A: Over-correction is the likely cause. If you used removeBatchEffect() on the counts prior to estimateDisp(), you may have removed biological signal. In edgeR, always incorporate batch as a term in your design matrix (e.g., ~ batch + group). Then, estimate dispersions using this design: estimateDisp(y, design). Do not correct the counts input to estimateDisp. This allows the model to account for batch-related variability in its dispersion estimates.

Q3: How do I diagnose if batch correction is necessary or has worked?

A: Perform Principal Component Analysis (PCA) before and after correction. Use the plotPCA function in DESeq2 on the rlog or vst transformed data. For edgeR, use plotMDS on the CPM values. Look for a clustering of samples by batch in the initial plot. After applying correction within your model, samples should cluster primarily by experimental group, not batch. A formal test like PERMANOVA (via vegan::adonis2) on the sample distances can quantify batch effect significance pre- and post-correction.

Q4: Can I include a continuous covariate (e.g., RIN score) alongside a batch factor?

A: Yes, both DESeq2 and edgeR support this. In DESeq2, the design would be ~ RIN + batch + condition. In edgeR, the design matrix is constructed similarly: model.matrix(~ RIN + batch + group). Ensure the continuous covariate is centered or scaled if its numeric range is very large, as this improves model stability. The coefficient for the covariate will then be interpreted as the change in expression per unit change in the covariate.
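
A small base R sketch of such a design matrix is shown below. The sample table is hypothetical, and `RIN_c` is an illustrative name for the centered covariate.

```r
# Hypothetical sample annotation.
pd <- data.frame(
  RIN   = c(9.1, 8.7, 7.9, 8.2, 6.5, 9.4, 7.2, 8.8),
  batch = factor(rep(c("b1", "b2"), each = 4)),
  group = factor(rep(c("ctrl", "trt"), times = 4))
)

# Center the continuous covariate so the intercept refers to an average-RIN sample.
pd$RIN_c <- as.numeric(scale(pd$RIN, center = TRUE, scale = FALSE))

design <- model.matrix(~ RIN_c + batch + group, data = pd)
print(colnames(design))

# The design must be full rank for all coefficients to be estimable.
full_rank <- qr(design)$rank == ncol(design)
print(full_rank)
```

The same `design` matrix can be passed to edgeR, and the equivalent formula can be used as the DESeq2 design after adding `RIN_c` to the sample table.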

Q5: I have a paired design (e.g., patient-matched samples) with a batch effect. How do I model this?

A: For paired designs with an additional technical batch, use a design that accounts for both. In DESeq2: `~ patient + batch + treatment`. Here, patient absorbs the paired variability. In edgeR: create a design matrix with `model.matrix(~ patient + batch + treatment)`; the intercept-free form `model.matrix(~0 + patient + batch + treatment)` fits the same model, and in both cases the treatment effect is the last coefficient. Batch can only be estimated if it varies within at least some patients. If all samples from each patient were processed in a single batch, the batch term is already absorbed by patient and should be dropped. Keep the variable of interest (treatment) last in the formula.
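
Whether batch is estimable alongside the pairing variable can be checked from the rank of the design matrix before fitting anything. The sample layout below is hypothetical and serves only to contrast an estimable and a confounded arrangement.

```r
# Hypothetical paired layout: 4 patients, each sampled pre and post treatment.
pd <- data.frame(
  patient   = factor(rep(paste0("P", 1:4), each = 2)),
  treatment = factor(rep(c("pre", "post"), times = 4), levels = c("pre", "post")),
  batch     = factor(c("b1", "b2", "b1", "b2", "b2", "b1", "b2", "b1"))
)

# Batch varies within patients, so all terms are estimable.
design_ok <- model.matrix(~ patient + batch + treatment, data = pd)
rank_ok <- qr(design_ok)$rank

# Confounded alternative: each patient processed entirely in one batch.
pd$batch_nested <- factor(rep(c("b1", "b2"), each = 4))
design_bad <- model.matrix(~ patient + batch_nested + treatment, data = pd)
rank_bad <- qr(design_bad)$rank

print(c(columns = ncol(design_ok), rank_ok = rank_ok, rank_bad = rank_bad))
```

A rank below the number of columns signals that at least one term (here the nested batch) cannot be separated from the others and should be removed.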

Q6: What is the difference between svaseq/RUVSeq and including batch in the design?

A: Including a known batch in the design directly models and adjusts for its effect. svaseq (from sva package) and RUVSeq are used to estimate unknown or unmodeled batch factors or confounders from the data itself. svaseq uses control genes or the full data to estimate surrogate variables (SVs), which are then added to the DESeq2/edgeR design matrix (e.g., ~ SV1 + SV2 + group). RUVSeq uses negative control genes or empirical controls to estimate factors of unwanted variation. These methods are crucial when the batch is not explicitly recorded.

Experimental Protocol: Integrating Surrogate Variable Analysis (SVA) with DESeq2

Objective: To identify and adjust for unknown batch effects and confounders in an RNA-seq differential expression analysis.

Materials: R (v4.3+), DESeq2 (v1.40+), sva (v3.48+), ggplot2.

Method:

- Prepare Data: Create a DESeqDataSet object `dds` with a basic design reflecting your primary variable of interest (e.g., `~ group`).

- Initial Modeling: Run a preliminary DESeq2 step (at minimum, size-factor estimation) to obtain normalized counts.

- Estimate Surrogate Variables: Use the `svaseq` function on the normalized, filtered count matrix.

- Integrate SVs into DESeq2: Append the significant SVs to the `colData` of your `dds` object and update the design formula.

- Re-run DESeq2: Perform the final differential expression analysis with the adjusted design.

- Validation: Generate PCA plots colored by `group` and by each `SV` to confirm adjustment.
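
The method above can be sketched as follows, assuming the DESeq2 and sva packages. The counts are simulated with a hidden factor affecting a subset of genes, the number of surrogate variables is fixed at two for illustration, and the filtering cutoff is an arbitrary choice.

```r
library(DESeq2)
library(sva)

set.seed(1)
hidden <- rep(c(0, 1), times = 4)       # unrecorded technical factor
mu <- matrix(100, nrow = 1000, ncol = 8)
mu[1:300, hidden == 1] <- 250           # hidden factor shifts 300 genes
cts <- matrix(rnbinom(length(mu), mu = as.vector(mu), size = 5), nrow = 1000,
              dimnames = list(paste0("g", 1:1000), paste0("S", 1:8)))
coldata <- data.frame(group = factor(rep(c("ctrl", "trt"), each = 4)),
                      row.names = colnames(cts))

# Basic design, then size factors to obtain normalized counts.
dds <- DESeqDataSetFromMatrix(cts, coldata, design = ~ group)
dds <- estimateSizeFactors(dds)
norm_counts <- counts(dds, normalized = TRUE)
norm_counts <- norm_counts[rowMeans(norm_counts) > 1, ]

# Full model (variable of interest) and null model (intercept only).
mod  <- model.matrix(~ group, colData(dds))
mod0 <- model.matrix(~ 1, colData(dds))
svseq <- svaseq(norm_counts, mod, mod0, n.sv = 2)

# Append the SVs to the sample table and update the design.
dds$SV1 <- svseq$sv[, 1]
dds$SV2 <- svseq$sv[, 2]
design(dds) <- ~ SV1 + SV2 + group

dds <- DESeq(dds)
res <- results(dds)
print(summary(res$padj))
```

Correlating `svseq$sv` with any recorded technical variables afterwards helps to interpret what each surrogate variable captured.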

Data Presentation: Comparison of Batch Correction Methods

Table 1: Key Characteristics of Batch Adjustment Approaches in DESeq2 and edgeR

| Method | Package/Function | Input Data | When to Use | Key Advantage | Key Limitation |

|---|---|---|---|---|---|

| Design Matrix | DESeq2: `design = ~ batch + group`; edgeR: `model.matrix(~ batch + group)` | Raw Counts | Known, discrete batch effects. | Statistically rigorous, preserves full count distribution for dispersion estimation. | Requires explicit recording of batch. Can use many degrees of freedom. |

| ComBat-seq | `sva::ComBat_seq` | Raw Counts | Multiple, known discrete batch effects with complex designs. | Models batch effect directly on counts, suitable for RNA-seq. Can handle small sample sizes. | May over-correct if batch and condition are confounded. Output is adjusted counts, which are then re-fed to DESeq2/edgeR. |

| Surrogate Variable Analysis (SVA) | `sva::svaseq` | Normalized, Filtered Counts | Unknown batch effects or confounders. | Discovers hidden factors without prior knowledge. | Risk of removing biological signal if SVs correlate with condition. Requires careful interpretation. |

| RUV Methods | `RUVSeq::RUVg/s/r` | Normalized Counts | Unknown batch effects with sets of negative control genes or empirical controls. | Flexible use of control genes; multiple algorithmic variants (RUVg, RUVs, RUVr). | Performance highly dependent on quality of control gene set. |

| RemoveBatchEffect (Pre-correction) | `limma::removeBatchEffect` | log2(CPM/norm counts) | Visualization (PCA/MDS) only. Not for DE analysis. | Cleans data for clear visualization of biological groups. | Removes variability, invalidating downstream statistical tests in DESeq2/edgeR if used on input counts. |

Table 2: Impact of Batch Correction on Simulated RNA-seq Data (n=12, 2 groups, 2 batches)

| Metric | No Correction (DESeq2) | Batch in Design (DESeq2) | SVA Correction (DESeq2) |

|---|---|---|---|

| PCA: % Variance Explained (Batch) | PC1: 45% | PC1: <8% | PC1: <5% |

| PCA: % Variance Explained (Condition) | PC2: 22% | PC1: 65% | PC1: 70% |

| False Discovery Rate (FDR) at α=0.05 | 0.38 (High) | 0.052 | 0.048 |

| True Positive Rate (Power) | 0.89 | 0.85 | 0.83 |

| Number of DEGs (FDR<0.05) | 1250 | 1023 | 998 |

The Scientist's Toolkit

Research Reagent & Computational Solutions

| Item/Resource | Function & Application in Batch Correction |

|---|---|

| Negative Control Genes (e.g., ERCC Spike-ins, Housekeeping Genes) | Used in RUVSeq methods to estimate factors of unwanted variation. Provide a stable signal across samples from which technical noise can be modeled. |

| `sva` R Package (v3.48+) | Contains `ComBat_seq` for direct count adjustment and `svaseq` for surrogate variable estimation. Primary tool for handling unknown confounders. |

| `RUVSeq` R Package (v1.34+) | Provides multiple algorithms (RUVg, RUVs, RUVr) for batch correction using control genes or residuals. Integrates smoothly with edgeR/DESeq2 workflows. |

| `limma` R Package (v3.56+) | Provides the `removeBatchEffect` function. Crucial Note: Use only for visualizing data (PCA/MDS plots), not as a pre-processing step before DESeq2/edgeR's statistical testing. |

| `DEGreport` R Package | Useful for generating diagnostic plots post-correction, such as clustering heatmaps and PCA plots colored by batch/condition, to visually assess correction efficacy. |

| `ggplot2` & `pheatmap` | Essential for creating publication-quality diagnostic plots (PCA, boxplots of expression) before and after correction to validate the procedure. |

Workflow & Relationship Diagrams

Title: RNA-seq Batch Correction Decision & Integration Workflow

Title: Integration Points for Batch Variables in DESeq2 and edgeR

Troubleshooting Guides & FAQs

Q1: After applying batch correction (e.g., ComBat) to our multi-institution RNA-seq dataset, some biologically relevant subtype signals appear diminished. What went wrong? A: This is often a sign of over-correction, where the algorithm mistakes strong biological signal for batch effect. Diagnose by:

- Inspect PCA Plots: Generate PCA plots colored by both batch and known biological groups (e.g., cancer subtype) before and after correction. If the biological groups form distinct clusters before correction but merge afterwards, over-correction is likely.

- Use Negative Controls: Include known, stable housekeeping genes or positive control samples across batches in your analysis. Their variance should decrease after correction; if they become artificially correlated, the model is too aggressive.

- Solution: Refine the correction model. For `sva::ComBat`, consider using the `mean.only=TRUE` option if batch effects are primarily additive. Alternatively, use a method like `limma::removeBatchEffect` with a design matrix that explicitly preserves your biological covariates of interest.

Q2: Our diagnostic plots show strong batch effects remain after standard correction. What advanced steps can we take? A: Persistent batch effects suggest complex, non-linear artifacts or batch-by-condition interactions.

- Diagnose Interaction: Check if the batch effect magnitude differs between sample groups (e.g., is stronger in tumor vs. normal). A model incorporating an interaction term may be needed.

- Explore Non-Linear Methods: Implement algorithms designed for deep, non-linear correction:

- Harmony: Integrates across batches without over-correction.

- scRNA-seq inspired: Methods like Seurat's CCA integration or Scanorama, though designed for single-cell data, can be adapted for complex bulk RNA-seq batch issues.

- Protocol for Harmony on Bulk RNA-seq:

Q3: How do we validate that batch correction improved data quality without losing biological truth? A: Employ a multi-metric validation framework. Calculate the metrics below on your data before and after correction.

Table 1: Key Metrics for Batch Correction Validation

| Metric | Formula/Interpretation | Desired Change Post-Correction |

|---|---|---|

| Average Silhouette Width (by Batch) | Measures cluster compactness. Ranges from -1 to 1. | Decrease. Lower values indicate batch labels are less predictive of sample proximity. |

| Principal Component Regression (PCR) - R² | Variance in top PCs explained by batch. | Decrease. Less variance attributable to batch. |

| Biological Variance Preservation | Variance explained by key biological covariate (e.g., ER status in breast cancer). | Preserve or Increase. Biological signal should remain or strengthen. |

| Distance Between Positive Controls | Mean pairwise distance between replicate controls across batches. | Decrease. Technical replicates should cluster more tightly. |
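
The principal component regression metric from Table 1 can be computed in base R. The sketch below uses a hypothetical helper `pcr_r2()`, simulated log-expression data, and a naive per-batch mean centering that stands in for a real correction method; it is only appropriate here because batch and biology are balanced by construction.

```r
# Hypothetical helper: variance-weighted R^2 of the top PCs regressed on batch.
pcr_r2 <- function(expr, batch, n_pcs = 5) {
  pca <- prcomp(t(expr), center = TRUE)
  n_pcs <- min(n_pcs, ncol(pca$x))
  w <- pca$sdev[seq_len(n_pcs)]^2
  r2 <- sapply(seq_len(n_pcs), function(i) {
    summary(lm(pca$x[, i] ~ batch))$r.squared
  })
  sum(w * r2) / sum(w)
}

set.seed(1)
batch <- factor(rep(c("b1", "b2"), times = 4))
expr <- matrix(rnorm(500 * 8, mean = 8, sd = 0.5), nrow = 500)
expr[1:200, batch == "b2"] <- expr[1:200, batch == "b2"] + 2  # batch shift

# Naive stand-in for correction: align each batch's gene means to the overall mean.
corrected <- expr
for (b in levels(batch)) {
  idx <- batch == b
  corrected[, idx] <- expr[, idx] - rowMeans(expr[, idx]) + rowMeans(expr)
}

r2_before <- pcr_r2(expr, batch)
r2_after  <- pcr_r2(corrected, batch)
print(c(before = r2_before, after = r2_after))
```

Running the same helper with a biological covariate in place of `batch` gives the complementary "biological variance preservation" check.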

Q4: For drug development, how do we handle batch effects introduced when adding new cohorts to a discovery dataset? A: This is a common challenge in extending biomarker studies. A reference-based correction is recommended.

- Protocol: Using a Fixed Reference with ComBat:

- Step 1: Designate the original large cohort as the reference batch.

- Step 2: When adding the new cohort as the query batch, apply ComBat in a reference-based mode. Using the `ref.batch` parameter anchors the correction to the original dataset's distribution.

- Critical Note: Always sequence the new cohort with a subset of original samples as inter-batch controls to empirically assess and correct for the technical gap.
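
A reference-based ComBat call might look like the sketch below, assuming the `sva` package and log-scale expression values. The data, the batch labels `discovery` and `new_cohort`, and the `condition` factor are simulated placeholders.

```r
library(sva)

set.seed(1)
expr <- matrix(rnorm(300 * 12, mean = 8, sd = 1), nrow = 300,
               dimnames = list(paste0("g", 1:300), paste0("S", 1:12)))
batch <- factor(rep(c("discovery", "new_cohort"), times = c(8, 4)))
condition <- factor(c(rep(c("A", "B"), each = 4), rep(c("A", "B"), each = 2)))

# Simulated technical shift in the newly added cohort.
expr[, batch == "new_cohort"] <- expr[, batch == "new_cohort"] + 1.5

# Protect the biological condition and anchor the adjustment to the discovery cohort.
mod <- model.matrix(~ condition)
corrected <- ComBat(dat = expr, batch = batch, mod = mod,
                    ref.batch = "discovery")

# Compare cohort means before and after adjustment.
print(tapply(colMeans(expr), batch, mean))
print(tapply(colMeans(corrected), batch, mean))
```

Anchoring to the original cohort means previously reported results on that cohort do not need to be regenerated when new batches arrive.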

Experimental Protocol: A Standardized Batch Correction Workflow

Title: Systematic Batch Effect Correction and Validation for Multi-Batch RNA-seq Analysis.

1. Pre-processing & Quality Control:

- Input: Raw count matrices from all batches.

- Filter lowly expressed genes (e.g., keep genes with >10 counts in ≥20% of samples).

- Perform exploratory data analysis (EDA): PCA, heatmaps of sample distances, colored by batch, lab, sequencing date, and biological conditions.
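
The low-expression filter from the pre-processing step can be written in a few lines of base R. The count matrix is simulated, with half of the genes given a low mean so that the filter has something to remove.

```r
set.seed(1)
# Simulated counts: genes 1-50 lowly expressed, genes 51-100 well expressed.
cts <- matrix(rnbinom(100 * 10, mu = rep(c(2, 50), each = 50), size = 2),
              nrow = 100,
              dimnames = list(paste0("g", 1:100), paste0("S", 1:10)))

# Keep genes with more than 10 counts in at least 20% of samples.
min_samples <- ceiling(0.2 * ncol(cts))
keep <- rowSums(cts > 10) >= min_samples
filtered <- cts[keep, , drop = FALSE]

print(c(before = nrow(cts), after = nrow(filtered)))
```

The thresholds are the example values from the protocol and would normally be tuned to sequencing depth and group size.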

2. Model Selection and Correction:

- If batch effects are linear and independent of biological group: Apply ComBat or limma::removeBatchEffect.

- If effects are complex or integrated clustering is the goal: Apply Harmony on PCA embeddings.

- Always include a model matrix (`mod`) in ComBat/limma to protect primary biological variables of interest (e.g., cancer subtype, treatment status).

3. Post-Correction Validation:

- Regenerate all EDA plots from Step 1 using the corrected data.

- Quantitatively assess using metrics from Table 1.

- Perform downstream analysis (Differential Expression) both with and without the batch variable in the statistical model to confirm robustness of findings.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for RNA-seq Batch Management

| Item | Function & Rationale |

|---|---|

| Universal Human Reference RNA (UHRR) | A pooled RNA standard from multiple cell lines. Spiked into samples across batches to monitor and correct for technical variability via linear modeling. |

| External RNA Controls Consortium (ERCC) Spike-in Mix | Synthetic RNA transcripts at known concentrations. Added pre-library prep to diagnose batch effects in amplification, sequencing depth, and to calibrate measurements. |

| Inter-Batch Control Samples | Aliquots from a single, large biological sample (e.g., a well-characterized cell line pool) included in every sequencing batch. Serves as the empirical gold standard for batch correction algorithms. |

| RNase Inhibitors & Stabilization Reagents (e.g., RNAstable) | Critical for multi-site studies to prevent degradation-induced bias, which can be confounded with batch effect. Ensures input material integrity is consistent. |

Visualization

Title: Batch Correction Decision Workflow

Title: Goal of Batch Correction: Remove Technical Noise, Keep Biology

Diagnosing and Fixing Common Artifacts in Problematic Datasets

Welcome to the Technical Support Center for RNA-seq Analysis. This resource is designed within the context of research on Handling RNA-seq data with high technical variability, providing troubleshooting guides and FAQs for researchers, scientists, and drug development professionals.

FAQs & Troubleshooting Guides

Q1: My principal component analysis (PCA) plot shows sample clustering primarily by batch, not by experimental group. Is this a red flag?

A: Yes, this is a primary red flag for significant technical variability (batch effect). When samples from different experimental groups within the same batch cluster more closely together than replicates of the same condition across batches, it indicates batch effects are overshadowing biological signals. You must apply batch correction methods (e.g., ComBat, limma's removeBatchEffect) after careful evaluation, and document this step thoroughly.

Q2: I observe high correlation between technical replicates but very low correlation between biological replicates. What does this mean? A: This pattern strongly suggests that technical noise (e.g., library preparation date, reagent lot, personnel) is a major source of your data's variance. While technical replicates should be highly correlated, biological replicates should also show reasonable correlation (typically Pearson's R > 0.8 for most organisms/tissues). Low biological replicate correlation invalidates most downstream differential expression analyses.

Q3: My negative controls or blank samples show unexpectedly high numbers of reads or specific gene expression. What should I do?

A: This is a critical red flag for contamination or index hopping (in multiplexed runs). First, check the percentage of reads aligning to your target organism versus others. For suspected index hopping, consult the percentage of reads with undetermined barcodes from the sequencing facility's report (a rate > 1-2% is concerning). Re-analyze data with tools like decontam (R package) to identify and remove contaminant sequences.

Q4: The distribution of read counts across samples (boxplot of logCPM) shows markedly different medians or spreads. Is this problematic? A: Yes, significant differences in library size or distribution are major technical artifacts. They can arise from differences in RNA input, cDNA synthesis efficiency, or sequencing depth. Normalization (e.g., TMM in edgeR, or median-of-ratios in DESeq2) is designed to correct for this. If extreme outliers remain post-normalization, consider excluding those samples after investigating the wet-lab protocol notes.
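
To make the median-of-ratios idea concrete, the sketch below reimplements the size-factor calculation in base R on a tiny hand-made matrix. The helper name `median_of_ratios()` is hypothetical, and DESeq2's own `estimateSizeFactors()` should be used in real analyses.

```r
# Hypothetical helper implementing median-of-ratios size factors.
median_of_ratios <- function(counts) {
  log_counts <- log(counts)
  log_geo_means <- rowMeans(log_counts)  # log geometric mean per gene
  usable <- is.finite(log_geo_means)     # drops genes with any zero count
  apply(log_counts[usable, , drop = FALSE], 2, function(col) {
    exp(median(col - log_geo_means[usable]))
  })
}

# Toy example: sample S2 was sequenced exactly twice as deeply as S1.
cts <- cbind(S1 = c(10, 20, 30, 40), S2 = c(20, 40, 60, 80))
rownames(cts) <- paste0("g", 1:4)

sf <- median_of_ratios(cts)
normalized <- sweep(cts, 2, sf, "/")

print(sf)
print(normalized)
```

After dividing by the size factors the two samples have identical profiles, showing that a pure depth difference is removed.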

Q5: How can I distinguish a true high-variance gene from one showing variability due to technical artifacts? A: Plot the gene's expression (e.g., logCPM) against the sample's overall sequencing depth or against batch variables. Technically variable genes will often show a pattern correlated with these factors. Additionally, inspect the coefficient of variation (CV) across biological replicates within the same condition. A CV exceeding 50% often warrants caution unless the gene is known to be highly dynamic.
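
The within-condition coefficient of variation check can be sketched in base R. The CPM values and gene names below are invented, and the 50% cutoff is the rule of thumb from the answer above rather than a fixed standard.

```r
# Hypothetical CPM values for four biological replicates of one condition.
cpm <- rbind(
  geneA = c(100, 110, 95, 105),
  geneB = c(10, 60, 5, 120)
)
colnames(cpm) <- paste0("rep", 1:4)

# CV is computed on the linear (not log) scale: sd divided by mean.
cv <- apply(cpm, 1, function(x) sd(x) / mean(x))
high_cv_genes <- names(cv)[cv > 0.5]

print(round(cv, 3))
print(high_cv_genes)
```

Genes flagged this way can then be plotted against library size or batch to see whether the variability tracks a technical factor.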

The following table summarizes critical metrics used to spot technical variability in RNA-seq data.

| Metric | Calculation/Description | Acceptable Range | Red Flag Threshold | Suggested Action |
|---|---|---|---|---|
| Sequencing Saturation | Percentage of library molecules sequenced. | >70-80% for standard discovery. | < 50% | Sequence deeper or improve library complexity. |
| % Aligned Reads | Reads mapping to reference genome/transcriptome. | >70-90% (species/tool dependent). | < 50% | Check RNA quality, adapter contamination, reference. |
| % rRNA Reads | Reads aligning to ribosomal RNA. | < 5-10% (for poly-A selection). | > 30% | Use more effective rRNA depletion. |
| Inter-Replicate Correlation (Pearson's R) | Correlation of logCPM between biological replicates. | R > 0.80-0.85 (tissue-dependent). | R < 0.70 | Investigate wet-lab protocol; potential outlier. |
| Undetermined Index % | Percentage of reads with unrecognized barcodes. | < 1-2%. | > 5% | Indicates severe index hopping; contact core facility. |
| 3'/5' Bias (for stranded kits) | Ratio of coverage at 3' end vs. 5' end of transcripts. | ~1:1 for intact RNA. | > 3:1 or < 1:3 | RNA degradation or poor cDNA synthesis efficiency. |
| Library Size Variation | Total reads per sample before normalization. | Within 2-3 fold range. | > 5-10 fold difference | Check for failed library prep; apply robust normalization. |
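
The red-flag thresholds in the table can be encoded as a simple lookup. The sketch below is one possible encoding, covering the five single-value metrics. The metric key names are hypothetical, and the thresholds are copied from the table rather than derived independently.

```python
# Red-flag thresholds taken from the table above; key names are hypothetical.
RED_FLAGS = {
    "sequencing_saturation_pct": ("lt", 50),
    "aligned_reads_pct": ("lt", 50),
    "rrna_reads_pct": ("gt", 30),
    "replicate_pearson_r": ("lt", 0.70),
    "undetermined_index_pct": ("gt", 5),
}

def red_flags(metrics):
    """Return the names of supplied metrics that cross their red-flag threshold."""
    flagged = []
    for name, (direction, limit) in RED_FLAGS.items():
        if name not in metrics:
            continue  # metric not measured for this sample
        value = metrics[name]
        if (direction == "lt" and value < limit) or (direction == "gt" and value > limit):
            flagged.append(name)
    return flagged

# Hypothetical QC values for one sample.
sample_qc = {"aligned_reads_pct": 45, "rrna_reads_pct": 8, "undetermined_index_pct": 6}
print(red_flags(sample_qc))
```

Values between the acceptable range and the red-flag threshold are deliberately not flagged here. A fuller report might add a separate warning tier for them.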

Experimental Protocols

Protocol 1: Systematic Quality Control Assessment for RNA-seq Data

Purpose: To generate a standardized report on technical variability metrics. Steps:

- Raw Read Assessment: Use `FastQC` on all raw FASTQ files. Consolidate reports with `MultiQC`. Pay special attention to per-base sequence quality, adapter content, and overrepresented sequences.

- Alignment & Quantification: Align reads to a reference genome/transcriptome using a splice-aware aligner (e.g., STAR, HISAT2). Quantify reads per gene using featureCounts or HTSeq.

- Initial R Analysis: Load count matrix into R. Calculate and visualize:

- Library sizes (total counts per sample).

- Distribution of logCPM (boxplots).

- PCA plot (top 500 most variable genes) colored by `Batch` and by `Experimental Group`.

- Sample-to-sample distance heatmap.

- Metric Calculation: Using the count matrix and sample metadata, calculate all metrics in the table above. Format into a QC report.
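
Two of the computations behind the protocol's plots, selecting the most variable genes and building the sample-to-sample distance matrix, can be sketched in plain Python. The protocol itself performs this analysis in R, so the following is only an illustration of the logic. It assumes log-scale expression values, and all names are hypothetical.

```python
import math
from statistics import pvariance

def top_variable_genes(log_expr, n_top=500):
    """Rank genes by variance across samples and keep the n_top most variable."""
    ranked = sorted(log_expr, key=lambda gene: pvariance(log_expr[gene]), reverse=True)
    return ranked[:n_top]

def sample_distances(log_expr, genes):
    """Euclidean distance between every pair of samples over the chosen genes."""
    n_samples = len(next(iter(log_expr.values())))
    dist = [[0.0] * n_samples for _ in range(n_samples)]
    for i in range(n_samples):
        for j in range(i + 1, n_samples):
            d = math.sqrt(sum((log_expr[g][i] - log_expr[g][j]) ** 2 for g in genes))
            dist[i][j] = dist[j][i] = d
    return dist

# Hypothetical log-expression values: gene -> one value per sample (4 samples).
log_expr = {"g1": [1, 1, 5, 5], "g2": [2, 2, 2, 2], "g3": [0, 1, 0, 1]}
genes = top_variable_genes(log_expr, n_top=2)
print(genes)
print(sample_distances(log_expr, genes))
```

The resulting matrix is what a heatmap would display. The same gene subset would normally feed the PCA.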

Protocol 2: Wet-Lab Spike-In Control Experiment

Purpose: To explicitly monitor technical variability across samples/batches. Methodology:

- Spike-In Selection: Use exogenous RNA controls (e.g., ERCC RNA Spike-In Mix). Dilute to a concentration series covering several orders of magnitude.

- Sample Processing: Spike a fixed volume of the same ERCC dilution series into a constant amount (e.g., 500 ng) of each sample's total RNA before library preparation.

- Library Prep & Sequencing: Proceed with standard library preparation (poly-A selection or rRNA depletion) and sequencing.

- Analysis: Quantify spike-in reads separately. The log2 expression of each spike-in should be consistent across samples. High variance in spike-in counts, especially those spiked at similar levels, directly quantifies technical noise introduced during library prep and sequencing.
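
The analysis step can be sketched as a per-spike standard deviation of log2 expression across samples. This sketch assumes spike-in counts are scaled by each sample's total library size before the log transform, which is a simplification. Spike identifiers and counts are hypothetical.

```python
import math
from statistics import stdev

def spike_in_sd(spike_counts, library_sizes):
    """Standard deviation of log2(CPM + 1) across samples for each spike-in."""
    result = {}
    for spike, counts in spike_counts.items():
        logs = [math.log2(c / size * 1e6 + 1) for c, size in zip(counts, library_sizes)]
        result[spike] = stdev(logs)
    return result

# Hypothetical spike-in counts across three samples.
library_sizes = [1_000_000, 2_000_000, 3_000_000]
spike_counts = {
    "spike_A": [100, 200, 300],   # scales with depth: consistent
    "spike_B": [100, 900, 150],   # does not scale with depth: noisy
}
print(spike_in_sd(spike_counts, library_sizes))
```

Spike-ins with a near-zero standard deviation behave consistently. Larger values point to technical noise introduced between spiking and sequencing.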

Visualizations

Title: RNA-seq Technical Variability Spotting Workflow

Title: Signal vs. Noise in RNA-seq Data Model

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function/Benefit | Example Product/Brand |
|---|---|---|
| ERCC Exogenous Spike-In Controls | Defined mix of synthetic RNA transcripts added to samples before library prep. Provides an absolute standard to measure technical variance and sensitivity. | Thermo Fisher Scientific ERCC Spike-In Mix |
| UMI (Unique Molecular Index) Adapters | Oligonucleotide tags that label each individual mRNA molecule before PCR amplification. Allows bioinformatic correction for PCR duplicate bias. | Illumina TruSeq UD Indexes, SMARTer smRNA-Seq Kit (Takara Bio) |
| Ribo-Depletion/Ribo-Zero Kits | Removes cytoplasmic and mitochondrial ribosomal RNA, preserving both coding and non-coding RNA, including degraded samples. Crucial for non-poly-A applications. | Illumina Ribo-Zero Plus, NEBNext rRNA Depletion Kit |
| RNA Integrity Number (RIN) Assay | Microfluidic capillary electrophoresis (e.g., Bioanalyzer, TapeStation) to quantitatively assess RNA degradation. The primary QC gatekeeper. | Agilent Bioanalyzer RNA Nano Kit |
| Automated Library Prep Systems | Reduces hands-on time and inter-personnel variability through liquid handling robotics. Improves reproducibility across large batches. | Beckman Coulter Biomek, Hamilton STARlet |
| Commercial "One-Pot" Library Prep Kits | Integrated workflows that combine multiple enzymatic steps, reducing sample loss and manipulation-induced variability. | NEBNext Ultra II, Illumina Stranded mRNA Prep |
| Phylogenetic Diversity Spikes | Synthetic RNAs from non-target organisms (e.g., Salmon, Phage) added to monitor for contamination and cross-sample carryover. | Lexogen SIRV & ERCC Spike-In Set |

Troubleshooting Guide & FAQ

Q1: After processing my RNA-seq data, my PCA plot shows a single sample far from all others. Has normalization failed? What should I check first?

A1: Possibly. An extreme outlier can distort normalization for the whole dataset, but a single distant sample is not by itself proof that normalization failed. First, rule out technical artifacts:

- Library Size: Compare the total read counts (library size) for all samples. The outlier likely has a drastically different total.

- Contamination: Run FastQC on the raw reads of the outlier sample and check for overrepresented sequences.

- Sample Swap/Mislabeling: Verify metadata. Could the tissue type or condition be different?

- Protocol Deviation: Check the lab log. Was there a reagent lot change or protocol interruption for this sample?

Table 1: Initial Diagnostic Checks for a Suspected Outlier Sample

| Check | Method | Expected Result | Outlier Indicator |
|---|---|---|---|
| Total Read Count | `colSums(count_matrix)` | Similar across all samples (within 2-3 fold). | Count orders of magnitude higher/lower. |
| Gene Detection Rate | `colSums(count_matrix > 0)` | Similar across samples in same condition. | Drastically fewer or more genes detected. |
| % of Reads in Top 20 Genes | Per sample, sum the counts of the 20 most abundant genes and divide by the total count. | Typically < 30% for a balanced library. | Often > 50-60%, suggesting dominance by few genes. |
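
The three checks in Table 1 can be computed together. The sketch below is a plain-Python equivalent of the per-sample column sums, assuming the count matrix is held as a mapping from sample name to a list of gene counts. That layout and the function name are hypothetical.

```python
def outlier_diagnostics(count_matrix, n_top=20):
    """Per-sample total reads, detected genes, and fraction of reads in the top genes."""
    report = {}
    for sample, counts in count_matrix.items():
        total = sum(counts)
        top_sum = sum(sorted(counts, reverse=True)[:n_top])
        report[sample] = {
            "total_reads": total,
            "genes_detected": sum(1 for c in counts if c > 0),
            "top_fraction": top_sum / total if total else float("nan"),
        }
    return report

# Hypothetical matrix: "balanced" spreads reads evenly, "dominated" does not.
count_matrix = {
    "balanced": [5] * 40,
    "dominated": [10] * 20 + [0] * 10,
}
for sample, stats in outlier_diagnostics(count_matrix).items():
    print(sample, stats)
```

Comparing these values across samples, rather than against fixed cutoffs alone, is usually what reveals the outlier.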

Q2: Standard median-of-ratios (DESeq2) or TMM (edgeR) normalization didn't work. What are my next strategic options?

A2: When classical scaling methods fail, employ robust or conditional strategies:

- Use of Trimmed Means: Re-run TMM normalization with a more severe trim than the default (in edgeR's calcNormFactors, the defaults are logratioTrim = 0.3 and sumTrim = 0.05) to minimize outlier influence.

- Housekeeping-Gene Normalization: If you have validated, stable housekeeping genes in your system, normalize counts directly to their geometric mean.

- Quantile Normalization (with caution): Forces all sample distributions to be identical. Use it only if you believe the outlier's distribution is a technical artifact, and be aware that it can drastically alter biological signals.

- Leave-Out Normalization: Normalize the dataset excluding the outlier sample, then project the outlier into the normalized space using the calculated size factors from the main cohort. This prevents the outlier from distorting factors for all other samples.

Experimental Protocol: Leave-Out Normalization for DESeq2
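
A minimal sketch of the leave-out idea follows, written in plain Python rather than with DESeq2 itself. It reimplements a median-of-ratios size factor in which the per-gene reference (geometric mean) is computed from the main cohort only, and the outlier is then scaled against that reference. The data layout and names are hypothetical. In DESeq2, a comparable effect may be achievable by supplying cohort-only geometric means through the `geoMeans` argument of `estimateSizeFactors`, but that mapping is an assumption here.

```python
import math
from statistics import median

def leave_out_size_factors(counts, outlier):
    """Median-of-ratios size factors with the reference built without the outlier.

    counts: dict sample -> list of gene counts (same gene order in every sample).
    outlier: name of the sample excluded from the reference.
    """
    cohort = [s for s in counts if s != outlier]
    n_genes = len(counts[outlier])
    # Log geometric mean per gene over the main cohort; genes with a zero are skipped.
    log_ref = []
    for g in range(n_genes):
        values = [counts[s][g] for s in cohort]
        if all(v > 0 for v in values):
            log_ref.append(sum(math.log(v) for v in values) / len(values))
        else:
            log_ref.append(None)
    factors = {}
    for sample, row in counts.items():
        log_ratios = [
            math.log(row[g]) - log_ref[g]
            for g in range(n_genes)
            if log_ref[g] is not None and row[g] > 0
        ]
        factors[sample] = math.exp(median(log_ratios))
    return factors

# Hypothetical counts: "b" is "a" at twice the depth; "out" is dominated by one gene.
counts = {"a": [10, 20, 30, 40], "b": [20, 40, 60, 80], "out": [1000, 5, 5, 5]}
print(leave_out_size_factors(counts, outlier="out"))
```

Because the reference ignores the outlier, the size factors of the main cohort do not change however extreme the outlier's counts are. Only the outlier's own factor depends on them.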

Q3: When should I completely remove an outlier sample versus trying to salvage it?

A3: Use this decision framework:

Table 2: Criteria for Removing vs. Retaining an Outlier Sample

| Factor to Consider | Favor REMOVAL | Favor RETAINING/Robust Normalization |
|---|---|---|
| Technical Proof | Clear evidence (failed QC, wrong protocol, contamination). | No technical fault found; could be valid biological extremity. |
| Replication Impact | Outlier is a single replicate; other replicates for its condition cluster tightly. | Outlier is a key biological group (e.g., rare disease subtype) or has no replicate. |
| Effect on Downstream Analysis | Changes the conclusion of differential expression dramatically for many genes. | Global gene expression patterns remain consistent with or without it. |
| Biological Plausibility | Expression profile suggests a different tissue or cell type entirely. | Extreme response within expected biological range for the condition. |

Q4: How can I design my experiment upfront to mitigate the impact of such outliers?

A4: Proactive experimental design is key:

- Increase Biological Replicates: More replicates (n>=5) provide statistical robustness to identify and withstand a single outlier.

- Randomize Processing: Do NOT process all replicates for one condition together. Spread them across library prep batches and sequencing lanes.

- Include Positive Controls: Spike-in RNAs (e.g., ERCC) can help disentangle technical from biological variation.

- Metadata Rigor: Document every potential technical covariate (extraction kit lot, technician, sequencing run date, etc.).
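
The randomization advice above can be made concrete with a stratified assignment: samples are shuffled within each condition and then dealt across batches in turn, so no batch holds a single condition. This is one possible scheme among several. The function name, seed handling, and sample identifiers are hypothetical.

```python
import random

def assign_batches(samples, n_batches, seed=0):
    """Spread each condition's samples across batches in a random order.

    samples: list of (sample_id, condition) tuples.
    Returns a dict mapping sample_id -> batch index (0-based).
    """
    rng = random.Random(seed)  # fixed seed so the layout can be documented
    by_condition = {}
    for sample_id, condition in samples:
        by_condition.setdefault(condition, []).append(sample_id)
    assignment = {}
    offset = 0
    for condition in sorted(by_condition):
        ids = by_condition[condition]
        rng.shuffle(ids)
        for i, sample_id in enumerate(ids):
            assignment[sample_id] = (i + offset) % n_batches
        offset += len(ids)  # continue the round-robin to keep batch sizes even
    return assignment

# Hypothetical design: 6 control and 6 treated samples, 3 library prep batches.
samples = [(f"ctrl_{i}", "ctrl") for i in range(6)] + [(f"trt_{i}", "trt") for i in range(6)]
layout = assign_batches(samples, n_batches=3)
print(layout)
```

Recording the seed and the resulting layout alongside the other metadata keeps the assignment reproducible and auditable.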

Visualization: Outlier Decision Workflow

Title: RNA-seq Outlier Handling Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Managing Technical Variability

| Item | Function in Outlier Mitigation |
|---|---|
| ERCC RNA Spike-In Mix (Thermo Fisher) | Artificial RNA controls at known concentrations. Used to monitor technical sensitivity, normalization accuracy, and diagnose assay failures. |
| UMI Adapters (e.g., from SMART-seq) | Unique Molecular Identifiers tag individual mRNA molecules to correct for PCR amplification bias, a common source of extreme counts. |
| RIN Standard RNA (Agilent) | Quality control standard to calibrate Bioanalyzer/TapeStation systems, ensuring accurate RNA Integrity Number assessment across batches. |
| Duplicate Plasma/Universal Human Reference RNA (UHRR) | A consistent biological reference sample run across experiments/batches to assess inter-study technical variation and align datasets. |
| RNase Inhibitors (e.g., Protector) | Critical during cDNA synthesis to prevent degradation artifacts that can create low-quality outliers. |
| Magnetic Bead Cleanup Kits (SPRI) | Consistent post-PCR cleanup is vital for library quality; using the same bead:buffer ratio across all samples minimizes yield variability. |

Technical Support Center: Troubleshooting High Technical Variability in RNA-seq Experiments

Troubleshooting Guides

Problem: Unexpected Batch Effects in Final PCA Plot

- Symptoms: Samples cluster strongly by sequencing date, library prep batch, or technician, rather than by experimental condition.

- Diagnosis: Inadequate randomization during sample processing. Technical factors have systematically confounded biological signals.

- Solution: Implement a full randomization protocol for all steps post-nucleic acid extraction. Re-analyze using a linear model that includes "batch" as a blocking factor (e.g., in `limma` or `DESeq2`). See Protocol 1.
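
Including batch as a blocking factor only works when batch and condition are not completely confounded. The sketch below is a simple pre-check, not part of limma or DESeq2. It cross-tabulates batch against condition and reports the case where every batch contains a single condition, in which the two effects cannot be separated by a linear model. All names are hypothetical.

```python
def batch_condition_table(batches, conditions):
    """Count samples for every (batch, condition) combination."""
    table = {}
    for batch, condition in zip(batches, conditions):
        row = table.setdefault(batch, {})
        row[condition] = row.get(condition, 0) + 1
    return table

def is_fully_confounded(batches, conditions):
    """True when each batch holds only one condition (batch nested in condition)."""
    table = batch_condition_table(batches, conditions)
    return all(len(row) == 1 for row in table.values())

# Hypothetical layouts for four samples.
confounded = is_fully_confounded(["b1", "b1", "b2", "b2"], ["ctrl", "ctrl", "trt", "trt"])
balanced = is_fully_confounded(["b1", "b1", "b2", "b2"], ["ctrl", "trt", "ctrl", "trt"])
print(confounded, balanced)
```

When the check reports full confounding, no statistical adjustment can recover the treatment effect. The remedy is the randomized processing described in the solution above.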

Problem: High Within-Group Variance Obscuring Differential Expression

- Symptoms: Low power to detect DE genes, high dispersion estimates in `DESeq2`, poor agreement between replicates.

- Diagnosis: Heterogeneous experimental material (e.g., tissues from multiple subjects/litters, cells from different passages) was not grouped and controlled for.

- Solution: Apply a blocked experimental design. Use paired or multi-level analysis to account for the known source of variability. See Protocol 2.

Problem: Insufficient Statistical Power Despite Adequate Replication

- Symptoms: Power analysis suggested n=5 per group is sufficient, but results are non-significant and unstable.

- Diagnosis: Unaccounted-for technical noise inflates observed variance, reducing effective power.

- Solution: Introduce technical replicates at the most variable step (e.g., library prep) to quantify this noise. Use blocking in the design matrix to partition this variance. Never use technical replicates as biological replicates in analysis.
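
One common way to keep technical replicates from being counted as biological replicates is to sum their counts per biological sample before differential expression testing. DESeq2 offers `collapseReplicates` for this purpose. The plain-Python sketch below illustrates the same summing logic, with a hypothetical data layout and names.

```python
def collapse_technical_replicates(counts, bio_sample_of):
    """Sum gene counts of all runs that belong to the same biological sample.

    counts: dict run_id -> list of gene counts (same gene order in every run).
    bio_sample_of: dict run_id -> biological sample identifier.
    """
    collapsed = {}
    for run_id, row in counts.items():
        bio = bio_sample_of[run_id]
        if bio not in collapsed:
            collapsed[bio] = list(row)  # copy so the input is not modified
        else:
            collapsed[bio] = [a + b for a, b in zip(collapsed[bio], row)]
    return collapsed

# Hypothetical runs: mouse1 has two technical replicates, mouse2 has one.
runs = {"run1": [5, 0, 12], "run2": [7, 1, 10], "run3": [3, 2, 8]}
mapping = {"run1": "mouse1", "run2": "mouse1", "run3": "mouse2"}
print(collapse_technical_replicates(runs, mapping))
```

Summing should follow, not replace, the step of quantifying technical noise from the individual runs, since that information is lost once the replicates are merged.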

Frequently Asked Questions (FAQs)