Navigating Variants of Unknown Significance in Whole Exome Sequencing: From Interpretation to Clinical Action and Drug Discovery

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to manage Variants of Unknown Significance (VUS) in Whole Exome Sequencing (WES). It covers the foundational challenge of VUS in clinical and research settings, explores advanced methodologies and bioinformatics tools for interpretation, details strategies for troubleshooting and optimizing diagnostic pipelines, and discusses validation frameworks for establishing clinical actionability. By synthesizing current evidence and best practices, this guide aims to enhance diagnostic yield, facilitate the translation of genetic findings into therapeutic insights, and improve patient outcomes in rare diseases and complex disorders.

Understanding VUS: Defining the Challenge in Genomic Medicine and Research

The Nature and Origin of VUS in Whole Exome Sequencing Data

FAQs: Understanding VUS in WES

What is a Variant of Uncertain Significance (VUS)?

A VUS is a genetic variant whose impact on human health cannot be determined as either pathogenic (disease-causing) or benign with currently available evidence [1] [2]. It represents a "grey area" in genetic interpretation that complicates clinical decision-making [3].

Why do VUS results occur so frequently in WES?

The number of VUS detected increases in proportion to the amount of DNA sequenced [1]. Whole Exome Sequencing analyzes approximately 30 million base pairs of protein-coding sequence, generating large amounts of variant data [4]. Several factors contribute to high VUS rates:

- Limited population data: VUS are more likely for patients from populations with limited representation in genomic datasets (e.g., those not of European ancestry) [1].

- Expanded gene panels: Large multi-gene panels may include genes with doubtful disease associations, increasing VUS findings [1].

- Evidence scarcity: For many rare variants, sufficient clinical or functional evidence does not yet exist to determine pathogenicity [2].

What is the typical ratio of VUS to pathogenic findings?

VUS substantially outnumber pathogenic findings in clinical sequencing [1]. The table below summarizes findings from key studies:

| Scenario | VUS to Pathogenic Variant Ratio | Details |
|---|---|---|
| Breast Cancer Predisposition (Meta-analysis) | 2.5:1 [1] | VUS were 2.5 times more frequent than pathogenic findings |
| 80-Gene Cancer Panel (2,984 patients) | ~3.6:1 [1] | 47.4% of patients had a VUS vs. 13.3% with pathogenic/likely pathogenic findings |
| Overall Rare Diseases (ClinVar database) | Majority [2] | Most of the 94,287 rare disease variants were categorized as VUS |
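The ~3.6:1 figure for the 80-gene panel can be reproduced from the two percentages in the table. Note that it compares proportions of patients (with at least one VUS versus with a P/LP finding), not raw variant counts. A minimal check:

```python
# Proportions reported for the 80-gene cancer panel cohort (2,984 patients)
vus_rate = 47.4         # % of patients with at least one VUS
pathogenic_rate = 13.3  # % of patients with a pathogenic/likely pathogenic finding

# Patient-level ratio of VUS results to P/LP results
ratio = vus_rate / pathogenic_rate
print(f"VUS : P/LP = {ratio:.1f} : 1")  # prints "VUS : P/LP = 3.6 : 1"
```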

What happens to VUS over time?

As new evidence emerges, a VUS may be reclassified. Current data suggest that 10-15% of reclassified VUS are upgraded to likely pathogenic/pathogenic, while the remainder are downgraded to likely benign/benign [1]. However, reclassification is slow: one study of cancer-related testing found that only 7.7% of unique VUS were resolved over a 10-year period [1].

What are the practical consequences of a VUS finding?

- Clinical decision-making: VUS results fail to resolve the clinical question for which testing was performed [1]

- Psychological impact: May cause worry, anxiety, disappointment, or frustration [1]

- Clinical management: Potential for unnecessary procedures or clinical surveillance despite professional guidelines recommending against intervention based solely on VUS [1]

- Healthcare resources: Significant time required for variant interpretation and reinvestigation [1]

Troubleshooting Guide: Addressing VUS Challenges

Challenge: High VUS Rates in Specific Populations

Problem: Patients from underrepresented populations receive VUS results more frequently.

Solution:

- Utilize population-specific databases: Incorporate ancestry-matched population frequency data from sources like gnomAD [2]

- Implement family studies: Perform segregation analysis when possible - lack of segregation with disease provides strong evidence for benign classification [1]

- Advocate for diversity: Support research efforts to expand genomic datasets for underrepresented populations [1] [5]

Challenge: Automated Tools Provide Conflicting VUS Interpretations

Problem: Different computational tools yield varying classifications for the same variant.

Solution:

- Use expert-curated guidelines: Follow ACMG/AMP/ACGS standards for variant interpretation [6] [2] [7]

- Combine multiple evidence sources: Integrate population data, computational predictions, functional data, and clinical findings [1]

- Verify with specialized tools: Implement gene-specific interpretation methods like GAVIN that combine ExAC allele frequencies with SnpEff and CADD predictions [2]

- Maintain human oversight: Automated tools show significant limitations with VUS and still require expert review [6]

Challenge: Determining Clinical Actionability of VUS

Problem: Difficulty deciding whether a VUS should influence patient management.

Solution:

- Apply network-based analysis: Tools like VarClass utilize biological network associations to prioritize VUS with potential clinical significance [3]

- Implement functional studies: Multiplexed Assays for Variant Effect (MAVEs) can generate functional data at scale [5]

- Establish institutional protocols: Develop clear guidelines for when to pursue functional validation based on gene-disease validity and clinical context [5] [7]

Experimental Protocols for VUS Resolution

Protocol 1: Comprehensive Variant Interpretation Workflow

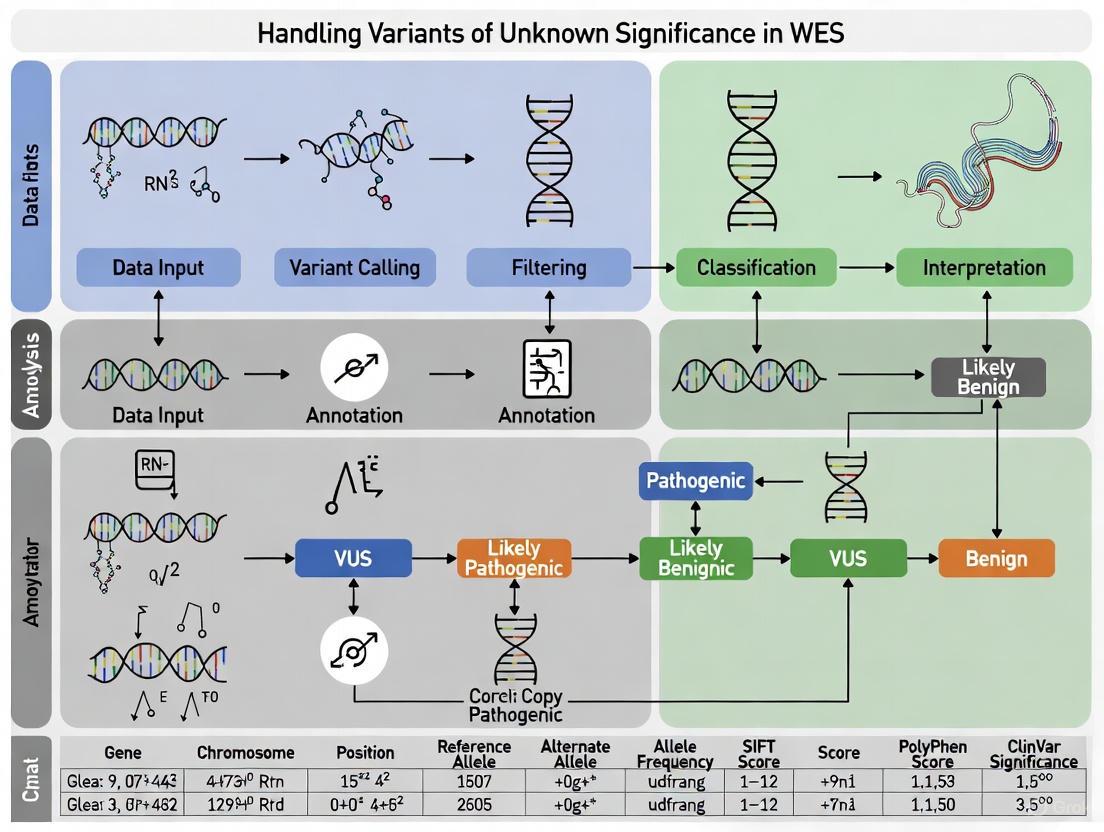

The following diagram illustrates the systematic approach to variant interpretation recommended by major genetics organizations:

Step-by-Step Methodology:

- Variant Identification: Call variants from WES data using standardized pipelines [7]

- Population Frequency Filtering: Compare against population databases (gnomAD) - variants with frequency higher than disease prevalence provide strong evidence for benign classification [1]

- Computational Prediction: Apply in silico tools (CADD, SIFT, PolyPhen) to predict functional impact [2]

- Database Review: Search clinical databases (ClinVar) for existing interpretations [2]

- Functional Evidence Integration: Incorporate data from functional assays when available [5]

- Segregation Analysis: Perform family studies when possible - segregation with disease provides evidence of pathogenicity [1]

- Evidence Synthesis: Apply ACMG/AMP guidelines to integrate all evidence sources [6] [2]

- Final Classification: Assign a classification based on the cumulative evidence; if the evidence remains insufficient or conflicting, the variant stays a VUS [7]
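The population frequency step can be sketched as a simple filter. This is a minimal illustration, assuming each variant is a dict with hypothetical `id` and `gnomad_af` fields. It applies the rule stated above (allele frequency higher than disease prevalence) together with the ACMG/AMP BA1 stand-alone benign threshold (allele frequency above 5%). Real pipelines usually refine the prevalence comparison for inheritance mode, penetrance and genetic heterogeneity, which this sketch ignores.

```python
from typing import Dict, List

BA1_THRESHOLD = 0.05  # ACMG/AMP BA1: allele frequency > 5% is stand-alone benign evidence


def frequency_flag(allele_freq: float, disease_prevalence: float) -> str:
    """Label a variant by comparing population allele frequency with disease prevalence.

    Simplified rule: ignores penetrance, inheritance mode and heterogeneity.
    """
    if allele_freq > BA1_THRESHOLD:
        return "benign_standalone"  # too common for a rare Mendelian disorder
    if allele_freq > disease_prevalence:
        return "benign_strong"      # more common than the disease it would cause
    return "retain"                 # rare enough to remain a candidate


def filter_by_frequency(variants: List[Dict], disease_prevalence: float) -> List[Dict]:
    """Keep only variants that pass the frequency check (hypothetical 'gnomad_af' field)."""
    return [v for v in variants
            if frequency_flag(v["gnomad_af"], disease_prevalence) == "retain"]


variants = [
    {"id": "var1", "gnomad_af": 0.12},      # common polymorphism
    {"id": "var2", "gnomad_af": 0.001},     # more common than a 1-in-10,000 disease
    {"id": "var3", "gnomad_af": 0.000004},  # very rare
]
kept = filter_by_frequency(variants, disease_prevalence=1e-4)
print([v["id"] for v in kept])  # ['var3']
```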

Protocol 2: Functional Validation of VUS

The diagram below outlines the functional validation pathway for VUS resolution:

Detailed Methodologies:

Mini-gene Splicing Assays (as used in a DEPDC5 epilepsy study [8]):

- Construct Design: Clone genomic fragments encompassing the VUS and flanking intronic sequences into splicing reporter vectors

- Transfection: Introduce constructs into relevant cell lines

- RNA Analysis: Isolate RNA, perform RT-PCR, and analyze splicing products by gel or capillary electrophoresis

- Validation: Compare splicing patterns between wild-type and VUS constructs to identify aberrant splicing
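When the RT-PCR products are quantified (for example, as capillary electrophoresis peak areas), one common way to compare constructs is percent spliced in (PSI): the fraction of transcripts that include the exon of interest. The sketch below assumes two products per construct (exon-included and exon-skipped) with signals already proportional to molar amounts; the peak areas and the 20-point cut-off for calling aberrant splicing are illustrative assumptions, not published thresholds.

```python
def percent_spliced_in(inclusion_signal: float, skipping_signal: float) -> float:
    """PSI (%) = inclusion / (inclusion + skipping) * 100.

    Assumes signals are proportional to molar amounts of each RT-PCR product.
    """
    total = inclusion_signal + skipping_signal
    if total <= 0:
        raise ValueError("no splicing products detected")
    return 100.0 * inclusion_signal / total


# Hypothetical peak areas for wild-type and VUS minigene constructs
wt_psi = percent_spliced_in(inclusion_signal=9500, skipping_signal=500)
vus_psi = percent_spliced_in(inclusion_signal=3000, skipping_signal=7000)

delta_psi = vus_psi - wt_psi
ABERRANT_DELTA = 20.0  # illustrative cut-off (assumption), not a guideline value
print(f"WT PSI={wt_psi:.1f}%, VUS PSI={vus_psi:.1f}%, dPSI={delta_psi:.1f}")
print("aberrant splicing" if abs(delta_psi) >= ABERRANT_DELTA else "no clear effect")
```

In practice, replicate transfections and a known splice-disrupting control construct would be needed before interpreting a PSI shift as evidence.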

Multiplexed Assays for Variant Effect (MAVEs):

- Variant Library Construction: Generate comprehensive variant libraries covering all possible amino acid substitutions in the protein of interest

- Functional Selection: Express variant libraries in appropriate cellular systems and apply functional selection pressure

- Deep Sequencing: Quantify variant abundance before and after selection using next-generation sequencing

- Variant Effect Mapping: Calculate functional scores for each variant based on enrichment/depletion patterns [5]
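A widely used way to turn before/after read counts into functional scores is the log ratio of each variant's enrichment relative to wild type. The sketch below follows that idea with a pseudocount of 0.5 to avoid division by zero. The variant names and counts are hypothetical, and dedicated MAVE analysis tools add replicate handling and error models that are omitted here.

```python
import math
from typing import Dict


def mave_scores(pre: Dict[str, int], post: Dict[str, int],
                wt_key: str = "WT", pseudocount: float = 0.5) -> Dict[str, float]:
    """Log2 enrichment of each variant relative to wild type.

    score = log2((post_v / post_wt) / (pre_v / pre_wt)). A score near 0 is
    wild-type-like; in an assay that selects for protein function, strongly
    negative scores suggest loss of function.
    """
    pre_wt = pre[wt_key] + pseudocount
    post_wt = post[wt_key] + pseudocount
    scores = {}
    for variant, pre_count in pre.items():
        if variant == wt_key:
            continue
        pre_v = pre_count + pseudocount
        post_v = post.get(variant, 0) + pseudocount  # variant may drop out entirely
        scores[variant] = math.log2((post_v / post_wt) / (pre_v / pre_wt))
    return scores


# Hypothetical read counts before and after functional selection
pre_counts = {"WT": 1000, "var_A": 500, "var_B": 500}
post_counts = {"WT": 2000, "var_A": 50, "var_B": 1000}

for name, score in mave_scores(pre_counts, post_counts).items():
    print(f"{name}: {score:.2f}")
```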

Research Reagent Solutions for VUS Investigation

| Reagent Category | Specific Examples | Function in VUS Resolution |
|---|---|---|
| Computational Prediction Tools | CADD, SIFT, PolyPhen-2, REVEL [2] | Predict functional impact of amino acid substitutions using evolutionary conservation and structural features |
| Variant Interpretation Platforms | PathoMAN, VIP-HL, VarClass [6] [3] | Automate ACMG guideline application and integrate multiple evidence sources for classification |
| Functional Assay Systems | Mini-gene constructs, MAVE libraries, iPSCs [5] [8] | Provide experimental evidence of variant impact on protein function, splicing, or cellular phenotype |
| Population Databases | gnomAD, dbSNP, 1000 Genomes [2] | Determine variant frequency across populations to assess rarity and potential pathogenicity |
| Clinical Databases | ClinVar, ClinGen, LOVD [2] | Access curated information on variant interpretations and gene-disease relationships |
| Network Analysis Tools | VarClass, GeneMANIA [3] | Prioritize VUS through biological network associations and gene-level relationships |

Quantitative Data on VUS Classification Outcomes

VUS Reclassification Statistics:

| Reclassification Direction | Percentage | Supporting Evidence |
|---|---|---|
| Upgraded to Pathogenic/Likely Pathogenic | 10-15% [1] | Accumulation of pathogenic evidence across multiple evidence types |
| Downgraded to Benign/Likely Benign | 85-90% [1] | Benign population frequency, lack of segregation, functional studies showing no deleterious effect |
| Resolved through functional data | 15-75% [5] | Gene-dependent; higher for well-characterized genes like BRCA1, TP53, PTEN |

Evidence Strengths for Variant Interpretation:

| Evidence Type | Strong Evidence Examples | Moderate/Supporting Examples |
|---|---|---|
| Population Data | Variant prevalence higher than disease prevalence [1] | Absent from population databases or very low frequency |
| Segregation Data | Segregation with disease in multiple families [1] | Segregation in single family with limited members |
| Functional Data | Well-validated assays showing deleterious impact [5] | Experimental data from preliminary or non-validated assays |
| Computational Data | Concordant predictions across multiple algorithms [2] | Single algorithm prediction without additional support |

In genomic medicine, a Variant of Uncertain Significance (VUS) is a genetic variant for which there is insufficient evidence to classify it as either pathogenic or benign [9]. This classification is not a definitive result but an acknowledgment of the current limits of genomic knowledge. VUS are common, affecting between 20% and 40% of patients undergoing genetic testing [10]. For rare diseases alone, an analysis of the ClinVar database found that the majority of the 94,287 variants associated with rare diseases were categorized as VUS [2].

The fundamental challenge stems from the gap between our ability to detect genetic variants through advanced sequencing technologies and our understanding of their biological and clinical implications. While next-generation sequencing can identify millions of variants, interpreting their functional impact requires extensive evidence that often does not yet exist [10]. This creates significant challenges for researchers, clinicians, and patients, particularly in the context of Whole Exome Sequencing (WES) research where accurate variant interpretation is crucial for diagnosis and discovery.

VUS Classification and Reporting Frameworks

Standardized Classification Guidelines

Multiple professional organizations have established guidelines for variant classification to standardize interpretation across laboratories:

- ACMG/AMP Guidelines: The American College of Medical Genetics and Genomics (ACMG) and the Association for Molecular Pathology (AMP) use a five-tier classification system: Pathogenic (P), Likely Pathogenic (LP), Variant of Uncertain Significance (VUS), Likely Benign (LB), and Benign (B) [11] [9]. The "likely" categories correspond to >90% confidence in the classification [11].

- Oncology-Specific Frameworks: For somatic variants in cancer, the AMP/ASCO/CAP guidelines established a four-tier system (Tier I-IV), while more recently, ClinGen/CGC/VICC released specialized guidelines for classifying oncogenicity [11].
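The five-tier ACMG/AMP scheme reaches a final class by counting how many criteria are met at each evidence strength. The sketch below is a minimal encoding of the combining rules published in the 2015 ACMG/AMP standards: it takes counts of pathogenic criteria (very strong, strong, moderate, supporting) and benign criteria (stand-alone, strong, supporting) and returns VUS when no rule is satisfied or when pathogenic and benign rules conflict. Later refinements, such as points-based Bayesian adaptations, are not modelled.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    """Number of ACMG/AMP criteria met at each strength level."""
    pvs: int = 0  # pathogenic very strong (PVS1)
    ps: int = 0   # pathogenic strong (PS1-PS4)
    pm: int = 0   # pathogenic moderate (PM1-PM6)
    pp: int = 0   # pathogenic supporting (PP1-PP5)
    ba: int = 0   # benign stand-alone (BA1)
    bs: int = 0   # benign strong (BS1-BS4)
    bp: int = 0   # benign supporting (BP1-BP7)


def is_pathogenic(e: Evidence) -> bool:
    return ((e.pvs >= 1 and (e.ps >= 1 or e.pm >= 2
                             or (e.pm >= 1 and e.pp >= 1) or e.pp >= 2))
            or e.ps >= 2
            or (e.ps >= 1 and (e.pm >= 3 or (e.pm >= 2 and e.pp >= 2)
                               or (e.pm >= 1 and e.pp >= 4))))


def is_likely_pathogenic(e: Evidence) -> bool:
    return ((e.pvs >= 1 and e.pm >= 1)
            or (e.ps >= 1 and e.pm >= 1)  # "1 strong + 1-2 moderate"; more is already pathogenic
            or (e.ps >= 1 and e.pp >= 2)
            or e.pm >= 3
            or (e.pm >= 2 and e.pp >= 2)
            or (e.pm >= 1 and e.pp >= 4))


def is_benign(e: Evidence) -> bool:
    return e.ba >= 1 or e.bs >= 2


def is_likely_benign(e: Evidence) -> bool:
    return (e.bs >= 1 and e.bp >= 1) or e.bp >= 2


def classify(e: Evidence) -> str:
    """Apply the combining rules; conflicting or insufficient evidence gives VUS."""
    pathogenic_call = ("Pathogenic" if is_pathogenic(e)
                       else "Likely pathogenic" if is_likely_pathogenic(e) else None)
    benign_call = ("Benign" if is_benign(e)
                   else "Likely benign" if is_likely_benign(e) else None)
    if pathogenic_call and benign_call:
        return "VUS"  # pathogenic and benign criteria contradict each other
    return pathogenic_call or benign_call or "VUS"


print(classify(Evidence(pvs=1, pm=1)))  # Likely pathogenic
print(classify(Evidence(pm=2)))         # VUS
print(classify(Evidence(ps=2, bs=2)))   # VUS (conflicting evidence)
```

Counting criteria is only the last step; deciding whether each criterion is met, and at what strength, is where most expert judgment lies.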

The VUS Reporting Dilemma

A central debate in clinical genetics involves whether and when to report VUS findings. Reporting practices vary significantly across contexts:

- Prenatal Settings: A 2025 study of prenatal exome sequencing found that VUS were reported only in exceptional cases after stringent selection, and that only a minority of them (5 of the 31 reported) were subsequently reclassified as (likely) pathogenic [12]. This cautious approach reflects the time-sensitive nature of prenatal decisions.

- General Practice: Experts generally recommend against using VUS in clinical decision-making, emphasizing that patient management should be based on personal and family history rather than the presence of a VUS alone [9]. Multidisciplinary review is considered essential for VUS management [12].

Quantitative Landscape of VUS in Genomic Databases

Table: VUS Distribution and Reclassification Evidence

| Database/Context | VUS Prevalence | Reclassification Rate | Key Evidence |
|---|---|---|---|
| General Genetic Testing | 20-40% of patients receive a VUS [10] | Not specified | Based on clinical testing cohorts |
| Rare Diseases (ClinVar) | Majority of 94,287 rare disease variants [2] | Not specified | Database analysis as of October 2024 |
| Prenatal WES | 31 VUS reported in 27 pregnancies [12] | 5 of 7 reclassified VUS upgraded to (likely) pathogenic [12] | Retrospective review in Dutch academic hospitals |
| MAVE-Informed Reclassification | Not applicable | 55% (937 of 1,711 VUS) reclassified [10] | Analysis across twelve published studies |

Technical Challenges in VUS Interpretation

Evidence Assessment and Integration

VUS interpretation requires synthesizing multiple types of evidence, each with limitations:

- Population Frequency Data: Databases like gnomAD, dbSNP, and 1000 Genomes provide allele frequency information. Variants too common in general populations are unlikely to cause rare diseases, but absence from databases doesn't guarantee pathogenicity [11] [2].

- Computational Predictions: In silico tools (SIFT, CADD, GERP) predict variant impact using evolutionary conservation and protein structure models, but their predictions are not definitive for clinical classification [2] [10].

- Functional Evidence: Experimental data from biochemical assays or cell-based models can provide direct evidence of functional impact but are often unavailable for specific variants [11] [10].

- Segregation Analysis: Co-segregation of a variant with disease in families provides strong evidence, but is often limited by family size, availability, and late-onset conditions [9].

VUS in Specialized Contexts

- Tumor Suppressors vs. Oncogenes: These gene categories exhibit fundamentally different biological behaviors that affect VUS interpretation. Tumor suppressors typically require loss-of-function variants, while oncogenes are typically activated by gain-of-function variants [11].

- Non-Coding Variants: Variants in regulatory regions present particular challenges as their effects are often quantitative and poorly understood without specialized functional studies [2].

- Different Technical Platforms: Variants may be annotated differently depending on sequencing methodology (e.g., tissue vs. liquid biopsies, with or without matched normal samples), complicating cross-platform comparisons [11].

Research Approaches for VUS Resolution

Advanced Analytical Frameworks

Large-scale statistical approaches are increasingly powerful for VUS resolution:

- Gene Burden Testing: The geneBurdenRD framework, applied to the 100,000 Genomes Project, identified 141 new disease-gene associations by testing enrichment of rare protein-coding variants in cases versus controls [13]. This approach can implicate genes where VUS cluster in specific patient populations.

- Multidimensional Assessment: A holistic approach evaluates variants across multiple biological axes, including splicing impact, mutation interactions, copy number thresholds, and genome-wide signatures [11].
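At its core, gene burden testing asks whether rare, predicted-damaging variants in a gene are carried by more cases than controls. geneBurdenRD uses its own statistical framework, which is not reproduced here; this sketch illustrates the underlying idea with a one-sided Fisher's exact test written with the standard library only, on invented carrier counts.

```python
from math import comb


def burden_fisher_one_sided(case_carriers: int, case_noncarriers: int,
                            ctrl_carriers: int, ctrl_noncarriers: int) -> float:
    """One-sided Fisher's exact test for excess qualifying-variant carriers in cases.

    Returns P(X >= case_carriers), where X is hypergeometric given the table margins.
    """
    n_cases = case_carriers + case_noncarriers
    n_carriers = case_carriers + ctrl_carriers
    n_total = n_cases + ctrl_carriers + ctrl_noncarriers
    n_noncarriers = n_total - n_carriers
    tail = sum(comb(n_carriers, k) * comb(n_noncarriers, n_cases - k)
               for k in range(case_carriers, min(n_carriers, n_cases) + 1))
    return tail / comb(n_total, n_cases)


# Hypothetical counts of rare, predicted-damaging variant carriers in one gene
enriched = burden_fisher_one_sided(5, 95, 1, 999)    # 5/100 cases vs 1/1000 controls
null_like = burden_fisher_one_sided(1, 99, 10, 990)  # 1/100 cases vs 10/1000 controls
print(f"enriched gene p = {enriched:.1e}; null-like gene p = {null_like:.2f}")
```

When the test is run across roughly 20,000 genes, the resulting p-values need multiple-testing correction (for example, Bonferroni), which this sketch leaves out.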

Experimental Solutions: Multiplexed Assays of Variant Effect (MAVE)

MAVEs (of which deep mutational scanning is a widely used form) represent a transformative approach for generating functional data at scale:

- Methodology: These assays systematically generate thousands of missense variants in a gene, introduce them into functional assays, and measure their impact using high-throughput approaches [10].

- Impact: MAVEs have already enabled reclassification of 55% of VUS (937 of 1,711) across twelve studies [10]. The MaveDB database currently contains approximately 2,000 datasets comprising ~7 million variant effect measurements [10].

- Integration with Prediction Tools: MAVE data can refine computational predictors by providing experimental training data, creating a virtuous cycle of improved prediction and classification [10].

Computational and Filtration Strategies

Table: Research Reagent Solutions for VUS Interpretation

| Tool/Category | Primary Function | Application in VUS Resolution |
|---|---|---|
| In Silico Predictors (SIFT, CADD, GERP) [2] | Predict variant impact using evolutionary and structural features | Preliminary variant prioritization; evidence integration |
| Gene-Specific Tools (GAVIN) [2] | Combines gene-specific data with in silico predictions | Context-specific variant interpretation |
| Custom Filtration Pipelines [14] | Population-specific variant filtration | Reduce candidate variants from ~600,000 to 5-15 per case |
| Mathematical Models & ML [2] | Simulate biological outcomes and pattern recognition | Handle complex data relationships for classification |
| Variant Databases (ClinVar, gnomAD, dbSNP) [11] [2] | Aggregate population frequency and clinical assertions | Evidence gathering for classification |

Targeted filtration strategies can dramatically improve VUS interpretation efficiency. In consanguineous populations, focusing on autosomal recessive homozygous variants reduced the number of candidate variants from hundreds of thousands to 5-15 per case while maintaining an 82% detection rate for disease-causing variants [14]. This approach completed analysis in approximately 45 minutes per case compared to 5 hours without filtration [14].
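The recessive-homozygous strategy described above can be expressed as a simple filter. The sketch assumes an annotated variant table with hypothetical fields (`genotype` in VCF-style `1/1` notation, `gnomad_af`, and `consequence` as Sequence Ontology terms); the 1% frequency cut-off and the list of damaging consequences are illustrative choices, not the parameters of the cited pipeline [14].

```python
from typing import Dict, Iterable, List

# Illustrative settings (assumptions, not those of the cited study)
MAX_AF = 0.01
DAMAGING = {"stop_gained", "frameshift_variant", "splice_acceptor_variant",
            "splice_donor_variant", "start_lost", "missense_variant"}


def recessive_homozygous_candidates(variants: Iterable[Dict]) -> List[Dict]:
    """Keep rare, homozygous-alternate variants with a potentially damaging consequence."""
    kept = []
    for v in variants:
        if v["genotype"] not in ("1/1", "1|1"):  # homozygous for the alternate allele
            continue
        if v.get("gnomad_af", 0.0) > MAX_AF:     # absent from gnomAD treated as AF = 0
            continue
        if v["consequence"] not in DAMAGING:
            continue
        kept.append(v)
    return kept


example = [
    {"gene": "GENE1", "genotype": "1/1", "gnomad_af": 0.0002, "consequence": "stop_gained"},
    {"gene": "GENE2", "genotype": "0/1", "gnomad_af": 0.0001, "consequence": "missense_variant"},
    {"gene": "GENE3", "genotype": "1/1", "gnomad_af": 0.25, "consequence": "missense_variant"},
    {"gene": "GENE4", "genotype": "1/1", "consequence": "synonymous_variant"},
]
print([v["gene"] for v in recessive_homozygous_candidates(example)])  # ['GENE1']
```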

FAQs: Troubleshooting Common VUS Challenges

Q1: How should I handle a VUS result in my research when trying to establish a new gene-disease association?

A: Begin by gathering all available evidence across multiple axes: search population databases for frequency data, conduct literature reviews for functional characterization, and use computational predictors for preliminary impact assessment [11] [2]. For stronger evidence, consider family segregation studies if possible [9], and explore whether the gene shows constraint against variation in population databases [13]. Large-scale gene burden testing in cohorts like the 100,000 Genomes Project can provide statistical evidence for novel disease-gene associations [13].

Q2: What is the most efficient workflow for triaging numerous VUS findings in a WES study?

A: Implement a stepwise filtration strategy:

- Start with quality control and population frequency filtering using databases like gnomAD [11] [14]

- Apply inheritance pattern filters based on your patient population and phenotype [14]

- Use impact prediction tools to prioritize loss-of-function and deleterious missense variants [13] [14]

- Consider gene-level characteristics such as constraint metrics (gnomAD o/e scores) and biological relevance to the phenotype [13]

- For remaining candidates, proceed to experimental validation or segregation analysis [9]
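These triage steps can be chained so that each filter narrows the candidate list and the survivors are ranked by gene constraint. This is a minimal sketch: the field names, the 0.1% frequency cut-off, and the use of LOEUF (the upper bound of the gnomAD loss-of-function observed/expected ratio, where lower values indicate stronger constraint) as the sort key are assumptions made for illustration.

```python
from collections import Counter
from typing import Dict, List

HET = {"0/1", "0|1", "1|0"}
HOM = {"1/1", "1|1"}


def is_damaging(v: Dict) -> bool:
    """Predicted loss-of-function or predicted-deleterious missense (hypothetical flags)."""
    return v["lof"] or v["deleterious_missense"]


def triage(variants: List[Dict], mode: str, max_af: float = 0.001) -> List[Dict]:
    """QC/frequency -> inheritance -> impact -> rank by gene constraint (LOEUF)."""
    # 1. Quality control and population frequency (absent from gnomAD treated as AF = 0)
    step = [v for v in variants if v["pass_qc"] and v.get("gnomad_af", 0.0) <= max_af]
    # 2. Inheritance pattern
    if mode == "AD":
        step = [v for v in step if v["genotype"] in HET]
    elif mode == "AR":
        # Homozygous, or >= 2 damaging variants in the same gene
        # (possible compound heterozygote; phase is not checked here)
        hits = Counter(v["gene"] for v in step if is_damaging(v))
        step = [v for v in step if v["genotype"] in HOM or hits[v["gene"]] >= 2]
    else:
        raise ValueError("mode must be 'AD' or 'AR'")
    # 3. Predicted impact
    step = [v for v in step if is_damaging(v)]
    # 4. Lower LOEUF = stronger constraint against loss of function, so rank first
    return sorted(step, key=lambda v: v["loeuf"])


def make_variant(gene, gt, af, qc, lof, dmis, loeuf):
    """Hypothetical helper to build example records."""
    return {"gene": gene, "genotype": gt, "gnomad_af": af, "pass_qc": qc,
            "lof": lof, "deleterious_missense": dmis, "loeuf": loeuf}


cands = [
    make_variant("A", "0/1", 0.0, True, True, False, 0.2),
    make_variant("B", "0/1", 0.0001, True, False, True, 0.9),
    make_variant("B", "0/1", 0.0002, True, True, False, 0.9),
    make_variant("C", "1/1", 0.05, True, True, False, 0.1),  # too common
    make_variant("D", "0/1", 0.0, False, True, False, 0.3),  # fails QC
]
print([v["gene"] for v in triage(cands, "AD")])  # ['A', 'B', 'B']
print([v["gene"] for v in triage(cands, "AR")])  # ['B', 'B']
```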

Q3: How reliable are computational predictions for VUS classification, and which tools should I use?

A: Computational predictions provide supporting evidence but should not be used alone for definitive classification [10]. Current tools have limitations but are improving, especially with new AI approaches [10]. Use multiple complementary tools (e.g., SIFT, CADD, GERP) and consider gene-specific classifiers like GAVIN when available [2]. Remember that predictions are more reliable for some gene categories than others, and functional validation remains the gold standard [11] [10].

Q4: What emerging technologies show the most promise for resolving VUS on a large scale?

A: Multiplexed Assays of Variant Effect (MAVEs) currently represent the most promising approach for large-scale VUS resolution [10]. These high-throughput functional assays can test thousands of variants simultaneously, and the functional data they generate have already enabled reclassification of 55% of the VUS examined across twelve published studies [10]. Additionally, machine learning models trained on expanding genomic datasets are improving prediction accuracy, and large-scale statistical gene burden testing in biobanks is identifying new disease-gene associations [2] [13].

Q5: How should I handle a situation where different classification systems provide conflicting evidence for a VUS?

A: Conflicting interpretations are not uncommon and reflect the evolving nature of genomic evidence. In these situations:

- Carefully evaluate the quality and source of each evidence item, giving more weight to functional studies and large family segregation data over computational predictions alone [11] [10]

- Consider the context of the evidence - some may be more relevant to specific populations or disease mechanisms [11]

- Consult multiple databases and recent literature, as classifications can change rapidly with new evidence [9]

- When possible, generate additional evidence through functional studies or family testing to resolve the conflict [9] [10]

- Document all evidence and the rationale for your final classification decision [9]

The VUS challenge represents both a significant obstacle and an opportunity for advancement in genomic medicine. Current research approaches, including large-scale statistical analyses, multiplexed functional assays, and improved computational predictions, are steadily transforming VUS interpretation. The research community is working toward the National Human Genome Research Institute's goal of solving the VUS problem by 2030 [10], though substantial challenges remain, particularly for non-coding variants and genes with complex biological roles.

For researchers navigating VUS in WES studies, success depends on implementing systematic filtration strategies, leveraging growing public datasets, participating in data sharing initiatives, and maintaining cautious interpretation of results until sufficient evidence accumulates. As genomic technologies continue to evolve and collaborative efforts expand, the current gray zone of VUS will progressively give way to more definitive classifications that enhance both diagnosis and discovery.

In clinical whole exome sequencing (WES), understanding the distinction between different types of findings is crucial for effective research and patient communication.

- Primary Findings: Results relevant to the initial diagnostic question for which sequencing was ordered [15].

- Incidental Findings (IFs): Pathogenic alterations discovered unintentionally in genes unrelated to the diagnostic indication. Some guidelines use the term "unsolicited findings" (UFs) for variants that are found unintentionally, as opposed to actively sought [16] [17].

- Secondary Findings (SFs): Pathogenic alterations in genes not related to the diagnostic indication but that are deliberately sought and analyzed according to professional guidelines [15] [16].

- Variants of Uncertain Significance (VUS): Genetic variants for which available evidence is insufficient to classify them as clearly pathogenic or benign [2].

Frequently Asked Questions (FAQs)

What exactly is a Variant of Uncertain Significance (VUS)?

A VUS is a genetic change identified through sequencing for which current scientific knowledge cannot determine whether it causes disease or is benign. VUS represent a significant challenge in genomics and are among the most common variant classifications in databases like ClinVar [2]. They should not be used for clinical decision-making until more evidence becomes available [18].

What is the difference between incidental and secondary findings?

The key distinction lies in how they are discovered:

- Incidental/Unsolicited Findings: Discovered unintentionally during analysis [16] [17]

- Secondary Findings: Actively sought through deliberate analysis of specific genes based on professional guidelines [15] [16]

International policy documents vary in their terminology and approach to these findings, with some advocating for a more restrictive approach to secondary findings [16].

How common are unsolicited/incidental findings in WES?

Large-scale studies indicate the overall frequency of medically actionable UFs in clinical WES is relatively low. One study of 16,482 individuals found:

| Finding Type | Frequency | Rate |
|---|---|---|
| Any UF | 95/16,482 | 0.58% |
| Medically actionable UF | 86/16,482 | 0.52% |

Source: Lessons learned from unsolicited findings in clinical exome sequencing of 16,482 individuals [17]
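The rates above are simple proportions of the 16,482-person cohort, and recomputing them is a quick sanity check when comparing cohorts of different sizes. This sketch uses only the counts given in the source, including the share of UFs that were medically actionable (86 of 95):

```python
cohort = 16_482
counts = {"Any UF": 95, "Medically actionable UF": 86}

# Per-cohort rates, as reported in the table
for label, n in counts.items():
    print(f"{label}: {n}/{cohort} = {100 * n / cohort:.2f}%")

# Share of all UFs that were medically actionable
actionable_share = 100 * counts["Medically actionable UF"] / counts["Any UF"]
print(f"Actionable share of UFs: {actionable_share:.0f}%")  # prints "Actionable share of UFs: 91%"
```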

The same study found significant differences in UF rates based on analysis strategy:

- Restricted disease-gene panels: 0.03% UF rate

- Whole-exome/Mendeliome analysis: 1.03% UF rate [17]

Which criteria determine if an incidental finding should be reported?

Two main criteria guide reporting decisions [16]:

- Clinical significance: Pathogenicity and medical actionability

- Patient-related factors: Patient preference to know, patient characteristics, and age

Professional consensus emphasizes that medically actionable findings should be disclosed when interventions can change disease course or allow prevention [17]. The ACMG recommends reporting mutations in specific genes associated with conditions where individuals remain asymptomatic for long periods and preventive measures/treatments are available [15].

How should I handle VUS in my research reporting?

Best practices include:

- Clearly label all VUS as having uncertain significance

- Do not use VUS for clinical decision-making [18]

- Implement periodic re-evaluation processes as knowledge evolves

- Report VUS in the context of the specific research question

- Acknowledge limitations in variant interpretation

Troubleshooting Common Scenarios

Scenario: Managing Patient/Subject Anxiety About VUS

Challenge: Research participants express anxiety about receiving a VUS result.

Solution Framework:

- Emphasize that VUS are common findings with unknown implications

- Explain that, among VUS that are eventually reclassified, most are downgraded to benign or likely benign

- Provide a clear timeline for re-evaluation

- Offer resources for genetic counseling support

Scenario: Deciding Whether to Report an Incidental Finding

Challenge: Determining whether a discovered incidental finding meets reporting thresholds.

Solution Framework:

- Apply the clinical significance criteria (pathogenicity, actionability)

- Consider patient/subject preferences and consent agreements

- Consult professional guidelines (ACMG, ESHG, etc.)

- Document the decision-making process thoroughly

- When in doubt, seek multidisciplinary consultation

Experimental Protocols

Protocol 1: Systematic Approach to Variant Classification

Objective: Standardize variant interpretation across the research team

Methodology:

- Variant Identification: Filter variants from WES data using quality metrics

- Database Annotation: Cross-reference with:

  - Population databases (gnomAD)

  - Clinical variant databases (ClinVar)

  - In silico prediction tools (SIFT, PolyPhen-2, CADD)

- Evidence Collection: Gather data on:

  - Population frequency

  - Computational predictions

  - Functional data

  - Segregation data

  - Literature evidence

- ACMG/AMP Guidelines Application: Classify using standardized criteria [2]

- Documentation: Record classification evidence and rationale

Expected Outcomes: Consistent variant classification across the research cohort

Protocol 2: Incidental Finding Evaluation Workflow

Objective: Ensure consistent handling of potential incidental findings

Methodology:

- Initial Identification: Flag pathogenic/likely pathogenic variants in genes unrelated to the primary indication

- Clinical Actionability Assessment: Evaluate whether:

  - Effective interventions exist

  - The condition has significant health implications

  - Early detection improves outcomes

- Consent Verification: Review participant preferences regarding result return

- Multidisciplinary Review: Discuss findings with ethics team, clinicians, researchers

- Decision Documentation: Record reporting decision and rationale
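The gating logic of this workflow can be written down explicitly so that each decision is reproducible and its rationale is recorded. This is a hypothetical sketch: the field names and the rule that all three actionability questions must be answered "yes" are simplifying assumptions, and in practice the multidisciplinary review step may override any automated outcome.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CandidateIF:
    gene: str
    classification: str           # e.g. "Pathogenic", "Likely pathogenic", "VUS"
    related_to_indication: bool
    effective_intervention: bool
    significant_health_impact: bool
    early_detection_helps: bool
    consent_to_return: bool
    log: List[str] = field(default_factory=list)  # documented rationale


def evaluate_if(c: CandidateIF) -> str:
    """Walk the incidental-finding workflow and record the rationale at each gate."""
    if c.related_to_indication or c.classification not in ("Pathogenic", "Likely pathogenic"):
        c.log.append("Not an incidental P/LP finding")
        return "not_applicable"
    if not (c.effective_intervention and c.significant_health_impact
            and c.early_detection_helps):
        c.log.append("Not clinically actionable under local criteria")
        return "do_not_report"
    if not c.consent_to_return:
        c.log.append("Participant declined return of incidental findings")
        return "do_not_report"
    c.log.append("Actionable and consented: refer to multidisciplinary review")
    return "refer_for_review"


finding = CandidateIF("GENE_X", "Pathogenic", False, True, True, True, True)
print(evaluate_if(finding), finding.log)
```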

Research Reagent Solutions

| Reagent/Resource | Function in VUS/IF Research |
|---|---|
| ClinVar Database | Repository of clinically relevant variants with interpretations [2] |
| ACMG/AMP Guidelines | Standardized framework for variant pathogenicity classification [2] |
| Genome Aggregation Database (gnomAD) | Population frequency data for variant filtering [2] |
| In silico Prediction Tools (SIFT, CADD) | Computational prediction of variant impact [2] |
| HGVS Nomenclature Standards | Standardized terminology for clear variant communication [19] |

Workflow Visualization

Variant Interpretation and Reporting Workflow: This diagram illustrates the pathway from initial WES data generation through to reporting decisions for primary, secondary, and incidental findings, highlighting key decision points in the process.

Incidental Finding Rates by Analysis Type

| Analysis Approach | UF Rate | Study Population |
|---|---|---|
| Restricted disease-gene panels | 0.03% | 16,482 individuals [17] |
| Whole-exome/Mendeliome analysis | 1.03% | 16,482 individuals [17] |
| Overall WES cohort | 0.58% | 16,482 individuals [17] |

UF Characteristics from Large Cohort Study

| UF Characteristic | Percentage | Notes |
|---|---|---|
| In ACMG59 genes | 61% | [17] |
| Beyond ACMG59 list | 39% | Categories include disorders similar to ACMG59 (25%), modifiable disorders (7%), reproductive options (2%), pharmacogenetic (5%) [17] |
| Medically actionable | 91% | 86/95 UFs disclosed due to medical actionability [17] |

This technical support resource provides foundational information for researchers navigating the complex landscape of VUS and incidental findings in WES research. Regular consultation with current guidelines and multidisciplinary collaboration remain essential for ethical genomic research practice.

Troubleshooting Guides

Guide 1: Troubleshooting VUS Interpretation in WES Data

Problem: A Variant of Uncertain Significance (VUS) is identified in a gene relevant to your disease model, creating uncertainty for downstream research or validation experiments.

Background: A VUS is a genetic variant for which there is insufficient evidence to classify it as pathogenic or benign [9]. This is a classification of exclusion for alterations that lack key scientific evidence or present conflicting data [11]. In the context of WES research, it is crucial to remember that, per ACMG/AMP guidelines, a VUS should not be used for clinical decision-making [9] [20].

Investigation and Solution:

| Step | Investigation Action | Common Findings & Solutions |
|---|---|---|
| 1 | Verify Data Quality | Finding: Apparent variant is a sequencing artifact. Solution: Check sequencing depth and quality scores; confirm variant with Sanger sequencing if needed. |
| 2 | Interrogate Population Databases | Finding: Variant has a high allele frequency in gnomAD or 1000 Genomes. Solution: If the frequency is higher than expected for the disorder (given its prevalence, inheritance mode, and penetrance), the variant is likely benign. |
| 3 | Query Clinical & Variant Databases | Finding: Variant is listed in ClinVar with conflicting interpretations. Solution: Weigh the evidence from submitters; check if your functional assay can resolve the conflict. |
| 4 | Utilize In-Silico Prediction Tools | Finding: Tools like SIFT, CADD, and GERP provide conflicting scores. Solution: Use meta-predictors or gene-specific calibration (e.g., GAVIN) for more accurate impact inference [2]. |
| 5 | Investigate Gene & Variant Function | Finding: The variant's effect on protein function (e.g., missense, nonsense) is unknown. Solution: Propose a functional study (e.g., cell-based assay) to characterize the variant's biochemical effect. |
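The frequency check in step 2 can be made quantitative by asking how common a single causal variant could possibly be. The sketch below is a simplified derivation under stated assumptions (a rare allele in Hardy-Weinberg proportions, every affected carrier counted once); it is in the spirit of published "maximum credible allele frequency" approaches (e.g., Whiffin et al., 2017) but is not their exact calculator. The function and parameter names, including `allelic_contribution` (the largest share of cases any one variant explains), are hypothetical.

```python
import math


def max_credible_af(prevalence: float, allelic_contribution: float,
                    penetrance: float, inheritance: str) -> float:
    """Upper bound on the population allele frequency of one causal variant.

    Simplified model (assumption): rare allele, Hardy-Weinberg proportions,
    and no other cause of disease among carriers of this variant.
    """
    if not 0 < penetrance <= 1:
        raise ValueError("penetrance must be in (0, 1]")
    # Share of the whole population whose disease this one variant could explain
    attributable = prevalence * allelic_contribution
    if inheritance == "dominant":
        # Heterozygous carriers ~ 2f, so affected carriers ~ 2f * penetrance
        return attributable / (2 * penetrance)
    if inheritance == "recessive":
        # Homozygotes ~ f^2, so affected homozygotes ~ f^2 * penetrance
        return math.sqrt(attributable / penetrance)
    raise ValueError(f"unsupported inheritance model: {inheritance!r}")


def too_common(observed_af: float, **model) -> bool:
    """True if the observed population AF exceeds the credible maximum."""
    return observed_af > max_credible_af(**model)


# Hypothetical dominant disorder: prevalence 1/500, no single variant explains
# more than 2% of cases, penetrance 50%
dominant = dict(prevalence=1 / 500, allelic_contribution=0.02,
                penetrance=0.5, inheritance="dominant")
print(max_credible_af(**dominant))   # about 4e-05
print(too_common(1e-3, **dominant))  # True
```

A variant seen at 0.1% in gnomAD would therefore be far too common to cause this hypothetical disorder on its own, even though 0.1% is well below the disease prevalence of 0.2%.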

Guide 2: Resolving VUS Reclassification Through Data Sharing

Problem: Your research has generated data that could help reclassify a VUS, but the path to formal reclassification is unclear.

Background: VUS reclassification is a collaborative process between the laboratory and the clinician/researcher [9]. Over time, as more evidence becomes available, variants can be reclassified. A recent study found that 91% of reclassified variants were downgraded to "benign," while only 9% were upgraded to "pathogenic" [20]. Data sharing is a critical component of this process and of fostering equitable genomic medicine [21].

Investigation and Solution:

| Step | Investigation Action | Common Findings & Solutions |
|---|---|---|
| 1 | Collate All Available Evidence | Finding: Data is scattered across lab notes, published papers, and internal databases. Solution: Systematically gather all data, including functional assay results, case reports, and segregation data. |
| 2 | Submit Data to Public Databases | Finding: Your functional data is the missing evidence needed for reclassification. Solution: Submit all validated evidence to public repositories like ClinVar. Transparency in reporting is extremely important for the genetic community [9]. |
| 3 | Engage in Collaborative Consortia | Finding: Your single case is suggestive but not definitive. Solution: Share your findings with groups like ClinGen, VICC, or disease-specific research networks to find collaborating families or functional labs [21] [11]. |
| 4 | Contact the Original Testing Lab | Finding: The diagnostic lab that issued the original VUS report is unaware of your new data. Solution: Formally present your evidence to the lab; they have the curation expertise to initiate a reclassification. |

Frequently Asked Questions (FAQs)

FAQ 1: VUS Fundamentals

Q1: What exactly is a VUS, and why is it so common in WES? A: A VUS is a genetic variant for which the available evidence is insufficient to determine whether it is disease-causing (pathogenic) or harmless (benign) [9]. It is common in WES because sequencing the ~20,000 genes in the exome reveals many rare variants that science has not yet had the chance to study in enough individuals to determine their clinical significance [2] [20].

Q2: Does a VUS result mean my research participant has an elevated disease risk? A: Not necessarily. A VUS means the variant's effect on disease risk is currently unknown, so it should not be used for clinical decision-making [9] [20]. Clinical and research management decisions should be based on personal and family history rather than on the presence of the VUS [9].

Q3: Are all VUSs created equal? A: No. Many clinical laboratories now subclassify VUS into categories such as VUS-high (evidence leans towards pathogenic), VUS-mid (equivocal or no evidence), and VUS-low (evidence leans towards benign) [22]. This helps prioritize variants for further investigation.

FAQ 2: Reclassification Processes

Q4: How often are VUSs reclassified, and in what direction? A: Reclassification is an ongoing process. Data from four clinical laboratories shows distinct reclassification rates for different VUS subclasses [22]. A study from MD Anderson found that when reclassification occurs, about 91% of the time a VUS is downgraded to "benign," and only 9% of the time is it upgraded to "pathogenic" [20].

Q5: What is the most powerful evidence for reclassifying a VUS? A: The evidence is cumulative. Key types include:

- Genetic Evidence: Finding the same variant in multiple unrelated individuals with the same disease (case-control data) [9].

- Segregation Data: Demonstrating the variant tracks with the disease in a family [9].

- Functional Evidence: Experimental data from biochemical or cell-based assays showing a deleterious effect [11] [2].

- Population Data: Establishing that the variant is too common in healthy populations to be causative for a rare disease [11].

Q6: What is my role as a researcher in VUS reclassification? A: You are a critical part of the ecosystem. Your role is to:

- Share Data: Publish and submit your findings (e.g., functional data, case reports) to public databases like ClinVar [21] [9].

- Collaborate: Partner with clinical labs and research consortia to combine data on specific variants or genes [9].

- Investigate: Use research techniques to generate new biological evidence for VUS in your field of study.

Experimental Protocols & Workflows

Protocol 1: A Methodological Framework for VUS Reclassification Analysis

This protocol outlines the steps for systematically gathering evidence to support VUS reclassification, synthesizing guidelines from ACMG/AMP and recent laboratory practices [11] [22] [2].

1. Evidence Collection

- Population Frequency: Query large-scale population databases (e.g., gnomAD). An allele frequency higher than expected for the disorder, given its prevalence, inheritance mode, and penetrance, is strong evidence for a benign impact [11] [2].

- Computational Predictions: Run a suite of in-silico tools (e.g., SIFT, PolyPhen-2, CADD, REVEL) to predict the variant's effect on protein function. Use gene- or disease-specific calibrated tools where available [2].

- Literature and Database Mining: Conduct a comprehensive review of ClinVar, PubMed, and gene-specific databases. Look for functional studies, case reports, and existing classifications [11].

- Segregation Analysis: If possible, perform family studies to see if the variant co-segregates with the disease phenotype in affected relatives [9].

- Functional Studies: Design and execute in vitro or in vivo experiments (e.g., assays of protein function, splicing, or cell growth) to directly test the variant's biological impact [11].

2. Evidence Integration and Curation

- Weigh all collected evidence according to standardized guidelines like the ACMG/AMP criteria [21] [11].

- Resolve conflicting evidence. Strong evidence on one side can outweigh multiple weaker pieces of evidence on the other.

- Assign a final classification (Pathogenic, Likely Pathogenic, VUS, Likely Benign, Benign) based on the totality of evidence. For VUS, a subclass (High, Mid, Low) should be assigned based on the strength and direction of the available evidence [22].

3. Data Sharing and Reporting

- Submit the new classification and all supporting evidence to public databases such as ClinVar.

- If the VUS was found in a clinical test, report the new evidence to the diagnostic laboratory that issued the original report so they can issue a revised finding [20].
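Step 2 (Evidence Integration and Curation) can be illustrated with a points-based adaptation of the ACMG/AMP framework (Tavtigian et al., 2020), in which supporting, moderate, strong, and very strong evidence score 1, 2, 4, and 8 points, pathogenic evidence adds and benign evidence subtracts. This is a swapped-in technique for illustration, not the method prescribed by the cited sources. The VUS subclass cutoffs inside the uncertain band (4 to 5 High, 2 to 3 Mid, 0 to 1 Low) are hypothetical placeholders, since laboratories define their own, and stand-alone benign evidence (BA1) is not modelled.

```python
from typing import Iterable, Tuple

# Point value per evidence strength (points-based ACMG/AMP adaptation)
POINTS = {"very_strong": 8, "strong": 4, "moderate": 2, "supporting": 1}


def total_points(evidence: Iterable[Tuple[str, str]]) -> int:
    """Sum evidence as signed points: pathogenic adds, benign subtracts."""
    total = 0
    for direction, strength in evidence:
        if direction == "pathogenic":
            total += POINTS[strength]
        elif direction == "benign":
            total -= POINTS[strength]
        else:
            raise ValueError(f"unknown direction: {direction!r}")
    return total


def classify(points: int) -> str:
    """Map a point total to a class; the VUS subclass cutoffs are hypothetical."""
    if points >= 10:
        return "Pathogenic"
    if points >= 6:
        return "Likely Pathogenic"
    if points >= 0:
        # Uncertain band (0 to 5 points) split into illustrative subclasses
        if points >= 4:
            return "VUS-High"
        if points >= 2:
            return "VUS-Mid"
        return "VUS-Low"
    if points >= -6:
        return "Likely Benign"
    return "Benign"


# One moderate and two supporting pathogenic lines of evidence: 4 points
evidence = [("pathogenic", "moderate"), ("pathogenic", "supporting"),
            ("pathogenic", "supporting")]
print(classify(total_points(evidence)))  # VUS-High
```

Because the total is additive, the sketch also makes the "strong evidence can outweigh multiple weaker pieces" rule explicit: one strong benign line (-4) cancels four supporting pathogenic lines.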

VUS Investigation Workflow: This diagram outlines the systematic process for resolving a VUS, from initial evidence gathering to final data sharing.

Visualizing VUS Subclassification and Reclassification

The following diagram and table summarize the VUS subclassification system used by leading laboratories and the observed reclassification outcomes, based on a recent multi-laboratory study [22].

VUS Subclassification Spectrum: This continuum shows the relationship between variant classifications. Dashed arrows indicate the most common reclassification pathways for each VUS subclass.

| VUS Subclass | Typical Evidence Level | Likely Reclassification Direction | Notes for Researchers |
|---|---|---|---|
| VUS-High | Evidence leans pathogenic but is insufficient. | More likely to be upgraded to Pathogenic/Likely Pathogenic [22]. | Highest priority for functional validation. Strong candidate for causative variant. |
| VUS-Mid | Equivocal, conflicting, or absent evidence. | Can be reclassified in either direction [22]. | Target for gathering new evidence (e.g., more cases, functional data). |
| VUS-Low | Evidence leans benign but is insufficient. | More likely to be downgraded to Benign/Likely Benign [22]. | Low priority for further investigation. Often filtered out in research analyses. |

Table 1: VUS Subclassification and Reclassification Trends. This table summarizes the characteristics and expected outcomes for the three VUS subclasses.

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent Category | Function in VUS Resolution | Key Examples |
|---|---|---|
| Public Population Databases | Determine if a variant is too common in healthy populations to be disease-causing. | gnomAD [11] [2], 1000 Genomes Project [11] [2], dbSNP [2] |
| Clinical & Variant Databases | Provide curated information on variant pathogenicity and interpretations from other labs. | ClinVar [2], dbVar [2] |
| In-Silico Prediction Tools | Computationally predict the functional impact of a variant on the protein or gene. | SIFT [2], CADD (Combined Annotation Dependent Depletion) [2], GERP (Genomic Evolutionary Rate Profiling) [2] |
| Gene-Specific Curation Tools | Provide gene-specific calibration for variant interpretation, improving accuracy. | GAVIN (Gene-Aware Variant Interpretation) [2] |
| Functional Study Reagents | Experimentally test the biochemical consequences of a variant in a model system. | Site-Directed Mutagenesis Kits, cDNA clones, Antibodies for protein expression/localization, Cell-based reporter assays, CRISPR-Cas9 tools for isogenic cell line creation |
| Data Sharing Platforms | Disseminate new evidence to the community to aid in collective reclassification efforts. | ClinVar, PubMed, Gene-specific databases |

Advanced Methodologies for VUS Interpretation and Prioritization

Troubleshooting Common Variant Calling Pipeline Issues

How do I resolve low concordance with GIAB benchmark datasets?

Low concordance with Genome in a Bottle (GIAB) benchmarks often originates from suboptimal tool selection or parameter configuration. A systematic evaluation of variant callers reveals significant performance differences [23].

Table: Variant Caller Performance Benchmark on GIAB Datasets [23] [24]

| Variant Caller | SNV Precision (%) | SNV Recall (%) | Indel Precision (%) | Indel Recall (%) | Key Characteristics |
|---|---|---|---|---|---|
| DeepVariant | >99 | >99 | >96 | >96 | Highest overall performance and robustness; deep learning-based |
| DRAGEN Enrichment | >99 | >99 | >96 | >96 | High precision/recall; commercial solution |
| Strelka2 | >98 | >98 | >94 | >94 | Well-established; consistent performance |
| GATK HaplotypeCaller | >97 | >97 | >92 | >92 | Traditional gold standard; requires filtering |
| Clair3 | >98 | >98 | >95 | >95 | Excellent for long-read data; fast processing |
| FreeBayes | >95 | >95 | >90 | >90 | Sensitivity to indels; higher false positives |

Solution: Implement a multi-caller approach. Start with DeepVariant or Strelka2 for primary analysis, using GATK HaplotypeCaller for validation. For commercial environments, DRAGEN provides excellent performance with computational efficiency [23] [24].
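One simple way to combine callers is a vote over normalized variant keys. The sketch below is an illustration, not a prescribed pipeline step: it assumes each VCF was normalized beforehand (left-aligned indels, multi-allelic sites split, e.g. with `bcftools norm`) so that identical variants share the same (chrom, pos, ref, alt) key. Requiring agreement from two callers (`min_support=2`, a hypothetical default) favours precision; `min_support=1` gives the union, which favours sensitivity.

```python
from typing import Dict, Iterable, Set, Tuple

Variant = Tuple[str, int, str, str]  # (chrom, pos, ref, alt)


def vcf_sites(lines: Iterable[str], pass_only: bool = True) -> Set[Variant]:
    """Collect (chrom, pos, ref, alt) keys from VCF data lines."""
    sites: Set[Variant] = set()
    for line in lines:
        if line.startswith("#") or not line.strip():
            continue  # skip meta-information, header, and empty lines
        fields = line.rstrip("\n").split("\t")
        chrom, pos, ref, alts, filt = fields[0], int(fields[1]), fields[3], fields[4], fields[6]
        if pass_only and filt not in ("PASS", "."):
            continue  # drop records the caller itself filtered
        for alt in alts.split(","):
            if alt not in (".", "*"):  # ignore no-call and spanning-deletion alleles
                sites.add((chrom, pos, ref, alt))
    return sites


def consensus(callsets: Dict[str, Set[Variant]],
              min_support: int = 2) -> Dict[Variant, Set[str]]:
    """Keep variants reported by at least `min_support` callers, with their supporters."""
    support: Dict[Variant, Set[str]] = {}
    for caller, calls in callsets.items():
        for variant in calls:
            support.setdefault(variant, set()).add(caller)
    return {v: callers for v, callers in support.items() if len(callers) >= min_support}
```

Keeping the set of supporting callers per variant makes it easy to review discordant calls (for example, variants found only by DeepVariant) rather than silently discarding them.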

How can I address excessive false positive variant calls?

Excessive false positives frequently stem from inadequate read alignment, insufficient quality filtering, or PCR artifacts. Systematic benchmarking identifies several contributing factors [23] [25].

Troubleshooting Steps:

- Verify alignment quality: Check mapping quality scores (MAPQ) in BAM files. Remap with BWA-MEM if Bowtie2 was used, as Bowtie2 demonstrates significantly worse performance in variant calling benchmarks [23].

- Implement duplicate marking: Use Picard MarkDuplicates or Sambamba to mark (or optionally remove) PCR duplicates, which typically represent 5-15% of sequencing reads [25].

- Apply base quality score recalibration (BQSR): Utilize GATK's BQSR to correct for systematic errors in base quality scores [25].

- Implement rigorous filtering: For GATK calls, use Variant Quality Score Recalibration (VQSR) with population frequency data from gnomAD [25].

Table: Recommended Filtering Thresholds for Germline Variants [25]

| Filter Type | SNV Threshold | Indel Threshold | Rationale |
|---|---|---|---|
| Quality (QUAL) | >30 | >30 | Basic call quality threshold |
| Depth (DP) | >10 | >15 | Minimum read support |
| Mapping Quality (MQ) | >40 | >40 | Confidence in read placement |
| Strand Bias (FS) | <60 | <200 | Fisher's exact test for bias |
| Allele Balance (AB) | 0.25-0.75 | 0.25-0.75 | Heterozygous ratio expectation |
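The thresholds in the table can be read as a per-call checklist. The minimal sketch below applies them to an already-parsed call; in a real pipeline the same logic would usually be expressed as GATK VariantFiltration or `bcftools filter` expressions. The `Call` record, its field names, and the filter labels are hypothetical, and the allele-balance check is applied only to heterozygous genotypes, as the table's rationale implies.

```python
from dataclasses import dataclass
from typing import List

# Thresholds from the table: a call must exceed QUAL/DP/MQ and stay below FS
THRESHOLDS = {
    "snv": {"qual": 30, "dp": 10, "mq": 40, "fs": 60},
    "indel": {"qual": 30, "dp": 15, "mq": 40, "fs": 200},
}
HET = {"0/1", "0|1", "1|0"}


@dataclass
class Call:
    ref: str
    alt: str
    qual: float
    dp: int
    mq: float
    fs: float
    ref_reads: int  # allelic depth supporting REF
    alt_reads: int  # allelic depth supporting ALT
    genotype: str


def failed_filters(call: Call) -> List[str]:
    """Return the names of failed filters; an empty list means the call passes."""
    # Simplification: anything other than a 1-bp substitution uses indel thresholds
    kind = "snv" if len(call.ref) == 1 and len(call.alt) == 1 else "indel"
    t = THRESHOLDS[kind]
    failures = []
    if call.qual <= t["qual"]:
        failures.append("LowQual")
    if call.dp <= t["dp"]:
        failures.append("LowDepth")
    if call.mq <= t["mq"]:
        failures.append("LowMQ")
    if call.fs >= t["fs"]:
        failures.append("StrandBias")
    if call.genotype in HET:
        total = call.ref_reads + call.alt_reads
        balance = call.alt_reads / total if total else 0.0
        if not 0.25 <= balance <= 0.75:
            failures.append("AlleleBalance")
    return failures
```

Returning the list of failed filters, rather than a single boolean, mirrors how VCF FILTER fields record every reason a call was rejected.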

How do I optimize pipeline runtime and computational efficiency?

Bioinformatics pipelines can become computationally intensive, particularly with deep learning-based variant callers [23] [26].

Optimization Strategies:

- Tool Selection: DNAscope and Clair3 offer favorable speed-accuracy tradeoffs. DNAscope achieves significant reduction in computational cost compared to DeepVariant and GATK while maintaining high accuracy [26].

- Workflow Management: Implement Nextflow or Snakemake for parallel processing and resource optimization [27].

- Resource Allocation: For DeepVariant, utilize GPU acceleration where available. DNAscope efficiently leverages multi-threaded CPU processing without requiring GPU resources [26].

- Data Subsampling: For pipeline testing and development, use subsets (100K reads) rather than full datasets to accelerate iteration cycles [28].
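For the subsampling strategy, drawing a uniform random subset is usually preferable to taking the first N reads, which may not be representative of the whole file. Established tools such as `seqtk sample` already do this; the sketch below only illustrates the underlying one-pass technique (reservoir sampling, "Algorithm R") and assumes well-formed, unwrapped 4-line FASTQ records. The function names are hypothetical.

```python
import random
from typing import Iterable, Iterator, List, Tuple

Record = Tuple[str, str, str, str]  # header, sequence, separator, qualities


def fastq_records(lines: Iterable[str]) -> Iterator[Record]:
    """Yield 4-line FASTQ records (assumes well-formed, unwrapped records)."""
    it = iter(lines)
    for header in it:
        seq, sep, qual = [next(it).rstrip("\n") for _ in range(3)]
        yield header.rstrip("\n"), seq, sep, qual


def reservoir_sample(records: Iterable[Record], k: int, seed: int = 0) -> List[Record]:
    """Uniform random sample of k records in a single pass (Algorithm R)."""
    rng = random.Random(seed)  # fixed seed keeps test subsets reproducible
    sample: List[Record] = []
    for i, record in enumerate(records):
        if i < k:
            sample.append(record)  # fill the reservoir first
        else:
            j = rng.randint(0, i)  # inclusive on both ends
            if j < k:
                sample[j] = record  # replace with probability k / (i + 1)
    return sample
```

Usage (hypothetical file name): `with open("sample_R1.fastq") as fh: subset = reservoir_sample(fastq_records(fh), 100_000)`. For paired-end data, sample zipped (R1, R2) record pairs so that mates stay together.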

Filtering Strategy Troubleshooting

How can I efficiently prioritize candidate variants from thousands of calls?

Whole exome sequencing typically identifies 20,000-30,000 variants per sample, making prioritization essential [29]. A stratified filtering approach dramatically improves efficiency.

Variant Prioritization Workflow for Efficient Candidate Identification

Implementation Protocol:

Initial Quality Filtering [25]:

- Apply quality thresholds: QUAL > 30, DP > 10, MQ > 40

- Remove variants failing strand bias filters

Population Frequency Filtering:

- Filter against gnomAD, 1000 Genomes, and population-specific databases

- Exclude variants whose allele frequency exceeds the maximum expected for the disorder, given its prevalence, inheritance mode, and penetrance

Inheritance Pattern Application [14]:

- For consanguineous populations: prioritize homozygous variants

- For dominant disorders: focus on heterozygous variants

- For trio analysis: leverage segregation patterns

Variant Impact Assessment:

- Prioritize loss-of-function variants (stop-gain, frameshift, splice-site)

- Consider missense variants with high pathogenicity predictions

Phenotype Integration:

- Annotate with Human Phenotype Ontology (HPO) terms

- Match against known gene-disease associations (OMIM, ClinVar)

This approach can narrow candidates to 5-15 variants per case while maintaining high detection rates, reducing analysis time from 5 hours to approximately 45 minutes [14].
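The stratified funnel above can be sketched as an ordered list of predicates that records how many variants survive each tier. This is an illustration under assumptions, not the cited study's implementation: each variant is a dict already annotated (e.g., by VEP) with hypothetical keys, consequence names are Sequence Ontology terms as used by VEP, `missense_score` and its 0.7 cutoff are placeholders for a predictor such as REVEL, and the recessive model here considers homozygous variants only (compound heterozygotes need separate handling).

```python
from typing import Any, Callable, Dict, Iterable, List, Set, Tuple

Variant = Dict[str, Any]
# Sequence Ontology consequence terms treated as loss-of-function
LOF_TERMS = {"stop_gained", "frameshift_variant",
             "splice_donor_variant", "splice_acceptor_variant"}


def prioritize(variants: Iterable[Variant], max_af: float, model: str,
               phenotype_genes: Set[str], missense_cutoff: float = 0.7
               ) -> Tuple[List[Variant], Dict[str, int]]:
    """Apply the filtering tiers in order; return survivors and counts per tier."""
    wanted_gt = "1/1" if model == "recessive" else "0/1"  # homozygous-only for recessive
    tiers: List[Tuple[str, Callable[[Variant], bool]]] = [
        ("quality", lambda v: v["qual"] > 30 and v["dp"] > 10 and v["mq"] > 40),
        # A variant absent from gnomAD is treated as AF 0
        ("frequency", lambda v: v.get("gnomad_af", 0.0) <= max_af),
        ("inheritance", lambda v: v["genotype"] == wanted_gt),
        ("impact", lambda v: v["consequence"] in LOF_TERMS or (
            v["consequence"] == "missense_variant"
            and v.get("missense_score", 0.0) >= missense_cutoff)),
        # Genes matched to the patient's HPO terms (see the phenotype step)
        ("phenotype", lambda v: v["gene"] in phenotype_genes),
    ]
    remaining = list(variants)
    counts: Dict[str, int] = {}
    for name, keep in tiers:
        remaining = [v for v in remaining if keep(v)]
        counts[name] = len(remaining)  # survivors after this tier
    return remaining, counts
```

Reporting the survivor count after each tier makes it easy to see which step removed a known causal variant when the pipeline is benchmarked on solved cases.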

How should I handle Variants of Uncertain Significance (VUS) in clinical reporting?

VUS classification presents significant challenges in clinical interpretation and communication [30].

VUS Management Framework:

Evidence Collection:

- Gather segregation data within families

- Search for same variant in unrelated affected individuals

- Review functional studies in literature

- Assess conservation and computational predictions

Internal VUS Subclassification [30]:

- "VUS-high suspicion": Multiple supporting evidence lines

- "VUS-low suspicion": Minimal supporting evidence

- Note: These subclasses are for internal prioritization only

Reporting and Communication:

- Clearly state that VUS should not be used for predictive testing

- Explain evidence supporting and against pathogenicity

- Outline plan for potential reclassification

- Discuss implications for family members

Critical Consideration: VUS results require careful pre-test counseling and post-test communication to manage patient expectations and prevent clinical decision-making based on uncertain information [30].

Pipeline Implementation FAQs

What are the essential components of a robust variant calling pipeline?

Table: Research Reagent Solutions for Variant Calling Pipelines [28] [25]

| Component | Recommended Tools | Function | Key Considerations |
|---|---|---|---|
| Alignment | BWA-MEM, Minimap2 | Map reads to reference genome | BWA-MEM outperforms Bowtie2 for variant calling [23] |
| Duplicate Marking | Picard, Sambamba | Identify PCR duplicates | Essential for removing technical artifacts |
| Variant Calling | DeepVariant, GATK, Strelka2 | Detect SNVs/indels | Multi-caller improves sensitivity [23] |
| Variant Annotation | VEP, SnpEff | Predict functional impact | Critical for prioritization |
| Quality Control | FastQC, MultiQC | Assess data quality | Identify sequencing issues early |
| Workflow Management | Nextflow, Snakemake | Pipeline orchestration | Ensures reproducibility |

How do I validate my variant calling pipeline performance?

Benchmarking Protocol:

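Utilize GIAB Reference Materials:

- Sequence a GIAB reference sample (e.g., HG001/NA12878 or HG002) with the same capture kit and pipeline as your study samples.

- Compare your calls with the GIAB benchmark VCF, restricting the comparison to the benchmark's high-confidence regions intersected with your capture targets.

- Count true positives (TP), false positives (FP), and false negatives (FN) with a haplotype-aware comparison tool such as hap.py, which matches equivalent variants written in different representations.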

Performance Metric Calculation:

- Precision: TP/(TP+FP) - the fraction of reported calls that are true; low precision means many false positives

- Recall: TP/(TP+FN) - measures sensitivity

- F-score: Harmonic mean of precision and recall

Stratified Performance Analysis:

- Evaluate performance in different genomic contexts (high/low GC, mappability)

- Assess variant type-specific accuracy (SNVs vs. indels)

- Check performance in clinically relevant genes

Ongoing Monitoring:

- Include control samples in each sequencing batch

- Track performance metrics over time

- Investigate significant deviations immediately
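The metric calculation and stratified analysis can be sketched as follows. This is a simplification for illustration: it compares normalized variant keys by exact match, whereas dedicated tools such as hap.py perform haplotype-aware matching. The function names and the stratum labels (e.g., "snv", "indel") are hypothetical.

```python
from typing import Dict, Hashable, Set


def compare(truth: Set[Hashable], query: Set[Hashable]) -> Dict[str, int]:
    """Exact-match comparison of normalized variant keys inside benchmark regions."""
    return {"tp": len(truth & query), "fp": len(query - truth), "fn": len(truth - query)}


def metrics(tp: int, fp: int, fn: int) -> Dict[str, float]:
    """Precision, recall, and F-score (harmonic mean of precision and recall)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def stratified(truth_by_stratum: Dict[str, Set[Hashable]],
               query_by_stratum: Dict[str, Set[Hashable]]) -> Dict[str, Dict[str, float]]:
    """Per-stratum metrics (e.g., SNVs vs. indels, GC or mappability bins)."""
    strata = truth_by_stratum.keys() | query_by_stratum.keys()  # keep FP-only strata too
    return {s: metrics(**compare(truth_by_stratum.get(s, set()),
                                 query_by_stratum.get(s, set())))
            for s in sorted(strata)}
```

For example, 90 true positives with 10 false positives and 10 false negatives gives precision, recall, and F-score of 0.9 each; tracking these per batch on the control sample supports the ongoing-monitoring step.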

What strategies improve detection of variants in challenging genomic regions?

Technical Solutions:

Leverage AI-Based Callers: DeepVariant and Clair3 demonstrate improved performance in repetitive regions and complex variant types due to their pattern recognition capabilities [26].

Utilize Multi-Platform Data: Integrate short-read and long-read sequencing where possible. Tools like Medaka specialize in Oxford Nanopore data, while DeepVariant supports multiple sequencing technologies [26].

Implement Region-Aware Filtering: Adjust filtering thresholds for known problematic regions (e.g., reduce strand bias thresholds in GC-rich regions).

Leverage Family Information: For trio sequencing, tools like DeepTrio incorporate familial relationships to improve variant calling accuracy, particularly for de novo mutations and in challenging regions [26].

Advanced Filtering and Interpretation FAQs

How can I optimize my filtering strategy for specific populations?

Population-specific considerations significantly impact filtering efficacy [14].

Consanguineous Population Protocol [14]:

Primary Filter - Homozygous Variants:

- Focus on variants with high homozygous alternate allele frequency

- Prioritize variants in autozygous regions (runs of homozygosity)

- This approach identifies ~82% of disease-causing variants in consanguineous populations

Secondary Filter - Compound Heterozygotes:

- Identify genes with multiple rare heterozygous variants

- Verify variants are in trans (phasing)

Population Frequency Database Selection:

- Use population-specific frequency data when available

- Adjust allele frequency thresholds based on disease prevalence
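The homozygous and compound-heterozygous filters can be sketched with trio genotypes. In this illustration (an assumption, not the cited protocol's code), phase is inferred by transmission: a heterozygous child variant carried only by the father is paternal, one carried only by the mother is maternal, and a gene with at least one of each yields in-trans candidate pairs. Variants that cannot be phased this way (de novo, or both parents heterozygous) are left out and would need read-based phasing. Dict keys, the run-of-homozygosity (ROH) segment format, and the function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Segment = Tuple[str, int, int]  # (chrom, start, end), 1-based inclusive
HAS_ALT = {"0/1", "1/1"}


def homozygous_in_roh(variants: List[dict], roh: List[Segment]) -> List[dict]:
    """Homozygous-alternate variants in the proband lying inside a run of homozygosity."""
    return [v for v in variants
            if v["child"] == "1/1"
            and any(c == v["chrom"] and s <= v["pos"] <= e for c, s, e in roh)]


def compound_het_candidates(variants: List[dict]) -> Dict[str, List[Tuple[str, str]]]:
    """Pairs of heterozygous variants in one gene that are in trans by transmission."""
    origin: Dict[str, Dict[str, List[str]]] = defaultdict(
        lambda: {"paternal": [], "maternal": []})
    for v in variants:
        if v["child"] != "0/1":
            continue
        if v["father"] in HAS_ALT and v["mother"] == "0/0":
            origin[v["gene"]]["paternal"].append(v["id"])
        elif v["mother"] in HAS_ALT and v["father"] == "0/0":
            origin[v["gene"]]["maternal"].append(v["id"])
        # Other patterns (de novo, both parents carriers) cannot be phased this way
    return {gene: [(p, m) for p in alleles["paternal"] for m in alleles["maternal"]]
            for gene, alleles in origin.items()
            if alleles["paternal"] and alleles["maternal"]}
```

Two variants in the same gene that were both inherited from one parent are in cis and are correctly excluded, which is the error this phasing step is meant to prevent.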

Outbred Population Protocol:

Broad Inheritance Model Consideration:

- Simultaneously evaluate autosomal dominant, recessive, and X-linked models

- Prioritize de novo variants for sporadic cases

Burden Testing:

- For cohort analysis, implement gene-based burden testing

- Identify genes enriched for rare variants in affected individuals
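In its simplest form, a gene-based burden test collapses all qualifying rare variants in a gene into carrier status and compares carrier counts between cases and controls. The sketch below uses a two-sided Fisher's exact test written with the standard library; it is a minimal illustration that ignores covariates and ancestry, which dedicated methods (e.g., the SKAT family) handle. The function names are hypothetical, and the exome-wide threshold is simply 0.05 divided by roughly 20,000 genes.

```python
from math import comb


def fisher_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x: int) -> float:
        # Hypergeometric probability of x in the top-left cell with fixed margins
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    observed = prob(a)
    low, high = max(0, col1 - row2), min(row1, col1)
    # Sum all tables no more probable than the observed one;
    # the small tolerance guards against floating-point ties
    p = sum(prob(x) for x in range(low, high + 1) if prob(x) <= observed * (1 + 1e-7))
    return min(1.0, p)


def gene_burden(case_carriers: int, n_cases: int,
                control_carriers: int, n_controls: int) -> float:
    """Carrier-based burden test for one gene (qualifying rare variants collapsed)."""
    return fisher_two_sided(case_carriers, n_cases - case_carriers,
                            control_carriers, n_controls - control_carriers)


# Bonferroni threshold for testing ~20,000 genes
EXOME_WIDE_ALPHA = 0.05 / 20_000
```

A gene is only a convincing burden signal if its p-value falls below a threshold of this order, because every gene in the exome is tested.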

What are the best practices for clinical reporting of filtered variants?

Comprehensive Reporting Framework [7]:

Variant Classification:

- Follow ACMG/AMP guidelines for pathogenicity assessment

- Document evidence supporting classification

- Clearly distinguish between definitive and uncertain findings

Clinical Correlation:

- Match variant to patient phenotype using HPO terms

- Assess genotype-phenotype specificity

- Consider differential diagnoses

Reporting Structure:

- Primary findings: Variants explaining the indication for testing

- Secondary findings: Medically actionable incidental findings (if consented)

- Clearly separate definitive results from VUS

Family Communication Guidance:

- Provide specific recommendations for family member testing

- Include implications for reproductive decision-making

- Offer resources for genetic counseling

This troubleshooting guide provides a foundation for optimizing variant calling and filtering strategies in WES research. Regular benchmarking against gold standard datasets and continuous refinement based on emerging tools and technologies will ensure ongoing pipeline improvement and clinical reliability.

Within Whole Exome Sequencing (WES) research, a significant proportion of analyzed genetic variants are classified as Variants of Unknown Significance (VUS) [4]. A VUS is a change in a gene whose effect on gene function and link to disease are not yet known [18]. Interpreting these VUS is one of the major unsolved challenges in clinical WES, as it is difficult to determine whether they are the cause of a patient's symptoms [4]. A powerful strategy to address this challenge is to incorporate detailed phenotypic data: the observable clinical symptoms and characteristics of a patient.

The Human Phenotype Ontology (HPO) provides a standardized, structured vocabulary for describing human phenotypic abnormalities [31]. By annotating diseases and patient symptoms with HPO terms, researchers can computationally analyze phenotypic similarities. For a VUS discovered via WES, demonstrating that the patient's HPO-annotated symptoms show significant similarity to the symptoms of other patients or known diseases linked to the same gene provides crucial, independent evidence to support the variant's potential pathogenicity [32]. This guide provides technical support for implementing and troubleshooting HPO-based symptom similarity scoring in a research setting.

FAQs: Core Concepts for Researchers

Q1: What is the HPO and how does its structure enable similarity calculation?

The HPO is a structured, controlled vocabulary of over 12,000 terms representing individual phenotypic anomalies [31] [33]. Its terms are organized as a directed acyclic graph, where each term can have multiple parent terms. This "is a" relationship creates a hierarchy from general to specific terms. For example, the term "Atrial septal defect" is a child of the more general term "Abnormality of the cardiac septa" [31]. This structure allows for flexible searches and similarity measurements based on shared ancestry between terms.

Q2: How can HPO-based semantic similarity help in prioritizing VUS from a WES analysis?

When a WES analysis yields multiple VUS in different genes, HPO-based similarity provides a data-driven method to prioritize them. The phenotypic profile of the patient (their symptoms as HPO terms) can be compared to the known phenotypic profile associated with each gene harboring a VUS. The gene whose associated phenotypes are most semantically similar to the patient's profile is considered a stronger candidate [32] [33]. This method uses phenotypic data to corroborate genetic findings, adding evidence beyond population frequency and in-silico prediction scores.

Q3: What are the common sources of error when mapping patient symptoms to HPO terms?

Incorrect phenotypic similarity scores often stem from issues during the initial annotation phase:

- Imprecision: Using terms that are too general (e.g., "Abnormality of the musculoskeletal system") instead of the most specific term available (e.g., "Arachnodactyly") [33].

- Noise: Including HPO terms that describe features not present in the patient, which can lead to incorrect disease matches [33].

- Incomplete Annotation: Failing to capture the full spectrum of the patient's phenotype, which can reduce the strength of similarity to the correct genetic disease.

Q4: Our analysis yielded a high similarity score to a disease, but the gene is not listed as associated. How should we proceed?

This can indicate a novel gene-disease association or a shared biological pathway. First, verify the accuracy and completeness of your patient's HPO annotations. If confirmed, this finding can be followed up by:

- Investigating the biological network (e.g., protein-protein interactions, shared pathways) between your candidate gene and the genes known to cause the matched disease [31].

- Searching for additional patients with similar phenotypes and variants in the same gene through collaborative platforms or the literature.

- Undertaking functional studies to validate the gene's biological role in the disease context.

Troubleshooting Common Experimental Issues

| Problem | Potential Cause | Solution |
|---|---|---|
| Low discrimination between candidate diseases. | Using overly broad HPO terms that are annotated to many diseases. | Re-annotate the patient using the most specific HPO terms possible. Leverage the HPO hierarchy to ensure you are not using high-level parent terms. |
| Computationally intensive similarity calculations. | Comparing large patient phenotype sets against thousands of diseases using a complex method. | For initial screening, use a faster method like Resnik. Consider pre-filtering the disease database based on a few key HPO terms before running the full similarity analysis. |
| Inconsistent results when using different similarity measures. | Different algorithms (e.g., Lin, Jiang-Conrath) have different theoretical foundations and sensitivities. | This is expected. Use multiple established measures (Resnik, Lin) and the RelativeBestPair method to create a consensus ranking of candidate genes/diseases [33]. |
| The true underlying disease is not ranked highly. | The patient's phenotype may be noisy, imprecise, or incomplete. | Re-evaluate the patient's clinical data for missing or inaccurately annotated features. Consider simulating noise/imprecision to test your method's robustness [33]. |

Detailed Experimental Protocols

Protocol: Calculating Patient-to-Disease Semantic Similarity

This protocol outlines the steps to quantify the similarity between a patient's phenotypic profile and a database of known genetic disorders using the HPO.

- Objective: To generate a ranked list of candidate genes or diseases based on phenotypic similarity to aid in the interpretation of VUS from WES.

- Principle: The method calculates a semantic similarity score by comparing the information content of HPO terms shared between the patient and known disease profiles [31] [33].

Workflow Diagram: HPO-Based Similarity Scoring for VUS Prioritization

Step-by-Step Methodology:

Phenotype Annotation:

- Input: Detailed clinical description of the patient.

- Action: Systematically map each clinical feature to the most specific applicable HPO term. Tools like PhenoTips can assist in this process [33].

- Output: A set of HPO terms, P = {p₁, p₂, ..., pₙ}, representing the patient's phenotype.

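Data Preparation:

- Load the HPO ontology and a phenotype-annotated disease database (e.g., the OMIM annotations distributed with HPO).

- Propagate each disease's annotations to all ancestor terms, so that a disease annotated with a specific term also counts as annotated with every more general term above it.

Calculate Information Content (IC):

- For each term $t$, compute $\mathrm{IC}(t) = -\log p(t)$, where $p(t)$ is the fraction of diseases annotated with $t$ or any of its descendants. Specific, rarely used terms receive a high IC; the root term receives an IC of 0.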

Select a Similarity Measure and Calculate Scores:

- Choose a semantic similarity method. A robust and widely used method is the Resnik measure [31] [33].

- For a patient profile $P$ and a disease profile $T_D$, the similarity is calculated by comparing all terms between the two sets. One common approach is to take the average of the best-matching term pairs [31]:

$$\mathrm{sim}(P \rightarrow T_D) = \frac{1}{|P|} \sum_{p \in P} \max_{t \in T_D} \mathrm{sim}_{\mathrm{Resnik}}(p, t)$$

- where $\mathrm{sim}_{\mathrm{Resnik}}(p, t) = \mathrm{IC}(\mathrm{MICA}(p, t))$, and MICA is the Most Informative Common Ancestor of terms $p$ and $t$.

Rank and Interpret Results:

- Rank all diseases in the database based on their final similarity score to the patient.

- Cross-reference the top-ranking diseases with the list of genes harboring VUS from the WES analysis. A VUS in a gene associated with a high-ranking disease becomes a prime candidate for further investigation.
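The protocol can be traced end to end on a toy example. The sketch below is illustrative only: the ontology and disease annotations are made-up labels rather than real HPO IDs (in practice they would be loaded from the HPO release files, e.g. `hp.obo` and `phenotype.hpoa`, or handled by an existing package such as HPOsim), and the function names are hypothetical. It propagates annotations, computes IC, scores each disease with the best-match average of Resnik similarities, and ranks the results.

```python
import math
from typing import Dict, Iterable, List, Set, Tuple

Ontology = Dict[str, List[str]]  # term -> parent terms ("is a" edges)


def ancestors(term: str, parents: Ontology) -> Set[str]:
    """The term itself plus every ancestor reachable through "is a" edges."""
    seen, stack = {term}, [term]
    while stack:
        for parent in parents.get(stack.pop(), []):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def information_content(parents: Ontology, diseases: Dict[str, Set[str]]) -> Dict[str, float]:
    """IC(t) = log(N / count(t)), i.e. -log p(t) with annotations propagated upward."""
    counts: Dict[str, int] = {}
    for terms in diseases.values():
        # Each disease counts once for every term on the paths to the root
        for t in set().union(*(ancestors(x, parents) for x in terms)):
            counts[t] = counts.get(t, 0) + 1
    return {t: math.log(len(diseases) / c) for t, c in counts.items()}


def resnik(p: str, t: str, parents: Ontology, ic: Dict[str, float]) -> float:
    """IC of the most informative common ancestor (MICA) of p and t."""
    common = ancestors(p, parents) & ancestors(t, parents)
    return max((ic.get(a, 0.0) for a in common), default=0.0)


def patient_to_disease(patient: Iterable[str], disease: Set[str],
                       parents: Ontology, ic: Dict[str, float]) -> float:
    """sim(P -> T_D): mean over patient terms of the best Resnik match in T_D."""
    patient = list(patient)
    return sum(max(resnik(p, t, parents, ic) for t in disease) for p in patient) / len(patient)


def rank_diseases(patient: Iterable[str], diseases: Dict[str, Set[str]],
                  parents: Ontology, ic: Dict[str, float]) -> List[Tuple[str, float]]:
    patient = list(patient)
    scores = {d: patient_to_disease(patient, terms, parents, ic) for d, terms in diseases.items()}
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))  # ties broken by name


# Toy ontology and annotations (made-up labels, not real HPO IDs)
parents = {"Cardiac": ["All"], "ASD": ["Cardiac"], "VSD": ["Cardiac"],
           "Skeletal": ["All"], "Arachnodactyly": ["Skeletal"]}
diseases = {"D1": {"ASD"}, "D2": {"VSD"}, "D3": {"Arachnodactyly"},
            "D4": {"ASD", "Arachnodactyly"}}
ic = information_content(parents, diseases)
print(rank_diseases({"ASD"}, diseases, parents, ic))
```

A patient annotated only with "ASD" ranks D1 and D4 first, D2 next (it shares the more general "Cardiac" ancestor), and D3 last (only the root is shared, with an IC of 0).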

Protocol: Implementing the RelativeBestPair Method for Robust Matching

For cases involving noisy or imprecise phenotypic data, the RelativeBestPair method has been shown to outperform traditional measures [33].

- Objective: To reliably identify the correct underlying disease even when patient HPO annotations contain inaccuracies.

- Principle: This method assigns a score to a disease based on the sum of the inverse frequencies of the patient's terms that annotate it, with a cap to prevent single rare terms from dominating [33].

Methodology:

Precompute Term-Disease Scores:

- For each HPO term $t$, let $N_t$ be the number of diseases annotated by $t$ or any of its descendants.

- The score of a disease $D$ given term $t$ is defined as [33]:

$$S(D \mid t) = \begin{cases} 1/N_t & \text{if } D \text{ is annotated by } t \text{ (or a descendant of } t\text{)} \\ 0 & \text{otherwise} \end{cases}$$

Calculate Aggregate Similarity Score:

- For a patient with a set of query terms $\{t_1, t_2, \ldots, t_n\}$, the similarity score for a disease $D_k$ is:

$$\mathrm{Sim}(D_k \mid t_1, t_2, \ldots, t_n) = \sum_{i=1}^{n} \min\bigl(\alpha, S(D_k \mid t_i)\bigr)$$

- The threshold $\alpha$ (typically set to 0.01) limits the influence of any single, extremely rare term [33].


Rank Diseases:

- Diseases are ranked by their $\mathrm{Sim}(D_k \mid t_1, \ldots, t_n)$ score in descending order.
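The RelativeBestPair scoring can be written directly from the definitions of $N_t$, $S(D \mid t)$, and the capped sum. The sketch below is an illustration on the same kind of toy data (made-up term labels, hypothetical function names), not the reference implementation from [33]. Note one consequence of the cap: with $\alpha = 0.01$, any query term annotated to fewer than 100 diseases contributes exactly $\alpha$, so the score then largely counts matched specific terms; the toy run therefore also uses $\alpha = 1$ to show the uncapped $1/N_t$ weights.

```python
from typing import Dict, Iterable, List, Set, Tuple

Ontology = Dict[str, List[str]]  # term -> parent terms ("is a" edges)


def ancestors(term: str, parents: Ontology) -> Set[str]:
    """The term itself plus every ancestor reachable through "is a" edges."""
    seen, stack = {term}, [term]
    while stack:
        for parent in parents.get(stack.pop(), []):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def relative_best_pair(patient: Iterable[str], diseases: Dict[str, Set[str]],
                       parents: Ontology, alpha: float = 0.01) -> List[Tuple[str, float]]:
    """Rank diseases by sum_i min(alpha, S(D | t_i)), with S(D | t) = 1 / N_t."""
    # D is "annotated by t" if t is one of its terms or an ancestor of one
    propagated = {d: set().union(*(ancestors(t, parents) for t in terms))
                  for d, terms in diseases.items()}
    # N_t: number of diseases annotated by t or any of its descendants
    n_t: Dict[str, int] = {}
    for annotated in propagated.values():
        for t in annotated:
            n_t[t] = n_t.get(t, 0) + 1
    patient = list(patient)
    scores = {d: sum((min(alpha, 1.0 / n_t[t]) for t in patient if t in annotated), 0.0)
              for d, annotated in propagated.items()}
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))  # ties broken by name


# Toy ontology and annotations (made-up labels, not real HPO IDs)
parents = {"Cardiac": ["All"], "ASD": ["Cardiac"], "VSD": ["Cardiac"],
           "Skeletal": ["All"], "Arachnodactyly": ["Skeletal"]}
diseases = {"D1": {"ASD"}, "D2": {"VSD"}, "D3": {"Arachnodactyly"},
            "D4": {"ASD", "Arachnodactyly"}}
print(relative_best_pair({"ASD", "Arachnodactyly"}, diseases, parents, alpha=1.0))
```

For a patient with both "ASD" and "Arachnodactyly", D4 (which explains both features) ranks first under either value of $\alpha$.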

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in HPO Analysis | Key Features & Notes |
|---|---|---|
| HPO Ontology File | Core knowledge base of phenotypic terms and their relationships. | Available from the HPO website. Requires periodic updating to the latest version. |
| Phenotype-Annotated Disease Database (e.g., OMIM, Orphanet) | Provides the ground truth for training and testing similarity measures. | OMIM annotations are included with the HPO download [31]. |
| Semantic Similarity Software (e.g., HPOsim R package) | Provides pre-implemented algorithms (Resnik, Lin, etc.) for calculating similarity. | Saves development time and ensures methodological correctness [33]. |
| Phenotyping Tools (e.g., PhenoTips) | Facilitates accurate and consistent initial mapping of clinical notes to HPO terms. | Reduces annotation errors and time spent on manual curation [33]. |
| Custom Scripts (Python/R) | For implementing custom analysis pipelines, such as the RelativeBestPair method. | Essential for flexibility and integrating phenotypic analysis with WES variant data. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of integrating LOEUF scores into my WES variant filtering pipeline?

LOEUF (Loss-of-function Observed/Expected Upper bound Fraction) scores quantify a gene's intolerance to loss-of-function (LoF) mutations. Genes with low LOEUF scores (<0.35) are highly constrained and likely haploinsufficient, making LoF variants within them strong candidates for pathogenicity. Integrating this metric helps prioritize variants in genes under strong purifying selection, significantly improving the diagnostic yield of WES analysis [34] [35].
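Outside of a VEP plugin, the same LOEUF prioritization can be sketched in a few lines. The column names below are assumptions: `gene` and `oe_lof_upper` match the gnomAD v2.1.1 by-gene constraint table as far as we know, but other gnomAD releases name the columns differently, so check the header of the file you download. Consequence names are Sequence Ontology terms; the function names and variant dict keys are hypothetical.

```python
import csv
from typing import Dict, Iterable, List

LOF_TERMS = {"stop_gained", "frameshift_variant",
             "splice_donor_variant", "splice_acceptor_variant"}


def load_loeuf(lines: Iterable[str], gene_col: str = "gene",
               loeuf_col: str = "oe_lof_upper") -> Dict[str, float]:
    """Read gene -> LOEUF from a tab-separated constraint table (column names assumed)."""
    loeuf: Dict[str, float] = {}
    for row in csv.DictReader(lines, delimiter="\t"):
        value = row.get(loeuf_col) or ""
        if value in ("", "NA"):
            continue  # genes without a constraint estimate are skipped
        gene = row[gene_col]
        # If a gene is listed more than once, keep the lowest (most constrained) value
        loeuf[gene] = min(float(value), loeuf.get(gene, float("inf")))
    return loeuf


def constrained_lof(variants: Iterable[dict], loeuf: Dict[str, float],
                    cutoff: float = 0.35) -> List[dict]:
    """LoF variants in genes with LOEUF below the cutoff, most constrained gene first."""
    hits = [v for v in variants
            if v["consequence"] in LOF_TERMS
            and loeuf.get(v["gene"], float("inf")) < cutoff]
    return sorted(hits, key=lambda v: loeuf[v["gene"]])
```

Genes missing from the constraint table are never flagged here, which is a deliberate conservative choice; such genes may still deserve manual review.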

FAQ 2: How can I functionally validate a cryptic splice variant identified by SpliceAI?

A multi-step approach is recommended for validating putative splice-altering variants:

- In silico confirmation: Use complementary tools like Pangolin or MaxEntScan to support the SpliceAI prediction.

- Experimental validation: Perform RNA sequencing (RNA-seq) on patient-derived tissue or cell lines. This allows you to directly observe aberrant splicing, such as exon skipping, intron retention, or the creation of cryptic splice sites [34] [36].

- Visualization: Use tools like the Integrative Genomics Viewer (IGV) to visualize the aberrant transcripts from RNA-seq data compared to controls [34].

FAQ 3: My WES analysis identified a VUS with a high REVEL score but in a gene with a high (tolerant) LOEUF score. How should I proceed?

A high REVEL score indicates that the missense variant is likely deleterious. However, a high LOEUF score suggests that the gene tolerates heterozygous loss-of-function variants, meaning it is unlikely to be haploinsufficient. In this case:

- The variant might still be pathogenic through a gain-of-function or dominant-negative mechanism, which LOEUF does not directly assess.

- Keep the variant as a candidate, but weight the REVEL score and the clinical phenotype match more heavily than the gene-level LOEUF score.

- Investigate gene-specific literature for known mechanisms of disease and seek additional functional evidence to support the variant's role [37] [35].
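The reasoning above can be written down as a small triage rule. This is a sketch under stated assumptions: the REVEL cutoff of 0.75 is illustrative rather than a guideline value, the 0.7 LOEUF boundary follows this article's score table, and `triage_missense` is a hypothetical helper, not part of any tool.

```python
from typing import List, Tuple

# Illustrative cutoffs (assumptions, not guideline values).
REVEL_HIGH = 0.75
LOEUF_TOLERANT = 0.7  # "tolerant" boundary used in this article's score table


def triage_missense(revel: float, loeuf: float, phenotype_match: bool) -> Tuple[bool, List[str]]:
    """Return (keep_as_candidate, follow-up actions) for a missense VUS."""
    actions: List[str] = []
    keep = revel >= REVEL_HIGH
    if not keep:
        actions.append("REVEL below illustrative cutoff: rely on other evidence")
        return keep, actions
    if loeuf > LOEUF_TOLERANT:
        # LOEUF measures LoF intolerance only, so a tolerant gene does not exclude
        # gain-of-function or dominant-negative effects.
        actions.append("review literature for gain-of-function or dominant-negative mechanisms")
    if phenotype_match:
        actions.append("phenotype matches gene: weight this heavily")
    else:
        actions.append("no phenotype match: seek functional evidence before upgrading")
    return keep, actions


keep, todo = triage_missense(revel=0.91, loeuf=1.2, phenotype_match=True)
print(keep, todo)
```

The key design point is that a tolerant LOEUF never removes a high-REVEL variant; it only changes which follow-up evidence to look for.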

FAQ 4: What are the common pitfalls when running SpliceAI, and how can I avoid them?

Common issues and their solutions include:

- Pitfall: Using an old version of gene annotations (Gencode), leading to inaccurate scores.

- Solution: Always use the most recent Gencode version (e.g., v47) compatible with your genome build to ensure accurate transcript modeling [38].

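- Pitfall: Misinterpreting scores for insertions (ins) with a position of 0 bp.

- Solution: Be cautious, as these scores can be difficult to interpret; consult the SpliceAI Lookup site for warnings on specific variants [38].

- Pitfall: Over-relying on the default delta score threshold of 0.2.

- Solution: Treat 0.2 as a high-recall screening cutoff rather than a decision threshold. The SpliceAI developers also characterize 0.5 (recommended) and 0.8 (high-precision) cutoffs, so interpret scores between 0.2 and 0.5 only together with additional evidence such as complementary predictors or RNA-seq.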
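A minimal Python sketch of handling these pitfalls in a script: it parses the `SpliceAI=` INFO value in the per-allele format documented in the SpliceAI README (`ALLELE|SYMBOL|DS_AG|DS_AL|DS_DG|DS_DL|DP_AG|DP_AL|DP_DG|DP_DL`), takes the maximum of the four delta scores, and maps it to tiers instead of a single 0.2 pass/fail. The tier labels follow Table 1 of this article; the function names are hypothetical and the gene symbols in the example are placeholders.

```python
from typing import Dict, List

# Per-allele field order of the INFO annotation written by SpliceAI.
FIELDS = ["ALLELE", "SYMBOL", "DS_AG", "DS_AL", "DS_DG", "DS_DL",
          "DP_AG", "DP_AL", "DP_DG", "DP_DL"]
DELTA_KEYS = ("DS_AG", "DS_AL", "DS_DG", "DS_DL")


def parse_spliceai(info_value: str) -> List[Dict[str, object]]:
    """Parse a SpliceAI INFO value (comma-separated entries) and add DS_MAX."""
    records: List[Dict[str, object]] = []
    for entry in info_value.split(","):
        parts = entry.split("|")
        if len(parts) != len(FIELDS):
            continue  # skip malformed entries
        rec: Dict[str, object] = dict(zip(FIELDS, parts))
        try:
            rec["DS_MAX"] = max(float(parts[FIELDS.index(k)]) for k in DELTA_KEYS)
        except ValueError:
            continue  # unscored entries (e.g. '.') are skipped
        records.append(rec)
    return records


def spliceai_tier(ds_max: float) -> str:
    """Map the maximum delta score to the tiers in this article's score table."""
    if ds_max >= 0.8:
        return "very high likelihood of splice defect"
    if ds_max >= 0.5:
        return "strong likelihood of splice defect"
    if ds_max >= 0.2:
        return "potential splice effect: needs further evidence"
    return "below screening cutoff"


for rec in parse_spliceai("T|GENE1|0.00|0.00|0.91|0.08|-28|-46|-2|-31"):
    print(rec["SYMBOL"], rec["DS_MAX"], spliceai_tier(rec["DS_MAX"]))
```

Keeping the tier label next to the score makes it explicit in the report that a 0.2 to 0.5 hit is a screening flag, not evidence on its own.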

Troubleshooting Guides

Issue 1: Low Diagnostic Yield in WES After Standard Exonic Analysis

Problem: After analyzing exonic and canonical splice site variants (±2 bp), a large proportion of cases, often more than 50%, remain undiagnosed [34].

Solution: Implement a comprehensive re-analysis strategy that includes non-canonical regions and advanced bioinformatic filters.

- Step 1: Integrate Genetic Intolerance Scores. Annotate your variant call format (VCF) files with LOEUF scores using a VEP plugin. Filter for variants in genes with LOEUF < 0.35 to prioritize haploinsufficient genes [34] [39].

- Step 2: Perform Intronic Variant Analysis. Use SpliceAI to screen intronic regions (e.g., up to 500 bp from exon-intron boundaries) for cryptic splice-altering variants. A delta score > 0.2 is a common threshold for further consideration [34] [37].

- Step 3: Combine Constraints. For cases with a suggestive phenotype but no candidate variant after Steps 1 and 2, analyze all variants (exonic, splice site, intronic) specifically within the set of genes with low LOEUF scores. This highly targeted approach can identify high-impact variants in critical genes [34].
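The three steps can be sketched as a tiered filter. This is one possible reading of the workflow, not the cited study's code: it assumes variants already carry a region label, distance to the nearest exon boundary, gene LOEUF and maximum SpliceAI delta score, and that population-frequency filtering has been applied upstream. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set

LOEUF_CUTOFF = 0.35
SPLICEAI_CUTOFF = 0.2
MAX_INTRONIC_DIST = 500  # bp from the nearest exon-intron boundary


@dataclass
class Call:
    gene: str
    region: str             # assumed labels: "exonic", "splice_site", "intronic"
    dist_to_exon: int       # bp to nearest exon-intron boundary (0 if exonic)
    loeuf: Optional[float]  # gene-level LOEUF; None if unavailable
    spliceai_max: float     # max of the four SpliceAI delta scores


def constrained(c: Call) -> bool:
    return c.loeuf is not None and c.loeuf < LOEUF_CUTOFF


def reanalyze(calls: List[Call], phenotype_genes: Optional[Set[str]] = None) -> Dict[str, List[Call]]:
    """Apply Steps 1-3; Step 3 runs only when Steps 1 and 2 find nothing."""
    # Step 1: coding and canonical splice-site variants in constrained genes.
    step1 = [c for c in calls if c.region != "intronic" and constrained(c)]
    # Step 2: intronic variants near exon boundaries with a SpliceAI signal,
    # optionally restricted to a phenotype-driven gene set.
    step2 = [
        c for c in calls
        if c.region == "intronic"
        and c.dist_to_exon <= MAX_INTRONIC_DIST
        and c.spliceai_max > SPLICEAI_CUTOFF
        and (phenotype_genes is None or c.gene in phenotype_genes)
    ]
    result: Dict[str, List[Call]] = {"step1": step1, "step2": step2, "step3": []}
    if not step1 and not step2:
        # Step 3: all regions (no distance limit) within constrained genes.
        result["step3"] = [c for c in calls if constrained(c) and c.spliceai_max > SPLICEAI_CUTOFF]
    return result


calls = [
    Call("GENE_A", "exonic", 0, 0.90, 0.0),      # tolerant gene, no splice signal
    Call("GENE_B", "intronic", 1200, 0.20, 0.45),  # deep intronic, constrained gene
]
print({k: [c.gene for c in v] for k, v in reanalyze(calls).items()})
```

Running Step 3 only on otherwise unsolved cases keeps the broader, noisier screen from flooding cases that already have a candidate.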

Figure: Integrated workflow for re-analyzing undiagnosed WES cases.

Issue 2: Inconsistent SpliceAI Predictions Across Transcripts

Problem: A single variant yields different SpliceAI scores for different transcripts of the same gene, leading to uncertainty in interpretation.

Solution: Establish a consistent protocol for transcript selection.

- Prioritize MANE Select Transcripts: The MANE (Matched Annotation from NCBI and EBI) Select transcript is a representative, well-supported transcript for a gene and is recommended for clinical interpretation [38].

- Use Gencode "Basic" Annotation Set: When running SpliceAI or looking up scores, select the "basic" set of Gencode transcripts to reduce redundancy and focus on the most biologically relevant transcripts [38].

- Check VEP Consequences: Use the Ensembl VEP to determine the canonical transcript and the most severe transcriptional consequence of the variant. Let this guide your final assessment [39] [38].
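The transcript-selection protocol above can be expressed as a deterministic rule. In this sketch the per-transcript records are assumed to come from VEP-style annotation with MANE Select and canonical flags; the severity list is an abridged subset of Ensembl's most-severe-first consequence ordering (extend it from the Ensembl documentation for real use), and the class and function names are hypothetical. The function also reports how far the chosen transcript's SpliceAI score lies below the maximum across transcripts, so discordant cases can be reviewed rather than silently resolved.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Abridged, most-severe-first subset of Ensembl's consequence ordering (assumption).
SEVERITY = [
    "splice_acceptor_variant",
    "splice_donor_variant",
    "stop_gained",
    "frameshift_variant",
    "missense_variant",
    "splice_region_variant",
    "synonymous_variant",
    "intron_variant",
]
RANK = {term: i for i, term in enumerate(SEVERITY)}


@dataclass
class TranscriptScore:
    transcript_id: str
    consequence: str
    spliceai_max: float
    mane_select: bool = False
    canonical: bool = False


def choose_transcript(scores: List[TranscriptScore]) -> Tuple[TranscriptScore, float]:
    """Pick MANE Select, else canonical, else most severe consequence.

    Also returns the gap between the highest SpliceAI score on any transcript
    and the chosen transcript's score.
    """
    if not scores:
        raise ValueError("no transcript annotations")
    for flag in ("mane_select", "canonical"):
        flagged = [s for s in scores if getattr(s, flag)]
        if flagged:
            chosen = flagged[0]
            break
    else:  # no MANE Select or canonical transcript annotated
        chosen = min(scores, key=lambda s: RANK.get(s.consequence, len(RANK)))
    gap = max(s.spliceai_max for s in scores) - chosen.spliceai_max
    return chosen, gap


chosen, gap = choose_transcript([
    TranscriptScore("TX1", "intron_variant", 0.05, mane_select=True),
    TranscriptScore("TX2", "splice_region_variant", 0.62),
])
print(chosen.transcript_id, round(gap, 2))
```

A large gap is a signal to inspect the alternative transcript's expression in the relevant tissue before discarding the variant.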

Issue 3: High Number of VUSs with Moderate REVEL Scores

Problem: The REVEL score filter returns a large number of VUSs with scores in the intermediate range (e.g., 0.4-0.7), making prioritization difficult.

Solution: Apply a tiered filtering approach that combines REVEL with other lines of evidence.

- Set Calibrated REVEL Thresholds: Rather than a single cutoff, use tiered thresholds calibrated for the ACMG/AMP computational-evidence criterion (PP3). ClinGen's calibration assigns supporting evidence at REVEL ≥ 0.644, moderate at ≥ 0.773, and strong at ≥ 0.932.

- Integrate with Genetic Constraint: Cross-reference with LOEUF scores. A REVEL score of 0.65 in a gene with a very low LOEUF score (e.g., <0.2) is much more compelling than the same REVEL score in a tolerant gene.

- Leverage Phenotype: Filter against genes associated with your patient's Human Phenotype Ontology (HPO) terms. A variant's priority increases significantly if it is found in a gene known to be associated with the observed clinical phenotype [40].
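The tiered approach can be sketched as a small scoring function. The REVEL tiers use the ClinGen-calibrated PP3 thresholds quoted above; the point values, the LOEUF < 0.2 bonus (taken from the example in the text), and the function name are hypothetical choices for ranking only, not a classification scheme.

```python
from typing import Optional, Set, Tuple

# ClinGen-calibrated REVEL thresholds for PP3: (cutoff, evidence tier, hypothetical points).
REVEL_TIERS = [(0.932, "strong", 3), (0.773, "moderate", 2), (0.644, "supporting", 1)]


def missense_priority(revel: float, loeuf: Optional[float], gene: str,
                      phenotype_genes: Set[str]) -> Tuple[str, int]:
    """Return (REVEL evidence tier, hypothetical priority points) for ranking VUSs."""
    tier, points = "none", 0
    for cutoff, name, pts in REVEL_TIERS:
        if revel >= cutoff:
            tier, points = name, pts
            break
    if loeuf is not None and loeuf < 0.2:  # very constrained gene (example from the text)
        points += 1
    if gene in phenotype_genes:            # gene linked to the patient's HPO terms
        points += 2
    return tier, points


hpo_genes = {"GENE_X"}
print(missense_priority(0.65, 0.15, "GENE_X", hpo_genes))  # ('supporting', 4)
print(missense_priority(0.65, 1.10, "GENE_Y", hpo_genes))  # ('supporting', 1)
```

The two calls reproduce the comparison in the text: the same REVEL score ranks well above its counterpart once gene constraint and phenotype match are added.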

Data Presentation

Table 1: Interpretation Guidelines for Key In Silico Scores

| Tool | Score Range | Interpretation Guideline | Clinical / Research Utility |
|---|---|---|---|
| LOEUF | < 0.35 | Highly constrained gene. LoF variants are strong candidates for pathogenicity. | Prioritizes variants in haploinsufficient genes; provides gene-level context [34] [35]. |
| LOEUF | 0.35 - 0.7 | Moderately constrained gene. | Use with supporting evidence from other tools. |
| LOEUF | > 0.7 | Tolerant gene. LoF variants are more likely to be benign. | Can be used to deprioritize variants, but does not rule out gain-of-function mechanisms. |
| SpliceAI | 0.2 - 0.5 | Potential splice-altering effect. | Good for screening; requires additional evidence (e.g., other tools, RNA-seq) [34] [37]. |
| SpliceAI | 0.5 - 0.8 | Strong likelihood of a splice defect. | Can be used as supporting evidence for pathogenicity. |
| SpliceAI | > 0.8 | Very high likelihood of a splice defect. | Can be used as moderate evidence for pathogenicity. |
| REVEL | 0.644 - 0.773 | Supporting evidence for pathogenicity. | Useful for VUS classification; integrate with gene constraint and phenotype [39]. |
| REVEL | 0.773 - 0.932 | Moderate evidence for pathogenicity. | |
| REVEL | ≥ 0.932 | Strong evidence for pathogenicity. | |

Table 2: Essential Research Reagent Solutions

| Reagent / Resource | Function / Application | Key Details |
|---|---|---|
| SpliceAI Lookup | Web-based tool for retrieving SpliceAI scores for specific variants. | Supports hg19 and hg38; allows selection of Gencode basic/comprehensive transcripts; integrates Pangolin and AlphaMissense scores [38]. |
| Ensembl VEP Plugins | Framework for annotating VCF files with LOEUF, REVEL, and SpliceAI scores. | Centralizes annotation; plugins exist for LOEUF, dbNSFP (which includes REVEL), and SpliceAI [39]. |
| gnomAD Browser | Population frequency database. | Essential for filtering common variants; provides LOEUF scores for genes [37] [35]. |
| ConSpliceML | Machine learning tool that combines SpliceAI predictions with regional splicing constraint. | Outperforms SpliceAI alone in prioritizing deleterious cryptic splicing variants [37]. |
| DECIPHER | Database of genomic variation and phenotype in patients. | Useful for comparing VUSs against variants found in other patients with similar phenotypes [41]. |

Experimental Protocols

Protocol 1: Comprehensive Re-analysis of Undiagnosed WES Cases Using Intronic Screening and Genetic Constraint

This protocol is adapted from a 2025 study that improved diagnostic yield by re-analyzing WES data from cases with congenital anomalies [34].

- Data Preparation: Start with the variant call files (VCFs) from the initial, inconclusive trio-WES.

- LOEUF Annotation: Re-annotate the VCF using Ensembl VEP with the LOEUF plugin. This adds the gene-level constraint score to each variant.

- Intronic Screening with SpliceAI:

- a. For cases with a phenotype suggestive of a specific gene set (e.g., known disease-associated genes for the observed anomalies), run SpliceAI on all exonic, splice site, and intronic variants within those genes.

- b. Use a delta score cutoff of > 0.2 to select candidates. Automated scripts (Bash/Python) can process trio-VCF files, with an average runtime of 5-10 minutes per family.

- Genome-wide Constrained Gene Screening:

- a. For remaining undiagnosed cases, run SpliceAI on all variants (exonic, splice site, intronic) located within a pre-defined set of highly constrained genes (e.g., genes with LOEUF < 0.35).

- b. This automated script, which also filters for variant frequency and SpliceAI score, takes approximately 30-60 minutes per family to run.

- Variant Prioritization and Validation: Manually curate the list of candidate variants, applying inheritance filtering and ACMG/AMP guidelines. Validate putative splice variants using RNA sequencing.
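The inheritance-filtering part of the final step can be sketched for autosomal variants in a trio. Genotypes are assumed to be alt-allele counts (0, 1, 2, or None when missing); the function names are hypothetical, and a real pipeline would also check read depth and genotype quality, particularly before calling a variant de novo.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Set, Tuple

# Alt-allele counts: 0 = hom ref, 1 = het, 2 = hom alt, None = missing call.
Genotype = Optional[int]


def single_variant_modes(child: Genotype, mother: Genotype, father: Genotype) -> List[str]:
    """Autosomal inheritance patterns consistent with one trio genotype."""
    if None in (child, mother, father):
        return []  # cannot assess segregation with a missing call
    modes = []
    if child >= 1 and mother == 0 and father == 0:
        modes.append("de_novo")
    if child == 2 and mother == 1 and father == 1:
        modes.append("homozygous_recessive")
    return modes


def compound_het_genes(variants: List[Tuple[str, Genotype, Genotype, Genotype]]) -> List[str]:
    """Genes where the child has one maternal-only and one paternal-only het variant."""
    origins: Dict[str, Set[str]] = defaultdict(set)
    for gene, child, mother, father in variants:
        if child != 1:
            continue
        if mother == 1 and father == 0:
            origins[gene].add("maternal")
        elif father == 1 and mother == 0:
            origins[gene].add("paternal")
    return sorted(g for g, o in origins.items() if o == {"maternal", "paternal"})


print(single_variant_modes(1, 0, 0))
print(compound_het_genes([("GENE_A", 1, 1, 0), ("GENE_A", 1, 0, 1), ("GENE_B", 1, 1, 0)]))
```

Compound heterozygosity is evaluated per gene rather than per variant, which is why it needs its own pass over all candidate variants.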

Protocol 2: Functional Validation of a Cryptic Splice Variant via RNA Sequencing

This protocol is derived from methods used to validate intronic variants identified by SpliceAI [34] [36].

- Sample Acquisition: Obtain patient-derived tissue relevant to the disease phenotype (e.g., placental villi, fibroblasts, lymphoblastoid cell lines (LCLs)).

- RNA Extraction: Extract total RNA using a commercial kit (e.g., RNeasy Fibrous Tissue Mini Kit), including a DNase digestion step to remove genomic DNA.

- Library Preparation and Sequencing: Prepare an RNA-seq library using a strand-specific kit (e.g., NEBNext Ultra II Directional RNA Library Prep Kit). Sequence on a platform such as Illumina NovaSeq 6000 in 100 bp paired-end mode.

- Data Analysis:

- Alignment: Map the sequencing reads to the reference genome using a splice-aware aligner like HISAT2.

- Visualization: Load the aligned BAM files into the Integrative Genomics Viewer (IGV). Compare the patient's splicing pattern at the variant locus against control samples to visually confirm aberrant splicing (e.g., exon skipping, intron retention).

- Quantification: Use tools such as rMATS to statistically quantify differential splicing events between case and control samples.
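To make the quantification step concrete, the sketch below computes a percent-spliced-in (PSI) value for an exon-skipping event from junction read counts and the patient-versus-control difference (ΔPSI). It is a simplified approximation, not rMATS itself: rMATS uses effective-length-normalized inclusion and skipping counts plus a statistical test, whereas this sketch only halves the inclusion-junction count (two inclusion junctions versus one skipping junction). The function names and counts are hypothetical.

```python
from statistics import mean
from typing import Sequence, Tuple


def psi(ijc: int, sjc: int) -> float:
    """Simplified exon-inclusion level from junction counts.

    ijc: reads on the two inclusion junctions (upstream + downstream)
    sjc: reads on the single skipping junction
    """
    inclusion = ijc / 2.0  # two inclusion junctions vs one skipping junction
    total = inclusion + sjc
    if total == 0:
        raise ValueError("no informative junction reads")
    return inclusion / total


def delta_psi(patient: Tuple[int, int], controls: Sequence[Tuple[int, int]]) -> float:
    """Patient PSI minus mean control PSI; strongly negative values suggest exon skipping."""
    return psi(*patient) - mean(psi(i, s) for i, s in controls)


print(round(delta_psi((20, 30), [(100, 2), (90, 1)]), 3))
```

A large negative ΔPSI at the variant's exon, together with the IGV view, supports aberrant skipping; formal significance still comes from a dedicated tool such as rMATS.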

Performance Metrics of Variant Prioritization Tools

The following table summarizes key quantitative data on the performance of optimized variant prioritization systems, demonstrating their impact on diagnostic yield.

| Tool / Strategy | Dataset | Key Performance Metric | Default Performance | Optimized Performance | Reference / Notes |
|---|---|---|---|---|---|
| Exomiser (Optimized) | Genome Sequencing (GS) | Diagnostic variants in top 10 | 49.7% | 85.5% | [42] |
| Exomiser (Optimized) | Exome Sequencing (ES) | Diagnostic variants in top 10 | 67.3% | 88.2% | [42] |
| Genomiser (Optimized) | Non-coding variants | Diagnostic variants in top 10 | 15.0% | 40.0% | Recommended as complementary to Exomiser [42] |
| Exomiser Reanalysis Strategy | 24,015 unsolved cases | New diagnoses identified | N/A | 463 (2%) | Strategy for periodic reanalysis [43] |
| AutScore.r | 441 ASD probands | Detection accuracy rate | N/A | 85% | Diagnostic yield of 10.3%; cut-off ≥ 0.335 [44] |

Frequently Asked Questions

Troubleshooting Guide: Exomiser and Genomiser

Why are my diagnostic variants not ranking in the top candidates?

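Poor ranking is often linked to suboptimal parameter settings. A 2025 study showed that customizing Exomiser parameters raised the proportion of diagnostic variants ranked in the top 10 from 49.7% to 85.5% for genome sequencing and from 67.3% to 88.2% for exome sequencing [42].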

- Problem: Using Exomiser's default settings.

- Solution:

- Optimize Key Parameters: Systematically adjust parameters for gene-phenotype association data, variant pathogenicity predictors, and the quality of input Human Phenotype Ontology (HPO) terms [42].

- Validate HPO Terms: Ensure the use of a comprehensive and accurate list of HPO terms. The number and quality of terms directly impact the phenotype score and ranking accuracy [42].

- Check Family Data: Confirm that the pedigree (PED) file correctly records family relationships and affected status, and that the multi-sample VCF contains the corresponding family members, because segregation analysis is crucial for prioritization [42].
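Because segregation analysis depends on the pedigree, a quick structural check of the PED file before running Exomiser can catch silent errors. This sketch assumes the standard six whitespace-separated PED columns (family ID, individual ID, paternal ID, maternal ID, sex, phenotype) with the conventional codes (sex 1 = male, 2 = female, 0 = unknown; phenotype 1 = unaffected, 2 = affected, 0 or -9 = missing); `validate_ped` is a hypothetical helper, not part of Exomiser.

```python
from typing import Dict, List, Tuple

VALID_SEX = {"0", "1", "2"}          # 0 = unknown, 1 = male, 2 = female
VALID_PHENO = {"-9", "0", "1", "2"}  # -9/0 = missing, 1 = unaffected, 2 = affected


def validate_ped(text: str) -> List[str]:
    """Return human-readable problems found in a 6-column PED file."""
    problems: List[str] = []
    people: Dict[str, Tuple[str, str, str, str]] = {}
    for n, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        cols = line.split()
        if len(cols) != 6:
            problems.append(f"line {n}: expected 6 columns, found {len(cols)}")
            continue
        _family, ind, pat, mat, sex, pheno = cols
        if sex not in VALID_SEX:
            problems.append(f"line {n}: invalid sex code {sex!r}")
        if pheno not in VALID_PHENO:
            problems.append(f"line {n}: invalid phenotype code {pheno!r}")
        people[ind] = (pat, mat, sex, pheno)
    # Parents must be listed and have a compatible sex code.
    for ind, (pat, mat, _sex, _pheno) in people.items():
        for role, pid, expected in (("father", pat, "1"), ("mother", mat, "2")):
            if pid == "0":
                continue
            if pid not in people:
                problems.append(f"{ind}: {role} {pid!r} is not listed")
            elif people[pid][2] not in ("0", expected):
                problems.append(f"{ind}: {role} {pid!r} has sex code {people[pid][2]}")
    if people and not any(p[3] == "2" for p in people.values()):
        problems.append("no affected individual (phenotype code 2)")
    return problems


trio = "FAM1 proband dad mom 1 2\nFAM1 dad 0 0 1 1\nFAM1 mom 0 0 2 1\n"
print(validate_ped(trio))  # []
```

An empty list means the file is structurally consistent; it does not verify that sample IDs match the VCF header, which is worth checking separately.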

Recommended Methodology for Parameter Optimization [42]:

- Systematic Evaluation: Assess how tool performance is affected by key parameters, including:

- Gene-phenotype association data sources.

- Variant pathogenicity prediction algorithms.

- Phenotype term quality and quantity.

- Accuracy of familial variant segregation data.

- Benchmarking: Use a cohort of solved cases, such as diagnosed probands from the Undiagnosed Diseases Network (UDN), to benchmark tool performance and identify optimal settings.
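The benchmarking metric used in the performance table (diagnostic variants in the top 10) is straightforward to compute once each solved case's known diagnostic variant has been located in the tool output. In this sketch the ranks are assumed to be 1-based, with None meaning the variant was not reported; the function names and example ranks are hypothetical.

```python
from typing import Optional, Sequence, Tuple


def top_n_rate(ranks: Sequence[Optional[int]], n: int = 10) -> float:
    """Fraction of solved cases whose diagnostic variant is ranked within the top n.

    None means the tool did not report the diagnostic variant (counted as a miss).
    """
    if not ranks:
        raise ValueError("need at least one solved case")
    hits = sum(1 for r in ranks if r is not None and r <= n)
    return hits / len(ranks)


def compare_settings(default: Sequence[Optional[int]], tuned: Sequence[Optional[int]],
                     n: int = 10) -> Tuple[float, float]:
    """Top-n rates for two runs over the same cases (e.g. default vs optimized)."""
    if len(default) != len(tuned):
        raise ValueError("both runs must cover the same cases")
    return top_n_rate(default, n), top_n_rate(tuned, n)


print(compare_settings([1, 15, None, 8], [1, 3, 40, 2]))  # (0.5, 0.75)
```

Counting unreported variants as misses, rather than dropping those cases, keeps the comparison honest when a parameter change causes a filter to remove the diagnostic variant.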

How can I effectively manage a high volume of Variants of Uncertain Significance (VUS) in my results?

VUS constitute the largest category of variants in rare disease research, creating a major interpretation bottleneck [2]. An integrative, score-based approach can streamline their assessment.